Agile Metrics: The Good, the Bad, and the Ugly

Suitable agile metrics reflect either a team’s progress in becoming agile or your organization’s progress in becoming a learning organization.

At the team level, qualitative agile metrics typically work better than quantitative metrics. At the organizational level, this is reversed: quantitative agile metrics provide better insights than qualitative ones.

Good Agile Metrics

Generally speaking, metrics are used to understand the current situation better and to gain insight into how it changes over time. Without metrics, assessing any effort or development is left to gut feeling and biased interpretation.

A metric should, therefore, be a leading indicator for a pattern change, providing an opportunity to analyze the cause in time. The following three general rules for agile metrics have proven to be useful:

- Only track metrics that apply to the team. Ignore those that measure the individual.

- Don't measure parameters just because they are easy to track. This practice is often a consequence of using agile tools that offer out-of-the-box reports.

- Record context. Data without context (for example, the number of available team members or the intensity of incidents during a sprint) may turn out to be nothing more than noise.

For example, if the team's overall sentiment on the technical debt metric (see below) is slowly but steadily declining, this may indicate that the team:

- Started sacrificing code quality to meet deadlines.

- Deliberately built some temporary solution to speed up experimentation.

While the latter probably is a good thing, the first interpretation is worrying. (You would need to analyze this with the team during a retrospective.)

Good Qualitative Agile Metrics: Self-Assessment Tests

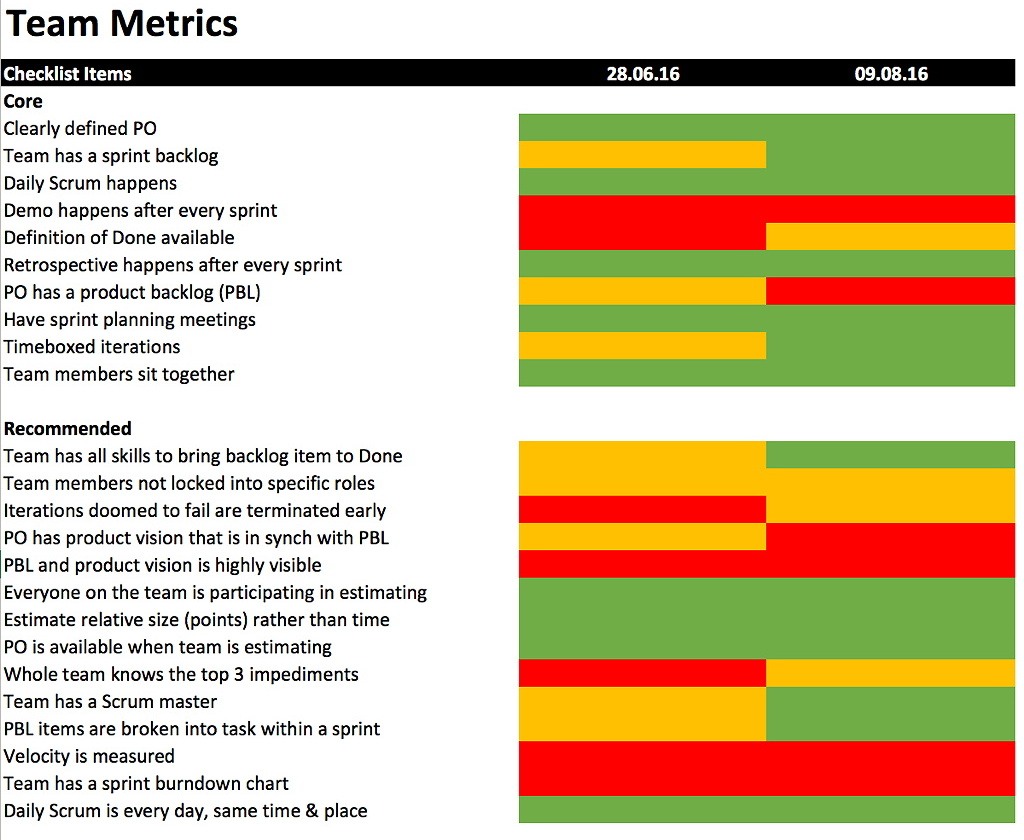

If you would like to track a team’s progress in adopting agile techniques and processes, self-assessment tests are a good idea. I like to use the Scrum Checklist by Henrik Kniberg.

All you have to do is run the questionnaire every four to six weeks during a retrospective, record the results, and aggregate them:

In this example, we used a kind of estimation poker to answer each question with one of three values: green, orange, or red. The colors were coded as follows:

- Green: It worked for the team.

- Orange: It worked for the team, but there was room for improvement.

- Red: It either didn’t apply (for example, the team wasn’t using burndown charts), or the practice was still failing.

If the resulting Scrum practices map is getting greener over time, then the team is on the right track. Otherwise, you have to dig deeper to understand the reasons why there is no continuous improvement and adapt accordingly.
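
To make "getting greener" concrete, the aggregation can be sketched in a few lines of Python. This is only an illustration under assumptions: the practice names and ratings are made-up data, and `color_shares` and `is_getting_greener` are hypothetical helper names, not part of the Scrum Checklist.

```python
from collections import Counter

COLORS = ("green", "orange", "red")

def color_shares(answers):
    """Return the fraction of practices rated with each color in one round."""
    counts = Counter(answers.values())
    total = len(answers)
    return {color: counts[color] / total for color in COLORS}

def is_getting_greener(rounds):
    """True if the green share never drops and ends higher than it started."""
    greens = [color_shares(answers)["green"] for answers in rounds]
    never_drops = all(a <= b for a, b in zip(greens, greens[1:]))
    return never_drops and greens[-1] > greens[0]

# Made-up example data: one dict per retrospective, oldest first.
rounds = [
    {"Daily Scrum": "green", "Burndown chart": "red",
     "Definition of Done": "orange", "Retrospective": "orange"},
    {"Daily Scrum": "green", "Burndown chart": "orange",
     "Definition of Done": "orange", "Retrospective": "green"},
    {"Daily Scrum": "green", "Burndown chart": "orange",
     "Definition of Done": "green", "Retrospective": "green"},
]

for number, answers in enumerate(rounds, start=1):
    print(number, color_shares(answers))
print("getting greener:", is_getting_greener(rounds))
```

A single share per color is a deliberate simplification. It shows the direction of travel, but the conversation in the retrospective still has to explain why a practice changed color.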

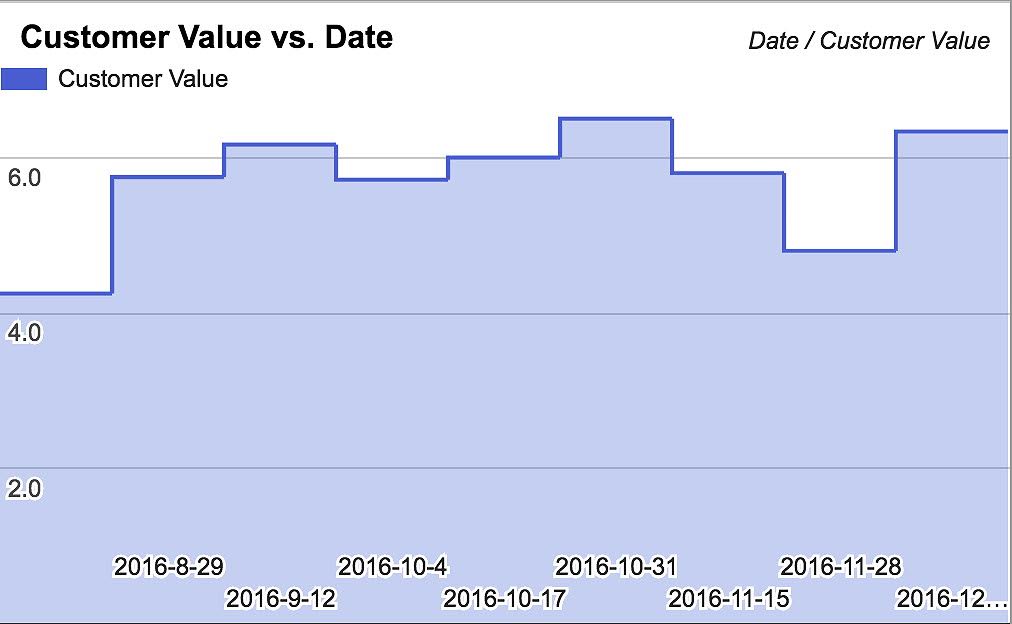

In addition to this exercise, I also like to run an anonymous poll at the end of every two-week Sprint. The poll comprises three questions, each answered on a scale from 1 to 10:

- What value did the team deliver during the last Sprint? 1 means that we didn’t deliver any value; 10 means that we delivered the maximum value possible.

- How has the level of technical debt developed during the last Sprint? 1 means that we need to rewrite the application from scratch; 10 means that there is no technical debt.

- Are you happy working with your teammates? 1 means, "I am looking for a new job;" 10 means, "I cannot wait to get back to the office on Monday mornings."

The poll takes less than 30 seconds of each team member’s time, and the results are, of course, available to everyone. Again, tracking the development of the three qualitative metrics provides insight into trends that otherwise might go unnoticed.
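
As a rough sketch of how the poll results could be tracked, the snippet below reduces each Sprint's anonymous answers to a median per question and compares the first and last Sprint. The question keys, the scores, and the choice of the median are assumptions made for illustration.

```python
from statistics import median

# Hypothetical short keys for the three poll questions.
QUESTIONS = ("value", "technical_debt", "happiness")

def summarize(responses):
    """Median score per question for one Sprint (scores range from 1 to 10)."""
    return {q: median(r[q] for r in responses) for q in QUESTIONS}

def trend(sprints):
    """Change in the median between the first and the last Sprint, per question."""
    first, last = summarize(sprints[0]), summarize(sprints[-1])
    return {q: last[q] - first[q] for q in QUESTIONS}

# Made-up answers of three team members for two consecutive Sprints.
sprint_1 = [
    {"value": 7, "technical_debt": 6, "happiness": 8},
    {"value": 6, "technical_debt": 7, "happiness": 9},
    {"value": 8, "technical_debt": 6, "happiness": 7},
]
sprint_2 = [
    {"value": 7, "technical_debt": 5, "happiness": 8},
    {"value": 8, "technical_debt": 5, "happiness": 8},
    {"value": 7, "technical_debt": 4, "happiness": 9},
]

print(summarize(sprint_1))
print(trend([sprint_1, sprint_2]))
```

The median is used here because a single very low or very high vote should not swing the result for a small team. An average would work as well if you prefer it.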

Good Quantitative Agile Metrics: Lead Time and Cycle Time

Ultimately, the purpose of any agile transition is to become a learning organization, thus gaining a competitive advantage. The following metrics apply to the (software) product delivery process but can be adapted to various other processes.

In the long run, becoming a learning organization will not only require restructuring the organization from functional silos to more or less cross-functional teams (where applicable); it will also require analyzing the system itself (for example, to figure out where value creation is impeded by queues).

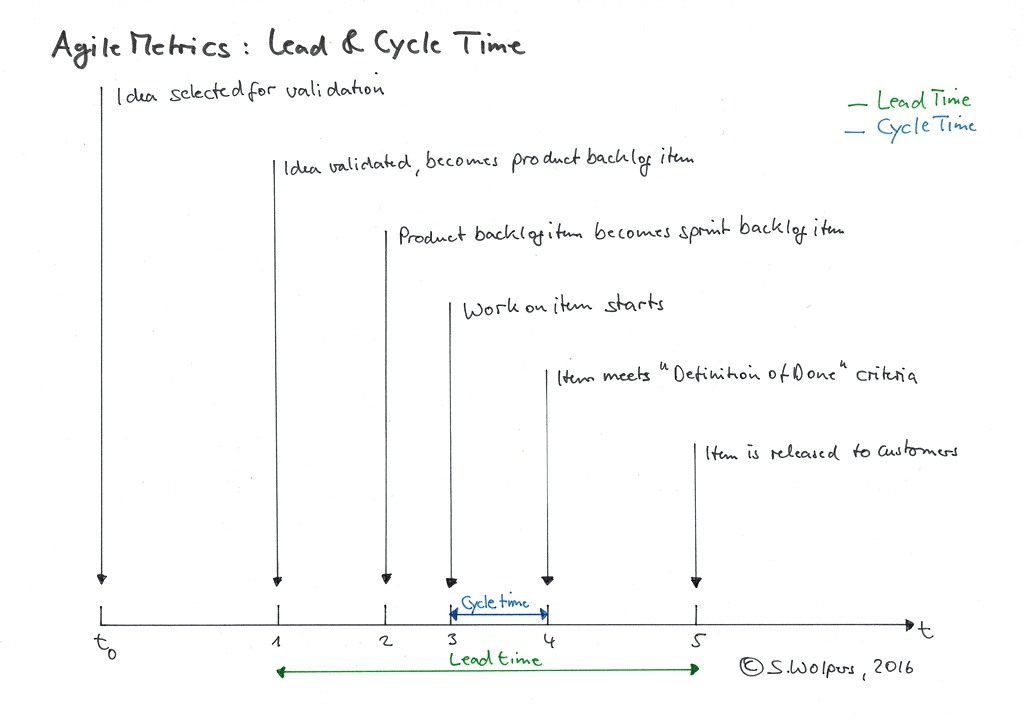

To identify the existing queues in the product delivery process, start by recording five dates for each item:

- The date when a previously validated idea (for example, a user story for a new feature) becomes a product backlog item.

- The date when this product backlog item becomes a sprint backlog item.

- The date when development starts on this sprint backlog item.

- The date when the sprint backlog item meets the team’s Definition of Done.

- The date when the sprint backlog item is released to customers.

The lead time is the time elapsed between the first and the fifth date. The cycle time is the time elapsed between the third and the fourth date.
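
The two definitions translate directly into date arithmetic. The following is a minimal sketch, assuming one record per ticket with the five dates listed above; the `Ticket` class, its field names, and the example dates are hypothetical. The `queues` method is an additional assumption that shows where an item waited rather than being worked on.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class Ticket:
    backlog: date    # 1: validated idea becomes a product backlog item
    sprint: date     # 2: item becomes a sprint backlog item
    dev_start: date  # 3: development starts
    done: date       # 4: item meets the Definition of Done
    released: date   # 5: item is released to customers

    def lead_time(self) -> int:
        """Days between the first and the fifth date."""
        return (self.released - self.backlog).days

    def cycle_time(self) -> int:
        """Days between the third and the fourth date."""
        return (self.done - self.dev_start).days

    def queues(self) -> dict:
        """Days the item spent waiting between the recorded steps."""
        return {
            "backlog_to_sprint": (self.sprint - self.backlog).days,
            "sprint_to_start": (self.dev_start - self.sprint).days,
            "done_to_release": (self.released - self.done).days,
        }

# Made-up example ticket.
ticket = Ticket(
    backlog=date(2016, 3, 1),
    sprint=date(2016, 3, 14),
    dev_start=date(2016, 3, 16),
    done=date(2016, 3, 22),
    released=date(2016, 4, 4),
)
print(ticket.lead_time(), ticket.cycle_time(), ticket.queues())
```

In this made-up example, most of the lead time is spent in the two queues before the Sprint and before the release, not in development.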

The objective is to reduce both lead time and cycle time to improve the organization’s capability to deliver value to customers. The objective, as mentioned earlier, is accomplished by eliminating dependencies and hand-overs between teams within the product delivery process.

Helpful practices in this respect are:

- Creating cross-functional and co-located teams.

- Having feature teams instead of component teams.

- Furthering a whole-product perspective and systems-thinking among all team members.

Measuring lead time and cycle time does not require a fancy agile tool or business intelligence software. A simple spreadsheet will do if all teams stick to a simple rule: note the date whenever you move a ticket. This method even works with index cards.

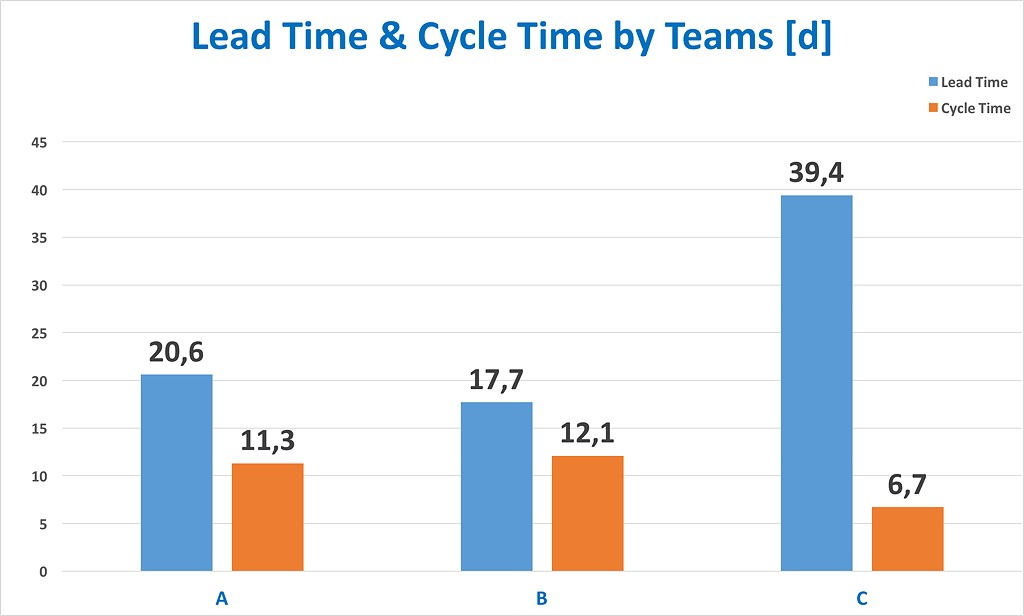

The following graphic compares median values of lead time and cycle time of three Scrum teams:

The values were derived from analyzing tickets—both user stories as well as bug tickets—from a period of three months. (It is planned to change that interval to two weeks in 2017.)
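
A comparison like this one can be reproduced from the spreadsheet data with very little code. The sketch below groups tickets by team and takes the median of both times; the team names and day counts are invented for illustration and are not the values behind the graphic.

```python
from collections import defaultdict
from statistics import median

# Made-up records: (team, lead time in days, cycle time in days).
tickets = [
    ("Team A", 34, 6), ("Team A", 20, 4), ("Team A", 41, 9),
    ("Team B", 15, 3), ("Team B", 18, 5),
    ("Team C", 60, 12), ("Team C", 45, 8), ("Team C", 52, 10), ("Team C", 30, 7),
]

def median_times(tickets):
    """Median lead time and cycle time per team, in days."""
    by_team = defaultdict(lambda: {"lead": [], "cycle": []})
    for team, lead, cycle in tickets:
        by_team[team]["lead"].append(lead)
        by_team[team]["cycle"].append(cycle)
    return {
        team: {name: median(values) for name, values in times.items()}
        for team, times in by_team.items()
    }

for team, times in median_times(tickets).items():
    print(team, times)
```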

Other Good Agile Metrics

Esther Derby suggests in her article Metrics for Agile to also measure the ratio of fixing work to feature work and the number of defects escaping to production.
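
Both figures are easy to derive from a ticket export. The sketch below is one possible reading, not Esther Derby's definition: it assumes the ratio is computed by ticket count rather than by effort, and the `type` and `found_in` fields are hypothetical.

```python
def fix_to_feature_ratio(tickets):
    """Bug tickets per feature ticket; None if there was no feature work."""
    fixes = sum(1 for t in tickets if t["type"] == "bug")
    features = sum(1 for t in tickets if t["type"] == "feature")
    return fixes / features if features else None

def escaped_defects(tickets):
    """Number of bugs that were first found in production."""
    return sum(
        1 for t in tickets
        if t["type"] == "bug" and t.get("found_in") == "production"
    )

# Made-up tickets completed during one reporting period.
tickets = [
    {"type": "feature"},
    {"type": "feature"},
    {"type": "feature"},
    {"type": "feature"},
    {"type": "bug", "found_in": "testing"},
    {"type": "bug", "found_in": "production"},
]

print(fix_to_feature_ratio(tickets), escaped_defects(tickets))
```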

Bad Agile Metrics

A bad, yet popular, agile metric is team velocity. Team velocity is a notoriously volatile metric, and hence actually only usable by the team itself.

Some of the many factors that make even intra-team Sprint comparisons so difficult are:

- The team onboards new members.

- Veteran team members leave.

- Seniority levels of team members change.

- The team is working in uncharted territory.

- The team is working on legacy code.

- The team is running into unexpected technical debt.

- Holiday and sick leave reduce capacity during the Sprint.

- The team had to deal with serious bugs.

You would need to normalize a team’s performance each Sprint to derive a figure that is at least somewhat comparable. (This usually is not done.)

Additionally, velocity is a metric that can easily be manipulated. I usually include an exercise on how to cook the “agile books” when coaching new teams. I have never worked with a team that did not manage to come up with suitable ideas on how to make sure that it would meet any reporting requirements based on its velocity. This should not surprise you; it is referred to as the Hawthorne effect:

The Hawthorne effect (also referred to as the observer effect) is a type of reactivity in which individuals modify or improve an aspect of their behavior in response to their awareness of being observed.

To make things worse, you cannot compare velocities between different teams since all of them estimate differently. That is acceptable, as estimates are not meant to serve reporting purposes. Estimates are a mere side effect of the attempt to create a shared understanding among team members of the why, how, and what of a user story.

So, don’t use velocity as an agile metric.

Ugly Agile Metrics

The ugliest agile metric I have encountered so far is “story points per developer per time interval.” This metric is the equivalent of “lines of code” or “hours spent” in a traditional project reporting approach. The metric is completely useless, as it doesn’t provide any context for interpretation or comparison.

Equally useless “agile metrics” are the number of certified team members or the number of team members who have completed workshops on agile practices.

Conclusion

If you can only record a few data points, go with start and end dates to measure lead time and cycle time. If you have just started your agile journey, you may also consider tracking the adoption rate of an individual team by measuring qualitative signals, for example, with self-assessment tests like the Scrum Checklist by Henrik Kniberg.

What do you measure to track your progress? Please share with us in the comments or join our Slack team, “Hands-On Agile” — we have a channel for agile metrics.
