Are You Stuck in the New DevOps Matrix From Hell?

See how Docker solved the matrix-from-hell problem, and how DevOps techniques can help avoid the config sprawl that comes with microservices.


If you google "matrix from hell," you'll see many articles about how Docker solves it. So what is the matrix from hell? Put simply, it is the challenge of packaging any application, regardless of language, frameworks, or dependencies, so that it can run on any cloud, regardless of operating system, hardware, or infrastructure.

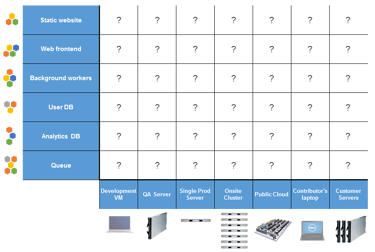

The original matrix from hell: applications were tightly coupled with the underlying hardware.

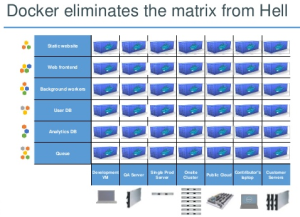

Docker solved the matrix from hell by decoupling the application from the underlying operating system and hardware. It did this by packaging the application and all of its dependencies, including the OS libraries and userland, inside Docker containers (the containers still share the host's kernel). This makes Docker containers "portable," i.e., they can run on any cloud or machine with a compatible container runtime, without the dreaded "it works on my machine" problem. This is the single biggest reason Docker is considered the hottest new technology of the last decade.

With DevOps principles taking center stage over the last few years, Ops teams have started automating tasks such as provisioning infrastructure, managing config, and triggering production deployments. IT automation tools like Ansible and Terraform help tremendously with these use cases because they let you represent your infrastructure as code, which can be versioned and committed to source control. Most of these tools are configured with a YAML- or JSON-based language that describes the activity you're trying to achieve.

The New Matrix From Hell

Let's consider a simple scenario. You have a three-tier application with API, middleware, and web layers, and three environments: test, staging, and prod. You're using a container service such as Kubernetes, though this example applies equally to similar platforms like Amazon ECS, Docker Swarm, and Google Container Engine (GKE). Here is how your config looks:

Notice the problem?

The configuration of the app changes in each environment. You therefore need a config file that is specific to both the application/service/microservice and the environment!
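The following Python sketch models the same service's settings in each environment and reports which ones differ. Everything in it is hypothetical: the image names, tags, replica counts, memory limits, and the `differing_keys` helper are assumptions chosen for illustration.

```python
# Hypothetical deployment settings for one service ("api") in three environments.
# All values are illustrative assumptions, not taken from a real cluster.
API_CONFIGS = {
    "test": {"image": "myorg/api:1.4.0", "replicas": 1, "memory": "256Mi", "port": 8080},
    "staging": {"image": "myorg/api:1.3.2", "replicas": 2, "memory": "512Mi", "port": 8080},
    "prod": {"image": "myorg/api:1.3.1", "replicas": 6, "memory": "1Gi", "port": 8080},
}


def differing_keys(configs: dict[str, dict]) -> set[str]:
    """Return the settings whose value is not identical in every environment."""
    all_keys = set().union(*(cfg.keys() for cfg in configs.values()))
    return {
        key
        for key in all_keys
        # repr() lets us compare values that may not be hashable (lists, dicts).
        if len({repr(cfg.get(key)) for cfg in configs.values()}) > 1
    }


if __name__ == "__main__":
    print(sorted(differing_keys(API_CONFIGS)))  # ['image', 'memory', 'replicas']
```

Only `port` is shared. Every other setting has to be tracked per environment, and that has to be repeated for each service.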


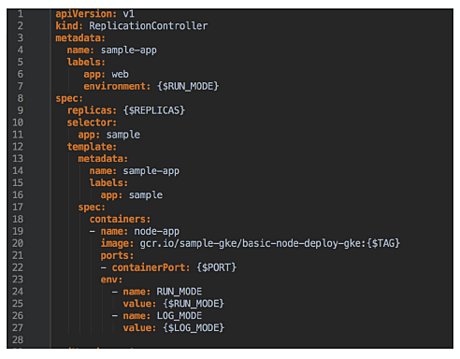

Your first instinct is probably to point out that you can, in fact, templatize the YAML scripts. For example, the following config would ensure that the same YAML works across all environments and apps:

In theory, this is correct. A string replace will fill in all the values for the given application-environment combination, and you should be good to go.
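Here is a minimal sketch of this string-replace approach. It assumes Python's standard `string.Template` as the templating mechanism and uses a hypothetical three-line deployment file. The placeholders `$app`, `$env`, `$tag`, and `$replicas` are for illustration only and are not the article's original template.

```python
from string import Template

# One hypothetical template shared by every application-environment pair.
DEPLOYMENT_TEMPLATE = Template(
    "name: $app-$env\n"
    "image: myorg/$app:$tag\n"
    "replicas: $replicas\n"
)


def render(app: str, env: str, tag: str, replicas: int) -> str:
    """Fill in the template for one application-environment combination."""
    return DEPLOYMENT_TEMPLATE.substitute(app=app, env=env, tag=tag, replicas=replicas)


if __name__ == "__main__":
    print(render("api", "staging", "1.3.2", 2))
```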

However, this approach also has a few issues:

- There are no audit trails, so you have no information about which application version was deployed, who deployed it, or when. You don't even know what the exact configuration was unless you know where the config is stored and can access it.

- There is no repeatability, so you cannot simply rerun an earlier config. Rollbacks and roll-forwards are very challenging. You could archive all deployment files on S3 or GitHub, but then you have to secure secrets that should not be stored in cleartext, which creates its own nightmare.

- You also need a way to figure out the values for each deployment and to set the environment variables before the scripts are executed. For example, the value of `$tag` in the snippet above will change for each deployment. You need to maintain this information somewhere and update it for each deployment, but then you need application-environment-specific config files anyway.

- The biggest issue is that there is no way to do a string replace for tags that aren't in the template. Not every environment needs every tag, so it is incredibly difficult to create a template config that describes the application's deployment into every environment.
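The last two issues are easy to reproduce in a small sketch. It assumes Python's `string.Template` as the templating mechanism, a hypothetical template, and a hypothetical prod-only `nodeSelector` setting.

```python
from string import Template

# Hypothetical shared template: it only knows about the fields written into it.
TEMPLATE = Template("name: $app-$env\nimage: myorg/$app:$tag\nreplicas: $replicas\n")

# Hypothetical values for a prod deployment; nobody has supplied $tag yet.
VALUES = {"app": "api", "env": "prod", "replicas": 6}

# An assumed setting that only prod needs and the template never mentions.
PROD_ONLY = {"nodeSelector": "pool=high-mem"}


def try_render(values: dict) -> str:
    """Attempt a plain string replace and report the first unresolved placeholder."""
    try:
        return TEMPLATE.substitute(values)
    except KeyError as missing:
        return f"cannot render: no value for {missing}"


if __name__ == "__main__":
    # Failure mode 1: a placeholder whose value was never tracked for this deployment.
    print(try_render(VALUES))  # cannot render: no value for 'tag'
    # Failure mode 2: substitute() ignores extra keys, so the prod-only
    # setting is silently dropped instead of being added to the output.
    rendered = TEMPLATE.substitute(VALUES, tag="1.3.1", **PROD_ONLY)
    print("nodeSelector" in rendered)  # False
```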

Faced with these challenges, most teams don't bother templatizing and take the path of least resistance: creating deployment config files that are app-env specific. And this leads to... a DevOps matrix from hell! Can you imagine the matrix below for 50+ microservices?

| | test | staging | production |
|---|---|---|---|
| api | api-test.yml | api-staging.yml | api-prod.yml |
| middleware | mw-test.yml | mw-staging.yml | mw-prod.yml |
| web | web-test.yml | web-staging.yml | web-prod.yml |

The DevOps matrix from hell: automation scripts are tightly coupled with each app/env combination.
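The growth is multiplicative: one static file for every service in every environment. The sketch below assumes the `app-env.yml` naming shown in the table and a hypothetical fleet of 50 services with placeholder names.

```python
from itertools import product

ENVIRONMENTS = ["test", "staging", "prod"]


def static_config_files(services: list[str]) -> list[str]:
    """One hand-maintained file per (service, environment) pair, e.g. api-test.yml."""
    return [f"{svc}-{env}.yml" for svc, env in product(services, ENVIRONMENTS)]


if __name__ == "__main__":
    print(static_config_files(["api", "mw", "web"]))
    # Hypothetical fleet of 50 microservices named svc-0 ... svc-49.
    fleet = [f"svc-{i}" for i in range(50)]
    print(len(static_config_files(fleet)))  # 150
```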

Avoiding the DevOps Matrix From Hell

The fundamental issue behind this new matrix from hell is that application configuration is currently treated as a design-time decision, captured in static deployment config files. You can only change this by dynamically generating the deployment config according to the requirements of each environment. This configuration consists of two parts:

- Environment-specific settings, such as the number of instances of the application

- Container-specific settings that do not change across environments, such as the tag you want to deploy or CPU/memory requirements

To generate the deployment config dynamically at runtime, your automation platform needs to know both the environment the application is being deployed to and the package version that needs to be deployed.
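Here is a minimal Python sketch of combining these two parts at deploy time. The `PackageOptions` and `EnvOptions` types, their fields, and the output shape are hypothetical simplifications. They do not follow the schema of a real Kubernetes manifest or of any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PackageOptions:
    """Container-specific settings that stay the same in every environment."""
    image: str
    tag: str
    cpu: str
    memory: str


@dataclass(frozen=True)
class EnvOptions:
    """Environment-specific settings (here just a name and an instance count)."""
    name: str
    replicas: int


def generate_deployment_config(app: str, pkg: PackageOptions, env: EnvOptions) -> dict:
    """Build the config at deploy time instead of keeping one static file per app/env pair."""
    return {
        "name": f"{app}-{env.name}",
        "image": f"{pkg.image}:{pkg.tag}",
        "replicas": env.replicas,
        "resources": {"cpu": pkg.cpu, "memory": pkg.memory},
    }


if __name__ == "__main__":
    # One package definition, reused unchanged for every environment.
    pkg = PackageOptions(image="myorg/api", tag="1.3.2", cpu="500m", memory="512Mi")
    for env in (EnvOptions("test", 1), EnvOptions("staging", 2), EnvOptions("prod", 6)):
        print(generate_deployment_config("api", pkg, env))
```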

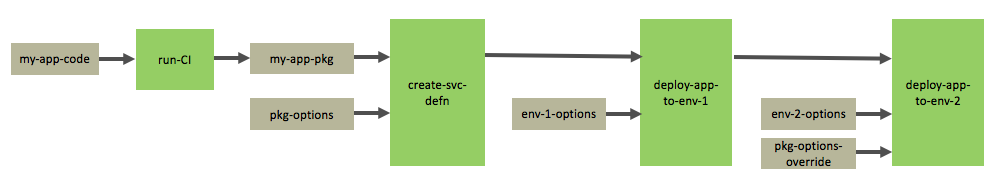

The image above shows a conceptual workflow for dynamically generating your deployment config:

- `my-app-code` is the source code repository. Any commit to this repository triggers the CI job, `run-ci`.

- `run-ci` builds and tests the source code, then creates an application package, `my-app-pkg`, which can be a JAR/WAR file, a Docker image, etc. The package is pushed to a registry or artifact repository such as Amazon ECR or JFrog Artifactory.

- The `create-svc-defn` job creates a service definition for the application. This includes `my-app-pkg` plus the config needed to run the application, represented as `pkg-options`. This could be settings for CPU, memory, ports, volumes, etc.

- The `deploy-app-to-env-1` job takes this service definition along with environment-specific options, `env-1-options`, such as the number of instances you want to run in env-1. It generates the deployment config for env-1 and deploys the application there.

- Later, when deploying the same service definition to env-2, the `deploy-app-to-env-2` job takes `env-2-options` and, if needed, overrides the package options with `pkg-options-override`. It generates the deployment config for env-2 and deploys the application there.
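The workflow can be sketched as plain functions. The function names mirror the job names in the diagram, but the code is a hypothetical illustration and not the API of Shippable or any other platform. The merge order (package options, then overrides, then environment options) is also an assumption.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class ServiceDefinition:
    """What the create-svc-defn step produces: my-app-pkg plus its pkg-options."""
    package: str                                     # e.g. an image reference built by run-ci
    pkg_options: dict = field(default_factory=dict)  # cpu, memory, ports, volumes, ...


def create_svc_defn(package: str, pkg_options: dict) -> ServiceDefinition:
    # Copy the options so later edits to the caller's dict cannot change this definition.
    return ServiceDefinition(package=package, pkg_options=dict(pkg_options))


def deploy_app_to_env(
    svc: ServiceDefinition,
    env_options: dict,
    pkg_options_override: Optional[dict] = None,
) -> dict:
    """Generate the deployment config for one environment.

    Precedence (assumed): pkg-options < pkg-options-override < env-options.
    Actually pushing the config to a cluster is out of scope for this sketch.
    """
    merged = {**svc.pkg_options, **(pkg_options_override or {})}
    merged.update(env_options)
    return {"package": svc.package, **merged}


if __name__ == "__main__":
    svc = create_svc_defn("myorg/my-app:1.3.2", {"cpu": "500m", "memory": "512Mi", "port": 8080})
    print(deploy_app_to_env(svc, {"env": "env-1", "replicas": 1}))
    print(deploy_app_to_env(svc, {"env": "env-2", "replicas": 4}, pkg_options_override={"memory": "1Gi"}))
```

Both environments reuse the same `ServiceDefinition`. Only the small per-environment option sets differ, so no app-env-specific file has to be written by hand.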

A DevOps assembly lines platform like Shippable can help avoid config sprawl and the DevOps matrix from hell by making the workflow above fairly easy to configure, while also giving you repeatability and audit trails. You can also go the DIY route with a Jenkins/Ansible combination and use S3 to store state, but that requires extensive scripting and handling state and versions yourself. Whichever path you choose, it is better to have a process and workflow in place as soon as possible, to avoid building up technical debt as you adopt microservices or build smaller applications.
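On the DIY route, repeatability and audit trails come down to keeping an append-only record of every deployment. Below is a minimal in-memory sketch with hypothetical record fields. A real setup might persist these records to S3 or similar, and it should keep secrets out of the stored config.

```python
import hashlib
import json
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DeploymentRecord:
    """One immutable entry in a hypothetical deployment history."""
    app: str
    env: str
    version: str
    deployed_by: str
    deployed_at: str
    config_json: str     # exact generated config (assumed to contain no secrets)
    config_sha256: str   # fingerprint for quickly comparing deployments


class DeploymentHistory:
    """Append-only log that answers "what, who, when" and supports rollbacks.

    Kept in memory here; a DIY setup might persist records to S3 or similar.
    """

    def __init__(self) -> None:
        self._records: list[DeploymentRecord] = []

    def record(self, app: str, env: str, version: str, user: str, config: dict) -> DeploymentRecord:
        config_json = json.dumps(config, sort_keys=True)
        rec = DeploymentRecord(
            app=app,
            env=env,
            version=version,
            deployed_by=user,
            deployed_at=datetime.now(timezone.utc).isoformat(),
            config_json=config_json,
            config_sha256=hashlib.sha256(config_json.encode()).hexdigest(),
        )
        self._records.append(rec)
        return rec

    def history(self, app: str, env: str) -> list[DeploymentRecord]:
        """All deployments of one app to one environment, oldest first."""
        return [r for r in self._records if r.app == app and r.env == env]

    def previous_config(self, app: str, env: str) -> dict:
        """Exact config of the deployment before the latest one, for a rollback."""
        past = self.history(app, env)
        if len(past) < 2:
            raise LookupError(f"no earlier deployment of {app} in {env}")
        return json.loads(past[-2].config_json)


if __name__ == "__main__":
    log = DeploymentHistory()
    log.record("api", "prod", "1.3.1", "alice", {"image": "myorg/api:1.3.1", "replicas": 6})
    log.record("api", "prod", "1.3.2", "bob", {"image": "myorg/api:1.3.2", "replicas": 6})
    print([(r.version, r.deployed_by) for r in log.history("api", "prod")])
    print(log.previous_config("api", "prod"))
```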

Published at DZone with permission of Manisha Sahasrabudhe, DZone MVB. See the original article here.

