Testing Features of ML Models

In this post, you will learn about different types of test cases that you could come up with for testing features of data science/Machine Learning models.

Feature testing is one of the key sets of checks that need to be performed to keep Machine Learning models performing well in a consistent and sustained manner.

Features are the most important part of a Machine Learning model. Features are the predictor variables used to predict the outcome (response) variable. Simply speaking, the following function represents y as the outcome variable and x1, x2, and x1x2 as predictor variables.

y = a1x1 + a2x2 + a3x1x2 + e

In the above function, note the feature x1x2. This feature is derived (extracted) from features x1 and x2 by multiplying them. Also, note that a1, a2, and a3 are called the coefficients of the corresponding features, and e is the error term. A coefficient measures the change in the outcome variable y brought about by a unit change in the corresponding feature. For example, if the x1x2 term were absent, a unit change in x1 would change y by a1. Because x1 also appears in the x1x2 term, a unit change in x1 with x2 held fixed changes y by a1 + a3x2.
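
The effect of a unit change in x1 on y can be checked numerically. The following is a minimal sketch; the coefficient values and the function names `predict` and `unit_effect_of_x1` are made up for illustration and do not come from any real model.

```python
def predict(x1, x2, a1=2.0, a2=3.0, a3=0.5, e=0.0):
    """Evaluate y = a1*x1 + a2*x2 + a3*x1*x2 + e (coefficients are made-up values)."""
    return a1 * x1 + a2 * x2 + a3 * x1 * x2 + e


def unit_effect_of_x1(x1, x2):
    """Change in y when x1 increases by one unit while x2 is held fixed."""
    return predict(x1 + 1, x2) - predict(x1, x2)


# Because x1 also appears in the x1x2 term, the effect equals a1 + a3*x2,
# so it depends on the current value of x2.
effect_at_x2_0 = unit_effect_of_x1(x1=1.0, x2=0.0)  # a1 + a3*0
effect_at_x2_4 = unit_effect_of_x1(x1=1.0, x2=4.0)  # a1 + a3*4

print(effect_at_x2_0, effect_at_x2_4)
```

With these assumed coefficients, the two effects differ only because x2 differs, which is why a derived feature such as x1x2 has to be tested together with the features it is built from.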

What Needs to Be Quality Assured or Tested With Features?

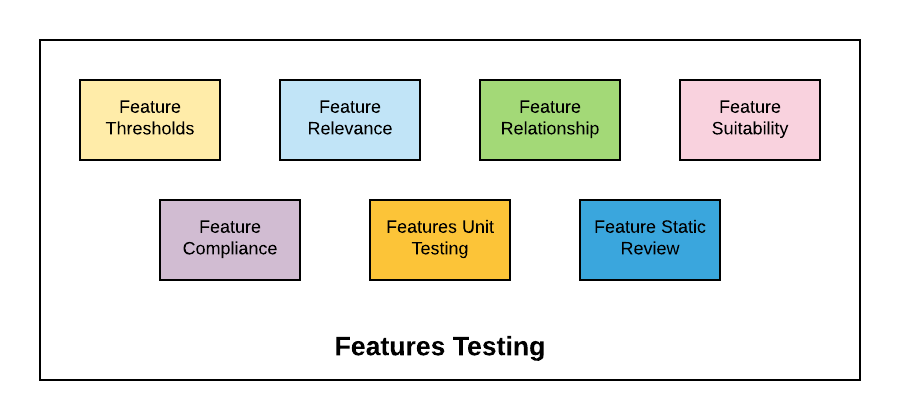

The diagram above represents the key aspects that need to be tested as part of quality control checks/testing of the features of Machine Learning models.

- Feature thresholds: Each feature may be expected to have values that fall within given threshold values (a lower limit, an upper limit, or both). These limits need to be determined by working with the product managers/business analysts. Test engineers would need to plan test cases around the expected/threshold values. For example, let us say the age of a human being has threshold values of 0 to 100. Any value outside these bounds should be raised as an alert or a defect. There are people older than 100, but they are very few in number. The test cases could look like the following. These tests could easily be automated using scripts.

  - Test whether the value of x1 lies between V1 and V2; or

  - Test whether the value of x1 is greater than V1

- Feature relevance/importance: Testing feature relevance is about testing the importance of the features, in terms of which features contribute most to the accuracy of the model. Feature importance can be evaluated using feature selection techniques such as regularization methods, which are often termed embedded methods. Some of the most common regularization algorithms include LASSO, elastic net, and ridge regression. Methods such as stepwise selection could also be used to measure feature relevance in terms of which features improve prediction accuracy. However, stepwise selection works best when only a few of the features have a strong relationship with the outcome variable.

  - The test cases would assert that the features determined earlier to be the most important have remained so, and would flag any other features that have turned out to be of greater importance.

- Feature relationship with outcome variables: Test the feature relationship with the outcome variable by calculating the correlation coefficients and comparing them with test results from past runs to determine whether the relationship between the features and the outcome variable has changed.

- Feature suitability/cost: Test whether a feature is suitable by evaluating the cost associated with generating it. Plan tests around measuring some of the following in relation to feature generation/extraction:

  - RAM usage

  - Upstream data dependencies

  - Inference latency

- Feature compliance: Testing feature compliance is about making sure that features (original or derived/extracted) do not violate data compliance requirements as per the business guidelines. For example, if the business agreement with customers states that their monthly invoices must not be used for any analysis whatsoever, this must be verified as part of testing/quality control checks.

- Unit tests for code generating features: Unit tests written by the data scientists for the feature-generating code should be executed automatically as part of the regular build automation. In fact, it should be mandated that data scientists write unit tests for the code used for generating features.

- Static code review of code generating features: The code used for generating features should be reviewed both manually and with automated tools. Static code analysis tools such as Sonar could be used to assess the quality of the code.
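
The threshold test cases described in the list above (a value lies between V1 and V2, or a value stays above V1) could be automated along the following lines. This is only a sketch: the helper name `out_of_range`, the sample ages, and the choice to treat the limits as inclusive are assumptions made for illustration.

```python
def out_of_range(values, lower=None, upper=None):
    """Return the values that fall below `lower` or above `upper`.

    Either limit may be None, in which case that side is not checked.
    Limits are treated as inclusive (an assumption for this sketch).
    """
    violations = []
    for v in values:
        below = lower is not None and v < lower
        above = upper is not None and v > upper
        if below or above:
            violations.append(v)
    return violations


ages = [23, 41, 0, 67, 134, -2]  # made-up sample data

# Both limits: age is expected to lie between V1 = 0 and V2 = 100.
age_violations = out_of_range(ages, lower=0, upper=100)

# Lower limit only: the value must not fall below V1 = 0.
negative_ages = out_of_range(ages, lower=0)

print(age_violations, negative_ages)
```

A non-empty result would be raised as an alert or a defect. Returning the offending values, rather than just a pass/fail flag, makes it easier to judge whether a violation is a data error or a rare but valid case such as an age above 100.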

7 Different Types of Test Cases for Features QA

The following represents a test plan for testing features of Machine Learning models:

- Test whether the value of features lies between the threshold values

- Test whether the feature importance changed with respect to the previous QA run

- Test whether the feature relationship with the outcome variable, in terms of correlation coefficients, changed with respect to past test results

- Test the feature suitability by measuring RAM usage, inference latency, and upstream data dependencies

- Test/review whether the generated features violate data compliance requirements

- Test/review the code coverage of the code generating features

- Test/review the static code analysis outcome of code generating features
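
Two items in this plan compare the current state with a previous QA run: the feature importance check and the correlation check. A minimal sketch of both follows. The importance scores, the stored previous correlation, the tolerance of 0.1, and all function names are hypothetical; in practice the scores would come from whichever feature selection method the team uses.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def top_features(importances, k):
    """Names of the k features with the highest importance score."""
    return sorted(importances, key=importances.get, reverse=True)[:k]


def correlation_drifted(previous_r, current_r, tolerance=0.1):
    """True if the correlation moved by more than `tolerance` (assumed value)."""
    return abs(current_r - previous_r) > tolerance


# Hypothetical importance scores from the previous and the current QA run.
previous_importances = {"x1": 0.50, "x2": 0.30, "x1x2": 0.20}
current_importances = {"x1": 0.25, "x2": 0.45, "x1x2": 0.30}
ranking_changed = top_features(previous_importances, 2) != top_features(current_importances, 2)

# Made-up feature and outcome values; previous_r stands in for a stored result.
x1 = [1, 2, 3, 4, 5]
y = [2, 4, 6, 8, 10]
previous_r = 0.6
current_r = pearson(x1, y)
drifted = correlation_drifted(previous_r, current_r)

print(ranking_changed, round(current_r, 3), drifted)
```

Comparing only the top-k names keeps the importance test stable against small score fluctuations, while the tolerance on the correlation coefficient plays the same role for the relationship check. Both thresholds would need to be agreed with the data scientists.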

Summary

In this post, you learned about different aspects of testing features of Machine Learning models. In addition, you learned about different test cases that could form part of the test plan for testing the features of ML models.

Published at DZone with permission of Ajitesh Kumar, DZone MVB. See the original article here.
