Multi-Tenancy in Kubernetes Using Vcluster

Discover effortless multi-tenancy implementation in Kubernetes with Loft's vcluster project. Explore tenancy models and their pros/cons.

Kubernetes has revolutionized how organizations deploy and manage containerized applications, making it easier to orchestrate and scale applications across clusters. However, running multiple heterogeneous workloads on a shared Kubernetes cluster comes with challenges like resource contention, security risks, lack of customization, and complex management.

There are several approaches to implementing isolation and multi-tenancy within Kubernetes:

- Kubernetes namespaces: Namespaces allow some isolation by dividing cluster resources between different users. However, namespaces share the same physical infrastructure and kernel resources. So, there are limits to isolation and customization.

- Kubernetes distributions: Popular Kubernetes distributions like Red Hat OpenShift and Rancher support virtual clusters. These leverage Kubernetes-native capabilities like namespaces, RBAC, and network policies more efficiently. Other benefits include centralized control planes, pre-configured cluster templates, and easy-to-use management.

- Hierarchical namespaces: In a traditional Kubernetes cluster, each namespace is independent of the others, so users and applications in one namespace cannot access resources in another unless they are granted explicit permissions, and those permissions have to be repeated for every namespace. Hierarchical namespaces address this by letting you define parent-child relationships between namespaces: a user or application with permissions in the parent namespace automatically has them in all of its child namespaces. This makes it much easier to manage permissions across multiple namespaces.

- Vcluster project: The virtual cluster (vcluster) project addresses these pain points by dividing a physical Kubernetes cluster into multiple isolated software-defined clusters. vcluster allows organizations to provide development teams, applications, and customers with dedicated Kubernetes environments with guaranteed resources, security policies, and custom configurations.

This post will dive deep into vcluster, its capabilities, different implementation options, use cases, and challenges. We will also look into the best practices for maximizing utilization and simplifying the management of vcluster.

What Is Vcluster?

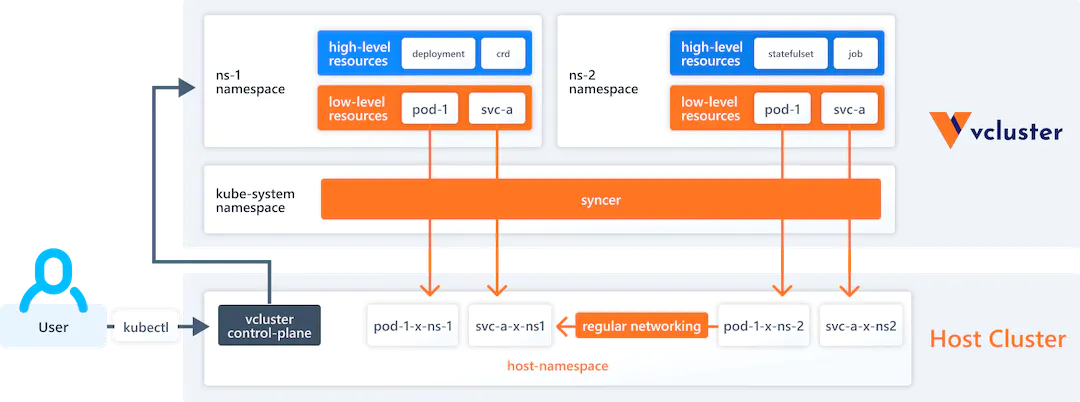

vcluster is an open-source tool that allows you to create and manage virtual Kubernetes clusters. A virtual Kubernetes cluster is a fully functional Kubernetes cluster that runs on top of another Kubernetes cluster. vcluster works by creating a virtual cluster inside a namespace of the underlying Kubernetes cluster. The virtual cluster has its own control plane, but it shares the worker nodes and networking of the underlying cluster. This makes vcluster a lightweight solution that can be deployed on any Kubernetes cluster.

When you create a vcluster, the vcluster CLI deploys the virtual cluster's control plane as pods in a namespace of the underlying cluster. You do not provision separate worker nodes for it, because workloads in the virtual cluster are scheduled onto the worker nodes of the underlying cluster. You can then deploy workloads to the virtual cluster using the kubectl CLI.

You can learn more about vcluster on the vcluster website.

Benefits of Using Vcluster

Resource Isolation

vcluster allows you to allocate a portion of the central cluster's resources like CPU, memory, and storage to individual virtual clusters. This prevents noisy neighbor issues when multiple teams share the same physical cluster. Critical workloads can be assured of the resources they need without interference.
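One common way to cap what a single virtual cluster can consume is a standard Kubernetes ResourceQuota on the host namespace that backs it, since the virtual cluster's pods end up running there. The sketch below only renders such a manifest; the quota name, namespace, and limits are hypothetical example values, not something vcluster prescribes.

```shell
# Render a ResourceQuota for the host namespace that backs one vcluster.
# The quota name, namespace, CPU, and memory values are illustrative assumptions.
render_quota() {
  ns="$1"; cpu="$2"; mem="$3"
  cat <<EOF
apiVersion: v1
kind: ResourceQuota
metadata:
  name: vcluster-quota
  namespace: ${ns}
spec:
  hard:
    requests.cpu: "${cpu}"
    requests.memory: ${mem}
    limits.cpu: "${cpu}"
    limits.memory: ${mem}
EOF
}

# Print the manifest for review; to apply it, pipe the output into: kubectl apply -f -
render_quota vcluster-my-first-vcluster 4 8Gi
```

Note that once a quota covers limits, pods in that namespace must declare CPU and memory limits to be admitted, so a LimitRange with defaults is often added alongside it.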

Access Control

With vcluster, access policies can be implemented at the virtual cluster level, ensuring only authorized users have access. For example, sensitive workloads like financial applications can run in an isolated vcluster. Restricting access is much simpler compared to namespace-level policies.

Customization

vcluster allows extensive customization for individual teams' needs: each virtual cluster can have its own Kubernetes version, network policies, ingress rules, and resource quotas. Developers can be given permission to modify their own vcluster without impacting others.

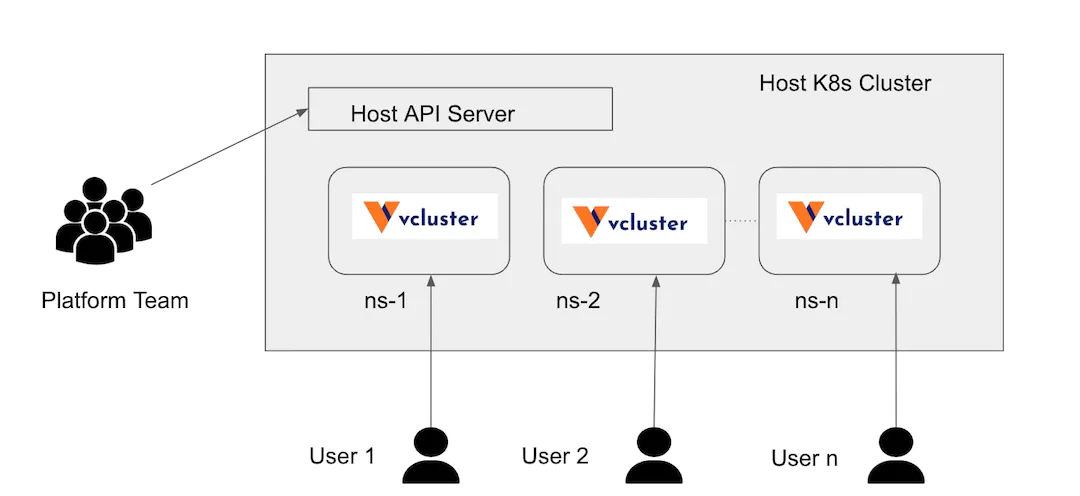

Multitenancy

Organizations must often provide Kubernetes access to multiple internal teams or external customers. vcluster makes multi-tenancy easy to implement by creating separate isolated environments in the same physical cluster.

Easy Scaling

Additional vclusters can be quickly spun up or torn down to handle dynamic workloads and scaling requirements. New development and testing environments can be provisioned quickly without having to scale the entire physical cluster.

Workload Isolation Approaches Before Vcluster

Organizations have leveraged various Kubernetes native features to enable some workload isolation before virtual clusters emerged as a solution:

- Namespaces: Namespaces segregate cluster resources between different teams or applications. They provide basic isolation via resource quotas and network policies. However, there is no hypervisor-level isolation.

- Network Policies: Granular network policies restrict communication between pods and namespaces. This creates network segmentation between workloads. However, resource contention can still occur.

- Taints and Tolerations: Applying taints to nodes prevents pods from being scheduled onto them unless the pods carry matching tolerations. This makes it possible to reserve certain nodes for specific workloads.

- Cloud Virtual Networks: On public clouds, using multiple virtual networks helps isolate Kubernetes cluster traffic. But pods within a cluster can still communicate.

- Third-Party Network Plugins: CNI plugins like Calico, Weave, and Cilium enable building overlay networks and fine-grained network policies to segregate traffic.

- Custom Controllers: Developing custom Kubernetes controllers allows programmatically isolating resources. But this requires significant programming expertise.
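As an illustration of the network policy approach listed above, the following sketch renders a NetworkPolicy that only admits ingress traffic from pods in the same namespace. The policy name and the team-a namespace are hypothetical, and enforcement assumes the cluster runs a CNI plugin that implements network policies.

```shell
# Render a NetworkPolicy that allows ingress only from pods in the same namespace.
# The policy name and namespace are illustrative assumptions.
render_same_namespace_policy() {
  ns="$1"
  cat <<EOF
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: allow-same-namespace
  namespace: ${ns}
spec:
  podSelector: {}
  policyTypes:
    - Ingress
  ingress:
    - from:
        - podSelector: {}
EOF
}

# Print the manifest for review; to apply it, pipe the output into: kubectl apply -f -
render_same_namespace_policy team-a
```

The empty podSelector selects every pod in the namespace, and the from clause without a namespaceSelector matches only pods in that same namespace, so traffic from other namespaces is dropped.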

Demo of Vcluster

Install Vcluster CLI

Requirements:

- kubectl (check via kubectl version)

- helm v3 (check with helm version)

- a working kube-context with access to a Kubernetes cluster (check with kubectl get namespaces).
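The first two requirements can be checked in one go with a small helper like the one below. It is a minimal sketch: the function name missing_tools is made up for this example, and it only verifies that the binaries are on PATH, not their versions or cluster access.

```shell
# Print the names of any given commands that are not found on PATH.
missing_tools() {
  missing=""
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || missing="${missing}${missing:+ }${tool}"
  done
  printf '%s' "$missing"
}

missing="$(missing_tools kubectl helm)"
if [ -n "$missing" ]; then
  echo "Missing prerequisites: $missing"
else
  echo "kubectl and helm found on PATH"
fi
```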

Use the following command to download the vcluster CLI binary for arm64-based Ubuntu machines:

curl -L -o vcluster "https://github.com/loft-sh/vcluster/releases/latest/download/vcluster-linux-arm64" && sudo install -c -m 0755 vcluster /usr/local/bin && rm -f vcluster

To confirm that the vcluster CLI is successfully installed, run:

vcluster --version

For installation on other platforms, refer to the Install vcluster CLI page in the vcluster documentation.
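If you script the download for more than one machine type, the asset name can be derived from uname output. This is a sketch under an assumption: it presumes release assets follow the vcluster-<os>-<arch> pattern seen in vcluster-linux-arm64 above, so confirm the exact names on the releases page before relying on it. The helper name vcluster_asset is made up for this example.

```shell
# Map `uname -s` and `uname -m` values to a vcluster release asset name.
# Assumption: assets are named vcluster-<os>-<arch>, as in vcluster-linux-arm64.
vcluster_asset() {
  os="$(printf '%s' "$1" | tr '[:upper:]' '[:lower:]')"
  case "$2" in
    x86_64|amd64) arch="amd64" ;;
    aarch64|arm64) arch="arm64" ;;
    *) echo "unsupported architecture: $2" >&2; return 1 ;;
  esac
  printf 'vcluster-%s-%s\n' "$os" "$arch"
}

# Print the download URL for the current machine (nothing is downloaded here).
asset="$(vcluster_asset "$(uname -s)" "$(uname -m)")" &&
  echo "https://github.com/loft-sh/vcluster/releases/latest/download/${asset}"
```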

Deploy Vcluster

Let's create a virtual cluster named my-first-vcluster:

vcluster create my-first-vcluster

Connect to the Vcluster

To connect to the vcluster, enter the following command:

vcluster connect my-first-vcluster

Use kubectl to list the namespaces in the connected vcluster:

kubectl get namespaces

Deploy an Application to the Vcluster

Now, let's deploy a sample nginx deployment inside the vcluster. To create a deployment:

kubectl create namespace demo-nginx

kubectl create deployment nginx-deployment -n demo-nginx --image=nginx

This will isolate the application in a namespace demo-nginx inside the vcluster.

You can check that this demo deployment will create pods inside the vcluster:

kubectl get pods -n demo-nginx

Check Deployments From the Host Cluster

Now that we have confirmed the deployments in the vcluster, let us now try to check the deployments from the host cluster.

To disconnect from the vcluster:

vcluster disconnect

This will move the kube context back to the host cluster. Now, let us check if there are any deployments available in the host cluster.

kubectl get deployments -n vcluster-my-first-vcluster

There will be no resources found in the vcluster-my-first-vcluster namespace. This is because the Deployment object exists only inside the vcluster and is not visible from the host cluster.

Now, let us check which pods are running in the vcluster's namespace on the host cluster using the following command.

kubectl get pods -n vcluster-my-first-vcluster

Voila! We can now see the nginx pod running in the vcluster's namespace on the host cluster.
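The pod shows up on the host under a rewritten name so that pods from different virtual namespaces do not collide in the single host namespace. The helper below sketches the naming pattern as commonly observed with vcluster, pod name, then virtual namespace, then vcluster name, joined by -x-. Treat this as an assumption: very long names are shortened differently, and the convention may vary between versions. The pod name suffix used here is hypothetical.

```shell
# Sketch of the host-side name for a pod synced from a vcluster.
# Assumption: <pod>-x-<virtual namespace>-x-<vcluster name>; not a guaranteed contract.
host_pod_name() {
  printf '%s-x-%s-x-%s\n' "$1" "$2" "$3"
}

# "nginx-deployment-abc12" stands in for whatever pod name the deployment generated.
host_pod_name nginx-deployment-abc12 demo-nginx my-first-vcluster
```

Comparing this against the actual kubectl get pods output on the host cluster is the quickest way to confirm the pattern for your version.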

Vcluster Use Cases

Virtual clusters enable several important use cases by providing isolated and customizable Kubernetes environments within a single physical cluster. Let's explore some of these in more detail:

Development and Testing Environments

Allocating dedicated virtual clusters for developer teams allows them to fully control the configuration without affecting production workloads or other developers. Teams can customize their vclusters with required Kubernetes versions, network policies, resource quotas, and access controls. Development teams can rapidly spin up and tear down vclusters to test different configurations. Since vclusters provide guaranteed compute and storage resources, developers don't have to compete. They also won't impact the performance of applications running in other vclusters.

Production Application Isolation

Enterprise applications like ERP, CRM, and financial systems require predictable performance, high availability, and strict security. Dedicated vclusters allow these production workloads to operate unaffected by other applications. Mission-critical applications can be allocated reserved capacity to avoid resource contention. Custom network policies guarantee isolation. Vclusters also allow granular role-based access control to meet regulatory compliance needs. Rather than overprovisioning large clusters to avoid interference, vclusters provide guaranteed resources at a lower cost.

Multitenancy

Service providers and enterprises with multiple business units often need to securely provide Kubernetes access to different internal teams or external customers. vclusters simplify multi-tenancy by creating separate self-service environments for each tenant with appropriate resource limits and access policies applied. Providers can easily onboard new customers by spinning up additional vclusters. This removes noisy neighbor issues and allows a high density of workloads by packing vclusters according to actual usage rather than peak needs.

Regulatory Compliance

Heavily regulated industries like finance and healthcare have strict security and compliance requirements around data privacy, geography, and access controls. Dedicated vclusters with internal network segmentation, role-based access control, and resource isolation make it easier to host compliant workloads safely alongside other applications in the same cluster.

Temporary Resources

vclusters make it possible to quickly spin up temporary Kubernetes environments for use cases like:

- Testing cluster upgrades: New Kubernetes versions can be deployed to lower environments with no downtime or impact on production.

- Evaluating new applications: Applications can be deployed into disposable vclusters instead of shared dev clusters to prevent conflicts.

- Capacity spikes: New vclusters provide burst capacity for traffic spikes versus overprovisioning the entire cluster.

- Special events: vclusters can be created temporarily for workshops, conferences, and other events.

Once the need is over, these vclusters can simply be deleted with no lasting footprint on the cluster.
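A disposable environment of this kind can be scripted as create, run, delete. The sketch below defaults to a dry run that only prints the commands; the function names and the upgrade-test cluster name are hypothetical, and the --connect=false flag and the connect ... -- form should be checked against vcluster --help for your CLI version.

```shell
# DRY_RUN=1 (the default here) only prints commands; set DRY_RUN=0 to execute them.
DRY_RUN="${DRY_RUN:-1}"

run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Create a vcluster, run one command against it, then delete it again.
ephemeral_vcluster() {
  name="$1"; shift
  run vcluster create "$name" --connect=false || return 1
  run vcluster connect "$name" -- "$@"
  status=$?
  # Clean up regardless of whether the command succeeded.
  run vcluster delete "$name"
  return "$status"
}

ephemeral_vcluster upgrade-test kubectl get namespaces
```

Deleting the vcluster even when the command fails keeps failed test runs from leaving stray environments behind.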

Workload Consolidation

As organizations scale their Kubernetes footprint, there is a need to consolidate multiple clusters onto shared infrastructure without interfering with existing applications. Migrating applications into vclusters provides logical isolation and customization, allowing them to run seamlessly alongside other workloads. This improves utilization and reduces operational overhead. vclusters allow enterprise IT to provide a consistent Kubernetes platform across the organization while preserving isolation.

In summary, vclusters are an essential tool for optimizing Kubernetes environments via workload isolation, customization, security, and density. The use cases highlight how they benefit diverse needs from developers to Ops to business units within an organization.

Challenges With Vclusters

While delivering significant benefits, some downsides to weigh include:

Complexity

Managing multiple virtual clusters, albeit smaller ones, introduces more operational overhead compared to a single large Kubernetes cluster. Additional tasks include:

- Provisioning and configuring multiple control planes.

- Applying security policies and access controls consistently across vclusters.

- Monitoring and logging across vclusters.

- Maintaining designated resources and capacity for each vcluster.

For example, a cluster administrator has to configure and update RBAC policies across 20 vclusters rather than a single cluster. This takes more effort compared to the centralized management of a single cluster. The static IP addresses and ports on Kubernetes might cause conflicts or errors.
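Part of this overhead can be reduced with scripting. The sketch below generates one command per vcluster to apply the same RBAC manifest; the file name rbac.yaml and the team-a, team-b, team-c cluster names are hypothetical, and it assumes the vclusters can be reached with vcluster connect <name> without extra flags such as a namespace.

```shell
# Print one "apply" command per vcluster so the rollout can be reviewed first.
# Assumption: each vcluster is reachable via `vcluster connect <name>` as-is.
rollout_commands() {
  manifest="$1"; shift
  for vc in "$@"; do
    printf 'vcluster connect %s -- kubectl apply -f %s\n' "$vc" "$manifest"
  done
}

rollout_commands rbac.yaml team-a team-b team-c
# After reviewing the output, it can be executed with:
#   rollout_commands rbac.yaml team-a team-b team-c | sh
```

Printing the commands before running them gives a simple review step, which matters more as the number of vclusters grows.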

Resource Allocation and Management

Balancing the resource consumption and performance of vclusters can be tricky, as they may have different demands or expectations.

For example, vclusters may need to scale up or down depending on the workload or share resources with other vclusters or namespaces. A vcluster sized for an application's peak demand may have excess unused capacity during non-peak periods that sits idle and cannot be leveraged by other vclusters.

Limited Customization

The ability to customize vclusters varies across implementations. Namespaces offer the least flexibility, while Cluster API provides the most. Tools like OpenShift balance customization with simplicity. For example, namespaces cannot run different Kubernetes versions or network plugins. The Cluster API allows full customization but with more complexity.

Conclusion

Vcluster empowers Kubernetes users to customize, isolate, and scale workloads within a shared physical cluster. By allocating dedicated control plane resources and access policies, vclusters provide strong technical isolation. For use cases like multitenancy, vclusters deliver simplified and more secure Kubernetes management.

Vcluster can also reduce Kubernetes cost overhead and is well suited to ephemeral environments. Tools like OpenShift, Rancher, and Kubernetes Cluster API make deploying and managing vclusters much easier. As adoption increases, we can expect more innovations in the vcluster space to further simplify operations and maximize utilization. While vclusters have some drawbacks, for many organizations, the benefits outweigh the added complexity.

Published at DZone with permission of Pavan Shiraguppi. See the original article here.

