Reinforcement Learning for the Enterprise

Reinforcement learning is a first step towards artificial intelligence that can survive in a variety of environments instead of being tied to certain rules or models.


Humans have a unique ability to adapt to dynamic environments and to learn from their surroundings and failures. Machines lack this ability, and artificial intelligence seeks to correct that deficiency. However, traditional supervised machine learning techniques require large amounts of good historical data to learn patterns and then act on them. Reinforcement learning is an emerging AI technique that goes beyond traditional supervised learning: it learns and improves its performance based on the actions it takes and the feedback it receives from its surroundings, much like the way humans learn. Reinforcement learning is a first step towards artificial intelligence that can survive in a variety of environments instead of being tied to certain rules or models. It is an important and exciting area for enterprises to explore when they want their systems to operate without expert supervision. Let’s take a deep dive into what reinforcement learning encompasses, followed by some of its applications in various industries.

So, What Constitutes Reinforcement Learning?

Let’s think of the payroll staff whom we all have in our organizations. The compensation and benefits (C&B) team comes up with different rewards and recognition programs every year to award employees for various achievements.

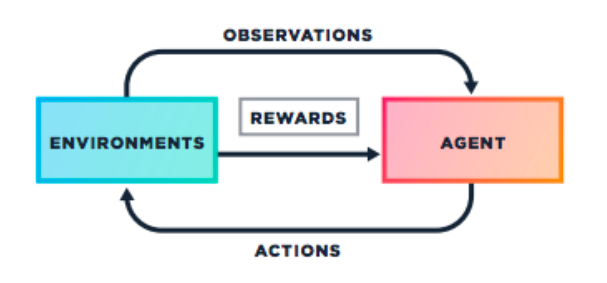

These achievements are always laid down in line with an organization’s business goals. With the desire to win these prizes and excel in their careers, employees try to maximize their potential and give their best performance. They might not receive the award at their first attempt. However, their manager provides feedback on what they need to improve to succeed. They learn from these mistakes and try to improve their performance the next year. This helps an organization reach its goals by maximizing the potential of its employees. This is how reinforcement learning works. In technical terms, we can consider the employees as agents, the C&B programs as rewards, and the organization as the environment. So, reinforcement learning is a process in which an agent interacts with its environment to learn and to receive the maximum possible reward. The agent achieves its objective by taking the best possible actions. It is not told what steps to take. Instead, it discovers the actions that yield the best results.

There are five elements associated with reinforcement learning:

An agent is an intelligent program that is the primary component and decision-maker in the reinforcement learning environment.

The environment is the surrounding area, which has a goal for the agent to perform.

An internal state, which is maintained by an agent to learn the environment.

Actions, which are the tasks carried out by the agent in an environment.

Rewards, which are used to train the agents.
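To make the five elements concrete, here is a minimal Python sketch of the interaction loop between an agent and an environment. The corridor environment, the random agent, the reward values, and every name in the code are hypothetical and chosen only for illustration. They do not come from any particular library.

```python
import random


class CorridorEnvironment:
    """Hypothetical environment: cells 0..length-1, with the goal at the last cell."""

    def __init__(self, length=5):
        self.length = length
        self.position = 0

    def reset(self):
        """Put the agent back at the first cell and return that state."""
        self.position = 0
        return self.position

    def step(self, action):
        """Apply an action (-1 = left, +1 = right) and return (state, reward, done)."""
        # The walls clamp the position to the corridor.
        self.position = min(max(self.position + action, 0), self.length - 1)
        done = self.position == self.length - 1
        # Assumed reward scheme: small penalty per move, bonus at the goal.
        reward = 1.0 if done else -0.1
        return self.position, reward, done


class RandomAgent:
    """Hypothetical agent that acts at random and only tracks its total reward."""

    def __init__(self, seed=0):
        self.rng = random.Random(seed)
        self.total_reward = 0.0  # internal state kept by the agent

    def act(self, state):
        return self.rng.choice([-1, 1])

    def observe(self, reward):
        self.total_reward += reward


def run_episode(env, agent, max_steps=1000):
    """Run one episode and return the number of steps taken."""
    state = env.reset()
    for step in range(1, max_steps + 1):
        action = agent.act(state)               # the agent chooses an action
        state, reward, done = env.step(action)  # the environment responds
        agent.observe(reward)                   # the reward is fed back to the agent
        if done:
            return step
    return max_steps


if __name__ == "__main__":
    env = CorridorEnvironment()
    agent = RandomAgent(seed=0)
    steps = run_episode(env, agent)
    print("steps:", steps, "total reward:", agent.total_reward)
```

This agent never learns. It only shows where state, action, and reward flow, which is the loop that a learning algorithm plugs into.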

Fundamentals of the Learning Approach

I have just started learning about artificial intelligence. One way for me to learn is to pick up a machine learning algorithm from the Internet, choose some data sets, and keep applying the algorithm to the data. With this approach, I might succeed in creating some good models. However, most of the time, I might not get the expected result. This way of learning, which keeps reusing what I already know, is the exploitation method, and on its own it is not the optimal way to learn. Another way to learn is the exploration mode, where I try out different algorithms and choose the one that suits my data set. However, this might not work out either, so I have to find a proper balance between the two ways of learning to create the best model. This is known as the exploration-exploitation trade-off, and it forms the rationale behind the reinforcement learning method. Ideally, we should optimize this trade-off by defining a good policy for learning.
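One common way to balance the two modes is an epsilon-greedy rule: with a small probability the learner explores a random option, and otherwise it exploits the option that looks best so far. The article does not name this rule, so treat the sketch below as one possible illustration. The "arms" (think of them as candidate algorithms), their average payoffs, and the noise model are all assumptions.

```python
import random


def epsilon_greedy_bandit(true_means, epsilon=0.1, steps=5000, seed=0):
    """Pull arms with an epsilon-greedy rule; return pull counts and estimated means.

    true_means are hypothetical average rewards for each option, unknown to the learner.
    """
    rng = random.Random(seed)
    n_arms = len(true_means)
    counts = [0] * n_arms
    estimates = [0.0] * n_arms

    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: try a random option.
            arm = rng.randrange(n_arms)
        else:
            # Exploit: use the option with the best estimate so far.
            arm = max(range(n_arms), key=lambda a: estimates[a])

        # Assumed reward model: the arm's true mean plus Gaussian noise.
        reward = rng.gauss(true_means[arm], 1.0)

        # Update the running average for the chosen arm.
        counts[arm] += 1
        estimates[arm] += (reward - estimates[arm]) / counts[arm]

    return counts, estimates


if __name__ == "__main__":
    counts, estimates = epsilon_greedy_bandit([0.0, 1.0, 3.0])
    print("pull counts:", counts)
    print("estimated means:", [round(e, 2) for e in estimates])
```

Setting `epsilon` to 0 gives pure exploitation, and setting it to 1 gives pure exploration. Values in between trade one against the other.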

This brings us to the mathematical framework known as the Markov Decision Process, which is used to model decisions using states, actions, and rewards. It consists of:

S – Set of states

A – Set of actions

R – Reward functions

P – Policy

V – Value

So, in a Markov Decision Process (MDP), an agent (the decision-maker) is in some state (S). The agent takes an action (A) to transition to a new state. Based on this transition, the agent receives a reward (R), which can be positive or negative. The value (V) is the total reward the agent can expect to collect over time from a given state. The policy (P) defines which action the agent takes in each state. Thus, the task of the agent is to collect the best rewards by choosing the right policy.
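The components listed above can be written down as plain data. The following Python sketch uses a hypothetical three-state MDP. The state names, the reward numbers, and the discount factor `gamma` are assumptions made for illustration and do not appear in the article. It compares two policies by the discounted reward each one collects.

```python
GOAL = "goal"
STATES = ["start", "middle", GOAL]   # S: set of states
ACTIONS = ["stay", "advance"]        # A: set of actions

# Deterministic transitions: (state, action) -> next state.
TRANSITIONS = {
    ("start", "stay"): "start",
    ("start", "advance"): "middle",
    ("middle", "stay"): "middle",
    ("middle", "advance"): GOAL,
}

# R: reward received for taking an action in a state.
REWARDS = {
    ("start", "stay"): -1.0,
    ("start", "advance"): -1.0,
    ("middle", "stay"): -1.0,
    ("middle", "advance"): 10.0,
}


def discounted_return(policy, start, gamma=0.9, max_steps=20):
    """Follow a policy (state -> action) from start and sum the discounted rewards."""
    state, total, discount = start, 0.0, 1.0
    for _ in range(max_steps):
        if state == GOAL:
            break
        action = policy[state]
        total += discount * REWARDS[(state, action)]
        discount *= gamma
        state = TRANSITIONS[(state, action)]
    return total


# Two candidate policies (P): always advance, or never move.
eager_policy = {"start": "advance", "middle": "advance"}
lazy_policy = {"start": "stay", "middle": "stay"}

if __name__ == "__main__":
    print("eager:", discounted_return(eager_policy, "start"))
    print("lazy:", discounted_return(lazy_policy, "start"))
```

The number returned for a policy and a start state plays the role of the value (V) here, and the better policy is the one with the higher value.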

Q-Learning

The MDP forms the basis of Q-learning, one of the methods of reinforcement learning. It is a strategy that finds the optimal action-selection policy for an MDP. It optimizes the behavior of a system through trial and error. Q-learning updates its policy (the state-action mapping) based on the rewards it receives.
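In its standard textbook form, the Q-learning update can be written as follows. The symbols α (learning rate) and γ (discount factor) do not appear in the article and are introduced here as assumptions. Here s′ is the state reached after taking action a in state s, and r is the reward received for that step.

```latex
Q(s, a) \leftarrow Q(s, a) + \alpha \left[ r + \gamma \max_{a'} Q(s', a') - Q(s, a) \right]
```

With α = 1 the update simply replaces the old estimate with r + γ max Q(s′, a′), which is the form often used in introductory examples with deterministic environments.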

A simple representation of the Q-learning algorithm is as follows.

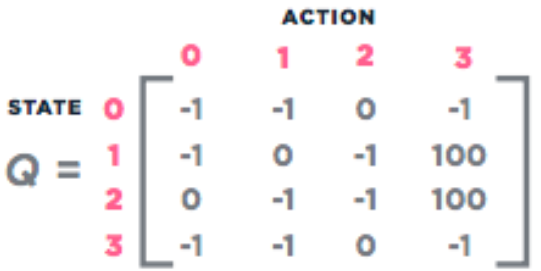

STEP 1: Define the state-action reward matrix (R-Matrix), which encodes the possible actions in each state. The rows of the matrix represent the current state of the agent, and the columns represent the possible actions leading to the next state as shown in the figure below:

Note: The -1 represents no direct link between the nodes. For example, the agent cannot traverse from state 0 to state 3.

STEP 2: Initialize the state-action matrix (Q-Matrix) to zero or the minimum value.

STEP 3: For each episode:

a. Choose one possible action.

b. Perform the action.

c. Measure the reward.

d. Repeat steps a to c of STEP 3 until it finds the action that yields the maximum Q value.

e. Update the Q value.

STEP 4: Repeat until the goal state has been reached.
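The steps above can be sketched in a few lines of Python. The reward matrix below is a hypothetical six-state graph with state 5 as the goal. It is an assumption and not a transcription of the article's figure, although it follows the same convention that -1 marks a missing link (for example, from state 0 to state 3). The learning rate `alpha`, the discount factor `gamma`, and the purely random choice of actions during training are also assumptions.

```python
import random

# Hypothetical reward matrix: R[s][a] is the reward for moving from state s to
# state a. -1 means no direct link; 100 is the reward for reaching the goal.
R = [
    [-1, -1, -1, -1,  0,  -1],
    [-1, -1, -1,  0, -1, 100],
    [-1, -1, -1,  0, -1,  -1],
    [-1,  0,  0, -1,  0,  -1],
    [ 0, -1, -1,  0, -1, 100],
    [-1,  0, -1, -1,  0, 100],
]
GOAL = 5


def valid_actions(state):
    """Return the states that can be reached directly from the given state."""
    return [a for a, r in enumerate(R[state]) if r != -1]


def train(episodes=500, alpha=1.0, gamma=0.8, seed=0):
    """Learn a Q-matrix by random exploration of the graph."""
    rng = random.Random(seed)
    n = len(R)
    Q = [[0.0] * n for _ in range(n)]         # initialize the Q-matrix to zero
    for _ in range(episodes):                 # one pass per episode
        state = rng.randrange(n)              # start each episode in a random state
        while state != GOAL:                  # repeat until the goal state is reached
            action = rng.choice(valid_actions(state))  # choose one possible action
            next_state = action               # performing the action moves the agent
            reward = R[state][action]         # measure the reward
            # Best Q value available from the next state.
            best_next = max(Q[next_state][a] for a in valid_actions(next_state))
            # Update the Q value for the state-action pair just tried.
            Q[state][action] += alpha * (reward + gamma * best_next - Q[state][action])
            state = next_state
    return Q


def greedy_path(Q, start, max_steps=10):
    """Follow the highest-valued action from start until the goal is reached."""
    path = [start]
    state = start
    while state != GOAL and len(path) <= max_steps:
        state = max(valid_actions(state), key=lambda a: Q[state][a])
        path.append(state)
    return path


if __name__ == "__main__":
    Q = train()
    print("path from state 2:", greedy_path(Q, 2))
```

Training explores at random, and only `greedy_path` exploits what was learned. A practical agent would usually mix the two during training as well.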

Getting Started With Reinforcement Learning

Luckily, we need not code the algorithms ourselves. Various AI communities and organizations have already done this challenging work and made it freely available. The only thing we need to do is to think of the problem that exists in our enterprises, map it to a possible reinforcement learning solution, and implement the model.

Keras-RL implements state-of-the-art deep reinforcement learning algorithms in Python and integrates with the deep learning library Keras. Due to this integration, it can work with either Theano or TensorFlow and can run on either CPU or GPU machines. It implements Deep Q-learning (DQN), Double DQN (which reduces the overestimation bias introduced by the max operator in Q-learning), DDPG, Continuous DQN, and CEM.

PyBrain is another Python-based reinforcement learning, artificial intelligence, and neural network package that implements standard RL algorithms like Q-Learning and more advanced ones such as neural fitted Q-iteration. It also includes some black-box policy optimization methods (like CMA-ES, genetic algorithms, etc.).

OpenAI Gym is a toolkit that provides a simple interface to a growing collection of reinforcement learning tasks. You can use it with Python today, and support for other languages is expected in the future.

TeachingBox is a Java-based reinforcement learning framework. It provides a classy and convenient toolbox for easy experimentation with different reinforcement algorithms. It has embedded techniques to relieve the robot developer from programming sophisticated robot behaviors.

Possible Use Cases for Enterprises

Reinforcement learning finds extensive applications in scenarios that involve human intervention and cannot be solved by rule-based automation or traditional machine learning algorithms. These include robotic process automation, packing of materials, self-navigating cars, strategic decisions, and much more.

1. Manufacturing

Reinforcement learning can be used to power the brains of industrial robots so that they learn by themselves. One notable example from the recent past is an industrial robot developed by a Japanese company, Fanuc, that learned a new job overnight. This robot used reinforcement learning to figure out how to pick up objects from containers with high precision. It recorded its every move and found the right path to identify and select the objects.

2. Digital Marketing

Enterprises can deploy reinforcement learning models to show advertisements to a user based on his or her activities. The model can learn which ad works best from user behavior and show it at the appropriate time in a suitably personalized format. This can take ad personalization to the next level, with the aim of maximizing returns.

3. Chatbots

Reinforcement learning can make dialogue more engaging. Instead of general rules or chatbots with supervised learning, reinforcement learning can select sentences that can take a conversation to the next level for collecting long-term rewards.

4. Finance

Reinforcement learning has immense applications in stock trading. It can be used to evaluate trading strategies that can maximize the value of financial portfolios.
