The Service Mesh in the Microservices World

In this article, take a look at the service mesh in the microservices world.


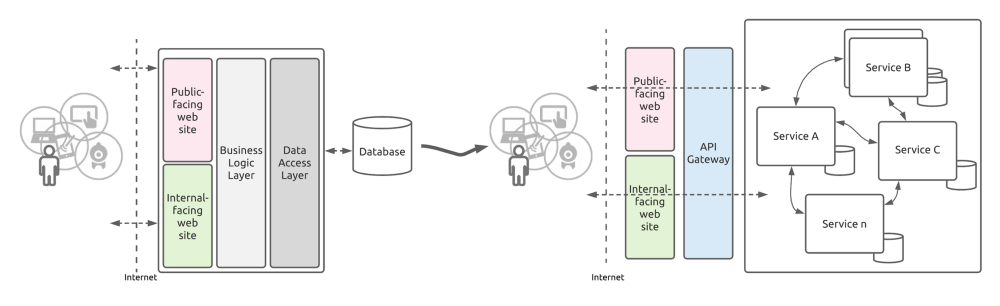

The software industry has come a long way, and software architecture has evolved a great deal along the way. 1-tier (single-node), 2-tier (client/server), 3-tier, and distributed architectures are some of the architectural patterns we have seen on this journey.

The Problem

Many software companies are moving from monolithic architecture to microservices architecture. While monolithic architecture has its benefits, it also has shortcomings that make it difficult to meet modern software development needs, and this is a large part of why microservices adoption keeps growing. Microservices architecture lets us deploy our applications more frequently, independently, and reliably.

Microservices architecture addresses many of the shortcomings of monolithic architecture. Improved fault isolation, smaller and faster deployments, scalability, reduced vendor and technology lock-in, improved productivity and speed, improved maintainability, and a stronger business focus are some of the benefits it introduces.

While microservices architecture provides many advantages over other architectures, it has its own set of challenges. Not everything is rosy in a microservices architecture: it has to deal with the same problems encountered when designing any distributed system. The idea behind microservices is that, instead of one large codebase, we have a set of smaller, independent services, each designed to provide the functionality of an individual business unit. With this approach, a modern software application can easily consist of hundreds of individual services working together.

Microservices architecture introduces a dependency on the network, which raises reliability questions. Network reliability, latency, bandwidth, and security are some of the network-related challenges we have to deal with. Implementing communication between services is challenging, and as the number of services increases, so does the number of interactions between them. Apart from service-to-service communication, we also have to monitor overall system health, tolerate faults, put logging and telemetry in place, handle multiple points of failure, and more. Debugging can also be harder, because a problem may lie in any of the many services or in the underlying network. Container-based deployments add yet another layer of complexity for application developers.

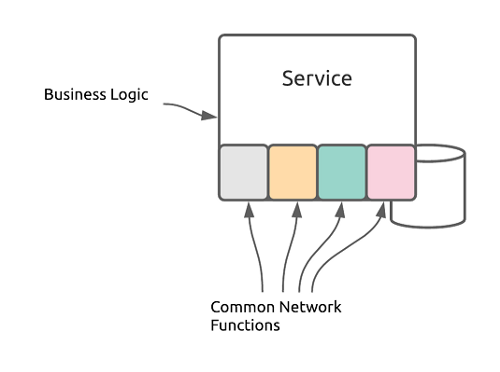

To overcome these challenges, each service needs common capabilities (monitoring, health checking, logging, telemetry, etc.) in place so that service-to-service communication is smooth and reliable. But building these capabilities into every single service takes a lot of effort, both in development and in maintenance.

As a result, developers ended up using 3rd-party libraries and components such as Eureka (service discovery), Ribbon (client-side load balancing), and Hystrix (circuit breaking) to provide common functionality such as service discovery, load balancing, circuit breaking, logging and metrics, telemetry, and more.

However, using 3rd-party libraries and components added a different set of challenges such as:

- tight coupling: the code and configuration for these 3rd-party libraries/components are tied to the business functionality within the services, so the application code becomes a mixture of business logic and library/component configuration

- the complexity of coding/configuration: each 3rd-party library/component may require a different programming or configuration language

- maintainability challenges: whenever these external libraries/components are upgraded, we have to update our application code, verify it, and deploy the changes

- difficulty in debugging/troubleshooting: because the service mixes business functionality with code/configuration for 3rd-party libraries/components, developers have to spend a lot of time understanding the code before they can identify an issue
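To make the coupling problem concrete, here is a minimal sketch in Python of what such a service can end up looking like. Everything in it is hypothetical and chosen for illustration: `REGISTRY`, `fetch_stock`, and `can_fulfil_order` are made-up names, the "network call" is a local stand-in, and no real library API is shown. The point is only how little of the function is business logic.

```python
import time

# Hypothetical in-process registry; a real setup would query a discovery server.
REGISTRY = {"inventory": ["10.0.0.1:8080", "10.0.0.2:8080"]}


def fetch_stock(address, item_id):
    """Stand-in for a network call; it always succeeds in this sketch."""
    return {"item": item_id, "in_stock": 3, "served_by": address}


def can_fulfil_order(item_id, quantity, max_retries=3):
    # Network concern: service discovery.
    instances = REGISTRY["inventory"]
    last_error = None
    for attempt in range(max_retries):
        # Network concern: client-side load balancing.
        address = instances[attempt % len(instances)]
        try:
            stock = fetch_stock(address, item_id)
        except ConnectionError as exc:
            # Network concern: retry with a growing delay.
            last_error = exc
            time.sleep(0.01 * (attempt + 1))
            continue
        # Business rule: the only line the domain actually cares about.
        return stock["in_stock"] >= quantity
    raise RuntimeError("inventory unavailable") from last_error
```

Only one line of `can_fulfil_order` expresses a business rule; the rest is plumbing that has to be maintained, upgraded, and debugged together with it.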

Although microservices architecture provides many benefits, these challenges make life difficult for developers. They led developers to look for a better alternative, and the result was the Service Mesh.

The Solution

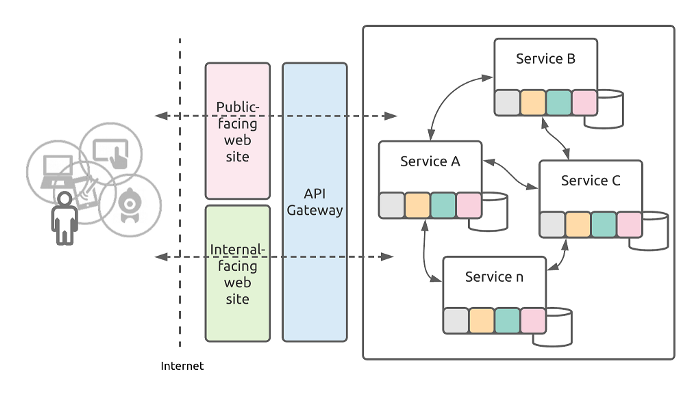

A Service Mesh can be defined as a dedicated infrastructure layer that handles inter-service communication in a microservices architecture, thereby reducing the complexity introduced by the challenges above. It lets us run a distributed microservices architecture efficiently and provides a uniform way to secure, connect, and monitor microservices.

The idea behind the service mesh is very simple. Don’t trouble your service code with additional infrastructure/ network-related details and let it focus only on the business functionalities it has to perform.

In general, Service Mesh provides features such as:

- Load balancing

- Service discovery

- Health checks

- Authentication

- Traffic management and routing

- Circuit breaking and failover policy

- Security and policy management

- Metrics and telemetry

- Fault injection
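As an illustration of one item on this list, circuit breaking, here is a minimal sketch in Python. It is not how any particular mesh implements the feature; the class name `CircuitBreaker`, its parameters, and the simplified closed/open/half-open handling are assumptions made for this example. After a number of consecutive failures the breaker "opens" and rejects calls immediately, then lets a trial call through once a timeout has elapsed.

```python
import time


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock          # injectable so the timeout can be simulated
        self.failures = 0
        self.opened_at = None       # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout:
                raise CircuitOpenError("circuit open; failing fast")
            # Timeout elapsed: half-open, so let this trial call through.
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # open, or re-open after a failed trial
            raise
        # Success: close the circuit and reset the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

A caller would wrap each outbound request, for example `breaker.call(send_request, payload)`, where `send_request` is whatever function performs the remote call. In a mesh, this logic lives in the proxy rather than in the service.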

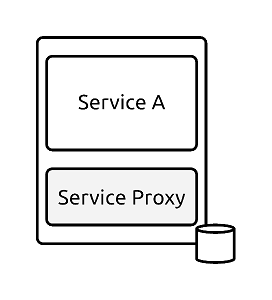

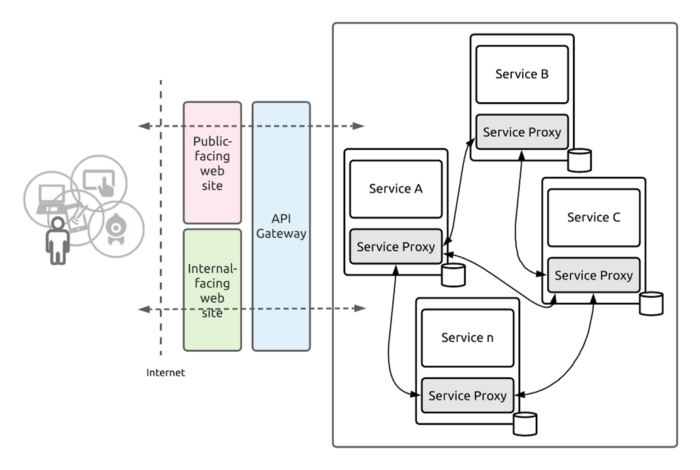

Service Mesh takes the complexity introduced by 3rd-party libraries/components out of your application and moves it into a separate layer called the “service proxy”, which handles it for you. These service proxies provide capabilities such as traffic management, circuit breaking, service discovery, authentication, monitoring, security, and more.

With the Service Mesh in place, we no longer need 3rd-party libraries/components inside every microservice to provide common network-related functionality such as configuration, routing, telemetry, logging, and circuit breaking. Service Mesh externalizes that functionality into a separate component/layer called the “service proxy”. Developers can then narrow down the root cause of an issue more easily, because it is clearer whether the problem is application-related or network-related. With the Service Mesh architecture, there is a clear separation of responsibilities between business functionality and network-related functionality.

In general, the Sidecar pattern is used to implement the Service Mesh architecture. In this pattern, a service mesh proxy is deployed alongside each service. These sidecar proxies abstract the complexity away from the application and handle functionality such as service discovery, traffic management, load balancing, and circuit breaking.
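The division of labour in the Sidecar pattern can be sketched roughly as follows. This is a toy, in-process model written in Python purely for illustration: in a real mesh the proxy is a separate process deployed next to the service and traffic is routed through it over the network. The names `SidecarProxy`, `send`, `mark_unhealthy`, `make_inventory`, and the registry layout are all hypothetical.

```python
import itertools


class SidecarProxy:
    """Toy stand-in for a sidecar: discovery, health filtering, round-robin."""

    def __init__(self, registry):
        # registry maps a service name to a list of (address, handler) pairs.
        self.registry = registry
        self.unhealthy = set()
        self._counters = {}

    def mark_unhealthy(self, address):
        # In a real mesh this would be driven by health checks.
        self.unhealthy.add(address)

    def send(self, service, request):
        # Service discovery plus health filtering.
        instances = [
            (address, handler)
            for address, handler in self.registry.get(service, [])
            if address not in self.unhealthy
        ]
        if not instances:
            raise ConnectionError(f"no healthy instance of {service!r}")
        # Round-robin load balancing across the healthy instances.
        counter = self._counters.setdefault(service, itertools.count())
        _address, handler = instances[next(counter) % len(instances)]
        return handler(request)


def make_inventory(address):
    """Build a fake inventory instance that reports which address served it."""
    def handler(request):
        return {"item": request["item"], "in_stock": 3, "served_by": address}
    return handler


def can_fulfil_order(proxy, item_id, quantity):
    # Business code: no discovery, balancing, or health logic in sight.
    stock = proxy.send("inventory", {"item": item_id})
    return stock["in_stock"] >= quantity
```

With a registry such as `{"inventory": [(a, make_inventory(a)) for a in ("10.0.0.1:8080", "10.0.0.2:8080")]}`, successive `send` calls alternate between the two instances, and marking one unhealthy routes everything to the other. The service function `can_fulfil_order` contains only the business rule.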

In summary, with a Service Mesh in place, developers who embrace microservices architecture get the following:

- No need to worry about implementing network-related functionalities as part of the service implementation

- No need to worry about using any 3rd-party libraries/ components for providing network-related functionalities

- Microservices do not talk to each other directly; all service-to-service communication goes through the “service proxy” layer, which we don't have to implement within each service

- Business functionality is no longer mixed with network-related functionality, which gives a clear separation between the two

- Developers can focus on business functionality and let the service mesh handle the underlying network concerns

- Platform/language independence while developing your services, because the service mesh works at the level of standard protocols such as HTTP, TCP, and gRPC

Conclusion

Microservices architecture is rapidly gaining ground in the software engineering industry because of its advantages over other architecture patterns. As more and more organizations move from monolithic architecture to microservices architecture, we as developers need to understand the challenges inherent in microservices and find remedies for them. The Service Mesh architecture solves some of the challenges introduced by microservices architecture, and it is worth considering for your next development project.

Now that we know the role and importance of Service Mesh in a microservices architecture, let’s look at the service mesh products/platforms we can use in our next development engagement. Linkerd, Istio, Consul, Kuma, and Open Service Mesh (OSM), together with Envoy Proxy (the proxy that several of these meshes build on), are some of the leading open source options. Most of them are battle-tested and ready for production use. I strongly recommend that you check them out, evaluate them, and use them in your next project.

Published at DZone with permission of Joy Rathnayake. See the original article here.

