What Is a Kubernetes CI/CD Pipeline?

A Kubernetes CI/CD pipeline is different from a traditional CI/CD pipeline. The primary difference is the containerization process.

Since organizations began migrating from monolithic applications to microservices, containerization technology has been on the rise. With applications running in hundreds or thousands of containers, an effective tool to manage and orchestrate those containers became essential.

Kubernetes (K8s), an open-source container orchestration tool originally developed at Google, became popular for features that improved the deployment process for companies. With its flexibility and scalability, Kubernetes has emerged as the leading container orchestration tool, and over 60% of companies had already adopted it as of 2022.

With more and more companies adopting containerization technology and Kubernetes clusters for deployment, it makes sense to implement CI/CD pipelines that deliver applications to Kubernetes in an automated fashion. So in this article, we’ll cover the following:

- What is a CI/CD pipeline?

- Why should you use CI/CD for Kubernetes?

- Various stages of Kubernetes app delivery.

- Automating the CI/CD process using the open-source Devtron platform.

What Is a CI/CD Pipeline?

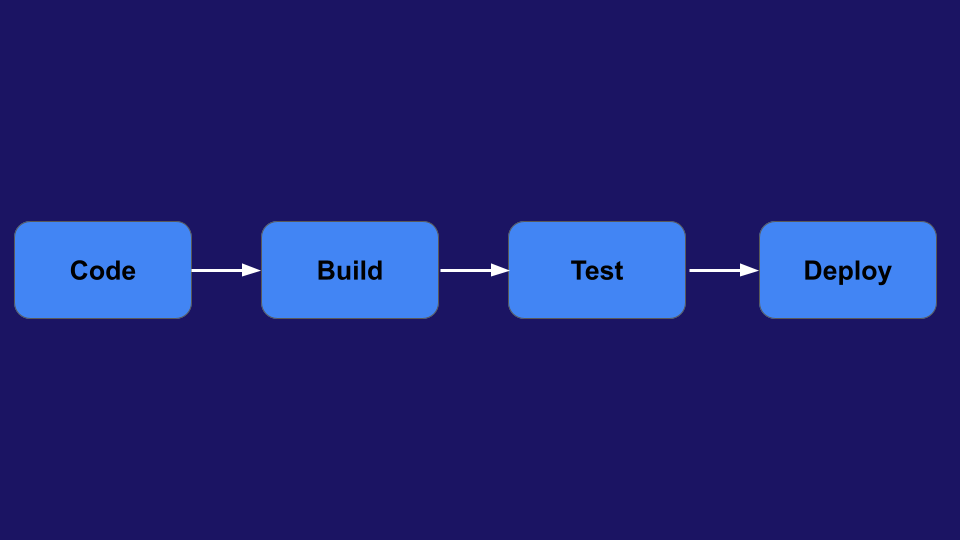

A Continuous Integration and Continuous Deployment (CI/CD) pipeline is an automated workflow that continuously integrates the code developers write and deploys it into a target environment with minimal human intervention.

Before CI/CD pipelines, developers manually took the code, built it into an application, and deployed it to testing servers. Then, once testers approved, developers would throw their code over the wall for the Ops team to deploy into production. This worked reasonably well for monolithic applications, when deployments happened once every couple of months.

But with the advent of microservices, developers started building smaller use cases faster and deploying them frequently. Manually handling the application after each code commit was repetitive, frustrating, and prone to errors.

This is when agile methodologies and DevOps principles flourished, with CI/CD at their core. The idea is to build and ship incremental changes into production faster and more frequently. A CI/CD pipeline automates the entire process so that high-quality code ships to production quickly and efficiently.

The Two Primary Stages of a CI/CD Pipeline

1. Continuous Integration or CI Pipeline

The central idea in this stage is to automatically build the software whenever developers commit new code. If changes land every day, there should be a mechanism to build and test them every day. This is sometimes referred to as the build pipeline. After passing multiple tests, the final application or artifact is pushed to a repository.

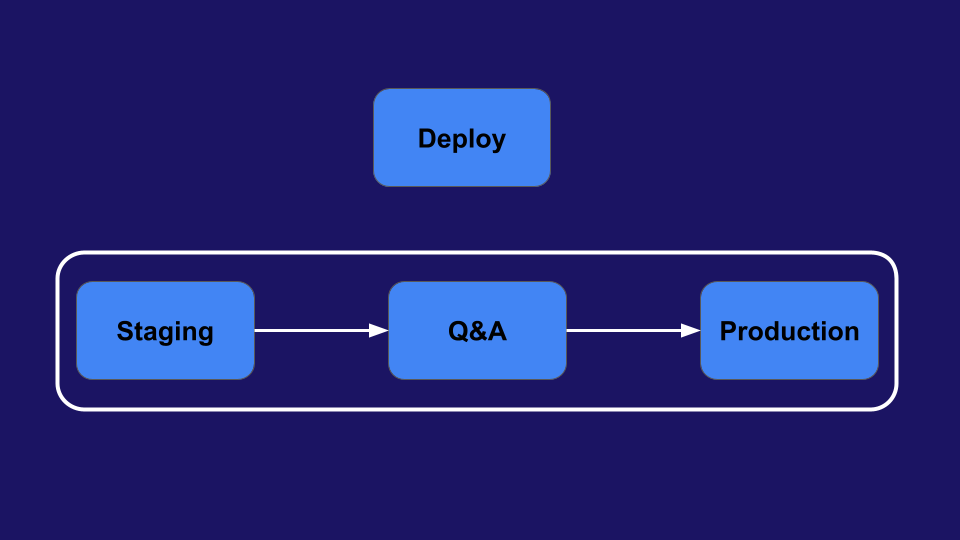
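The CI stage described above can be sketched as a small shell script that runs stages in order and stops at the first failure. This is only an illustration: the stage commands are `true` placeholders (assumptions), not the commands of any particular CI tool.

```shell
#!/usr/bin/env bash
# Minimal CI sketch: run each stage in order and stop at the first failure.
# The stage commands are placeholders (assumptions); swap in your real
# build, test, and push commands.

run_stage() {
  # $1 = stage name, remaining args = command to run
  local name="$1"
  shift
  echo "stage: $name"
  "$@"
}

ci_pipeline() {
  run_stage build true || return 1   # e.g. compile and package
  run_stage test  true || return 1   # e.g. unit and integration tests
  run_stage push  true || return 1   # e.g. upload the artifact
  echo "pipeline: ok"
}

ci_pipeline
```

Replacing any placeholder with a failing command makes `ci_pipeline` return early, so later stages never run.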

2. Continuous Deployment or CD Pipeline

The continuous deployment stage refers to pulling the artifact from the repository and deploying it frequently and safely. A CD pipeline is used to automate the deployment of applications into the test, staging, and production environments with less human intervention.

Note: People often use the term Continuous Delivery interchangeably with Continuous Deployment, but they’re not the same. As per the book Continuous Delivery: Reliable Software Releases Through Build, Test, and Deployment Automation by David Farley and Jez Humble, Continuous Delivery is the process of releasing changes of all types (including new features, configuration changes, bug fixes, and experiments) into production, or into the hands of users, safely and quickly in a sustainable way.

Continuous Delivery comprises the entire software delivery process, i.e., planning, building, testing, and releasing software to the market continuously. Continuous integration and continuous deployment are generally treated as two parts of it.

Now, let us discuss why to use CI/CD pipelines for Kubernetes applications.

Benefits of Using a CI/CD Pipeline for Kubernetes

By using a CI/CD pipeline for Kubernetes, one can reap several crucial benefits:

Reliable and Cheaper Deployments

A CI/CD pipeline deploying on Kubernetes facilitates a controlled release of the software, as DevOps engineers can set up staged releases, like blue-green and canary deployments. This helps achieve zero downtime during a release and reduces the risk of releasing the application to all users at once. Automating the entire SDLC (Software Development Lifecycle) with a CI/CD pipeline also lowers costs by cutting many of the fixed costs associated with the release process.

Faster Releases

Release cycles that used to take weeks or months have come down to days with CI/CD workflows. In fact, some organizations deploy multiple times a day. With a CI/CD pipeline, developers and the Ops team can work together and quickly resolve bottlenecks in the release process, including the rework that used to delay releases. With automated workflows, the team can release apps frequently and quickly without burning out.

High-Quality Products

One of the major benefits of implementing a CI/CD pipeline is that it integrates testing continuously throughout the SDLC. You can configure the pipeline to stop before the next stage if certain conditions are not met, such as failing the deployment pipeline if the artifact has not passed functional or security scanning tests. As a result, issues are detected early, and the chances of bugs reaching the production environment become slim. This ensures that quality is built into the products from the beginning, and the end users get better products.
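The stop condition described above can be sketched as a quality gate. This is a minimal sketch: it assumes an earlier stage has already produced two plain numbers, and the function name `quality_gate` is hypothetical.

```shell
# Quality gate sketch: block promotion when tests failed or critical
# vulnerabilities were found. Inputs are plain numbers that an earlier
# stage is assumed to have produced.

quality_gate() {
  # $1 = number of failed tests, $2 = number of critical vulnerabilities
  local failed_tests="$1"
  local critical_vulns="$2"

  if [ "$failed_tests" -gt 0 ]; then
    echo "gate: blocked ($failed_tests failed tests)"
    return 1
  fi
  if [ "$critical_vulns" -gt 0 ]; then
    echo "gate: blocked ($critical_vulns critical vulnerabilities)"
    return 1
  fi
  echo "gate: passed"
}

quality_gate 0 0 && echo "deploying"
quality_gate 0 2 || echo "deployment skipped"
```

Because the gate signals failure through its exit status, it can sit in front of a deploy command joined with `&&`.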

Figure C illustrates the benefits of implementing CI/CD pipelines.

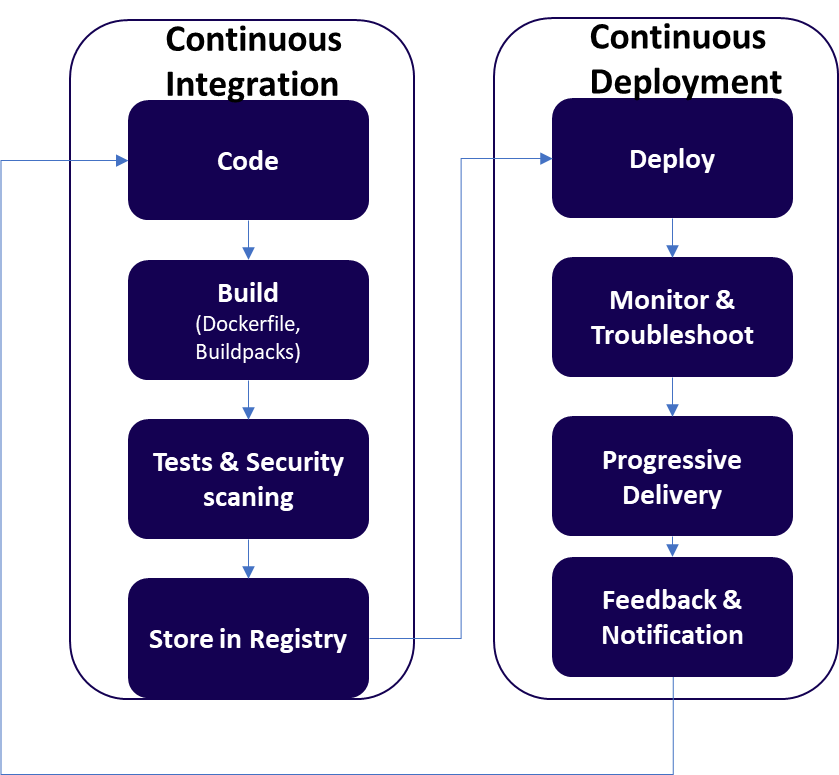

Now, let’s dive deeper into various stages of Kubernetes app delivery, which can be made a part of the CI/CD pipeline.

Stages of Kubernetes App Delivery

Below are the different stages involved in a CI/CD pipeline of Kubernetes application delivery and the tools developers and DevOps teams use at each stage.

Figure D represents the stages of Kubernetes app delivery.

Code

Process: Coding is the stage where developers write the application code. New code is pushed to a remote repository that serves as central storage for application code and configuration. The repository is shared among developers, who continuously integrate their changes into it, typically daily. These changes trigger the CI pipeline.

Tools: GitHub, GitLab, Bitbucket

Build

Process: Once changes land in the application code repository, the code is packaged into a single executable file called an artifact. This allows flexibility in moving the file around until it’s deployed. The process of packaging the application and creating an artifact is called building. The built artifact is then made into a container image that will be deployed on Kubernetes clusters.

Tools: Maven, Gradle, Jenkins, Dockerfile, Buildpacks
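As a sketch of the build stage, the script below derives an image reference from the commit SHA so that each image can be traced back to the code that produced it. The registry `registry.example.com`, the repository `shop/cart`, and the function names are made up for illustration. `build_and_push` assumes Docker is installed and a Dockerfile exists in the current directory.

```shell
# Build-stage sketch: derive an image reference from the commit SHA so
# every build is traceable to the code that produced it.
# registry.example.com and the "shop/cart" repository are made-up names.

image_ref() {
  # $1 = registry, $2 = repository, $3 = full commit SHA
  local short_sha
  short_sha=$(printf '%s' "$3" | cut -c1-7)
  printf '%s/%s:%s\n' "$1" "$2" "$short_sha"
}

build_and_push() {
  # Requires Docker and a Dockerfile in the current directory.
  local ref
  ref=$(image_ref "$1" "$2" "$3")
  docker build -t "$ref" . && docker push "$ref"
}

image_ref registry.example.com shop/cart 9fceb02d0ae598e95dc970b74767f19372d61af8
```

Tagging by commit rather than reusing a floating tag such as `latest` makes it clear which version a cluster is actually running.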

Test

Process: Once the container image is built, the DevOps team ensures it undergoes multiple functional tests, such as unit tests, integration tests, and smoke tests. Unit tests ensure that small pieces of code (units), like functions, work properly. Integration tests check how different components, like modules, hold up together as a group. Finally, smoke tests check whether the build is stable enough to proceed.

After the functional tests are done, there is another sub-stage for testing and verifying security vulnerabilities. DevSecOps teams run two types of security tests, static application security testing (SAST) and dynamic application security testing (DAST), to detect problems such as container images containing vulnerable packages.

After passing all the functional and security tests, the image is then stored in a container image repository.

Tools: JUnit, Selenium, Clair, SonarQube

All the above steps make up the CI or build pipeline.

Deploy

Process: In the deployment stage, a container image is pulled from the registry and deployed into a Kubernetes cluster running in testing, pre-production, or production environments. Deploying the image into production is also called a release, and the application will then be available for the end users.

Unlike with VM-based monolithic apps, deployment is the most challenging part of working with Kubernetes, for the following reasons:

- Developers and DevOps engineers have to handle many Kubernetes resources for successful deployment.

- As there are various ways of deploying, such as using declarative manifest files and Helm charts, enterprises rarely follow a standardized way to deploy their applications.

- Multiple deployments of large distributed systems every day can be really frustrating work.

Tools: kubectl, Helm charts

For interested users, we’ll show the steps involved in the simple process of deploying an NGINX image into K8s. (Feel free to skip the working example.)

Deploying Nginx in Kubernetes Cluster (Working Example)

Before deploying, you have to define Kubernetes resources so that your application will run in containers. There are many resource types, such as Deployments, ReplicaSets, StatefulSets, DaemonSets, Services, ConfigMaps, and many custom resources.

We’ll look at how to deploy into Kubernetes with the bare minimum of resources, or manifest files: a Deployment and a Service. A Deployment workload resource provides declarative updates and describes the desired state, like replication and scaling of Pods. A Service in Kubernetes selects Pods by label and uses their IP addresses to load balance traffic across the Pod replicas.

To test this, you should have a K8s cluster running on a server, or locally using Minikube or kubeadm. Now, let’s deploy Nginx to a Kubernetes cluster.

The Deployment YAML for Nginx—let’s name it nginx-deployment.yaml—would look like this:

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
  labels:
    app: nginx
spec:
  replicas: 10
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
        - name: nginx
          image: nginx
          ports:
            - containerPort: 80
```

The Deployment file specifies the container image (which is nginx), declares the desired number of Pods (10), and sets the container port to 80.

Now, create a Service file with the name nginx-service.yaml and paste the below code:

```yaml
apiVersion: v1
kind: Service
metadata:
  name: nginx-service
spec:
  selector:
    app: nginx
  ports:
    - name: http
      port: 80
      targetPort: 80
  type: ClusterIP
```

Once you configure the manifest files, deploy them using the following commands:

```shell
kubectl apply -f nginx-deployment.yaml
kubectl apply -f nginx-service.yaml
```

These commands deploy the Nginx server and expose it inside the cluster through the Service. Because the Service type is ClusterIP, it isn’t reachable from outside the cluster unless you change the type to NodePort or LoadBalancer, or use port forwarding. You can see the Pods by running the following command:

```shell
kubectl get pods
```

You can also run the following command to get the Service’s cluster IP address, which you can use to reach Nginx from within the cluster.

```shell
kubectl get svc
```

As you may have noticed, deploying even one application takes multiple steps and configurations. Just imagine the drudgery for a DevOps team tasked with deploying multiple applications into multiple clusters every day.
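One way to reduce the repetition is to wrap the steps in a script. The sketch below applies both manifests from the example and then waits for the rollout to finish. The function name, the `KUBECTL` override variable, and the 120-second timeout are assumptions made for illustration.

```shell
# Deploy sketch: apply both manifests, then wait for the rollout to finish.
# File and resource names match the example above; the 120s timeout is an
# arbitrary choice. Set KUBECTL="echo kubectl" to preview the commands
# without touching a cluster.

KUBECTL="${KUBECTL:-kubectl}"

deploy_nginx() {
  $KUBECTL apply -f nginx-deployment.yaml || return 1
  $KUBECTL apply -f nginx-service.yaml || return 1
  $KUBECTL rollout status deployment/nginx-deployment --timeout=120s
}
```

Calling `deploy_nginx` returns a non-zero status if either apply fails or the rollout does not complete in time, so a pipeline can stop on it.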

Monitoring, Health-Check, and Troubleshooting

Process: After deployment, it’s extremely important to monitor the health of new Pods in a K8s cluster. The Ops team may manually log into a cluster and use commands such as kubectl get pods or kubectl describe deployment to determine the health of newly deployed Pods in a cluster or a namespace. In addition, Ops teams may use monitoring and logging tools to understand the performance and behavior of Pods and clusters.
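The manual check can be partly scripted. The sketch below filters the output of `kubectl get pods --no-headers`, where STATUS is the third column, and prints any pod that is not Running or Completed. The function name and the sample pod names are made up.

```shell
# Health-check sketch: list pods whose STATUS column is not Running or
# Completed. Reads `kubectl get pods --no-headers` output on stdin, where
# STATUS is the third column.

unhealthy_pods() {
  awk '$3 != "Running" && $3 != "Completed" { print $1 " " $3 }'
}

# Typical use against a live cluster:
#   kubectl get pods --no-headers | unhealthy_pods

# Sample input for illustration (made-up pod names):
printf '%s\n' \
  'nginx-deployment-7c5ddbdf54-abcde 1/1 Running 0 5m' \
  'nginx-deployment-7c5ddbdf54-fghij 0/1 CrashLoopBackOff 4 5m' \
  | unhealthy_pods
```

Note that a Running pod is not necessarily ready to serve traffic, so this is only a first-pass filter.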

To troubleshoot and find issues, experienced Kubernetes users rely on more advanced mechanisms like probes. There are three kinds of probes: liveness probes to ensure an application is running, readiness probes to ensure the application is ready to accept traffic, and startup probes to ensure an application or a service running on a Pod has completely started. Although there are many commands and approaches, it can be very difficult for developers or Ops teams to troubleshoot and detect an error because so many components inside a cluster (nodes, pods, controllers, security and deployment objects, etc.) offer poor visibility.
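To make the three probe types concrete, the sketch below prints a container spec fragment that could sit under `spec.template.spec` in the Nginx Deployment from the earlier example. The probe paths, ports, and timings are illustrative assumptions rather than tuned values, and the function name is hypothetical.

```shell
# Probe sketch: print a container spec fragment with all three probe types.
# Paths, ports, and timings are illustrative assumptions, not tuned values.

probe_snippet() {
  cat <<'EOF'
containers:
  - name: nginx
    image: nginx
    ports:
      - containerPort: 80
    startupProbe:            # gives the app time to finish starting
      httpGet:
        path: /
        port: 80
      failureThreshold: 30
      periodSeconds: 2
    livenessProbe:           # restarts the container if it stops responding
      httpGet:
        path: /
        port: 80
      periodSeconds: 10
    readinessProbe:          # keeps the pod out of Service endpoints until ready
      httpGet:
        path: /
        port: 80
      periodSeconds: 5
EOF
}

probe_snippet
```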

Tools: AppDynamics, Dynatrace, Prometheus, Splunk, kubectl

Progressive Delivery and Rollback

Process: Teams use advanced deployment strategies like blue-green and canary to roll out their applications gradually and avoid degrading the customer experience. This is also known as progressive delivery. The idea is to send a small portion of traffic to the newly deployed pods and run quality and performance regression checks. If the newly deployed application is healthy, the DevOps team gradually rolls it forward. But if there’s an issue with performance or quality, the Ops or SRE team immediately rolls the application back to its older version to keep the release process free of downtime.

Tools: kubectl, Kayenta, etc.
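The promote-or-roll-back decision in progressive delivery can be sketched as a threshold check. This is a simplified sketch: it assumes the canary’s error rate arrives as a whole-number percentage from a monitoring system, and the function names are hypothetical. The rollback itself uses `kubectl rollout undo`, which reverts a Deployment to its previous revision.

```shell
# Canary decision sketch: compare the canary's error rate with a threshold
# and choose between promoting and rolling back. Rates are whole-number
# percentages assumed to come from your monitoring system.

canary_decision() {
  # $1 = canary error rate (%), $2 = maximum acceptable error rate (%)
  if [ "$1" -le "$2" ]; then
    echo "promote"
  else
    echo "rollback"
  fi
}

act_on_canary() {
  # $1 = deployment name, $2 = error rate, $3 = threshold
  if [ "$(canary_decision "$2" "$3")" = "rollback" ]; then
    # Reverts the Deployment to its previous revision.
    kubectl rollout undo "deployment/$1"
  else
    echo "canary healthy, continuing rollout of $1"
  fi
}

canary_decision 1 2
canary_decision 7 2
```

Real canary analysis usually compares several metrics over a time window. A single threshold is used here only to keep the idea visible.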

Feedback and Notification

Process: Feedback is the heart of any CI/CD process because everybody should know what’s happening in the software delivery and deployment process. The best way to ensure effective feedback is to measure the efficacy of the CI/CD process and notify all the stakeholders in real-time. In case of failures, it helps DevOps and SREs to quickly create incidents in service management tools for further resolution.

For example, project managers and business owners would be interested to know if a new feature has been successfully rolled out to the market. Similarly, DevOps engineers would like to know the status of new deployments, clusters, and pods, and SREs would like to be informed about the health and performance of a new application deployed into production.

Tools: Slack, Discord, MS Teams, JIRA, and ServiceNow
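As a sketch of the notification step, the script below builds a small JSON message and posts it to a chat webhook with curl. `WEBHOOK_URL`, the function names, and the sample app name are assumptions. Slack incoming webhooks accept a JSON body with a `text` field, and other tools may expect a different payload.

```shell
# Notification sketch: build a JSON payload and post it to a chat webhook.
# WEBHOOK_URL is an assumption. Inputs must not contain double quotes or
# backslashes, since this sketch does no JSON escaping.

deploy_message() {
  # $1 = app, $2 = version, $3 = environment, $4 = status
  printf '{"text":"%s %s deployed to %s: %s"}\n' "$1" "$2" "$3" "$4"
}

notify() {
  # Sends the message; requires curl and WEBHOOK_URL to be set.
  curl -sS -X POST -H 'Content-Type: application/json' \
    --data "$(deploy_message "$@")" "$WEBHOOK_URL"
}

deploy_message cart-service v1.4.2 production succeeded
```

A pipeline could call `notify` at the end of both the success and failure paths, so that stakeholders hear about every outcome.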

Note: All the stages—Monitoring, Progressive Delivery, and Feedback and Notification—fall under Continuous Deployment.

If you’re a large or mid-sized enterprise with tens or hundreds of microservices running on Kubernetes, you need to serialize your delivery (CI/CD) process using pipelines.

Published at DZone with permission of Jyoti Sahoo. See the original article here.

