The ELK Stack is most commonly used as a log analytics tool, and several key use cases can help justify adopting the technology.

Development Tool

Software engineers working in cloud-based environments can find themselves at a disadvantage when a segment of the application landscape fails to function as expected. Basic troubleshooting often means navigating the logs of one component after another, which reduces development productivity and increases the cost of implementing a given feature or function. The ELK Stack aggregates these logs and makes them observable and searchable across the entire application landscape, so the development lifecycle can continue at the expected pace even when an unexpected exception arises.
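As a rough illustration of what searching across aggregated logs can look like, the sketch below builds an Elasticsearch Query DSL body that finds exception messages tied to one request across all services. The field names (`message`, `trace.id`, `@timestamp`) and the helper `build_error_query` are assumptions for illustration; the actual fields depend on how the logs were indexed.

```python
import json


def build_error_query(trace_id: str, window: str = "now-15m") -> dict:
    """Build a Query DSL body for exceptions linked to one trace.

    Assumes documents carry `message`, `trace.id`, and `@timestamp`
    fields; adjust these names to match the real index mapping.
    """
    return {
        "query": {
            "bool": {
                # Full-text match on the log message.
                "must": [{"match": {"message": "exception"}}],
                # Exact-value and time filters do not affect scoring.
                "filter": [
                    {"term": {"trace.id": trace_id}},
                    {"range": {"@timestamp": {"gte": window}}},
                ],
            }
        },
        # Oldest first, so the failure can be followed across components.
        "sort": [{"@timestamp": {"order": "asc"}}],
    }


if __name__ == "__main__":
    print(json.dumps(build_error_query("abc123"), indent=2))
```

A body like this could be sent to an index's `_search` endpoint or pasted into Kibana's Dev Tools console.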

Production Support

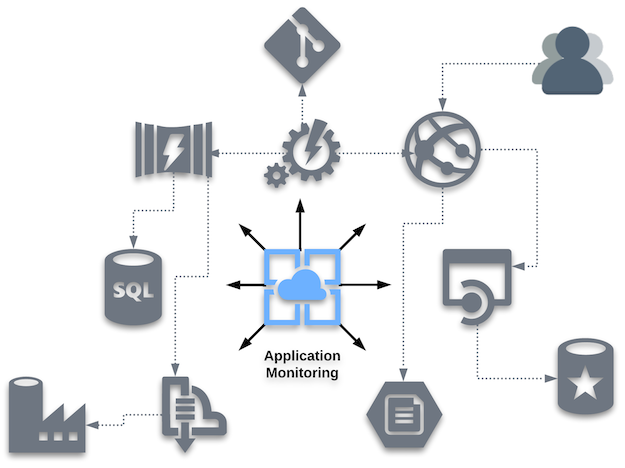

Modern IT environments are multilayered and distributed, which poses a significant challenge for the teams that support them. Monitoring the various components of an application’s architecture is extremely time- and resource-consuming. The ELK Stack gives organizations a way to gain insight into an application running on any type of infrastructure:

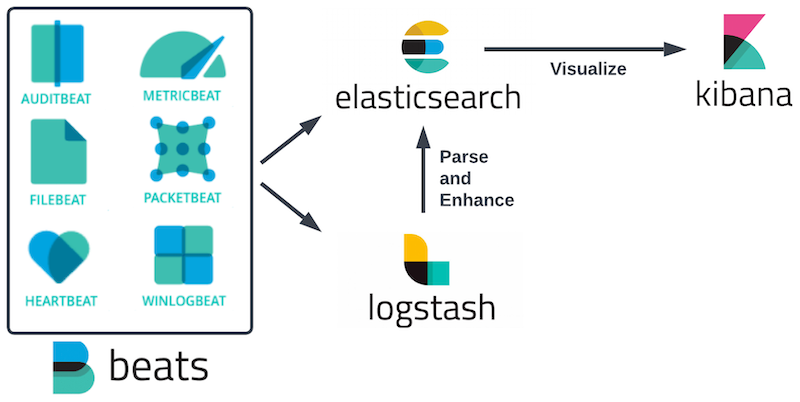

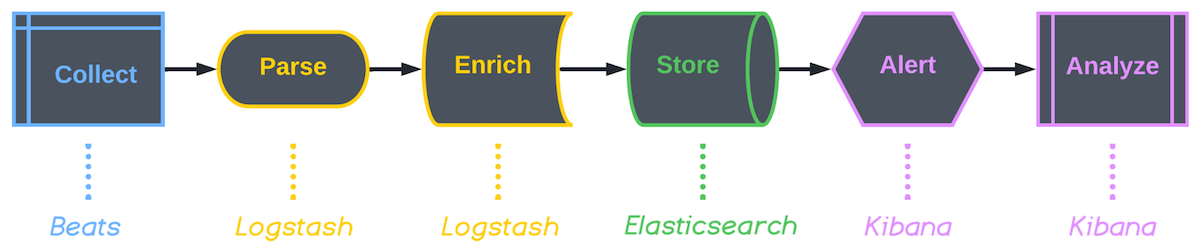

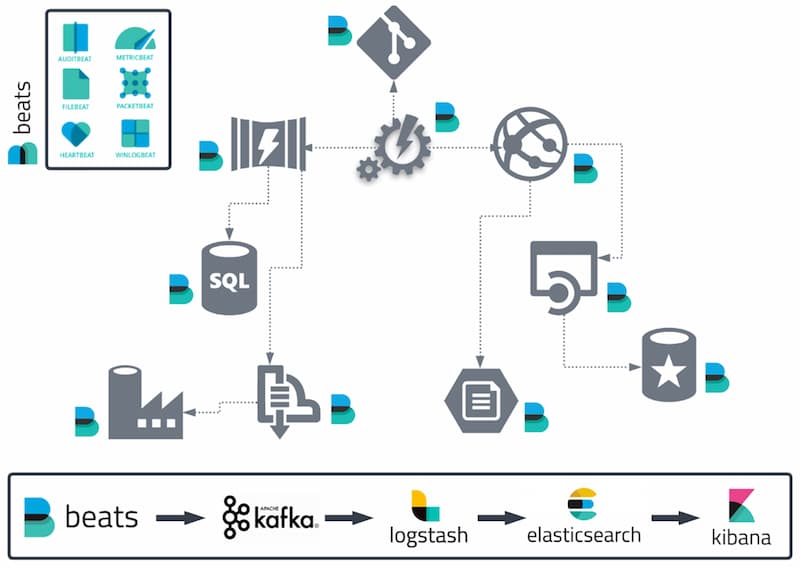

- Beats agents forward log data to Logstash instances.

- Logstash can be configured to aggregate and process data before indexing the results in Elasticsearch.

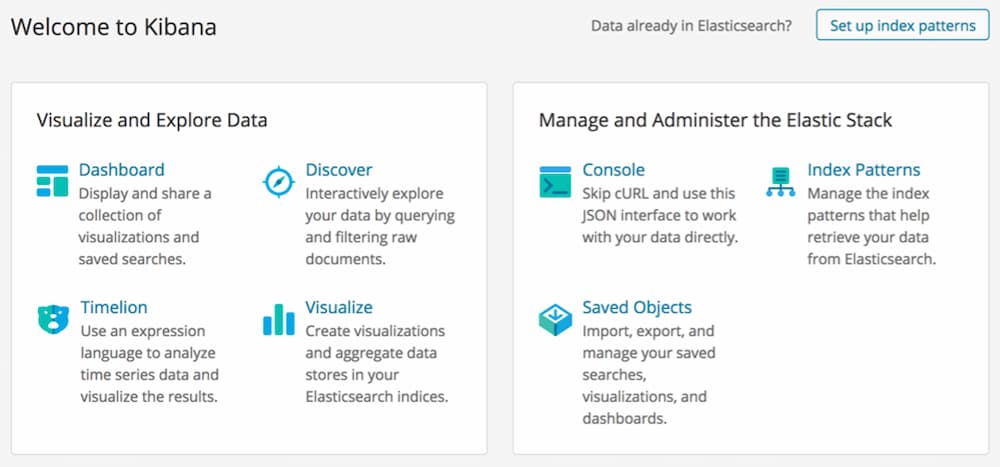

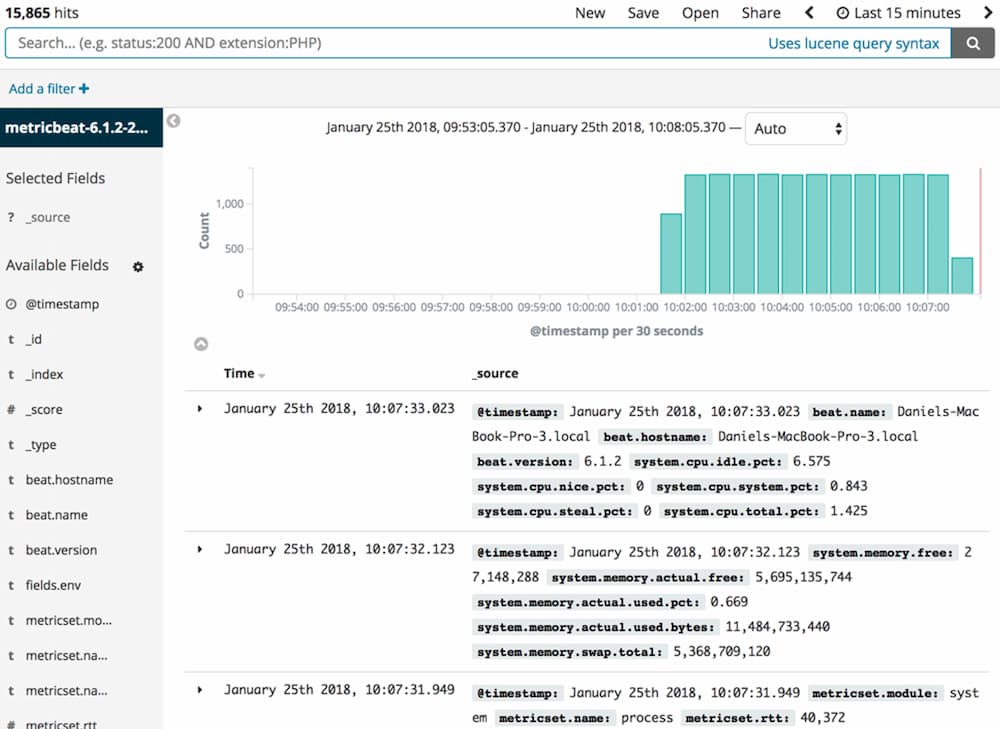

- Kibana is then used to analyze the data, detect anomalies, perform root cause analyses, and build beautiful monitoring dashboards.
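The flow in the list above can be sketched in plain Python to show the kind of work the processing stage does. This is not Logstash configuration: it is a minimal stand-in that parses raw log lines into structured documents and formats them as a newline-delimited body for Elasticsearch's `_bulk` API. The log line layout, the field names, and the index name `app-logs` are assumptions for illustration.

```python
import json
import re
from typing import Iterable, Optional

# Assumed line layout: "<ISO timestamp> <LEVEL> <service> <free-text message>"
LOG_PATTERN = re.compile(
    r"^(?P<timestamp>\S+)\s+(?P<level>[A-Z]+)\s+(?P<service>\S+)\s+(?P<message>.*)$"
)


def parse_line(line: str) -> Optional[dict]:
    """Turn one raw log line into a structured document, or None if it does not match."""
    match = LOG_PATTERN.match(line.strip())
    if match is None:
        return None
    doc = match.groupdict()
    # Store the event time under the conventional @timestamp field.
    doc["@timestamp"] = doc.pop("timestamp")
    return doc


def to_bulk_body(docs: Iterable[dict], index: str) -> str:
    """Format documents as NDJSON: one action line, then one source line, per document."""
    lines = []
    for doc in docs:
        lines.append(json.dumps({"index": {"_index": index}}))
        lines.append(json.dumps(doc))
    # The bulk API expects every line, including the last, to end with a newline.
    return "".join(line + "\n" for line in lines)


if __name__ == "__main__":
    raw = [
        "2024-05-01T12:00:00Z ERROR checkout payment gateway timeout",
        "not a structured line",
    ]
    parsed = [doc for doc in map(parse_line, raw) if doc is not None]
    print(to_bulk_body(parsed, "app-logs"), end="")
```

In a real deployment, Beats and Logstash handle shipping, parsing, and retries; the sketch only shows the shape of the data as it moves toward Elasticsearch.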

While Elasticsearch was initially designed for full-text search and analysis, it is increasingly used for metrics analysis as well. These metrics can provide performance benchmarks for each component in the application’s landscape.

Application Performance

Application performance monitoring (APM) is one of the most common methods used by engineers today to measure the availability, response times, and behavior of applications and services. As part of the ELK Stack, Elastic APM allows engineers to track key performance-related information such as requests, responses, database transactions, and errors.
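To make the idea of tracking requests and errors concrete, here is a minimal sketch of the kind of record an APM agent captures for each transaction. It does not use the Elastic APM agent API; `record_transaction` and the record fields are hypothetical names chosen for illustration.

```python
import time
from contextlib import contextmanager


@contextmanager
def record_transaction(name: str, sink: list):
    """Time a block of work and append a record with its duration and outcome to `sink`."""
    start = time.perf_counter()
    outcome = "success"
    try:
        yield
    except Exception:
        # Mark the transaction as failed, then let the error propagate.
        outcome = "failure"
        raise
    finally:
        sink.append(
            {
                "name": name,
                "duration_ms": (time.perf_counter() - start) * 1000.0,
                "outcome": outcome,
            }
        )


if __name__ == "__main__":
    records = []
    with record_transaction("GET /orders", records):
        time.sleep(0.01)  # stand-in for real request handling
    print(records)
```

A real agent instruments frameworks and database drivers automatically and ships these records to the stack, but the measured quantities are of this kind.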

Compliance and Security

Security has always been crucial for organizations, driven by both compliance requirements (HIPAA, PCI, SOC, FISMA, etc.) and the threat of unexpected attacks. Log data contains a wealth of valuable information about what is actually happening with an application in real time. As a result, the ELK Stack should be recognized as a tool that benefits compliance and security teams.

Key benefits that can be realized from ELK Stack adoption include:

- Anti-DDoS – The ELK Stack helps teams quickly identify a DDoS attack in progress by processing log data for easier analysis and visualizing it in monitoring dashboards.

- SIEM – Security information and event management (SIEM) provides a holistic view of IT security. The ELK Stack can consume, parse, and aggregate audit logs from an organization's local and cloud-based services to help meet SIEM requirements.
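As a simplified illustration of the anti-DDoS point above, the sketch below counts requests per source IP per minute and flags any address that exceeds a threshold. In practice this kind of bucketing would typically be done inside Elasticsearch with aggregations and surfaced in Kibana; the event fields (`@timestamp`, `source_ip`), the function name, and the threshold are assumptions for illustration.

```python
from collections import Counter
from datetime import datetime
from typing import Iterable, List


def flag_request_spikes(events: Iterable[dict], threshold: int) -> List[str]:
    """Return source IPs that sent more than `threshold` requests within any one minute."""
    counts = Counter()
    for event in events:
        # Timestamps are assumed to be ISO 8601 strings such as "2024-05-01T12:00:05".
        ts = datetime.fromisoformat(event["@timestamp"])
        minute_bucket = ts.replace(second=0, microsecond=0)
        counts[(event["source_ip"], minute_bucket)] += 1
    return sorted({ip for (ip, _), n in counts.items() if n > threshold})


if __name__ == "__main__":
    sample = [
        {"@timestamp": f"2024-05-01T12:00:{s:02d}", "source_ip": "10.0.0.1"}
        for s in range(5)
    ]
    sample.append({"@timestamp": "2024-05-01T12:00:30", "source_ip": "10.0.0.2"})
    print(flag_request_spikes(sample, threshold=3))
```

A fixed per-minute threshold is only a starting point; real traffic usually calls for baselines that account for normal daily variation.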