Amazon SQS as an Event Source to AWS Lambda: A Deep Dive

In this article, we go over SQS as an event source, Lambda integration, and using pre-built AWS CloudFormation templates to provision the backend resources.


SQS as an event source to Lambda is a game changer. I can now send all my event-based messages, logs, and analytics from my iOS application directly to an SQS queue. Sending these messages to SQS hasn’t changed: SQS is still low latency and batch capable, has very high throughput, and is an affordable alternative to other storage options, with no data store schema or tables to set up. What has changed is who manages the backend resources that consume content sent to the queue. All the “consuming of an SQS queue” is now managed for you, and we’ll explore what role each service plays in this new architecture.

In this article, I’ll go over the details of SQS as an event source, the Lambda integration, and then we’ll quickly build this architecture using pre-built AWS CloudFormation templates to provision the backend resources. After you are up and running, follow along for a deeper dive into this serverless event-driven architecture.

About Amazon SQS

Amazon Simple Queue Service (SQS) is a scalable, low-latency, serverless messaging service that offers nearly unlimited throughput, at-least-once delivery, batching, and server-side encryption.

All that capability existed for years, long before SQS became an event source.

So Why All the Hype and What Has Changed With SQS?

Prior to SQS becoming an event source to Lambda in late June 2018, developers needed to create a service/client to poll an SQS queue for new messages (also known as an SQS consumer). This could be done with short polling or long polling. Either way, it was a service you, as a developer, had to build, monitor, scale, and maintain. You even had to write the logic for when to back off polling and when to delete a message or let it go back into the queue. It was a hacky approach to event-driven development. Think of it like building your own webhook for an API. Not an easy solution.
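To make that burden concrete, here is a minimal sketch of the kind of consumer you had to own before the integration. It assumes `sqs` behaves like a boto3 SQS client (for example `boto3.client("sqs")`); the function names `poll_once` and `run_consumer`, the `handle` callback, and the backoff values are hypothetical choices for illustration, not code from this article.

```python
import time


def poll_once(sqs, queue_url, handle):
    """Long-poll the queue once and delete each message the handler accepts.

    `sqs` is assumed to look like a boto3 SQS client.
    Returns the number of messages received.
    """
    response = sqs.receive_message(
        QueueUrl=queue_url,
        MaxNumberOfMessages=10,  # SQS returns at most 10 messages per call
        WaitTimeSeconds=20,      # long polling: wait up to 20 s for messages
    )
    messages = response.get("Messages", [])
    for message in messages:
        try:
            handle(message["Body"])
        except Exception:
            # Do not delete: the message becomes visible again after the
            # visibility timeout and will be retried.
            continue
        sqs.delete_message(
            QueueUrl=queue_url, ReceiptHandle=message["ReceiptHandle"]
        )
    return len(messages)


def run_consumer(sqs, queue_url, handle, max_idle_sleep=60):
    """Poll forever, backing off exponentially while the queue is empty."""
    idle_sleep = 1
    while True:
        if poll_once(sqs, queue_url, handle):
            idle_sleep = 1  # traffic seen: poll again right away
        else:
            time.sleep(idle_sleep)
            idle_sleep = min(idle_sleep * 2, max_idle_sleep)
```

Even this small loop leaves out scaling, monitoring, and deployment, which is the work you had to take on yourself.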

Lambda Is Doing All the Heavy Lifting

It’s important to know that nothing has really changed with SQS itself since the announcement of SQS as an event source. The source queue is NOT triggering your Lambda function. Now that SQS is an event source to Lambda, all that heavy lifting of managing message polling and deleting has been offloaded to the Lambda service, which is now your SQS consumer. It should be called Lambda SQS Consumer Service, in my opinion. Lambda scales the consumers of YOUR SQS queue, receives the messages, passes them to your Lambda function, and then deletes them once your function has successfully handled the message(s) received. This is all explained in more detail here.

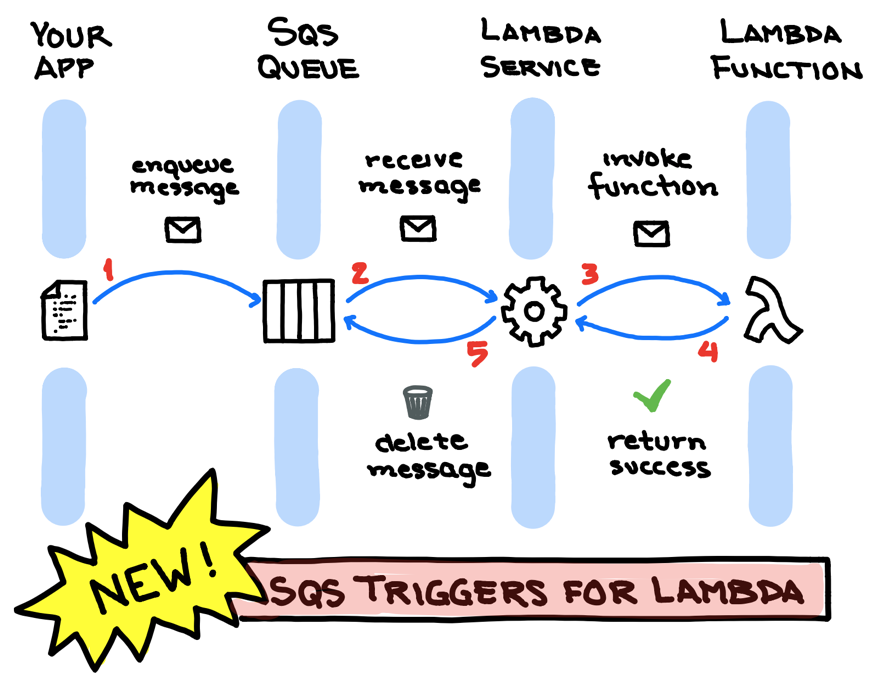

Below is a nice visual of what’s going on with this integration. Thanks for the sketch, Jerry Hargrove!

If you already know enough about SQS as an event source and how the AWS Lambda integration works, skip the preamble and move on to Get Started using the included end-to-end CloudFormation template, and then come back for a deeper dive.

Get Started — Backend

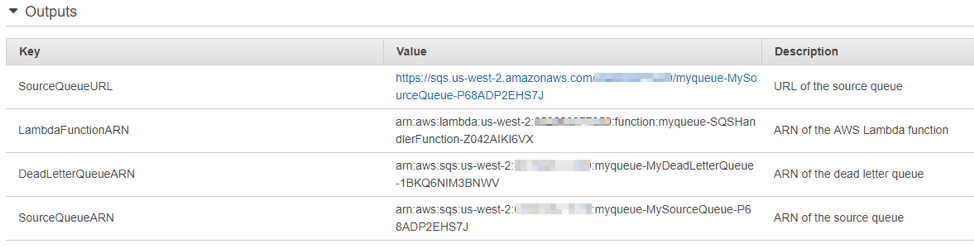

I created a CloudFormation template that will provision:

- A standard SQS queue.

- A dead letter queue.

- AWS Lambda function for handling messages.

- The event source mapping.

- Lambda execution permissions to SQS and SNS for sending SMS text messages.
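The event source mapping in the list above is the piece that ties the queue to the function. If you wanted to create it from code rather than from the template, a sketch might look like the following. The helper name `event_source_mapping_params` is hypothetical, and the 1 to 10 batch-size check simply mirrors the range described later in this article.

```python
def event_source_mapping_params(queue_arn, function_name, batch_size=10):
    """Build keyword arguments for Lambda's CreateEventSourceMapping call."""
    if not 1 <= batch_size <= 10:
        raise ValueError("batch_size must be between 1 and 10")
    return {
        "EventSourceArn": queue_arn,     # ARN of the source SQS queue
        "FunctionName": function_name,   # name or ARN of the Lambda function
        "BatchSize": batch_size,         # max messages passed per invocation
        "Enabled": True,
    }
```

With boto3 installed and credentials configured, the result could be passed along as `boto3.client("lambda").create_event_source_mapping(**params)`.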

Click on the template to launch the CloudFormation console and begin building your stack. The template defaults to the US West (Oregon) region (us-west-2).

For testing fun, I put in a parameter for you to provide your mobile number so that you will receive an SMS text message whenever an SQS message arrives in the queue. This is for testing purposes only. The phone number is stored as an environment variable for the Lambda function.

If Node.js is not your thing, here’s a link to Lambda sample code for handling SQS events using .NET, Go, Java, and Python.

Once the stack completes, you can now test the setup as follows:

1. In the Amazon SQS console, send messages to the queue.

2. AWS Lambda polls the queue and when it detects new messages, it invokes your Lambda function by passing in the event data from the queue.

3. Your function executes and creates logs in Amazon CloudWatch. You can verify the logs reported in the Amazon CloudWatch Logs console.
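If you would rather send test messages from a script than from the console, the sketch below groups message bodies into batches of at most 10, the per-call limit for SQS batch sends. The helper name `build_batches` is hypothetical; the `Id` and `MessageBody` keys follow the shape of the SendMessageBatch entries.

```python
def build_batches(bodies, batch_size=10):
    """Split message bodies into SendMessageBatch-style entry lists."""
    batches = []
    for start in range(0, len(bodies), batch_size):
        chunk = bodies[start:start + batch_size]
        batches.append(
            [
                # Ids only need to be unique within a single batch.
                {"Id": str(start + offset), "MessageBody": body}
                for offset, body in enumerate(chunk)
            ]
        )
    return batches
```

Each batch could then be sent with a boto3 SQS client, for example `sqs.send_message_batch(QueueUrl=queue_url, Entries=batch)`, where `queue_url` is the URL of the queue the stack created.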

To really see the value of SQS, check out my sample iOS Swift application (you can download it from GitHub here) that demonstrates single and batch messages.


Deep Dive Into SQS as an Event Source to Lambda


How Does the Lambda Integration Work?

The AWS Lambda service (not your Lambda function, but a Lambda SQS long-poll service running on your behalf) continually polls your SQS queue for incoming messages. When messages arrive, it receives them and then invokes your Lambda function, passing the message(s) in as the event parameter.

Here’s what a typical message event looks like to the Lambda function that handles the passed-in messages:

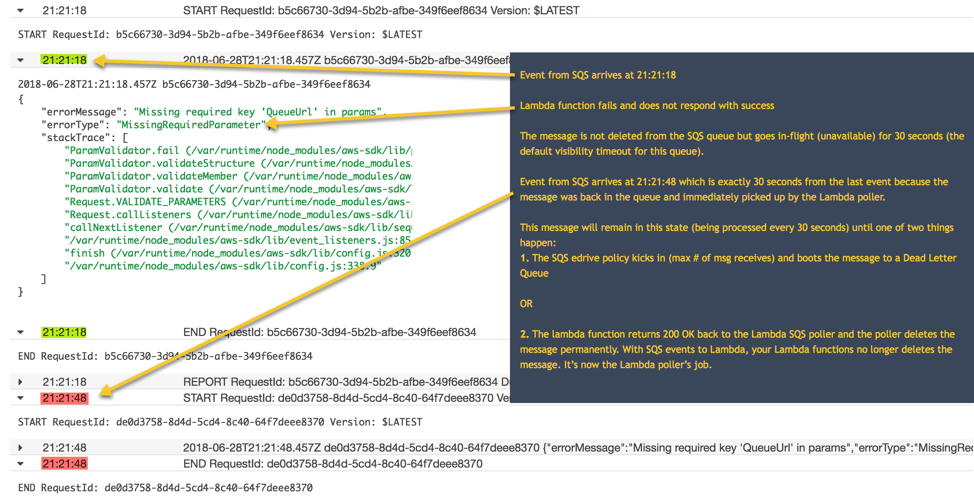
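In case the image does not render, here is a rough sketch of that event shape together with a handler that walks it. It is written in Python rather than the Node.js used by the template, and every value in `sample_event` (IDs, ARN, body) is a made-up placeholder.

```python
import json


def handler(event, context):
    """Process every SQS record in the batch that Lambda passes in."""
    processed = []
    for record in event["Records"]:
        body = record["body"]  # the message body always arrives as a string
        try:
            payload = json.loads(body)
        except ValueError:
            payload = body  # not JSON, so keep the plain text
        print(f"message {record['messageId']}: {payload}")
        processed.append(record["messageId"])
    # Returning normally signals success, so Lambda deletes the batch.
    # Raising an exception instead leaves the messages on the queue.
    return {"processed": processed}


# Placeholder event for local experiments; all values are invented.
sample_event = {
    "Records": [
        {
            "messageId": "example-message-id",
            "receiptHandle": "example-receipt-handle",
            "body": "{\"action\": \"login\"}",
            "attributes": {"ApproximateReceiveCount": "1"},
            "messageAttributes": {},
            "eventSource": "aws:sqs",
            "eventSourceARN": "arn:aws:sqs:us-west-2:123456789012:example-queue",
            "awsRegion": "us-west-2",
        }
    ]
}
```

Calling `handler(sample_event, None)` locally is a quick way to check the parsing logic before deploying.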

Here’s an example of how the integration flow works as shown with the labeled CloudWatch Logs from an SQS handler Lambda function.

Lambda Message Processing and Scaling Limits

With Lambda as the consumer for an SQS event source, reading and deleting messages in batches (1 to 10 messages) is fully supported. In addition to the configurable batch size, the Lambda service starts polling your queue with a (minimum) concurrency of 5 invocations and increases concurrency by 60 concurrent invocations per minute as needed. You can manually limit the Lambda function concurrency to anywhere from 1 to 1000, but Lambda will always default to an initial burst of 5 concurrent function invocations. This is where all the heavy lifting comes in, allowing you to process thousands of requests per second with only a single standard queue! More about scaling behavior.
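As a back-of-the-envelope illustration of those numbers, the sketch below estimates the highest concurrency Lambda could have reached after a given number of minutes of sustained load. It assumes a simple linear ramp using the figures quoted above (start at 5, add 60 per minute, cap at 1000); the function name is hypothetical and real scaling depends on queue traffic.

```python
def estimated_concurrency(minutes, initial=5, step_per_minute=60, ceiling=1000):
    """Upper bound on concurrent invocations after `minutes` of ramp-up."""
    return min(initial + step_per_minute * minutes, ceiling)


for minute in (0, 1, 10, 17):
    print(minute, estimated_concurrency(minute))
```

Under these assumptions the ramp goes 5, 65, 605 and reaches the 1000 ceiling in the 17th minute.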

Permissions

Lambda polls an Amazon SQS queue on your behalf. It reads new messages off the queue using the sqs:ReceiveMessage action and, once your function has successfully processed them, deletes them using the sqs:DeleteMessage or sqs:DeleteMessageBatch actions. Your Lambda function does not delete the messages itself, so you no longer have to keep track of each Message ID or Receipt Handle. When provisioning the event source, you need to grant AWS Lambda permission to perform these SQS actions (along with sqs:GetQueueAttributes) on the relevant source queue. You can use an identity-based policy (IAM execution role) and/or a resource-based policy (typically used if cross-account access is needed). In the CloudFormation template provided, we use an identity-based policy attached to our Lambda execution role (IAM role). The Lambda service assumes that role, which is what grants the Lambda SQS consumer permission to receive and delete messages from the designated standard source queue.
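For reference, an identity-based policy along these lines could be generated as shown below. This is a sketch, not the policy from the provided template: the function name and the queue ARN are placeholders, and `sqs:GetQueueAttributes` is included because Lambda's SQS polling also needs it.

```python
import json


def sqs_consumer_policy(queue_arn):
    """Build an IAM policy document letting Lambda consume one SQS queue."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": [
                    "sqs:ReceiveMessage",
                    "sqs:DeleteMessage",
                    "sqs:GetQueueAttributes",
                ],
                "Resource": queue_arn,  # scope access to the source queue only
            }
        ],
    }


# Placeholder ARN for illustration only.
policy = sqs_consumer_policy("arn:aws:sqs:us-west-2:123456789012:example-queue")
print(json.dumps(policy, indent=2))
```

Scoping `Resource` to a single queue ARN, rather than `*`, keeps the execution role limited to the queue it actually consumes.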

A Dead Letter Queue and the Redrive Policy

A Dead Letter Queue (DLQ) is just another standard queue that receives messages from a source queue once a message in that queue has been received X number of times without being deleted. That X number of times IS determined by you (it is the maxReceiveCount setting) and is the core of the redrive policy. The DLQ is for handling messages from the source queue that can’t be processed (consumed) successfully.
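The redrive policy itself is a small JSON document stored as the `RedrivePolicy` attribute of the source queue. A minimal sketch of building one follows; the helper name and the ARN are placeholders, and the receive count of 3 is only an example value.

```python
import json


def redrive_policy(dlq_arn, max_receive_count):
    """Return the JSON string expected by the RedrivePolicy queue attribute."""
    if max_receive_count < 1:
        raise ValueError("max_receive_count must be at least 1")
    return json.dumps(
        {
            "deadLetterTargetArn": dlq_arn,        # where failed messages go
            "maxReceiveCount": max_receive_count,  # the "X number of times"
        }
    )


# Placeholder ARN; after 3 failed receives the message moves to the DLQ.
print(redrive_policy("arn:aws:sqs:us-west-2:123456789012:example-dlq", 3))
```

With a boto3 SQS client, the string could be applied to the source queue via `sqs.set_queue_attributes(QueueUrl=queue_url, Attributes={"RedrivePolicy": policy})`.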

Pricing

There are no additional charges for provisioning SQS as an event source. However, because the Lambda service (acting as an SQS consumer) is continuously long-polling the SQS queue, you may be charged for those API calls (sqs:ReceiveMessage) at the standard SQS pricing rates, even if you have NO messages in the queue. In my experience, the cost of a month with no messages was negligible (pennies), but you should test this out yourself and share your experience below to help others.
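To get a feel for the size of that idle cost, here is a rough estimate of how many empty receives a quiet queue generates. It assumes the 5 initial pollers mentioned earlier, each completing one 20-second long poll after another; both function names are hypothetical, and `price_per_million` is a value you would fill in yourself from the current SQS pricing page.

```python
def empty_queue_requests(pollers=5, wait_seconds=20, days=30):
    """Estimate ReceiveMessage calls made against an idle queue."""
    seconds = days * 24 * 60 * 60
    return pollers * (seconds // wait_seconds)


def monthly_cost(requests, price_per_million, free_requests=0):
    """Cost of `requests` calls after subtracting any free allowance."""
    billable = max(requests - free_requests, 0)
    return billable / 1_000_000 * price_per_million


print(empty_queue_requests())  # 648000 requests in a 30-day month
```

Under these assumptions an idle queue sees about 648,000 requests in a 30-day month, which is consistent with the cost being small.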

Final Thoughts

Amazon SQS as an event source to AWS Lambda is really a match made in heaven! Consider this event-driven solution to solve your next project or improve an existing app. Trust me, you can never go wrong with SQS.
