An Overview of the Kafka Distributed Message System (Part 1)

Apache Kafka is one of the most popular tools for big data out there. If you're curious as to why, read on to take a look under the hood!

I originally intended to name this article "Setting up a Kafka Message Queue Cluster." However, unlike RabbitMQ, Kafka does not implement a standard message queue protocol such as the Advanced Message Queuing Protocol (AMQP). AMQP is an open standard application-layer protocol designed for message-oriented middleware. Therefore, although Kafka is often used much like a queue, it is not strictly a message queue. So I decided to give this article a more generic name: "An Overview of the Kafka Distributed Message System."

Introduction to Kafka

Kafka was originally developed at LinkedIn in Java and Scala. Its source code was open sourced in 2011, and it became a top-level project of the Apache Software Foundation in 2012. In 2014, several of Kafka's creators founded a new company, Confluent, which specializes in Kafka.

The purpose of the Kafka project is to provide a unified, high-throughput, low-latency platform for real-time data processing. Kafka delivers the following three functions:

- Publishing and Subscription: Kafka lets applications publish and subscribe to streams of data, similar to other message systems.

- Processing: Kafka lets you write stream processing applications that respond to events in real time.

- Storage: Kafka durably stores streaming data in a distributed and fault-tolerant cluster.

Message System

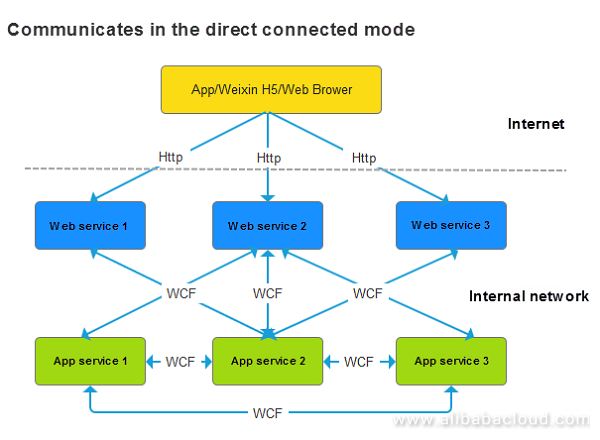

Kafka is a message system, so let us first look at what a message system is and which problems it solves. Take the currently popular microservice architecture as an example. Assume there are three client-facing web services (HTTP) named Web1, Web2, and Web3, which serve a WeChat official account, a mobile app, and a browser, and three internal application services named App1, App2, and App3, which are invoked through Remote Procedure Calls (for example, WCF or gRPC). If there is no message system and the services connect to each other directly, the communication between them may look as follows:

Figure 1: Structure of the System that Communicates in the Direct Connected Mode

This mode has the following issues:

- Services are tightly coupled, which makes change expensive. If an external interface of App2 is modified, every component that invokes the interface must also be modified. In the extreme case shown above (where all components invoke the interface, which is rare in practice), all other web and application services would require modification.

- Modifying an interface becomes even harder when third-party applications outside your control invoke it, because the change would break those applications. For example, modifying an official WeChat interface would cause thousands of applications to fail.

- The usual resolution is to publish multiple versions of an interface under different access paths, such as web/v1/interface, web/v1.1/interface, and app/v2.0/interface. Interfaces stay compatible across minor versions, but not across major versions that change significantly.

- Although versioning mitigates tight coupling to some extent, it brings its own challenges: an update may need to be applied to multiple versions, and a large number of versions must be maintained.

- Operations become difficult, because adding or removing services is complex. If App4 is added to provide a function that the web services need, Web1, Web2, and Web3 must all be modified. Similarly, if App2 is no longer required, the code that invokes it must be removed from the web services.

- Performance is limited, and expansion is difficult. For example, achieving load balancing requires either a third-party tool such as ZooKeeper or Consul, or custom code or specific configuration.
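The coupling cost can be made concrete by counting dependencies. The following is a minimal sketch, assuming the extreme case in which every web service invokes every application service, and comparing it with a layout in which each service depends only on one shared intermediary. The function names are illustrative and not part of any library.

```python
def direct_links(web_services: int, app_services: int) -> int:
    """Point-to-point dependencies when every web service calls every app service."""
    return web_services * app_services


def brokered_links(web_services: int, app_services: int) -> int:
    """Dependencies when every service talks only to one shared intermediary."""
    return web_services + app_services


# Three web services and three application services, as in the example above.
print(direct_links(3, 3), brokered_links(3, 3))  # prints "9 6"

# Adding a fourth application service touches all three web services in direct mode.
print(direct_links(3, 4) - direct_links(3, 3))  # prints "3"
```

In direct mode the number of links grows with the product of the two service counts, so every added service multiplies the maintenance work.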

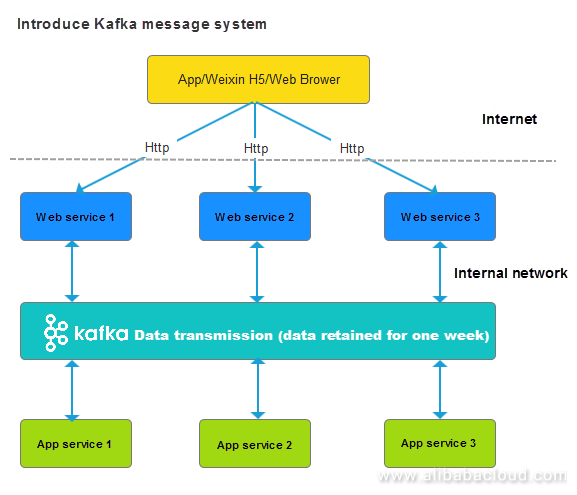

After a message system is introduced, the structure changes as follows:

Figure 2: Structure of the System After the Introduction of the Message System

After the introduction of the message system, the issues mentioned above are resolved:

- Web services and application services no longer need to be concerned about each other's interface definitions. Instead, they only need to agree on the data structure of the messages (for example, a JSON structure).

- Services do depend on the protocol used to communicate with Kafka, but this is less of a concern because Kafka is mature, highly standardized, and relatively stable.

- Kafka improves performance. It was designed for transmitting big data, and its throughput meets the requirements of most enterprises.

- Kafka makes expansion easy through clustering. Moreover, its model provides for common needs such as load balancing.
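The decoupling described above can be sketched with a toy in-memory stand-in for the message system. This is not Kafka's API: `InMemoryBroker`, the `orders` topic, and the two service functions are hypothetical names chosen for illustration. The point is that the producing and consuming services share only a JSON structure and never reference each other.

```python
import json
from collections import defaultdict


class InMemoryBroker:
    """Toy stand-in for a message system: named topics holding JSON strings."""

    def __init__(self):
        self._topics = defaultdict(list)

    def publish(self, topic: str, payload: dict) -> None:
        # Producers serialize to JSON; they never reference a consumer.
        self._topics[topic].append(json.dumps(payload))

    def read_all(self, topic: str) -> list:
        # Consumers parse JSON; they never reference a producer.
        return [json.loads(raw) for raw in self._topics[topic]]


def web1_place_order(broker: InMemoryBroker, order_id: int, amount: float) -> None:
    """Hypothetical web service: emits an order message."""
    broker.publish("orders", {"order_id": order_id, "amount": amount})


def app1_total_sales(broker: InMemoryBroker) -> float:
    """Hypothetical application service: depends only on the 'amount' field."""
    return sum(order["amount"] for order in broker.read_all("orders"))
```

Adding another application service in this sketch means writing one more function that reads the `orders` topic; `web1_place_order` does not change.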

Two Message System Models

Producer/Consumer Model:

A producer is an application that produces messages at one end of a data pipeline. A consumer is an application that consumes messages at the other end.

When the producer sends messages to a queue, there are two scenarios:

- If no consumer is connected to the queue or consuming messages at that time, messages are saved in the queue until the queue is full or a consumer comes online.

- If multiple consumers are connected to the queue, each message is delivered to only one of them. Therefore, load balancing is achieved naturally when there are multiple consumers.
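The two scenarios can be sketched with a bounded in-memory queue. This is a simplified illustration and not a real broker: `WorkQueue` and `drain` are hypothetical names, and the round-robin dispatch is an assumption, since real systems may distribute messages differently.

```python
from collections import deque


class WorkQueue:
    """Bounded queue: messages wait here until a consumer takes them."""

    def __init__(self, capacity: int):
        self._messages = deque()
        self._capacity = capacity

    def send(self, message: str) -> bool:
        # With no consumer online, messages accumulate until the queue is full.
        if len(self._messages) >= self._capacity:
            return False
        self._messages.append(message)
        return True

    def receive(self):
        # Removing the message guarantees that only one consumer ever sees it.
        return self._messages.popleft() if self._messages else None


def drain(queue: WorkQueue, consumer_names: list) -> dict:
    """Let consumers take turns pulling until the queue is empty."""
    received = {name: [] for name in consumer_names}
    turn = 0
    while True:
        message = queue.receive()
        if message is None:
            break
        received[consumer_names[turn % len(consumer_names)]].append(message)
        turn += 1
    return received
```

Sending five messages to a queue with a capacity of four rejects the fifth. Draining the queue with two consumers then gives each of them two different messages.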

Publisher/Subscriber Model:

Publisher: an application that generates events at one end of a data pipeline.

Subscriber: an application that responds to events at the other end of a data pipeline.

In the Publisher/Subscriber model, the data sent to a queue takes the form of events instead of messages. Accordingly, data processing is described as subscribing to events rather than consuming messages.

If no subscriber is connected to the queue when the publisher publishes an event, the event is lost, i.e., no application responds to it. A subscriber that comes online later will not receive the event.

If multiple subscribers are connected to the queue when the publisher publishes an event, the event is broadcast to all of them, and each subscriber receives the same event. Therefore, there is no load balancing in this model.
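The behavior of this model can be sketched in a few lines. `EventChannel` is a hypothetical class that illustrates the classic Publisher/Subscriber semantics described above; it is not a Kafka API.

```python
class EventChannel:
    """Broadcast channel: events go to whoever is subscribed right now."""

    def __init__(self):
        self._subscribers = []

    def subscribe(self, handler) -> None:
        self._subscribers.append(handler)

    def publish(self, event) -> int:
        """Deliver the event to all current subscribers; return how many got it."""
        for handler in self._subscribers:
            handler(event)
        # Nothing is stored, so an event published to zero subscribers is lost.
        return len(self._subscribers)


channel = EventChannel()
channel.publish("order-created")      # no subscribers yet: the event is lost

first, second = [], []
channel.subscribe(first.append)
channel.subscribe(second.append)
channel.publish("order-paid")         # both subscribers receive the same event
```

After this runs, `first` and `second` each contain only `"order-paid"`, which shows both the lost early event and the broadcast.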

Stream Processing Application

The most significant difference between batch processing applications and stream processing applications is whether the data has a visible boundary. If a boundary exists, the processing is called batch processing. For example, a client collects data once every hour, sends it to the server for statistics, and then saves the statistical results in the statistical database.

If no boundary exists, the data is called streaming data and the processing is called stream processing. For example, logs and orders are generated continuously on a large website, just like a flow of data. If each log entry and order is processed within a few hundred milliseconds to a few seconds of being generated, the application is a stream processing application. If logs and orders are instead collected once every hour and transmitted together, the original stream data has been converted into batch data.

Sometimes stream processing is a necessity. For example, suppose Jack Ma wants to display the orders and sales on Tmall for November 11 on a large screen. If the data center works in T+1 mode and can only provide the data for November 11 on November 12, Jack Ma would not be happy.
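The contrast can be sketched with the same order data processed in both styles. This is a simplified illustration: the `(hour, amount)` tuples and both function names are assumptions made for this example and are unrelated to any stream processing library.

```python
from collections import defaultdict


def batch_totals(orders):
    """Batch style: the bounded set is collected first, then aggregated once."""
    totals = defaultdict(float)
    for hour, amount in orders:
        totals[hour] += amount
    return dict(totals)


def stream_totals(orders):
    """Stream style: update and emit the running total as each order arrives."""
    running = 0.0
    for _hour, amount in orders:
        running += amount
        yield running


orders = [(0, 10.0), (0, 5.0), (1, 2.5)]
print(batch_totals(orders))         # prints "{0: 15.0, 1: 2.5}"
print(list(stream_totals(orders)))  # prints "[10.0, 15.0, 17.5]"
```

A live sales display needs the second style: each new order updates the number on the screen immediately instead of waiting for the hour or the day to close.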

The method for processing stream data is different from the method for processing batch data. Kafka provides a dedicated component, Kafka Streams, to process stream data. Other projects in the Hadoop ecosystem offer their own components; for example, Spark provides Spark Streaming. Storm was one of the earliest systems built specifically to process stream data.

Apart from data boundaries, processing time can also be used to distinguish stream processing from batch processing. The processing cycle for batch processing is generally hours or days, while the processing cycle for stream processing is usually seconds. Correspondingly, batch processing is referred to as offline data processing, whereas stream processing is referred to as real-time data processing. Processing with a cycle measured in minutes is sometimes referred to as near-line data processing. However, near-line processing is seldom discussed as a separate category and is generally grouped with offline processing.

Storage

Kafka durably stores data in a distributed and fault-tolerant cluster. The default retention period is one week. Kafka also supports clustering natively: it allows us to conveniently add or remove machines and to specify the number of replicas for the data. This ensures that the cluster provides uninterrupted service even when individual servers in the cluster break down.
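The effect of a time-based retention period can be sketched as follows. This is a simplified model that uses hypothetical names (`Record`, `apply_retention`) and filters individual records. In Kafka itself the period is a broker setting (`log.retention.hours`, 168 by default), and expired data is removed in whole log segments rather than record by record.

```python
from dataclasses import dataclass

RETENTION_SECONDS = 7 * 24 * 60 * 60  # one week, matching the default described above


@dataclass
class Record:
    timestamp: float  # seconds since some reference point
    value: str


def apply_retention(log, now, retention_seconds=RETENTION_SECONDS):
    """Return the records that are still inside the retention window."""
    cutoff = now - retention_seconds
    return [record for record in log if record.timestamp >= cutoff]
```

With a one-week window, a record written eight days ago is dropped while a record written six days ago is kept, whether or not any consumer has read it.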

In our data center project, Kafka is primarily used to transmit data. Let me first introduce the background of this project and then explain the issues that Kafka solves.

At present, 10 front-end applications are running, and this number may grow over time. The front-end applications send data to the back-end data center, specifically to an application called the data collector (collector for short). A single collector serves multiple applications. The collector is idle most of the time, but when numerous applications send data at the same moment, it cannot process the data fast enough. A buffering mechanism is therefore required so that the collector is neither too idle nor too busy. Kafka serves as a buffer pool for the data in such situations.
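The buffering effect can be sketched with a small simulation. This is a toy model, not a measurement: `simulate_buffer` is a hypothetical function, and the arrival counts and collector capacity are made-up numbers.

```python
from collections import deque


def simulate_buffer(arrivals_per_tick, capacity_per_tick):
    """Return the backlog size after each tick for a collector with fixed capacity."""
    buffer = deque()
    backlog = []
    for tick, arrivals in enumerate(arrivals_per_tick):
        # Front-end applications write into the buffer at whatever rate they like.
        buffer.extend((tick, i) for i in range(arrivals))
        # The collector reads at its own steady pace.
        for _ in range(min(capacity_per_tick, len(buffer))):
            buffer.popleft()
        backlog.append(len(buffer))
    return backlog


# A burst of 10 messages hits a collector that handles 3 messages per tick.
print(simulate_buffer([0, 10, 0, 0, 0], 3))  # prints "[0, 7, 4, 1, 0]"
```

The burst is absorbed by the buffer and worked off over the following ticks, so the collector neither drops data nor needs to be sized for the peak load.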

For this project, I selected Kafka instead of a traditional message queue component such as RabbitMQ, because Kafka was designed from the start to cope with large volumes of data and provides better performance for that workload.

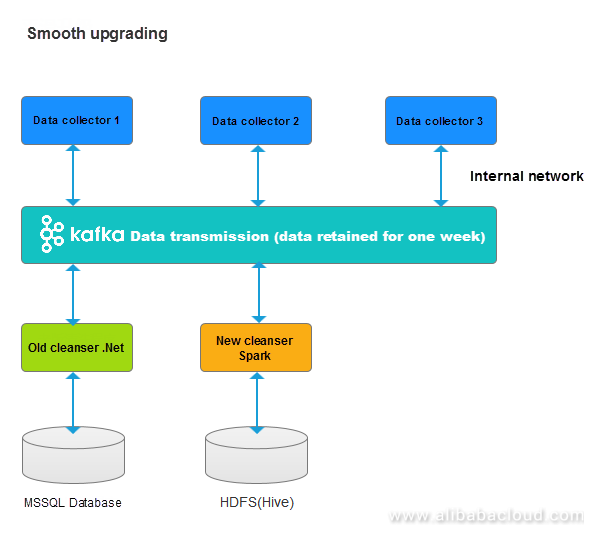

In addition to data buffering, Kafka enables "smooth upgrading" in a data center. The diagram below illustrates this:

Figure 3: Smooth Upgrading

In the previous version of the system, we used .NET to develop the front-end, data collection, and data cleaning applications, and stored the data in Microsoft SQL Server. In the new version, we use big data technology: large amounts of data are stored on HDFS, and Spark is used to compute statistics.

After introducing Kafka, there was no need to change the previous versions of the front-end, data collection, or data cleaning applications. New versions of the collection or cleaning applications can be connected alongside them, because Kafka retains the data and lets each application read it from any point in time.

Once the new versions pass testing, switching to the new system is as simple as stopping the previous versions of the applications.
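The mechanism behind this kind of upgrade can be sketched as a retained log in which every reader tracks its own position. `RetainedLog` and the group names `cleaner-v1` and `cleaner-v2` are hypothetical, and starting new readers from the oldest record is an assumption of this sketch; in Kafka, the starting position of a new consumer group is configurable.

```python
class RetainedLog:
    """Append-only log; each named reader group keeps its own offset."""

    def __init__(self):
        self._records = []
        self._offsets = {}

    def append(self, record) -> None:
        self._records.append(record)

    def poll(self, group: str, max_records: int = 10) -> list:
        # A group that has never read before starts from the oldest record.
        start = self._offsets.get(group, 0)
        batch = self._records[start:start + max_records]
        # Reading advances only this group's offset; the data itself stays in the log.
        self._offsets[group] = start + len(batch)
        return batch


log = RetainedLog()
for reading in ("r0", "r1", "r2"):
    log.append(reading)

old_batch = log.poll("cleaner-v1")   # the old application has read everything so far
log.append("r3")
new_batch = log.poll("cleaner-v2")   # the new application still sees the full history
```

Because the old and new applications read independently, both can run side by side during testing, and stopping `cleaner-v1` later does not affect `cleaner-v2`.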

Challenges After a Message Queue Is Introduced

Every coin has two sides. Introducing Kafka also brings the following changes:

- Although the applications in the system no longer depend on each other, they all depend heavily on Kafka. The stability of Kafka therefore becomes very important, as it does for infrastructure such as Microsoft SQL Server.

- Microservices aim for decentralization, in which each service works independently without depending on the others, whereas a message system is a central dependency. In practice, it is critical to decide when to use each of these two modes.

- A message queue is asynchronous by nature. Although a message queue improves performance, it increases code complexity. Previously, it was simple to make a synchronous RPC call and use the returned result. After adopting an asynchronous message queue, writing and debugging code become more complicated.
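The added complexity of the asynchronous style can be seen by comparing a synchronous call with a request and reply exchanged through queues. This is a minimal sketch that uses in-memory deques in place of a real message system. All names are hypothetical, and matching replies by a correlation ID is one common approach rather than something the article prescribes.

```python
import uuid
from collections import deque


def rpc_get_price(item: str) -> int:
    """Synchronous style: the caller gets the result directly."""
    return {"apple": 3, "pear": 5}[item]


class AsyncClient:
    """Asynchronous style: send a request now, match the reply later by ID."""

    def __init__(self, requests: deque, replies: deque):
        self._requests, self._replies = requests, replies
        self._pending = {}  # correlation ID -> callback waiting for the reply

    def send(self, item: str, on_reply) -> None:
        correlation_id = str(uuid.uuid4())
        self._pending[correlation_id] = on_reply
        self._requests.append({"id": correlation_id, "item": item})

    def handle_replies(self) -> None:
        while self._replies:
            reply = self._replies.popleft()
            callback = self._pending.pop(reply["id"], None)
            if callback is not None:
                callback(reply["price"])


def price_service(requests: deque, replies: deque) -> None:
    """Worker that consumes requests and publishes replies."""
    while requests:
        request = requests.popleft()
        replies.append({"id": request["id"], "price": rpc_get_price(request["item"])})
```

The synchronous version is a single function call. The asynchronous version needs pending-request bookkeeping, a separate step for handling replies, and a decision about what to do when a reply never arrives, which is where most of the extra debugging effort goes.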

That's all for Part 1. Tune in next time when we'll discuss brokers, topics, partitions, and more!

Published at DZone with permission of Leona Zhang. See the original article here.
