Build an API Using AWS API Gateway and Dell Boomi — Step 1

A step-by-step guide to creating an API using AWS API Gateway, with Dell Boomi as the HTTP service provider.

Introduction

Hi all, I hope you had a great long 4th of July weekend in the United States and a nice weekend everywhere else. Today I want to write about a technology I have not covered before, on this platform or anywhere else: a step-by-step guide to creating an API using AWS API Gateway, with Dell Boomi as the HTTP service provider. I will take baby steps, so if you already know some of them, feel free to skip ahead.

I will build the API on a couple of tables in MariaDB. You can use any other backend you like, with the corresponding changes. For simplicity, I will split this blog into two parts: the first describes how to create the API in Dell Boomi, and the second describes how to connect the Boomi API to AWS API Gateway.

So, without any further ado, let us get started with the API building.

Prerequisites

- An Amazon AWS account to build the API in AWS API Gateway. Keep a close eye on your AWS account to make sure you are not incurring unexpected charges.

- The Dell Boomi platform.

- A backend MariaDB server.

- An understanding of YAML is helpful but not necessary.

Step 1: Define the API Contract Using the OpenAPI Specification

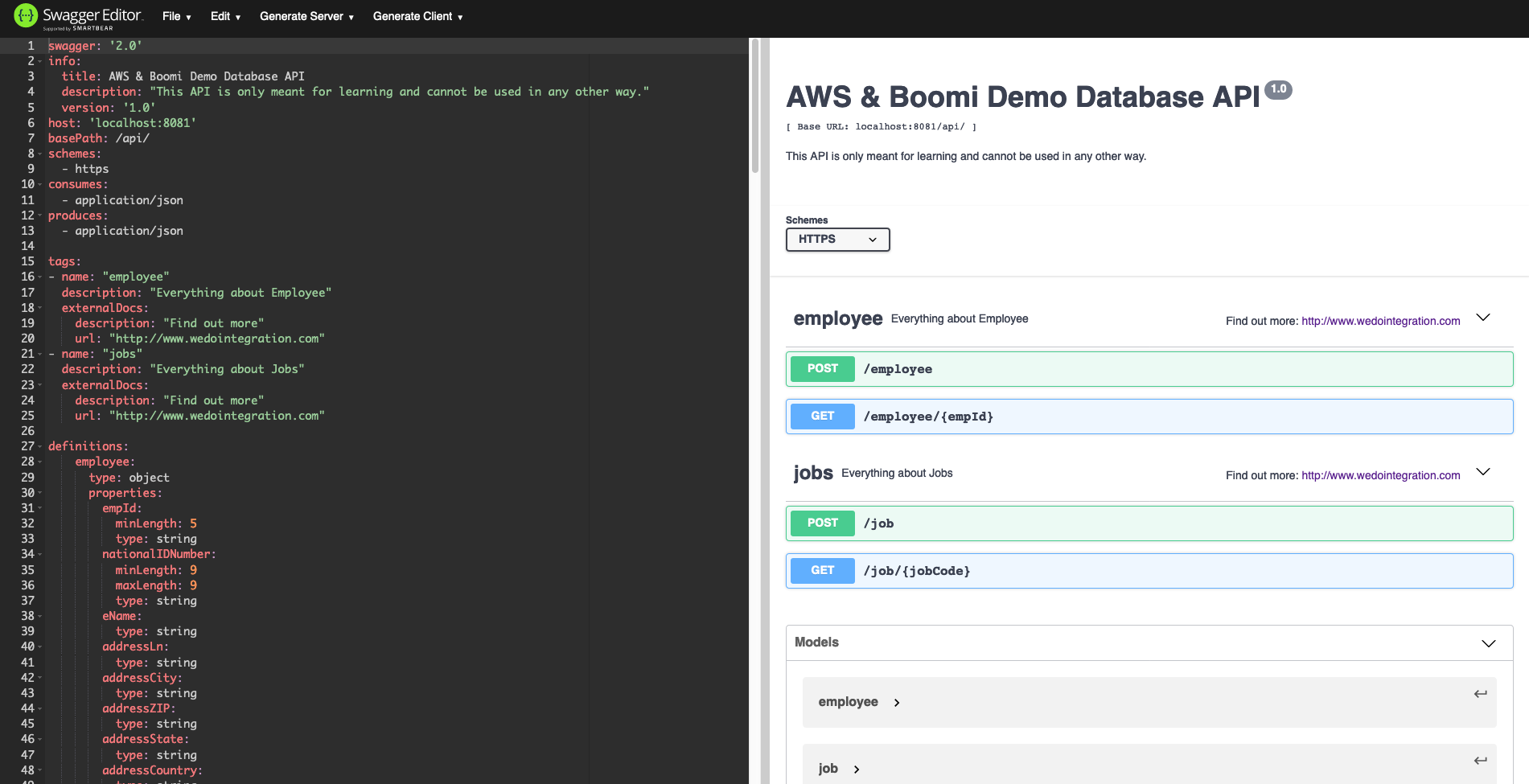

With the API-first approach, the first step in building an API is to create the contract. So, we will start by writing the API contract using the OpenAPI Specification in Swagger 2.0 format. We use Swagger 2.0 rather than the newer OAS 3.0 because both AWS and Dell Boomi recognize it.

You can write Swagger in various editors such as Atom, but there is also a website we can use for this: https://editor.swagger.io/. When you open it, a Swagger Petstore API example will already be loaded. You may delete it and start creating your API there.

Following is the API definition that I will use. Let us have two resources, one called /job and the other called /employee. I will also create four sample JSON files in the example folder: an object and an array for each of the two types.

    swagger: '2.0'
    info:
      title: AWS & Boomi Demo Database API
      description: "This API is only meant for learning and cannot be used in any other way."
      version: '1.0'
    host: 'localhost:8081'
    basePath: /api/
    schemes:
      - https
    consumes:
      - application/json
    produces:
      - application/json
    tags:
      - name: "employee"
        description: "Everything about Employee"
        externalDocs:
          description: "Find out more"
          url: "http://www.wedointegration.com"
      - name: "jobs"
        description: "Everything about Jobs"
        externalDocs:
          description: "Find out more"
          url: "http://www.wedointegration.com"
    definitions:
      employee:
        type: object
        properties:
          empId:
            minLength: 5
            type: string
          nationalIDNumber:
            minLength: 9
            maxLength: 9
            type: string
          eName:
            type: string
          addressLn:
            type: string
          addressCity:
            type: string
          addressZIP:
            type: string
          addressState:
            type: string
          addressCountry:
            type: string
          phone:
            type: string
          gender:
            enum:
              - Male
              - Female
            type: string
          birthDate:
            type: string
            format: date
        required:
          - empId
          - nationalIDNumber
          - eName
          - addressLn
          - addressCity
          - addressZIP
          - addressState
          - addressCountry
          - phone
          - gender
          - birthDate
      job:
        type: object
        properties:
          jobCode:
            type: string
          jobTitle:
            type: string
          jobDescription:
            type: string
          minQualification:
            type: string
          empClass:
            type: string
          minSalary:
            type: number
          maxSalary:
            type: number
          flsaStatus:
            type: string
        required:
          - jobCode
          - jobTitle
          - jobDescription
          - minQualification
          - empClass
          - minSalary
          - maxSalary
          - flsaStatus
      success:
        type: object
        properties:
          status:
            type: string
      badAuth:
        type: object
        properties:
          error:
            type: string
          description:
            type: string
      internalError:
        type: object
        properties:
          error:
            type: string
          description:
            type: string
    paths:
      /employee:
        post:
          description: Create new Employee
          tags:
            - "employee"
          operationId: postEmployee
          parameters:
            - in: header
              name: x-api-key
              type: string
              required: true
            - in: body
              schema:
                $ref: '#/definitions/employee'
              name: body
              required: true
          responses:
            201:
              description: 'Successful'
              schema:
                $ref: '#/definitions/success'
            403:
              description: Authentication error response.
              schema:
                $ref: '#/definitions/badAuth'
            500:
              description: Error response to indicate internal API error.
              schema:
                $ref: '#/definitions/internalError'
      /employee/{empId}:
        get:
          tags:
            - "employee"
          description: Get employee with employee Id.
          operationId: getEmployee
          parameters:
            - in: header
              name: x-api-key
              required: true
              type: string
            - in: path
              name: empId
              required: true
              type: string
          responses:
            200:
              description: 'Employee Data'
              schema:
                $ref: '#/definitions/employee'
            403:
              description: Authentication error response.
              schema:
                $ref: '#/definitions/badAuth'
            500:
              description: Error response to indicate internal API error.
              schema:
                $ref: '#/definitions/internalError'
      /job:
        post:
          tags:
            - "jobs"
          description: Create new Job
          operationId: postJob
          parameters:
            - in: header
              name: x-api-key
              type: string
              required: true
            - in: body
              schema:
                $ref: '#/definitions/job'
              name: body
              required: true
          responses:
            201:
              description: 'Successful'
              schema:
                $ref: '#/definitions/success'
            403:
              description: Authentication error response.
              schema:
                $ref: '#/definitions/badAuth'
            500:
              description: Error response to indicate internal API error.
              schema:
                $ref: '#/definitions/internalError'
      /job/{jobCode}:
        get:
          tags:
            - "jobs"
          description: Get Job by Job Code.
          operationId: getJob
          parameters:
            - in: header
              name: x-api-key
              required: true
              type: string
            - in: path
              name: jobCode
              required: true
              type: string
          responses:
            200:
              description: 'Job Data'
              schema:
                $ref: '#/definitions/job'
            403:
              description: Authentication error response.
              schema:
                $ref: '#/definitions/badAuth'
            500:
              description: Error response to indicate internal API error.
              schema:
                $ref: '#/definitions/internalError'
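
To make the contract a little more concrete, here is a minimal Python sketch of what a sample job payload and a sample employee payload could look like, together with a tiny check that every required field is present. The field names and required lists are copied from the definitions in the contract; the sample values and the helper name `missing_fields` are made up for illustration and are not part of the original API.

```python
import json

# Required fields, copied from the `job` and `employee` definitions.
JOB_REQUIRED = [
    "jobCode", "jobTitle", "jobDescription", "minQualification",
    "empClass", "minSalary", "maxSalary", "flsaStatus",
]
EMPLOYEE_REQUIRED = [
    "empId", "nationalIDNumber", "eName", "addressLn", "addressCity",
    "addressZIP", "addressState", "addressCountry", "phone", "gender",
    "birthDate",
]

# Hypothetical sample values, for illustration only.
sample_job = {
    "jobCode": "DEV01",
    "jobTitle": "Integration Developer",
    "jobDescription": "Builds and maintains integrations",
    "minQualification": "Bachelor",
    "empClass": "Full-time",
    "minSalary": 50000,
    "maxSalary": 90000,
    "flsaStatus": "Exempt",
}
sample_employee = {
    "empId": "E0001",                 # minLength 5
    "nationalIDNumber": "123456789",  # exactly 9 characters
    "eName": "Jane Doe",
    "addressLn": "1 Main Street",
    "addressCity": "Springfield",
    "addressZIP": "00000",
    "addressState": "NA",
    "addressCountry": "USA",
    "phone": "555-0100",
    "gender": "Female",               # enum: Male or Female
    "birthDate": "1990-01-31",        # format: date
}


def missing_fields(payload, required):
    """Return the required field names that are absent from the payload."""
    return [name for name in required if name not in payload]


print(json.dumps(sample_job, indent=2))
print(missing_fields(sample_job, JOB_REQUIRED))
print(missing_fields(sample_employee, EMPLOYEE_REQUIRED))
```

This only checks for presence of the required fields; it does not enforce the length, enum, or date format constraints from the contract.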

Once the Swagger definition is complete, you should be able to see all the endpoints in the tool. It should look something like this.

Step 2: Build the API Scaffolder in Dell Boomi

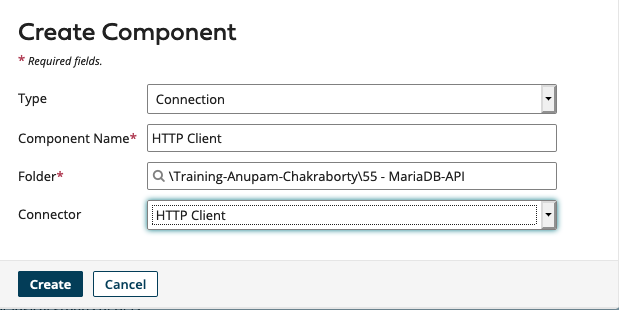

Now, let us build our backend API in Dell Boomi. We head over to https://platform.boomi.com and go to the Integration page. To keep my project organized, I will create a folder in the platform and name it MariaDB-API. Next, I will create one component that is needed to import our API: an HTTP Client in our folder, named HTTP Client.

We will not fill in anything for now; we just save this component by clicking Save and Close.

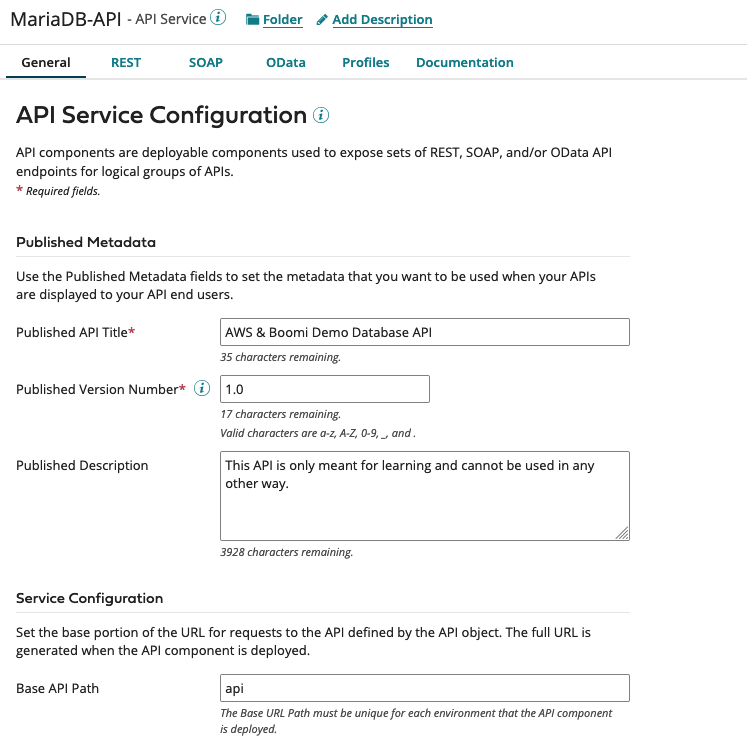

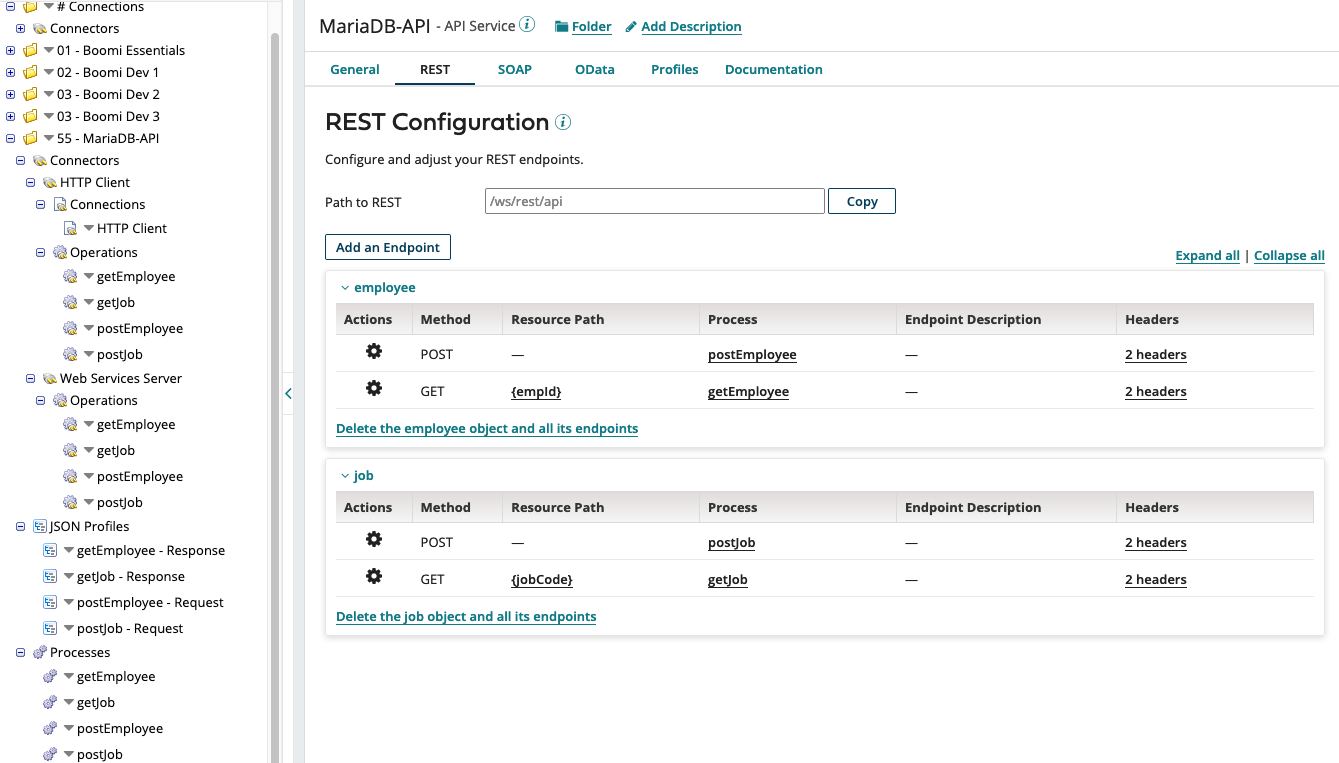

Next, we will create another component of type API and call it MariaDB-API. We will fill in the title, description, and version based on the details in our Swagger file.

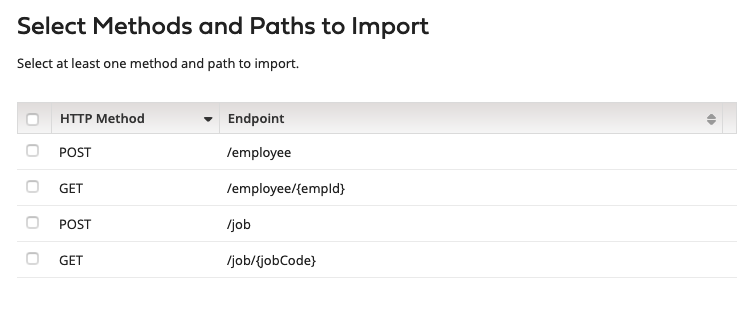

Now we move to the REST tab, click the Help Me Create an Endpoint button, and then select Import from an external service file. This allows us to import the Swagger file that we have already created. For the process location, we select the folder we are using. On the next page, we select the HTTP Client component that we created earlier. Let us continue with the Process Mode set to General. We can now see that all the endpoints we defined in our Swagger file are available. We select all of them and click Next.

We can review the summary and click Next to finish creating all the necessary components. Boomi creates a bunch of components: connectors, operations, JSON profiles, and a process for each of our endpoints. We should make sure to save the API itself.

At this stage, I will go over each endpoint and remove the Client ID and Client Secret parameters from the Boomi API. This is because we will authenticate to the Boomi API using basic authentication, which we will see later.

Step 3: Connect to Our Database Backend

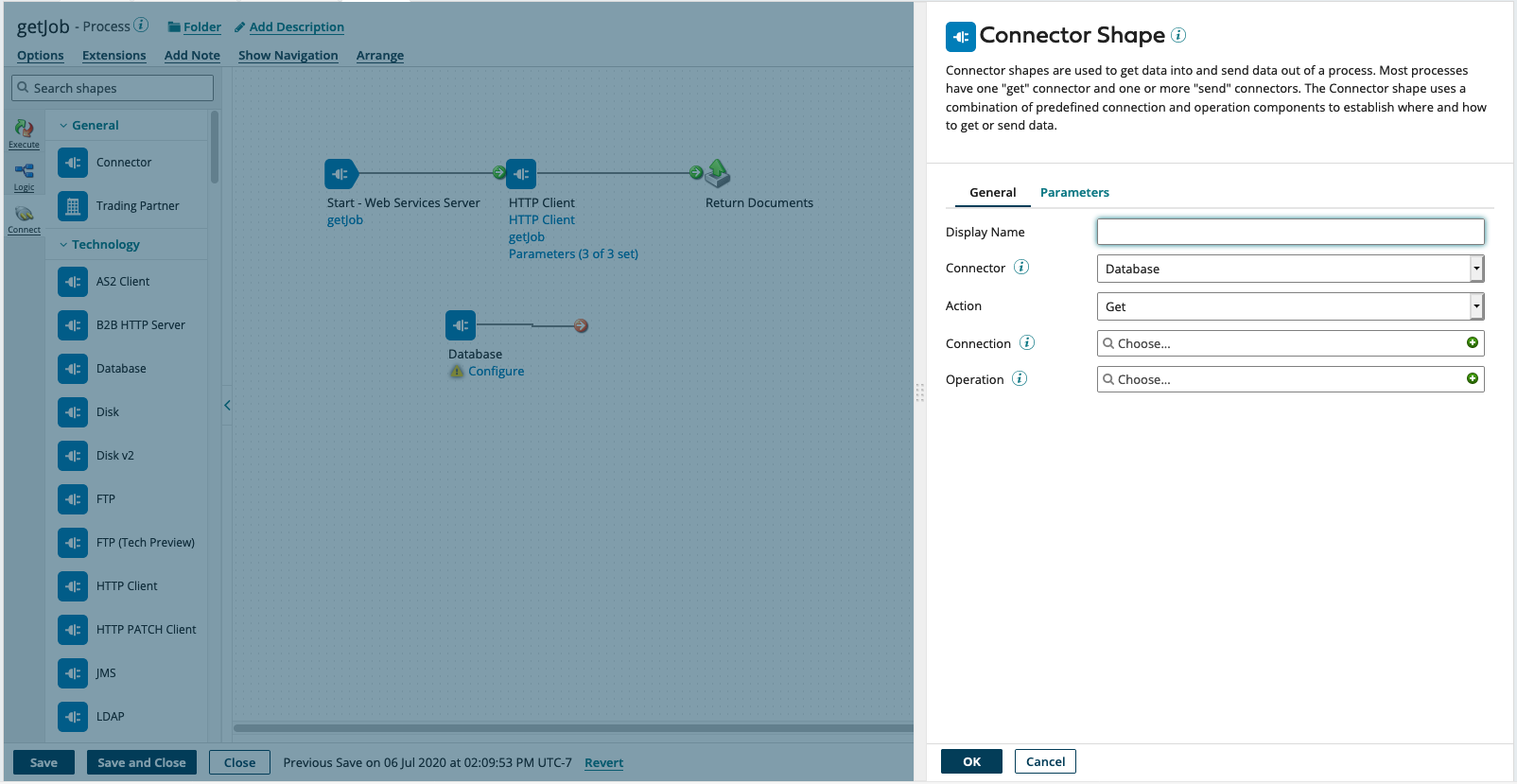

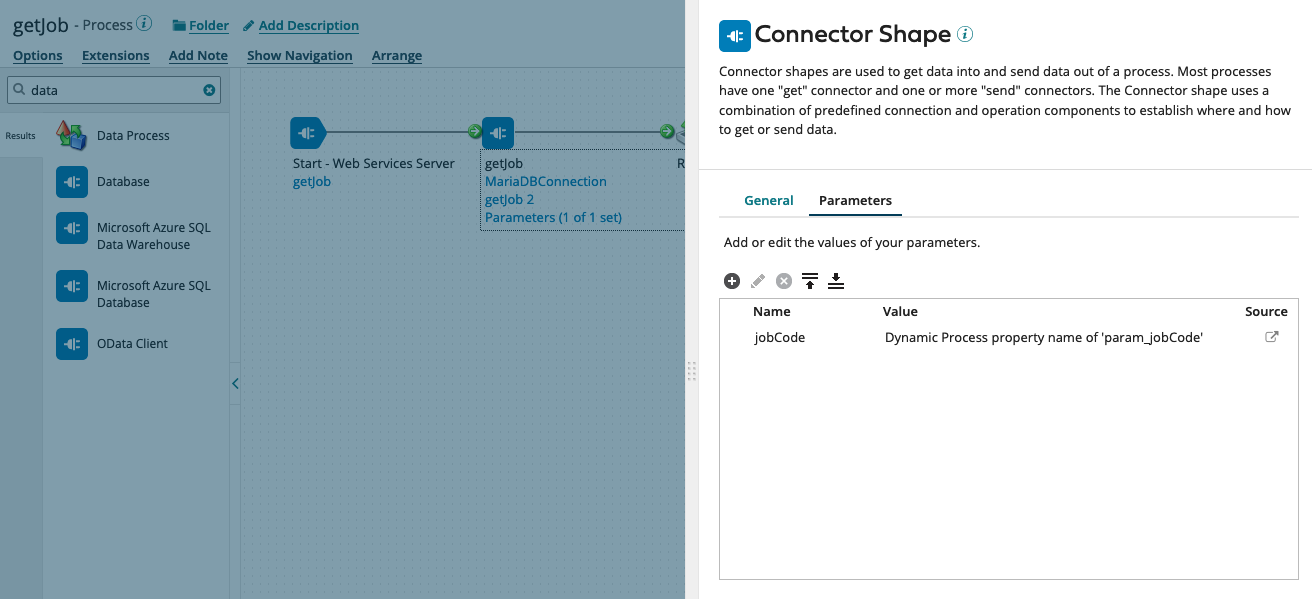

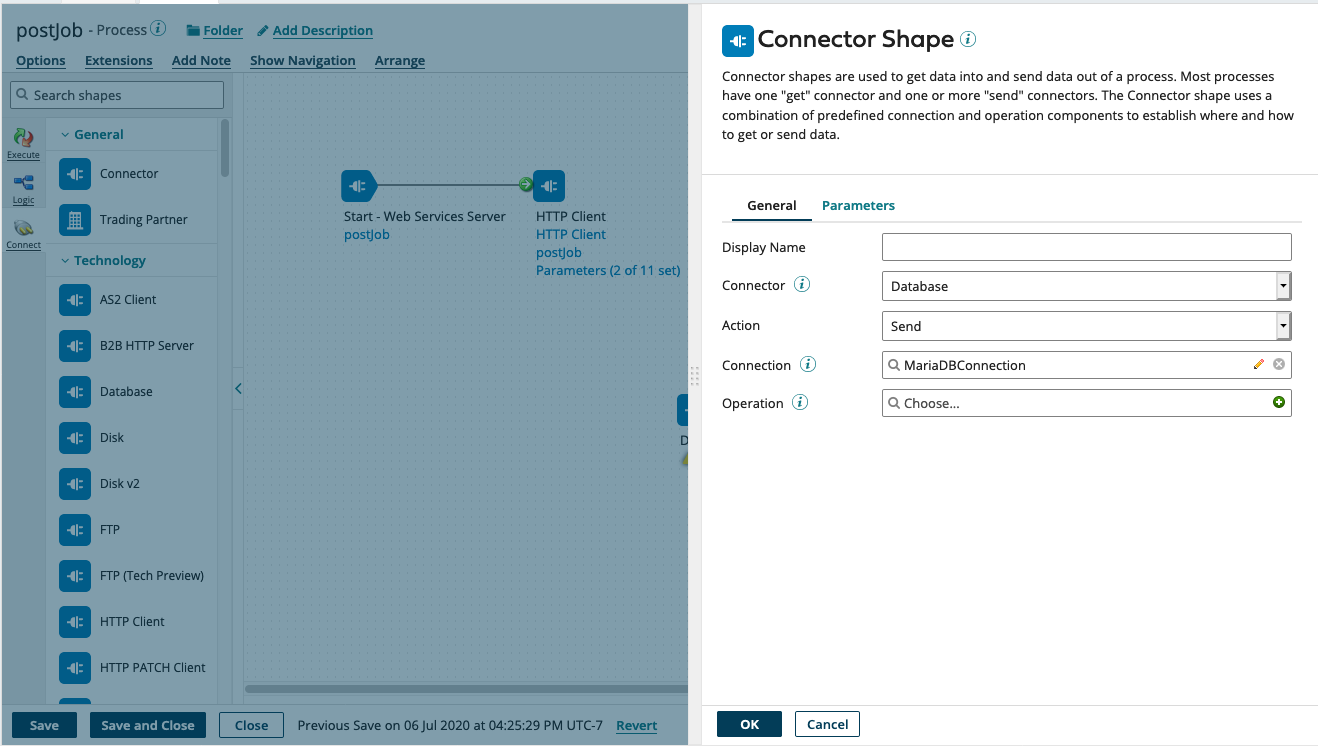

Now we will add the backend database connectivity to the getJob process. We will have to repeat the same for the getEmployee process as well. From the Component Explorer, we click on the getJob process and add a Database Connector component.

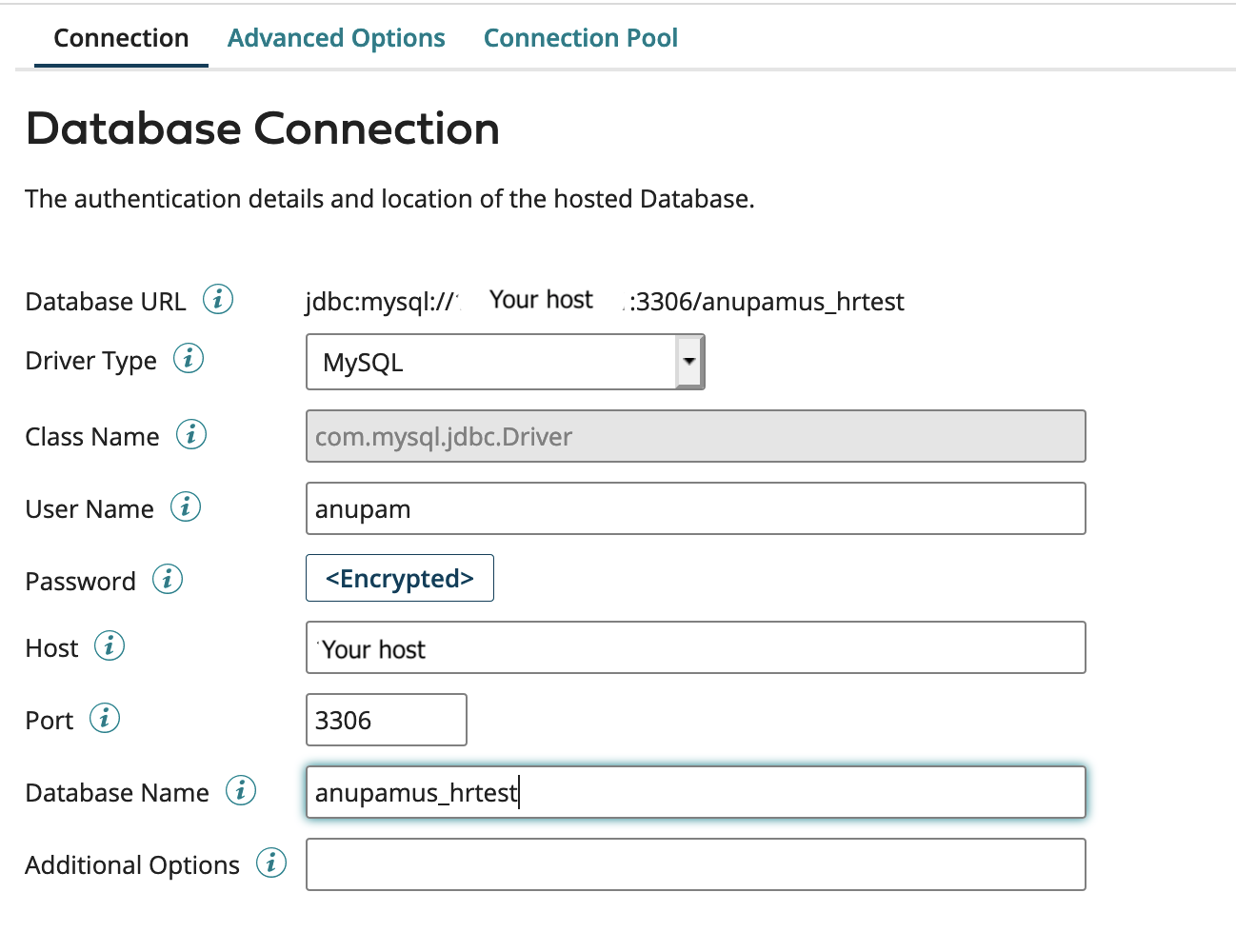

First, we will create a connection by clicking the + button beside the Connection option. For the driver type, we select MySQL and then fill in the username, password, host, port, and database name with our database details. Finally, we click Save and Close.

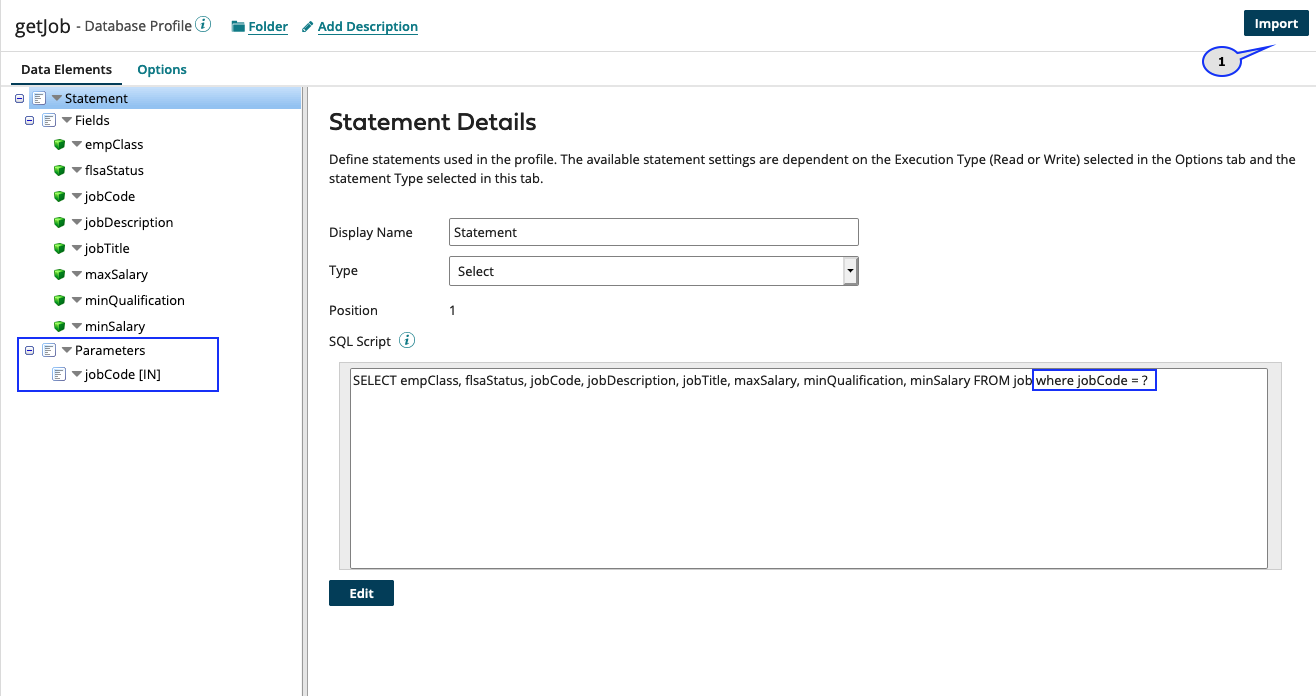

Next, we will create a new operation by clicking the + button beside the Operation option. I will name the operation getJob and then create a new database profile by clicking the + button in the Profile field. We will name the profile getJob as well. Now we click the Import button, select Use Boomi cloud in the Browse in field and the connection we previously created in the Connection field, and then click Next. We should see all the tables in our database listed here. I will choose the job table and click Next, select all the fields present in the table, click Next again, and finally click Finish.

Since we only want the details of one job, based on the job code, we will add a WHERE clause to the query with one parameter called job code, of type Characters.

We will arrange all the components in the process and bind the parameter in the Database operation to the Dynamic Process Property called param_jobCode.
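
Conceptually, the operation above runs a parameterized SELECT in which the job code from the request is bound to the WHERE clause. The sketch below illustrates that idea outside Boomi, using Python's built-in sqlite3 module as a stand-in for MariaDB. The table layout assumes the column names match the fields of the job definition, and the sample row and the function name `get_job` are made up for illustration.

```python
import sqlite3

# Parameterized query: the job code is bound to the `?` placeholder,
# never concatenated into the SQL text.
JOB_QUERY = (
    "SELECT jobCode, jobTitle, jobDescription, minQualification, "
    "empClass, minSalary, maxSalary, flsaStatus "
    "FROM job WHERE jobCode = ?"
)


def get_job(conn, job_code):
    """Return the job row for job_code as a dict, or None if not found."""
    conn.row_factory = sqlite3.Row
    row = conn.execute(JOB_QUERY, (job_code,)).fetchone()
    return dict(row) if row is not None else None


# In-memory stand-in for the MariaDB job table (assumed layout).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE job ("
    "jobCode TEXT PRIMARY KEY, jobTitle TEXT, jobDescription TEXT, "
    "minQualification TEXT, empClass TEXT, minSalary REAL, "
    "maxSalary REAL, flsaStatus TEXT)"
)
conn.execute(
    "INSERT INTO job VALUES (?, ?, ?, ?, ?, ?, ?, ?)",
    ("DEV01", "Developer", "Builds integrations", "Bachelor",
     "Full-time", 50000, 90000, "Exempt"),
)

print(get_job(conn, "DEV01"))
print(get_job(conn, "UNKNOWN"))
```

In the Boomi process, the role of the `job_code` argument is played by the param_jobCode Dynamic Process Property.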

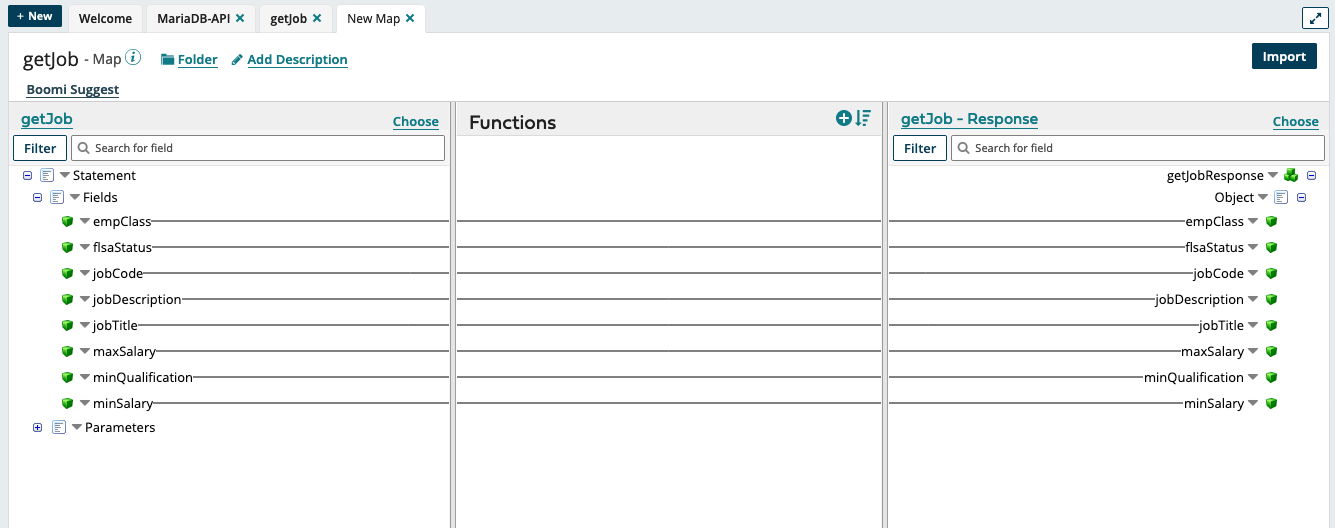

We also have to map the database profile to the response JSON profile. We add a Map shape and select the getJob database profile as the source and the getJob-Response JSON profile as the target. The field names should match, so it is a one-to-one mapping, and Boomi Suggest will do it for us.
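
For readers who think in code, a one-to-one mapping like this simply copies each database column to the JSON field with the same name. Here is a rough Python sketch of that idea; the function name `map_row_to_response` and the sample row are hypothetical, and the field list is taken from the job definition in the contract.

```python
import json

# Fields of the job response, as listed in the `job` definition.
RESPONSE_FIELDS = (
    "jobCode", "jobTitle", "jobDescription", "minQualification",
    "empClass", "minSalary", "maxSalary", "flsaStatus",
)


def map_row_to_response(row):
    """Copy each column to the same-named JSON field, ignoring extras."""
    return {field: row[field] for field in RESPONSE_FIELDS}


# Hypothetical database row; `lastUpdated` is not part of the response.
db_row = {
    "jobCode": "DEV01",
    "jobTitle": "Developer",
    "jobDescription": "Builds integrations",
    "minQualification": "Bachelor",
    "empClass": "Full-time",
    "minSalary": 50000,
    "maxSalary": 90000,
    "flsaStatus": "Exempt",
    "lastUpdated": "2020-07-06",
}

print(json.dumps(map_row_to_response(db_row), indent=2))
```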

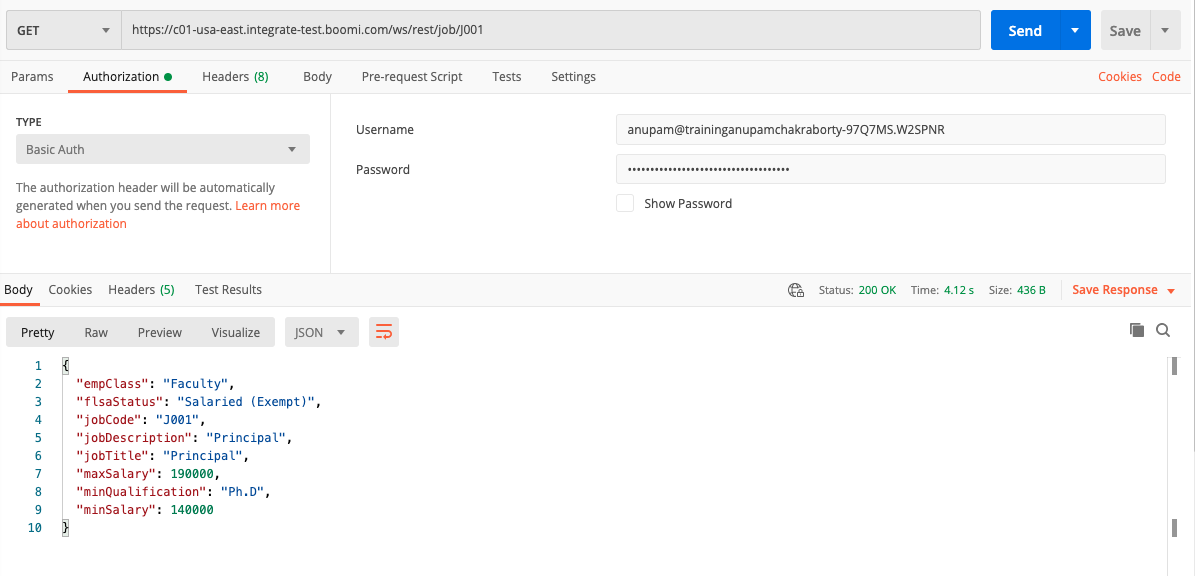

Now we will test this HTTP service to check that we can retrieve the data.

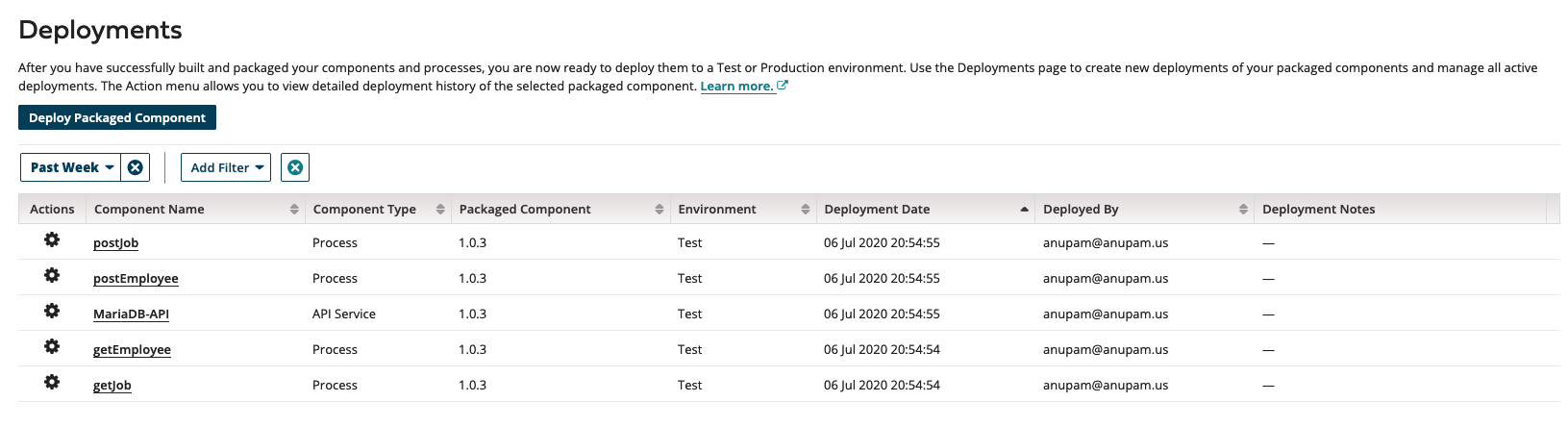

Step 4: Deploy Our Process

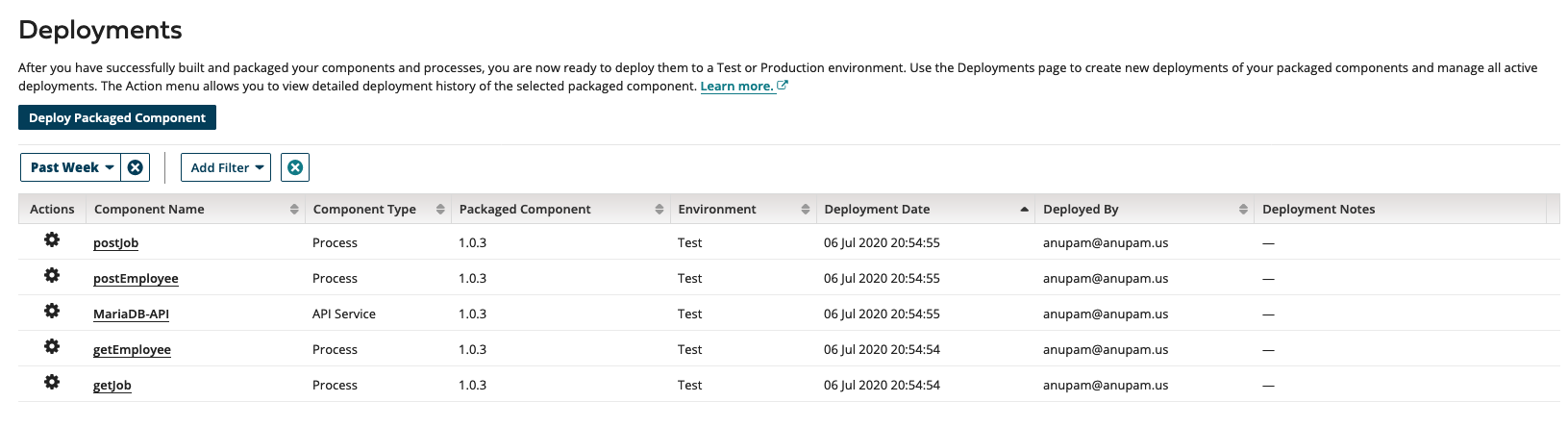

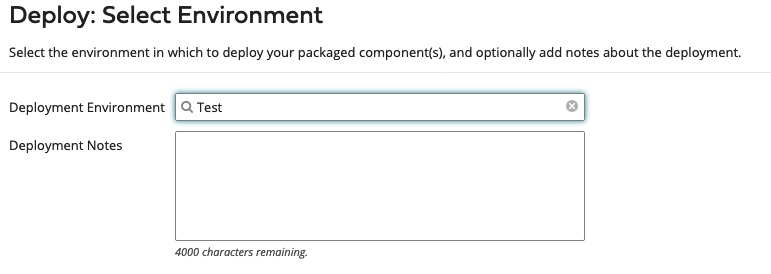

Our next step is to deploy all the processes that we have created to an Atom so that we can call these HTTP endpoints and check that our service works as expected. We click the Create Packaged Component button at the top of the process window, select all the processes and the API present in our folder, and create a packaged component.

Finally, we have to deploy our packaged component to an Atom. In my case, I am using a test Atom cloud.

After the deployment is complete, we can check the deployment status to see whether all the components were deployed successfully.

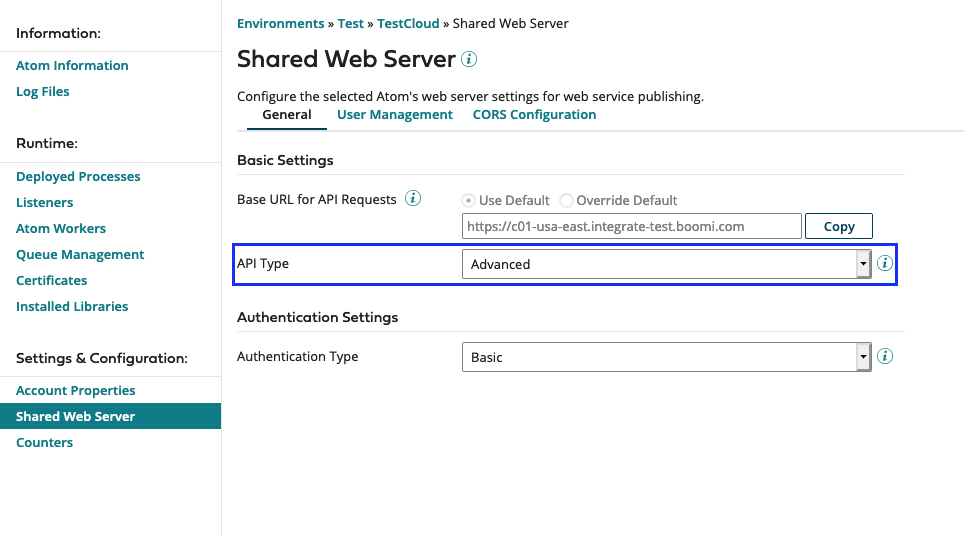

Step 5: Set up the Shared Web Server

The Shared Web Server panel appears on the Atom Management page (Manage > Atom Management). This panel is used to configure the selected Atom's web server settings for web service publishing. The important property we need to change is API Type: we have to set it to Advanced instead of Basic or Intermediate.

Next, we move over to the User Management tab and create a new user, which the HTTP client will use to authenticate to our API.

That should be it. Now we can go to our favorite HTTP client, such as Postman, and test our API. Keep in mind that for now only the GET job endpoint is working.
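
If you prefer a script over Postman, the same test call can be sketched with Python's standard library. The sketch below only builds the GET request with a basic authentication header and does not send it. The base URL, user name, and password are placeholders that I made up; use the base URL your own Atom exposes and the user you created in the User Management tab.

```python
import base64
import urllib.parse
import urllib.request


def build_get_job_request(base_url, username, password, job_code):
    """Build (but do not send) a GET /job/{jobCode} request with basic auth."""
    credentials = f"{username}:{password}".encode("utf-8")
    token = base64.b64encode(credentials).decode("ascii")
    # Percent-encode the job code so it is safe as a path segment.
    path_segment = urllib.parse.quote(job_code, safe="")
    url = f"{base_url.rstrip('/')}/job/{path_segment}"
    request = urllib.request.Request(url, method="GET")
    request.add_header("Authorization", f"Basic {token}")
    request.add_header("Accept", "application/json")
    return request


# Placeholder values, for illustration only.
request = build_get_job_request(
    "https://boomi.example.com/ws/rest/api",
    "demo-user",
    "demo-token",
    "DEV01",
)
print(request.get_method(), request.full_url)
```

To actually send it, you could pass the request to `urllib.request.urlopen(request)` and read the JSON body from the response.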

Step 6: Code for POST Job

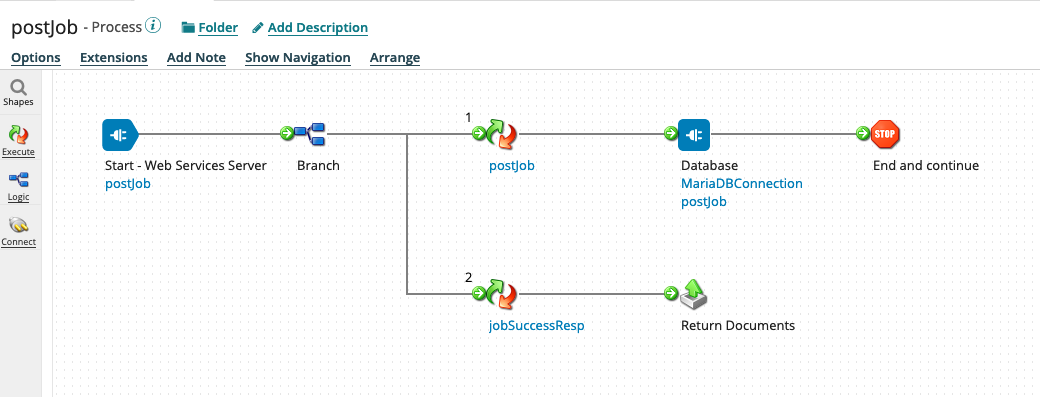

Let us now go back to the Build tab in the Boomi platform and build the postJob process. As with the getJob process, we will have to add a database connector and create a database profile for postJob.

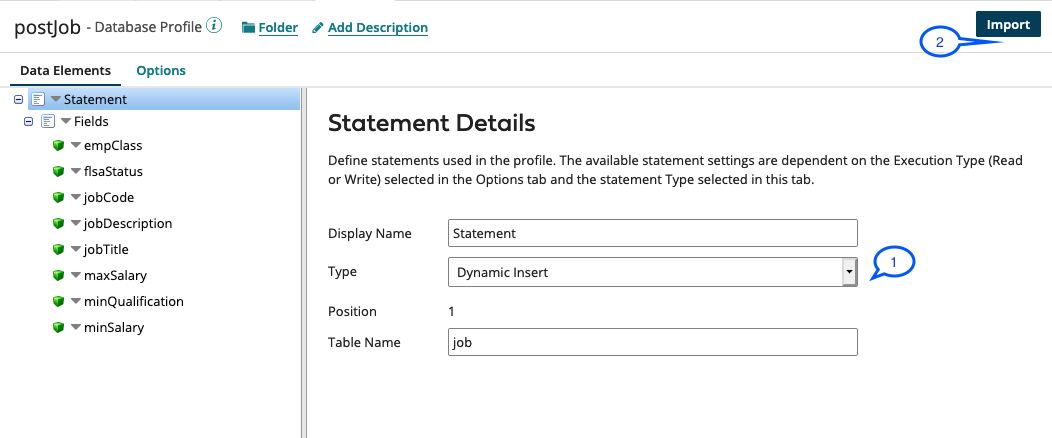

We will reuse the existing MariaDB connection and create a new operation. We will name the operation postJob and create a database profile for it. For the database profile, we will use the type Dynamic Insert and import our database table the same way we did for the getJob profile.

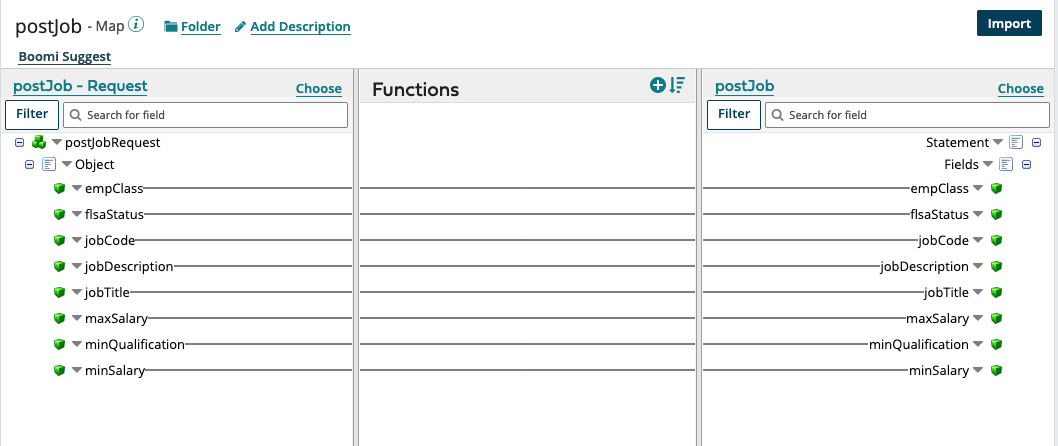

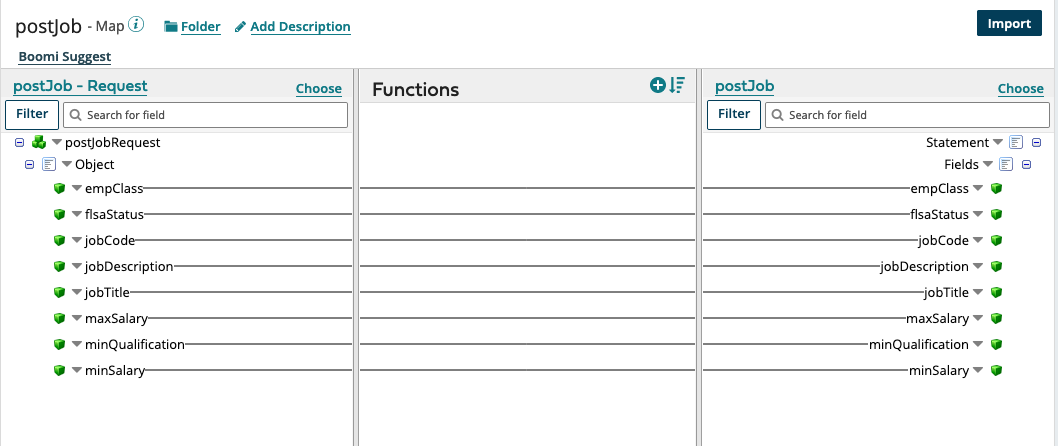

We will save and close each component that we have created and then add a Map shape to our process. This Map shape will convert the JSON profile into the database profile. Once again, the field names should be the same, so it will be a one-to-one mapping.

We will also manually create a JSON profile named success-Response. It will have only one field called status, and we will use it as the response for our POST HTTP endpoints.

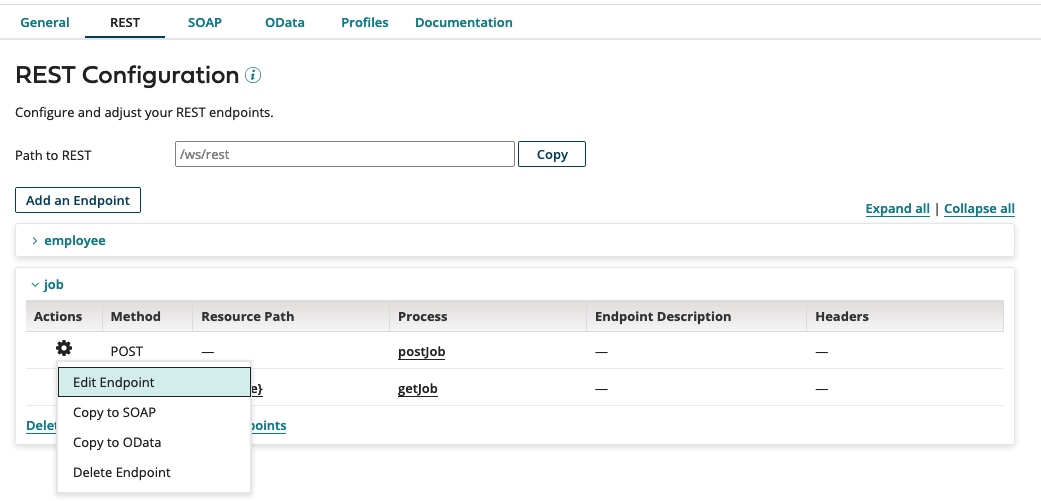

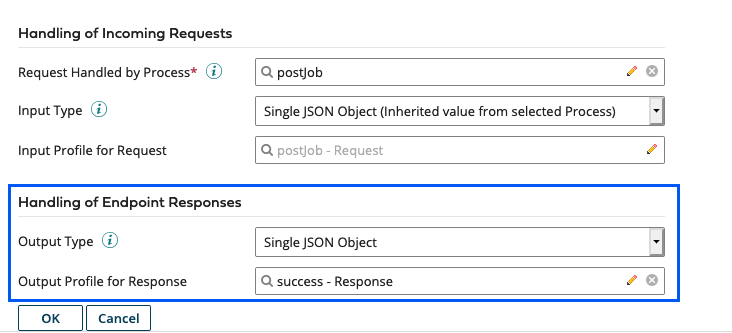

After creating this response JSON profile, we go to our API service MariaDB-API and manually edit the postJob and postEmployee endpoints.

Here we need to scroll down and change the Handling of Endpoint Responses to add the success-Response JSON profile as a single JSON object.

We will use a Branch shape to create a second branch that maps to the success response. This is needed because the database connector does not return any data after the insert, so the first branch does not continue.
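
The two branches can be pictured as two consecutive steps: first insert the row, then build the success response separately, because the insert itself returns nothing to pass along. Below is a minimal Python sketch of that flow, again using the built-in sqlite3 module as a stand-in for MariaDB. The function name `post_job`, the status value "success", and the sample payload are assumptions for illustration; the original only says the response has a single field called status.

```python
import json
import sqlite3

JOB_COLUMNS = (
    "jobCode", "jobTitle", "jobDescription", "minQualification",
    "empClass", "minSalary", "maxSalary", "flsaStatus",
)


def post_job(conn, payload):
    """Insert the job, then return the success response as a JSON string."""
    # Branch 1: build the INSERT from the column list and bind the values.
    columns = ", ".join(JOB_COLUMNS)
    placeholders = ", ".join("?" for _ in JOB_COLUMNS)
    sql = f"INSERT INTO job ({columns}) VALUES ({placeholders})"
    conn.execute(sql, tuple(payload[name] for name in JOB_COLUMNS))
    conn.commit()
    # Branch 2: the insert yields no document, so the response is built here.
    return json.dumps({"status": "success"})


# In-memory stand-in for the MariaDB job table (assumed layout).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE job ("
    "jobCode TEXT PRIMARY KEY, jobTitle TEXT, jobDescription TEXT, "
    "minQualification TEXT, empClass TEXT, minSalary REAL, "
    "maxSalary REAL, flsaStatus TEXT)"
)

# Hypothetical request body.
new_job = {
    "jobCode": "QA01",
    "jobTitle": "QA Engineer",
    "jobDescription": "Tests integrations",
    "minQualification": "Bachelor",
    "empClass": "Full-time",
    "minSalary": 45000,
    "maxSalary": 80000,
    "flsaStatus": "Exempt",
}

response_body = post_job(conn, new_job)
print(response_body)
```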

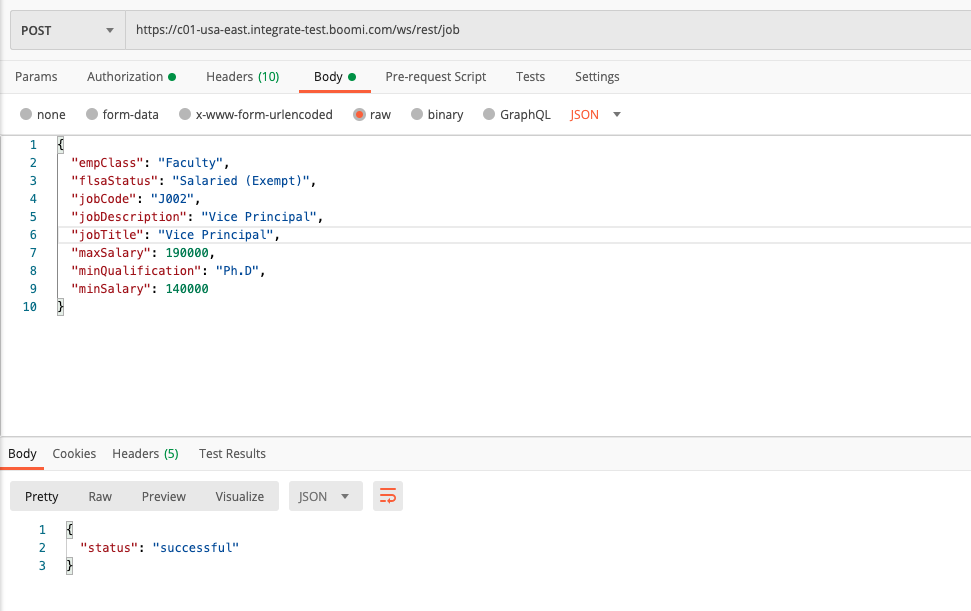

We can now test our endpoint from Postman and see the data being created in the backend MariaDB system.

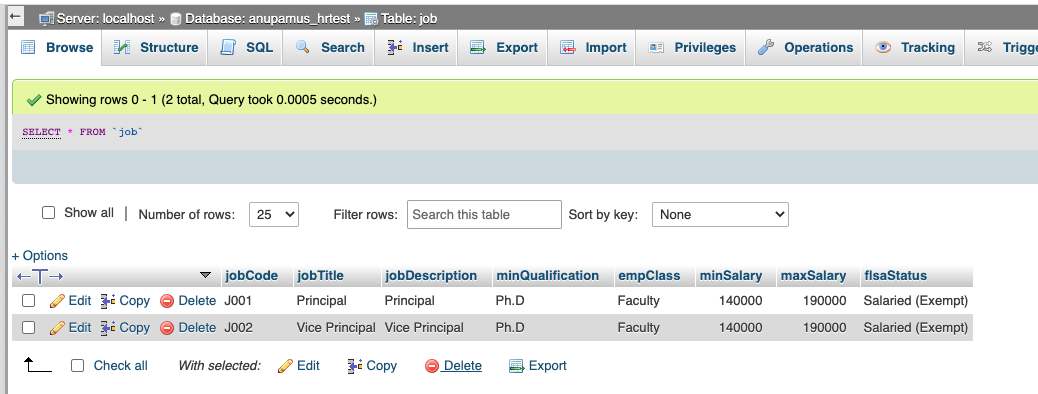

As we can see in the phpMyAdmin console, our data is visible in the database table.

Step 7: Repeat the Above Steps for the Employee API

We will have to repeat the above steps for the getEmployee and postEmployee processes. Once that is complete, we will continue in the next blog, where we will integrate AWS API Gateway with the Dell Boomi processes. You can find it in Build an API Using AWS API Gateway and Dell Boomi - Step 2.

Thank you for reading my blog, and please feel free to let me know what you think about it.
