Conquering Highest Scalability of an Enterprise Chat Application Using Kubernetes

The infrastructure behind enterprise messaging applications is hard to trace from the outside. Learn more about how well Node.js lends itself to autoscaling.


With over 1.5 billion monthly active users interacting with each other, WhatsApp is among the most scalable applications on the market. Alongside WhatsApp, a large number of enterprise chat applications such as Slack, Flock, and Zoom connect millions of users who exchange billions of messages, depending on the use case.

The infrastructure of such enterprise messaging applications is hard to trace from the outside. We know that Node.js scales well and can handle 1 million users, but what if the number of users grows beyond the limit you planned for?

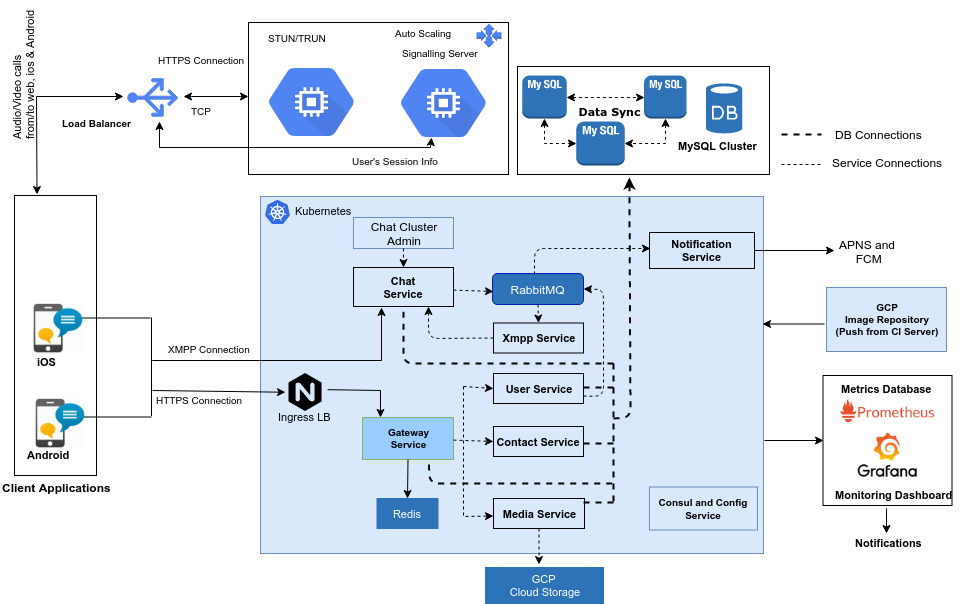

After working extensively with several chat app architectures, we found that chat rooms tend to be where the problems show up. Below is the architecture of an enterprise chat application [MirrorFly], built on a microservice architecture.

Kubernetes is an open-source platform for managing containerized services and one of the leading solutions on the market, so it handles the core services.
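To make the scaling behavior concrete, here is a minimal sketch of the replica calculation that the Kubernetes Horizontal Pod Autoscaler documents: desired replicas = ceil(current replicas × current metric / target metric), with a default tolerance of 10% around the target. The article does not say which metric or targets this deployment uses, so the "connections per pod" figures in the usage lines are hypothetical.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    tolerance: float = 0.1,
) -> int:
    """Replica count following the documented HPA scaling rule."""
    ratio = current_metric / target_metric
    # Inside the tolerance band the autoscaler leaves the deployment alone.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    return math.ceil(current_replicas * ratio)


# Hypothetical numbers: 4 pods, each targeted at 5,000 connections.
scale_up = desired_replicas(4, current_metric=9000, target_metric=5000)
steady = desired_replicas(4, current_metric=5200, target_metric=5000)
scale_down = desired_replicas(10, current_metric=2500, target_metric=5000)
```

With these assumed inputs the first call scales up, the second stays put because it is within tolerance, and the third scales down.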

1. Client-Server Workflow

Clients: In our architecture, there are three types of clients: the iOS and Android mobile applications and the Web Chat. The mobile applications first communicate with the server through the API Gateway Service, which issues the client an access token that authorizes every subsequent communication and service call.

Load Balancer: The web chat communicates the same way and is hosted alongside the Web Admin app. A load balancer is used here to handle the request traffic coming from the web client.

Cache Server: The Identity Service, backed by Redis, caches the access tokens and so reduces database operations.
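The caching idea can be sketched as a cache-aside lookup: check the cache first and only fall back to the database on a miss. The sketch below uses an in-memory dictionary as a stand-in for Redis so that it runs without a server. The class name `TokenCache`, the helper `resolve_user`, and the TTL value are all hypothetical and not taken from the MirrorFly implementation.

```python
import time
from typing import Callable, Dict, Optional, Tuple


class TokenCache:
    """In-memory stand-in for a Redis-style cache with per-key expiry."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: Dict[str, Tuple[str, float]] = {}

    def put(self, token: str, user_id: str) -> None:
        self._entries[token] = (user_id, self._clock() + self._ttl)

    def get(self, token: str) -> Optional[str]:
        entry = self._entries.get(token)
        if entry is None:
            return None
        user_id, expires_at = entry
        if self._clock() >= expires_at:
            # Expired entries are dropped lazily on access.
            del self._entries[token]
            return None
        return user_id


def resolve_user(token: str, cache: TokenCache,
                 load_from_db: Callable[[str], Optional[str]]) -> Optional[str]:
    """Cache-aside lookup: only a cache miss reaches the database."""
    user_id = cache.get(token)
    if user_id is None:
        user_id = load_from_db(token)
        if user_id is not None:
            cache.put(token, user_id)
    return user_id
```

Repeated requests carrying the same token are then served from the cache until the entry expires, which is where the reduction in database operations comes from.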

Monitoring Service: We also use Grafana's monitoring dashboard for a detailed view of all the operations that take place. Now, let's take a look at the components of our server and their roles in the architecture.

Because microservices are easier to develop, deploy, and debug, a set of core microservices is used to scale the chat application.

The core microservices that are used:

- Gateway Service.

- Contact Service.

- User Service.

- Media Service.

- XMPP Service.

- Notification Service.

- RabbitMQ Service.

2. Gateway/Auth Service

The Gateway, as the name indicates, is the entry point into the application. The service contains the following APIs:

- Login — Used to authenticate the users.

- Logout — Used to log out the users from the application.

- Register — Used to register a user in the application.
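The three gateway operations above can be sketched as a small in-memory service. This is an illustration only: the class `GatewayService`, its method names, and the choice of PBKDF2 for password hashing are assumptions, not details of the actual Gateway/Auth Service.

```python
import hashlib
import hmac
import secrets
from typing import Dict, Optional, Tuple


class GatewayService:
    """Hypothetical in-memory model of register, login, and logout."""

    def __init__(self) -> None:
        self._users: Dict[str, Tuple[bytes, bytes]] = {}  # username -> (salt, hash)
        self._sessions: Dict[str, str] = {}               # access token -> username

    @staticmethod
    def _hash(password: str, salt: bytes) -> bytes:
        return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, 100_000)

    def register(self, username: str, password: str) -> bool:
        if username in self._users:
            return False
        salt = secrets.token_bytes(16)
        self._users[username] = (salt, self._hash(password, salt))
        return True

    def login(self, username: str, password: str) -> Optional[str]:
        record = self._users.get(username)
        if record is None:
            return None
        salt, expected = record
        # Constant-time comparison avoids leaking how many bytes matched.
        if not hmac.compare_digest(expected, self._hash(password, salt)):
            return None
        token = secrets.token_urlsafe(32)
        self._sessions[token] = username
        return token

    def logout(self, token: str) -> bool:
        return self._sessions.pop(token, None) is not None

    def is_authorized(self, token: str) -> bool:
        return token in self._sessions
```

A client would call `register` once, `login` to obtain an access token, present that token on later requests, and `logout` to invalidate it.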

What performance can you expect from this architecture setup?

- 14 Million messages per day.

- Max of 200 messages per second.
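The two figures can be checked against each other with a little arithmetic. The sketch below assumes that "200 messages per second" is a ceiling on throughput and that the 14 million messages are spread over a full 24-hour day; the article does not state either assumption explicitly.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400

messages_per_day = 14_000_000
peak_messages_per_second = 200

# Average rate implied by the daily total.
average_rate = messages_per_day / SECONDS_PER_DAY

# Daily total if the peak rate were sustained around the clock.
daily_ceiling = peak_messages_per_second * SECONDS_PER_DAY

# Share of that ceiling used by the stated daily volume.
utilisation = messages_per_day / daily_ceiling

print(f"average rate: {average_rate:.1f} msg/s")
print(f"daily ceiling at peak: {daily_ceiling:,} messages")
print(f"utilisation: {utilisation:.0%}")
```

Under those assumptions the daily figure corresponds to an average of roughly 162 messages per second, a little over 80% of the stated maximum.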

An architecture like this is worth the spend, because it gives you an enterprise-grade system.

Next comes the architecture of the call service system.

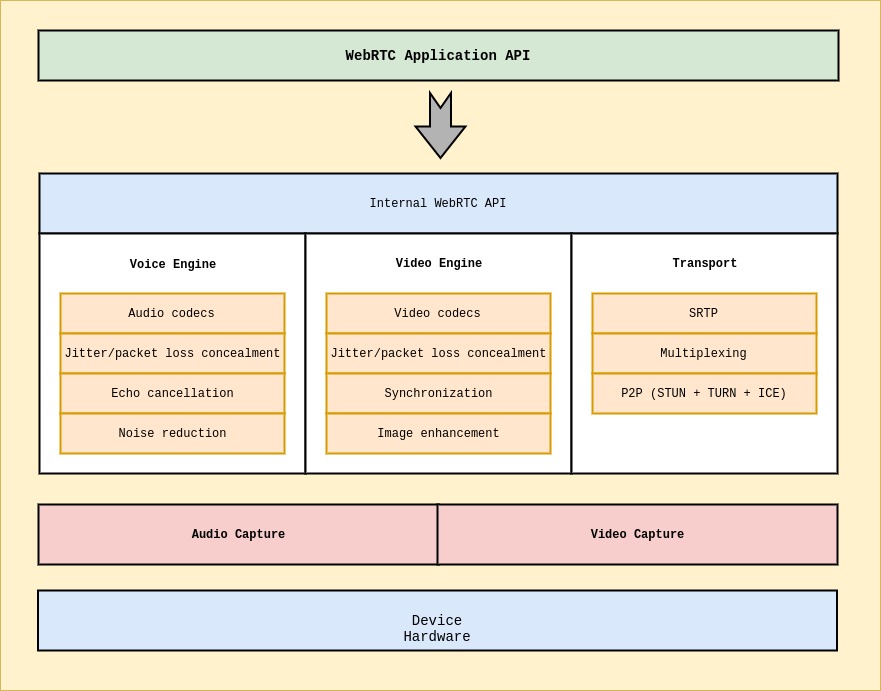

Let's go through each component in detail.

Web API: An API for third-party developers to build web-based, video-chat-style applications. The latest proposal can be found here.

Transport/Session: The session components are built by re-using components from libjingle, without using or requiring the XMPP/jingle protocol.

RTP Stack: A network stack for RTP, the Real-time Transport Protocol.
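As an illustration of what an RTP stack deals with, here is a minimal sketch that parses the 12-byte fixed RTP header laid out in RFC 3550. It ignores CSRC entries and header extensions, and the names `RtpHeader` and `parse_rtp_header` are made up for this example.

```python
import struct
from typing import NamedTuple


class RtpHeader(NamedTuple):
    version: int
    padding: bool
    extension: bool
    csrc_count: int
    marker: bool
    payload_type: int
    sequence_number: int
    timestamp: int
    ssrc: int


def parse_rtp_header(packet: bytes) -> RtpHeader:
    """Parse the fixed 12-byte RTP header (network byte order)."""
    if len(packet) < 12:
        raise ValueError("RTP packet is shorter than the 12-byte fixed header")
    first, second, seq, timestamp, ssrc = struct.unpack("!BBHII", packet[:12])
    return RtpHeader(
        version=first >> 6,               # top 2 bits
        padding=bool(first & 0x20),
        extension=bool(first & 0x10),
        csrc_count=first & 0x0F,          # low 4 bits
        marker=bool(second & 0x80),
        payload_type=second & 0x7F,       # low 7 bits
        sequence_number=seq,
        timestamp=timestamp,
        ssrc=ssrc,
    )


# Build a sample packet: version 2, marker set, payload type 96.
sample = struct.pack("!BBHII", 0x80, 0x80 | 96, 4660, 160, 0xDEADBEEF) + b"payload"
header = parse_rtp_header(sample)
```

The sequence number and timestamp recovered here are what the jitter buffers described later rely on to reorder and pace media.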

STUN/ICE: A component allowing calls to use the STUN and ICE mechanisms to establish connections across various types of networks.
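One concrete piece of ICE is how candidates are ranked. The sketch below applies the priority formula from RFC 8445, priority = 2^24 × type preference + 2^8 × local preference + (256 − component ID), using the type preference values the RFC recommends. The function name and the default local preference of 65535 are choices made for this example.

```python
# Type preferences recommended by RFC 8445 (higher is preferred).
TYPE_PREFERENCE = {
    "host": 126,   # address on a local interface
    "prflx": 110,  # peer-reflexive, learned during connectivity checks
    "srflx": 100,  # server-reflexive, learned from a STUN server
    "relay": 0,    # relayed through a TURN server
}


def candidate_priority(candidate_type: str,
                       local_preference: int = 65535,
                       component_id: int = 1) -> int:
    """Compute an ICE candidate priority per the RFC 8445 formula."""
    if not 0 <= local_preference <= 65535:
        raise ValueError("local preference must fit in 16 bits")
    if not 1 <= component_id <= 256:
        raise ValueError("component ID must be between 1 and 256")
    type_preference = TYPE_PREFERENCE[candidate_type]
    return (type_preference << 24) + (local_preference << 8) + (256 - component_id)


# Rank candidate types from most to least preferred.
ranked = sorted(TYPE_PREFERENCE, key=candidate_priority, reverse=True)
```

Direct host candidates rank first and relayed candidates last, which matches the intent of trying the cheapest network path before falling back to a relay.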

Session Management: An abstracted session layer that allows for call setup and management. This leaves the protocol implementation decision to the application developer.

VoiceEngine: VoiceEngine is a framework for the audio media chain, from sound card to the network.

iSAC/iLBC/Opus

iSAC: A wideband and super wideband audio codec for VoIP and streaming audio. iSAC uses 16 kHz or 32 kHz sampling frequency with an adaptive and variable bit rate of 12 to 52 kbps.

iLBC: A narrowband speech codec for VoIP and streaming audio. Uses 8 kHz sampling frequency with a bitrate of 15.2 kbps for 20ms frames and 13.33 kbps for 30ms frames. Defined by IETF RFCs 3951 and 3952.
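The two iLBC bitrates follow directly from the encoded frame sizes given in RFC 3951: 38 bytes for a 20 ms frame and 50 bytes for a 30 ms frame. A short check, with a helper name chosen for this example:

```python
def bitrate_kbps(frame_bytes: int, frame_ms: float) -> float:
    """Bits per millisecond equals kilobits per second."""
    return frame_bytes * 8 / frame_ms


# Encoded frame sizes from RFC 3951.
rate_20ms = bitrate_kbps(frame_bytes=38, frame_ms=20)  # 304 bits every 20 ms
rate_30ms = bitrate_kbps(frame_bytes=50, frame_ms=30)  # 400 bits every 30 ms

print(f"20 ms mode: {rate_20ms:.2f} kbps")
print(f"30 ms mode: {rate_30ms:.2f} kbps")
```

The longer frame is the more bandwidth-efficient of the two, at the cost of 10 ms more packetization delay.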

Opus: Supports constant and variable bitrate encoding from 6 kbit/s to 510 kbit/s, frame sizes from 2.5 ms to 60 ms, and various sampling rates from 8 kHz (with 4 kHz bandwidth) to 48 kHz (with 20 kHz bandwidth, where the entire hearing range of the human auditory system can be reproduced). Defined by IETF RFC 6716.

NetEQ for Voice

A dynamic jitter buffer and error concealment algorithm used for concealing the negative effects of network jitter and packet loss. Keeps latency as low as possible while maintaining the highest voice quality.
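NetEQ's own algorithm is not described here, so the following is not a model of it. It is a minimal sketch of the interarrival jitter estimate defined in RFC 3550, J = J + (|D| − J) / 16, which is the kind of measurement a dynamic jitter buffer could use to decide how much audio to hold back. The function name and the sample timings are made up for this example.

```python
from typing import Sequence


def interarrival_jitter(send_times: Sequence[float],
                        arrival_times: Sequence[float]) -> float:
    """Running RFC 3550 jitter estimate, in the same unit as the inputs."""
    if len(send_times) != len(arrival_times):
        raise ValueError("need one arrival time per send time")
    jitter = 0.0
    for i in range(1, len(send_times)):
        # D: how much the spacing between two packets changed in transit.
        transit_delta = ((arrival_times[i] - arrival_times[i - 1])
                         - (send_times[i] - send_times[i - 1]))
        # Smooth with gain 1/16, as specified by RFC 3550.
        jitter += (abs(transit_delta) - jitter) / 16.0
    return jitter


# Packets sent every 20 ms; the third one arrives 16 ms late.
steady = interarrival_jitter([0, 20, 40], [5, 25, 45])
one_late = interarrival_jitter([0, 20, 40], [5, 25, 61])
```

A constant network delay produces no jitter at all, while a single late packet nudges the estimate up only slightly because of the 1/16 smoothing.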

Acoustic Echo Canceler (AEC)

The Acoustic Echo Canceler is a software-based signal processing component that removes, in real-time, the acoustic echo resulting from the voice being played out coming into the active microphone.

Noise Reduction (NR)

The Noise Reduction component is a software-based signal processing component that removes certain types of background noise usually associated with VoIP (hiss, fan noise, etc.).

VideoEngine

VideoEngine is a framework for the video media chain, from the camera to the network and from the network to the screen.

VP8

Video codec from the WebM Project. Well suited for RTC as it is designed for low latency.

Video Jitter Buffer

Dynamic Jitter Buffer for video. It helps conceal the effects of jitter and packet loss on overall video quality.

Image Enhancements

For example, it removes video noise from the image captured by the webcam.

Putting It All Together

What we have shown here is the power of Kubernetes and a microservices system, and the essence of an enterprise chat API solution that can scale beyond 1,000,000 concurrent connections. The core benefit of an enterprise chat architecture is that it is quick, scalable, and a reliable investment.

When Do You Need to Go With an Enterprise Chat API Solution Like This?

- When your communication platform runs on outdated technology and you expect better performance, a third-party architecture like this is probably the best fit for your business needs.

- If you are a technology development team and want to develop a chat application that connects users across the globe, then an enterprise chat architecture is something you need to consider.

