Deal With Multi-Tenant Data in Solr

Different techniques can be used to handle multi-tenant data in Solr. This article discusses routing techniques you can use depending on the size and number of shards.

Many Solr users need to handle multi-tenant data. There are different techniques for dealing with this situation, some good and some not so good. Routing is one of the solutions: it lets you efficiently divide the clients and put them into dedicated shards while still using all the goodness of SolrCloud. In this blog post I will show you how to deal with some of the problems that come up with this solution: the different number of documents in shards and the uneven load.

Imagine that your Solr instance indexes your clients’ data. It’s a good bet that not every client has the same amount of data, as there are smaller and larger clients. Because of this it is not easy to find the perfect solution that will work for everyone. However, I can tell you one thing: it is usually best to avoid creating a collection per tenant. Having hundreds or thousands of collections inside a single SolrCloud cluster will most likely cause maintenance headaches and can stress the SolrCloud and ZooKeeper nodes that work together. Let’s assume that we would rather go to the other side of the fence and use a single large collection with many shards for all the data we have.

No Routing At All

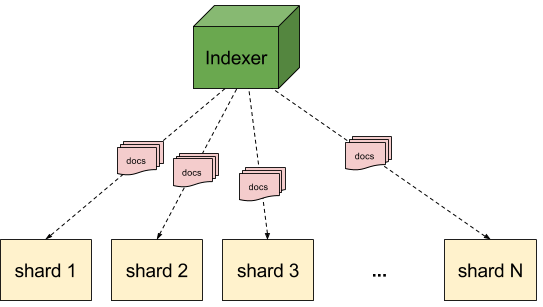

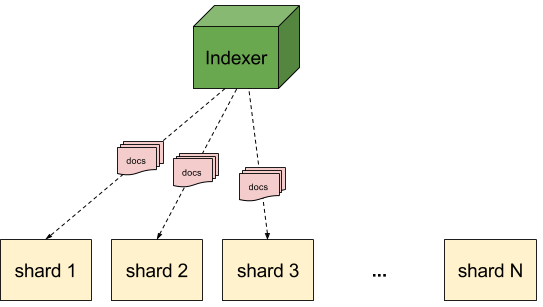

The simplest solution that we can go for is no routing at all. In such cases the data that we index will likely end up in all the shards, so the indexing load will be evenly spread across the cluster:

However, when having a large number of shards the queries end up hitting all the shards:

This may be problematic, especially when a large number of queries meets a large number of shards. In such cases Solr has to aggregate results from many shards, which takes time and hurts performance. In these situations routing may be the best solution, so let’s see what that brings us.

Using Routing

When using routing we tell Solr which shard the data should be placed in. Solr handles the placement automatically, so we only need to prefix the identifier of the document with the routing value and the ! character. For example, if we would like to use a user name as our routing value, we could use a document identifier of user1!12345, where user1 is the user identifier and 12345 is the original identifier of the document. The indexing would look like this:
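As a minimal sketch of the idea (not the author's original listing), the Python below builds routed identifiers and a JSON body that could be posted to a collection's update handler. The helper names and the `name` field are hypothetical and only there for illustration.

```python
import json

def routed_id(tenant, doc_id):
    """Prefix the document id with the routing value and the '!' separator."""
    if "!" in tenant:
        raise ValueError("routing value must not contain '!'")
    return f"{tenant}!{doc_id}"

def update_payload(tenant, docs):
    """Return a JSON array of documents whose ids carry the tenant prefix."""
    routed = [{**doc, "id": routed_id(tenant, doc["id"])} for doc in docs]
    return json.dumps(routed)

# The "name" field is a made-up example field, not part of any real schema.
body = update_payload("user1", [{"id": "12345", "name": "hypothetical document"}])
print(body)
```

The resulting body would be sent as `application/json` to the update handler of the collection; the actual HTTP call is left out so the sketch stays independent of any particular cluster.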

Of course, during query time, we can use the _route_ request parameter and provide the routing value to tell Solr which shard to query:
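A minimal sketch of such a query, assuming a hypothetical collection called `tenants` on a local Solr node: the only routing-specific part is the `_route_` parameter, set to the routing value followed by `!`.

```python
from urllib.parse import urlencode, parse_qs

def routed_query_string(tenant, q="*:*"):
    """Build a query string that limits the search to the tenant's shard."""
    return urlencode({"q": q, "_route_": f"{tenant}!"})

# Host, port and collection name are assumptions made for this example.
base_url = "http://localhost:8983/solr/tenants/select"
query_string = routed_query_string("user1")
print(f"{base_url}?{query_string}")
```

`urlencode` percent-encodes the `!` and `*:*` characters, which is what an HTTP client would do anyway.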

This is not, however, a perfect solution. Very large tenant data will end up in a single shard. This means that some shards may become very large and the queries for such tenants will be slower, even though Solr doesn’t have to aggregate data from multiple shards. For such use cases — when you know the size of your tenants — you can use a modified routing approach in Solr, one that will place the data of such tenants in multiple shards.

Composite Hash Routing

Solr allows us to modify the routing value slightly and provide information on how many bits from the routing key to use (this is possible for the default, compositeId router). For example, instead of providing the routing value of user1!12345, we could use user1/2!12345. The /2 part indicates how many bits to use in the composite hash. In this case we would use 2 bits, which means that the data would go to 1/4 of the shards. If we set it to /3 the data would go to 1/8 of the shards, and so on: n bits spread the data over 1/2^n of the shards.
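The arithmetic above can be sketched in a few lines of Python. The function names are hypothetical, and the shard count is only an approximation that assumes every shard covers an equally sized hash range.

```python
from fractions import Fraction

def shard_fraction(bits):
    """Fraction of the shards a tenant is spread over when /bits is used."""
    if bits < 0:
        raise ValueError("bits must be non-negative")
    return Fraction(1, 2 ** bits)

def approx_shards(bits, num_shards):
    """Rough number of shards for a tenant; never less than one shard."""
    return max(1, round(num_shards * shard_fraction(bits)))

for bits in (1, 2, 3):
    print(bits, shard_fraction(bits), approx_shards(bits, 12))
```

With 12 shards, for instance, /2 works out to about 3 shards for the tenant.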

Given the above scenario, the indexing would look as follows (assuming we have 12 shards):
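A minimal sketch of building such identifiers, with a hypothetical helper that adds the optional /bits part to the routing prefix:

```python
def composite_id(tenant, doc_id, bits=None):
    """Return 'tenant!id', or 'tenant/bits!id' when bits is given."""
    prefix = tenant if bits is None else f"{tenant}/{bits}"
    return f"{prefix}!{doc_id}"

# Three documents of the same large tenant, spread using 2 bits of the tenant hash.
ids = [composite_id("user1", n, bits=2) for n in (12345, 12346, 12347)]
print(ids)
```

These identifiers go into the id field of the indexed documents exactly like the plain routed ones; nothing else in the indexing request needs to change.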

Now we can use multiple shards to handle the indexing load and the problem of very large shards is less visible — or not visible at all (depending on the use case and data).

Of course, the query side also changes. Instead of providing the _route_ request parameter and setting it to user1!, we’ll provide the composite hash, meaning the _route_ parameter value should be user1/2!. In such a case the query time routing would look like this:
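As a sketch under the same assumptions as before (hypothetical helper names), the query parameters can be derived from the tenant and the bit count, and a small check shows that the route value has to match the prefix used at indexing time:

```python
def route_value(tenant, bits=None):
    """Return the _route_ value: 'tenant!' or 'tenant/bits!'."""
    prefix = tenant if bits is None else f"{tenant}/{bits}"
    return f"{prefix}!"

def query_params(tenant, bits=None, q="*:*"):
    """Request parameters for a query limited to the tenant's shards."""
    return {"q": q, "_route_": route_value(tenant, bits)}

def uses_route(doc_id, route):
    """True when a document id was built with the given route prefix."""
    return doc_id.startswith(route)

params = query_params("user1", bits=2)
print(params)
print(uses_route("user1/2!12345", params["_route_"]))
```

Keeping the prefix logic in one place for both indexing and querying is a simple way to avoid querying with user1! while the documents were indexed with user1/2!.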

Again, this is an improvement over the basic routing. We can now query multiple shards and divide the load of processing the query between them, at least for large tenants.

Summary

The nice thing about the provided routing solutions is that they can be combined to work together. For small tenants you can just provide a routing value equal to the user identifier; for medium tenants you can spread the data across a few shards; and for large tenants you can spread it across a larger share of the shards. The best thing about these strategies is that Solr applies them automatically, and one only needs to care about the routing values during indexing and querying.
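One way to combine the approaches is to pick the routing prefix per tenant based on its size. The sketch below is only an illustration: the document-count thresholds and the chosen bit counts are made-up assumptions and would need to be tuned for a real cluster.

```python
def routing_prefix(tenant, doc_count, medium_at=1_000_000, large_at=50_000_000):
    """Pick a routing prefix by tenant size (thresholds are hypothetical)."""
    if doc_count >= large_at:
        return f"{tenant}/2!"   # large tenant: 1/4 of the shards
    if doc_count >= medium_at:
        return f"{tenant}/3!"   # medium tenant: 1/8 of the shards
    return f"{tenant}!"         # small tenant: plain routing

for tenant, count in [("small_co", 20_000), ("mid_co", 5_000_000), ("big_co", 80_000_000)]:
    print(tenant, routing_prefix(tenant, count))
```

The same prefix has to be used for both indexing and querying a given tenant, so moving a tenant to a different tier later would likely mean reindexing its documents.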

Published at DZone with permission of Rafał Kuć, DZone MVB. See the original article here.
