Doris vs Elasticsearch: A Comparison and Practical Cost Case Study

Apache Doris excels in analytics, SQL support, and cost efficiency, while Elasticsearch leads in text search but has higher storage costs and complexity.

In real-time big data analytics and log search, enterprises often have to choose between Elasticsearch and Apache Doris. Elasticsearch is well known for its powerful full-text search and flexible aggregation capabilities.

On the other hand, Apache Doris, with its distributed MPP architecture, columnar storage, and continuously evolving inverted indexing mechanism, shines in complex aggregations and data analysis.

This article delves into a comparison of the two solutions from multiple angles, such as architecture design, data ingestion, query optimization, storage management, functional capabilities, operational complexity, and community activity. Special attention is given to cost. Finally, we will present a real-world case study from the Tencent Music Content Library to illustrate the substantial benefits of replacing Elasticsearch with Doris.

1. Architecture Comparison

Doris

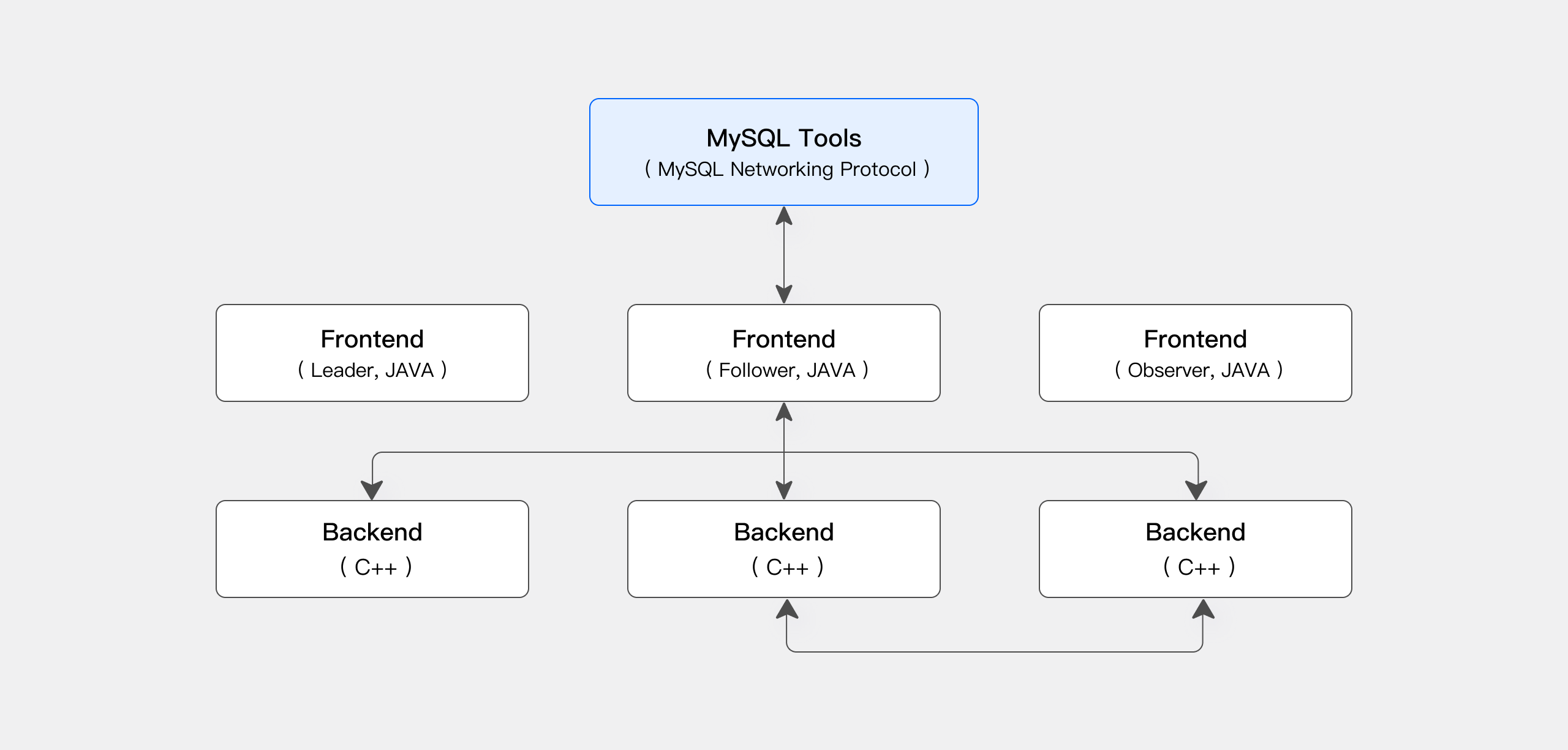

Distributed MPP Architecture and Decoupled Frontend/Backend

Doris separates SQL parsing, optimization, and execution. The frontend is responsible for managing metadata and query scheduling, while the backend focuses on data storage and computation. This design makes it easy to scale, isolate faults, and perform efficient parallel processing.

Elasticsearch

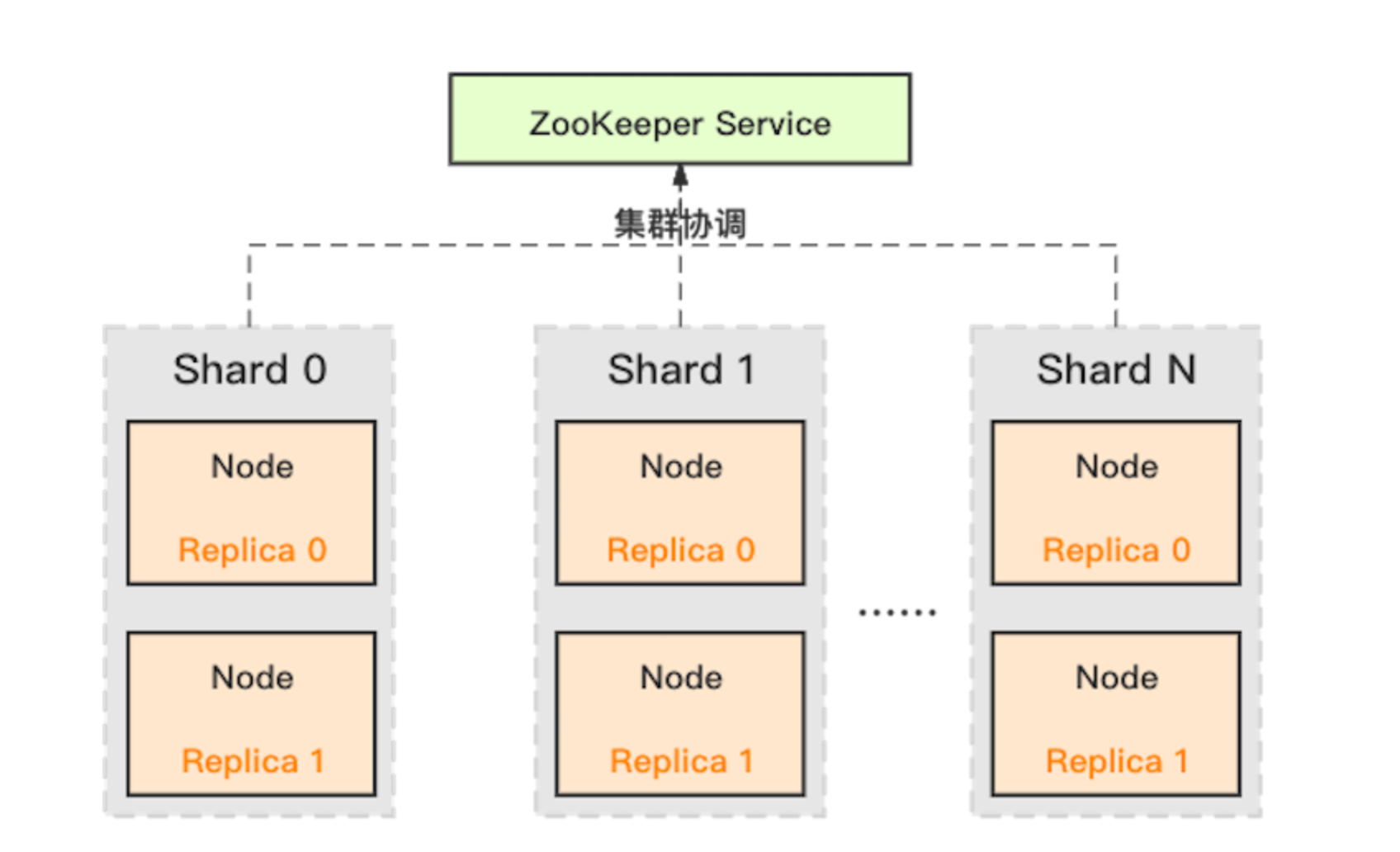

Shard and Replica-Based Distributed Architecture

Elasticsearch uses inverted indexes along with sharding to distribute data and ensure high availability. It is great at handling unstructured data for full-text search. However, its architecture may face difficulties when dealing with complex aggregations and join queries.

Comparison Insight

Both systems run as distributed clusters, but Doris separates responsibilities more clearly between query planning and data computation, which suits complex queries. Elasticsearch, by contrast, places more emphasis on text retrieval.

2. Data Ingestion

Doris

Unified Batch and Streaming Data Ingestion

Doris supports batch loading, streaming synchronization, and real-time writes. It is also MySQL-protocol compatible, which enables seamless integration with existing systems.
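As a rough illustration of the streaming path, the sketch below builds (but does not send) an HTTP request for Doris Stream Load using only the Python standard library. The host name, database, table, credentials, and label are hypothetical placeholders; port 8030 is the default frontend HTTP port and may differ in your deployment.

```python
import base64
import urllib.request


def build_stream_load_request(fe_host, db, table, csv_rows, user, password, label):
    """Build (but do not send) an HTTP PUT request for Doris Stream Load."""
    url = f"http://{fe_host}:8030/api/{db}/{table}/_stream_load"
    # Serialize rows as comma-separated lines, one record per line.
    body = "\n".join(",".join(str(v) for v in row) for row in csv_rows).encode("utf-8")
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    headers = {
        "Authorization": f"Basic {token}",
        "Expect": "100-continue",
        "label": label,              # a unique label lets Doris reject duplicate retries
        "format": "csv",
        "column_separator": ",",
    }
    return urllib.request.Request(url, data=body, headers=headers, method="PUT")


# Hypothetical host, database, table, and credentials, for illustration only.
req = build_stream_load_request(
    "doris-fe.example.internal", "demo_db", "app_logs",
    [(1, "api", "timeout"), (2, "web", "ok")],
    user="loader", password="secret", label="app_logs_batch_0001",
)
print(req.get_method(), req.full_url)
```

In practice the frontend usually redirects the request to a backend node, so a real client has to follow that redirect and resend the credentials (the equivalent of `curl --location-trusted`). The sketch stops before the network call for that reason.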

Elasticsearch

Real-Time Indexing via RESTful API

Data in Elasticsearch is usually ingested through HTTP interfaces, often with the help of tools like Logstash and Beats. However, building and maintaining indexes at scale can cause significant overhead.
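For comparison, here is a minimal sketch of the payload that the Elasticsearch `_bulk` endpoint expects: newline-delimited JSON in which each document is preceded by an action line. The index name and documents are made up for illustration; tools such as Logstash and Beats produce equivalent requests for you.

```python
import json


def build_bulk_body(index_name, docs):
    """Render documents as the NDJSON body expected by POST /_bulk."""
    lines = []
    for doc in docs:
        # Action line: index the following document into index_name.
        lines.append(json.dumps({"index": {"_index": index_name}}))
        # Source line: the document itself.
        lines.append(json.dumps(doc))
    # The bulk body must end with a trailing newline.
    return "\n".join(lines) + "\n"


# Hypothetical index name and documents.
body = build_bulk_body(
    "app-logs",
    [
        {"service": "api", "message": "upstream timeout"},
        {"service": "web", "message": "request ok"},
    ],
)
print(body)
```

The body would be sent with `Content-Type: application/x-ndjson`. Every document written this way is analyzed and added to the index structures at write time, which is where the indexing overhead mentioned above comes from.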

Comparison Insight

Doris's ingestion paths are optimized for OLAP workloads that need high write throughput ahead of complex computation. Elasticsearch, on the other hand, emphasizes making documents searchable in near real time, which means index building happens as part of every write.

3. Query Optimization

Doris

Standard SQL and Built-In Optimizer

Doris is compatible with the MySQL protocol and most MySQL syntax, and it uses a cost-based optimizer to plan parallel queries automatically. This is especially effective for multi-table joins, aggregations, and complex analyses.

Enhanced With Inverted Indexes

Starting from version 2.0, Doris added support for inverted indexes and full-text search, which further reduces response times for queries that filter on text or keywords.
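To give a sense of what this looks like, the sketch below renders a Doris table definition with an inverted index and a keyword query against it. The table and column names are hypothetical, the bucket count and `replication_num` are arbitrary single-node test values, and the SQL is built with plain string formatting purely for illustration (do not do this with untrusted input).

```python
def create_log_table_ddl(table, text_column, parser="english"):
    """Render a CREATE TABLE statement with an inverted index on one text column."""
    return f"""
CREATE TABLE {table} (
    ts DATETIME NOT NULL,
    service VARCHAR(64),
    {text_column} STRING,
    INDEX idx_{text_column} ({text_column}) USING INVERTED PROPERTIES ("parser" = "{parser}")
)
DUPLICATE KEY (ts)
DISTRIBUTED BY HASH (ts) BUCKETS 8
PROPERTIES ("replication_num" = "1");
""".strip()


def keyword_search_sql(table, text_column, keywords):
    """Render a full-text filter; MATCH_ANY keeps rows containing any of the keywords."""
    terms = " ".join(keywords)
    return (
        f"SELECT ts, service, {text_column} FROM {table} "
        f"WHERE {text_column} MATCH_ANY '{terms}' ORDER BY ts DESC LIMIT 100;"
    )


# Hypothetical table and column names.
print(create_log_table_ddl("app_logs", "message"))
print(keyword_search_sql("app_logs", "message", ["timeout", "refused"]))
```

Both statements can be run from any MySQL-compatible client connected to the Doris frontend.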

Elasticsearch

Dedicated JSON-Based DSL

Elasticsearch uses a JSON-based Domain-Specific Language (DSL) that is excellent for keyword matching and text search. But it may be less flexible for complex joins and multi-dimensional aggregations.

Comparison Insight

Doris’s SQL-friendly approach and integrated optimizer allow it to respond more rapidly in complex query scenarios. Elasticsearch, meanwhile, remains strong in text search.
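To make the contrast concrete, the sketch below expresses one question ("which services log the most messages mentioning a timeout?") in both query languages. It assumes a hypothetical `app_logs` table or index with `service` and `message` fields, an inverted index on `message` in Doris, and a `keyword` mapping for `service` in Elasticsearch.

```python
import json

# Doris: standard SQL with a full-text predicate and a GROUP BY.
doris_sql = (
    "SELECT service, COUNT(*) AS hits "
    "FROM app_logs "
    "WHERE message MATCH_ANY 'timeout' "
    "GROUP BY service "
    "ORDER BY hits DESC "
    "LIMIT 10;"
)

# Elasticsearch: the same question as a JSON DSL request body for the _search API.
es_query = {
    "size": 0,  # return only the aggregation, not the matching documents
    "query": {"match": {"message": "timeout"}},
    "aggs": {
        "by_service": {
            # A terms aggregation buckets by field value, largest buckets first.
            "terms": {"field": "service", "size": 10}
        }
    },
}

print(doris_sql)
print(json.dumps(es_query, indent=2))
```

For a single-table aggregation like this, the two forms are roughly equivalent. The gap widens once the question needs a join against another table, which SQL expresses directly and the DSL does not.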

4. Storage Management

Doris

Columnar Storage and High Compression

Data in Doris is stored by column and supports efficient compression algorithms like ZSTD. It often achieves compression ratios of 5 to 10 times, significantly reducing storage costs.

Real-Time Update Support

Doris supports updates and deletions, making it suitable for scenarios where records change after they are written and queries must see the latest values.

Elasticsearch

Inverted Index and Redundant Storage

In addition to storing the raw data, Elasticsearch maintains inverted indexes and other auxiliary structures (such as stored fields for forward lookups and doc values as a column-oriented copy), which increases storage space requirements.

Comparison Insight

Doris offers better storage efficiency, especially for large-scale data. Elasticsearch’s redundant design helps with retrieval but comes at the cost of higher storage usage.

5. Functional Capabilities

Doris

Standard SQL Interface and Extensibility

Doris is compatible with the MySQL protocol and supports JDBC/ODBC for integration with various BI tools. It allows near-instant schema changes (adding, dropping, or modifying fields and indexes) and provides a VARIANT data type that automatically expands JSON fields.

Advanced Query and Analytics Features

It provides rich aggregation queries, pre-aggregation, multi-table joins, subqueries, window functions, logical and materialized views, and SQL UDFs. It also supports external data lake tables (e.g., Hive, Iceberg, Hudi, Paimon).

Diverse Index Support

Besides text inverted and BKD (multi-dimensional numerical) indexes, it supports sparse primary key indexes, BloomFilter (skipping) indexes, and Ngram BloomFilter indexes to meet complex query needs.

Elasticsearch

Dedicated DSL and Dynamic Mapping

Elasticsearch uses a JSON-based DSL for queries and supports dynamic mapping to auto-expand JSON fields. However, it does not support changing the type of an existing field: once a field is mapped, changing its type means creating a new index and reindexing the data.
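The usual workaround for a field type change in Elasticsearch is to create a new index with the desired mapping and copy the data over with the `_reindex` API. The sketch below only builds the two request bodies; the index names and the field are hypothetical.

```python
import json


def build_type_change_requests(old_index, new_index, field, new_type):
    """Return the (method, path, body) requests needed to change one field's type."""
    # Step 1: create the new index with the field mapped to the new type.
    create_body = {"mappings": {"properties": {field: {"type": new_type}}}}
    # Step 2: copy every document from the old index into the new one.
    reindex_body = {"source": {"index": old_index}, "dest": {"index": new_index}}
    return [
        ("PUT", f"/{new_index}", create_body),
        ("POST", "/_reindex", reindex_body),
    ]


# Hypothetical index names and field.
for method, path, body in build_type_change_requests(
    "app-logs-v1", "app-logs-v2", "status", "keyword"
):
    print(method, path, json.dumps(body))
```

Reindexing rewrites the full data set, so on large indexes this is a planned migration rather than a quick schema edit.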

Focus on Full-Text Search

Its powerful text-inverted indexes and BKD numerical indexes offer excellent keyword search and simple aggregation performance, but it may lack advanced SQL analytics capabilities.

Comparison Insight

Doris’s functional design is open and flexible, covering everything from standard SQL and dynamic schema changes to advanced aggregation and analytics. It is a comprehensive solution for complex data analysis. Elasticsearch, in contrast, is more specialized in text retrieval and basic aggregations.

6. Operational Complexity

Doris

Simplified Operations Through Decoupled Design

Doris has integrated monitoring and logging systems, and it supports automatic scaling and fault recovery, which reduces overall operational complexity.

Unified Platform Management

It combines data ingestion, computation, and storage in one system, minimizing the need to coordinate across multiple platforms.

Elasticsearch

Challenging Cluster Tuning

Elasticsearch requires significant expertise for sharding, replica configuration, index mapping, and cross-node data balancing, which increases operational overhead.

Multi-System Coordination Issues

It often needs to be maintained together with other systems (e.g., separate data warehouses), further complicating management.

Comparison Insight

Doris’s more centralized and integrated approach significantly reduces operational costs. Elasticsearch’s tuning and multi-system management require higher expertise and more resources.

7. Community Activity

Doris

Rapidly Growing Open-Source Community

Although relatively new, Doris’s community has been growing rapidly, with an increasing number of case studies, documentation, and plugins.

Elasticsearch

Mature and International Community

Elasticsearch has a large global developer ecosystem and extensive third-party support. However, some advanced features and commercial support require paid licenses.

Comparison Insight

Both communities have their own strengths. Elasticsearch’s community is well-established and global, while Doris’s community is expanding quickly, driven by wide adoption among leading enterprises worldwide.

8. Cost Comparison

When choosing a big data platform, the cost includes not only hardware investment but also operational expenses, development efficiency, and storage costs.

Storage Costs

Doris’s columnar storage and high compression typically reduce storage space requirements by 60% to 80%, significantly lowering hardware and cloud storage expenses. Elasticsearch’s need to maintain multiple index structures usually results in higher storage consumption.
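Storage savings are quoted in two forms in this article: as a compression ratio (5 to 10 times) and as a percentage reduction (60% to 80%). The small sketch below converts between the two so the figures can be compared on one scale. It is plain arithmetic and assumes both figures are measured against the same baseline, which the article does not state.

```python
def reduction_from_ratio(ratio):
    """Fraction of space saved when data shrinks by `ratio` (e.g. 5 means 5:1)."""
    return 1 - 1 / ratio


def ratio_from_reduction(reduction):
    """Compression ratio implied by saving `reduction` of the original space."""
    return 1 / (1 - reduction)


for ratio in (5, 10):
    print(f"{ratio}:1 compression saves {reduction_from_ratio(ratio):.0%} of the space")

for reduction in (0.6, 0.8):
    print(f"a {reduction:.0%} reduction corresponds to {ratio_from_reduction(reduction):.1f}:1")
```

A 5:1 ratio is an 80% reduction and 10:1 is a 90% reduction, while a 60% reduction corresponds to 2.5:1. The percentage range is therefore the more conservative of the two figures, which would be consistent with it being measured against a different baseline (for example, an existing Elasticsearch footprint rather than raw data).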

Computational Resource Consumption

Doris's backend is implemented in C++ with a vectorized execution engine, leading to lower CPU and memory usage. On equivalent hardware, Doris can reach about four times the write speed and reduce query latency by over 50%, thereby reducing the need for high-end servers.

Operational and Development Costs

Doris’s SQL-friendly interface and unified management simplify development and operations. Elasticsearch’s DSL and complex tuning add labor and expertise costs.

Overall Cost Reduction

Taken together, replacing Elasticsearch with Doris can cut combined hardware, storage, and maintenance costs by more than 50%.

9. Practical Case Study: Tencent Music Content Library

Background

Tencent Music Content Library stores and analyzes billions of music-related records, including song details, artist information, album data, and label metadata. As the business grew, the original Elasticsearch-based search and analytics architecture started to show problems.

There were high index maintenance costs, long query response times, and insufficient support for complex aggregations. To reduce resource usage and enhance real-time analytics capabilities, Tencent Music integrated Apache Doris in key scenarios to replace parts of the Elasticsearch components.

Outcomes

Resource and Cost Advantages

After adopting Doris, the number of required servers decreased significantly. Overall, CPU and memory usage were reduced by about 50%, and storage space requirements were cut by nearly 70% compared to Elasticsearch.
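How these per-resource reductions translate into an overall saving depends on how the original bill was split between compute and storage. The sketch below blends the two reported reductions using a cost split that is purely hypothetical (60% compute, 40% storage); the case study does not publish the real split.

```python
def blended_reduction(cost_shares, reductions):
    """Weight each resource's reduction by its share of the original cost."""
    total = sum(cost_shares.values())
    return sum(cost_shares[name] / total * reductions[name] for name in cost_shares)


# Reductions reported in the case study.
reductions = {"compute": 0.50, "storage": 0.70}

# Hypothetical split of the original Elasticsearch bill; not from the case study.
cost_shares = {"compute": 0.60, "storage": 0.40}

overall = blended_reduction(cost_shares, reductions)
print(f"Blended cost reduction: {overall:.0%}")
```

With this assumed split the blended reduction comes to about 58%. Because both reported reductions are at least 50%, any split between compute and storage gives a blended figure between 50% and 70%.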

Enhanced Query and Write Performance

For real-time log and content data ingestion, Doris achieved a 3 to 5 times increase in write speed. Complex aggregation query response times were reduced from several seconds to sub-second levels, effectively meeting the needs for real-time monitoring and rapid analysis.

Improved Operational Efficiency

The unified Doris platform combined data ingestion, computation, and storage processes, greatly simplifying the overall system architecture and reducing the complexity of maintaining data consistency across multiple systems.

Case Study Conclusion

Tencent Music Content Library’s experience shows that adopting Apache Doris can improve query performance and data processing capability while substantially reducing hardware and storage costs. For end users, this translates into a smoother and more efficient digital music experience.

10. Summary and Recommendations

Summary

From an Architectural and Technical Perspective

Apache Doris combines a distributed MPP architecture with columnar storage, a design aimed squarely at complex queries and large-scale data analysis. It is especially good at high-throughput data ingestion and real-time querying.

Cost Efficiency

Doris can reduce combined hardware, storage, and maintenance costs by over 50% compared to Elasticsearch.

Real-World Impact

As shown in the Tencent Music Content Library case, replacing Elasticsearch components with Doris has solved performance bottlenecks in high-data-volume environments, improved system stability, and reduced overall costs.

Recommendation

For enterprises that need to balance complex aggregation queries, real-time data updates, and low storage costs, and that want to simplify operations with a unified platform, Apache Doris is a very attractive solution. We recommend testing Doris in a controlled environment, evaluating its performance and cost benefits, and then expanding it gradually across the business.

Opinions expressed by DZone contributors are their own.
