gRPC vs REST: Comparing Approaches for Making APIs

gRPC and REST are commonly used approaches for creating APIs. Find the best fit for your application as you take a closer look at their characteristics.


In today’s text, I want to take a closer look at gRPC and REST, probably two of the most commonly used approaches for creating APIs nowadays.

I will start with a short description of both tools: what they are and what they can offer. Then I will compare them according to seven categories that are, in my opinion, the most crucial for modern-day systems.

The categories go as follows:

- Underlying HTTP protocols

- Supported data formats

- Data size

- Throughput

- Definitions

- Ease of adoption

- Tooling support

The Why

When people hear "API," they probably think of REST API right away. However, REST is only one of many approaches for building APIs, and it is not a silver bullet for all use cases. There are other ways, RPC (Remote Procedure Call) being just one of them, and gRPC is probably the most successful framework for working with RPC.

Despite being a quite mature and efficient technology, gRPC is still regarded as a new one. Thus, it is less widely adopted than REST, even though it is quite handy in some use cases.

My main reason for writing this blog post is to popularize gRPC and point out use cases where it can shine.

What Is REST?

REST, or Representational State Transfer, is probably the most common way of creating an application that exposes any type of API. It uses HTTP as the underlying communication medium. Because of that, it can benefit from all the advantages of HTTP, like caching.

Moreover, being stateless by design, REST allows for easy separation between client and server. The client only needs to know the interface exposed by the server to communicate with it effectively and does not depend on the server implementation in any way. Communication between client and server follows a request-response model, with each request being a classic HTTP request.

REST is neither a protocol nor a tool (to a degree): it is an architectural approach to building applications. Services that follow the REST approach are called RESTful services. As an architecture, it imposes several constraints upon its users, in particular:

- Client-server communication

- Stateless communication

- Caching

- Uniform interface

- Layered system

- Code on demand (optional)

The two crucial concepts of REST are:

- Endpoints: A unique URL (Uniform Resource Locator) representing a specific resource; can be viewed as a way to access a particular operation or data element over the Internet

- Resource: A particular piece of data available under a specific URL

Furthermore, there is the Richardson Maturity Model, a model describing the degree of “professionalism” of REST APIs. It divides REST APIs into 3 levels (or 4, depending on whether you count level 0) based on the set of traits a particular API has.

One such trait is that REST endpoints should use nouns in the URL and the correct HTTP request methods to manage their resources.

- Example: DELETE user/1 instead of GET user/deleteById/1

As for HTTP methods and their associated actions, it goes like this:

- GET — Retrieve a specific resource or a collection of resources

- POST — Create a new resource

- PUT — Upsert (create or replace) a whole resource

- PATCH — Partial update of a specific resource

- DELETE — Remove a specific resource by id
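To make the method-to-action mapping concrete, here is a minimal Python sketch of a hypothetical `/users` resource kept in memory. The `handle` function and its status codes are illustrative assumptions standing in for what an HTTP framework would do for us; note how the noun-based path stays the same and only the method changes the action.

```python
from typing import Any, Dict, Optional, Tuple

# Hypothetical in-memory "users" collection standing in for a database.
users: Dict[int, Dict[str, Any]] = {}
next_id = 1


def handle(method: str, path: str,
           body: Optional[Dict[str, Any]] = None) -> Tuple[int, Any]:
    """Map an HTTP method on /users or /users/{id} to an action.
    Returns (status code, response body)."""
    global next_id
    parts = [p for p in path.split("/") if p]
    # Only /users and /users/{numeric id} exist; verbs in the URL are not routes.
    if not parts or parts[0] != "users" or len(parts) > 2:
        return 404, None
    if len(parts) == 2 and not parts[1].isdigit():
        return 404, None
    user_id = int(parts[1]) if len(parts) == 2 else None
    # The id always comes from the URL or the server, never from the body.
    fields = {k: v for k, v in (body or {}).items() if k != "id"}

    if method == "GET" and user_id is None:
        return 200, list(users.values())              # retrieve the collection
    if method == "POST" and user_id is None:
        users[next_id] = {**fields, "id": next_id}    # create; server assigns the id
        next_id += 1
        return 201, users[next_id - 1]
    if user_id is None:
        return 405, None                              # PUT/PATCH/DELETE need an id
    if method == "PUT":
        created = user_id not in users
        users[user_id] = {**fields, "id": user_id}    # upsert the whole resource
        return (201 if created else 200), users[user_id]
    if user_id not in users:
        return 404, None
    if method == "GET":
        return 200, users[user_id]                    # retrieve one resource
    if method == "PATCH":
        users[user_id].update(fields)                 # partial update
        return 200, users[user_id]
    if method == "DELETE":
        del users[user_id]                            # remove by id
        return 204, None
    return 405, None
```

For example, `handle("DELETE", "/users/1")` removes the user, while `handle("GET", "/users/deleteById/1")` is simply a 404: the action lives in the method, not in the URL.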

The maturity model specifies a lot more than that, for example, a concept called hypermedia (HATEOAS). Hypermedia binds together the presentation of data and control over the actions clients can take.
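A minimal sketch of the hypermedia idea, assuming a hypothetical user resource: the server embeds links to the actions that are currently allowed, so the client discovers what it can do next instead of hard-coding URLs. The `_links` layout is loosely inspired by the HAL convention; the `method` field and the `/activation` and `/deactivation` paths are our own assumptions.

```python
from typing import Any, Dict


def user_representation(user_id: int, name: str, active: bool) -> Dict[str, Any]:
    """Return the user's data plus links to the actions available right now.
    Link names and paths are hypothetical, chosen only for illustration."""
    links: Dict[str, Dict[str, str]] = {
        "self": {"href": f"/users/{user_id}", "method": "GET"},
    }
    # The server decides which state transitions are allowed; the client
    # only follows the links it receives.
    if active:
        links["deactivate"] = {"href": f"/users/{user_id}/deactivation", "method": "POST"}
    else:
        links["activate"] = {"href": f"/users/{user_id}/activation", "method": "POST"}
    links["delete"] = {"href": f"/users/{user_id}", "method": "DELETE"}
    return {"id": user_id, "name": name, "active": active, "_links": links}
```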

A full description of the maturity model is out of the scope of this blog — you can read more about it here.

Disclaimer: many things mentioned in this section are more nuanced than described here. REST is quite a huge topic and worth a whole series of articles. Nevertheless, everything here is in accordance with commonly known REST best practices.

What Is gRPC?

gRPC is yet another implementation of the relatively old concept of Remote Procedure Call. People from Google built it, which is why it has a "g" in its name. It is probably the most modern and efficient tool for working with RPCs and also a CNCF incubation project.

gRPC uses Google’s Protocol Buffers as its serialization format and HTTP/2 as its transport medium, though it can also work with JSON as the data layer.

The basic building blocks of gRPC include:

- Method: The basic building block of gRPC, each method is a remote procedure call that takes some input and returns output. It performs a single operation implemented further in the programming language of choice. As for now, gRPC supports 4 types of methods:

- Unary: Classic request-response model where the method takes input and returns output

- Server Streaming: The method accepts a single message as input and returns a stream of messages as output. gRPC guarantees message ordering within an individual RPC call.

- Client Streaming: The method takes a stream of messages as input, processes them until no messages are left, and then returns a single message as output. Similar to the above, gRPC guarantees message ordering within an individual RPC call.

- Bidirectional Streaming: The method takes a stream as input and returns a stream as output, effectively using two streams, one for reading and one for writing. Both streams operate independently, and message ordering is preserved at the stream level.

- Service: Represents a group of methods; each method must have a unique name within the service. Services also describe features like security, timeouts, or retries.

- Message: An object that represents the input or output of methods.
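The four method types map naturally onto plain function shapes. The sketch below uses a hypothetical calculator service in Python, with a single value standing for one message and an iterator standing for a stream. Real gRPC servers implement generated classes instead, but the request/response shapes follow the same idea.

```python
from typing import Iterable, Iterator

# Hypothetical calculator service: an int stands for one message,
# an iterator of ints stands for a stream of messages.


def square(n: int) -> int:
    """Unary: one request message in, one response message out."""
    return n * n


def count_up_to(n: int) -> Iterator[int]:
    """Server streaming: one request in, an ordered stream of responses out."""
    for i in range(1, n + 1):
        yield i


def total(numbers: Iterable[int]) -> int:
    """Client streaming: consume the whole request stream, then reply once."""
    result = 0
    for x in numbers:
        result += x
    return result


def running_total(numbers: Iterable[int]) -> Iterator[int]:
    """Bidirectional streaming: responses are produced while requests still
    arrive. Here each reply depends on the requests seen so far; in gRPC the
    two streams are independent, so any reply pattern is possible."""
    acc = 0
    for x in numbers:
        acc += x
        yield acc
```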

gRPC API definitions are written in .proto files, which contain all three basic building blocks from above. Additionally, the protocol buffer compiler (protoc), together with gRPC's code generation plugins, generates client and server code from our .proto files.

We can implement server-side methods however we want, as long as we stick to the input-output contract of the API.

On the client side, there is an object called a client (or stub), similar to an HTTP client. It knows all the methods exposed by the server and handles calling the remote procedures and returning their responses.
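The split between a server-side implementation and a client-side stub can be sketched without any gRPC dependency. Everything here is a hypothetical stand-in: `GreeterServicer` plays the role of a generated server base class, `GreeterStub` the generated client, and a plain function acts as the channel. The method path follows gRPC's `/package.Service/Method` convention (the names come from the gRPC "helloworld" quickstart), and JSON replaces Protobuf only to keep the example within the standard library.

```python
import json
from abc import ABC, abstractmethod
from typing import Callable, Dict


class GreeterServicer(ABC):
    """Hypothetical stand-in for a generated server base class: the contract
    (method name, input, output) is fixed, the body is ours to implement."""

    @abstractmethod
    def say_hello(self, request: dict) -> dict: ...


class MyGreeter(GreeterServicer):
    def say_hello(self, request: dict) -> dict:
        return {"message": f"Hello, {request['name']}!"}


# A hypothetical "channel": takes a method path and request bytes, returns
# response bytes. Real gRPC carries these bytes over HTTP/2 instead.
Channel = Callable[[str, bytes], bytes]


def in_process_channel(servicer: GreeterServicer) -> Channel:
    routes: Dict[str, Callable[[dict], dict]] = {
        "/helloworld.Greeter/SayHello": servicer.say_hello,
    }

    def call(path: str, payload: bytes) -> bytes:
        response = routes[path](json.loads(payload))
        return json.dumps(response).encode()

    return call


class GreeterStub:
    """Client-side stub: knows the remote method names and hides
    serialization and transport behind an ordinary method call."""

    def __init__(self, channel: Channel) -> None:
        self._channel = channel

    def say_hello(self, request: dict) -> dict:
        raw = self._channel("/helloworld.Greeter/SayHello", json.dumps(request).encode())
        return json.loads(raw)
```

Swapping `in_process_channel` for a network transport would leave both `MyGreeter` and the calling code untouched, which is the point of the generated stub.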

The Comparison

Underlying HTTP Protocol

This is the first category and probably the most important one, as its influence is also visible in the others.

In general, REST is request-response based and uses HTTP/1.1 as its transport medium. For streaming or other long-lived connections, we have to use a different protocol, like WebSocket (more about them here).

We can also write some hacky code to make REST look like streaming. What is more, with HTTP/1.1 a connection can carry only one request-response exchange at a time (keep-alive lets us reuse it, but not for concurrent exchanges), so parallel requests need parallel connections. Such an approach may be problematic for long-running requests or when we have limited network capabilities.

Of course, we can use HTTP/2 for building REST-like APIs; however, some servers and libraries may not support HTTP/2 yet, so problems may arise in other places.

gRPC, on the other hand, uses HTTP/2 only, which allows sending multiple request-response pairs concurrently through a single TCP connection. Such an approach can give our application quite a significant performance boost.
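To see how a single connection can carry several exchanges, here is a simplified Python sketch of HTTP/2 framing as described in RFC 9113: every frame starts with a 9-byte header holding its length, type, flags, and stream identifier, so frames from different streams can be interleaved on one connection and reassembled per stream. As a simplifying assumption, the sketch only handles DATA frames and skips the connection preface, SETTINGS, and the HPACK-compressed HEADERS frames a real client must send.

```python
import struct
from typing import Dict, List, Tuple

DATA = 0x0        # frame type code for DATA frames (RFC 9113)
END_STREAM = 0x1  # DATA flag: the sender will send nothing more on this stream
# 24-bit length split into 8 + 16 bits, then type, flags, 32-bit stream id field.
HEADER = struct.Struct(">BHBBI")


def encode_frame(frame_type: int, flags: int, stream_id: int, payload: bytes) -> bytes:
    """Encode one frame: a 9-byte header followed by the payload."""
    length = len(payload)
    # The top bit of the stream id field is reserved and sent as 0.
    return HEADER.pack(length >> 16, length & 0xFFFF, frame_type, flags,
                       stream_id & 0x7FFFFFFF) + payload


def decode_frames(data: bytes) -> List[Tuple[int, int, int, bytes]]:
    """Split a connection's byte stream into (type, flags, stream_id, payload)."""
    frames, offset = [], 0
    while offset < len(data):
        hi, lo, frame_type, flags, stream_id = HEADER.unpack_from(data, offset)
        start = offset + HEADER.size
        end = start + ((hi << 16) | lo)
        frames.append((frame_type, flags, stream_id & 0x7FFFFFFF, data[start:end]))
        offset = end
    return frames


def reassemble(data: bytes) -> Dict[int, bytes]:
    """Group interleaved DATA payloads back into one body per stream."""
    bodies: Dict[int, bytes] = {}
    for frame_type, _flags, stream_id, payload in decode_frames(data):
        if frame_type == DATA:
            bodies[stream_id] = bodies.get(stream_id, b"") + payload
    return bodies


# Two requests' bodies interleaved on one connection (streams 1 and 3).
wire = (encode_frame(DATA, 0, 1, b"A1") + encode_frame(DATA, 0, 3, b"B1")
        + encode_frame(DATA, END_STREAM, 3, b"B2") + encode_frame(DATA, END_STREAM, 1, b"A2"))
print(reassemble(wire))  # {1: b'A1A2', 3: b'B1B2'}
```

Client-initiated streams use odd identifiers (1, 3, 5, ...), which is why two concurrent requests show up here as streams 1 and 3.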

- Result: Slight win for gRPC

Supported Data Formats

Assuming the default case, where a REST API uses HTTP/1.1, it can support many formats.

REST generally does not impose any restrictions on message format and style. Essentially every format that can be serialized to plain old text is valid. We can use any format that suits us best in a particular scenario.

The most popular format for sending data in REST applications is definitely JSON. XML comes second because of the large number of older/legacy applications.

When using REST with HTTP/2, the protocol framing becomes binary, but the payload can still be JSON, XML, or any other format. We can also opt for a binary exchange format, such as Protobuf or Avro. Of course, such an approach can have its drawbacks, but more on this in the following points.

Meanwhile, gRPC supports only two formats for exchanging data:

- Protobuf — By default

- JSON — When you need to integrate with an older API

If you decide to try JSON, gRPC will use it as the encoding format for messages, with GSON (a Java JSON library) handling message serialization. Moreover, using JSON will require some additional configuration. Here is the gRPC documentation on how to do that.
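Whichever encoding is chosen, gRPC wraps each message in the same length prefix, defined in its HTTP/2 protocol specification: a 1-byte compressed flag, a 4-byte big-endian length, and then the encoded bytes. Below is a minimal Python sketch of that framing, assuming uncompressed messages; the payload bytes are opaque to it, so they can be Protobuf or JSON.

```python
import struct
from typing import Iterator, Tuple

PREFIX = struct.Struct(">BI")  # 1-byte compressed flag + 4-byte big-endian length


def frame_message(payload: bytes, compressed: bool = False) -> bytes:
    """Prefix one already-encoded message for the wire. This sketch never
    compresses; the flag only records what the caller claims."""
    return PREFIX.pack(1 if compressed else 0, len(payload)) + payload


def read_messages(body: bytes) -> Iterator[Tuple[bool, bytes]]:
    """Split a request/response body back into (compressed, payload) pairs."""
    offset = 0
    while offset < len(body):
        flag, length = PREFIX.unpack_from(body, offset)
        offset += PREFIX.size
        yield bool(flag), body[offset:offset + length]
        offset += length
```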

- Result: Win for REST, as it supports more formats.

Data Size

By default, gRPC uses a binary data exchange format, which greatly reduces the size of messages sent over the network: research suggests around 40–50% fewer bytes, and my experience from one of my previous projects suggests even 50–70% less.

The article above provides a relatively in-depth size comparison between JSON and Protobuf. The author also provides a tool for generating JSON and binary files, so you can re-run his experiments and compare the results.

The objects from the article are reasonably simple. Still, the general rule is: the more nested objects and the more complex the JSON structure, the heavier it will be compared to Protobuf. A 50% difference in size in favor of Protobuf is a good baseline.
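A hand-rolled sketch of the Protobuf wire format shows where the savings come from: fields are identified by small numeric tags instead of repeated key names, and integers use a variable-length encoding. The `User` message below (`id = 1`, `name = 2`) is a hypothetical example, and the byte counts describe only this tiny message, not a benchmark.

```python
import json


def encode_varint(value: int) -> bytes:
    """Protobuf base-128 varint: 7 bits per byte, high bit set on all but the last."""
    if value < 0:
        raise ValueError("negative values are out of scope for this sketch")
    out = bytearray()
    while True:
        byte = value & 0x7F
        value >>= 7
        if value:
            out.append(byte | 0x80)
        else:
            out.append(byte)
            return bytes(out)


def encode_user(user_id: int, name: str) -> bytes:
    """Hand-encode a hypothetical `message User { int32 id = 1; string name = 2; }`."""
    name_bytes = name.encode("utf-8")
    return (
        encode_varint((1 << 3) | 0) + encode_varint(user_id)            # field 1, wire type 0 (varint)
        + encode_varint((2 << 3) | 2) + encode_varint(len(name_bytes))  # field 2, wire type 2 (length-delimited)
        + name_bytes
    )


pb = encode_user(150, "Alice")
js = json.dumps({"id": 150, "name": "Alice"}, separators=(",", ":")).encode()
print(len(pb), len(js))  # 10 25
```

Even against compact JSON, the 10-byte Protobuf encoding is well under half the size, mostly because the JSON repeats the `"id"` and `"name"` keys in every message.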

The difference can be minimized or eliminated while utilizing the binary exchange format for REST. Nevertheless, it is not the most common nor the best-supported way of doing RESTful APIs, so other problems may arise.

- Result: In the default case, victory for gRPC; in the case of both using binary data format, a draw.

Throughput

Again, in the case of REST, everything depends on the underlying HTTP protocol and server.

In the default case of REST over HTTP/1.1, even the most performant server will not be able to beat gRPC performance, especially once we add the serialization and deserialization overhead of JSON. When we switch to HTTP/2, though, the difference seems to shrink.

As for maximum throughput, HTTP is the transport medium in both cases, so neither approach has a limit by design. Everything depends on the tools we use and on what exactly our application does.

- Result: In the default case, gRPC; in the case of both using binary data and HTTP/2, draw or slight victory for gRPC.

Definitions

In this part, I will describe how we define our messages and services in both approaches.

In most REST applications, we just declare our requests and responses as classes, objects, or whatever structure a particular language supports. Then we rely on provided libraries for serializing and deserializing JSON/XML/YAML, or whatever format we need.
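In Python, for instance, this approach might look like the sketch below: plain dataclasses act as the request and response definitions, and the standard `json` module does the (de)serialization. The class and field names are hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class CreateUserRequest:   # hypothetical request definition
    name: str
    email: str


@dataclass
class UserResponse:        # hypothetical response definition
    id: int
    name: str
    email: str
    roles: List[str]


def parse_request(raw: str) -> CreateUserRequest:
    # The class itself is the "definition"; unknown or missing keys raise TypeError.
    return CreateUserRequest(**json.loads(raw))


def render_response(user: UserResponse) -> str:
    # asdict keeps the field order, so the JSON keys follow the class definition.
    return json.dumps(asdict(user))
```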

Moreover, there are ongoing efforts to create tools capable of generating code in the programming language of choice from Swagger (OpenAPI) definitions. However, they seem to be at an alpha stage, so some bugs and minor problems may still make them difficult to use.

There is little difference between binary and non-binary formats for REST applications, as the rule is more or less the same in both cases. For binary format, we just define everything in a way required by a particular format.

Additionally, we define our REST service via methods or annotations from the underlying library or framework. That tool is then responsible for exposing the service, along with other configuration, to the outside world.

In the case of gRPC, we have Protobuf as default and de facto the only way of writing definitions. We have to declare everything: messages, services, and methods in .proto files, so the matter is pretty straightforward.

Then we use the gRPC-provided tooling to generate code for us, and we just have to implement our methods. After that, everything should work as intended.

Moreover, Protobuf supports imports, so we can spread our definitions across multiple files in a reasonably simple way.

- Result: No winner here, just the description and a tip from me: pick whichever approach suits you the most.

Ease of Adoption

In this part, I will compare the library/framework support for each approach in modern-day programming languages.

In general, every programming language (Java, Scala, Python) I came across in my short career as a software engineer has at least 3 major libraries/frameworks for creating REST-like applications, not to mention a similar number of libraries for parsing JSON into objects/classes.

Additionally, because REST uses human-readable formats by default, it is easier for newcomers to debug and work with. This can also improve the pace of delivering new features and help you fight bugs appearing in your code.
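The readability gap is easy to see when you try to inspect a Protobuf payload without its `.proto` file. A small Python sketch of a raw wire-format dump (similar in spirit to `protoc --decode_raw`) recovers only field numbers, wire types, and values, because field names are not on the wire. As a simplifying assumption, only varint and length-delimited fields are handled.

```python
from typing import List, Tuple, Union


def decode_varint(data: bytes, pos: int) -> Tuple[int, int]:
    """Read a base-128 varint starting at pos; return (value, next position)."""
    result, shift = 0, 0
    while True:
        byte = data[pos]
        result |= (byte & 0x7F) << shift
        pos += 1
        if not byte & 0x80:
            return result, pos
        shift += 7


def dump_fields(data: bytes) -> List[Tuple[int, int, Union[int, bytes]]]:
    """List (field number, wire type, value) for each field in a message."""
    fields: List[Tuple[int, int, Union[int, bytes]]] = []
    pos = 0
    while pos < len(data):
        key, pos = decode_varint(data, pos)
        field_number, wire_type = key >> 3, key & 0x7
        if wire_type == 0:    # varint
            value, pos = decode_varint(data, pos)
        elif wire_type == 2:  # length-delimited (strings, bytes, nested messages)
            length, pos = decode_varint(data, pos)
            value, pos = data[pos:pos + length], pos + length
        else:
            raise ValueError(f"wire type {wire_type} not handled in this sketch")
        fields.append((field_number, wire_type, value))
    return fields
```

Applied to the bytes `08 96 01 12 05 41 6c 69 63 65`, it yields `[(1, 0, 150), (2, 2, b"Alice")]`: the values survive, but whether field 1 is an id, a count, or a price is only known to someone holding the schema.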

Long story short, support for REST-style applications is at least very good.

In Scala, we even have a library called tapir, which I had the pleasure of co-maintaining for some time. Tapir allows us to abstract over our HTTP server and write endpoints that work with several servers.

gRPC itself provides client libraries for over 8 popular programming languages. They are usually enough, as they contain everything required to build a gRPC API. Additionally, I am aware of libraries providing higher-level abstractions for Java (via a Spring Boot Starter) and for Scala.

Another thing is that REST is considered today a worldwide standard and an entry point for building services, while RPC and gRPC, in particular, are still viewed as a novelty despite being somewhat old at this point.

- Result: REST, as it is more widely adopted and has many more libraries and frameworks around it

Tooling Support

Libraries, frameworks, and general market share were covered above, so in this part, I would like to cover the tooling around both styles, meaning tools for tests, performance/stress tests, and documentation.

Automated Tests

First, in the case of REST, tools for building automated tests are built into different libraries and frameworks or are separate tools built with this sole purpose, like REST-assured.
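REST-assured is a Java library, so as a language-neutral illustration here is a Python standard-library sketch of the same kind of end-to-end API test: a tiny hypothetical `/users/1` endpoint is started on a free local port, and `unittest` checks the status code, content type, and JSON body over real HTTP.

```python
import json
import threading
import unittest
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class UserHandler(BaseHTTPRequestHandler):
    """Hypothetical REST endpoint: GET /users/1 returns a JSON user."""

    def do_GET(self) -> None:
        if self.path == "/users/1":
            body = json.dumps({"id": 1, "name": "Alice"}).encode()
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)
        else:
            self.send_error(404)

    def log_message(self, format: str, *args) -> None:
        pass  # keep test output quiet


class UserApiTest(unittest.TestCase):
    @classmethod
    def setUpClass(cls) -> None:
        cls.server = HTTPServer(("127.0.0.1", 0), UserHandler)  # port 0: pick a free port
        cls.base = f"http://127.0.0.1:{cls.server.server_port}"
        # Bypass any proxy configured in the environment for local requests.
        cls.opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
        threading.Thread(target=cls.server.serve_forever, daemon=True).start()

    @classmethod
    def tearDownClass(cls) -> None:
        cls.server.shutdown()
        cls.server.server_close()

    def test_get_user(self) -> None:
        with self.opener.open(f"{self.base}/users/1") as resp:
            self.assertEqual(resp.status, 200)
            self.assertEqual(resp.headers["Content-Type"], "application/json")
            self.assertEqual(json.loads(resp.read()), {"id": 1, "name": "Alice"})

    def test_unknown_user_is_404(self) -> None:
        with self.assertRaises(urllib.error.HTTPError) as ctx:
            self.opener.open(f"{self.base}/users/2")
        self.assertEqual(ctx.exception.code, 404)
        ctx.exception.close()
```

Saved as, say, `test_users.py` (a hypothetical file name), it runs with `python -m unittest test_users`.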

In the case of gRPC, we can generate a stub and use it for tests. If we want to be even more strict, we can use the generated client as a separate application and use it as a base for our tests against a real-life service.

As for external tools support for gRPC, I am aware of:

- Postman app support for gRPC

- JetBrains HTTP client used in their IDEs can also support gRPC with some minimal configuration

- Result one: Victory for REST; however, the situation seems to improve for gRPC.

Performance Tests

Here, REST has significant advantages, as tools like JMeter or Gatling make stress testing of REST APIs a reasonably easy job.

Unfortunately, gRPC does not have such support. That said, the Gatling team has included a gRPC plugin in the current Gatling release, so the situation seems to be getting better.

Until recently, however, we only had an unofficial plugin and a tool called ghz. All of them are good; it is just not the same level of support as for REST.

- Result two: Victory for REST; however, the situation seems to improve for gRPC, again ;)

Documentation

In the case of API documentation, victory once again goes to REST, with OpenAPI and Swagger being widely adopted throughout the industry and serving as the de facto standard. Almost all libraries for REST can expose Swagger documentation with minimal effort or just out of the box.
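For comparison, this is roughly what such documentation looks like: a minimal OpenAPI 3.0 description of one hypothetical `GET /users/{id}` endpoint, written as a Python dictionary and serialized to JSON. In practice, most REST frameworks generate an equivalent document from code or annotations rather than by hand.

```python
import json

# Minimal hand-written OpenAPI 3.0 document for one hypothetical endpoint.
openapi_doc = {
    "openapi": "3.0.3",
    "info": {"title": "Users API", "version": "1.0.0"},
    "paths": {
        "/users/{id}": {
            "get": {
                "summary": "Get a user by id",
                "parameters": [
                    # Path parameters must be marked as required in OpenAPI.
                    {"name": "id", "in": "path", "required": True,
                     "schema": {"type": "integer"}},
                ],
                "responses": {
                    "200": {
                        "description": "The user",
                        "content": {
                            "application/json": {
                                "schema": {
                                    "type": "object",
                                    "properties": {
                                        "id": {"type": "integer"},
                                        "name": {"type": "string"},
                                    },
                                }
                            }
                        },
                    },
                    "404": {"description": "User not found"},
                },
            }
        }
    },
}

# Typically served at a path such as /openapi.json (a hypothetical choice here).
spec_json = json.dumps(openapi_doc, indent=2)
```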

Unfortunately, gRPC has nothing like this.

However, the question is whether gRPC needs a tool like this at all. gRPC is more descriptive than REST by design, so additional documentation tools may be unnecessary.

In general, .proto files with our API description are more declarative and compact than the code that defines a REST API, so maybe one does not need more documentation for gRPC. I leave the answer up to you.

- Result three: Victory for REST; however, the question of gRPC documentation is open.

Overall Result (Tooling Support):

A significant victory for REST

Summary

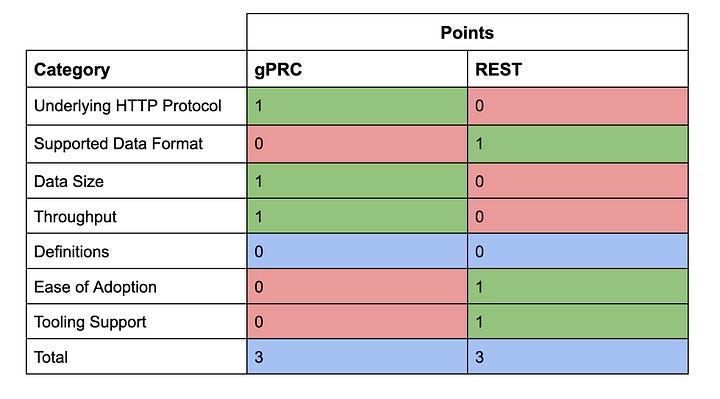

The final score table looks like this.

The scores are evenly split between both styles, with three victories each and one category without a clear winner.

There is no silver bullet: just think about which categories may be the most important for your application, and then pick the approach that won most of them. At least, that is my recommendation.

As for my preference, I give gRPC a try whenever I can, as it worked pretty well in my last project. It may be a better choice than its plain old REST fellow.

If you ever need help choosing between REST and gRPC, or dealing with any other kind of technical problem, just let me know. I might be able to help.

Thank you for your time.

Published at DZone with permission of Bartłomiej Żyliński. See the original article here.
