IaC and Containers: Building Blocks of a DevOps Transformation

Explore ways IaC and containers have uprooted traditional methods of working in software, and how DevOps top performers use the technologies to deliver value.

This is an article from DZone's 2023 Containers Trend Report.

Few technologies have seen as much attention and adoption over the last decade as Infrastructure as Code (IaC) and containers. In those years, both have matured and eventually converged to form a modern standard for application delivery. In this article, we'll take a look at this transformation, the ways it has uprooted traditional methods of working in software, and how DevOps top performers use the technologies to deliver value at a blistering pace.

Two Powerful Technologies

IaC and containers initially emerged to solve different sets of problems. Modern IaC was predated by configuration management, which aimed to gain some control over the frequently unpredictable drift of infrastructure over time. Automation not only made infrastructure easier to provision, it also improved stability, because services could be quickly recreated instead of manually fixed. Yet in a world where dev and ops are split, advances in infrastructure automation remained squarely in the toolkit of ops.

Containers were also born of a need for reproducibility, though in the context of applications. As installed libraries, dependencies, and other aspects of the runtime environment changed over time or across machines, the behavior of applications hosted on them changed as well. Many early approaches leveraged the Unix chroot syscall, which eventually led to the development of containers as a more complete abstraction. Unlike IaC, much of the pain that containers solved was shared by both dev and ops. Collaboration across different workstations was challenging for dev, and deploying that application to yet another machine in production was difficult for ops.

Breaking Down the Wall of Confusion

A silver lining of previously separate groups having the same problem is that they start to work more closely to find a solution. The convergence within the industry toward containers has tracked alongside the DevOps movement, breaking down many of the traditional silos found in companies. Now an icon of this transformation, the "wall of confusion" describes the challenges faced in traditional organizations when work moves in one direction from dev to ops. In the most pathological scenario, code that "works" on a developer's machine is "tossed over the wall" to be deployed and maintained by the operations team. Figure 1 illustrates this scenario:

Figure 1: The wall of confusion

Work moves from dev to ops: dev is responsible for Green, and ops is responsible for Red

Success in this situation is difficult to achieve for many reasons, including that:

- It is not clear who should compile the binary; the developer understands application requirements but operations knows the real target environment.

- Many details of the environment that the application was tested in are known, but only to the developer. Operations must recreate this environment without having a consistent way to document it precisely.

- Even with IaC on the ops side, untracked changes to the dev environment will lead to production failures.

Without containers, the use of IaC can improve outcomes only marginally. Ops can manage and change all that is on their side of the wall more effectively, but the same gaps in communication and feedback are still present. The core limitation is not lifted, and this is demonstrated well by the scope of work needed to be done by IaC tools.

Many steps to prepare dependencies for the application are redone by ops when provisioning the production environment, even though the developer already took similar steps to produce their development environment. IaC tools will build the environment quickly, but without effective communication channels, they will only build broken environments more quickly. Once containers are introduced, however, these limitations can be significantly diminished.

Figure 2: Delivering from dev to ops with containers

Containers enable a more direct line of flow between dev and ops

After containerizing the application, many previously hidden details can be shared explicitly:

- Most of the application dependencies are delivered directly to the production environment.

- The binary can be compiled, linked, and tested before handoff.

- The configuration can be split into what is already known at build time and what must be determined when the application is deployed.

- The overall footprint of what ops must independently build and maintain is significantly reduced.
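
The split between build-time and deploy-time configuration described in the list above can be sketched in a few lines of Python. This is a minimal illustration rather than a prescribed layout: the setting names (`APP_PORT`, `LOG_FORMAT`, `DATABASE_URL`) and the `resolve_config` helper are hypothetical and do not come from any particular tool.

```python
import os

# Build-time configuration: values the developer already knows and bakes
# into the container image. The names here are hypothetical.
BUILD_TIME_DEFAULTS = {
    "APP_PORT": "8080",
    "LOG_FORMAT": "json",
}

# Deploy-time configuration: values only the target environment knows,
# which must be injected when the container starts.
REQUIRED_AT_DEPLOY = ("DATABASE_URL",)


def resolve_config(environ):
    """Merge baked-in defaults with values injected at deploy time."""
    missing = [key for key in REQUIRED_AT_DEPLOY if key not in environ]
    if missing:
        raise KeyError(f"missing deploy-time settings: {', '.join(missing)}")
    config = dict(BUILD_TIME_DEFAULTS)
    # Deploy-time values win, so ops can override a default without a rebuild.
    for key in (*BUILD_TIME_DEFAULTS, *REQUIRED_AT_DEPLOY):
        if key in environ:
            config[key] = environ[key]
    return config


if __name__ == "__main__":
    # Simulate the environment that a deployment would provide.
    injected = dict(os.environ, DATABASE_URL="postgres://db.example.internal/app")
    print(resolve_config(injected))
```

Failing fast on a missing deploy-time value keeps the boundary explicit: anything absent from the image has to be declared by whoever deploys it.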

The minimization of hidden dependencies on the developer's machine has an outsized impact on the shared understanding across the organization. Of the three remaining dependencies depicted in this scenario, source code is largely irrelevant once the downstream artifacts have been persisted in the container. The runtime configuration and hardware dependencies remain as the key dependencies outside the containerized application.

The definition of these is a natural task for many IaC tools, specifying resources such as CPU and networking supplemented with relevant connection parameters and credentials. It is also more appropriately aligned with the concerns of operations.

Doubling Down on Containers

The trend of container adoption in the industry has had an impact on the way dev and ops are able to work, and has been central to many DevOps transformations. What's next for these technologies? In the same way these technical advances have changed the way we work, the changes to the way we work have paved the way for further improvements to the technology.

The abstraction that containers provided ultimately reduced the set of concerns that IaC tools had to deal with. This enabled the development of better abstractions for IaC, largely built around virtualized compute, storage, and networking. Installing dependencies and managing OSs has shifted left into the container build process and often within the development cycle.

In many ways, containers form a contract between the application and the infrastructure that will run it. This has been formally defined by the Open Container Initiative (OCI), and an ecosystem of modern cloud-native tools has emerged conforming to the specification. A familiar but often overlooked element of container-driven architectures is the container registry. By systematically submitting container images to a registry, the DevOps workstream gains the additional benefit of decoupling the building of software from its deployment.

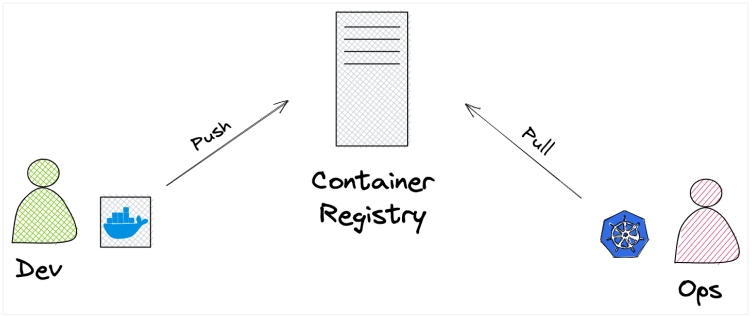

Figure 3: Decoupling build and deploy

Dev may build container images with Docker, and ops may deploy them with Kubernetes

A container image, representing an immutable snapshot of a deployable application, is pushed to the registry. The application can then be deployed to any OCI-compliant container runtime. This may be directly to a virtual machine (VM), an open-source orchestration framework like Kubernetes, or one of the many managed container runtimes offered by cloud providers.

A common element between these options is that container images are pulled from the registry when needed. Not only does this enable greater control over when changes are actually released, but it also provides a natural means of declaratively specifying the application being deployed. In fact, this is arguably the key to achieving some of the longest-held goals of IaC. The entire infrastructure is declared authoritatively with immutable references to applications. This is the closest the industry has been able to get to a single definition of the entire system.
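
One way to picture "immutable references to applications" is a declaration in which every image is pinned by content digest rather than by a movable tag. The sketch below is illustrative only: the service names and registry host are made up, and the check assumes the common `repository@sha256:<64 hex characters>` form of a digest-pinned reference.

```python
import re

# A digest-pinned reference names exact image content, so it cannot silently
# change. A tag such as ":latest" can be moved to a different image.
DIGEST_PINNED = re.compile(r"^(?P<repository>[^@\s]+)@sha256:(?P<hex>[0-9a-f]{64})$")


def is_immutable_reference(image):
    """Return True when the image reference is pinned by a sha256 digest."""
    return DIGEST_PINNED.match(image) is not None


def mutable_references(declared_services):
    """List the declared services whose image reference could still drift."""
    return sorted(
        name
        for name, image in declared_services.items()
        if not is_immutable_reference(image)
    )


if __name__ == "__main__":
    # Hypothetical declaration of a two-service system.
    declared = {
        "api": "registry.example.com/shop/api@sha256:" + "a" * 64,
        "worker": "registry.example.com/shop/worker:latest",
    }
    print(mutable_references(declared))
```

A check like this could run in review or CI, so that the declared infrastructure only ever points at exact image contents.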

Modern Patterns Made Possible

As defined by the OpenGitOps project, the core principles of GitOps are:

- Declarative

- Versioned and immutable

- Pulled automatically

- Continuously reconciled

With these principles in mind, it is clear that GitOps is a natural extension of patterns enabled by IaC and containers. The system definition is made declarative and immutable through the use of IaC with references to immutable containers. By persisting the definition to a version control system such as Git, we can achieve the versioned quality.

The remaining two principles — pulled automatically and continuously reconciled — are the extensions that GitOps offers and can generally be implemented by running agents in the target environment. One agent is responsible for synchronizing the latest state from source control and the other ensures that the actual state continues to match the desired state.
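
The reconciliation half of this can be sketched as a comparison between desired and actual state. The example is deliberately simplified and is not how any specific GitOps agent is implemented: both states are modeled as plain dictionaries mapping a service name to an image reference, and `plan_reconciliation` and `reconcile` are hypothetical helper names.

```python
def plan_reconciliation(desired, actual):
    """Return the actions that would bring `actual` in line with `desired`."""
    actions = []
    for name in sorted(desired.keys() | actual.keys()):
        if name not in actual:
            actions.append(("create", name, desired[name]))
        elif name not in desired:
            actions.append(("delete", name, actual[name]))
        elif desired[name] != actual[name]:
            actions.append(("update", name, desired[name]))
    return actions


def reconcile(desired, actual):
    """Apply the plan to a copy of the actual state and return the result."""
    converged = dict(actual)
    for action, name, image in plan_reconciliation(desired, actual):
        if action == "delete":
            del converged[name]
        else:
            converged[name] = image
    return converged


if __name__ == "__main__":
    # Desired state as it might be read from version control (made-up values).
    desired = {"api": "shop/api:v2", "web": "shop/web:v1"}
    # Actual state as it might be observed in the target environment.
    actual = {"api": "shop/api:v1", "cron": "shop/cron:v1"}
    for step in plan_reconciliation(desired, actual):
        print(step)
```

An agent would run a loop like this on a schedule or in response to change events. When the two states already match, the plan is empty and nothing happens.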

In order for an organization to fully benefit from a microservices architecture, the services must be small and independently deployable. Deployment pipelines have often been built to push builds through various stages, sometimes making it difficult to selectively release only certain parts of the system.

As we have seen, container registries offer essential decoupling: Build pipelines push images to the registry, and the declared infrastructure pulls those images precisely when we want it to. This enables isolation between teams and small, safe changes to production.
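
A toy model makes this decoupling concrete. The in-memory registry below is an assumption-laden sketch, not a real registry client. The class and method names are invented for illustration, and real registries compute digests over image manifests rather than raw bytes.

```python
import hashlib


class InMemoryRegistry:
    """Toy stand-in for a container registry (illustrative only)."""

    def __init__(self):
        self._blobs = {}  # (repository, digest) -> image bytes
        self._tags = {}   # (repository, tag) -> digest

    def push(self, repository, tag, image_bytes):
        """Store an image, point the tag at it, and return its digest."""
        digest = "sha256:" + hashlib.sha256(image_bytes).hexdigest()
        self._blobs[(repository, digest)] = image_bytes
        self._tags[(repository, tag)] = digest
        return digest

    def resolve(self, repository, tag):
        """Return the digest that a tag currently points to."""
        return self._tags[(repository, tag)]

    def pull(self, repository, digest):
        """Fetch exactly the image content named by the digest."""
        return self._blobs[(repository, digest)]


if __name__ == "__main__":
    registry = InMemoryRegistry()

    # The build pipeline pushes whenever a build succeeds.
    first = registry.push("shop/api", "main", b"build 1")
    declared = {"api": first}  # the declared infrastructure pins a digest

    # A newer build moves the tag, but the declaration is untouched.
    second = registry.push("shop/api", "main", b"build 2")
    print(registry.pull("shop/api", declared["api"]))  # still the first build

    # Releasing becomes a separate, deliberate change to the declaration.
    declared["api"] = second
    print(registry.pull("shop/api", declared["api"]))
```

Because the deployment side pulls by digest, pushing a new build never changes production by itself. A release happens only when the declared reference is updated.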

Conclusion

Starting as tools meant for distinct use cases, IaC and containers have converged to form a modern framework for cloud-native application delivery. With maturing open-source standards, they are democratizing access to previously elite levels of quality and reliability. First-class support from cloud providers is further reducing barriers to adoption. The greatest advances are in the ways we work and collaborate as teams, and this is ultimately how we are able to deliver the greatest value at the highest levels of quality.
