Implementing Auto Retry in Java EE Applications

How do you handle the problem of inconsistent and unpredictable timeouts? In Java EE a good solution is interceptors.


Initially, I wanted to call this post ‘Flexible timeouts with interceptor-driven retry policies’, but then I thought it would be too ‘heavy’. That working title, along with the revised one, should (hopefully) give you an idea of what this post is about ;-)

The Trigger

This post was prompted by a comment/question I received on one of my earlier posts, which briefly discussed timeout mechanisms and how they can be used to define ‘concurrency policies’ for Stateful and Singleton EJBs.

The Problem

While timeouts are a good way to enforce concurrency policies and control resource allocation/usage in the EJB container, a problem arises when the timeouts are inconsistent and unpredictable. How do you configure your timeout policy then?

Of course, there is no perfect solution. But one of the workarounds that came to mind was to ‘retry’ the failed method (this might not be appropriate or possible for your scenario, but it can be applied if the use case permits). This is a good example of a ‘cross-cutting’ concern or, in other words, an ‘aspect’. The Java EE answer for this is interceptors. These are much better than the default ‘rinse-repeat-until-xyz with a try-catch block’ approach because of:

- code reuse

- flexibility
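To make the retry idea concrete, here is a minimal sketch of the loop such an interceptor could wrap around the intercepted call. It is plain Java so that it runs outside a container: the `Supplier` stands in for `InvocationContext.proceed()` inside an `@AroundInvoke` method, and the names `RetrySketch`, `callWithRetry`, `maxAttempts` and `pauseMillis` are hypothetical, not taken from the sample project.

```java
import java.util.function.Supplier;

// Hypothetical helper: a plain-Java stand-in for the body of a retry interceptor.
final class RetrySketch {

    /** Runs the task, retrying on RuntimeException, for at most maxAttempts attempts in total. */
    static <T> T callWithRetry(Supplier<T> task, int maxAttempts, long pauseMillis) {
        if (maxAttempts < 1) {
            throw new IllegalArgumentException("maxAttempts must be at least 1");
        }
        RuntimeException lastFailure = null;
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            try {
                // In an interceptor this would be the call to proceed().
                return task.get();
            } catch (RuntimeException e) {
                lastFailure = e;
                if (attempt < maxAttempts) {
                    pause(pauseMillis); // brief wait before the next attempt
                }
            }
        }
        // All attempts failed: surface the last failure to the caller.
        throw lastFailure;
    }

    private static void pause(long millis) {
        try {
            Thread.sleep(millis);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting to retry", e);
        }
    }

    public static void main(String[] args) {
        int[] calls = {0};
        // Simulated flaky call: fails twice, then succeeds.
        String result = callWithRetry(() -> {
            if (++calls[0] < 3) {
                throw new IllegalStateException("simulated timeout");
            }
            return "ok";
        }, 5, 10L);
        System.out.println(result + " after " + calls[0] + " attempts"); // prints: ok after 3 attempts
    }
}
```

Keeping the attempt limit and the pause as parameters is where the flexibility comes from: the same loop can serve different methods with different policies, instead of being copied into each try-catch block.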

The Gist (of the Solution)

Here is the high-level description (code available on GitHub).

The Sample Code

- Uses a Singleton EJB to simulate a sample service and introduces latency via the obvious Thread.sleep() [which of course is discouraged inside a Java EE container and is used here only for the demo]

- Uses a JAX-RS resource which injects and calls the Singleton EJB and is a candidate for ‘retry’ as per a ‘policy’

- Can be tested by deploying on any Java EE (6 or 7) compatible server and using Apache JMeter to simulate concurrent clients/requests (Invoke HTTP GET on http://serverip:port/FlexiTimeouts/test)
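The failure mode this setup produces can be approximated outside a container. The sketch below is an assumption-laden stand-in, not the sample project's code: a `ReentrantLock` with `tryLock` plays the role of the container-managed lock and access timeout on the Singleton EJB, `Thread.sleep()` simulates the slow service, and the names `SlowServiceSketch`, `call` and `countRejected` are hypothetical.

```java
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.concurrent.locks.ReentrantLock;

// Hypothetical plain-Java imitation of a slow, exclusively locked singleton service.
final class SlowServiceSketch {
    private final ReentrantLock lock = new ReentrantLock();
    private final long accessTimeoutMillis;
    private final long workMillis;

    SlowServiceSketch(long accessTimeoutMillis, long workMillis) {
        this.accessTimeoutMillis = accessTimeoutMillis;
        this.workMillis = workMillis;
    }

    /** Waits up to the access timeout for the lock, then does the slow work. */
    String call() {
        try {
            if (!lock.tryLock(accessTimeoutMillis, TimeUnit.MILLISECONDS)) {
                throw new IllegalStateException("access timeout: service busy");
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting", e);
        }
        try {
            Thread.sleep(workMillis); // simulated latency
            return "done";
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while working", e);
        } finally {
            lock.unlock();
        }
    }

    /** Fires the given number of concurrent calls and returns how many were rejected. */
    static int countRejected(SlowServiceSketch service, int callers) {
        CountDownLatch start = new CountDownLatch(1);
        AtomicInteger rejected = new AtomicInteger();
        Thread[] threads = new Thread[callers];
        for (int i = 0; i < callers; i++) {
            threads[i] = new Thread(() -> {
                try {
                    start.await(); // release all callers at the same moment
                    service.call();
                } catch (IllegalStateException e) {
                    rejected.incrementAndGet();
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                }
            });
            threads[i].start();
        }
        start.countDown();
        for (Thread t : threads) {
            try {
                t.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        }
        return rejected.get();
    }

    public static void main(String[] args) {
        // Access timeout far shorter than the work: only one concurrent caller should get through.
        SlowServiceSketch service = new SlowServiceSketch(20L, 500L);
        System.out.println("rejected: " + countRejected(service, 3));
    }
}
```

With an access timeout much shorter than the simulated work, every caller except the one holding the lock is rejected, which is the situation a retry policy is meant to soften.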

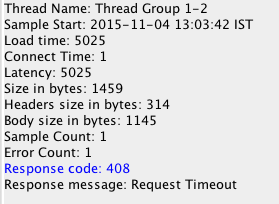

Without the retry (interceptor) configuration, the tests (for concurrent requests) will result in an HTTP timeout (408).

Once the retry interceptor is activated, responses will show some added latency, because a task is automatically retried once it fails. How much depends on the volume of concurrent requests, and the retry threshold needs to be tuned accordingly: a highly concurrent environment usually (though not ideally) calls for a higher threshold.

Published at DZone with permission of Abhishek Gupta. See the original article here.

