The SPACE Framework for Developer Productivity

Improve developer productivity using the SPACE framework and software engineering intelligence, a multi-dimensional approach for modern software teams.

Welcome to SPACE

Developer productivity is a complex subject for which there is no magic bullet. However, economic pressure, increased market competition, and shorter delivery cycles force many organizations to improve their efficiency and open up new models of operations. Measuring, maintaining, and eventually improving engineering productivity in an increasingly hybrid workplace are important discussions many organizations are having right now.

As a result, more and more companies are investigating how to do more with the resources they have, how to remove bottlenecks in their processes, and how to enable developers to be productive. Empirical evidence and an understanding of productivity drivers are emerging, while some myths and misconceptions are being debunked.

One of the approaches that has received a lot of attention is the SPACE framework. We give the background on it and explain some of its key concepts. Moreover, we give some additional examples of how to apply SPACE in your organization.

Introduction to the SPACE Framework

“The SPACE of Developer Productivity” is a framework by authors from GitHub, the University of Victoria, and Microsoft that has gained attention because of its practical and multi-faceted approach.

The authors debunk common productivity myths and misconceptions and then present drivers for developer productivity. They present those drivers structured as a holistic multi-dimensional model. Moreover, the authors show some example productivity metrics as well as counter-indicators.

For convenience, we present a summary of the SPACE framework and give some assistance on how to utilize increasingly popular engineering intelligence to kick-start your SPACE tracking and reporting.

Developer Productivity: Myths and Misconceptions

Some misconceptions and myths are clarified by the authors of the SPACE Framework early on:

- One obvious myth is that (developer) productivity is a one-dimensional metric. There is no single number that defines productivity, and any approach that is too simplistic will provide little insight. Moreover, different organizations and different teams might be best served by focusing on different sets of KPIs. We will go into this a bit later.

- Software development is a team sport. As such, individual metrics are less relevant; in fact, they can be counterproductive. What matters is the performance of teams or the organization as a whole. We might add that trends, and the visibility of counter-signals that highlight problems to address, matter more than absolute numbers.

- Outcomes are more important than output. While harder to quantify, the ability to ship customer-relevant features is obviously more important than just churning out code. As a result, pure activity metrics are not sufficient to make good productivity estimates.

Having said all this, it is worth highlighting the value of measuring nonetheless:

- A good set of measurements can give insights into how the organization is performing, how it is trending, and which areas can improve. Moreover, key indicators are not only valuable to management, but if used correctly give a voice to engineering at the same time.

Developers like to have evidence to show the value they bring to their teams and the organization. People generally like to show their worth and like to improve processes and themselves where they can. Having that evidence at your fingertips helps to improve self-worth and, in turn, organizational productivity.

The SPACE Framework Explained

SPACE stands for Satisfaction, Performance, Activity, Communication, and Efficiency and reflects the multi-dimensional approach proposed by the creators. We summarize those in the following.

The 5 SPACE dimensions are:

- Satisfaction and well-being: This dimension measures how satisfied development teams and individuals are with their tools, processes, and the work environment. For instance, are the right tools and resources in place to perform tasks efficiently? Are teams protected from overload or do individuals suffer from potential burnout? Are the management structure and environment supportive of growth and productivity?

- Performance: This relates to the actual outcomes created by teams and the absence of blockers to creating those outcomes. For instance: What is the customer acceptance of, and satisfaction with, the features shipped? How did we improve on that over time? How did we improve our overall quality? Performance is closely tied to team and organizational results and, together with satisfaction, contributes to overall efficiency.

- Activity: As mentioned above, outcomes are preferable to output, but activity or output are often good proxy metrics that still provide some useful indicators, and especially counter-indicators. This can be the release cadence, build system performance, or the number of incidents to manage. Simply speaking, do we get stuff done? And how did we improve over time?

- Communication and Collaboration: Effective collaboration and team cohesion have been shown to be significant contributing factors to developer productivity. For instance, brainstorming, collaboration, aligning on goals, and participating in outcomes improve productivity. Counter to these are scenarios where individuals work against each other, shift blame around, or feel abandoned by their management.

- Efficiency and Flow: It is one thing to produce valuable outcomes, but how efficient can we be in doing so? A key indicator is how much individuals and teams can be “in the flow” of performing their work; how much they can be free from blockers, interruptions or delays. The “flow” is something a lot of organizations are starting to pay attention to on a process or organizational level already. This shows up as a company’s Value Stream Metrics or DORA metrics. These are concepts that are often used to communicate with the executive team or even the board.

Organizational Dimensions

Lastly, the SPACE framework distinguishes the levels at which productivity measures are taken and applied. These are:

- Individuals: Helping individuals to feel more productive is important, but this is often best done by setting the right process, organizational, and team environments. As we have seen above, micro-managing individuals does not have the best impact and often does not produce the desired outcomes.

- Teams: Well-performing teams are at the core of well-performing organizations. Setting the right environment, context, and feedback loops for teams has been shown to improve productivity significantly.

- System: Improving processes, systems, and organizational metrics helps to improve overall organizational efficacy and deliver better outcomes to customers faster. These are high-level indicators that help to drive better performance across the board.

This concludes the summary of key dimensions for the SPACE framework, but it is worthwhile to read the original article linked earlier. Next, we provide some examples augmented by our own experience that can easily be measured.

SPACE Framework: Example Metrics

Satisfaction

For satisfaction and well-being, there are numerous ways to measure how things are going within the organizations. These can be explicit metrics such as:

- Results on NPS from formal or semi-formal surveys

- Quick emoji-like responses to survey emails, ticket support cases, or internal portal features

- Retention rates of engineers and engineering managers

These metrics require, however, dedicated effort and resources to implement, maintain and analyze/report on. While this is feasible in some organizations, this is often not the first step in moving to a SPACE process.

Proxy metrics have been shown to be useful indicators of a certain level of satisfaction or, conversely, of frustration. Examples are:

- CI build failure rates and recovery times: The potential pain of waiting and uncertainty

- Code review cycles and review delays: The pain of context switching

- Bug numbers and issue fix times: The pain of customer dissatisfaction

While proxy metrics are not suitable for more personalized sentiment analysis or sentiment around organization and management issues, they can highlight common triggers for frustration and dissatisfaction. Moreover, proxy metrics are often an easy starting point as they are a matter of mining existing data and do not require introducing new workflows or additional, potentially distracting tasks.
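
As an illustration of mining existing data for such proxy metrics, the following is a minimal sketch that computes a CI build failure rate and recovery times. The `builds` records and all function names are hypothetical; in practice the data would come from whatever your CI system exports.

```python
from datetime import datetime, timedelta

# Hypothetical build records, ordered by finish time: (finished_at, succeeded)
builds = [
    (datetime(2024, 1, 8, 9, 0), True),
    (datetime(2024, 1, 8, 11, 0), False),
    (datetime(2024, 1, 8, 11, 30), False),
    (datetime(2024, 1, 8, 13, 0), True),
    (datetime(2024, 1, 9, 10, 0), False),
    (datetime(2024, 1, 9, 10, 45), True),
]


def failure_rate(builds):
    """Fraction of builds that failed (0.0 for an empty history)."""
    if not builds:
        return 0.0
    failed = sum(1 for _, ok in builds if not ok)
    return failed / len(builds)


def recovery_times(builds):
    """Time from the first failure of each red streak to the next green build."""
    times = []
    broken_since = None
    for finished_at, ok in builds:
        if not ok and broken_since is None:
            broken_since = finished_at  # a new red streak starts here
        elif ok and broken_since is not None:
            times.append(finished_at - broken_since)
            broken_since = None
    return times


def mean_recovery_time(builds):
    """Average recovery time, or None if the build never broke and recovered."""
    times = recovery_times(builds)
    if not times:
        return None
    return sum(times, timedelta()) / len(times)


print(f"failure rate: {failure_rate(builds):.0%}")
print(f"mean recovery time: {mean_recovery_time(builds)}")
```

Tracked week over week and per team, a rising failure rate or lengthening recovery time is the kind of frustration trigger described above.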

Performance

The authors of the SPACE framework highlight a number of proxy metrics for performance around code reviews and related activities. This includes:

- Code review velocity/acceptance rates: How quickly/consistently are we delivering outcomes?

- Items shipped (epics/features/story points): How much do we get done?

- Reliability of infrastructure/product/build systems: Do we have infrastructure bottlenecks preventing us from performing?

Again, while this does not necessarily give a complete picture, all of the above metrics provide useful signals to gauge overall performance.

Activity

While performance measures outcomes, activity is more focused on outputs. These metrics are typically easy to obtain. Examples are:

- The number of code reviews completed

- Number of PRs done

- The number of issues/story points completed

- Time spent on development activities

- Deployment frequency

Activity metrics can often be derived from data that already exists in your engineering tools and infrastructure. They are especially useful when extracted continuously and automatically, which reduces friction and developer overhead, and when aggregated to the team or organization level for monitoring and trending.
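
To make the aggregation step concrete, here is a small sketch that rolls a deployment log up to deployments per team per ISO week. The `deployments` data and the `weekly_deploy_counts` helper are assumptions for illustration only, not part of the SPACE framework.

```python
from collections import Counter
from datetime import date

# Hypothetical deployment log: (team, deploy_date)
deployments = [
    ("payments", date(2024, 1, 8)),
    ("payments", date(2024, 1, 10)),
    ("payments", date(2024, 1, 17)),
    ("search", date(2024, 1, 9)),
    ("search", date(2024, 1, 16)),
    ("search", date(2024, 1, 18)),
]


def weekly_deploy_counts(deployments):
    """Count deployments per (team, ISO year, ISO week)."""
    counts = Counter()
    for team, day in deployments:
        iso = day.isocalendar()  # (ISO year, ISO week number, ISO weekday)
        counts[(team, iso[0], iso[1])] += 1
    return counts


counts = weekly_deploy_counts(deployments)
for (team, year, week), n in sorted(counts.items()):
    print(f"{team} {year}-W{week:02d}: {n} deployments")
```

Counting at the team level rather than per person keeps the metric in line with the earlier point that individual metrics can be counterproductive.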

Communication/Collaboration

Metrics in this category are a bit more open to interpretation, and one needs to be careful when introducing any proxy metrics. While it is possible to detect negative signals, detecting positive ones is harder. A highly collaborative team often cannot be identified by numbers alone; recognizing one requires good people-management skills. Nonetheless, some proxy metrics that have been shown to be beneficial are:

- Code review scores/number of reviewers/number of review cycles: Are reviews well distributed, do they include several active people per PR, and do reviewers comment more than “LGTM”? Do the numbers reflect a sense of collaboration rather than blame shifting, which might be evident in long review cycles between the same people?

- PR cycle times: Are we efficient and working well together, or are there any obvious blocking stages?

- Knowledge/review graphs: Is there a wider network of collaboration or do we have knowledge islands?
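
One possible way to look for knowledge islands is to treat reviews as edges in a graph and find its connected components. The sketch below assumes a hypothetical list of (author, reviewer) pairs; `review_islands` is an illustrative helper, and real review data would need filtering (for example, by time window) before drawing conclusions.

```python
from collections import defaultdict

# Hypothetical (author, reviewer) pairs, one per completed review
reviews = [
    ("alice", "bob"),
    ("bob", "alice"),
    ("carol", "alice"),
    ("dave", "erin"),
    ("erin", "dave"),
]


def review_islands(reviews):
    """Group people into connected components of the undirected review graph."""
    neighbors = defaultdict(set)
    for author, reviewer in reviews:
        neighbors[author].add(reviewer)
        neighbors[reviewer].add(author)

    seen = set()
    islands = []
    for person in sorted(neighbors):
        if person in seen:
            continue
        # Depth-first walk to collect everyone reachable from this person
        stack = [person]
        island = set()
        while stack:
            current = stack.pop()
            if current in island:
                continue
            island.add(current)
            stack.extend(neighbors[current] - island)
        seen |= island
        islands.append(sorted(island))
    return islands


for island in review_islands(reviews):
    print(island)
```

More than one component, as in this sample data, suggests groups that never review each other's work. It is a negative signal worth a conversation, not proof of poor collaboration.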

Efficiency/Flow

One of the key categories around developer productivity is the “flow” engineers are in, but also the flow enabled by supporting infrastructure and team processes. There are both positive and anti-signals that can be measured such as:

- PR velocity and trends

- Development cycle time

- Build times and reliability

- Blockers and delays in code reviews

- Aging of backlogs and ticket state changes

Measuring flow, efficiencies, as well as blockers, is something that can be well approximated by hard data.
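
As a rough example of approximating flow from hard data, the following sketch computes PR cycle times and flags pull requests that exceed a threshold. The `pull_requests` records, the helper names, and the 24-hour threshold are assumptions chosen for illustration; suitable thresholds depend on the team.

```python
from datetime import datetime
from statistics import median

# Hypothetical pull requests: (opened_at, merged_at)
pull_requests = [
    (datetime(2024, 1, 8, 9, 0), datetime(2024, 1, 8, 15, 0)),
    (datetime(2024, 1, 8, 10, 0), datetime(2024, 1, 10, 10, 0)),
    (datetime(2024, 1, 9, 9, 0), datetime(2024, 1, 9, 21, 0)),
]


def cycle_hours(pull_requests):
    """Hours from opening to merging, one value per pull request."""
    return [
        (merged - opened).total_seconds() / 3600
        for opened, merged in pull_requests
    ]


def slow_count(pull_requests, threshold_hours=24):
    """Number of pull requests whose cycle time exceeds the threshold."""
    return sum(1 for hours in cycle_hours(pull_requests) if hours > threshold_hours)


hours = cycle_hours(pull_requests)
print(f"median cycle time: {median(hours):.1f} h")
print(f"PRs slower than 24 h: {slow_count(pull_requests)}")
```

The median is used here instead of the mean so that a single long-running pull request does not dominate the trend.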

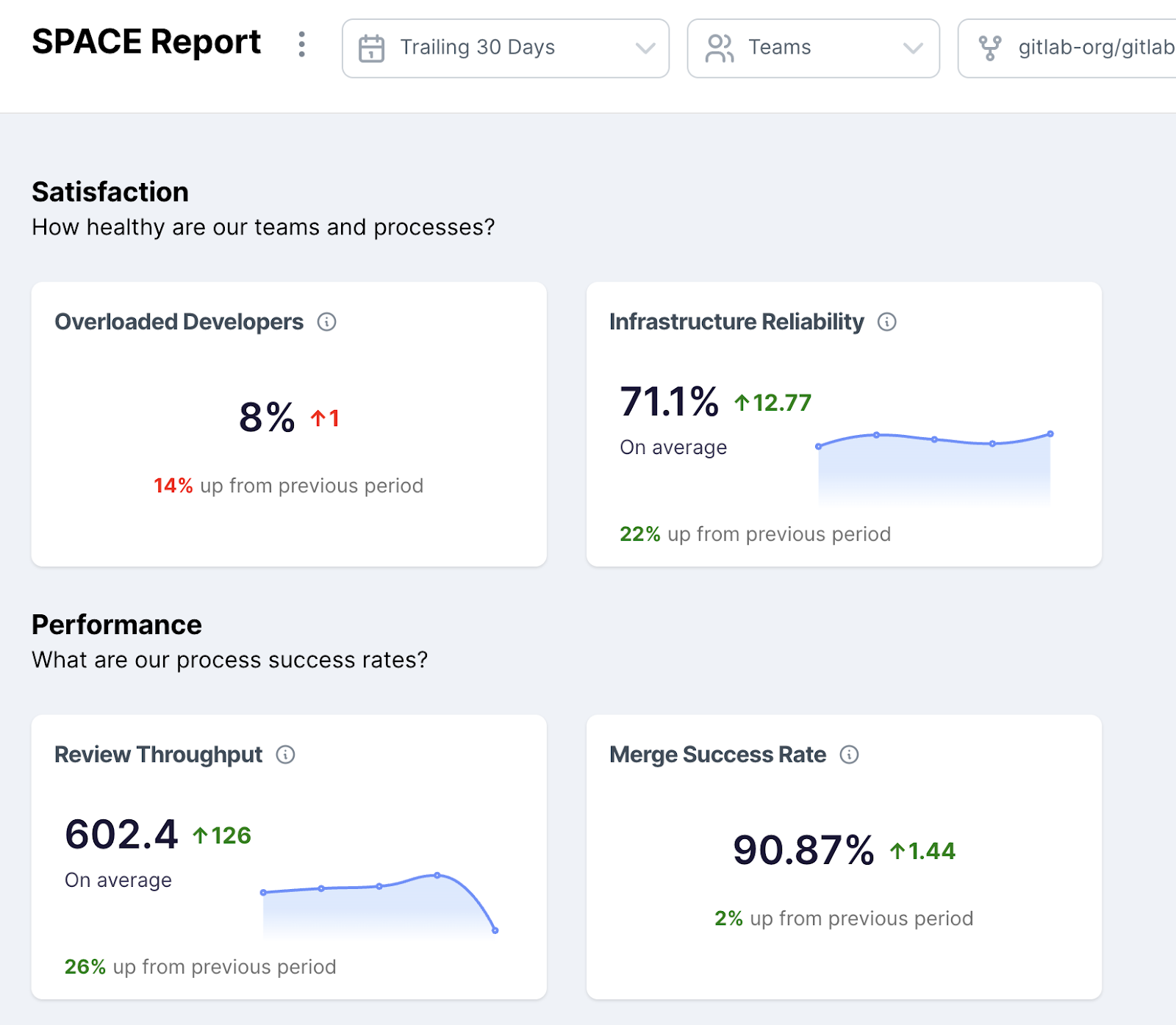

Example Snapshot of SPACE Report in Logilica

Summary

Overall, the SPACE framework introduces a multi-faceted approach to developer productivity. It looks both at key dimensions of what productivity means and across individuals, teams, and the organization as a whole.

Metrics to measure the SPACE dimensions can be direct or indirect through proxy data. The great thing is that many data points already exist in some shape or form in organizations and can be data mined. This can be done, for example, by in-house productivity engineering teams themselves or with the help of increasingly popular software engineering intelligence (SEI) platforms.

Published at DZone with permission of Ralf Huuck. See the original article here.

