Now that we have more context about OpenTelemetry's main concepts, architecture, and components, we are ready to start tracing. We will instrument two APIs, one built in .NET and the other in Python. Both are designed to have the same endpoints and the same purpose: each returns untranslatable words (words that exist in only one language), either at random or for a given language. The diagram below shows each API's simple flow:

Figure 8: Untranslatable API flow chart

Different programming language paradigms present different challenges. I chose to show examples in two languages, Python and .NET, not to highlight those challenges but to demonstrate OpenTelemetry's consistency across stacks. Note that all .NET examples target ASP.NET Core; the configuration might differ for the .NET Framework.

Configuration

First, assume we already have a project with a basic structure for each language. Install the necessary libraries (Python) or add them to your project (.NET) by running the following commands. For Python, if you use setuptools, you can also list these libraries as installation requirements.

Python:

.NET:

These commands also add the OpenTelemetry SDK and API as dependencies.
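With setuptools, the same libraries can go into `install_requires`, for example `setup(..., install_requires=INSTALL_REQUIRES)`. The package list below is an assumption for a Flask-based API (the exact packages depend on your framework and exporter), and `missing_packages` is a hypothetical helper that uses the standard `importlib.metadata` module to report which of them are not installed yet.

```python
from importlib import metadata

# Assumed distribution names for a Flask-based API; adjust to your framework and exporter.
INSTALL_REQUIRES = [
    "flask",
    "opentelemetry-distro",                 # depends on opentelemetry-api and opentelemetry-sdk
    "opentelemetry-instrumentation-flask",  # Flask-specific instrumentation
]


def missing_packages(requirements):
    """Return the distributions from `requirements` that are not installed."""
    missing = []
    for name in requirements:
        try:
            metadata.version(name)  # raises if the distribution is not installed
        except metadata.PackageNotFoundError:
            missing.append(name)
    return missing


if __name__ == "__main__":
    print("missing:", missing_packages(INSTALL_REQUIRES) or "none")
```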

Collect Traces Using OpenTelemetry

In OTel, tracing operations are performed on a Tracer. We obtain one from the global TracerProvider (GetTracer() in .NET, trace.get_tracer() in Python), which returns an object we can use for tracing operations. However, depending on the language, this step might not be necessary when using automatic instrumentation.

Add a Simple Trace With Automatic Instrumentation

Not all frameworks offer automatic instrumentation, but OpenTelemetry advises using it for those that do. Not only does it save lines of code, but it also provides a baseline for telemetry with little work. It works by attaching an agent to the running application and extracting tracing data. When considering auto-instrumentation, remember that it's not as flexible as manual instrumentation and only captures basic signals.

Let us look at code implementations. Below, we have the basic setup for auto-instrumenting our API.

Python:

.NET:

In Python, we don't need to add anything to the code to collect basic traces, but I recommend using FlaskInstrumentor, which adds support for Flask-specific features. Call FlaskInstrumentor().instrument_app(app) after instantiating the Flask app, and add extra configuration as needed.

In .NET, we need to configure the necessary OpenTelemetry settings, such as the exporter, the instrumentation libraries, and constants. As in Python, adding the OpenTelemetry.Instrumentation.AspNetCore package provides extra features specific to the framework on top of the base instrumentation library. The ASP.NET Core instrumentation library automatically creates spans and traces from inbound HTTP requests.

To run our applications with automatic instrumentation and start collecting and exporting telemetry, run the commands below.

Python:

.NET:

These commands start the instrumentation agent and set up the framework-specific instrumentation libraries. Now, a trace is printed to the console whenever we send a request. You can see example output below.

Python:

.NET:

Notice that in Python, because we haven't added any configuration, many trace fields are empty or hold unknown values (for example, the service name is reported as unknown_service).

Add Manual Instrumentation

Manual instrumentation means adding code to the application to start and end spans, attach data such as attributes, and add counters or events. Client libraries and SDKs are available for many programming languages. Manual and automatic instrumentation should go hand in hand, as they complement each other: instrumenting your application with intention augments the automatic instrumentation and provides better, deeper observability. The following implementation adds traces to the APIs' methods:

Python:

.NET differs from other languages that support OpenTelemetry: its System.Diagnostics API implements tracing by reusing existing types, ActivitySource and Activity, which play the roles of OpenTelemetry's Tracer and Span under the hood. For consistency, I've used the OpenTelemetry Tracing Shim so that you can learn OpenTelemetry's own concepts. If you want to see an implementation using Activities, you can check this repo.

.NET:

Store and Visualize Data

Jaeger is a popular open-source distributed tracing tool, originally built by teams at Uber, later open sourced, and then accepted into the CNCF family. It's a tracing back end that allows developers to view, search, and analyze traces. One of its most powerful features is visualizing how requests travel through the services of a system, which lets engineers quickly pinpoint failures in complex architectures.

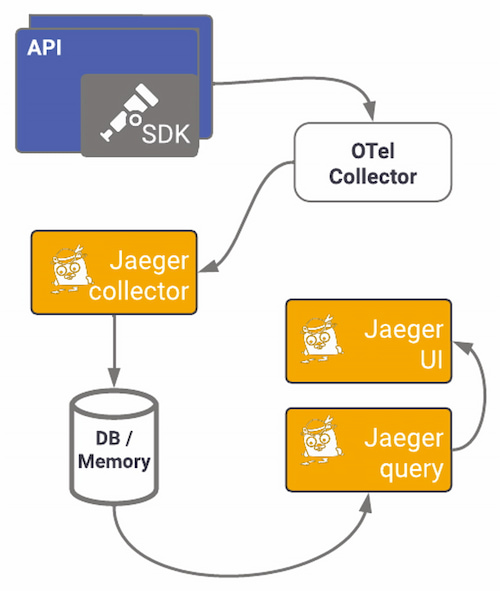

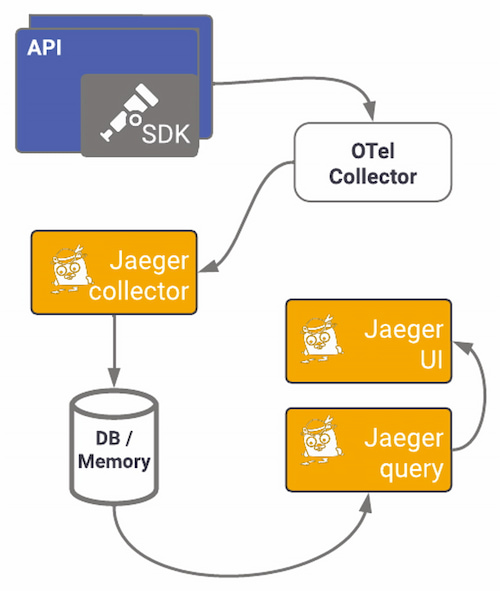

Jaeger provides its own instrumentation libraries, built on the OpenTracing standard. Using a Jaeger-specific exporter can offer a quick win for observing your application. Here we will use both the OTLP exporter and OpenTelemetry's Jaeger exporter to send OTel traces to a Jaeger back-end service. We've seen how the OTel Collector works and how it is set up; the following diagram shows what a pipeline using Jaeger's exporter looks like:

Figure 9: OTel Collector pipeline

To start visualizing data, you need to set up Jaeger first. Other setups are possible, but I'll use the all-in-one image, which runs the Jaeger collector, query service, and UI in a single container and uses in-memory storage by default (not suitable for production environments). This docker-compose file sets up all components, the network, the required ports, and the OTel Collector. The ports used in this example are the default ports for each service. Run docker-compose up to start the containers.

You should now have two containers running, one for Jaeger and another for the OTel collector:

If you navigate to http://localhost:16686, you should see Jaeger's UI. Here you'll be able to explore the traces generated by your instrumentation:
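When scripting this setup, it can help to wait until the Jaeger UI answers before sending requests. The helpers below are hypothetical conveniences (not part of Jaeger or docker-compose) built only on the standard library; they treat any 2xx response from http://localhost:16686 as "ready" and ignore proxy environment variables because the UI runs locally.

```python
import time
import urllib.request

JAEGER_UI_URL = "http://localhost:16686"  # default Jaeger UI port used in this section

# Ignore http_proxy/https_proxy settings: the UI runs on the local machine.
_opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))


def jaeger_ui_is_up(url: str = JAEGER_UI_URL, timeout: float = 2.0) -> bool:
    """Return True if a GET to `url` answers with a 2xx status, False otherwise."""
    try:
        with _opener.open(url, timeout=timeout) as response:
            return 200 <= response.status < 300
    except OSError:  # URLError, HTTPError, refused connections, and timeouts derive from OSError
        return False


def wait_for_jaeger(url: str = JAEGER_UI_URL, attempts: int = 30, delay: float = 1.0) -> bool:
    """Poll the UI until it responds or the attempts run out."""
    for _ in range(attempts):
        if jaeger_ui_is_up(url):
            return True
        time.sleep(delay)
    return False
```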

Figure 10: Jaeger's user interface

The drop-down menu at the top left lists the service we created; services are added to that list when we export traces to Jaeger. As I've mentioned, there are two ways to export telemetry to Jaeger: through the OTel Collector using OTLP, or directly to Jaeger using one of its supported protocols. We've already seen how to configure the OTel Collector, so all that remains is to configure it to export to Jaeger:

However, if setting up a Collector seems daunting, or if you want to start small with OpenTelemetry, sending data directly to a back end without the Collector can produce results reasonably fast. Let's start by installing OpenTelemetry's Jaeger exporter.

Python:

.NET:

Again, in Python we install the package on the host or in a virtual environment, whereas in .NET we add it directly as a project dependency. For Python, the package includes exporters for both the gRPC and Thrift protocols.

Python:

After installing the package, you can set the exporter on the TracerProvider, which is configured when tracing starts. Now let's do the same for .NET: after adding the NuGet package to the project, we configure the exporter. Here, we register OpenTelemetry tracing on the IServiceCollection, enable ASP.NET Core instrumentation with the AddAspNetCoreInstrumentation extension method, and bind the Jaeger exporter.

.NET:

Now run your APIs and make some requests. In Jaeger's UI, select the service name you specified and the operation you traced, and you should see the traces generated by those requests. The figures below show all traces captured within a time window, and the details of one trace with its associated spans:

Figure 11: All traces

Figure 12: Trace details

For complex systems and architectures, distributed tracing is invaluable. You can get telemetry data quickly by adding OpenTelemetry auto-instrumentation and exporting directly to Jaeger's back end. With Jaeger, it's easier to find where a problem occurred than by digging through logs, and you can monitor transactions, perform root cause analysis, optimize performance and latency, and visualize service dependencies.

Common Pitfalls of Migrating Legacy Applications to OpenTelemetry

Your services are probably already emitting telemetry data bound to some observability back end. Changing your observability architecture can be very painful:

- Re-instrumenting is time consuming

- Data will change

- Telemetry data must keep flowing, with no blind spots in the system during the migration

- Traces have to remain linked

To migrate incrementally and as seamlessly as possible, you can use the OpenTelemetry Collector as a proxy between your services and the back ends you use. The Collector can replace most telemetry services, making separate services for processing and transmitting signals redundant. The flexibility of its telemetry pipelines lets you configure any compatible back end or service while keeping your code vendor-agnostic.

If you want to migrate your applications gradually, the Collector can translate its supported inputs into the output format you need, so you can move one application at a time to OpenTelemetry and keep sending data to the same back end. Note that changing instrumentation libraries changes the output they produce, so you might have to adjust your dashboards and alerting systems.