The Activiti Performance Showdown

up till now, when you would ask me that same question, i would tell you about how activiti minimizes database access in every way possible, how we break down the process structure into an ‘execution tree’ which allows for fast queries or how we leverage ten years of workflow framework development knowledge.

you know, trying to get around the question without answering it. we knew it was fast, because of the theoretical foundation upon which we have built it. but now we have proof: real numbers. yes, it’s going to be a lengthy post. but trust me, it’ll be worth your time!

disclaimer: performance benchmarks are hard. really hard. different machines, slightly different test setups … very small things can change the results seriously. the numbers here are only meant to show that the activiti engine has very little overhead, while also integrating very easily into the java ecosystem and offering bpmn 2.0 process execution.

the activiti benchmark project

to test process execution overhead of the activiti engine, i created a little side project on github: https://github.com/jbarrez/activiti-benchmark

the project currently contains 9 test processes, which we’ll analyse below. the logic in the project is pretty straightforward:

- a process engine is created for each test run

- each of the processes is executed sequentially on this process engine, using a threadpool of 1 up to 10 threads.

- all the processes are thrown into a bag, from which a number of random executions are drawn.

- all the results are collected and an html report with some nice charts is generated

to run the benchmark, simply follow the instructions on the github page to build and execute the jar.
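the measuring loop described above can be sketched roughly as follows. this is a minimal sketch and not the code from the github project: the class `BenchmarkSketch`, its `run` method and the `fakeProcess` stand-in are names made up for illustration, and the 'process' here is just a trivial computation instead of a real engine call.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.atomic.AtomicLong;

// Hypothetical harness: none of these names come from the activiti-benchmark project.
class BenchmarkSketch {

    /** Outcome of one run: mean time per execution and executions per second. */
    static final class Result {
        final int threads;
        final double averageMillis;
        final double throughputPerSecond;

        Result(int threads, double averageMillis, double throughputPerSecond) {
            this.threads = threads;
            this.averageMillis = averageMillis;
            this.throughputPerSecond = throughputPerSecond;
        }
    }

    /** Runs 'work' the given number of times on a fixed pool of 'threads' threads. */
    static Result run(Runnable work, int executions, int threads) {
        ExecutorService pool = Executors.newFixedThreadPool(threads);
        AtomicLong totalNanos = new AtomicLong();
        List<Future<?>> futures = new ArrayList<>();
        long wallStart = System.nanoTime();
        try {
            for (int i = 0; i < executions; i++) {
                futures.add(pool.submit(() -> {
                    long start = System.nanoTime();
                    work.run();
                    // Sum of individual execution times, used for the average.
                    totalNanos.addAndGet(System.nanoTime() - start);
                }));
            }
            for (Future<?> future : futures) {
                future.get(); // wait for completion and surface any failure
            }
        } catch (InterruptedException | ExecutionException e) {
            throw new IllegalStateException("benchmark run failed", e);
        } finally {
            pool.shutdown();
        }
        long wallNanos = System.nanoTime() - wallStart;

        double averageMillis = totalNanos.get() / 1e6 / executions;
        double throughput = executions / (wallNanos / 1e9);
        return new Result(threads, averageMillis, throughput);
    }

    public static void main(String[] args) {
        // Stand-in for "start a process instance"; a real run would call the engine here.
        Runnable fakeProcess = () -> Math.sqrt(System.nanoTime());
        for (int threads = 1; threads <= 10; threads++) {
            Result r = run(fakeProcess, 2500, threads);
            System.out.printf("%d threads: avg %.4f ms, %.0f executions/s%n",
                    r.threads, r.averageMillis, r.throughputPerSecond);
        }
    }
}
```

note that the average is computed from the time spent inside each execution, while the throughput is computed from wall-clock time for the whole batch. that is why the two numbers can move in opposite directions when threads are added.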

benchmark results

the test machine i used for the results is my (fairly old) desktop machine: amd phenom ii x4 940 3.0ghz, 8 gb 800mhz ram and an old-skool 7200 rpm hd running ubuntu 11.10. the database used for the test runs on the same machine on which the tests also run. so keep in mind that in a ‘real’ server environment the results could even be better!

the benchmark project i mentioned above was executed on a default ubuntu mysql 5 database. i just switched to the ‘large.cnf’ setting (which throws more ram at the db and stuff like that) instead of the default config.

- each of the test processes ran 2500 times, using a threadpool going from one to ten threads. in simpleton language: 2500 process executions using just one thread, 2500 process executions using two threads, 2500 process executions using three … yeah, you get it.

- each benchmark run was done using a ‘default’ activiti process engine. this basically means a ‘regular’ standalone activiti engine, created in plain java. each benchmark run was also done in a ‘spring’ config. here, the process engine was constructed by wrapping it in the factory bean, the datasource is a spring datasource and the transactions and connection pool are also managed by spring (i’m actually using a tweaked bonecp connection pool)

- each benchmark run was executed with history on the default history level (ie. ‘audit’) and without history enabled (ie. history level ‘none’).

the processes are analyzed in detail in the sections below, but here are the full results of the test runs already:

- activiti 5.9 – mysql – default – history enabled

- activiti 5.9 – mysql – default – history disabled

- activiti 5.9 – mysql – spring – history enabled

- activiti 5.9 – mysql – spring – history disabled

i ran all the tests using the latest public release of activiti, being activiti 5.9. however, my test runs brought some potential performance fixes to the surface (i also ran the benchmark project through a profiler). it quickly became clear that most of the process execution time was actually spent cleaning up when a process ended. basically, more often than not, queries were fired which would not be necessary if we saved some more state in our execution tree. i sat together with daniel meyer from camunda and my colleague frederik heremans, and they’ve managed to commit fixes for this! as such, the current trunk of activiti, being activiti 5.10-snapshot at the moment, is significantly faster than 5.9.

- activiti 5.10 – mysql – default – history enabled

- activiti 5.10 – mysql – default – history disabled

- activiti 5.10 – mysql – spring – history enabled

- activiti 5.10 – mysql – spring – history disabled

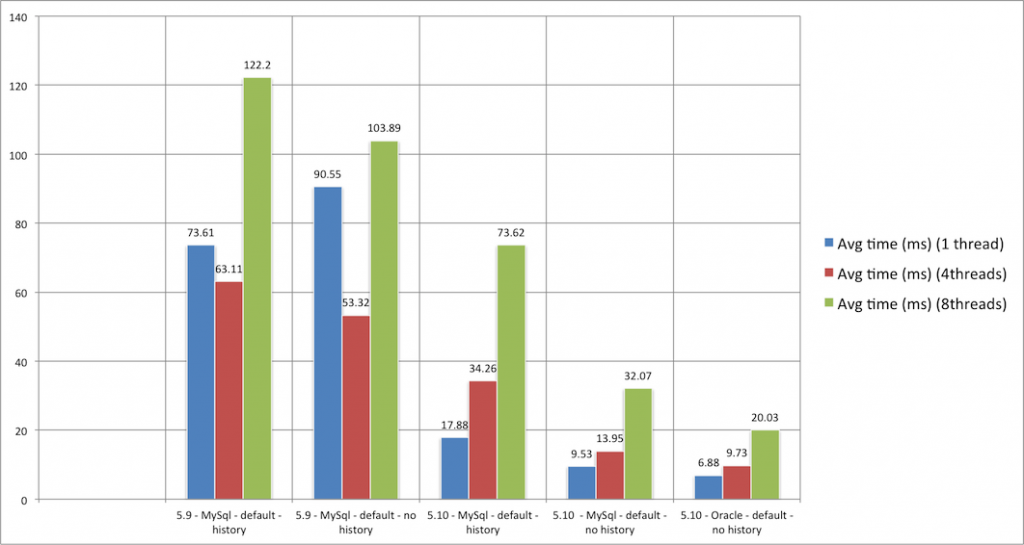

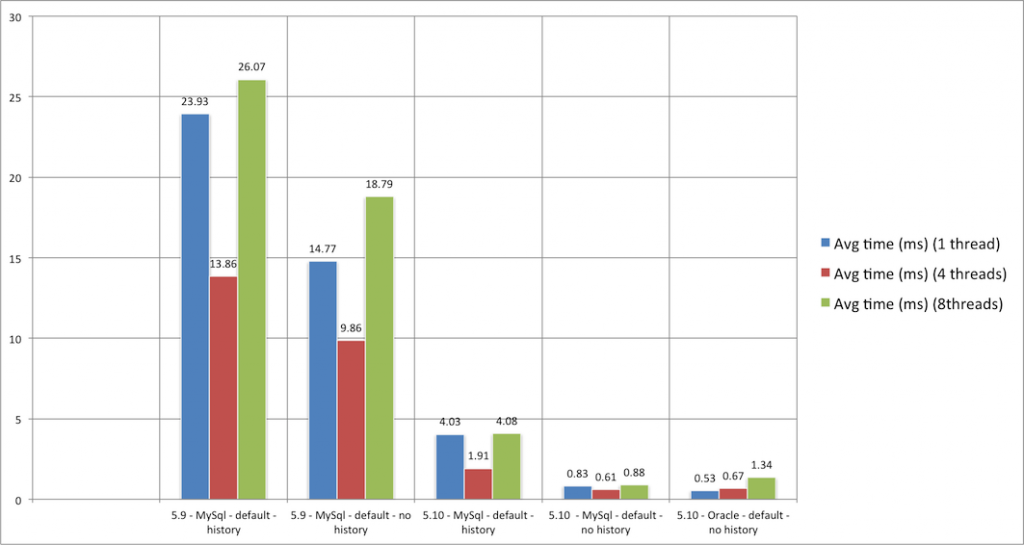

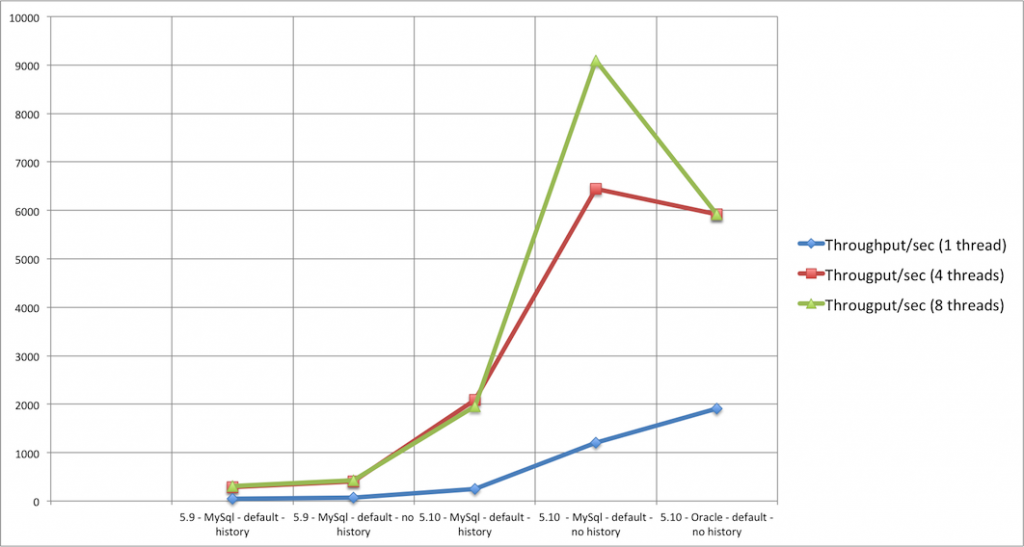

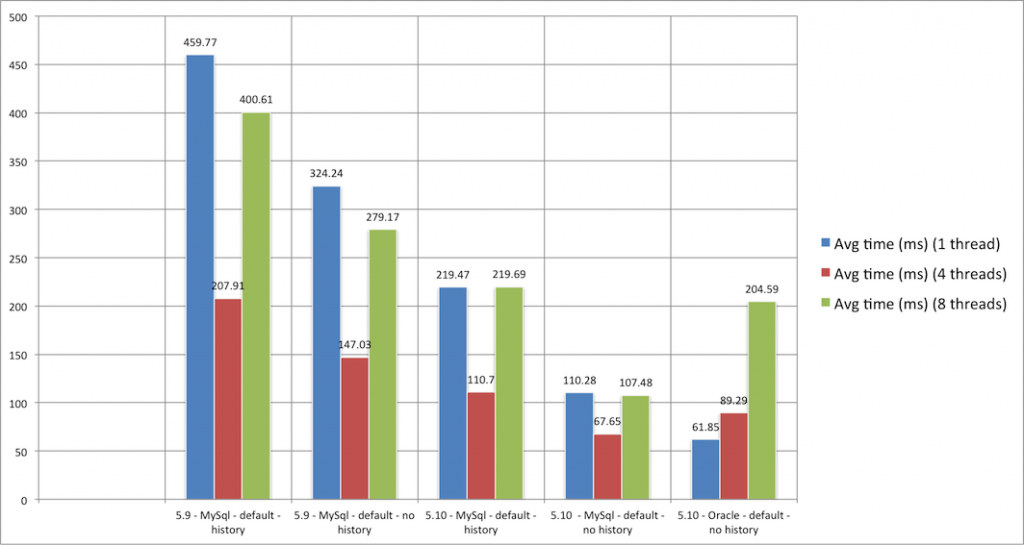

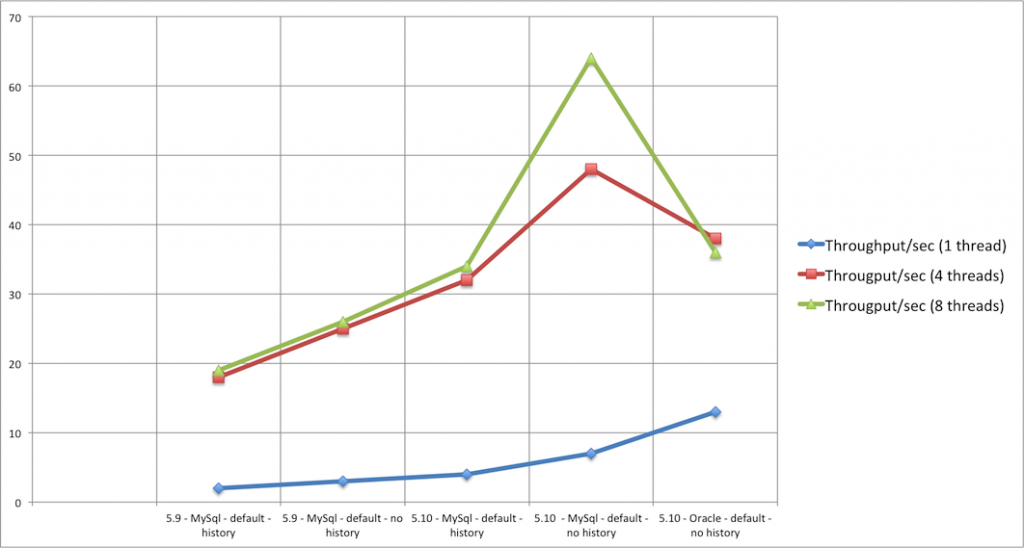

from a high-level perspective (scroll down for detailed analysis), there are a few things to note:

- i had expected some difference between the default and spring config, due to the more ‘professional’ connection pool being used. however, the results for both environments are quite alike. sometimes the default is faster, sometimes spring. it’s hard to really find a pattern. as such, i omitted the spring results in the detailed analyses below.

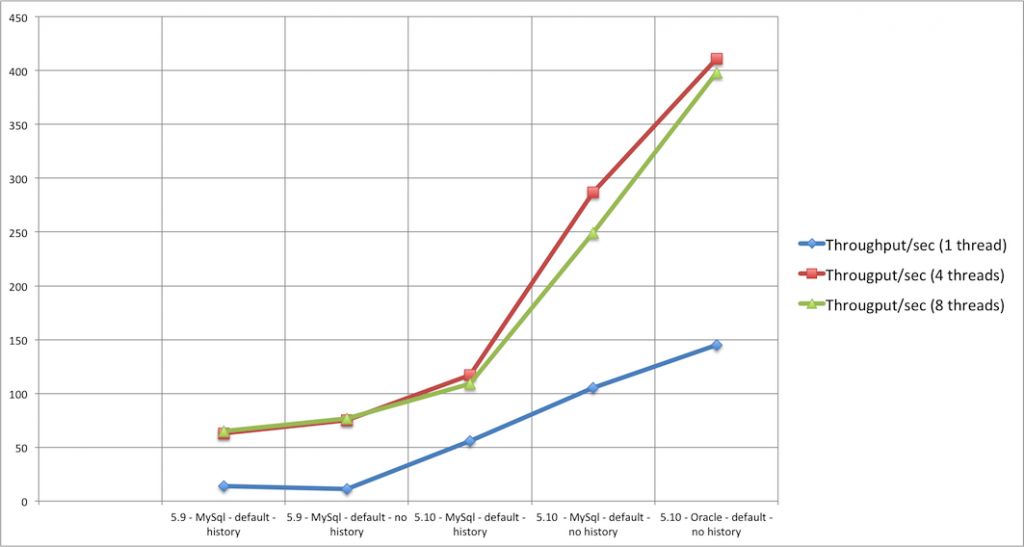

- the best average timings are most of the time found when using four threads to execute the processes. this is probably due to having a quad-core machine.

- the best throughput numbers are most of the time found when using eight threads to execute the processes. i can only assume that it also has something to do with having a quad-core machine.

- when the number of threads in the threadpool goes up, the throughput (processes executed / second) goes up, but it has a negative effect on the average time. certainly with more than six or seven threads, you see this effect very clearly. this basically means that while each process in itself takes a little longer to execute, the multiple threads let you execute more of these ‘slower’ processes in the same amount of time.

- enabling history does have an impact. often, enabling history will double execution time. this is logical, given that many extra records are inserted when history is on the default level (ie. ‘audit’).
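the trade-off between average time and throughput can be made concrete with a bit of arithmetic. the sketch below assumes an idealized situation in which every thread is busy all the time, so it gives an upper bound rather than a prediction. the class name `ThroughputEstimate` and the figures in `main` are made up for illustration and are not taken from the benchmark charts.

```java
// Idealized model: each of 'threads' workers finishes one execution every 'averageMillis'.
class ThroughputEstimate {

    /** Upper bound on executions per second when all threads are always busy. */
    static double perSecond(int threads, double averageMillis) {
        return threads * 1000.0 / averageMillis;
    }

    public static void main(String[] args) {
        // Illustrative figures only: the average time gets worse, throughput still improves.
        System.out.println(perSecond(4, 5.0)); // prints 800.0
        System.out.println(perSecond(8, 8.0)); // prints 1000.0
    }
}
```

as long as the average time grows more slowly than the number of threads, the throughput keeps going up, which matches the pattern described in the list above.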

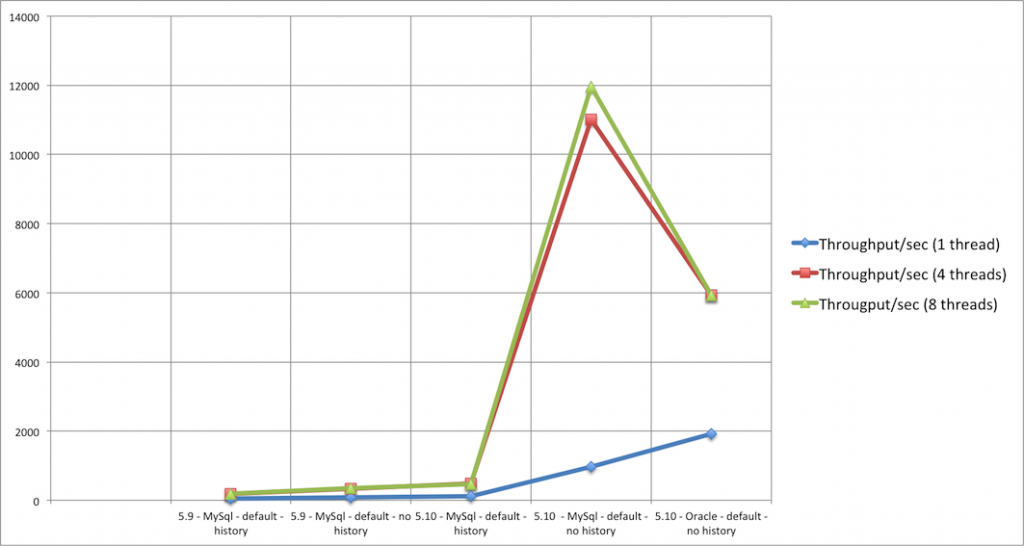

there was one last test i ran, just out of curiosity: running the best performing setting on an oracle xe 11.2 database. oracle xe is a free version of the ‘real’ oracle database. no matter how hard i tried, i couldn’t get it running decently on ubuntu. as such, i used an old windows xp install on that same machine. however, the os is 32 bit, which means the system only has 3.2 of the 8gb of ram available. here are the results:

the results speak for themselves. oracle blows away any of the (single-threaded) results on mysql (and they are already very fast!). however, when going multi-threaded it is far worse than any of the mysql results. my guess is that this is due to the limitations of the xe version: only one cpu is used, only 1 gb of ram, etc. i would really like to run these tests on a real oracle-managed-by-a-real-dba … feel free to contact me if you are interested!

in the next sections, we will take a detailed look into the performance numbers of each of the test processes. an excel sheet containing all the numbers and charts below is available for download.

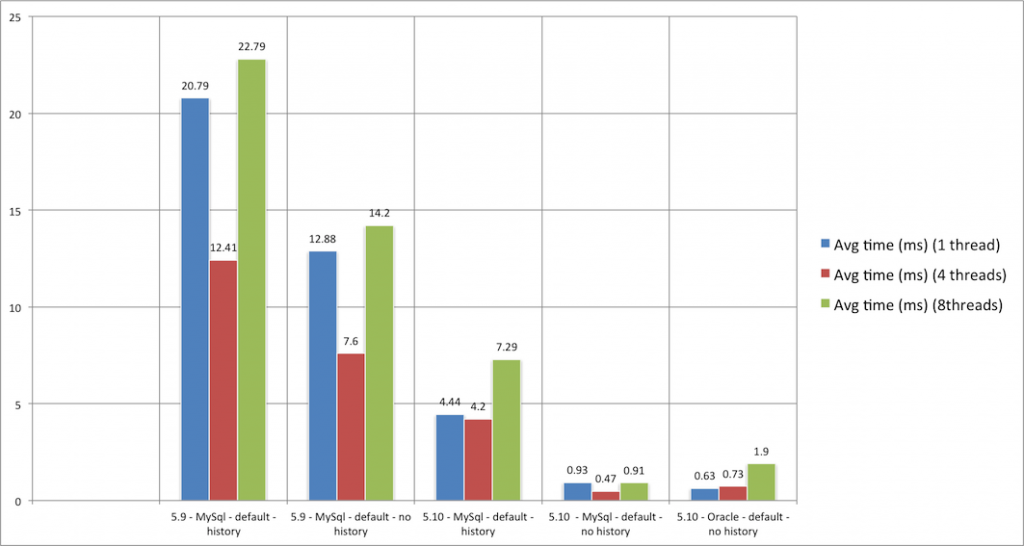

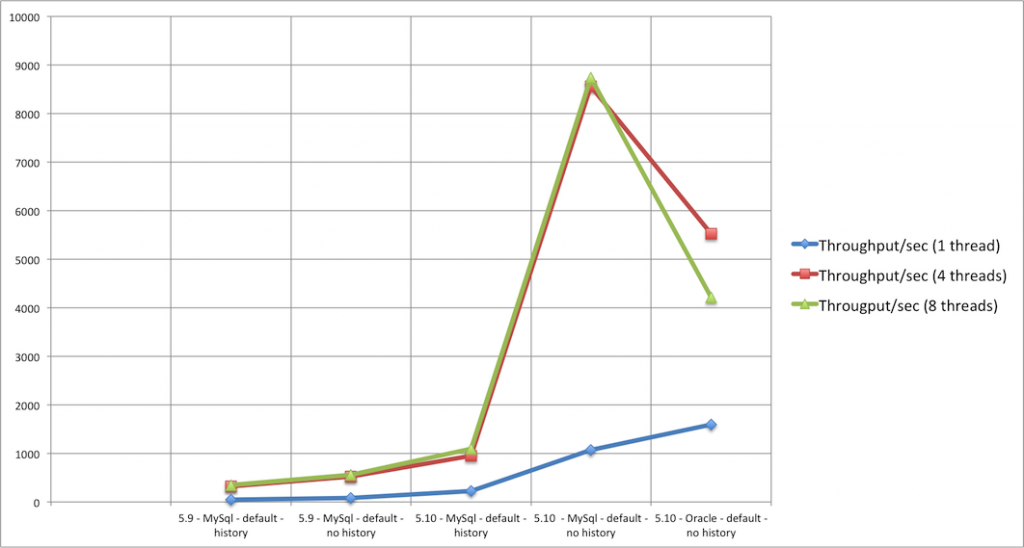

process 1: the bare minimum (one transaction)

the first process is not a very interesting one, business-wise at least. after starting the process, the end is immediately reached. not very useful in itself, but its numbers teach us one essential thing: the bare overhead of the activiti engine. here are the average timings:

this process runs in a single transaction, which means that nothing is saved to the database when the history is disabled due to activiti’s optimizations. with history enabled, you’ll basically get the cost for inserting one row into the historical process instance table, which is around 4.44 ms here. it is also clear that our fix for activiti 5.10 has an enormous impact here. in the previous version, 99% of the time was spent in the cleanup check of the process. take a look at the best result here: 0.47 ms when using 4 threads to execute 2500 runs of this process. that’s only half a millisecond ! it’s fair to say that the activiti engine overhead is extremely small.

the throughput numbers are equally impressive:

in the best case here, 8741 processes are executed. per second. by the time you arrive here reading the post, you could have executed a few millions of this process. you can also see that there is little difference between 4 or 8 threads here. most of the execution time here is cpu time, and no potential collisions such as waiting for a database lock happen here.

in these numbers, you can also easily see that the oracle xe doesn’t scale well with multiple threads (which is explained above). you will see the same behavior in the following results.

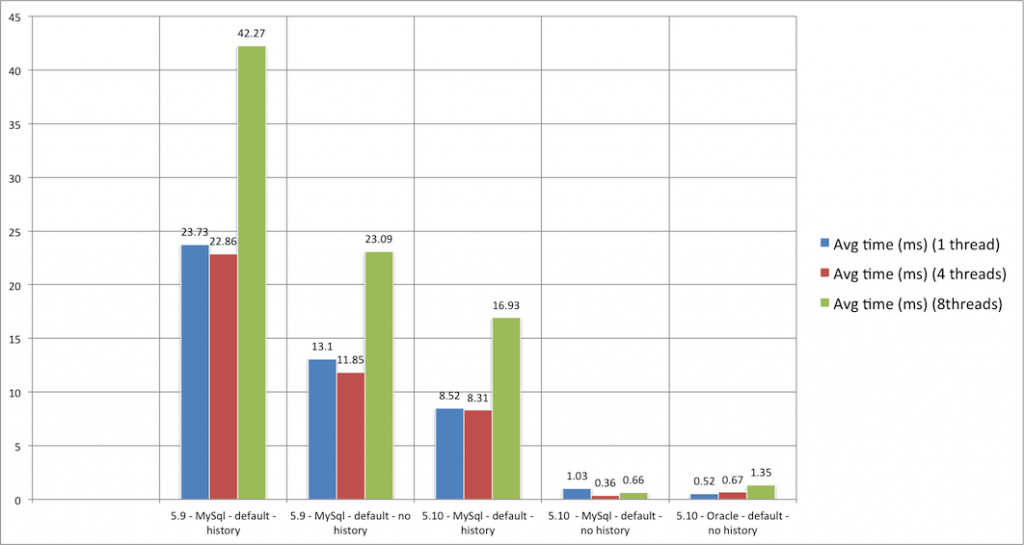

process 2: the same, but a bit longer (one transaction)

this process is pretty similar to the previous one. we have again only one transaction. after the process is started, we pass through seven no-op passthrough activities before reaching the end.

some things to note here:

- the best result (again 4 threads, with history disabled) is actually better than that of the simpler previous process. but also note that the single threaded execution is a tad slower. this means that the process in itself is a bit slower, which is logical as it has more activities. but using more threads and having more activities in the process does allow for more potential interleaving. in the previous case, the thread was barely born before it was killed again.

- the difference between history enabled/disabled is bigger than for the previous process. this is logical, as more history is written here (for each activity one record in the database).

- again, activiti 5.10 is far superior to activiti 5.9.

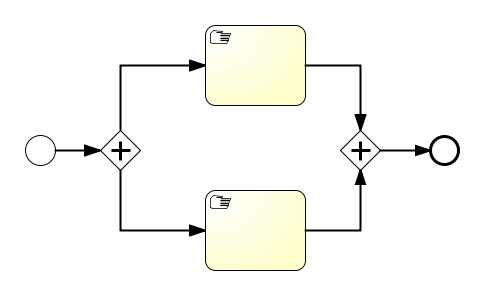

process 3: parallelism in one transaction

this process executes a parallel gateway that forks and one that joins in the same transaction. you would expect something along the lines of the previous results, but you’d be surprised:

comparing these numbers with the previous process, you see that execution is slower. so why is this process slower, even if it has fewer activities? the reason lies in how the parallel gateway is implemented, especially the join behavior. the hard part, implementation-wise, is that you need to cope with the situation where multiple executions arrive at the join. to make sure that the behavior is atomic, we internally do some locking and fetch all child executions in the execution tree to find out whether the join activates or not. so it is quite a ‘costly’ operation, compared to the ‘regular’ activities.
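to give an idea of why a join needs that atomicity, here is a minimal in-memory sketch. it is not activiti’s implementation: the class `ParallelJoinSketch` is made up for illustration, and a java `synchronized` method stands in for the database-level locking and the query on child executions that the engine performs.

```java
import java.util.HashSet;
import java.util.Set;

// Hypothetical model of a parallel join; not Activiti's actual implementation.
class ParallelJoinSketch {
    private final int expectedBranches;
    private final Set<String> arrived = new HashSet<>();
    private boolean fired = false;

    ParallelJoinSketch(int expectedBranches) {
        this.expectedBranches = expectedBranches;
    }

    /**
     * Registers the arrival of one branch. Returns true only for the single call
     * that completes the join. The lock makes "record arrival, count arrivals,
     * decide" one atomic step, so two branches arriving at the same time cannot
     * both (or neither) activate the join.
     */
    synchronized boolean arrive(String branchId) {
        arrived.add(branchId);
        if (!fired && arrived.size() == expectedBranches) {
            fired = true;
            return true;
        }
        return false;
    }

    public static void main(String[] args) {
        ParallelJoinSketch join = new ParallelJoinSketch(2);
        System.out.println(join.arrive("branch-1")); // false: still waiting for the other branch
        System.out.println(join.arrive("branch-2")); // true: this arrival completes the join
    }
}
```

without the lock, two branches could each see only their own arrival and both decide to wait, or both see two arrivals and both continue. that extra coordination is the cost the numbers above reflect.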

do mind, we’re talking here about only 5 ms single threaded and 3.59 ms in the best case for mysql. given the functionality that is required for implementing the parallel gateway, this is peanuts if you ask me.

the throughput numbers:

this is the first process which actually contains some ‘logic’. in the best case above, 1112 processes can be executed in a second. pretty impressive, if you ask me!

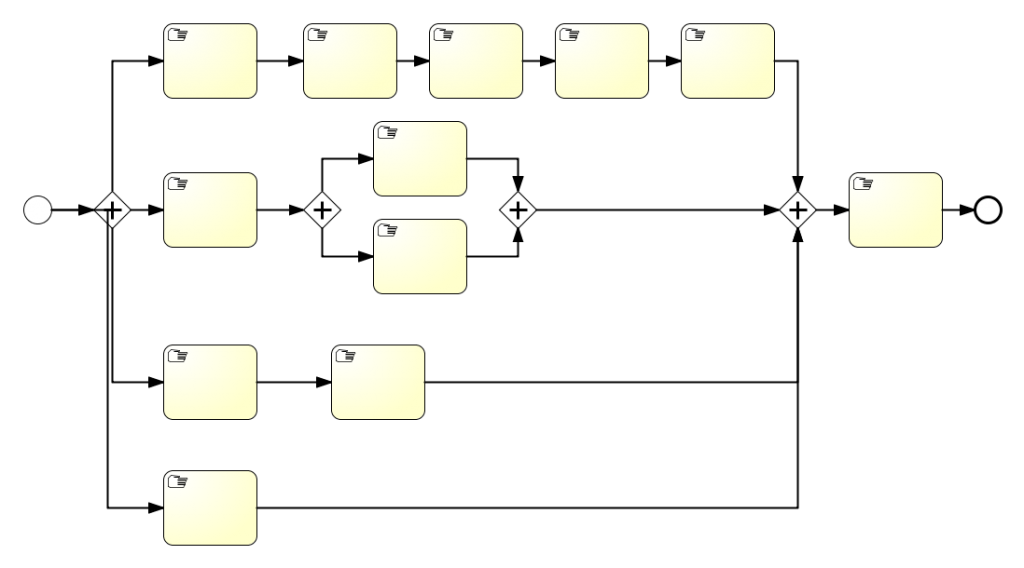

process 4: now we’re getting somewhere (one transaction)

this process already looks like something you’d see when modeling real business processes. we’re still running it in one database transaction though, as all the activities are automatic passthroughs. here we also have two forks and two joins.

take a look at the lowest number: 6.88 ms on oracle when running with one thread. that’s freaking fast, taking into account all that is happening here. the history numbers are at least doubled here (activiti 5.10), which makes sense because there is quite a bit of activity audit logging going on here. you can also see that the average time for four threads is higher here, which is probably due to the implementation of the joining. if you know a bit about activiti internals, you’ll understand this means there are quite a few executions in the execution tree. we have one big concurrent root, but also multiple children which are sometimes also concurrent roots.

but while the average time rises, the throughput definitely benefits:

running this process with eight threads allows you to do 411 runs of this process in a single second.

there is also something peculiar here: the oracle database performs better with more thread concurrency. this is completely contrary to all other measurements, where oracle is always slower in that environment (see above for explanation). i assume it has something to do with the internal locking and forced update we are applying when forking/joining, which it seems is better handled by oracle.

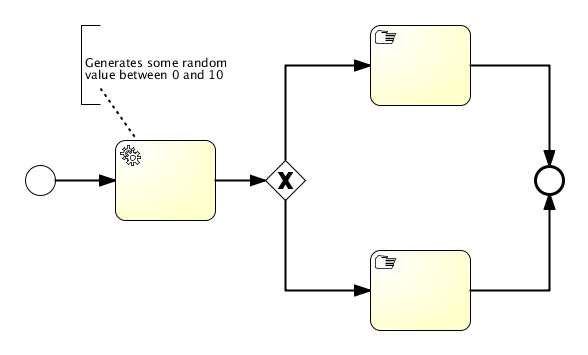

process 5: adding some java logic (single transaction)

i added this process to see the influence of adding a java service task in a process. in this process, the first activity generates a random value, stores it as a process variable and then goes up or down in the process depending on the random value. the chance is about 50/50 to go up or down.
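the shape of that logic can be sketched in plain java. this is only an approximation of the idea: a `Map` stands in for the process variables, and the names `RandomBranchSketch`, `generateValue`, `choosePath` and the variable name `randomValue` are made up for illustration rather than taken from the benchmark project or the activiti api.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Random;

// Hypothetical stand-in for a service task followed by an exclusive gateway.
class RandomBranchSketch {

    /** Plays the role of the service task: stores a random value as a 'process variable'. */
    static void generateValue(Map<String, Object> variables, Random random) {
        variables.put("randomValue", random.nextInt(100)); // 0..99
    }

    /** Plays the role of the gateway: picks a path based on the stored variable. */
    static String choosePath(Map<String, Object> variables) {
        int value = (Integer) variables.get("randomValue");
        return value < 50 ? "up" : "down"; // roughly a 50/50 split
    }

    public static void main(String[] args) {
        Random random = new Random();
        int up = 0;
        int runs = 10000;
        for (int i = 0; i < runs; i++) {
            Map<String, Object> variables = new HashMap<>();
            generateValue(variables, random);
            if ("up".equals(choosePath(variables))) {
                up++;
            }
        }
        System.out.println("up: " + up + ", down: " + (runs - up));
    }
}
```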

the average timings are very, very good. actually, the results are in the same range as those of process 1 and 2 above (which had no activities or only automatic passthroughs). this means that the overhead of integrating java logic into your process is nearly non-existent (nothing is of course for free). of course, you can still write slow code in that logic, but you can’t blame the activiti engine for that.

throughput numbers are comparable to those of process 1 and 2: very, very high. in the best case here, more than 9000 processes are executed per second . that indeed also means 9000 invocations of your own java logic.

process 6, 7 and 8: adding wait states and transactions

the previous processes showed us the bare overhead of the activiti engine. here, we’ll take a look at how wait states and multiple transactions influence performance. for this, i added three test processes which contain user tasks. for each user task, the engine commits the current transaction and returns the thread to the client. since the results are pretty much comparable for these processes, we’re grouping them here. these are the processes:

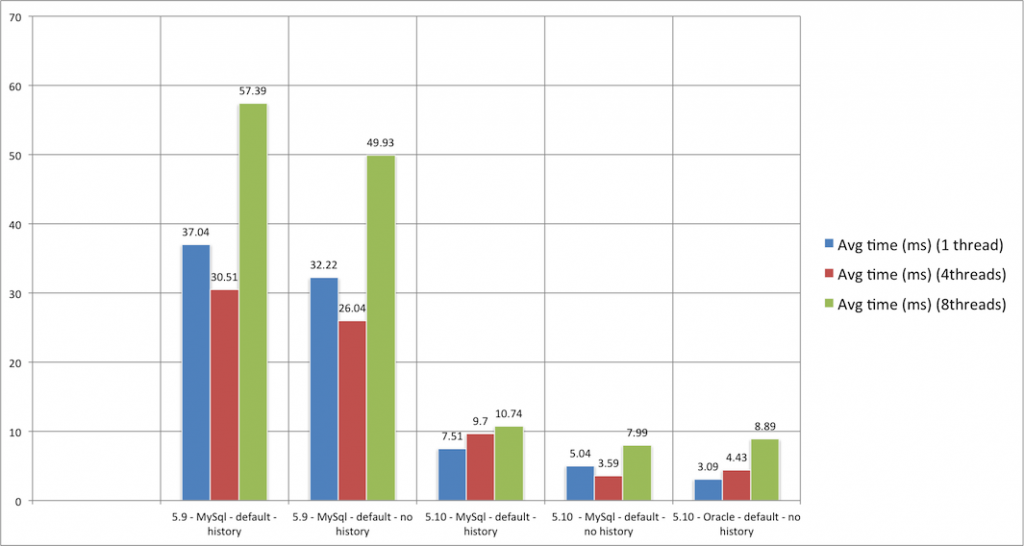

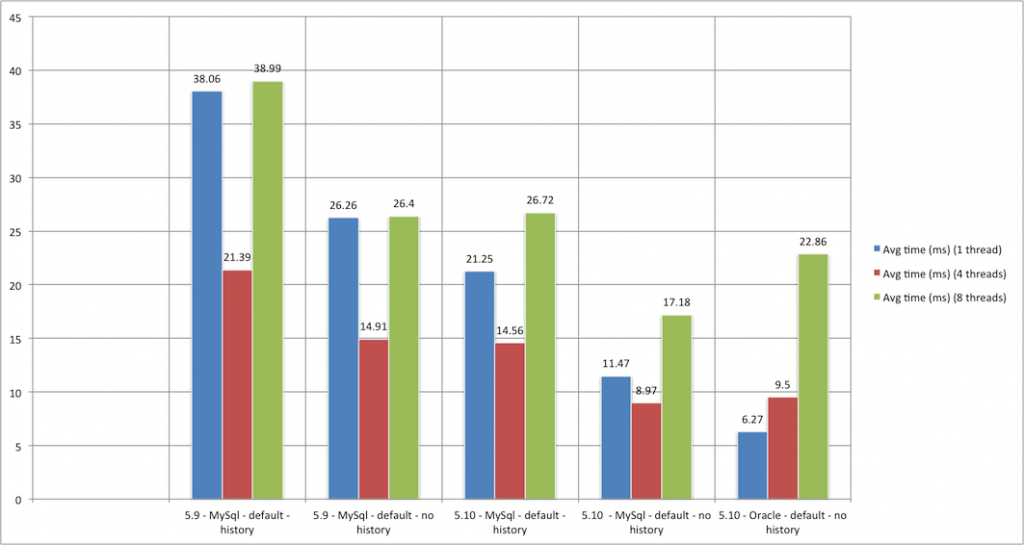

here are the average timings results, in order of the processes above. for the first process, containing just one user task:

it is clear that having wait states and multiple transactions does have an influence on the performance. this is also logical: before, the engine could optimize by not inserting the runtime state into the database, because the process was finished in one transaction. now, the whole state, meaning the pointers to where you are currently, needs to be saved into the database. the process could be ‘sleeping’ like this for many days, months, years … the activiti engine no longer holds it in memory, and is free to give its full attention to other processes.
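the idea of a wait state can be sketched as follows. this is a toy model under stated assumptions, not how the engine is written: the class `WaitStateSketch` and its methods are made up for illustration, and a `HashMap` stands in for the runtime tables in the database.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical model: a map plays the role of the runtime tables in the database.
class WaitStateSketch {
    // process instance id -> id of the activity the instance is waiting in
    private final Map<String, String> store = new HashMap<>();

    /** Runs until the user task, then 'commits' the current position and returns to the caller. */
    String start(String instanceId) {
        store.put(instanceId, "userTask"); // the insert that a one-transaction process can skip
        return "userTask";
    }

    /** Loads the persisted position, completes the task and cleans up the finished instance. */
    boolean completeTask(String instanceId) {
        String position = store.remove(instanceId); // the delete when the process ends
        return "userTask".equals(position);
    }

    /** True while the instance is parked in the store, waiting for someone to act. */
    boolean isWaiting(String instanceId) {
        return store.containsKey(instanceId);
    }

    public static void main(String[] args) {
        WaitStateSketch engine = new WaitStateSketch();
        engine.start("instance-1");
        System.out.println(engine.isWaiting("instance-1"));    // true: state is persisted, nothing held by the caller
        System.out.println(engine.completeTask("instance-1")); // true: task found and completed
        System.out.println(engine.isWaiting("instance-1"));    // false: instance cleaned up
    }
}
```

between `start` and `completeTask` nothing about the instance lives outside the store, which is the property that lets a process sleep for a long time without costing the engine anything.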

if you check the results of the process with only one user task, you can see that in the best case (oracle, single thread – the 4 threads on mysql is pretty close) this is done in 6.27 ms. this is really fast, if you take into account that we have a few inserts (the execution tree, the task), a few updates (the execution tree) and deletes (cleaning up) going on here.

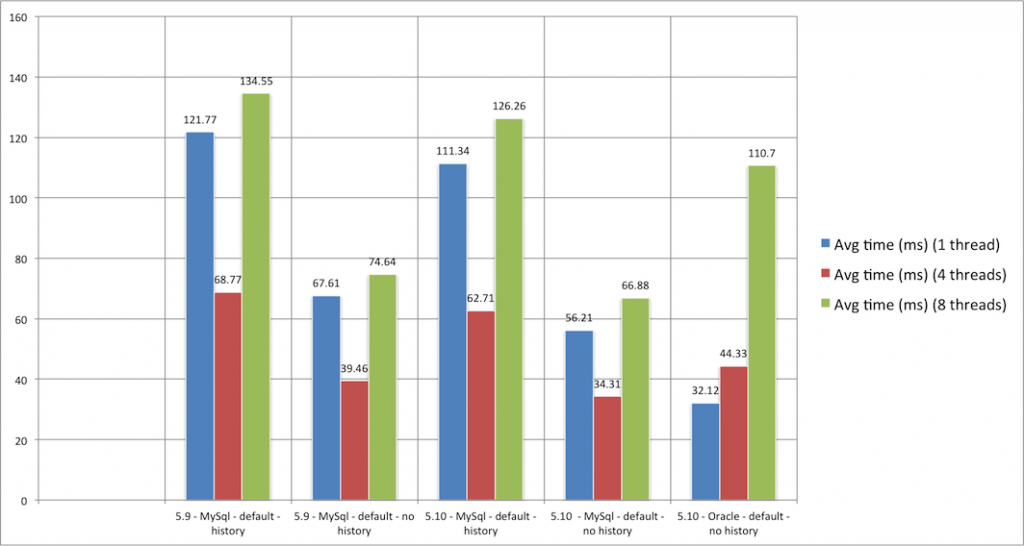

the second process here, with 7 user tasks:

the second chart teaches us that, logically, more transactions means more time. in the best case here the process is done in 32.12 ms. that is for seven transactions, which gives 4.6 ms for each transaction. so it is clear that the average time scales linearly when adding wait states. this of course makes sense, because transactions aren’t free.

also note that enabling history does add quite some overhead here. this is due to having the history level set to ‘audit’, which stores all the user task information in the history tables. this is also noticeable from the difference between activiti 5.9 with history disabled and activiti 5.10 with history enabled: this is a rare case where activiti 5.10 with history enabled is slower than 5.9 with history disabled. but it is logical, given the volume of history stored here.

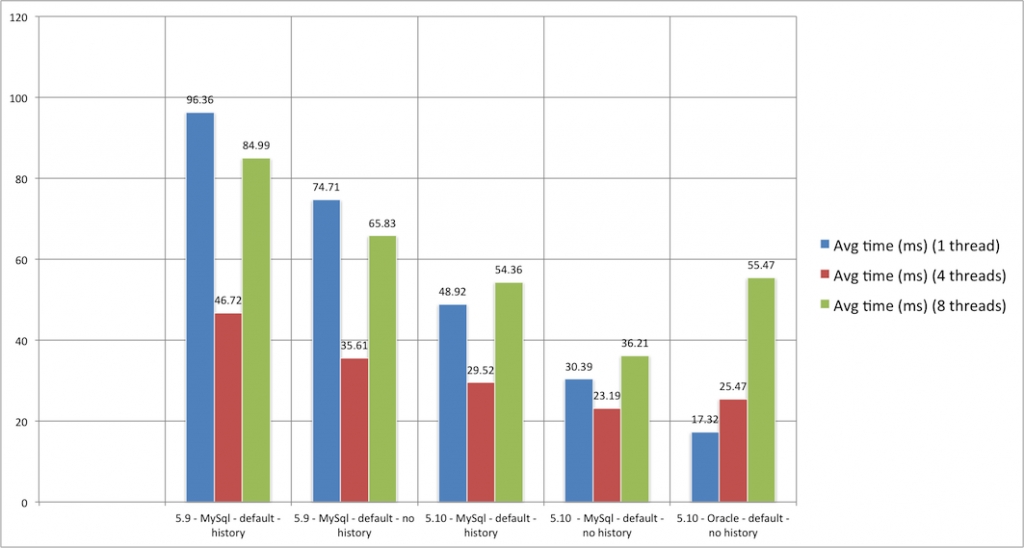

and the third process teaches us how user tasks and parallel gateways interact:

the third chart doesn’t teach us much that is new. we have two user tasks now, and the more ‘expensive’ fork/join (see above). the average timings are as we expected.

the throughput charts are as you would expect given the average timings. between 70 and 250 processes per second. aw yeah!

to save some space, you’ll need to click them to enlarge:

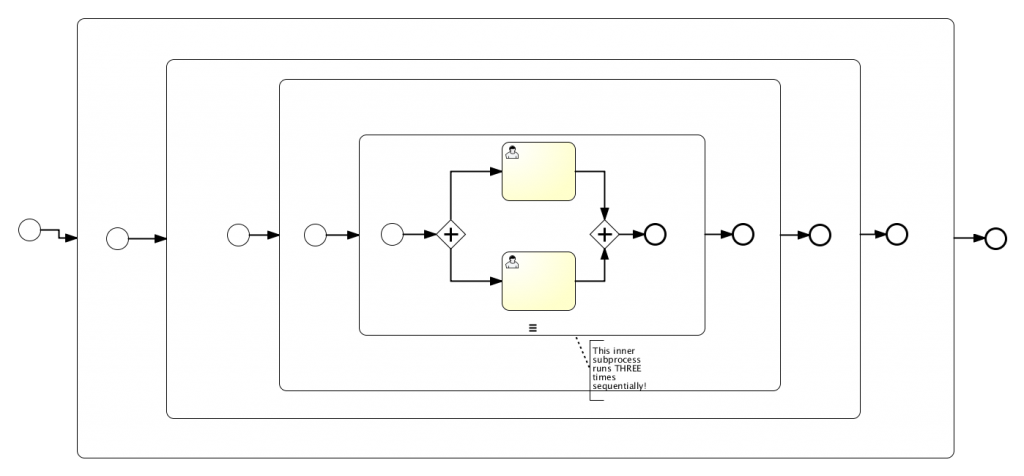

process 9: so what about scopes?

for the last process, we’ll take a look at ‘scopes’. a ‘scope’ is what we call it internally in the engine, and it has to do with variable visibility, relationships between the pointers indicating process state, event catching, etc. bpmn 2.0 has quite a few cases for those scopes, for example with embedded subprocesses as shown in the process here. basically, every subprocess can have boundary events (catching an error, a message, etc) that are only applied to its internal activities when its scope is active. without going into too much technical detail: to get scopes implemented in the correct way, you need some not so trivial logic.
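the variable visibility part of scopes can be illustrated with a small sketch. it only covers that one aspect (not event catching or execution pointers) and is not the engine’s code: the class `ScopeSketch` and its methods are made up for illustration.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical model of nested scopes; covers variable visibility only.
class ScopeSketch {
    private final ScopeSketch parent; // null for the outermost (process) scope
    private final Map<String, Object> variables = new HashMap<>();

    ScopeSketch(ScopeSketch parent) {
        this.parent = parent;
    }

    /** Sets a variable that is visible in this scope and in scopes nested inside it. */
    void setLocal(String name, Object value) {
        variables.put(name, value);
    }

    /** Looks in this scope first, then walks outwards through the enclosing scopes. */
    Object get(String name) {
        for (ScopeSketch scope = this; scope != null; scope = scope.parent) {
            if (scope.variables.containsKey(name)) {
                return scope.variables.get(name);
            }
        }
        return null; // not visible from here
    }

    public static void main(String[] args) {
        ScopeSketch process = new ScopeSketch(null);
        ScopeSketch subprocess = new ScopeSketch(process);
        process.setLocal("customer", "acme");
        subprocess.setLocal("attempt", 1);
        System.out.println(subprocess.get("customer")); // acme: inner scopes see outer variables
        System.out.println(process.get("attempt"));     // null: outer scopes do not see inner ones
    }
}
```

every nested subprocess adds one more link to such a chain, which hints at why deeply nested scopes create many objects to persist and clean up.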

the example process here has 4 subprocesses, nested in each other. the inner process is using concurrency, which is again a scope in itself for the activiti engine. there are also two user tasks here, so that means two transactions. so let’s see how it performs:

you can clearly see the big difference between activiti 5.9 and 5.10. scopes are indeed an area where the fixes around the ‘process cleanup’ at the end have a huge benefit, as many execution objects are created and persisted to represent the many different scopes. single threaded performance is not so good on activiti 5.9. luckily, as you can see from the gap between the blue and the red bars, those scopes do allow for high concurrency.

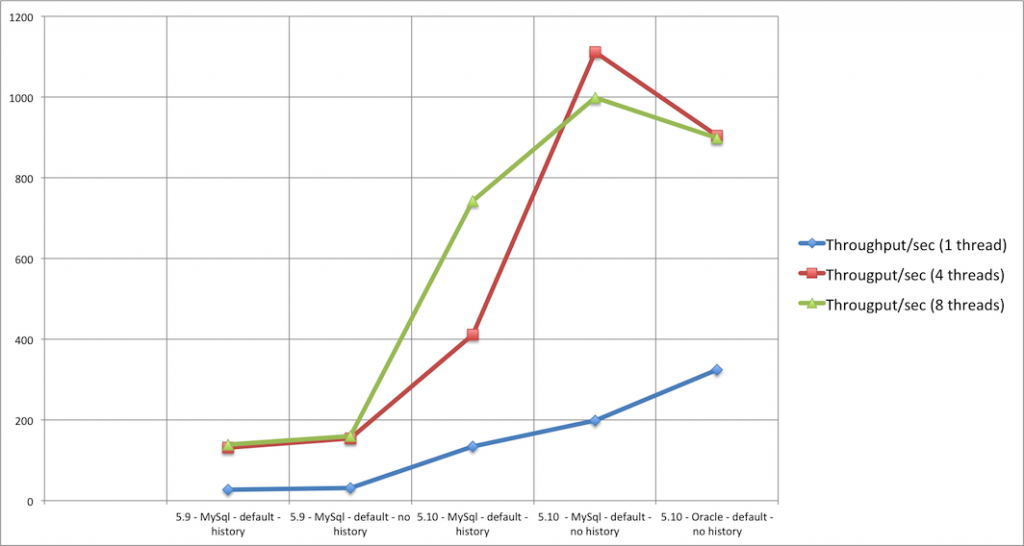

the numbers of oracle, combined with the multi-threaded results of the 5.10 tests, do prove that scopes are now efficiently handled by the engine. the throughput charts prove that the process scales nicely with more threads, as you can see by the big gap between the red and green line in the second last block. in the best case, 64 instances of this more complex process are handled by the engine per second.

random execution

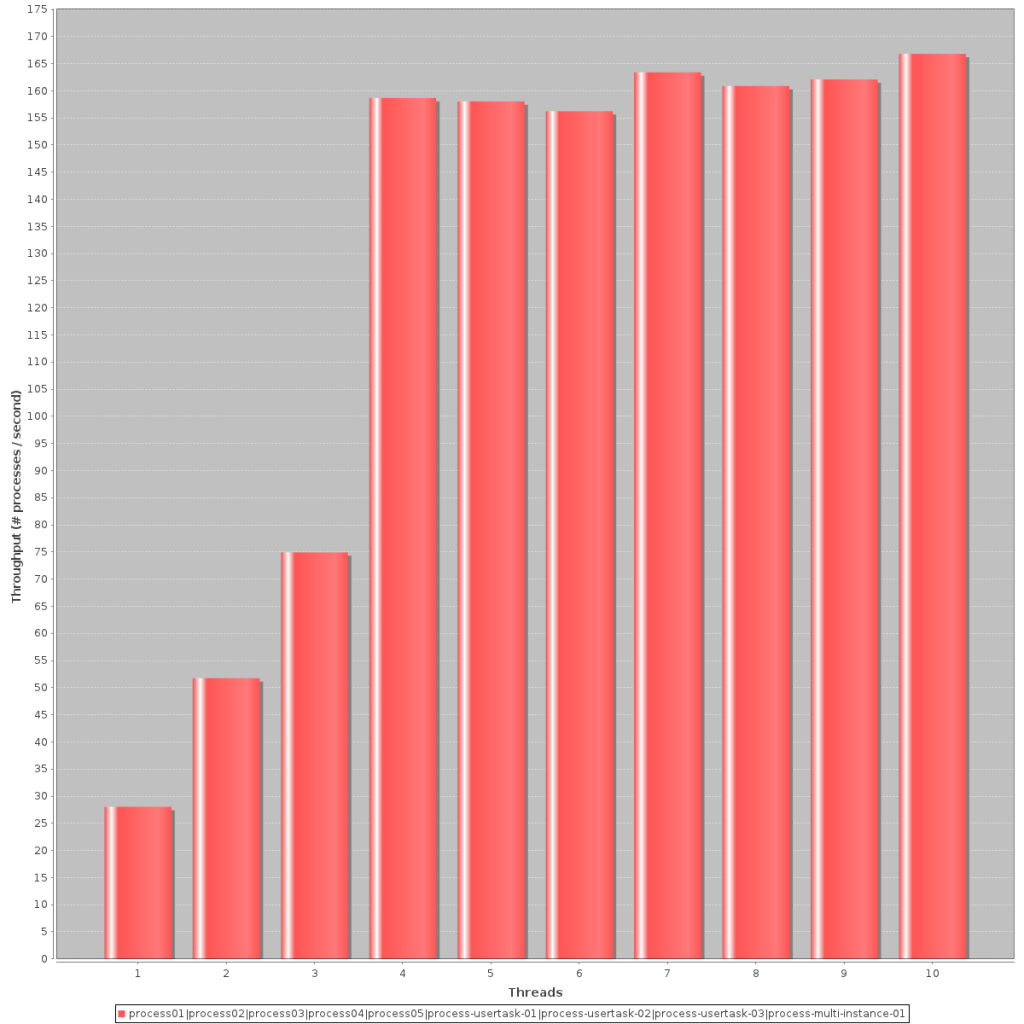

if you have already clicked on the full reports at the beginning of the post, you have probably noticed that random execution is also tested for each environment. in this setting, 2500 process executions were done, but the process was randomly chosen. as shown in those reports, this meant that over 2500 executions, each process was executed almost the same number of times (a roughly uniform distribution).
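drawing from the bag can be sketched like this. it is a minimal sketch, assuming each draw picks one of the 9 test processes with equal probability; the class `RandomBagSketch` and its `draw` method are made up for illustration and are not the benchmark project’s code.

```java
import java.util.Arrays;
import java.util.Random;

// Hypothetical sketch of drawing random process executions from a bag.
class RandomBagSketch {

    /** Draws 'executions' times from 'processCount' processes and counts how often each was picked. */
    static int[] draw(int processCount, int executions, Random random) {
        int[] counts = new int[processCount];
        for (int i = 0; i < executions; i++) {
            counts[random.nextInt(processCount)]++; // every process is equally likely
        }
        return counts;
    }

    public static void main(String[] args) {
        int[] counts = draw(9, 2500, new Random());
        // With 9 processes and 2500 draws, each count should land near 2500 / 9, about 278.
        System.out.println(Arrays.toString(counts));
    }
}
```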

this last chart shows the best setting (activiti 5.10, history disabled) and how the throughput of those random process executions goes when adding more threads:

as we’ve seen in many of the tests above, once past four threads things don’t change that much anymore. the numbers (167 processes/second) prove that in a realistic situation (ie. multiple processes executing at the same time), the activiti engine scales up nicely.

conclusion

the average timing charts show two things clearly:

- the activiti engine is fast and overhead is minimal !

- the difference between history enabled or disabled is definitely noticeable. sometimes it even comes down to half the time needed. all history tests were done using the ‘audit’ level, but there is a simpler history level (‘activity’) which might be good enough for the use case. activiti is very flexible in history configuration, and you can tweak the history level for each process specifically. so do think about the level your process needs to have, if it needs to have history at all!

the throughput charts prove that the engine scales very well when more threads are available (ie. any modern application server). activiti is well designed to be used in high-throughput and availability (clustered) architectures .

as i said in the introduction, the numbers are what they are: just numbers. my main point which i want to conclude here, is that the activiti engine is extremely lightweight. the overhead of using activiti for automating your business processes is small. in general, if you need to automate your business processes or workflows, you want top-notch integration with any java system and you like all of that fast and scalable … look no further!

Published at DZone with permission of . See the original article here.
