Extractive Summarization With LLM Using BERT

Extractive summarization is a prominent technique in the field of NLP. Learn how you can pull key sentences out of a corpus of text using BERT Summarization.

Join the DZone community and get the full member experience.

Join For FreeIn today's fast-paced world, we're bombarded with more information than we can handle. We’re increasingly getting used to receiving more information in less time, leading to frustration when having to read extensive documents or books. That's where extractive summarization steps in. To get to the heart of a text, the process pulls out key sentences from an article, piece, or page to give us a snapshot of its most important points.

For anyone needing to understand big documents without reading every word, this is a game changer.

In this article, we delve into the fundamentals and applications of extractive summarization. We'll examine the role of Large Language Models, especially BERT (Bidirectional Encoder Representations from Transformers), in enhancing the process. The article will also include a hands-on tutorial on using BERT for extractive summarization, showcasing its practicality in condensing large text volumes into informative summaries.

Understanding Extractive Summarization

Extractive summarization is a prominent technique in the field of natural language processing (NLP) and text analysis. With it, key sentences or phrases are carefully selected from the original text and combined to create a concise and informative summary. This involves meticulously sifting through text to identify the most crucial elements and central ideas or arguments presented in the selected piece.

Where abstractive summarization involves generating entirely new sentences often not present in the source material, extractive summarization sticks to the original text. It doesn’t alter or paraphrase but instead extracts the sentences exactly as they appear, maintaining the original wording and structure. This way, the summary stays true to the source material's tone and content. The technique of extractive summarization is extremely beneficial in instances where the accuracy of the information and the preservation of the author's original intent are a priority.

It has many different uses, like summarizing news articles, academic papers, or lengthy reports. The process effectively conveys the original content's message without potential biases or reinterpretations that can occur with paraphrasing.

How Does Extractive Summarization Use LLMs?

1. Text Parsing

This initial step involves breaking down the text into its essential elements, primarily sentences and phrases. The goal is to identify the basic units (sentences, in this context) the algorithm will later evaluate to include in a summary, like dissecting a text to understand its structure and individual components.

For instance, the model would analyze the four-sentence paragraph by breaking it down into the following four-sentence components.

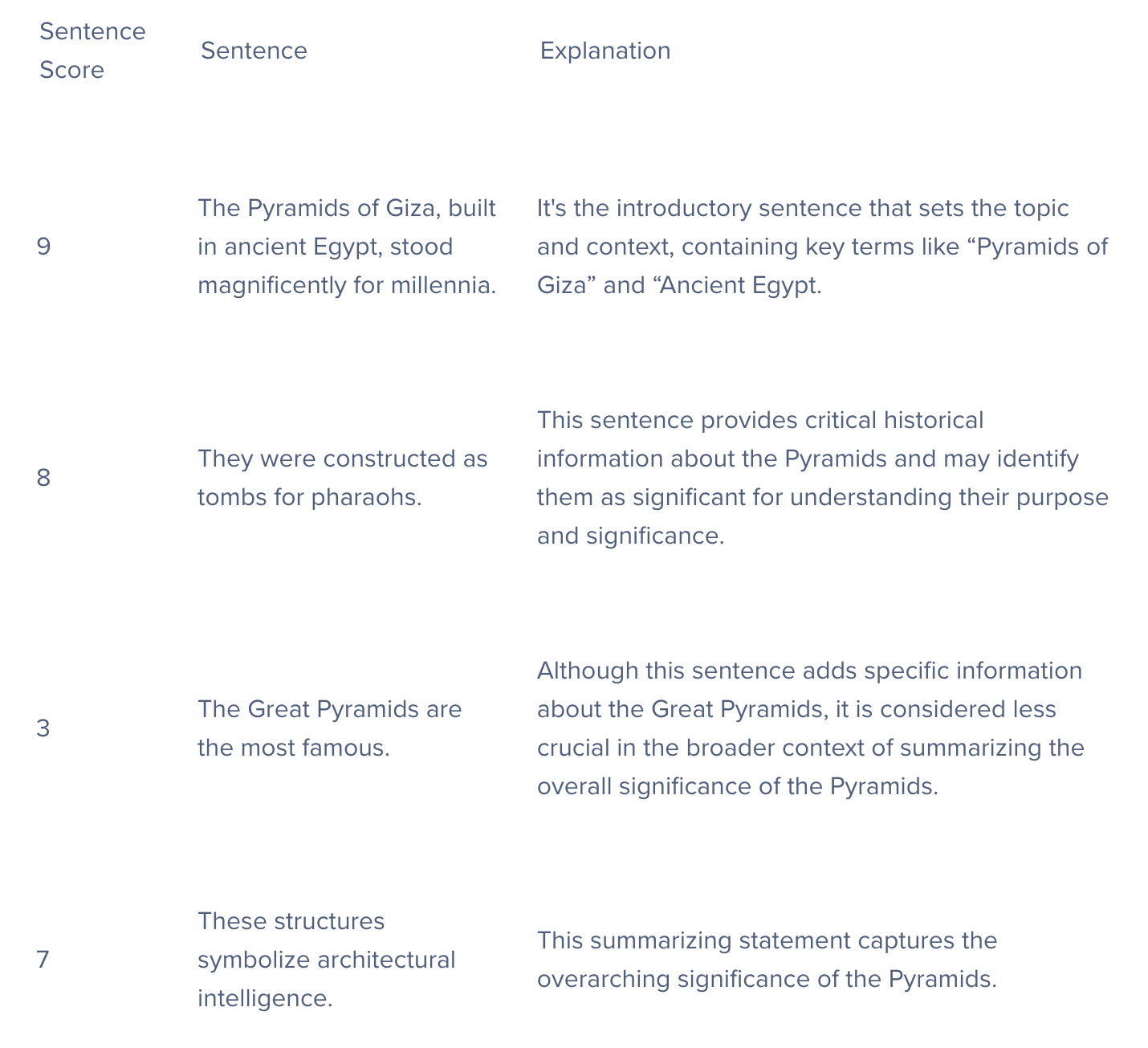

- The Pyramids of Giza, built in ancient Egypt, stood magnificently for millennia.

- They were constructed as tombs for pharaohs.

- The Great Pyramids are the most famous.

- These structures symbolize architectural intelligence.

2. Feature Extraction

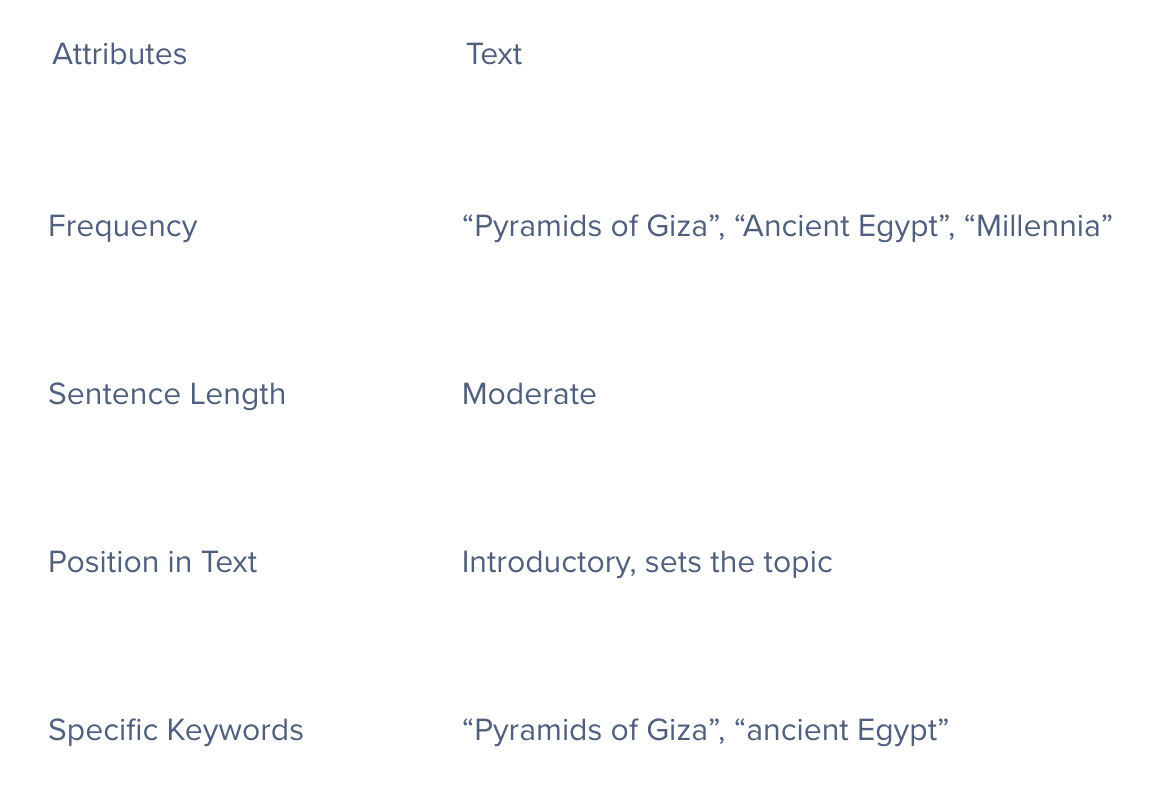

In this stage, the algorithm analyzes each sentence to identify characteristics or 'features' that might indicate what their significance is to the overall text. Common features include the frequency and repeat use of keywords and phrases, the length of sentences, their position in the text and its implications, and the presence of specific keywords or phrases that are central to the text's main topic.

Below is an example of how the LLM would do feature extraction for the first sentence: “The Pyramids of Giza, built in ancient Egypt, stand magnificently for millennia.”

3. Scoring Sentences

Each sentence is assigned a score based on its content. This score reflects a sentence’s perceived importance in the context of the entire text. Sentences that score higher are deemed to carry more weight or relevance.

Simply put, this process rates each sentence for its potential significance to a summary of the entire text.

4. Selection and Aggregation

The final phase involves selecting the highest-scoring sentences and compiling them into a summary. When carefully done, this ensures the summary remains coherent and is aggregately representative of the main ideas and themes of the original text.

To create an effective summary, the algorithm must balance the need to include important sentences that are concise, avoid redundancy, and ensure that the selected sentences provide a clear and comprehensive overview of the entire original text.

- The Pyramids of Giza, built in Ancient Egypt, stood magnificently for millennia. They were constructed as tombs for pharaohs. These structures symbolize architectural brilliance.

This example is extremely basic, extracting 3 of the total 4 sentences for the best overall summarization. Reading an extra sentence doesn't hurt, but what happens when the text is longer? Let's say, 3 paragraphs?

How To Run Extractive Summarization With BERT LLMs

Step 1: Installing and Importing Necessary Packages

We will be leveraging the pre-trained BERT model. However, we won't be using just any BERT model; instead, we'll focus on the BERT Extractive Summarizer. This particular model has been finely tuned for specialized tasks in extractive summarization.

!pip install bert-extractive-summarizer from summarizer import Summarizer

Step 2

The Summarizer() function imported from the summarizer in Python is an extractive text summarization tool. It uses the BERT model to analyze and extract key sentences from a larger text. This function aims to retain the most important information, providing a condensed version of the original content. It's commonly used to summarize lengthy documents efficiently.

model = Summarizer()

Step 3: Importing Our Text

Here, we will import any piece of text that we would like to test our model on. To test our extractive summary model, we generated text using ChatGPT 3.5 with the prompt: “Provide a 3-paragraph summary of the history of GPUs and how they are used today.”

text = "The history of Graphics Processing Units (GPUs) dates back to the early 1980s when companies like IBM and Texas Instruments developed specialized graphics accelerators for rendering images and improving overall graphical performance. However, it was not until the late 1990s and early 2000s that GPUs gained prominence with the advent of 3D gaming and multimedia applications. NVIDIA's GeForce 256, released in 1999, is often considered the first GPU, as it integrated both 2D and 3D acceleration on a single chip. ATI (later acquired by AMD) also played a significant role in the development of GPUs during this period. The parallel architecture of GPUs, with thousands of cores, allows them to handle multiple computations simultaneously, making them well-suited for tasks that require massive parallelism. Today, GPUs have evolved far beyond their original graphics-centric purpose, now widely used for parallel processing tasks in various fields, such as scientific simulations, artificial intelligence, and machine learning. Industries like finance, healthcare, and automotive engineering leverage GPUs for complex data analysis, medical imaging, and autonomous vehicle development, showcasing their versatility beyond traditional graphical applications. With advancements in technology, modern GPUs continue to push the boundaries of computational power, enabling breakthroughs in diverse fields through parallel computing. GPUs also remain integral to the gaming industry, providing immersive and realistic graphics for video games where high-performance GPUs enhance visual experiences and support demanding game graphics. As technology progresses, GPUs are expected to play an even more critical role in shaping the future of computing."

Here's that text without it inside the code block:

"The history of Graphics Processing Units (GPUs) dates back to the early 1980s when companies like IBM and Texas Instruments developed specialized graphics accelerators for rendering images and improving overall graphical performance. However, it was not until the late 1990s and early 2000s that GPUs gained prominence with the advent of 3D gaming and multimedia applications. NVIDIA's GeForce 256, released in 1999, is often considered the first GPU, as it integrated both 2D and 3D acceleration on a single chip. ATI (later acquired by AMD) also played a significant role in the development of GPUs during this period.

The parallel architecture of GPUs, with thousands of cores, allows them to handle multiple computations simultaneously, making them well-suited for tasks that require massive parallelism. Today, GPUs have evolved far beyond their original graphics-centric purpose and are now widely used for parallel processing tasks in various fields, such as scientific simulations, artificial intelligence, and machine learning. Industries like finance, healthcare, and automotive engineering leverage GPUs for complex data analysis, medical imaging, and autonomous vehicle development, showcasing their versatility beyond traditional graphical applications.

With advancements in technology, modern GPUs continue to push the boundaries of computational power, enabling breakthroughs in diverse fields through parallel computing. GPUs also remain integral to the gaming industry, providing immersive and realistic graphics for video games where high-performance GPUs enhance visual experiences and support demanding game graphics. As technology progresses, GPUs are expected to play an even more critical role in shaping the future of computing."

Step 4: Performing Extractive Summarization

Finally, we'll execute our summarization function. This function requires two inputs: the text to be summarized and the desired number of sentences for the summary. After processing, it will generate an extractive summary, which we will then display.

# Specifying the number of sentences in the summary summary = model(text, num_sentences=4) print(summary)

Extractive Summary Output

The history of Graphics Processing Units (GPUs) dates back to the early 1980s when companies like IBM and Texas Instruments developed specialized graphics accelerators for rendering images and improving overall graphical performance. NVIDIA's GeForce 256, released in 1999, is often considered the first GPU, as it integrated both 2D and 3D acceleration on a single chip. Today, GPUs have evolved far beyond their original graphics-centric purpose and are now widely used for parallel processing tasks in various fields, such as scientific simulations, artificial intelligence, and machine learning. As technology progresses, GPUs are expected to play an even more critical role in shaping the future of computing.

Our model pulled the 4 most important sentences from our large corpus of text to generate this summary!

Challenges of Extractive Summarization Using LLMs

Contextual Understanding Limitations

While LLMs are proficient in processing and generating language, their understanding of context, especially in longer texts, is limited. LLMs can miss subtle nuances or fail to recognize critical aspects of the text, leading to less accurate or relevant summaries. The more advanced the language model, the better the summary will be.

Bias in Training Data

LLMs learn from vast datasets compiled from various sources, including the internet. These datasets can contain biases, which the models might inadvertently learn and replicate in their summaries, leading to skewed or unfair representations.

Handling Specialized or Technical Language

While LLMs are generally trained on a wide range of general texts, they may not accurately capture specialized or technical language in fields like law, medicine, or other highly technical fields. This can be alleviated by feeding it more specialized and technical text. Lack of training in specialized jargon can affect the quality of summaries when used in these fields.

Conclusion

It's clear that extractive summarization is more than just a handy tool; it's a growing necessity in our information-saturated age, where we’re inundated with walls of text every day. By harnessing the power of technologies like BERT, we can see how complex texts can be distilled into digestible summaries, saving us time and helping us to comprehend the texts being summarized further.

Whether for academic research, business insights, or just staying informed in a technologically advanced world, extractive summarization is a practical way to navigate the sea of information we’re surrounded by. As natural language processing continues to evolve, tools like extractive summarization will become even more essential, helping us to quickly find and understand the information that matters most in a world where every minute counts.

Published at DZone with permission of Kevin Vu. See the original article here.

Opinions expressed by DZone contributors are their own.

Comments