Forging a NoSQL Database

This post provides an inside account of how user feedback helped RavenDB become a mature data solution.


RavenDB has been around for a little over a decade and is currently used by thousands of customers, including Fortune 500 companies like Toyota and Verizon, but it hasn’t always been smooth sailing. Like any other software, RavenDB has had its growing pains, particularly in the early days when the company was made up of just a handful of developers.

User feedback has always played an important role in our development. Many of RavenDB’s features were added due to popular demand, and our customers have helped us find countless obscure bugs by using our software in ways we never could have imagined.

Even negative feedback has helped us grow. Thanks in part to our critics, many of RavenDB’s weaknesses have turned into strengths.

“My attitude is that if you push me towards something that you think is a weakness, then I will turn that perceived weakness into a strength.” - Michael Jordan

Some of RavenDB’s most infamous reviews help tell the tale.

Data Backup and Restore Functionality

When Octopus switched to RavenDB, the feedback (in 2012) was very positive. The only complaint they had was with OS compatibility in the backup system. The system was quite bare-bones at the time and there was a lot of room for improvement.

Backups are now performed automatically at user-defined intervals and can be restored regardless of OS. There are also further disaster recovery options to protect your data which we’ll get into later.

However, that article was just a prelude to this brutal follow-up in 2014.

Improving Database Usability, Documentation, and Learning Resources

The Octopus team found that RavenDB was highly opinionated and worked in mysterious ways. It was easy to make mistakes, there was little in the way of guidance or explanation, and documentation was sadly lacking. To make matters worse, most companies didn’t have RavenDB experts on staff to handle problems when they arose.

Lack of experience/expertise is a challenge for any new technology, and NoSQL databases are still (relatively) new compared to their long-dominant relational counterparts. The solution is to make the software intuitive, and easy to learn and use.

At the time of these posts, we weren’t doing a good job of that and Paul's criticisms weren’t unique. In response to our users’ feedback, we went all out to turn things around.

We created the RavenDB studio: a GUI that lets you monitor all aspects of the database and perform most functions just by pointing and clicking.

We added a wealth of documentation, and currently have several employees working full time adding and updating content. Our CEO Oren Eini (also known as Ayende) wrote a whole (free) book called Inside RavenDB 4.0, which provides a comprehensive explanation of RavenDB and its underlying design philosophy. We also created a bootcamp program to help new users get started, so there’s no shortage of quality learning material.

Updating the “Safe By Default” Approach

Much of the Octopus team’s confusion and frustration was caused by RavenDB’s “safe by default” policy. It was a design philosophy aimed at preventing developers from “hurting themselves” with bad code.

Queries per session were limited and result sets were bounded, and though the limits were reasonable, they weren’t made apparent. Exceeding the limits didn’t cause an error or notify the user: the request would simply fail quietly. This led to occasions where code would work differently in development than it would in production, with no clear reason why.

There was negative feedback from multiple sources regarding this and it led to a review of our “safe by default” approach.

Result sets were originally limited to 128, but are now unrestricted. It’s now up to the user to code responsibly and use paging to keep result sets small and avoid performance loss.
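As a rough illustration of the paging pattern described above, here is a minimal Python sketch. It does not use the RavenDB client: `fetch_page` is a hypothetical stand-in for a paged query against an in-memory list, and the page size of 128 simply echoes the old limit.

```python
from typing import Iterator, List, Sequence


def fetch_page(documents: Sequence[dict], skip: int, take: int) -> List[dict]:
    """Hypothetical stand-in for a paged query: at most `take` items after `skip`."""
    return list(documents[skip:skip + take])


def iterate_in_pages(documents: Sequence[dict], page_size: int = 128) -> Iterator[List[dict]]:
    """Yield successive pages until an empty page signals the end of the results."""
    skip = 0
    while True:
        page = fetch_page(documents, skip, page_size)
        if not page:
            break
        yield page
        skip += len(page)


# 300 fake documents are read in three bounded chunks instead of one large result set.
orders = [{"id": f"orders/{i}"} for i in range(300)]
page_sizes = [len(page) for page in iterate_in_pages(orders, page_size=128)]
print(page_sizes)
```

Each request stays small and predictable, which is the point of paging: memory use and response time depend on the page size rather than on the total number of matching documents.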

Queries were limited to 30 per session because with more than that you’re effectively performing a DOS attack on yourself. Lazy requests allow you to combine multiple calls into 1, so to get to 30 would take some very unusual (or just plain bad) code. You can now adjust this limit (from the default 30) for those unusual cases.
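To make the idea concrete, the sketch below models a session that counts round trips and batches deferred loads into one call. Everything here is hypothetical: `ToySession`, `lazy_load`, and `execute_pending` are illustrative names, not the RavenDB client API, and raising an exception when the limit is hit is a choice made for this sketch.

```python
class RequestLimitExceeded(Exception):
    """Raised when a session makes more round trips than allowed."""


class ToySession:
    """Illustrative stand-in for a database session (hypothetical, not the RavenDB client)."""

    def __init__(self, store, max_requests=30):
        self._store = store
        self.max_requests = max_requests  # adjustable, defaulting to 30
        self.request_count = 0
        self._pending = []

    def _round_trip(self):
        # Every call that reaches the "server" counts against the limit.
        if self.request_count >= self.max_requests:
            raise RequestLimitExceeded(
                f"more than {self.max_requests} requests in one session"
            )
        self.request_count += 1

    def load(self, doc_id):
        """Eager load: one round trip per document."""
        self._round_trip()
        return self._store.get(doc_id)

    def lazy_load(self, doc_id):
        """Register a load without contacting the server yet."""
        self._pending.append(doc_id)

    def execute_pending(self):
        """Send all registered loads in a single round trip."""
        self._round_trip()
        results = {doc_id: self._store.get(doc_id) for doc_id in self._pending}
        self._pending.clear()
        return results


store = {f"users/{i}": {"number": i} for i in range(40)}

lazy_session = ToySession(store)
for i in range(40):
    lazy_session.lazy_load(f"users/{i}")
users = lazy_session.execute_pending()  # 40 documents, 1 request
```

Loading the same 40 documents eagerly through `load` would hit the limit on the 31st call, while the batched version uses a single request.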

MapReduce results were limited to 15 per item mapped due to the difficulty of reserving memory for fanout indexes. We have since removed that limit, but users will now receive a performance notification if more than 1024 results will be produced from a single document.

In short, thanks to user feedback, RavenDB is a lot less opinionated than it used to be, and functions more in line with user expectations.

Before getting to the rest of Paul’s criticisms, we need to explain the biggest fundamental change RavenDB has gone through since the time of his post.

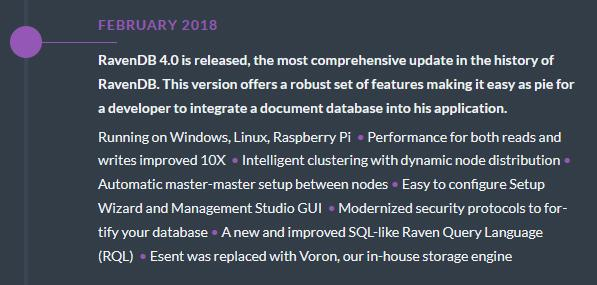

RavenDB 4.0: A Giant Leap Forward

Many problems customers had with RavenDB early on were simply too fundamental to fix with small patches. We needed a massive update to completely overhaul RavenDB’s core systems, and 4.0 was our opportunity.

Our company went through a lot in preparation for 4.0: the number of developers on staff doubled within a year and 80% of the codebase was rewritten from the ground up.

And then, RavenDB took flight.

(You can see the whole Development Roadmap here.)

Finally, we could deliver on the improvements our users had long been waiting for.

Now with that context, let’s get back to Paul and Octopus. The last item on the list above relates to Paul’s comments about ESENT-related indexing errors and poor performance.

Database Indexes and the Voron Storage Engine

We weren’t happy with ESENT either, but we were stuck with it. It was flaky, sensitive, and only worked on Windows. Unfortunately, it was also the basis for all of RavenDB’s storage and indexing.

4.0 is where we made our break. We switched from .NET Framework to .NET Core to go beyond Windows and we introduced our own custom-made data storage engine: Voron.

Voron is much more stable and reliable than ESENT and is highly optimized for our database model. Its introduction resulted in speeds increasing by orders of magnitude. With indexing rebuilt on this ideal foundation, it became one of the highlights of RavenDB.

Clarifying Eventual Consistency

On the subject of indexing, in his article “Would I use RavenDB again?,” Jeremy Miller talks about the problems of eventual consistency. Any time data is changed, there’s a wait before associated (and now stale) indexes are updated to be consistent with the raw data. If you query an index that hasn’t been updated, you may receive out-of-date information.

In RavenDB, queries must be performed against indexes. You cannot query raw data. This rule exists for performance’s sake, since querying an index is much faster, but what about stale indexes?

We don't actually let indexes stay stale long (they’re updated after 5ms) and in most cases, they present no problems. However, in situations where consistency is critical, RavenDB has the answers – you can check for stale reads or make queries wait for indexes to be updated.
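The two options above (checking for staleness, or waiting for the index to catch up) can be pictured with a small toy model. This is a sketch under stated assumptions: `ToyStore` and its methods are hypothetical and deliberately single-threaded, and the real server indexes in the background rather than on demand.

```python
class ToyStore:
    """Toy document store whose index lags behind writes (hypothetical model)."""

    def __init__(self):
        self.documents = {}
        self.index = {}      # city -> set of document ids
        self._pending = []   # ids written but not yet indexed

    def put(self, doc_id, doc):
        # The document is stored immediately; the index is updated later.
        self.documents[doc_id] = doc
        self._pending.append(doc_id)

    def run_indexing(self):
        """Bring the index up to date with every pending write."""
        for doc_id in self._pending:
            for ids in self.index.values():
                ids.discard(doc_id)  # drop any outdated entry for this document
            city = self.documents[doc_id]["city"]
            self.index.setdefault(city, set()).add(doc_id)
        self._pending.clear()

    def query(self, city, wait_for_non_stale=False):
        """Return (matching ids, is_stale). Optionally wait for indexing first."""
        if wait_for_non_stale:
            self.run_indexing()
        is_stale = bool(self._pending)
        return sorted(self.index.get(city, set())), is_stale


db = ToyStore()
db.put("users/1", {"city": "Oslo"})

stale_result = db.query("Oslo")                           # index has not caught up
fresh_result = db.query("Oslo", wait_for_non_stale=True)  # waits, then answers
print(stale_result, fresh_result)
```

The first query returns quickly but flags its answer as stale; the second trades a little latency for an up-to-date result, which is the choice to make only where consistency is critical.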

While we haven’t changed our approach to eventual consistency, we’ve made it much clearer with our introductory and learning materials.

MapReduce Customization

In his post “Migrating from RavenDB to Cassandra,” Aaron Stannard takes issue with the way RavenDB implements MapReduce, with these two complaints:

RavenDB’s MapReduce system required re-aggregation “which works great at low volumes but scales at an inverted ratio to data growth.”

This is a little confusing. RavenDB performs incremental aggregation and has never required re-aggregation. As a result, performance is excellent regardless of data growth.
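To show the difference between the two approaches, here is a simplified Python sketch of incremental aggregation. It is an illustration only, not RavenDB's actual implementation: `IncrementalTotals` is a hypothetical class that keeps per-key totals and adjusts them when a single document changes, instead of recomputing over every document.

```python
from collections import defaultdict


class IncrementalTotals:
    """Keeps a running total per key, updated one document at a time."""

    def __init__(self):
        self._mapped = {}               # doc_id -> (key, amount) from the map step
        self.totals = defaultdict(int)  # key -> reduced total

    def upsert(self, doc_id, key, amount):
        """Apply a new or changed document: undo its old contribution, add the new one."""
        self.remove(doc_id)
        self._mapped[doc_id] = (key, amount)
        self.totals[key] += amount

    def remove(self, doc_id):
        """Withdraw a document's contribution, if it had one."""
        old = self._mapped.pop(doc_id, None)
        if old is not None:
            old_key, old_amount = old
            self.totals[old_key] -= old_amount


totals = IncrementalTotals()
totals.upsert("orders/1", "acme", 100)
totals.upsert("orders/2", "acme", 50)
totals.upsert("orders/3", "globex", 70)

# Changing one order touches only that order's entry, however many orders exist.
totals.upsert("orders/2", "acme", 80)
print(dict(totals.totals))
```

The cost of an update depends on the documents that changed rather than on the size of the data set, which is why this style of aggregation holds up as data grows.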

The MapReduce pipeline was too simplistic, which prevented them from performing more in-depth custom queries.

This criticism had merit, and we responded by opening the door for user customization. Now you can easily add your own code to RavenDB’s map/reduce pipeline and make it do whatever you want.

We’re actually really proud of our MapReduce system as a whole, so we created an entire webinar devoted to it.

What’s Happening With Sharding?

Aaron also had some very valid criticism of RavenDB’s sharding system:

“Raven’s sharding system is really more of a hack at the client level which marries your network topology to your data, which is a really bad design choice...”

Aaron was right on the money – it wasn’t good. We decided to abandon our client-side simulation of sharding and are now working to introduce true sharding in our next major update: Version 6.0.

Introducing In-Memory Testing

Another issue raised by Jeremy Miller was RavenDB’s lack of in-memory testing. At the time this was a gap in our feature set, one we filled with a more than adequate solution.

Like other systems, RavenDB now provides a fully in-memory mode for database testing. It’s blazingly fast, you can set it up with 1 line of code, and you can use it for automated testing in a CI/CD pipeline.

Unlike other systems*, the in-memory version will behave identically to how your database does in production, ensuring your results are always valid.

*In Microsoft’s documentation for Entity Framework:

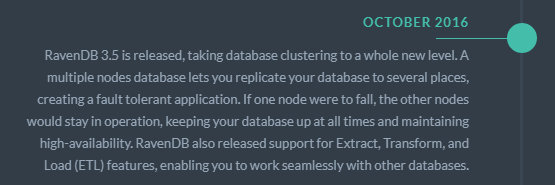

Database High-Availability and Disaster Recovery

In his post, “Initial thoughts on Octopus Deploy 3.0 – from RavenDB back to SQL Server,“ Ian Paullin talks about his organization’s lack of HA/DR solutions with RavenDB.

These were serious issues and we dealt with them accordingly.

As the notes say, clustering provides great fault tolerance. Something they don’t mention is that RavenDB makes clusters extremely easy to set up and manage.

RavenDB is best run on clusters of at least 3 nodes; that way, if one or two nodes fail, your database will still stay up and running. Automatic replication and assignment failover protect your data, while features like load balancing and concurrent reads and writes provide significant performance benefits.

RavenDB also uses hashing to keep an eye out for data corruption. If corruption is detected on a node, you can replace the affected hard disk and replicate your database back to that node.
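The general idea behind hash-based corruption checks can be sketched in a few lines of Python. This is an assumption-laden illustration: the page layout, the function names, and the choice of SHA-256 are all hypothetical and say nothing about the algorithm Voron actually uses.

```python
import hashlib


def write_page(data: bytes) -> dict:
    """Store data alongside a checksum computed at write time."""
    return {"data": data, "checksum": hashlib.sha256(data).hexdigest()}


def is_intact(page: dict) -> bool:
    """Recompute the checksum on read and compare it with the stored one."""
    return hashlib.sha256(page["data"]).hexdigest() == page["checksum"]


page = write_page(b"orders/1: 3 items")
healthy_before = is_intact(page)

# Simulate disk corruption: the bytes change but the stored checksum does not.
page["data"] = b"orders/1: 7 items"
healthy_after = is_intact(page)
print(healthy_before, healthy_after)
```

Once a mismatch like this is detected, the damaged copy can be discarded and rebuilt from a healthy replica.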

If you don’t have the data replicated to another node or backed up, the Voron recovery tool can still help you get it back.

More Programming Language Support

In his post “Thoughts on MongoDB vs Traditional SQL and RavenDB,” John Culviner noted that RavenDB "suffered from bugs and a sub-par CLI/querying API (unless you were using C#)."

This was a valid criticism at the time. Early in development, we focused primarily on C#, and support for other languages lagged behind.

We’ve always received a lot of feedback on this issue, and we’re always trying to accommodate. At the time of writing we have clients for C#, Java, Node.js, Python, Ruby, and Go.

The Post-4.0 Era

Negative articles and reviews are a lot harder to find after the release of 4.0. The most significant critique comes from long-term user Alex Klause in his post titled “RavenDB: Two Years of Pain and Joy.” Alex had a lot of good to say, but there were some disappointing negatives as well.

His first criticism was about a lack of documentation and community. It’s true that RavenDB doesn’t have the biggest community, though it has grown significantly since the time of the article. We offset this by providing extensive support on our community forums, through support packages available to our customers, and by providing comprehensive documentation and learning materials.

The next issue was the inexcusable number of (quickly fixed) bugs.

Unfortunately for Alex, he started using RavenDB shortly before our biggest set of changes ever. In the period he used RavenDB (v3.5 - v4.2), a set of old bugs was waiting to be squashed, only to be replaced in 4.0 by a whole new set just waiting to be discovered. It was a wild time, and things have settled down a lot since then. These days, bugs are far less common and far less significant.

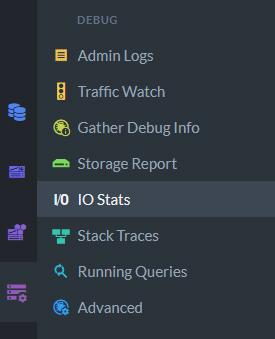

Profiling RavenDB

As Alex noted, and as is still the case, RavenDB doesn’t have a single dedicated profiler. Instead, it now has multiple profiling tools, all of which you can find and use inside the studio.

With these tools, you can monitor and debug virtually every aspect of your database’s operations in our intuitive and aesthetically pleasing GUI.


Raven Query Language (RQL)

Oren also addressed the comments about RQL, but the confusion is understandable. A good understanding of RQL requires a good explanation, and as mentioned our learning resources were lacking at the time. A great explanation can now be found in Oren’s book.

Published at DZone with permission of Chris Balnave. See the original article here.
