How to Optimize CPU Performance Through Isolation and System Tuning

Learn more about the challenges of tuning your CPU and system for optimal performance with Linux and how Chronicle Tune could do the work automatically for you.

CPU isolation and efficient system management are critical for any application that requires low-latency, high-performance computing. These measures are especially important for high-frequency trading systems, where split-second decisions on buying and selling stocks must be made. To achieve this level of performance, such systems require dedicated CPU cores that are free from interruptions by other processes, together with wider system tuning.

In modern production environments, there are numerous hardware and software hooks that can be adjusted to improve latency and throughput. However, finding the optimal settings for a system can be challenging as it requires navigating a multidimensional search space. To accomplish this efficiently, it is necessary to understand the tuning landscape and to use tools and strategies that facilitate effective changes.

Moreover, managing Java processes can be more difficult due to the number of auxiliary threads which are spawned by the JVM, even for logically single-threaded applications. The scheduling of these threads is critical to minimizing jitter and achieving optimal performance. In this article, we will explore the strengths and weaknesses of the standard solutions for controlling CPU isolation for low-latency applications under Linux and how we at Chronicle Software developed Chronicle Tune to address the inherent trade-offs of these solutions.

Using the isolcpus Linux Configuration

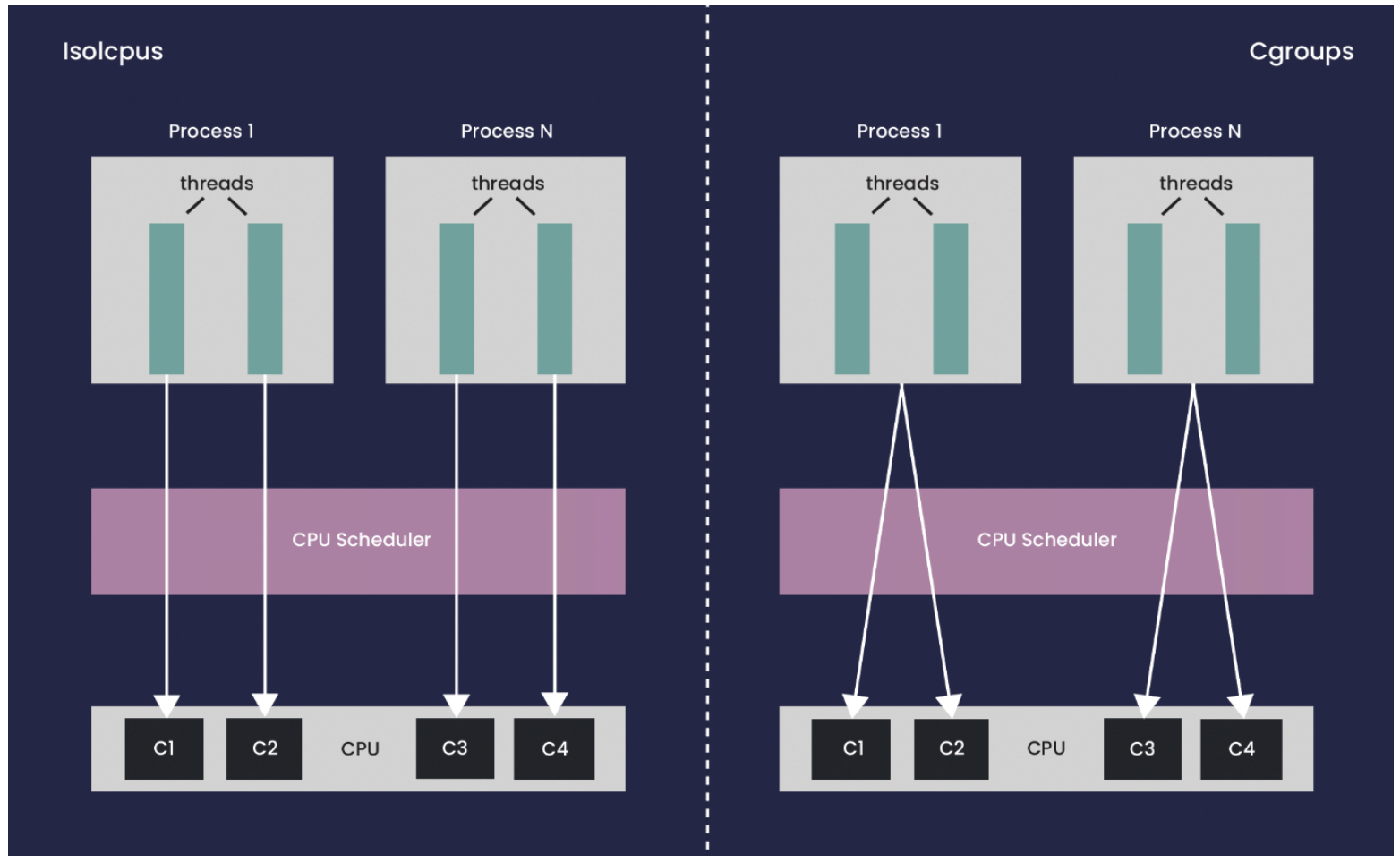

isolcpus is a Linux boot command-line option that allows an explicit list of CPUs to be excluded from consideration by the Linux scheduler. This option provides very effective isolation; however, the problem is that it does not respect CPU ranges. For example, when you use taskset or sched_setaffinity to specify a range of allowed CPUs for a pinned process, only the first CPU in the allowed range is utilized, regardless of the number of threads in the process. Thus, when controlling thread placement under isolcpus, every thread requires explicit management, and particular care must be taken to avoid scheduling conflicts from auxiliary and/or child threads. Another major disadvantage of isolcpus is that the configuration is fixed once a host has started, so changes to the configuration require a reboot of the system.
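
As a minimal sketch of the explicit per-thread management described above, the Python snippet below expands a kernel-style CPU list (the simple "2-5,8" form accepted by the isolcpus boot parameter) and pins the calling thread to a single CPU. The helper names parse_cpu_list, isolated_cpus, and pin_current_thread are illustrative, and the sysfs path is an assumption about where the host reports its isolated CPUs.

```python
import os
from pathlib import Path


def parse_cpu_list(text):
    """Expand a simple kernel CPU list such as '2-5,8' into [2, 3, 4, 5, 8]."""
    cpus = set()
    for part in text.strip().split(","):
        part = part.strip()
        if not part:
            continue  # an empty file means no CPUs are listed
        if "-" in part:
            lo, hi = part.split("-", 1)
            cpus.update(range(int(lo), int(hi) + 1))
        else:
            cpus.add(int(part))
    return sorted(cpus)


def isolated_cpus(path="/sys/devices/system/cpu/isolated"):
    """Return the CPUs reported as isolated, or [] if the file is unavailable."""
    try:
        return parse_cpu_list(Path(path).read_text())
    except OSError:
        return []


def pin_current_thread(cpu):
    """Pin the calling thread to exactly one CPU (Linux only)."""
    # A pid of 0 means "the calling thread"; a single-CPU set avoids relying
    # on how the scheduler treats a range of isolated CPUs.
    os.sched_setaffinity(0, {cpu})
```

A latency-sensitive thread would typically call pin_current_thread with one entry from isolated_cpus() at startup, with each further thread given its own CPU explicitly.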

Using Cgroups or Csets

An alternative that provides a similar level of isolation and allows for dynamically changing configuration is Linux cgroups. cgroups is a feature in the Linux kernel that enables administrators to limit, allocate, and prioritize system resources such as CPU, memory, disk I/O, and network bandwidth among processes or groups of processes. This can help prevent one application from monopolizing system resources, resulting in poor performance or instability.

csets is a utility that is used specifically to manage CPU affinity and placement for groups of tasks. By defining csets, administrators can assign specific CPUs or CPU cores to particular tasks or groups of tasks, ensuring that those tasks have dedicated CPU resources and minimizing interference from other tasks. This can be especially useful in high-performance computing environments, where minimizing contention and maximizing performance is critical. Both cgroups and csets enable specific cpuset groupings to be defined, with processes confined to run within one particular group.
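
To make the cpuset grouping concrete, here is a rough sketch that drives the kernel's cgroup filesystem directly from Python. It assumes a cgroup v2 unified hierarchy mounted at /sys/fs/cgroup and root privileges; the function names and the group name used in the note below are hypothetical, and the root parameter exists only so the logic can be exercised against a scratch directory.

```python
from pathlib import Path

# Assumption: cgroup v2 unified hierarchy at the conventional mount point.
CGROUP_ROOT = Path("/sys/fs/cgroup")


def create_cpuset_group(name, cpus, root=CGROUP_ROOT):
    """Create a child cgroup confined to a CPU list such as '2-3'."""
    root = Path(root)
    # The cpuset controller has to be enabled for children of the parent.
    (root / "cgroup.subtree_control").write_text("+cpuset")
    group = root / name
    group.mkdir(exist_ok=True)
    (group / "cpuset.cpus").write_text(cpus)
    return group


def move_process(group, pid):
    """Move a whole process, including all of its threads, into the group."""
    (Path(group) / "cgroup.procs").write_text(str(pid))
```

For example, create_cpuset_group("lowlat", "2-3") followed by move_process(group, pid) would confine that process to CPUs 2 and 3. Because the assignment is made through ordinary file writes, it can be changed while the system is running.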

Figure 1. A comparison of how threads can be managed with isolcpus and cgroups. isolcpus allows the management of individual threads but prevents the use of flexible CPU groups.

One drawback is that cgroups are primarily designed to work at the process level and are a less natural tool to use when the targeted control of individual threads is important. As touched on earlier, this can be a particular problem for Java applications, given the relatively large number of auxiliary threads started by the JVM. Even though many of the threads only run occasionally, they can still generate enough jitter to impact the high percentiles of any latency-sensitive application in the same group. A further complication when using cgroups is the absence of support from standard calls like taskset and sched_setaffinity, making it more challenging to combine cgroups with low-level libraries: moving processes between groups requires the use of specialized calls.
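
The thread-level control discussed above can be sketched with per-thread affinity calls. The snippet lists the threads of a process from /proc, separates the latency-critical ones by name, and pins each group to its own CPUs. On Linux, sched_setaffinity accepts a thread ID, which is what makes this work. The function names and the example thread name "event-loop" are hypothetical, and note that the kernel truncates thread names in comm to 15 characters.

```python
import os
from pathlib import Path


def list_threads(pid, proc_root="/proc"):
    """Return {tid: thread_name} for every thread of a process."""
    threads = {}
    for task in (Path(proc_root) / str(pid) / "task").iterdir():
        threads[int(task.name)] = (task / "comm").read_text().strip()
    return threads


def split_threads(threads, critical_names):
    """Partition thread ids into (critical, auxiliary) by exact thread name."""
    critical = [tid for tid, name in threads.items() if name in critical_names]
    auxiliary = [tid for tid in threads if tid not in critical]
    return sorted(critical), sorted(auxiliary)


def pin_threads(tids, cpus):
    """Restrict each thread to the given CPU set (Linux only)."""
    for tid in tids:
        # The pid argument is interpreted as a thread id by the kernel.
        os.sched_setaffinity(tid, cpus)
```

A caller might pin the critical threads to an isolated CPU and push everything else, such as garbage collector and compiler threads, onto housekeeping CPUs. Threads that are started later are not covered, so a real tool would need to repeat the scan.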

Making the Procedure Better and Automatic

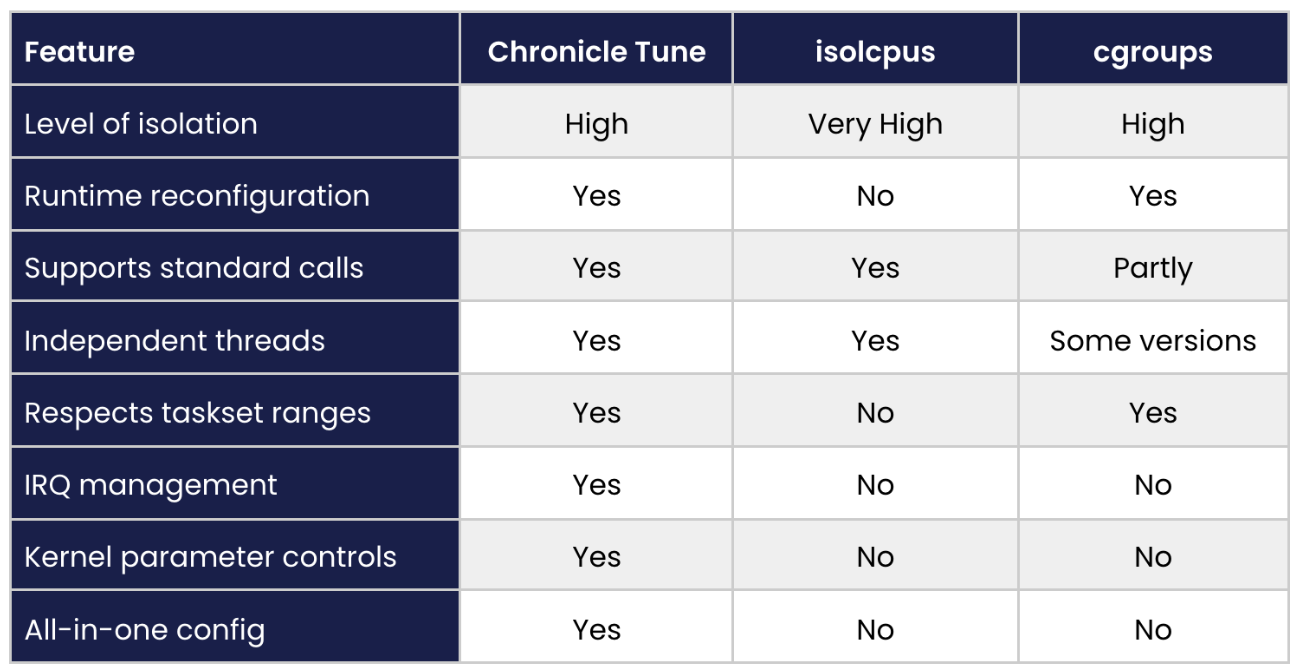

Since both isolcpus and cgroups/csets have drawbacks and can be time-consuming to configure, we developed software to make tuning and managing a system simpler and more transparent. Chronicle Tune blends features from isolcpus and cgroups/csets together with bespoke functionality to simplify CPU and system tuning, allowing changes to be applied dynamically without the need for reboots.

Chronicle Tune can be especially useful for Java applications where careful separation and control of application and background threads is essential for achieving the best performance. Chronicle Tune facilitates optimal process placement and control, helping to ensure fewer and shorter interrupts and allowing threads to be dynamically migrated.

Figure 2. Comparison of Chronicle Tune, isolcpus, and cgroups.

How Much Could Tuning Improve Performance?

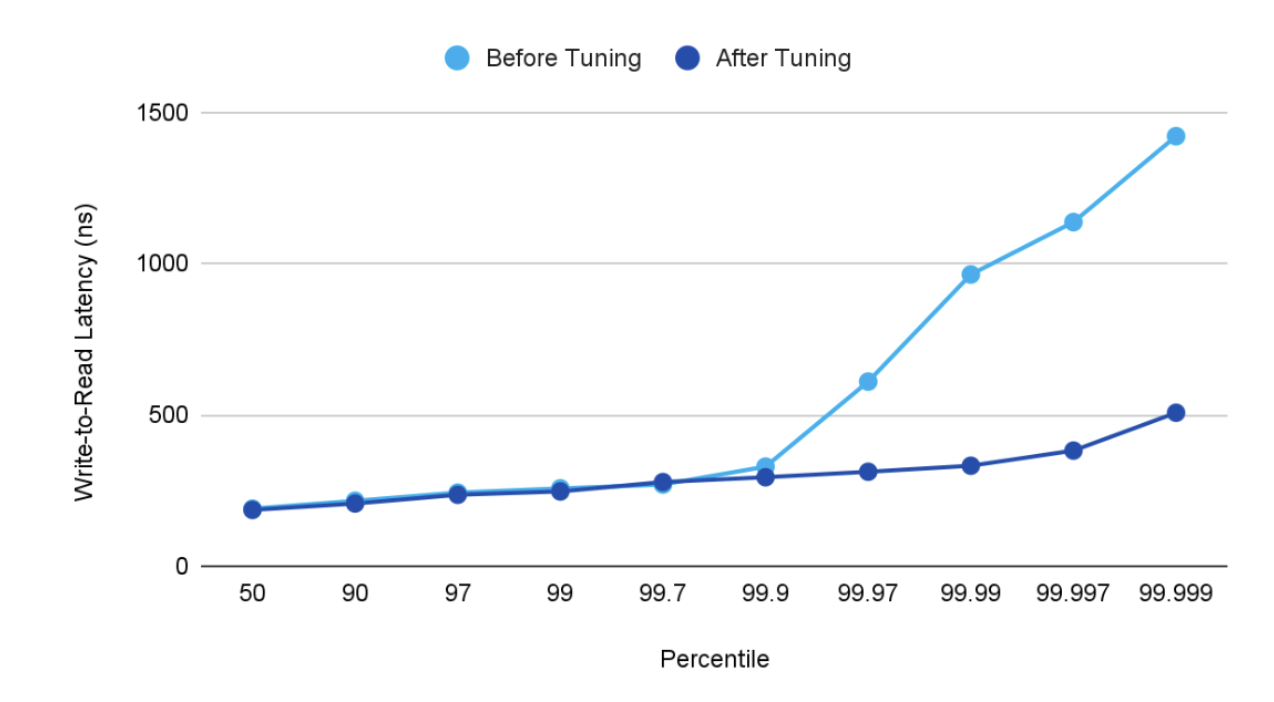

For businesses seeking to improve their performance down to the nanosecond, it’s crucial to understand how much of a difference tuning can make. To this end, we conducted a practical test to evaluate the impact of Chronicle Tune on the performance of Chronicle Queue. Specifically, we measured the write-to-read latency for 256-byte messages at a rate of 100,000 messages per second. Our testing shows that Chronicle Tune had an effect even at lower percentiles. From around the 99.9th percentile onwards, the benefits of significantly reduced jitter became increasingly apparent, showing the machine running in a much cleaner, more optimal configuration.
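
For readers who want to summarize their own measurements in the same way, the following is a small sketch of a percentile report over latency samples. It uses the nearest-rank definition of a percentile, which is one common choice and not necessarily the method behind the results reported here; the function names are illustrative.

```python
import math


def percentile(sorted_samples, pct):
    """Nearest-rank percentile of an ascending list of latencies."""
    if not sorted_samples:
        raise ValueError("no samples")
    # Round first to absorb floating-point noise in pct * n / 100.
    rank = math.ceil(round(pct * len(sorted_samples) / 100, 9))
    return sorted_samples[max(1, rank) - 1]


def latency_report(samples_ns, pcts=(50, 99, 99.9, 99.99)):
    """Return the requested percentiles plus the worst observed latency."""
    ordered = sorted(samples_ns)
    report = {p: percentile(ordered, p) for p in pcts}
    report["max"] = ordered[-1]
    return report
```

Comparing the reports from a tuned and an untuned run side by side makes it easy to see whether the difference is concentrated in the tail, as it is from the 99.9th percentile onwards in this test.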

Figure 3. Write-to-read latency of Chronicle Queue exchanging 256-byte messages @ 100k msgs/s.

Can Shrink Wrapped Software Be as Efficient as Manual Tuning?

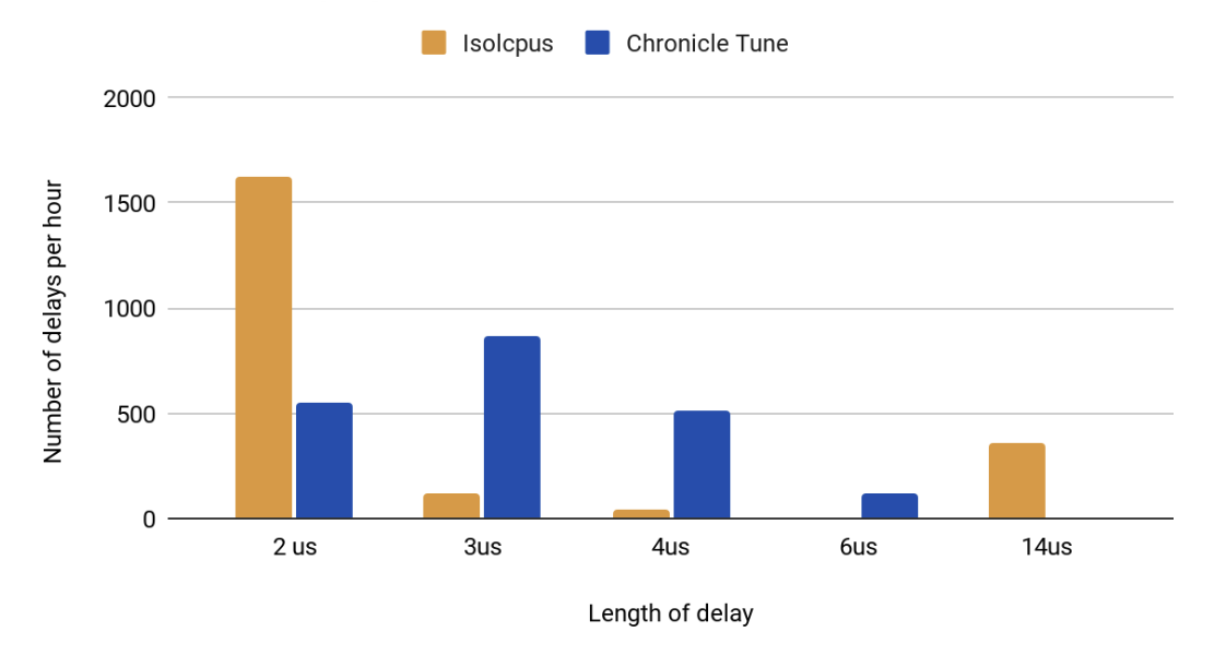

Using ready-made software is certainly more convenient than tuning manually. So how effective is Chronicle Tune in comparison? To investigate this, we have a tool that measures the jitter experienced by a spinning, pinned thread (closely representing a typical latency-sensitive application thread), and Figure 4 below shows the results of a comparison between isolcpus and Chronicle Tune.

This plot shows that while the total number of jitter events is slightly lower for isolcpus (as might be expected given isolcpus integrates directly with the scheduler), the worst outliers are, in fact, lower with Chronicle Tune (6 µs vs. 14 µs) on account of the additional system tuning with Chronicle Tune beyond just CPU isolation. Chronicle Tune achieves this with a simple, transparent configuration, which can be adjusted without the need for reboots.
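
The idea behind this kind of jitter measurement can be illustrated with a short sketch: a thread spins reading the clock, records any gap between consecutive readings that exceeds a threshold, and groups the gaps by length. This is not the tool used for the results above. The names, the threshold, and the bucket edges are all assumptions, and Python's interpreter overhead means the absolute numbers are not comparable with those in Figure 4.

```python
import time


def measure_jitter(duration_s=1.0, threshold_ns=1_000):
    """Spin on the clock and record every gap longer than threshold_ns."""
    gaps = []
    prev = time.perf_counter_ns()
    end = prev + int(duration_s * 1e9)
    while prev < end:
        now = time.perf_counter_ns()
        if now - prev > threshold_ns:
            gaps.append(now - prev)  # the thread was delayed for this long
        prev = now
    return gaps


def bucket_delays(gaps_ns, edges_us=(1, 2, 4, 8, 16, 32)):
    """Count delays per bucket, keyed by the bucket's lower edge in microseconds."""
    counts = {edge: 0 for edge in edges_us}
    for gap in gaps_ns:
        gap_us = gap / 1_000
        for edge in reversed(edges_us):
            if gap_us >= edge:
                counts[edge] += 1
                break
    return counts
```

For a meaningful reading, the measuring thread should first be pinned to the CPU under test, and the run should be repeated under each isolation setup being compared.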

Figure 4. Average number of delays per hour, grouped by length of the delay. The statistics were gathered during a 91-second jitter test run.

Conclusion

The standard solutions for controlling CPU isolation for low-latency applications under Linux are isolcpus and cgroups/csets. However, they each have their downsides and can be awkward to use. Chronicle Tune simplifies system tuning and the management of low-latency, low-jitter tasks. It supports scheduling threads separately from processes, dynamic adjustment of allocations at runtime, efficient management of interrupt requests (IRQs), and whole-system optimization, including SSD, disk, memory, and network. All of this is achieved using a simple, transparent configuration that can be adjusted dynamically, without a reboot.

Published at DZone with permission of Peter Lawrey, DZone MVB. See the original article here.
