How To Combine DevOps Tools Together To Solve Our Problems

Check out the best tools to employ for each continuous phase of the DevOps lifecycle.


DevOps Tools

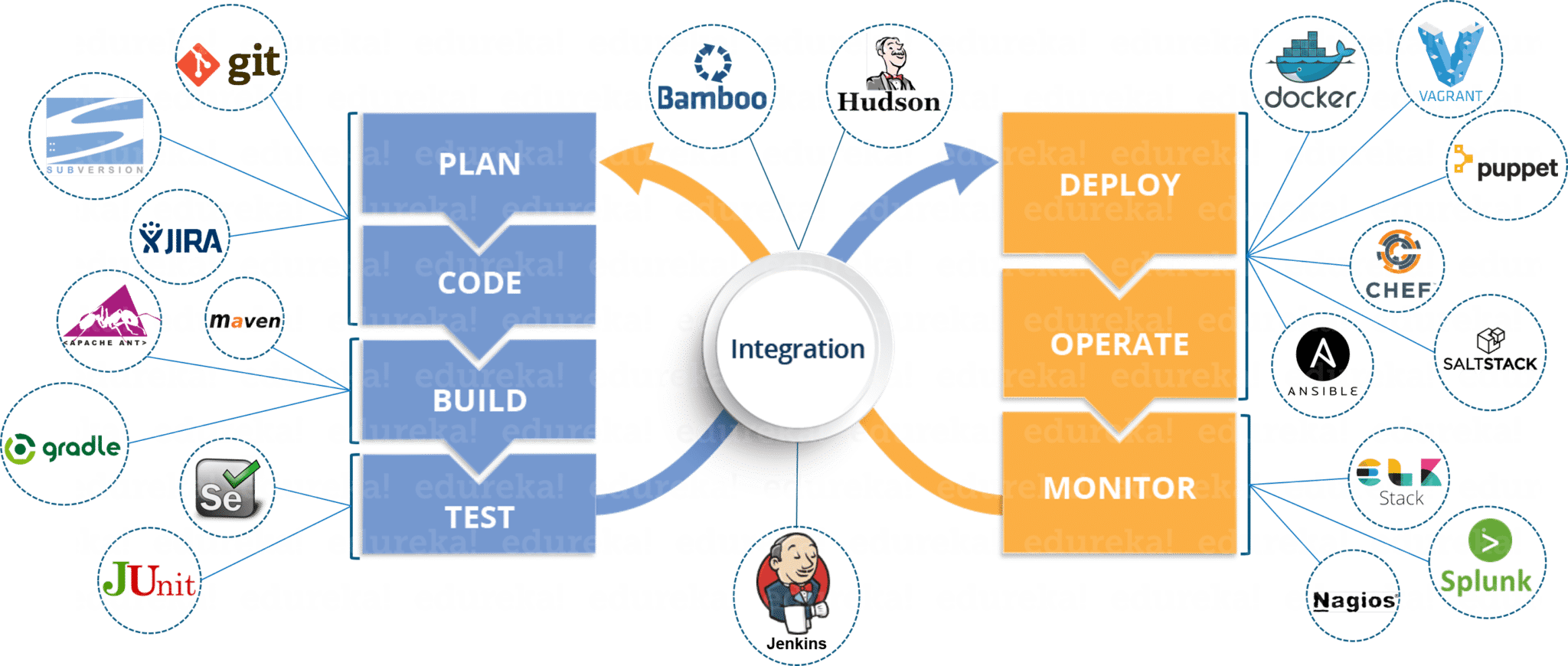

Before going any further, let’s recap the different tools and where they fall in the DevOps lifecycle.

Most Used DevOps Tools

DevOps Lifecycle Phases (DevOps Tools)

Well, I’m pretty sure you’re impressed with the above image. But you might still have trouble relating the tools to the various phases, right?

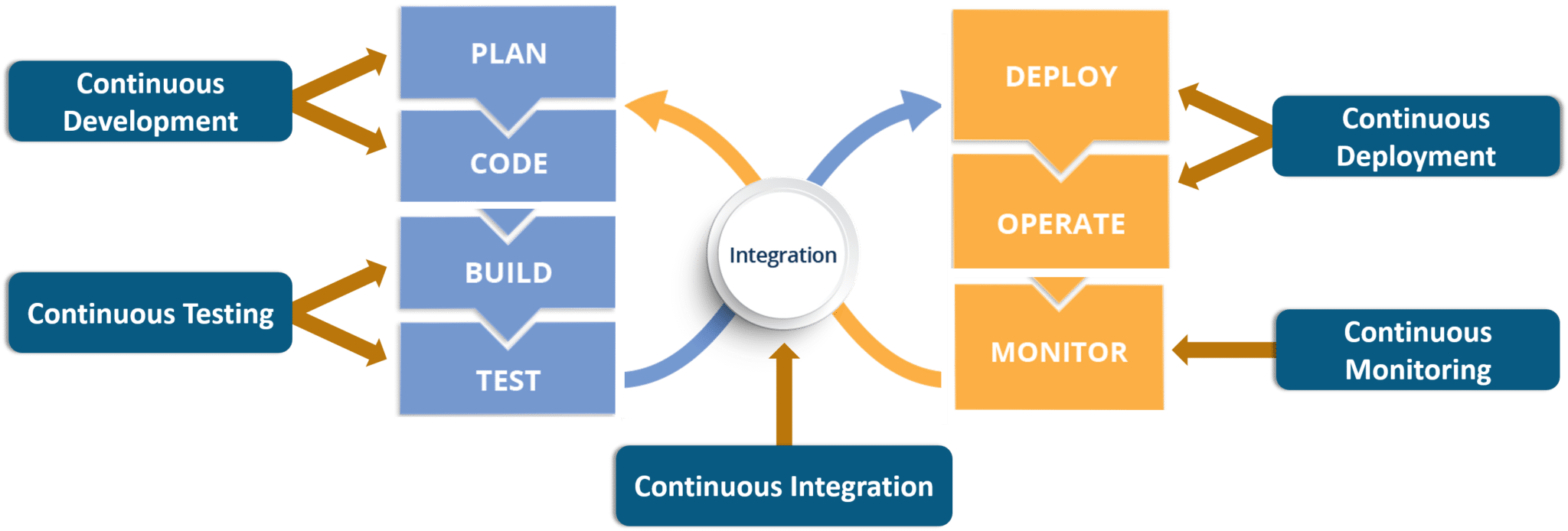

In that case, let’s take a step back and first understand the various phases of the DevOps lifecycle. Below are the five phases that any software application passes through when it is developed via the DevOps lifecycle:

- Continuous Development

- Continuous Testing

- Continuous Integration

- Continuous Deployment

- Continuous Monitoring

1. Continuous Development

This is the phase that involves planning and coding the software application’s functionality. Planning relies less on tooling, although issue trackers such as JIRA help with it, and there are a number of tools for maintaining the code.

The vision of the project is decided during the planning phase. When the developers start writing the code, that activity is referred to as the coding phase.

The code can be written in any language, but it is maintained using version control tools. These are the Continuous Development DevOps tools, the most popular of which are Git, SVN, Mercurial, and CVS.

So why is it important to maintain versions of the code? Which of the Dev vs. Ops problems does it solve? Let’s look at that first.

- Versions are maintained (in a central repository), to hold a single source of truth so that all the developers can collaborate on the latest committed code, and operations can have access to that same code when they plan to make a release.

- Whenever a mishap happens during a release, or the code turns out to have lots of bugs (a faulty feature), there is much less to worry about. Ops can quickly roll back the deployed code and return to the previous stable state.
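
The rollback idea in the second point can be sketched in a few lines of Python. This is only a toy in-memory model, not how Git or any deployment tool works internally; the `ReleaseHistory` class, its method names, and the version strings are all hypothetical and exist purely for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ReleaseHistory:
    """Toy model of a deployment history (hypothetical, for illustration)."""

    deployed: list = field(default_factory=list)  # versions in deployment order

    def deploy(self, version: str) -> None:
        # Record a new version as the currently deployed one.
        self.deployed.append(version)

    @property
    def current(self) -> str:
        # The most recently deployed version is the live one.
        return self.deployed[-1]

    def rollback(self) -> str:
        # Drop the faulty release and fall back to the previous one.
        if len(self.deployed) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self.deployed.pop()
        return self.current


history = ReleaseHistory()
history.deploy("v1.0")
history.deploy("v1.1")  # suppose this release turns out to be faulty
restored = history.rollback()
print(restored)
```

Because every released version is recorded, going back to the last stable one is a lookup rather than a rebuild, which is the property that version control gives Ops.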

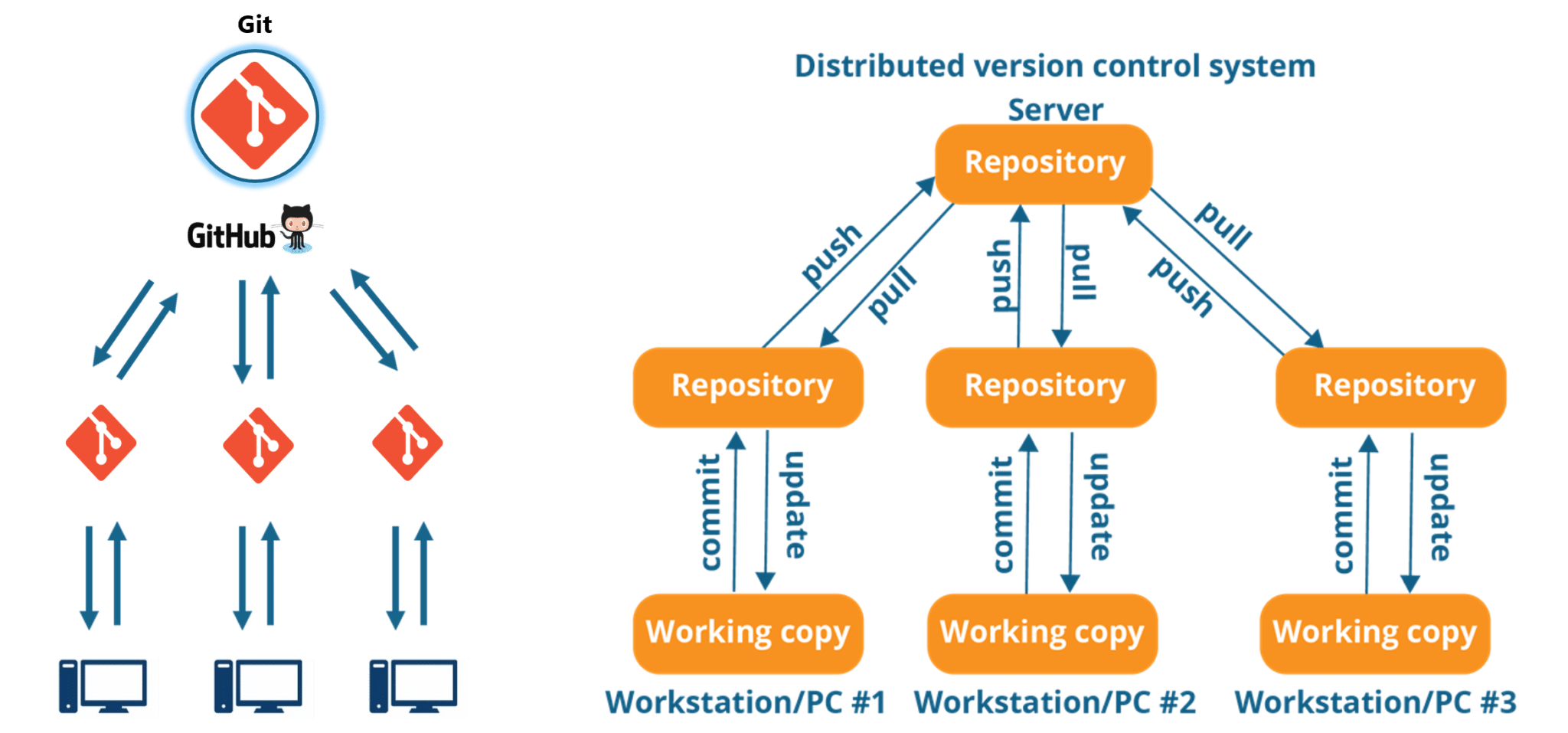

My favorite tools have got to be Git & GitHub. Why? Because Git allows developers to collaborate with each other on a Distributed VCS (Version Control System).

Since every developer has a full copy of the repository, commits can be made locally without depending on a central server, and changes can be pulled from and pushed to a shared remote repository from any location. GitHub is a hosting service where this shared repository can be maintained.

2. Continuous Testing

When the code is developed, it is madness to release it straight to deployment. The code should first be tested for bugs and performance. Can we agree on that statement?

If so, then what would be the procedure to perform the tests? Would it be manual testing? Well, it can be, but it is very inefficient.

Automation testing is the answer to a lot of the issues that manual testers have. Tools like Selenium, TestNG, and JUnit/NUnit are used to automate the execution of our test cases. So, what are their benefits?

- Automation testing saves a lot of the time, effort, and labor that executing the tests manually would take.

- Besides that, report generation is a big plus. The task of evaluating which test cases failed in a test suite gets simpler.

- These tests can also be scheduled for execution at predefined times.
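
As a small illustration of automated execution and reporting, here is a minimal sketch using Python's built-in `unittest` module, which plays a role similar to JUnit or TestNG; it is not one of the tools named above. The `apply_discount` function and its test cases are hypothetical stand-ins for real application code.

```python
import io
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical function under test: reduce a price by a percentage."""
    return round(price * (1 - percent / 100), 2)


class DiscountTests(unittest.TestCase):
    def test_ten_percent(self):
        self.assertEqual(apply_discount(200.0, 10), 180.0)

    def test_zero_percent(self):
        self.assertEqual(apply_discount(59.99, 0), 59.99)

    def test_full_discount(self):
        self.assertEqual(apply_discount(80.0, 100), 0.0)


def run_suite() -> unittest.TestResult:
    # Load every test in the class and run it, capturing the text report
    # in memory instead of printing it to the console.
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(DiscountTests)
    runner = unittest.TextTestRunner(stream=io.StringIO(), verbosity=2)
    return runner.run(suite)


result = run_suite()
# A tiny report: how many tests ran and how many did not pass.
summary = {
    "run": result.testsRun,
    "failed": len(result.failures) + len(result.errors),
}
print(summary)
```

The result object lists exactly which test cases failed, so nobody has to comb through a manual test log to find them.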

And the continuous use of these tools while developing the application is what forms the Continuous Testing phase of the DevOps lifecycle. Which of these is my favorite tool? A combination of these tools, actually!

Selenium is my favorite, but Selenium without TestNG is equivalent to a snake without a venomous bite, at least from the perspective of the DevOps lifecycle.

Selenium automates the browser interactions, and TestNG runs the tests and generates the reports. But to automate this entire testing phase, we need a trigger, right? This is where continuous integration tools like Jenkins come into the picture.


3. Continuous Integration

This is the most brilliant DevOps phase. Its value might not be obvious during the first release cycle, but you will understand this phase’s importance going forward.

That said, Continuous Integration (CI) plays a major role even during the first release. It helps massively when the CI tools are integrated with configuration management tools for deployment.

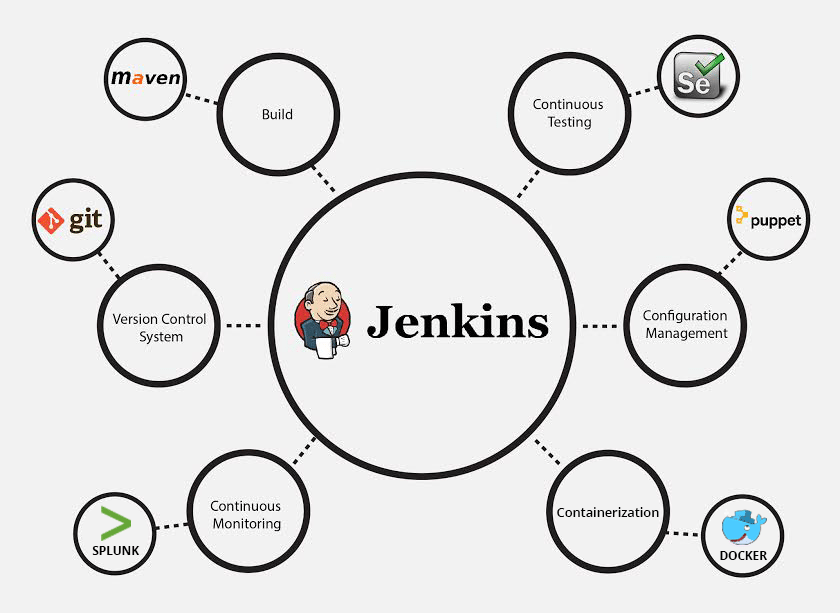

Arguably the most popular CI tool in the market is Jenkins. And personally, Jenkins is my favorite DevOps tool. Other CI tools include Bamboo and Hudson, the project from which Jenkins was forked.

Why do I hold such high regard for continuous integration tools? Because they are the ones which hold the entire DevOps structure together.

The CI tools orchestrate the automation of tools falling under other DevOps lifecycle phases. Be they continuous development, testing, or deployment tools, or even continuous monitoring tools, the continuous integration tools can be integrated with all of them.

- When integrated with Git/SVN, Jenkins can schedule jobs (pulling the code from shared repositories) automatically and make it ready for builds and testing (continuous development). Jenkins can build jobs either at scheduled times of the day or whenever there is a commit pushed to the central repository.

- When integrated with testing tools like Selenium, we can achieve continuous testing. The developed code can be built using tools like Maven/Ant/Gradle. Once the code is built, Selenium can automate testing of the resulting application by running a suite of test cases one after the other. The role of Jenkins/Hudson/Bamboo here would be to schedule and automate those runs.

- When integrated with Continuous Deployment tools, Jenkins/Hudson/Bamboo can trigger the deployments planned by configuration management and containerization tools.

- And finally, Jenkins/Hudson can be integrated with monitoring tools like Splunk, ELK, Nagios, or New Relic, to continuously monitor the status and performance of the servers where the deployments have been made.

Because CI tools are capable of this and so much more, they are my favorite.
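
The orchestration role described above can be sketched as a tiny pipeline runner. This is a simplified illustration of the idea, not Jenkins's actual API or job model; the `run_pipeline` function and the stage names are hypothetical, and each stage is a stand-in function that simply reports success or failure.

```python
from typing import Callable, List, Tuple

Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> List[Tuple[str, str]]:
    """Run stages in order and skip everything after the first failure."""
    report = []
    failed = False
    for name, action in stages:
        if failed:
            # An earlier stage failed, so later stages never run.
            report.append((name, "skipped"))
            continue
        ok = action()
        report.append((name, "passed" if ok else "failed"))
        failed = not ok
    return report


# Stand-ins for real work such as pulling from Git, building with Maven,
# running a Selenium suite, and triggering a deployment.
stages = [
    ("checkout", lambda: True),
    ("build", lambda: True),
    ("test", lambda: False),  # simulate a failing test run
    ("deploy", lambda: True),
]
report = run_pipeline(stages)
for name, status in report:
    print(f"{name}: {status}")
```

The point of the sketch is the ordering: a failed test stage keeps the deploy stage from running, which is what makes the CI tool the gatekeeper for the other phases.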

4. Continuous Deployment

Continuous Deployment is the phase where the action actually happens. We have seen the tools which help us build the code from scratch and tools which help in testing. Now it is time to understand why DevOps will be incomplete without configuration management tools or containerization tools. Both sets of tools here help in achieving continuous deployment (CD).

Configuration Management Tools

- Configuration Management is the act of establishing and maintaining consistency in an application’s functional requirements and performance. In simpler words, it is the act of releasing deployments to servers, scheduling updates on all servers, and most importantly keeping the configurations consistent across all the servers.

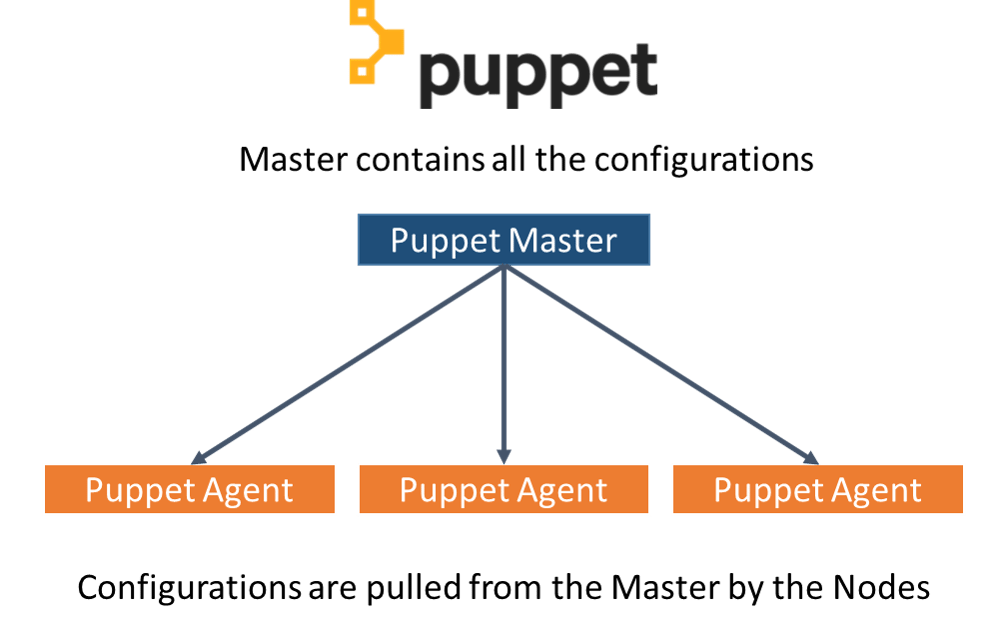

- For this, we have tools like Puppet, Chef, Ansible, SaltStack and more. But the best tool here, in my view, is Puppet. Puppet, like Chef and SaltStack, typically works on a master-slave (master-agent) architecture, whereas Ansible is agentless and pushes changes over SSH. When there is a deployment sent to the master, the master is responsible for replicating those changes across all the slaves.
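
The core idea of keeping configurations consistent can be sketched as a "desired state" that is applied to every server. This is a conceptual illustration only and does not reflect how Puppet, Chef, Ansible, or SaltStack are implemented; the `converge` function, the node names, and the settings are hypothetical.

```python
def converge(desired: dict, nodes: dict) -> dict:
    """Bring every node's settings in line with the desired state.

    Returns the changes applied per node. Nodes that already match get
    no entry, so a second run changes nothing (idempotence).
    """
    changes = {}
    for node, actual in nodes.items():
        # Only the settings that differ from the desired state are touched.
        diff = {k: v for k, v in desired.items() if actual.get(k) != v}
        if diff:
            actual.update(diff)
            changes[node] = diff
    return changes


desired = {"nginx": "1.24", "max_connections": 512}
nodes = {
    "web-1": {"nginx": "1.24", "max_connections": 512},  # already consistent
    "web-2": {"nginx": "1.22", "max_connections": 512},  # outdated package
    "web-3": {},                                         # freshly provisioned
}
first = converge(desired, nodes)
second = converge(desired, nodes)
print(first)
print(second)
```

The second run reports no changes because every node already matches the desired state, which is what lets such tools be run on a schedule without side effects.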

Now let’s move onto containerization.

Containerization Tools

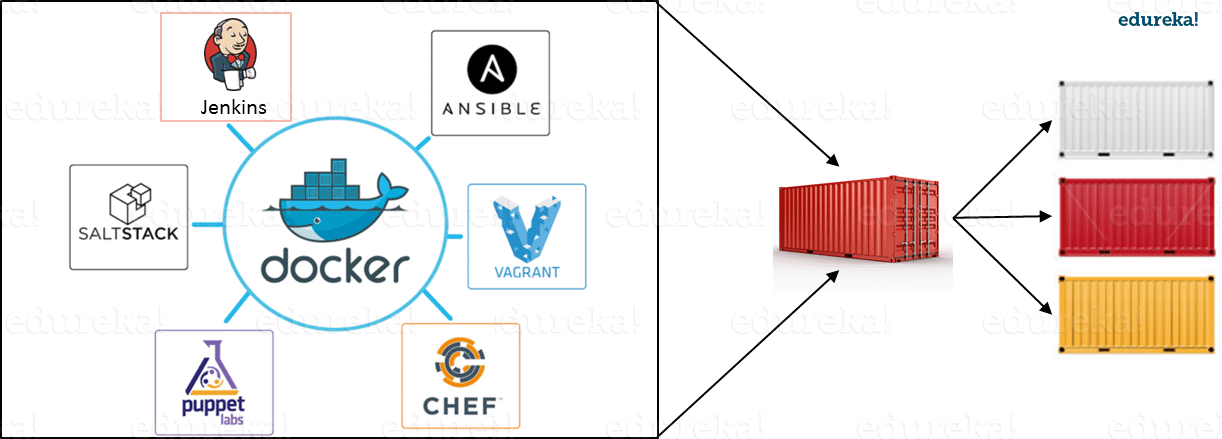

Containerization tools are another set of tools that help in maintaining consistency across the environments where the application is developed, tested, and deployed. They greatly reduce the chance of errors or failures in the production environment by packaging and replicating the same dependencies and packages used in the development, testing, and staging environments.
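
To make the problem concrete, the sketch below compares the package versions of two environments and reports where they differ. It does not use Docker; it only illustrates the kind of environment drift that packaging dependencies into a container image is meant to prevent. The `find_drift` function, the package names, and the versions are hypothetical.

```python
def find_drift(reference: dict, other: dict) -> dict:
    """Return packages whose versions differ between two environments.

    Each value is a (reference_version, other_version) pair; None means
    the package is missing from that environment.
    """
    drift = {}
    for package in sorted(set(reference) | set(other)):
        ref_version = reference.get(package)
        other_version = other.get(package)
        if ref_version != other_version:
            drift[package] = (ref_version, other_version)
    return drift


staging = {"python": "3.11.4", "openssl": "3.0.9", "libpq": "15.3"}
production = {"python": "3.11.4", "openssl": "1.1.1", "redis": "7.0"}
drift = find_drift(staging, production)
print(drift)
```

When the application ships as a container image, both environments run the same packaged dependencies, so a comparison like this would ideally come back empty.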

The clear winner here is Docker, which brought containers into the mainstream. Earlier, this act of maintaining consistency in environments was a challenge because VMs and servers were used, and their environments would have to be managed manually to achieve consistency. Docker containers threw this challenge up above and blew it out of the water. (Pun intended!)

Vagrant is often mentioned alongside Docker, although it manages reproducible development environments, typically on virtual machines, rather than containers. As of late, a number of cloud solutions have started providing support for container services. Amazon ECS, Azure Container Service, and Google Container Engine are a few of the cloud services that offer extensive support for Docker containers. This is the reason why Docker is the clear winner.

5. Continuous Monitoring

What is the point of developing an application and deploying it, if we do not monitor its performance? Monitoring is as important as developing the application because there will always be a chance of bugs that escape undetected during the testing phase.

Which tools fall under this phase? Splunk, ELK Stack, Nagios, Sensu, and New Relic are some of the popular tools for monitoring. When used in combination with Jenkins, we achieve continuous monitoring. So, how does monitoring help?

- To minimize the consequences of buggy features, monitoring is a necessity. Buggy features often cause financial loss, which is all the more reason to perform continuous monitoring.

- Monitoring tools can also report failures and unfavorable conditions, often before your clients get to experience the faulty features.
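
A minimal sketch of the second point: an alert fires once the share of failing responses crosses a threshold. This is not how Splunk, ELK, or Nagios are configured; the `error_rate` and `should_alert` functions, the 5% threshold, and the sample data are all assumptions made for illustration.

```python
from typing import List


def error_rate(status_codes: List[int]) -> float:
    """Fraction of responses that are server errors (HTTP 5xx)."""
    if not status_codes:
        return 0.0
    errors = sum(1 for code in status_codes if 500 <= code <= 599)
    return errors / len(status_codes)


def should_alert(status_codes: List[int], threshold: float = 0.05) -> bool:
    # Alert when the share of 5xx responses exceeds the threshold.
    return error_rate(status_codes) > threshold


# Hypothetical samples of recent HTTP status codes from a server.
healthy = [200] * 98 + [500] * 2
degraded = [200] * 90 + [503] * 10
print(should_alert(healthy), should_alert(degraded))
```

A real monitoring tool would evaluate a rule like this continuously over live logs or metrics and notify the team when it triggers.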

Which is my favorite tool here? I would prefer either Splunk or ELK stack. These two tools are major competitors. They pretty much provide the same features. But the way they provide the functionality is where they are different.

Splunk is a proprietary tool, which in practice means it comes as a polished, supported product that is easy to work with. The ELK stack, however, is a combination of three open-source tools: Elasticsearch, Logstash, and Kibana. It may be free, but setting it up is not as easy as a commercial tool like Splunk. You can try both of them to figure out which is the better fit for your organization.

Well, that's it folks! These were the various phases of the DevOps lifecycle and the tools that fit seamlessly in those situations.

Published at DZone with permission of Sahiti Kappagantula, DZone MVB. See the original article here.

Opinions expressed by DZone contributors are their own.
