Insightful Interpretation of Machine Learning Datasets

An overview of machine learning training and test datasets, and of how data annotation and labeling can enrich them for use as artificial intelligence (AI) training data.


Artificial intelligence (AI) and machine learning (ML) make it possible to simulate human intelligence in machines. These simulations allow machines to complete a variety of tasks with little human assistance. To develop newer and more efficient AI and ML models, companies need precise training data. Training datasets give a model a better understanding of a given problem, and they can be enriched through data annotation and labeling for further use as AI training data.

What Is Machine Learning?

Machine learning uses data and algorithms to imitate the way humans learn, gradually improving the accuracy of its predictions. In data mining projects, statistical methods are used to train algorithms to make classifications or predictions, which uncovers key insights in the data.

Ideally, these insights improve decision-making within businesses and applications and influence key growth metrics. As big data continues to grow and develop, so will the demand for data scientists, who are needed to identify the most pertinent business questions and the data required to answer them.

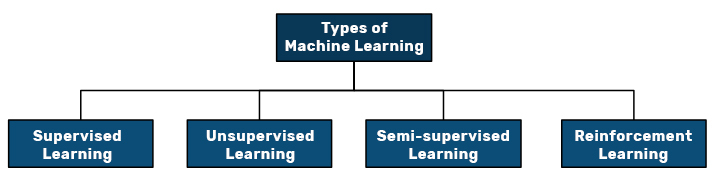

Types of Machine Learning

Machine learning approaches are grouped into four basic types according to how an algorithm learns to improve its accuracy: supervised, unsupervised, semi-supervised, and reinforcement learning. Data scientists choose the algorithm and the type of learning based on the data they want to analyze.

1. Supervised Learning

These algorithms require labeled training data and the variables that data scientists want the algorithm to evaluate for correlations. The data scientists specify both the input and the expected output of the algorithm.
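As a minimal sketch of learning from labeled examples, the 1-nearest-neighbor classifier below predicts the label of whichever training example is closest to a new input. The data, the feature meanings, and the function name are hypothetical illustrations, and nearest-neighbor is just one simple supervised technique, not one the article prescribes.

```python
import math


def nearest_neighbor_predict(train, query):
    """Return the label of the training example closest to `query`.

    `train` is a list of (features, label) pairs: the inputs and the
    expected outputs supplied by the data scientist.
    """
    best_label, best_dist = None, math.inf
    for features, label in train:
        dist = math.dist(features, query)  # Euclidean distance (Python 3.8+)
        if dist < best_dist:
            best_dist, best_label = dist, label
    return best_label


# Hypothetical labeled data: (height_cm, weight_kg) -> shirt size
labeled = [
    ((150.0, 50.0), "small"),
    ((160.0, 60.0), "small"),
    ((180.0, 85.0), "large"),
    ((190.0, 95.0), "large"),
]

print(nearest_neighbor_predict(labeled, (155.0, 52.0)))  # small
print(nearest_neighbor_predict(labeled, (185.0, 90.0)))  # large
```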

2. Unsupervised Learning

These algorithms learn from unlabeled data: the algorithm scans data sets to identify meaningful connections on its own. Because no labels guide it, any groupings, predictions, or recommendations it produces are determined entirely by the data it trains on.
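A common example of finding structure in unlabeled data is k-means clustering. The sketch below runs Lloyd's algorithm on one-dimensional points; the points and the starting centroids are hypothetical, chosen only to keep the example small.

```python
def kmeans_1d(points, centroids, iterations=10):
    """Group unlabeled 1-D points around k centroids (Lloyd's algorithm)."""
    centroids = list(centroids)
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda i: abs(p - centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its assigned points
        # (an empty cluster keeps its previous centroid).
        new_centroids = [
            sum(c) / len(c) if c else centroids[i] for i, c in enumerate(clusters)
        ]
        if new_centroids == centroids:
            break  # assignments no longer change: converged
        centroids = new_centroids
    return centroids, clusters


points = [1.0, 1.5, 2.0, 10.0, 10.5, 11.0]  # no labels anywhere
centroids, clusters = kmeans_1d(points, centroids=[0.0, 5.0])
print(centroids)  # [1.5, 10.5]
print(clusters)   # [[1.0, 1.5, 2.0], [10.0, 10.5, 11.0]]
```

The algorithm discovers the two groups without ever being told they exist, which is the "meaningful connection" the paragraph describes.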

3. Semi-supervised Learning

This approach combines the two previous ones. A data scientist feeds the model some labeled training data, but the model is also free to explore the data (typically a much larger unlabeled portion) on its own and develop its own understanding of it.
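One common semi-supervised technique is self-training (pseudo-labeling): fit a model on the few labeled examples, use it to label the unlabeled ones, then refit on both. The sketch below does a single round with a nearest-centroid model on 1-D data; the data and label names are hypothetical, and the article does not name a specific method.

```python
def centroids_by_label(labeled):
    """Mean feature value for each label (1-D features for brevity)."""
    sums, counts = {}, {}
    for x, y in labeled:
        sums[y] = sums.get(y, 0.0) + x
        counts[y] = counts.get(y, 0) + 1
    return {y: sums[y] / counts[y] for y in sums}


def self_train(labeled, unlabeled):
    """One round of self-training with a nearest-centroid model."""
    centroids = centroids_by_label(labeled)
    # Pseudo-label each unlabeled point with its nearest class centroid.
    pseudo = [
        (x, min(centroids, key=lambda y: abs(x - centroids[y])))
        for x in unlabeled
    ]
    # Refit on labeled plus pseudo-labeled data.
    return centroids_by_label(labeled + pseudo), pseudo


labeled = [(1.0, "low"), (9.0, "high")]  # only two labeled examples
unlabeled = [2.0, 3.0, 8.0, 10.0]
model, pseudo = self_train(labeled, unlabeled)
print(pseudo)  # [(2.0, 'low'), (3.0, 'low'), (8.0, 'high'), (10.0, 'high')]
print(model)   # {'low': 2.0, 'high': 9.0}
```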

4. Reinforcement Learning

In reinforcement learning, data scientists teach a machine to complete a multistep process governed by clearly defined rules. They program the algorithm to complete the task and give it positive or negative cues as it works out how to do so, but for the most part the algorithm decides on its own which steps to take.
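To make "rules plus cues" concrete, here is a sketch of tabular Q-learning on a hypothetical five-cell corridor: the `step` function encodes the rules, and a reward of +1 for reaching the goal is the positive cue (this toy uses no negative cue). The environment, hyperparameter values, and names are illustrative assumptions, not taken from the article.

```python
import random

N_STATES = 5  # cells 0..4; cell 4 is the goal (hypothetical toy task)
ACTIONS = ("left", "right")


def step(state, action):
    """Environment rules: move one cell; reaching the goal pays +1 and ends the episode."""
    nxt = max(state - 1, 0) if action == "left" else state + 1
    done = nxt == N_STATES - 1
    return nxt, (1.0 if done else 0.0), done


def q_learning(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}

    def greedy(s):
        # Best-valued action; ties broken at random so early episodes explore.
        best = max(q[(s, a)] for a in ACTIONS)
        return rng.choice([a for a in ACTIONS if q[(s, a)] == best])

    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap episode length
            # Explore with probability epsilon, otherwise exploit what was learned.
            action = rng.choice(ACTIONS) if rng.random() < epsilon else greedy(state)
            nxt, reward, done = step(state, action)
            # Move the estimate toward reward + discounted value of the next state.
            future = 0.0 if done else gamma * max(q[(nxt, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (reward + future - q[(state, action)])
            state = nxt
            if done:
                break
    return q


q = q_learning()
policy = [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES - 1)]
print(policy)
```

Nobody tells the agent to move right; it infers that from the reward alone.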

Real-World Machine Learning Use Cases

You might encounter machine learning every day in the following ways:

Speech Recognition

Also called automatic speech recognition (ASR), computer speech recognition, or speech-to-text, this technology uses natural language processing (NLP) to convert human speech into written text. Many mobile devices build speech recognition into their systems so that users can perform voice searches, as with Google Assistant on Android smartphones, Siri on Apple devices, and Amazon's Alexa on media devices.

Customer Service

As customer service moves online, chatbots are increasingly replacing human agents. Customer engagement on websites and social media platforms is shifting to bots that answer frequently asked questions (FAQs) on topics such as shipping or product delivery, or that offer cross-selling product recommendations. Examples include messaging bots on e-commerce sites, bots in messaging apps such as Slack and Messenger, and virtual agents and voice assistants.

Computer Vision

This AI technology lets computers and systems extract meaningful information from images, videos, and other visual inputs and take action based on those inputs. Its ability to provide recommendations is what distinguishes it from plain image recognition tasks. Applications of computer vision, many of them built on convolutional neural networks, include photo tagging on social media, radiology imaging in healthcare, and self-driving cars.
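The core operation of a convolutional neural network slides a small kernel over an image and sums element-wise products. In a real CNN the kernel values are learned from training data; in this sketch the kernel is hand-picked (a hypothetical vertical-edge detector) so the output is easy to read. Like most deep learning libraries, it computes cross-correlation, without flipping the kernel.

```python
def conv2d(image, kernel):
    """'Valid' 2-D convolution as used in CNN layers (no padding, stride 1).

    Strictly this is cross-correlation: the kernel is not flipped.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


# Tiny grayscale "image": dark left half, bright right half.
image = [
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
]
vertical_edge = [
    [-1, 1],
    [-1, 1],
]
print(conv2d(image, vertical_edge))  # [[0, 18, 0], [0, 18, 0]]
```

The large values mark where brightness changes from left to right, i.e., the edge.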

Recommendation Engines

Using data on customers' past purchasing behavior, online retailers can suggest useful add-on products during checkout. AI algorithms help uncover trends in this data that support more effective cross-selling strategies.
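A simple way to turn past purchases into add-on suggestions is to count which items were bought together. The sketch below uses plain co-occurrence counting over hypothetical orders; production recommendation engines typically use far more sophisticated models, and this is not a description of any particular retailer's system.

```python
from collections import Counter
from itertools import permutations


def build_cooccurrence(baskets):
    """Count how often each ordered pair of distinct items was bought together."""
    pairs = Counter()
    for basket in baskets:
        for a, b in permutations(set(basket), 2):
            pairs[(a, b)] += 1
    return pairs


def recommend(pairs, item, k=2):
    """Top-k items most often bought alongside `item`."""
    scores = Counter({b: n for (a, b), n in pairs.items() if a == item})
    return [b for b, _ in scores.most_common(k)]


# Hypothetical order history.
past_orders = [
    ["laptop", "mouse", "laptop_bag"],
    ["laptop", "mouse"],
    ["laptop", "mouse", "usb_hub"],
    ["laptop", "laptop_bag"],
    ["phone", "phone_case"],
]
pairs = build_cooccurrence(past_orders)
print(recommend(pairs, "laptop"))  # ['mouse', 'laptop_bag']
```

At checkout, calling `recommend` with an item in the cart yields candidate add-ons.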

Automated Stock Trading

Without human intervention, AI-driven high-frequency trading platforms execute thousands or millions of trades every day in order to optimize stock portfolios.

What Is Training Data?

Machine learning algorithms develop an understanding of a dataset by processing the data and finding connections within it. To find these patterns, an ML system must first learn from the data; after this 'learning,' it can make decisions based on the patterns it has learned. ML algorithms solve problems from past observations: exposing machines to relevant data over time allows them to evolve and improve. The quality of the training data therefore directly influences the quality of the ML model's performance.

Cogito is a leading data annotation company that supplies AI and machine learning enterprises with high-quality training data. Over its decade-long journey as a data procurer, the company has built credibility for accurate and timely delivery of training data, helping clients build data-driven AI models quickly.

What Is Test Data?

Once an ML model has been built using training data, it must be tested on 'unseen' data. This test data is used to evaluate the predictions or classifications the model makes on data it has not encountered before. The validation set is a separate partition of the dataset that is used iteratively during development, before the test data is introduced; it allows developers to tune the model and to identify and correct overfitting, so that the test data is reserved for the final evaluation.
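The three partitions can be produced by shuffling once and slicing. The sketch below assumes 10% validation and 20% test purely for illustration; the function name and default fractions are hypothetical, not a standard. Libraries such as scikit-learn offer ready-made splitting utilities.

```python
import random


def train_val_test_split(records, val_frac=0.1, test_frac=0.2, seed=42):
    """Shuffle once, then carve off disjoint test, validation, and training partitions.

    Fractions are illustrative defaults. A fixed seed makes the split reproducible.
    """
    if val_frac + test_frac >= 1:
        raise ValueError("val_frac + test_frac must be < 1")
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n = len(shuffled)
    n_test = int(n * test_frac)
    n_val = int(n * val_frac)
    test = shuffled[:n_test]                # touched once, for the final evaluation
    val = shuffled[n_test:n_test + n_val]   # used repeatedly while tuning
    train = shuffled[n_test + n_val:]       # used to fit the model
    return train, val, test


train, val, test = train_val_test_split(range(100))
print(len(train), len(val), len(test))  # 70 10 20
```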

Test data is used for both positive and negative tests: positive tests verify that functions produce the expected results for given inputs, while negative tests determine whether the software can handle unusual, exceptional, or unexpected inputs. Outsourcing data annotation to an industry expert can optimize your test data management strategy and get quality information into your test cases more quickly.
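The difference between positive and negative tests can be shown with a small, hypothetical function under test. The `parse_quantity` function and its test inputs below are illustrative assumptions; the point is that positive cases check expected outputs, while negative cases check that bad inputs are rejected cleanly.

```python
def parse_quantity(text):
    """Hypothetical function under test: parse a positive integer quantity."""
    value = int(text.strip())  # raises ValueError on non-integer input
    if value <= 0:
        raise ValueError("quantity must be positive")
    return value


# Positive tests: valid inputs must produce the expected result.
positive_cases = {"1": 1, " 42 ": 42}
# Negative tests: unusual or invalid inputs must be rejected.
negative_cases = ["", "abc", "0", "-5", "3.5"]


def run_tests():
    for raw, expected in positive_cases.items():
        assert parse_quantity(raw) == expected, raw
    for raw in negative_cases:
        try:
            parse_quantity(raw)
        except ValueError:
            continue  # rejected as expected
        raise AssertionError(f"accepted invalid input {raw!r}")
    return "all tests passed"


print(run_tests())  # all tests passed
```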

Training Dataset vs. Test Dataset

An ML model learns patterns from the training data, which typically makes up about 80% of the complete dataset. The test data stands in for the real-world data the model will face: it is used to evaluate the model's performance and monitor its progress.

The test data is typically the remaining 20% of the dataset and confirms that the model works as intended. In essence, the training data trains the model, and the test data confirms its effectiveness.
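The 80/20 workflow can be sketched end to end: hold out 20% of the data, train on the rest, then measure accuracy only on the held-out part. The toy dataset and the nearest-centroid model below are hypothetical choices made to keep the example short; any model would slot into the same pattern.

```python
import random


def train_test_split(records, test_frac=0.2, seed=0):
    """Shuffle once, then hold out `test_frac` of the records for testing."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n_test = int(len(shuffled) * test_frac)
    return shuffled[n_test:], shuffled[:n_test]  # (train, test)


def fit_centroids(pairs):
    """Train a nearest-centroid model: mean feature value per label."""
    sums, counts = {}, {}
    for x, y in pairs:
        sums[y] = sums.get(y, 0.0) + x
        counts[y] = counts.get(y, 0) + 1
    return {y: sums[y] / counts[y] for y in sums}


def predict(centroids, x):
    return min(centroids, key=lambda y: abs(x - centroids[y]))


def accuracy(centroids, pairs):
    """Fraction of held-out examples the model labels correctly."""
    return sum(predict(centroids, x) == y for x, y in pairs) / len(pairs)


# Hypothetical, well-separated toy data: 0-39 are "low", 60-99 are "high".
data = [(x, "low") for x in range(40)] + [(x, "high") for x in range(60, 100)]
train, test = train_test_split(data)  # 64 training and 16 test examples
model = fit_centroids(train)          # the model never sees `test` here
print(len(train), len(test), accuracy(model, test))  # 64 16 1.0
```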

Enriching Datasets Using Data Annotation and Labeling

Building and training an ML model requires large volumes of training data. Data annotation is the process of adding tags and labels to that data, and ML models need properly annotated training data to process it and extract specific information.
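In code, annotation amounts to attaching labels from an agreed label set to raw records, often from several annotators whose votes are then reconciled. The sketch below is a hypothetical minimal structure: the `Record` class, the sentiment label set, and majority-vote consensus are illustrative assumptions, not a description of any annotation tool or vendor workflow.

```python
from collections import Counter
from dataclasses import dataclass, field

ALLOWED_LABELS = {"positive", "negative", "neutral"}  # hypothetical label set


@dataclass
class Record:
    text: str
    labels: list[str] = field(default_factory=list)  # tags added by annotators


def annotate(record, label):
    """Attach one annotator's label, rejecting labels outside the agreed set."""
    if label not in ALLOWED_LABELS:
        raise ValueError(f"unknown label: {label!r}")
    record.labels.append(label)


def consensus(record):
    """Majority label and the fraction of annotators who chose it."""
    label, votes = Counter(record.labels).most_common(1)[0]
    return label, votes / len(record.labels)


def unlabeled(records):
    """Records still waiting for annotation."""
    return [r for r in records if not r.labels]


review = Record("Fast delivery, great product")
for vote in ["positive", "positive", "neutral"]:  # three annotators
    annotate(review, vote)
print(consensus(review))  # ('positive', 0.6666666666666666)
```

The agreement fraction is one simple signal of annotation quality: low agreement flags records worth a second look before they become training data.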

Data annotation helps machines identify specific patterns and trends in data by connecting the dots. To achieve business success, enterprises must understand how different factors affect the decision-making process, and data annotation and labeling services hold the key to accelerating businesses into the future.

Published at DZone with permission of Matthew McMullen.

