Integrating Google Cloud Platform Services With Kubernetes

In this article, we’ll explore the power of GCP and Kubernetes and see how they work together to create a dynamic and flexible infrastructure.

Today, more and more organizations need efficient, scalable solutions to meet their growing infrastructure needs. Two prominent examples are Google Cloud Platform (GCP) and Kubernetes. GCP offers a robust cloud computing environment, while Kubernetes provides a container orchestration platform. Together, they are a powerful combination for deploying and managing applications at any scale.

Introduction to Google Cloud Platform

Google Cloud Platform is a set of cloud services that enables developers to efficiently build, deploy, and scale applications. It offers various products and services, including computing, storage, networking, and databases.

Here are a few key features and benefits of GCP:

Compute Engine

Compute Engine provides virtual machines (VMs) with scalable performance to run applications. It allows users to create and manage virtual machines in the cloud, giving them flexibility and control over computing resources.

App Engine

App Engine is a fully managed platform that makes deploying and scaling applications easy. It supports multiple programming languages and automatically scales the application based on demand, allowing developers to focus on writing code rather than managing infrastructure.

Cloud Storage

Cloud Storage is GCP's object storage service. It lets you store and retrieve data in buckets and integrates with other GCP services.

BigQuery

BigQuery is a serverless data warehouse that lets you analyze large datasets quickly. It supports SQL queries and delivers fast results for business intelligence and data analysis.

Kubernetes: The Container Orchestration Platform

Kubernetes is an open-source container orchestration platform that automates the deployment and scaling of containerized applications. It simplifies running applications in containers and provides features such as service discovery, load balancing, and autoscaling.

Here are some key Kubernetes concepts:

Pods

A Pod is the smallest deployable unit in Kubernetes and represents a group of one or more containers deployed together on the same node. Containers within a Pod share the same network namespace and can communicate with each other via localhost.
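
As a minimal sketch of that shared network namespace, the manifest below defines a Pod with two containers. The names `web-pod`, `web`, and `probe`, and the choice of the `nginx` and `busybox` images, are assumptions made for illustration. The second container reaches the first over localhost.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: web-pod              # hypothetical name
  labels:
    app: web                 # hypothetical label
spec:
  containers:
  - name: web                # serves HTTP on port 80
    image: nginx:1.25
    ports:
    - containerPort: 80
  - name: probe              # second container in the same Pod
    image: busybox:1.36
    # Polls the web container over the Pod's shared network namespace.
    command: ["sh", "-c", "while true; do wget -q -O- http://localhost:80 > /dev/null; sleep 10; done"]
```

Both containers are always scheduled onto the same node and share one IP address, which is why `localhost` works here without any Service in between.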

Deployments

Deployments allow you to define and manage the desired state of your application. They provide a declarative way to create and update Pods so that the desired number of replicas is always running.
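
A Deployment of this kind might look like the following sketch. The name `deployment` is chosen to match the target of the HPA example later in this article. The `app: web` label, the `nginx` image, the replica count, and the CPU request are all assumptions for illustration.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: deployment           # matches the HPA example's scaleTargetRef
spec:
  replicas: 3                # desired number of Pod replicas (assumed value)
  selector:
    matchLabels:
      app: web               # must match the Pod template labels below
  template:
    metadata:
      labels:
        app: web
    spec:
      containers:
      - name: web
        image: nginx:1.25
        ports:
        - containerPort: 80
        resources:
          requests:
            cpu: 250m        # CPU request; utilization-based autoscaling is measured against this
```

If a Pod crashes or its node goes away, the Deployment's controller creates a replacement so that three replicas keep running. Changing the image tag and reapplying the manifest triggers a rolling update.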

Services

Services provide network access to a set of Pods. They offer a stable endpoint and load balancing for accessing your application. Services can be exposed internally to the cluster or externally using load balancers or ingress controllers.
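
The sketch below shows one way to expose a set of Pods externally. It assumes Pods labeled `app: web` that listen on port 80. The label and the Service name `web-service` are hypothetical.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: web-service          # hypothetical name
spec:
  type: LoadBalancer         # external access; use ClusterIP for cluster-internal access only
  selector:
    app: web                 # traffic is routed to Pods carrying this label
  ports:
  - port: 80                 # port exposed by the Service
    targetPort: 80           # port the containers listen on
```

The Service keeps the same name and address while the Pods behind it are replaced or rescaled, so clients do not need to track individual Pod IPs.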

Scaling

Kubernetes supports horizontal scaling, which allows you to dynamically adjust the number of replicas of your application based on demand. It also ensures that your application can handle various traffic loads efficiently.

Using GCP With Kubernetes

Now that we understand GCP and Kubernetes, let’s explore how they can be used together to create a scalable and robust infrastructure.

Here are a few examples:

Running Kubernetes on GCP

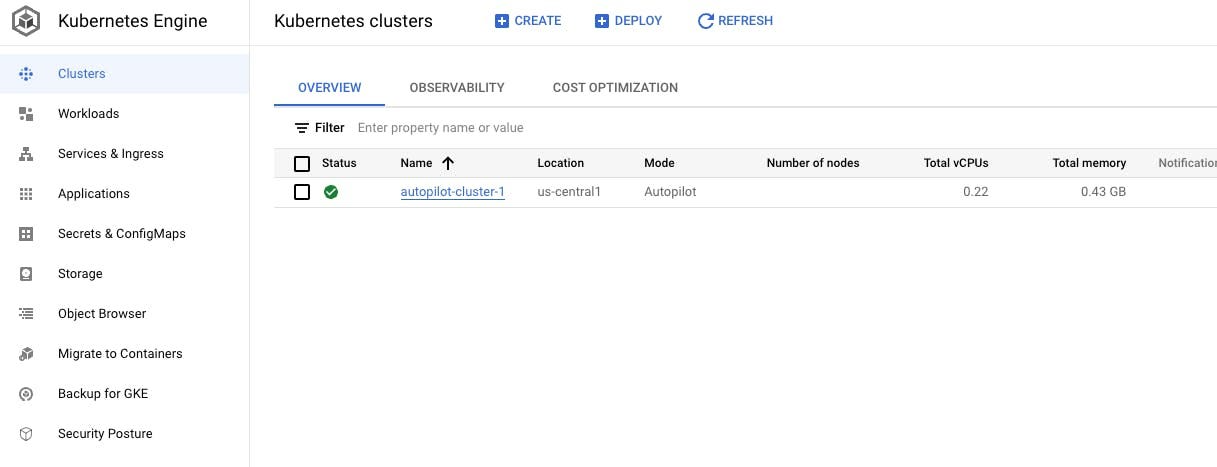

GCP provides a managed Kubernetes service, Google Kubernetes Engine (GKE), which allows you to easily deploy and manage Kubernetes clusters. GKE abstracts away the underlying infrastructure and provides a fully managed and reliable environment for running your containerized applications.

Autoscaling With GKE

GKE integrates with the autoscaling capabilities of GCP. Its cluster autoscaler can automatically resize the node pools of your Kubernetes cluster based on the resource requests of your workloads. Combined with Pod-level autoscaling, this lets your application scale up or down with incoming traffic, optimizing resource allocation and cost efficiency.

To autoscale workloads in GKE, you can define HorizontalPodAutoscalers (HPAs) that automatically adjust the number of Pod replicas based on metrics such as CPU usage or custom metrics.

Here is an example of HPA configuration:

    apiVersion: autoscaling/v2
    kind: HorizontalPodAutoscaler
    metadata:
      name: autoscaler
    spec:
      scaleTargetRef:
        apiVersion: apps/v1
        kind: Deployment
        name: deployment
      minReplicas: 1
      maxReplicas: 5
      metrics:
      - type: Resource
        resource:
          name: cpu
          target:
            type: Utilization
            averageUtilization: 85

In this example, the HPA adjusts the number of replicas for a Deployment named “deployment” based on CPU utilization. The minimum number of replicas is set to 1, and the maximum is set to 5. The average CPU utilization target is defined as 85%. The manifest uses the stable autoscaling/v2 API, since the older autoscaling/v2beta2 API is no longer served as of Kubernetes 1.26.
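
To make the 85% target concrete, the Kubernetes documentation describes the HPA's core calculation as the ratio of the current metric value to the target value. The worked example below is a sketch with assumed numbers: 3 running replicas averaging 95% of their requested CPU. It leaves out the controller's tolerance band and stabilization behavior.

```latex
\text{desiredReplicas}
  = \left\lceil \text{currentReplicas} \times
      \frac{\text{currentUtilization}}{\text{targetUtilization}} \right\rceil
  = \left\lceil 3 \times \frac{95}{85} \right\rceil
  = \lceil 3.35 \rceil
  = 4
```

The result is then clamped to the range set by minReplicas and maxReplicas, here 1 to 5. Because utilization is measured relative to each container's CPU request, the target Deployment needs CPU requests set for this metric to work.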

Integrating GCP Services With Kubernetes

GCP offers various services that can be easily integrated with Kubernetes. For example, your applications can request persistent storage through a PersistentVolumeClaim (PVC), which GKE provisions as a Compute Engine persistent disk. For object data, you can also mount a Cloud Storage bucket as a volume in your Pods, for instance through GKE's Cloud Storage FUSE CSI driver, allowing you to store and retrieve data from the bucket in your application.

Here is an example of a PVC definition for requesting persistent storage in GKE:

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: storage-claim
    spec:
      accessModes:
      - ReadWriteOnce
      storageClassName: standard
      resources:
        requests:
          storage: 7Gi

In this example, a PVC named “storage-claim” is defined with a storage request of 7 GiB and the ReadWriteOnce access mode, which lets the volume be mounted read-write by a single node. On GKE, the “standard” storage class is backed by Compute Engine persistent disks rather than Cloud Storage. This PVC can then be used by your application Pods to mount the storage and store persistent data.
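
As a sketch of how a Pod might consume this claim, the manifest below mounts “storage-claim” into a container. The Pod name `storage-demo`, the `nginx` image, and the mount path `/data` are assumptions for illustration.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: storage-demo         # hypothetical name
spec:
  containers:
  - name: app
    image: nginx:1.25
    volumeMounts:
    - name: data
      mountPath: /data       # hypothetical path where the volume appears in the container
  volumes:
  - name: data
    persistentVolumeClaim:
      claimName: storage-claim   # the PVC defined above
```

Files written under `/data` live on the claimed volume, so they survive container restarts and remain available if the Pod is recreated with the same claim.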

Conclusion

In this article, we explored the capabilities of Google Cloud Platform (GCP) and Kubernetes and how they can be used together to create a dynamic and scalable infrastructure. GCP offers a comprehensive set of cloud services, while Kubernetes provides powerful container management capabilities.

By using GCP's managed Kubernetes service, Google Kubernetes Engine (GKE), and integrating GCP services with Kubernetes, organizations can build resilient, scalable, and cost-effective solutions and incorporate them into their workflows.
