Is Your Postgres Query Starved for Memory?

Why not use all your server’s available memory to run all your SQL statements as fast as possible, all the time? This result seems too easy, too good to be true. And it is.

Join the DZone community and get the full member experience.

Join For Freefor years or even decades, i’ve heard about how important it is to optimize my sql statements and database schema. when my application starts to slow down, i look for missing indexes; i look for unnecessary joins; i think about caching results with a materialized view.

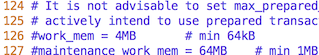

but instead, the problem might be my postgres server was not installed and tuned properly. buried inside the

postgresql.conf

file is an obscure, technical setting called

work_mem

. this controls how much “working memory” your postgres server allocates for each sort or join operation. the default value for this is only 4mb:

if your application ever tries to sort or join more than four megabytes worth of data, this working memory buffer will fill up. instead of just returning the dataset you want, postgres will waste time streaming excess data out to disk, only to read it back again later as the hash join or sort algorithm proceeds.

today, i’ll start with a look at how postgres scales up the hash join algorithm for larger and larger datasets. then, i’ll measure how much slower a hash join query is when the hash table doesn’t fit into the working memory buffer. you’ll learn how to use the

explain analyze

command to find out if your slow query is starved for memory.

hash tables inside postgres

in my last article, i described how postgres implements the hash join algorithm . i showed how postgres scans over all the records in one of the tables from the join and saves them in a hash table.

here’s what a hash table might look like conceptually:

on the left is an array of pointers called buckets . each of these pointers is the head of a linked list, which i show on the right using blue rectangles. the rectangles represent values from one of the tables in the join. postgres groups the values into lists based on their hash values. by organizing the values from one table like this, postgres can later scan over a second table and repeatedly search the hash table to perform the join efficiently. this algorithm is known as a hash join .

postgres’s hash join code gracefully scales up to process larger and larger data sets by increasing the number of buckets. if the target table had more records, postgres would use 2,048 buckets instead of 1,024:

before starting to execute the hash join algorithm, postgres estimates how many records it will need to add to the hash table, using the query plan. then postgres chooses a bucket count that is large enough to fit all of the records.

postgres’s bucket count formula keeps the average linked list length less than 10 (the constant

ntup_per_bucket

in the postgres source code) to avoid iterating over long lists. it also sets the bucket count to a power of two, which allows postgres to use c bitmask operations to assign buckets to hash values. the least significant bits of the hash value for each record becomes the bucket number.

if you’re curious, postgres implements the bucket count formula in a c function called

execchoosehashtablesize

(which you can view

here

):

how large can a postgres hash table grow?

if the table from your join query was even larger, then postgres would use 4,096 buckets instead of 2,048:

in theory, this doubling of the bucket count could continue forever: 8192 buckets, 16384 buckets, etc. with 10 records per linked list, this would accommodate 81,920 values, 163,840 values, etc.

in practice, as the total size of the data set being saved into the hash table continues to increase, postgres will eventually run out of memory. but that doesn’t seem to be an immediate problem, does it? modern server hardware contains tens or even hundreds of gbs; that is plenty of room to hold an extremely large hash table.

but in fact, postgres limits the size of each hash table to only 4mb!

the rectangle i drew around the hash table above is the working memory buffer assigned to that table. regardless of how much memory my server hardware actually has, postgres won’t allow the hash table to consume more than 4mb. this value is the

work_mem

setting found in the

postgresql.conf

file.

at the same time that postgres calculates the number of buckets, it also calculates the total amount of memory it expects the hash table to consume. if this amount exceeds 4mb, postgres divides the hash operation up into a series of batches :

in this example, postgres calculated that it would need up to 8mb to hold the hash table. a larger join query might have many more batches, each holding 4mb of data. like the bucket count, postgres sets the batch count to a power of two, also. the first batch, shown on the left, contains the actual hash table in memory. the second batch, shown on the right, contains the records that won’t fit into the 4mb hash table in the first batch. postgres assigns a batch number to each record, along with the bucket number. then, it saves the records from the first batch into the hash table and streams the remaining data out to disk. each batch gets its own temporary file.

using an algorithm known as the hybrid hash join , postgres first searches the hash table already in memory. then, it streams all of the data back from disk for the next batch, builds another hash table, and searches it, repeating this process for each batch.

note : postgres actually holds a second hash table in memory, called the skew table. for simplicity, i’m not showing this in the diagram. this special hash table is an optimization to handle hash values that occur frequently in the data. postgres saves the skew table inside the same 4mb working memory buffer, so the primary hash table actually has a bit less than 4mb available to it.

measuring your sql statement’s blood pressure

if one of the sql queries in your application is running slowly, use the

explain analyze

to find out what’s going on:

> explain analyze select title, company from publications, authors where author = name;

query plan

-------------------------------------------------------------------------------------------------------------------------------

hash join (cost=2579.00..53605.00 rows=50000 width=72) (actual time=66.820..959.794 rows=21 loops=1)

hash cond: ((authors.name)::text = (publications.author)::text)

-> seq scan on authors (cost=0.00..20310.00 rows=1000000 width=50) (actual time=0.059..267.217 rows=1000000 loops=1)

-> hash (cost=1270.00..1270.00 rows=50000 width=88) (actual time=38.054..38.054 rows=50000 loops=1)

buckets: 4096 batches: 2 memory usage: 2948kb

-> seq scan on publications (cost=0.00..1270.00 rows=50000 width=88) (actual time=0.010..14.211 rows=50000 loops=1)

planning time: 0.489 ms

execution time: 960.482 ms

(8 rows)

postgres’s

explain

command displays the query plan, a tree data structure containing instructions that postgres follows when it executes the query. by using

explain analyze

, we ask postgres to execute the query, also, displaying time and data metrics when it's finished. we can see in this example there were one million records in the authors table and 50 thousand records in the publications table. at the bottom, we see that the join operation took a total of 960ms to finish.

explain analyze

also tells us how many buckets and batches the hash table used:

buckets: 4096 batches: 2 memory usage: 2948kblike my diagram above, this query used two batches: only half of the data fit into the 4mb working memory buffer! postgres saved the other half of the data in the file buffer.

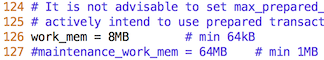

increasing work_mem

now, let’s increase the size of the working memory buffer by editing the

postgresql.conf

file and restarting the postgres server.

first, i stop my postgres server:

$ launchctl unload homebrew.mxcl.postgresql.plist

then i edit

postgresql.conf

:

$ vim /usr/local/var/postgres/postgresql.conf…uncommenting and changing the setting:

finally, i restart my server and repeat the test:

$ launchctl load homebrew.mxcl.postgresql.plist> explain analyze select title, company from publications, authors where author = name;

query plan

-------------------------------------------------------------------------------------------------------------------------------

hash join (cost=1895.00..32705.00 rows=50000 width=72) (actual time=59.224..624.716 rows=21 loops=1)

hash cond: ((authors.name)::text = (publications.author)::text)

-> seq scan on authors (cost=0.00..20310.00 rows=1000000 width=50) (actual time=0.031..146.327 rows=1000000 loops=1)

-> hash (cost=1270.00..1270.00 rows=50000 width=88) (actual time=34.436..34.436 rows=50000 loops=1)

buckets: 8192 batches: 1 memory usage: 5860kb

-> seq scan on publications (cost=0.00..1270.00 rows=50000 width=88) (actual time=0.008..13.382 rows=50000 loops=1)

planning time: 0.481 ms

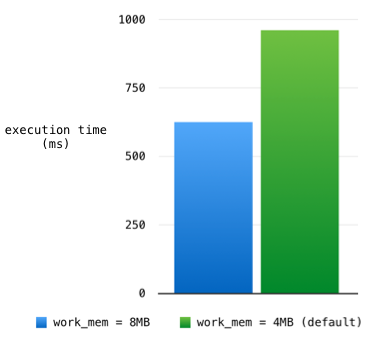

execution time: 625.796 ms

(8 rows)you can see the number of batches is now 1, and the memory usage increased to 5.8mb:

buckets: 8192 batches: 1 memory usage: 5860kbpostgres was able to use a working memory buffer size larger than 4mb. this allowed it to save the entire data set into a single, in-memory hash table and avoid using temporary buffer files.

because of this, the total execution time decreased from 960ms to 625ms:

too good to be true

if memory-intensive postgres sql statements could run so much faster, why does postgres use only 4mb by default for the working memory buffer size? in this example, i increased

work_mem

modestly from 4mb to 8mb — why not increase it to 1gb or 10gb? why not use all of your server’s available memory to run all of your sql statements as fast as possible, all of the time? that’s why you bought that fat box to host your postgres server, isn’t it?

this result seems too easy, too good to be true. and it is.

database servers like postgres are optimized to handle many small, concurrent requests at the same time. each request needs its own working memory buffer. not only that, but each sql statement postgres executes might require multiple memory buffers — one for each join or sort operation the query plan calls for.

my postgres server isn’t entirely dedicated to executing this one example sql statement and nothing else. by increasing the value of

work_mem

, i’ve increased it server-wide for every request, not just for my one slow hash join. given the same amount of total ram available on the server box, increasing

work_mem

means postgres can handle fewer concurrent requests before running out of memory. however, it certainly might be the case that 8mb or some larger value for

work_mem

is appropriate given the amount of memory i have and the number of concurrent connections i expect.

be smart about how you configure your postgres server. don’t blindly accept the default values or guess what they should be at the moment you install postgres. instead, after your application is finished and running in production, look for memory-intensive sql statements. measure the sql queries your application actually executes. take their blood pressure using the

explain analyze

command; you might find they are memory starved!

Published at DZone with permission of Pat Shaughnessy. See the original article here.

Opinions expressed by DZone contributors are their own.

Comments