RSocket vs. gRPC Benchmark

These two go head-to-head in a performance battle based on latency, CPU consumption, QPS, and scalability.

Almost every time I present RSocket to an audience, someone asks: "How does RSocket compare to gRPC?"

Today we are going to find out.

Setting the Stage

RSocket

RSocket brings reactive semantics to application networking. It is a networking protocol that enforces backpressure and other Reactive Streams concepts end-to-end.

gRPC

gRPC is designed to solve the problem of polyglot RPC. It has two parts: the protobuf IDL and the HTTP/2 networking protocol.

Apples to Apples?

From the design and components, we know the apples-to-apples comparison should be RSocket vs. HTTP/2.

However, how would you compare two protocols effectively? One approach is to benchmark them with the same application. To make applications run on the protocols, we need an RPC SDK.

RSocket is agnostic about encoding. It supports JSON, protobuf, and other formats. In this benchmark, we’ll use RSocket with protobuf, Java RPC, and MessagePack. For gRPC, we'll use only protobuf, since it is already proven to be the best-performing encoder for gRPC.

Context

Before the benchmark, let’s first compare the use cases of the two protocols. RSocket is designed for application-to-application communication, whereas HTTP/2 is still designed to handle web traffic.

But what does “designed to handle web traffic” mean? It means there is a clear distinction between client and server, and the conversation style is mostly request/response, with the possibility of streaming. Remember that TCP itself does not impose that client/server distinction. With HTTP/2, it is hard for the server to make reverse requests to the client, let alone to do so over the same socket connection.

Application-to-application communication, on the other hand, is quite different. Applications are peers having conversations. There is no hard line between who is the server and who is the client, especially in a microservices architecture.

To cover most conversation scenarios, RSocket implements four types of communication models:

- Request/response (stream of 1)

- Request/stream (finite stream of many)

- Fire-and-forget (no response)

- Channel (bi-directional streams)

Not only is RSocket multiplexed, but the sender and receiver can switch roles while retaining the same socket connection.
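As a rough illustration of the four models, the sketch below expresses each one as a method on a hypothetical `Peer` interface built only from JDK types. The names `Peer`, `EchoPeer`, and `InteractionModelsDemo` are assumptions made for this example. This is not the rsocket-java API, and the JDK `Stream` type used here does not carry backpressure the way a Reactive Streams publisher would.

```java
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.stream.Collectors;
import java.util.stream.Stream;

final class InteractionModelsDemo {
    public static void main(String[] args) {
        EchoPeer peer = new EchoPeer();

        // Request/response: exactly one reply
        System.out.println(peer.requestResponse("ping").join());

        // Request/stream: one request, a finite stream of replies
        System.out.println(peer.requestStream("tick").collect(Collectors.toList()));

        // Fire-and-forget: nothing comes back to the caller
        peer.fireAndForget("log-line");
        System.out.println(peer.received);

        // Channel: a stream goes in, a stream comes out
        System.out.println(
            peer.requestChannel(Stream.of("a", "b")).collect(Collectors.toList()));
    }
}

// Hypothetical interface: one method per interaction model.
interface Peer {
    CompletableFuture<String> requestResponse(String payload); // stream of 1
    Stream<String> requestStream(String payload);              // finite stream of many
    void fireAndForget(String payload);                        // no response
    Stream<String> requestChannel(Stream<String> payloads);    // bi-directional streams
}

// In-memory stand-in for a remote peer; no networking is involved.
final class EchoPeer implements Peer {
    final List<String> received = new CopyOnWriteArrayList<>();

    @Override
    public CompletableFuture<String> requestResponse(String payload) {
        return CompletableFuture.completedFuture("echo:" + payload);
    }

    @Override
    public Stream<String> requestStream(String payload) {
        return Stream.of(1, 2, 3).map(i -> payload + "#" + i);
    }

    @Override
    public void fireAndForget(String payload) {
        received.add(payload); // recorded locally, never acknowledged
    }

    @Override
    public Stream<String> requestChannel(Stream<String> payloads) {
        return payloads.map(p -> "echo:" + p);
    }
}
```

Because both ends of a connection could implement the same interface, either side could act as the requester, which is the role switching described above.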

Benchmark Test

Setup

- Two servers, each with a 4-core Intel(R) Xeon(R) Platinum 8163 CPU @ 2.50GHz and 8 GB of memory

JVM Configuration

-Xmx2g -Xms2g -XX:+AlwaysPreTouch -XX:+UseStringDeduplication

gRPC Configuration

- gRPC windowupdate = 1 * 1024 * 1024 * 1024
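To put that number in perspective, here is a small arithmetic sketch. It assumes the setting is the HTTP/2 flow-control window expressed in bytes, which the configuration line does not state explicitly. The class and constant names are made up for this example. The default initial window and the maximum window are the values defined by the HTTP/2 specification.

```java
final class FlowControlWindow {
    // The benchmark setting, assumed to be in bytes: 1 * 1024 * 1024 * 1024 = 2^30
    static final int WINDOW_BYTES = 1 * 1024 * 1024 * 1024;

    // HTTP/2 default initial flow-control window: 65,535 bytes
    static final int HTTP2_DEFAULT_WINDOW_BYTES = 65_535;

    // Largest flow-control window HTTP/2 allows: 2^31 - 1 bytes
    static final int HTTP2_MAX_WINDOW_BYTES = Integer.MAX_VALUE;

    public static void main(String[] args) {
        System.out.println("window bytes        = " + WINDOW_BYTES);
        System.out.println("window in GiB       = " + (WINDOW_BYTES >> 30));
        System.out.println("times the default   = "
            + (WINDOW_BYTES / HTTP2_DEFAULT_WINDOW_BYTES));
        System.out.println("within HTTP/2 limit = "
            + (WINDOW_BYTES <= HTTP2_MAX_WINDOW_BYTES));
    }
}
```

Under that assumption, the window is 1 GiB, thousands of times larger than the default, so flow control is unlikely to be what throttles gRPC in these runs.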

Sampling Rule

- The best result out of 10 tries
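The sampling rule can be sketched as follows. This is not the Netifi tool's code; `BestOfRuns`, `qps`, and `bestQps` are hypothetical names, the workload in `main` is a placeholder loop rather than real network calls, and "best" is taken to mean the highest QPS (for latency, it would be the lowest value instead).

```java
import java.util.function.LongSupplier;

final class BestOfRuns {
    // QPS for one run: completed requests divided by elapsed seconds.
    static double qps(long requests, long elapsedNanos) {
        return requests / (elapsedNanos / 1_000_000_000.0);
    }

    // Executes the workload `tries` times and keeps the highest QPS seen.
    // The supplier runs one full pass and returns its elapsed time in nanoseconds.
    static double bestQps(int tries, long requestsPerRun, LongSupplier runOnce) {
        double best = 0.0;
        for (int i = 0; i < tries; i++) {
            long elapsedNanos = runOnce.getAsLong();
            best = Math.max(best, qps(requestsPerRun, elapsedNanos));
        }
        return best;
    }

    public static void main(String[] args) {
        long requests = 100_000;
        double best = bestQps(10, requests, () -> {
            long start = System.nanoTime();
            long sink = 0;
            for (long i = 0; i < requests; i++) {
                sink += i; // placeholder for sending one request
            }
            if (sink < 0) {
                throw new IllegalStateException("unreachable; keeps the loop live");
            }
            return Math.max(1, System.nanoTime() - start); // avoid dividing by zero
        });
        System.out.printf("best of 10: %.0f QPS%n", best);
    }
}
```

Taking the best of several tries reduces the influence of warm-up and background noise, at the cost of reporting an optimistic number rather than a typical one.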

Tool

The benchmark package is a comparison tool developed by Netifi. It runs on the Java stack.

Heap vs. Direct

In some of the results, we'll see a heap vs. direct comparison, which matters mostly for Java applications under high load. On the charts, heap results carry the _h tag and direct (non-heap) results carry the _d tag.
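For readers less familiar with the distinction, here is a minimal sketch using the JDK's `java.nio.ByteBuffer`. It is an assumption that this mirrors what the benchmark toggles; the tool may use a different buffer abstraction. `HeapVsDirect` and `payload` are names invented for the example.

```java
import java.nio.ByteBuffer;

final class HeapVsDirect {
    // Builds a zero-filled payload of `size` bytes, on-heap or off-heap.
    static ByteBuffer payload(boolean direct, int size) {
        ByteBuffer buf = direct
            ? ByteBuffer.allocateDirect(size) // native memory outside the Java heap
            : ByteBuffer.allocate(size);      // backed by a byte[] on the Java heap
        for (int i = 0; i < size; i++) {
            buf.put((byte) 0);
        }
        buf.flip(); // switch from writing to reading
        return buf;
    }

    public static void main(String[] args) {
        ByteBuffer heap = payload(false, 16);  // 16 bytes, like the small-response tests
        ByteBuffer direct = payload(true, 16);

        System.out.println("heap:   isDirect=" + heap.isDirect()
            + " remaining=" + heap.remaining());
        System.out.println("direct: isDirect=" + direct.isDirect()
            + " remaining=" + direct.remaining());
    }
}
```

Heap buffers are managed by the garbage collector and usually have to be copied into native memory before socket I/O, while direct buffers can avoid that copy but are more expensive to allocate. That trade-off is why the difference tends to show up at high load.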

Results:

The two meaningful benchmarks are throughput (QPS) and latency. Here are the results under different loads:

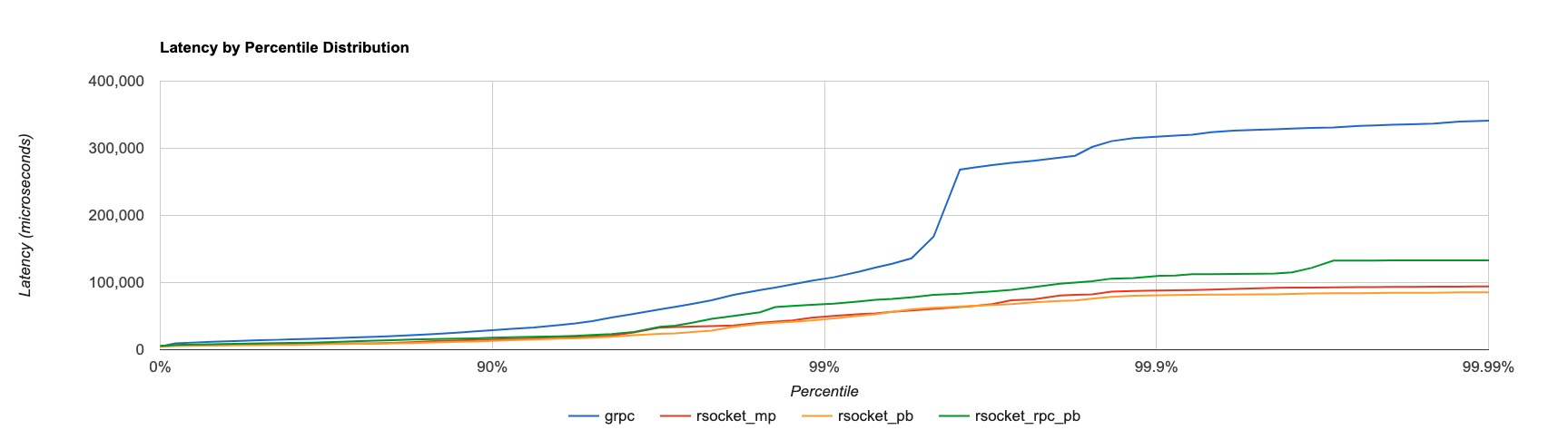

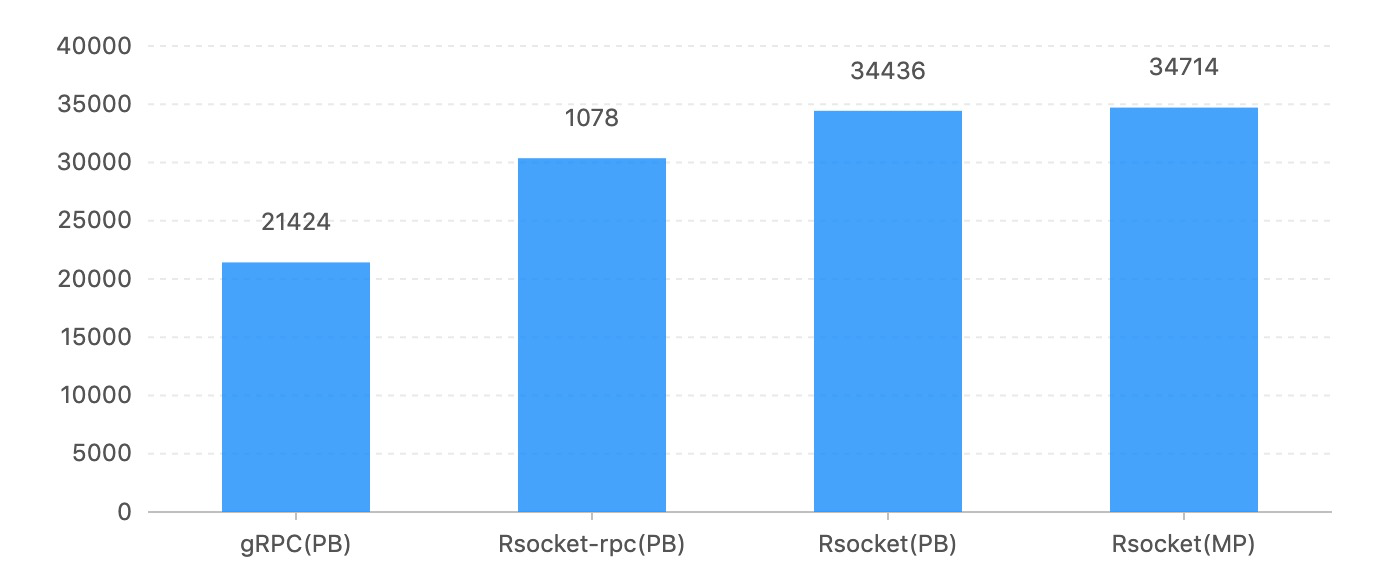

100,000-request_32-concurrency_16-conns_16bytes-response

Latency:

QPS:

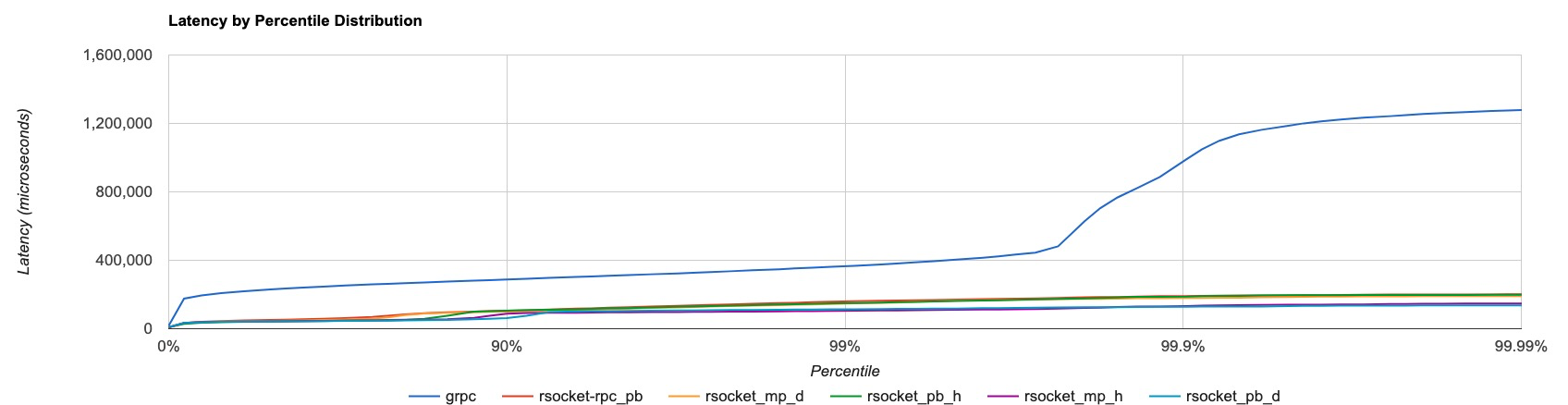

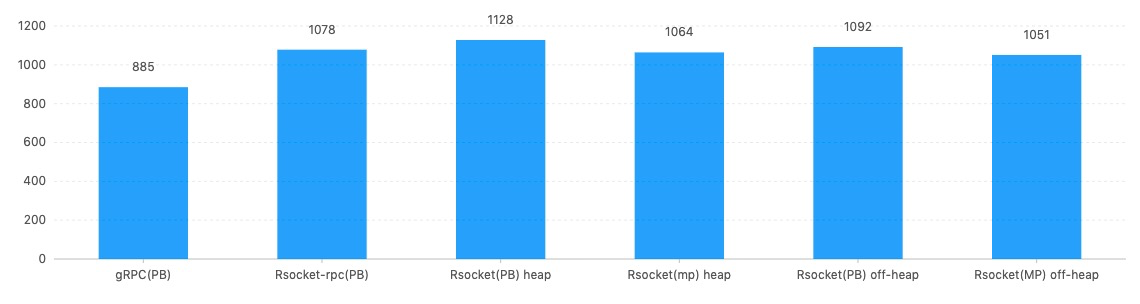

10,000,000-request_512-concurrency_16-conns_16bytes-response

Latency:

QPS:

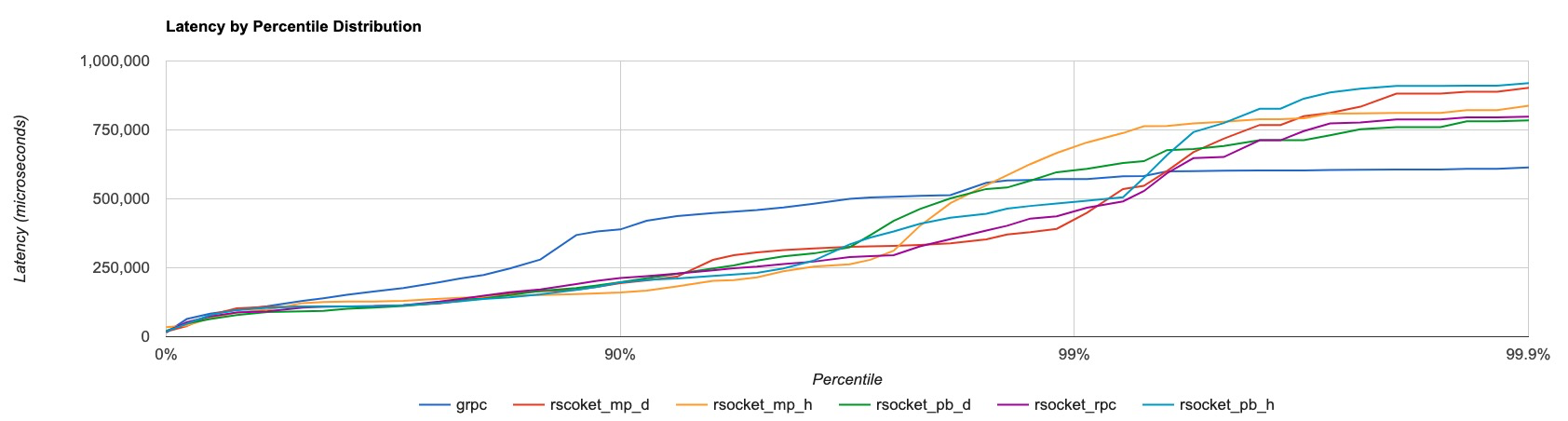

2,000-request_16-concurrency_16-conns_131072bytes-response

Latency:

QPS:

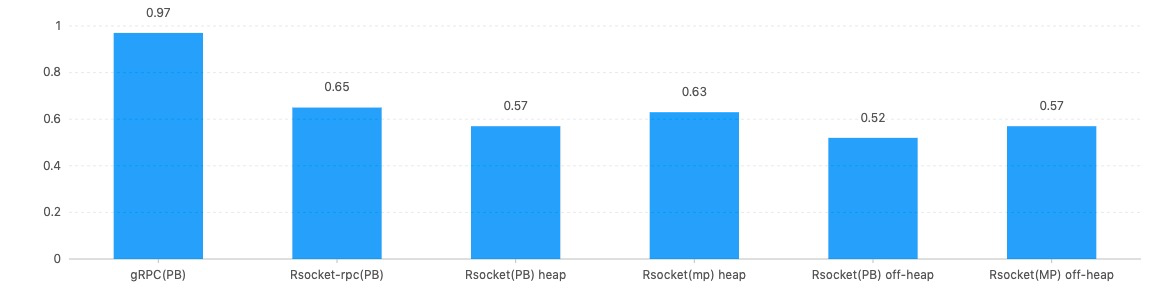

CPU

We also measured CPU usage during the 10,000,000-request_512-concurrency_16-conns_16bytes-response test. Using a Java profiling tool, we got the following results:

Conclusion

It’s pretty obvious that, in the Java version, the RSocket SDK wins hands down against gRPC. Across QPS, latency, CPU consumption, and scalability, RSocket performs better than gRPC in every category.

Reactive gRPC?

The last question we should ask is: "What happens when gRPC goes reactive?" To answer this question, I recommend the book Hands-On Reactive Programming in Spring 5 by Oleh Dokuka and Igor Lozynskyi. Oleh Dokuka is one of the main contributors to the reactive gRPC project. In the book, there is a chapter comparing reactive gRPC vs. RSocket. And I quote: “With the only difference being that it supports the flow control with a higher granularity. Since gRPC is built on top of HTTP/2, the framework employs HTTP/2 flow control as the building block for providing a fine-grained backpressure control. Nevertheless, the flow control still relies on the sliding window size in bytes, so the backpressure control on the logical elements' level granularity is left uncovered.”

So basically, HTTP/2 cannot be “truly” reactive, even though gRPC’s performance may improve a lot with a reactive implementation.
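To make the granularity point concrete, the sketch below uses the JDK's `java.util.concurrent.Flow` API, not RSocket or gRPC, to show what demand expressed in logical elements looks like: the subscriber asks for a number of items, regardless of how many bytes each item occupies. `ElementDemandDemo` and `BatchingSubscriber` are names invented for this example.

```java
import java.util.List;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.Flow;
import java.util.concurrent.SubmissionPublisher;
import java.util.concurrent.TimeUnit;

final class ElementDemandDemo {
    // Subscriber that signals demand in elements, `batch` items at a time.
    static final class BatchingSubscriber implements Flow.Subscriber<String> {
        final List<String> received = new CopyOnWriteArrayList<>();
        final CountDownLatch done = new CountDownLatch(1);
        private final int batch;
        private Flow.Subscription subscription;
        private int remainingInBatch;

        BatchingSubscriber(int batch) { this.batch = batch; }

        @Override public void onSubscribe(Flow.Subscription s) {
            subscription = s;
            remainingInBatch = batch;
            s.request(batch); // "send me `batch` items", not "send me N bytes"
        }

        @Override public void onNext(String item) {
            received.add(item);
            if (--remainingInBatch == 0) { // batch consumed, ask for the next one
                remainingInBatch = batch;
                subscription.request(batch);
            }
        }

        @Override public void onError(Throwable t) { done.countDown(); }
        @Override public void onComplete() { done.countDown(); }
    }

    // Publishes all items, waits for completion, and returns what was received.
    static List<String> run(int batch, List<String> items) {
        BatchingSubscriber subscriber = new BatchingSubscriber(batch);
        try (SubmissionPublisher<String> publisher = new SubmissionPublisher<>()) {
            publisher.subscribe(subscriber);
            items.forEach(publisher::submit);
        } // close() completes the stream once pending items are delivered
        try {
            if (!subscriber.done.await(5, TimeUnit.SECONDS)) {
                throw new IllegalStateException("timed out waiting for completion");
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return subscriber.received;
    }

    public static void main(String[] args) {
        // A 16-byte item and a much larger one each count as exactly one element.
        System.out.println(run(2, List.of("tiny", "x".repeat(131072), "tiny-again")).size());
    }
}
```

With a byte-based sliding window, the large item and the small ones would consume very different amounts of the window, so the receiver cannot say "give me two more messages" directly. That is the gap the quoted passage describes.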
