8 Steps to Safely Migrate a Database Without Downtime

Aviran Mordo, Head of Engineering at Wix, walks through a safe way to migrate your database without downtime and with minimum disruption to production.

Join the DZone community and get the full member experience.

Join For Freewhen practicing continuous delivery, it is nessesary sometimes to safely migrate one's database to another database without downtime. the question is how to do it safely with minimum disruption to production.

at wix.com we do database migrations several times a year (on different products) with much success. you can use this pattern to migrate between two different databases, for instance between mysql and mongodb or between two schemas in the same database.

the idea of this pattern is to do a lazy database migration using feature toggles to control the behaviour of your application and progressing through the phases of the migration.

let’s assume two databases you want to migrate from the “old” database to the “new” database.

build and deploy the “new” database schema into production

in this phase your system stays the same, nothing changes other than the fact that you have deployed a new database which you can start using when ready.

add a new dao to your app that writes to the “new” database

you may need to refactor your application to have a single (or very few) point(s) in which you access the database. at the points you access the database or dao you add a multi-state feature toggle that will control the flow of writing to the database. the first state of this feature toggle is “use the old database”. in this state your code ignores the “new” database and simply uses the “old” one as always.

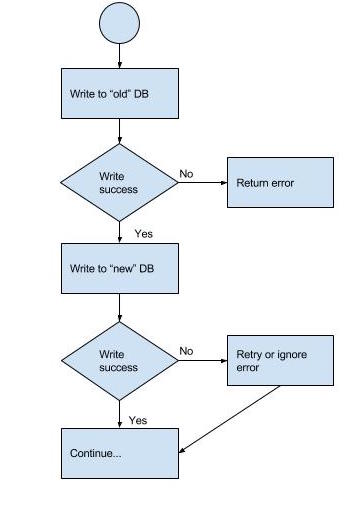

start writing to the “new” database but use the “old” one as the primary

we are now getting into the distributed transaction world because you can never be 100% sure that writing to 2 databases can succeed or fail at the same time. when your code performs a write operation it first writes to the “old” database and if it succeeds it writes to the “new” database as well. notice that in this step the “old” database is in a consistent state while the “new” database can potentially be inconsistent since the writes to it can fail while the “old” database write succeeded.

it is important to let this step run for a while (several days or even weeks) before moving to the next step. this will give you the confidence that the write path of your new code works as expected and that the “new” database is configured correctly with all the replications in place.

at any time you decide that something is not working you can simply change the feature toggle back to the previous state and stop writing to the “new” database. you can make modifications to the new schema or even drop it if you need as all the data is still in the “old” database and in a consistent state.

enable the read path. change the feature toggle to enable reading from both databases.

in this step the it is important to remember that “old” database is the consistent one and should still be treated as the authoritative data.

since there are many read patterns i’ll describe just a couple here but you can adjust it to your own use case.

in case you have immutable data and you know the record id you first read from the “new” database, and in case you did not find the record you need to fall back to the “old” database and look for the record there. only if both databases don’t have the record you return a “not found” to the client. otherwise if the record is found you return the result preferring the “new” database.

if your data is mutable you’ll need to perform the read operation from both databases and prefer the “new” one only if the timestamp is equal to the record in the “old” database. remember in this phase only the “old” database is considered consistent.

if you don’t know the record id and need to fetch unknown number of records you basically need to query both databases and merge the results coming from both dbs.

whatever your read pattern is, remember that in this case the consistent database is the “old” one, but in this phase you need to read and use the “new” database read path as much as you can, in order to test your application and your new dao on a real production environment. in this phase you may find out that you are missing some indices or need more read replicas.

let this phase run for a while before moving to the next phase. like in the previous phase, you can always turn the feature toggle back to the previous states without a fear of data loss.

another thing to note that since you are reading data from two schemas you will probably need to maintain backward and forward compatibility for the two data sets.

making the “new” database the primary one. change the feature toggle to first write to the new database (you still read from both but now prefer the new db).

this is a very important step. in this step you already run the write and read path of your code for a while now and when you feel comfortable you now switch roles and making the “new” database the consistent one and the “old” as a not consistent. instead of first writing to the “old” database first, you now write to the “new” database first and do a “best effort” writing to the old database. this phase also requires you to change the read priority. up until now we considered the “old” database as having the authoritative data but now you would prefer the data in the “new” database (of course you need to consider the record timestamp because it is authoritative only for data that is written since you switched to step 5).

this is also the point where you should try as much as you can to avoid switching back the feature toggle to the previous state as you’ll need to run a manual migration script to compare the two databases as writes to the “old” one may not have succeeded (remember distributed transaction). i call this “ the point of no return “.

stop writing to the “old” database (read from both)

change the feature toggle again to now stop writing to the “old” database having only a write path with the “new” database. since the “new” database still does not have all the records you will still need to read from the “old” database as well as from the new and merge the data coming from both.

this is an important step as it basically transforms the “old” database to a “read-only” database.

if you feel comfortable enough you can do step 5 and 6 in one go.

eagerly migrate data from the “old” database to the “new” one

now that the “old” database is in a “read-only” mode it is very easy to write a migration script to migrate all the records from the “old” database that are not present in the “new” database.

delete the “old” dao

this is the last step where all the data is migrated to the “new” database you can now safely remove the old dao from your code and leave only the new dao that uses the new database. you now of course stop reading from the “old” db and remove the data merging code that handle merging data from both daos.

this is it you are done and safely migrated the data between two databases without downtime.

side note: at wix we usually run steps 3 and 4 for at least 2 weeks each and sometimes even a month before moving on to the next step. examples for issues we had encounter during these steps were:

-

on the write path we were holding large objects in memory which caused gc storms during peak traffic.

-

replications were not configured/working properly.

-

missing proper monitoring.

-

on the read path we had issues like missing index and an inefficient data model that caused poor performance, which let us to rethink our data model for better read performance.

originally published at http://www.aviransplace.com/2015/12/15/safe-database-migration-pattern-without-downtime/

Opinions expressed by DZone contributors are their own.

Comments