Securing Access, Encryption, and Storage Keys

Cloud platforms provide customers with technology and tools to protect their assets, including the most important one: data. At the time of writing, there's a lot of debate about who's responsible for protecting data. Generally, however, the company that is the legal owner of the data has to make sure that it's compliant with (international) laws and standards. In the UK, companies have to adhere to the Data Protection Act, and in the European Union, all companies have to be compliant with the General Data Protection Regulation (GDPR).

This article is an excerpt from my book, Multi-Cloud Strategy for Cloud Architects, Second Edition.

Both the Data Protection Act and GDPR deal with privacy. International standards ISO/IEC 27001:2013 and ISO/IEC 27002:2013 are security frameworks that cover data protection. These standards determine that all data must have an owner so that it's clear who's responsible for protecting the data. In short, the company that stores data on a cloud platform still owns that data and is therefore responsible for data protection.

To secure data on cloud platforms, companies have to focus on two aspects:

- Encryption

- Access, using authentication and authorization

These are just security concerns. Enterprises also need to be able to ensure reliability. They need to be sure that, for instance, keys are kept in a different place from the data itself and that even if a key vault is not accessible for technical reasons, there's still a secure way to access the data. An engineer can't simply drive to the data center with a disk or a USB device to retrieve the data. How do Azure, AWS, and GCP take care of this? We will explore this in the following sections.

Using Encryption and Keys in Azure

In Azure, the user writes data to blob storage. The storage is protected with a storage key that is automatically generated. The storage keys are kept in a key vault outside the subnet where the storage itself resides. But the key vault does more than just store the keys. It also rotates the keys by regenerating them periodically, and it provides shared access signature (SAS) tokens to access the storage account. The concept is shown in the following diagram:

Figure 1: Concept of Azure Key Vault
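
To make the relationship between a storage key, a signed access token, and key rotation more concrete, here is a minimal sketch in Python. It is not the Azure SAS format or the Key Vault API: real SAS tokens use a documented string-to-sign and URL query parameters. The function names (`new_storage_key`, `issue_token`, `verify_token`) and the token layout are assumptions made up for this illustration. The only point it shows is that a token signed with a storage key stops validating once it expires or once the key is regenerated.

```python
import base64
import hashlib
import hmac
import secrets
import time


def new_storage_key() -> bytes:
    """Generate a random 256-bit key (stand-in for an auto-generated storage key)."""
    return secrets.token_bytes(32)


def issue_token(storage_key, resource, permissions, ttl_seconds, now=None):
    """Sign 'resource|permissions|expiry' with the storage key (hypothetical layout)."""
    issued_at = time.time() if now is None else now
    expiry = int(issued_at + ttl_seconds)
    payload = f"{resource}|{permissions}|{expiry}"
    signature = hmac.new(storage_key, payload.encode(), hashlib.sha256).digest()
    return payload + "|" + base64.urlsafe_b64encode(signature).decode()


def verify_token(storage_key, token, now=None):
    """Accept the token only if the signature matches and it has not expired."""
    payload, _, signature_b64 = token.rpartition("|")
    expected = hmac.new(storage_key, payload.encode(), hashlib.sha256).digest()
    try:
        provided = base64.urlsafe_b64decode(signature_b64)
    except ValueError:
        return False
    if not hmac.compare_digest(expected, provided):
        return False
    expiry = int(payload.rsplit("|", 1)[1])
    current = time.time() if now is None else now
    return current < expiry


if __name__ == "__main__":
    key = new_storage_key()
    token = issue_token(key, "container/report.csv", "r", ttl_seconds=60)
    print("valid with current key:", verify_token(key, token))
    rotated_key = new_storage_key()  # rotation: the old key is replaced
    print("valid after rotation:", verify_token(rotated_key, token))
```

In this sketch, rotation immediately invalidates every token signed with the old key, which is one reason short-lived tokens and scheduled rotation are usually combined.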

Microsoft highly recommends the key vault for managing encryption keys. Encryption is a complex domain in Azure, since Microsoft offers a wide variety of encryption services. Disks in Azure can be encrypted using BitLocker for Windows systems or DM-Crypt for Linux systems. With Azure Storage Service Encryption (SSE), data can automatically be encrypted before it's stored in the blob. SSE uses AES-256. For Azure SQL databases, Azure offers encryption for data at rest with Transparent Data Encryption (TDE), which supports AES-256 and Triple Data Encryption Standard (3DES).

Using Encryption and Keys in AWS

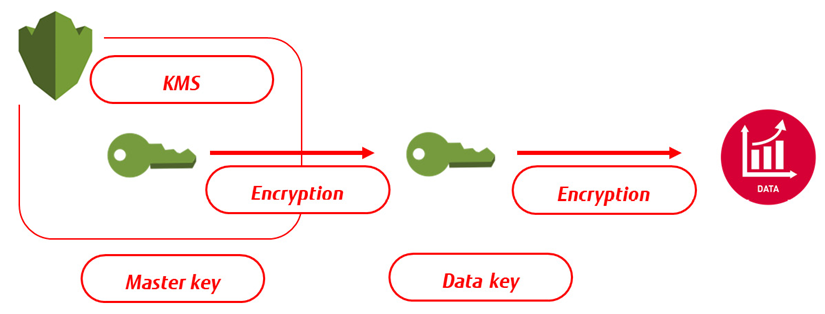

Like Azure, AWS has a key vault solution, called Key Management Service (KMS). The principles are also very similar, mainly using server-side encryption. Server-side means that the cloud provider is requested to encrypt the data before it's stored in a storage service on that cloud platform. Data is decrypted when a user retrieves it. The other option is client-side encryption, where the customer takes care of the encryption process before the data is stored.

The storage solution in AWS is S3. If a customer uses server-side encryption in S3, AWS provides S3-managed keys (SSE-S3). Each object is encrypted with a unique data encryption key (DEK), and that DEK is itself encrypted with a master key, the key-encryption key (KEK). The master key is rotated regularly. For encryption, AWS uses AES-256.
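
The DEK/KEK pattern described above is often called envelope encryption, and a small sketch may help. The following Python example is an illustration only and makes several assumptions: it uses a toy XOR stream cipher built from SHA-256 as a stand-in for AES-256 (so that it runs with the standard library alone and must not be used for real data), and the function names are invented for this example. The `rotate_kek` step shows one common way a master key can be rotated without re-encrypting the data; it is not a description of how AWS implements rotation internally.

```python
import hashlib
import secrets


def _keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Derive a keystream from SHA-256 in counter mode (toy stand-in for AES-256)."""
    out = bytearray()
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return bytes(out[:length])


def toy_encrypt(key: bytes, plaintext: bytes) -> bytes:
    """Return a fresh 16-byte nonce followed by the XOR-encrypted plaintext."""
    nonce = secrets.token_bytes(16)
    stream = _keystream(key, nonce, len(plaintext))
    return nonce + bytes(a ^ b for a, b in zip(plaintext, stream))


def toy_decrypt(key: bytes, blob: bytes) -> bytes:
    """Split off the nonce and reverse toy_encrypt."""
    nonce, body = blob[:16], blob[16:]
    stream = _keystream(key, nonce, len(body))
    return bytes(a ^ b for a, b in zip(body, stream))


def encrypt_object(kek: bytes, data: bytes) -> dict:
    """Encrypt data with a unique DEK, then wrap that DEK with the KEK."""
    dek = secrets.token_bytes(32)
    return {
        "ciphertext": toy_encrypt(dek, data),
        "wrapped_dek": toy_encrypt(kek, dek),
    }


def decrypt_object(kek: bytes, obj: dict) -> bytes:
    """Unwrap the DEK with the KEK, then decrypt the data with the DEK."""
    dek = toy_decrypt(kek, obj["wrapped_dek"])
    return toy_decrypt(dek, obj["ciphertext"])


def rotate_kek(old_kek: bytes, new_kek: bytes, obj: dict) -> dict:
    """Re-wrap the DEK under a new KEK; the data ciphertext is left untouched."""
    dek = toy_decrypt(old_kek, obj["wrapped_dek"])
    return {
        "ciphertext": obj["ciphertext"],
        "wrapped_dek": toy_encrypt(new_kek, dek),
    }
```

The design choice worth noting is that only the small wrapped DEK changes when the master key is rotated, so rotation stays cheap even for very large objects.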

AWS offers some additional services with customer master keys (CMKs). These keys are also managed in KMS, providing an audit trail to see who has used the key and when. Lastly, there's the option to use customer-provided keys (SSE-C), where the customer manages the key themselves. The concept of KMS using the CMK in AWS is shown in the following diagram:

Figure 2: Concept of storing CMKs in AWS KMS

Both Azure and AWS have automated a lot in terms of encryption. They use different names for the key services, but the main principles are quite similar. The same holds for GCP, which is discussed in the next section.

Using Encryption and Keys in GCP

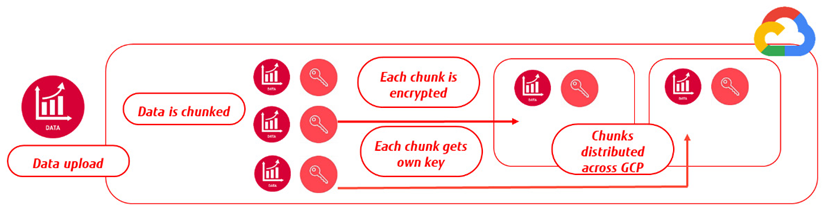

In GCP, all data that is stored in Cloud Storage is encrypted by default. Just like Azure and AWS, GCP offers options to manage keys. These can be supplied and managed by Google or by the customer. Keys are stored in Cloud Key Management Service. If the customer chooses to supply and/or manage keys themselves, these will act as an added layer on top of the standard encryption that GCP provides. That is also valid in the case of client-side encryption – the data is sent to GCP in an encrypted format, but still, GCP will execute its own encryption process, as with server-side encryption. GCP Cloud Storage encrypts data with AES-256.

The encryption process itself is similar to AWS and Azure and uses DEKs and KEKs. When a customer uploads data to GCP, the data is divided into chunks. Each of these chunks is encrypted with its own DEK. These DEKs are then sent to the KMS, where they are encrypted (wrapped) with a master key, the KEK. The concept is shown in the following diagram:

Figure 3: Concept of data encryption in GCP
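
The chunk-based flow can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not Google's implementation: the chunk size is deliberately tiny, `toy_cipher` is a standard-library XOR stream cipher standing in for AES-256 and must not be used for real data, and the record layout and the names `upload` and `download` are hypothetical.

```python
import hashlib
import secrets

CHUNK_SIZE = 8  # hypothetical and deliberately tiny, to make the chunking visible


def toy_cipher(key: bytes, nonce: bytes, data: bytes) -> bytes:
    """XOR data with a SHA-256-derived keystream; the same call encrypts and decrypts."""
    stream = b"".join(
        hashlib.sha256(key + nonce + i.to_bytes(8, "big")).digest()
        for i in range(len(data) // 32 + 1)
    )
    return bytes(a ^ b for a, b in zip(data, stream))


def upload(kek: bytes, data: bytes) -> list:
    """Split data into chunks, encrypt each with its own DEK, wrap each DEK with the KEK."""
    records = []
    for start in range(0, len(data), CHUNK_SIZE):
        chunk = data[start:start + CHUNK_SIZE]
        dek = secrets.token_bytes(32)
        chunk_nonce = secrets.token_bytes(16)
        wrap_nonce = secrets.token_bytes(16)
        records.append({
            "chunk_nonce": chunk_nonce,
            "ciphertext": toy_cipher(dek, chunk_nonce, chunk),
            "wrap_nonce": wrap_nonce,
            "wrapped_dek": toy_cipher(kek, wrap_nonce, dek),
        })
    return records


def download(kek: bytes, records: list) -> bytes:
    """Unwrap each DEK with the KEK, decrypt each chunk, and reassemble the data."""
    parts = []
    for record in records:
        dek = toy_cipher(kek, record["wrap_nonce"], record["wrapped_dek"])
        parts.append(toy_cipher(dek, record["chunk_nonce"], record["ciphertext"]))
    return b"".join(parts)
```

Because every chunk has its own DEK, a leaked DEK exposes only one chunk, while the KEK never has to leave the key management service.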

Implementing Encryption in OCI and Alibaba Cloud

As in the major public clouds discussed above, OCI offers services to encrypt data at rest using an encryption key that is stored in OCI's Key Management Service. Companies can use their own keys too, but these must be compliant with the Key Management Interoperability Protocol (KMIP). The encryption process is similar to that of the other clouds. First, a master encryption key is created that is used to encrypt and decrypt all data encryption keys. These are the KEKs and DEKs that we discussed earlier. Keys can be created from the OCI console, the command-line interface, or the SDK.

Data can be encrypted and stored in block storage volumes, object storage, or databases. Like the other providers, OCI recommends key rotation as a best practice.

Keys that are generated by customers themselves are kept in the OCI Vault and stored across a resilient cluster.

The steps are the same for Alibaba Cloud. Keys are created from the console or CLI, and the encrypted data is then stored in one of the storage services that Alibaba Cloud provides: OSS, Apsara File Storage, or databases.

So far, we have been looking at data itself, the storage of data, the encryption of that data, and securing access to data. One of the tasks of an architect is to translate this into principles. This is a task that needs to be performed together with the CIO or the chief information security officer (CISO). The minimal principles to be set are as follows:

- Encrypt all data in transit (end-to-end)

- Encrypt all business-critical or sensitive data at rest

- Apply data loss prevention (DLP) and have a matrix that clearly shows what critical and sensitive data is and to what extent it needs to be protected

Finally, develop use cases and test the data protection scenarios. After creating the data model, defining the DLP matrix, and applying the data protection controls, an organization has to test whether a user can create and upload data and what other authorized users can do with that data: read, modify, or delete. This applies not only to users but also to data usage in other systems and applications. Data integration tests are, therefore, also a must.
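
As an illustration of what such a test scenario could look like, the sketch below models a small access matrix in Python and checks a few expected outcomes. The data classifications, role names, and the `is_allowed` helper are all hypothetical. A real matrix would come from the organization's own DLP policy and be enforced by the cloud provider's IAM controls rather than by application code like this.

```python
# Hypothetical matrix: data classification -> role -> permitted actions.
ACCESS_MATRIX = {
    "public": {
        "owner": {"read", "modify", "delete"},
        "analyst": {"read", "modify"},
        "guest": {"read"},
    },
    "sensitive": {
        "owner": {"read", "modify", "delete"},
        "analyst": {"read"},
    },
    "business-critical": {
        "owner": {"read", "modify"},
    },
}


def is_allowed(classification: str, role: str, action: str) -> bool:
    """Deny by default: anything not listed in the matrix is refused."""
    return action in ACCESS_MATRIX.get(classification, {}).get(role, set())


def run_scenarios() -> list:
    """Compare expected outcomes with the matrix and return any mismatches."""
    scenarios = [
        ("sensitive", "analyst", "read", True),
        ("sensitive", "analyst", "delete", False),
        ("sensitive", "guest", "read", False),
        ("business-critical", "owner", "delete", False),
    ]
    return [
        (classification, role, action)
        for classification, role, action, expected in scenarios
        if is_allowed(classification, role, action) != expected
    ]


if __name__ == "__main__":
    failures = run_scenarios()
    print("all scenarios passed" if not failures else f"failed: {failures}")
```

Writing the expected outcomes down as explicit scenarios makes it easier to rerun the same checks whenever the matrix or the underlying IAM policies change.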

Data policies and encryption are important, but one thing should not be neglected: encryption does not protect companies from misplaced identity and access management (IAM) credentials. Thinking that data is fully protected because it's encrypted and stored safely gives a false sense of security. Security really starts with authentication and authorization.

Published at DZone with permission of Jeroen Mulder. See the original article here.
