Troubleshooting the Performance of Vert.x Applications, Part III — Troubleshooting Event Loop Delays

In the previous entry to this series, we reviewed several techniques that help you to prevent event loop delays; however, even the best programmer makes mistakes. What should you do when your Vert.x application doesn't perform as expected? How do you find out what part of your code is blocking the event loop threads? In the final part of the series, we are going to focus on troubleshooting event loop delays.

The event loop thread model is vastly different from the thread-per-request model employed by standard JEE or Spring frameworks. In my experience, it takes developers some time to wrap their heads around it, and at the beginning they tend to make the mistake of introducing blocking calls into the event loop's code path. In the following sections, we will discuss several techniques for troubleshooting such situations.

Blocked Thread Checker

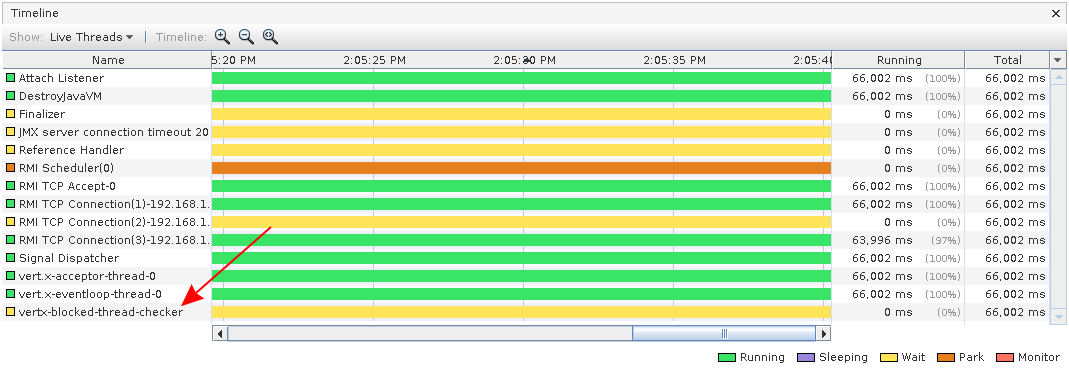

Vert.x comes with a built-in mechanism to detect delays on the event loop and worker threads by checking the execution time of handlers that you registered with the Vert.x APIs. This mechanism operates in two steps. In the first step, Vert.x saves the timestamp of the moment when a handler starts executing. This start timestamp is saved to storage attached to the thread that is executing the handler. Whenever the execution of the handler has completed, the timestamp is reset. In the second step, Vert.x periodically checks the timestamps using a dedicated thread called vertx-blocked-thread-checker. This thread is spawned by Vert.x during the creation of the Vert.x instance, for example when you call Vertx.vertx(). The vertx-blocked-thread-checker thread can be seen in the thread list of VisualVM.

The blocked thread checker serves as a watchdog that periodically checks the Vert.x threads. It iterates over all Vert.x threads, and for each thread it subtracts the thread's start timestamp from the current time to compute how long the thread has already been executing the handler code. If the execution time exceeds the specified threshold, a warning message is written to the logs:

    Mar 01, 2019 11:53:24 AM io.vertx.core.impl.BlockedThreadChecker
    WARNING: Thread Thread[vert.x-eventloop-thread-5,5,main] has been blocked for 39 ms, time limit is 10 ms
    Mar 01, 2019 11:53:24 AM io.vertx.core.impl.BlockedThreadChecker
    WARNING: Thread Thread[vert.x-eventloop-thread-6,5,main] has been blocked for 26 ms, time limit is 10 ms
    Mar 01, 2019 11:53:24 AM io.vertx.core.impl.BlockedThreadChecker
    WARNING: Thread Thread[vert.x-eventloop-thread-1,5,main] has been blocked for 31 ms, time limit is 10 ms
    Mar 01, 2019 11:53:24 AM io.vertx.core.impl.BlockedThreadChecker
    WARNING: Thread Thread[vert.x-eventloop-thread-3,5,main] has been blocked for 42 ms, time limit is 10 ms
    Mar 01, 2019 11:53:24 AM io.vertx.core.impl.BlockedThreadChecker
    WARNING: Thread Thread[vert.x-eventloop-thread-2,5,main] has been blocked for 20 ms, time limit is 10 ms
    Mar 01, 2019 11:53:24 AM io.vertx.core.impl.BlockedThreadChecker
    WARNING: Thread Thread[vert.x-eventloop-thread-4,5,main] has been blocked for 21 ms, time limit is 10 ms
    Mar 01, 2019 11:53:24 AM io.vertx.core.impl.BlockedThreadChecker
    WARNING: Thread Thread[vert.x-eventloop-thread-7,5,main] has been blocked for 19 ms, time limit is 10 ms

You can use grep to routinely search through your application logs for this message. Vert.x can also log the entire stack trace to help you pinpoint the location in your code where your handler is blocking the thread:

    Mar 24, 2019 9:34:23 AM io.vertx.core.impl.BlockedThreadChecker
    WARNING: Thread Thread[vert.x-eventloop-thread-6,5,main] has been blocked for 24915 ms, time limit is 10 ms
    io.vertx.core.VertxException: Thread blocked
        at java.lang.Thread.sleep(Native Method)
        at MyComputingVerticle.start(HelloServer.java:72)
        at io.vertx.core.impl.DeploymentManager.lambda$doDeploy$8(DeploymentManager.java:494)
        at io.vertx.core.impl.DeploymentManager$$Lambda$8/644460953.handle(Unknown Source)
        at io.vertx.core.impl.ContextImpl.executeTask(ContextImpl.java:320)
        at io.vertx.core.impl.EventLoopContext.lambda$executeAsync$0(EventLoopContext.java:38)
        at io.vertx.core.impl.EventLoopContext$$Lambda$9/1778535015.run(Unknown Source)
        at io.netty.util.concurrent.AbstractEventExecutor.safeExecute(AbstractEventExecutor.java:163)
        at io.netty.util.concurrent.SingleThreadEventExecutor.runAllTasks(SingleThreadEventExecutor.java:404)
        at io.netty.channel.nio.NioEventLoop.run(NioEventLoop.java:462)
        at io.netty.util.concurrent.SingleThreadEventExecutor$5.run(SingleThreadEventExecutor.java:897)
        at io.netty.util.concurrent.FastThreadLocalRunnable.run(FastThreadLocalRunnable.java:30)
        at java.lang.Thread.run(Thread.java:748)

Note that the stack trace is generated at the moment when Vert.x detects that the threshold has been exceeded, which is not necessarily the moment when the thread was actually blocking. In other words, it is probable, but not guaranteed, that the stack trace shows the actual location where your event loop thread is blocking. You may need to examine multiple stack traces to pinpoint the right location.
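If grep is not an option, the same log search can be done in code. The following is a minimal sketch and not part of Vert.x: the class name BlockedThreadLogScanner and the method worstBlockPerThread are made up for illustration, and the regular expression assumes the message format shown in the log excerpts above, which may differ between Vert.x versions and logging setups.

```java
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class BlockedThreadLogScanner {

    // Assumed message format, taken from the log excerpts in this article.
    // Group 1 is the thread name, group 2 the reported blocked time in ms.
    private static final Pattern BLOCKED = Pattern.compile(
        "Thread Thread\\[([^,\\]]+),.*?\\] has been blocked for (\\d+) ms, time limit is \\d+ ms");

    /** Returns the longest reported block, in milliseconds, per thread name. */
    public static Map<String, Long> worstBlockPerThread(List<String> logLines) {
        Map<String, Long> worst = new TreeMap<>();
        for (String line : logLines) {
            Matcher m = BLOCKED.matcher(line);
            if (m.find()) {
                // Keep the maximum value seen so far for this thread.
                worst.merge(m.group(1), Long.parseLong(m.group(2)), Long::max);
            }
        }
        return worst;
    }

    public static void main(String[] args) {
        List<String> lines = List.of(
            "WARNING: Thread Thread[vert.x-eventloop-thread-5,5,main] has been blocked for 39 ms, time limit is 10 ms",
            "WARNING: Thread Thread[vert.x-eventloop-thread-6,5,main] has been blocked for 26 ms, time limit is 10 ms",
            "WARNING: Thread Thread[vert.x-eventloop-thread-6,5,main] has been blocked for 24915 ms, time limit is 10 ms");
        worstBlockPerThread(lines)
            .forEach((thread, ms) -> System.out.println(thread + " " + ms + " ms"));
    }
}
```

Keeping only the worst value per thread is a deliberate simplification; counting the warnings per thread would be an equally reasonable summary.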

You can tweak the watchdog check period and the warning thresholds. Here is an example:

    import io.vertx.core.Vertx;
    import io.vertx.core.VertxOptions;
    import java.util.concurrent.TimeUnit;

    VertxOptions options = new VertxOptions();
    // check for blocked threads every 5s
    options.setBlockedThreadCheckInterval(5);
    options.setBlockedThreadCheckIntervalUnit(TimeUnit.SECONDS);
    // warn if an event loop thread handler took more than 100ms to execute
    options.setMaxEventLoopExecuteTime(100);
    options.setMaxEventLoopExecuteTimeUnit(TimeUnit.MILLISECONDS);
    // warn if a worker thread handler took more than 10s to execute
    options.setMaxWorkerExecuteTime(10);
    options.setMaxWorkerExecuteTimeUnit(TimeUnit.SECONDS);
    // log the stack trace if an event loop or worker handler took more than 20s to execute
    options.setWarningExceptionTime(20);
    options.setWarningExceptionTimeUnit(TimeUnit.SECONDS);
    Vertx vertx = Vertx.vertx(options);

Note that the first check is not executed right at the application start but is delayed by one check period. In our example, the first check is executed 5 seconds after the application start, followed by checks executed every 5 seconds. The concrete thresholds shown in the example worked well for one of my projects; however, your mileage may vary. Also, the very first execution of the handlers can be rather slow due to JVM class loading. Performance improves further when the JVM moves from interpreting the bytecode to compiling it into native code and running it directly on the CPU. Hence, you are more likely to hit the warning thresholds shortly after the application starts than later on during the application run. It would be great if the threshold values could be dynamically adjusted to avoid the warnings before the JVM warms up. Unfortunately, there is no way to adjust the thresholds at runtime.
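To make the mechanism concrete, here is a simplified model of the two steps and the two thresholds described in this section. It is a sketch written for explanation only and is not Vert.x's actual implementation: the class WatchdogSketch and its methods are hypothetical, timestamps are passed in explicitly so the behaviour is easy to follow, and whether the comparisons are strict at the exact threshold value is an assumption.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.TimeUnit;

public class WatchdogSketch {

    // Start timestamp (in nanoseconds) of the handler each thread is currently running.
    private final Map<String, Long> startTimes = new ConcurrentHashMap<>();
    private final long maxExecuteNanos;        // warning threshold
    private final long warningExceptionNanos;  // stack trace threshold

    public WatchdogSketch(long maxExecuteNanos, long warningExceptionNanos) {
        this.maxExecuteNanos = maxExecuteNanos;
        this.warningExceptionNanos = warningExceptionNanos;
    }

    /** Step 1: a handler starts running, so its start timestamp is saved. */
    public void handlerStarted(String threadName, long nowNanos) {
        startTimes.put(threadName, nowNanos);
    }

    /** Step 1, continued: the handler completed, so the timestamp is reset. */
    public void handlerFinished(String threadName) {
        startTimes.remove(threadName);
    }

    /** Step 2: one pass of the periodic check; returns the messages it would log. */
    public List<String> check(long nowNanos) {
        List<String> messages = new ArrayList<>();
        for (Map.Entry<String, Long> entry : startTimes.entrySet()) {
            long elapsed = nowNanos - entry.getValue();
            if (elapsed > maxExecuteNanos) {
                String message = entry.getKey() + " has been blocked for "
                    + TimeUnit.NANOSECONDS.toMillis(elapsed) + " ms";
                if (elapsed > warningExceptionNanos) {
                    message += " (stack trace would be logged)";
                }
                messages.add(message);
            }
        }
        return messages;
    }

    public static void main(String[] args) {
        // Same thresholds as the options example: warn after 100 ms, stack trace after 20 s.
        WatchdogSketch watchdog = new WatchdogSketch(
            TimeUnit.MILLISECONDS.toNanos(100), TimeUnit.SECONDS.toNanos(20));
        watchdog.handlerStarted("vert.x-eventloop-thread-1", 0L);
        System.out.println(watchdog.check(TimeUnit.SECONDS.toNanos(5)));   // check at 5 s
        System.out.println(watchdog.check(TimeUnit.SECONDS.toNanos(25)));  // check at 25 s
    }
}
```

The sketch also shows why a handler that finishes between two checks is never reported: once handlerFinished has run, the next check has nothing left to measure for that thread.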

It goes without saying that Vert.x only checks the threads that were created as a result of calling Vert.x APIs. If you instantiate your own thread pool outside of Vert.x, those threads won't be checked. If you want Vert.x to check the threads in your custom thread pool, you can ask Vert.x to instantiate a checked thread pool for you like this:

    // create a thread pool with 20 threads, set blocked thread warning threshold to 10 seconds
    WorkerExecutor executor = vertx.createSharedWorkerExecutor("mypool", 20, 10, TimeUnit.SECONDS);

The good thing about the blocked thread checker is that it is able to detect thread delays regardless of whether they were caused by a call to a blocking API or by executing a compute-intensive task. As such, it can serve as a good indicator that there is something seriously wrong with your application.

Inspecting Stack Traces

Some event loop delays are so subtle that they go unnoticed by the blocked thread checker. Imagine a situation where you have a handler that causes a very short delay. The blocked thread checker won't catch this short delay because it is not long enough to reach the threshold; however, if this handler is called very frequently, the aggregate delay caused by this handler can have a great impact on the performance of your application. For example, a handler that blocks for 2 ms stays well below a 10 ms threshold, yet at 300 calls per second it keeps an event loop thread busy for 600 ms out of every second. How do you uncover this kind of issue?

A good option is to analyze Java thread dumps by hand. You can refer to this article if you want to learn how to do it. Alternatively, you can use a Java profiler like VisualVM to find out in what parts of your code the most processing time is spent. Instead of writing long prose about how to use VisualVM to troubleshoot a Vert.x application, I created a short video for you. The demo uses JMeter and VisualVM to figure out the cause of delays of the Vert.x event loop.
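Separately from the video, the idea behind reading several thread dumps by hand can be sketched in a few lines of plain Java. The code below repeatedly snapshots the stacks of all threads whose names start with a given prefix and counts which frame is on top; frames that keep showing up are the likely culprits. This is an illustrative sketch rather than a replacement for a profiler: the class EventLoopStackSampler and the method sampleTopFrames are hypothetical names, the thread name prefix is taken from the log excerpts earlier in this article, and the sleeping thread in main merely stands in for a blocked event loop.

```java
import java.util.HashMap;
import java.util.Map;

public class EventLoopStackSampler {

    /**
     * Takes the given number of snapshots of all live threads and counts, for
     * threads whose name starts with namePrefix, how often each top stack
     * frame was observed.
     */
    public static Map<String, Integer> sampleTopFrames(String namePrefix, int samples, long pauseMillis) {
        Map<String, Integer> histogram = new HashMap<>();
        for (int i = 0; i < samples; i++) {
            for (Map.Entry<Thread, StackTraceElement[]> entry : Thread.getAllStackTraces().entrySet()) {
                StackTraceElement[] stack = entry.getValue();
                if (entry.getKey().getName().startsWith(namePrefix) && stack.length > 0) {
                    histogram.merge(stack[0].toString(), 1, Integer::sum);
                }
            }
            try {
                Thread.sleep(pauseMillis);
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt(); // preserve the interrupt and stop sampling
                break;
            }
        }
        return histogram;
    }

    public static void main(String[] args) {
        // Stand-in for a blocked event loop: a thread with a Vert.x-style name that sleeps.
        Thread fake = new Thread(() -> {
            try {
                Thread.sleep(2_000);
            } catch (InterruptedException ignored) {
                // nothing to clean up in this demo
            }
        }, "vert.x-eventloop-thread-0");
        fake.setDaemon(true);
        fake.start();

        sampleTopFrames("vert.x-eventloop-thread", 10, 50)
            .forEach((frame, hits) -> System.out.println(hits + "  " + frame));
    }
}
```

In a real application, an idle event loop thread will typically be waiting inside the networking layer, so the frames worth a closer look are the ones that point into your own handlers or into blocking APIs.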

Conclusion

In this article, we talked about the blocked thread checker as a first indicator of event loop delays. Next, I showed you in the video how to troubleshoot event loop delays in practice using VisualVM.

I hope that I didn't scare you throughout this series by analyzing all the things that can go wrong when working with the thread model Vert.x is based on. In reality, it's not so bad. One just has to pay attention to the event loop model while coding. The awesome performance that Vert.x applications can achieve is definitely a sufficient reward for the extra effort.

If you got some battle scars while working with the event loop thread model in Vert.x, I would be interested in hearing your stories. Also, let me know if you found the video demonstration helpful or if you have suggestions for future videos.

If you have any further questions or comments, feel free to add them to the comment section below.

Published at DZone with permission of Ales Nosek. See the original article here.
