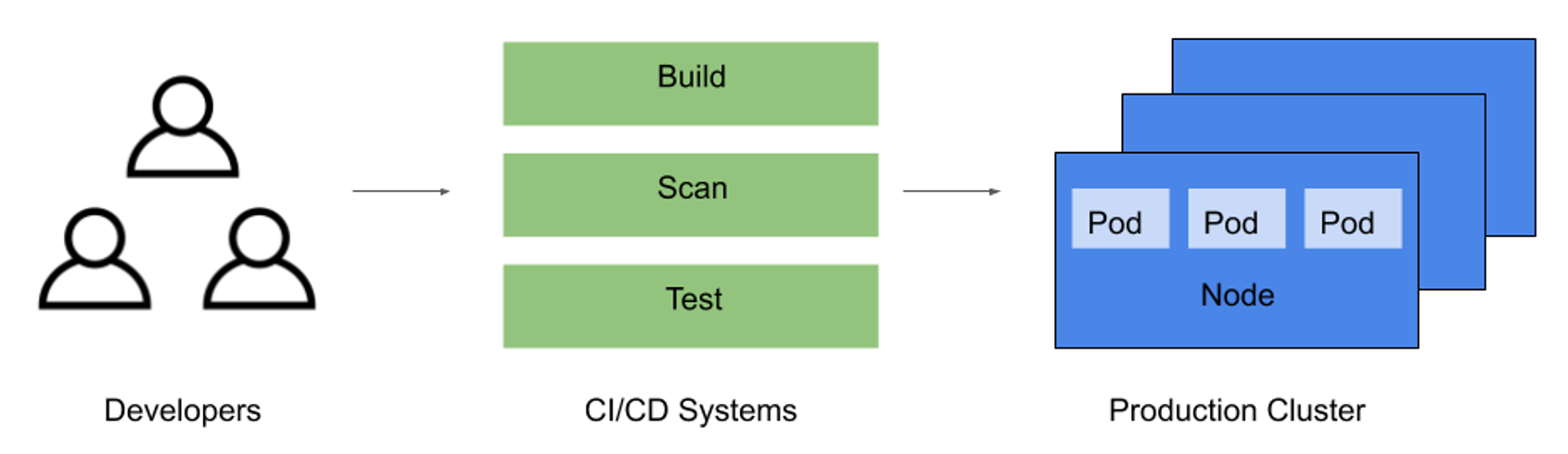

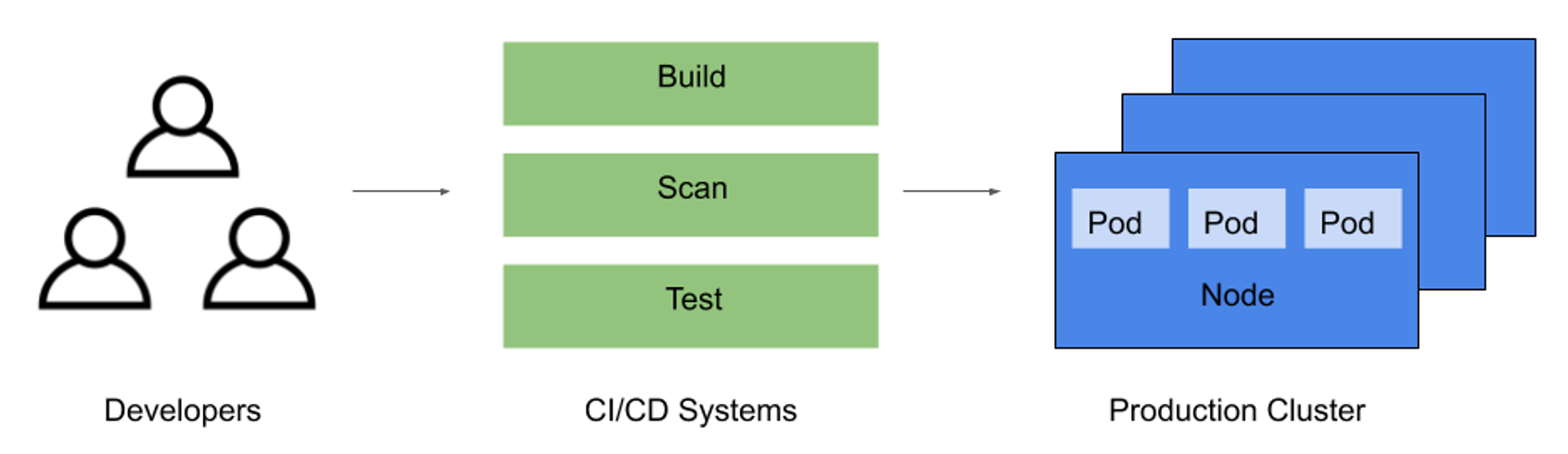

In Kubernetes environments, the software supply chain is the central path that every software change travels on its way to production. That also makes it a natural chokepoint where teams can apply security measures that affect the rest of the application lifecycle.

Container images constitute the standard application delivery format in Kubernetes environments. Building these images is the primary goal of a cloud-native software supply chain, so securing the supply chain should primarily focus on image security. The wide distribution and deployment of these container images requires a well-thought-out strategy for ensuring their security.

Figure 1: Software supply chain

Base Images

Security of container images often starts with the base operating system (OS) images. Consider using the official language-specific base image from a reputable provider (e.g., OpenJDK) over installing the package on a generic Linux image. Opt for alpine or distroless images from official image registries to keep the base image small. Finally, the base images that are used should also be updated frequently to address any newly disclosed vulnerabilities or other security concerns.

Image Components

Any container images that are built should be kept as minimal as possible. To avoid additional avenues of exploitation, use multistage builds so that the final image contains only the binaries, libraries, and configuration files the application needs. In particular, the following should be avoided in production images whenever possible:

| Image Contents | Example |
| --- | --- |
| Package managers | apt, yum, apk |
| Network tools and clients | wget, curl, netcat, ssh |
| Unix shells | sh, bash |
| Secrets | TLS certificate keys, cloud provider credentials, SSH private keys, database passwords |
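As a rough illustration of how the list above could be enforced, the sketch below checks a file listing from an image against a deny-list of tool names. The `DISALLOWED` mapping and the `find_disallowed` helper are hypothetical names introduced here, and the listing is assumed to come from whatever tool you use to enumerate an image's files.

```python
import posixpath

# Illustrative deny-list built from the examples in the table; extend as needed.
DISALLOWED = {
    "package manager": {"apt", "yum", "apk"},
    "network tool": {"wget", "curl", "netcat", "ssh"},
    "shell": {"sh", "bash"},
}


def find_disallowed(paths):
    """Return sorted (path, category) pairs for files whose basename is deny-listed."""
    hits = []
    for path in paths:
        name = posixpath.basename(path)
        for category, names in DISALLOWED.items():
            if name in names:
                hits.append((path, category))
    return sorted(hits)


if __name__ == "__main__":
    # Hypothetical file listing extracted from an image.
    listing = ["/app/server", "/bin/sh", "/usr/bin/curl", "/etc/app/config.yaml"]
    for path, category in find_disallowed(listing):
        print(f"{category}: {path}")
```

Matching on basenames is deliberately simple. It would miss renamed binaries, which is one reason real scanners also rely on techniques such as binary fingerprinting.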

Secrets should not be embedded in images. Anyone with access to the image, whether by pulling it from a registry or by handling it after it is built, can read them in plain text, and the secret is exposed long before it is actually needed. In Kubernetes clusters, you can use Kubernetes Secrets or tools like Vault or external-secrets to pass this sensitive data to pods at runtime.
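One way to catch embedded secrets before an image ships is a simple content check over its files. The following is a minimal sketch, not a substitute for a real scanner: `SECRET_PATTERNS` and `find_secrets` are hypothetical names, and the two patterns shown (a PEM private key header and the commonly cited `AKIA` prefix of AWS access key IDs) are only examples of the kind of rules a scanner might apply.

```python
import re

# Illustrative patterns only; real scanners ship far larger rule sets.
SECRET_PATTERNS = {
    "private key": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def find_secrets(files):
    """files maps path -> text content; returns sorted (path, kind) findings."""
    findings = []
    for path, text in files.items():
        for kind, pattern in SECRET_PATTERNS.items():
            if pattern.search(text):
                findings.append((path, kind))
    return sorted(findings)


if __name__ == "__main__":
    # Hypothetical file contents extracted from an image.
    sample = {
        "/app/id_rsa": "-----BEGIN OPENSSH PRIVATE KEY-----\n...",
        "/app/README": "nothing sensitive here",
    }
    for path, kind in find_secrets(sample):
        print(f"{kind} found in {path}")
```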

Another way to manage image components is to maintain a software bill of materials (SBOM), as recommended in the President’s Executive Order on Improving the Nation’s Cybersecurity. SBOMs are critical to securing the supply chain, particularly because they augment dependency and OS package information with additional attributes such as licensing, network data, and usage information. Common pitfalls, such as using packages with restrictive open-source licenses (e.g., GPL) or packages that have reached end of life (EOL), can be avoided by maintaining an SBOM.
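To make the license and EOL checks concrete, here is a small sketch that walks a simplified component list and reports violations. The dictionary layout, the `DENIED_LICENSES` set, and the `sbom_violations` function are assumptions made for illustration. Real SBOM formats carry much richer data and would need to be parsed into this shape first.

```python
from datetime import date

# Hypothetical policy: SPDX license identifiers that are not allowed.
DENIED_LICENSES = {"GPL-2.0-only", "GPL-3.0-only"}


def sbom_violations(components, today):
    """components: dicts with 'name', 'license', and an optional 'eol' date."""
    violations = []
    for c in components:
        if c.get("license") in DENIED_LICENSES:
            violations.append(f"{c['name']}: license {c['license']} is not allowed")
        eol = c.get("eol")
        if eol is not None and eol <= today:
            violations.append(f"{c['name']}: end of life since {eol.isoformat()}")
    return violations


if __name__ == "__main__":
    # Hypothetical components; names and dates are made up for the example.
    components = [
        {"name": "libexample", "license": "GPL-3.0-only"},
        {"name": "oldruntime", "license": "MIT", "eol": date(2020, 1, 1)},
        {"name": "webframework", "license": "Apache-2.0"},
    ]
    for line in sbom_violations(components, date.today()):
        print(line)
```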

Image Scanning

Once images are built, they must be scanned to avoid introducing vulnerabilities into your running Kubernetes clusters. Image scanners are available as standalone tools (e.g., trivy, anchore, clair), or in some cases, are integrated with image registries. It is critical to utilize an image scanner with several or all of the capabilities listed here:

| Category | Items |
| --- | --- |
| Security Areas | Installed OS packages; installed runtime libraries; secrets or other sensitive data |
| Scanner Capabilities | Per-layer scanning; binary fingerprinting; file contents testing; open-source license checking |
| Vulnerability Types | OS-level vulnerabilities; programming language-specific vulnerabilities |
| Violation Types | Database secret embedded in image; critical severity base image vulnerabilities; library with unwanted license type used |

Image scanning should be a requirement for passing image builds. Results can be used to implement policies that determine whether a build should pass or fail based on the number, severity, and type of vulnerabilities detected in a given image. As the last line of defense, the Kubernetes ImagePolicyWebhook admission controller can be configured to call a service that checks every image before it is deployed to the cluster, which prevents manual deployments from circumventing CI/CD pipelines.
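A pass/fail policy of this kind can be sketched as a small function over scanner findings. The finding layout (`severity` and `type` keys), the threshold dictionary, and the `build_passes` name are all assumptions for illustration. Actual scanners report results in their own formats, which would need to be mapped into this shape.

```python
def build_passes(findings, max_allowed, blocked_types=frozenset()):
    """Decide whether a build passes.

    findings: list of dicts with 'severity' and 'type' keys.
    max_allowed: maps severity -> maximum count allowed (absent = unlimited).
    blocked_types: finding types that fail the build outright.
    Returns a (passed, reason) tuple.
    """
    counts = {}
    for f in findings:
        if f["type"] in blocked_types:
            return False, f"blocked finding type: {f['type']}"
        counts[f["severity"]] = counts.get(f["severity"], 0) + 1
    for severity, limit in max_allowed.items():
        if counts.get(severity, 0) > limit:
            return False, f"{counts[severity]} {severity} findings exceed limit {limit}"
    return True, "ok"


if __name__ == "__main__":
    # Hypothetical scan results and policy thresholds.
    findings = [
        {"severity": "CRITICAL", "type": "os-package"},
        {"severity": "LOW", "type": "library"},
    ]
    passed, reason = build_passes(findings, {"CRITICAL": 0, "HIGH": 5})
    print(passed, reason)
```

In a CI job, a failing result would typically translate into a nonzero exit code so that the pipeline stops before the image is pushed.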

Build Systems

The infrastructure, namely build and CI systems and pipelines, used to create these images must also be secured. As the number of tools used to deploy infrastructure and cloud-native applications onto Kubernetes grows, remember to secure the entire CI/CD pipeline.

Below are essential security measures to take:

- Limit administrative access to the build infrastructure

- Allow only required network ingress

- Manage any necessary secrets carefully, granting only minimal required permissions

- Use network firewalls to allow access only from trusted sites used to retrieve sources or other files

- Automate vulnerability scanning, license management, and static code analysis for potential security issues in the pipeline

Container Registry

Once an image has been built, it must also be stored securely in an appropriate image registry. A private, internal registry can provide greater security but imposes the added operational overhead of managing registry infrastructure and relevant access controls. An alternative is to use a registry managed by a cloud platform or other provider. Finally, configure alerts and reports for license violations and vulnerabilities to remediate issues on new and existing images.
