Convert Audio File into Text With Machine Learning

Time to visualize some speech.


Introduction

Converting audio into text has a wide range of applications, such as generating video subtitles, taking meeting minutes, and writing interview transcripts. Machine learning makes this easier than ever: it can transcribe audio files into accurate text, complete with punctuation.

Development Preparations

For details about configuring the Maven repository and integrating the audio file transcription SDK, please refer to the Development Guide.

Declaring Permissions in the AndroidManifest.xml File

Open the AndroidManifest.xml file in the main folder and add the network connection, network status access, and storage read permissions before the <application> element.

Note that READ_EXTERNAL_STORAGE is a dangerous permission, so on Android 6.0 (API level 23) and later it must also be requested dynamically at runtime. Otherwise, Permission Denied will be reported.

```xml
<uses-permission android:name="android.permission.INTERNET" />
<uses-permission android:name="android.permission.ACCESS_NETWORK_STATE" />
<uses-permission android:name="android.permission.READ_EXTERNAL_STORAGE" />
```

Development Procedure

Creating and Initializing an Audio File Transcription Engine

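In MainActivity, override onCreate to create and initialize an audio file transcription engine, and register the listener that receives the transcription callbacks.

```java
private MLRemoteAftEngine mAnalyzer;

@Override
protected void onCreate(Bundle savedInstanceState) {
    super.onCreate(savedInstanceState);
    mAnalyzer = MLRemoteAftEngine.getInstance();
    mAnalyzer.init(getApplicationContext());
    // mAsrListener is the callback listener defined in the listener step below.
    mAnalyzer.setAftListener(mAsrListener);
}
```

Use MLRemoteAftSetting to configure the engine. The service currently supports Mandarin Chinese and English; that is, the valid values of mLanguage are zh and en.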
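Because only zh and en are accepted, it can help to validate mLanguage before building the setting. The following plain-Java sketch is a hypothetical helper (AftLanguage, SUPPORTED_LANGUAGES, and requireSupportedLanguage are illustrative names, not part of the SDK); it normalizes the code and fails fast on anything the service would not accept.

```java
import java.util.Locale;
import java.util.Set;

class AftLanguage {
    // Hypothetical helper: the language codes this article lists as supported.
    static final Set<String> SUPPORTED_LANGUAGES = Set.of("zh", "en");

    // Returns the normalized code, or throws if it is not a supported language.
    static String requireSupportedLanguage(String code) {
        if (code == null) {
            throw new IllegalArgumentException("Language code must not be null");
        }
        String normalized = code.trim().toLowerCase(Locale.ROOT);
        if (!SUPPORTED_LANGUAGES.contains(normalized)) {
            throw new IllegalArgumentException("Unsupported language code: " + code);
        }
        return normalized;
    }

    public static void main(String[] args) {
        System.out.println(requireSupportedLanguage(" EN ")); // prints "en"
    }
}
```

The returned value can then be passed to setLanguageCode.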

```java
MLRemoteAftSetting setting = new MLRemoteAftSetting.Factory()
        .setLanguageCode(mLanguage)
        .enablePunctuation(true)
        .enableWordTimeOffset(true)
        .enableSentenceTimeOffset(true)
        .create();
```

enablePunctuation indicates whether to automatically punctuate the converted text. The default value is false.

If this parameter is set to true, punctuation is added to the converted text automatically; if it is set to false, the text is returned without punctuation.

enableWordTimeOffset indicates whether to return the time offset of each audio segment along with its transcription. The default value is false. This parameter applies only to audio files with a duration of 1 minute or shorter.

If this parameter is set to true, the offset information is returned along with the text transcription result. If it is set to false, only the text transcription result of the audio file is returned.
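Since word-level offsets apply only to audio of 1 minute or shorter, one option is to derive the flag from the file's duration. The sketch below is hypothetical: it assumes you already know the duration in milliseconds (for example, from Android's MediaMetadataRetriever), and WordOffsetPolicy and WORD_OFFSET_LIMIT_MS are illustrative names based on the 1-minute limit described above.

```java
class WordOffsetPolicy {
    // Hypothetical constant: the 1-minute limit described for enableWordTimeOffset.
    static final long WORD_OFFSET_LIMIT_MS = 60_000L;

    // Returns true only when word-level offsets are applicable to the audio.
    static boolean shouldEnableWordTimeOffset(long durationMs) {
        if (durationMs < 0) {
            throw new IllegalArgumentException("Duration must be non-negative");
        }
        return durationMs <= WORD_OFFSET_LIMIT_MS;
    }

    public static void main(String[] args) {
        System.out.println(shouldEnableWordTimeOffset(45_000L));  // prints "true"
        System.out.println(shouldEnableWordTimeOffset(300_000L)); // prints "false"
    }
}
```

The result could then be passed to enableWordTimeOffset(...) instead of a hard-coded true.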

enableSentenceTimeOffset indicates whether to output the time offset of each sentence in the audio file. The default value is false.

If this parameter is set to true, the offset of each sentence is returned along with the text transcription result. If it is set to false, only the text transcription result of the audio file is returned.
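Sentence offsets make it straightforward to produce subtitles, one of the uses mentioned in the introduction. The following plain-Java sketch turns sentences into SubRip (SRT) cues. It uses a hypothetical Sentence class instead of the SDK's MLRemoteAftResult.Segment so that it runs on its own, and it assumes the start and end times are in milliseconds; check the SDK reference for the actual unit before relying on it.

```java
import java.util.List;
import java.util.Locale;

class SrtFormatter {
    // Hypothetical stand-in for a transcribed sentence. Times are ASSUMED
    // to be milliseconds; the real SDK returns MLRemoteAftResult.Segment.
    static final class Sentence {
        final String text;
        final long startMs;
        final long endMs;

        Sentence(String text, long startMs, long endMs) {
            this.text = text;
            this.startMs = startMs;
            this.endMs = endMs;
        }
    }

    // Formats milliseconds as an SRT timestamp: HH:MM:SS,mmm.
    static String timestamp(long ms) {
        long hours = ms / 3_600_000;
        long minutes = (ms / 60_000) % 60;
        long seconds = (ms / 1_000) % 60;
        long millis = ms % 1_000;
        return String.format(Locale.ROOT, "%02d:%02d:%02d,%03d", hours, minutes, seconds, millis);
    }

    // Builds numbered SRT cues, one per sentence, separated by blank lines.
    static String toSrt(List<Sentence> sentences) {
        StringBuilder sb = new StringBuilder();
        for (int i = 0; i < sentences.size(); i++) {
            Sentence s = sentences.get(i);
            sb.append(i + 1).append('\n')
              .append(timestamp(s.startMs)).append(" --> ").append(timestamp(s.endMs)).append('\n')
              .append(s.text).append("\n\n");
        }
        return sb.toString();
    }

    public static void main(String[] args) {
        System.out.print(toSrt(List.of(
                new Sentence("Hello everyone.", 0, 1_500),
                new Sentence("Welcome to the meeting.", 1_500, 3_250))));
    }
}
```

In the app, the same loop could run over result.getSentences(), reading getText(), getStartTime(), and getEndTime() from each segment.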

Creating a Listener Callback to Process the Transcription Result

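```java
private MLRemoteAftListener mAsrListener = new MLRemoteAftListener() {
    // The callback methods shown below are members of this anonymous class.
```

Once initialization completes, onInitComplete is called. Call startTask in this callback to start the transcription.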

```java
    @Override
    public void onInitComplete(String taskId, Object ext) {
        Log.i(TAG, "MLRemoteAftListener onInitComplete: " + taskId);
        mAnalyzer.startTask(taskId);
    }
```

Also override onUploadProgress, onEvent, and onResult in MLRemoteAftListener.

```java
    @Override
    public void onUploadProgress(String taskId, double progress, Object ext) {
        Log.i(TAG, "MLRemoteAftListener onUploadProgress is " + taskId + " " + progress);
    }

    @Override
    public void onEvent(String taskId, int eventId, Object ext) {
        Log.i(TAG, "MLAsrCallBack onEvent: " + eventId);
        if (MLAftEvents.UPLOADED_EVENT == eventId) { // The file is uploaded successfully.
            showConvertingDialog();
            startQueryResult(); // Start polling for the transcription result.
        }
    }

    @Override
    public void onResult(String taskId, MLRemoteAftResult result, Object ext) {
        Log.i(TAG, "onResult get " + taskId);
        if (result == null) {
            return;
        }
        Log.i(TAG, "onResult isComplete " + result.isComplete());
        if (!result.isComplete()) {
            return; // Transcription is still in progress; keep polling.
        }
        if (null != mTimerTask) {
            mTimerTask.cancel(); // The result is complete, so stop polling.
        }
        if (result.getText() != null) {
            Log.i(TAG, result.getText());
            dismissTransferringDialog();
            showCovertResult(result.getText());
        }
        List<MLRemoteAftResult.Segment> segmentList = result.getSegments();
        if (segmentList != null && !segmentList.isEmpty()) {
            for (MLRemoteAftResult.Segment segment : segmentList) {
                Log.i(TAG, "MLAsrCallBack segment text is : " + segment.getText()
                        + ", startTime is : " + segment.getStartTime() + ". endTime is : " + segment.getEndTime());
            }
        }
        List<MLRemoteAftResult.Segment> words = result.getWords();
        if (words != null && !words.isEmpty()) {
            for (MLRemoteAftResult.Segment word : words) {
                Log.i(TAG, "MLAsrCallBack word text is : " + word.getText()
                        + ", startTime is : " + word.getStartTime() + ". endTime is : " + word.getEndTime());
            }
        }
        List<MLRemoteAftResult.Segment> sentences = result.getSentences();
        if (sentences != null && !sentences.isEmpty()) {
            for (MLRemoteAftResult.Segment sentence : sentences) {
                Log.i(TAG, "MLAsrCallBack sentence text is : " + sentence.getText()
                        + ", startTime is : " + sentence.getStartTime() + ". endTime is : " + sentence.getEndTime());
            }
        }
    }
};
```

Processing the Transcription Result in Polling Mode

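Once the file is uploaded, call getLongAftResult periodically to query the transcription result. Each query result is delivered to onResult, which stops the polling when the result is complete. In this example, polling starts 5 seconds after the upload and then repeats every 10 seconds.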
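Before wiring this into the app, the polling pattern can be tried in plain Java. The sketch below uses java.util.Timer as the article does, but replaces getLongAftResult and onResult with a hypothetical BooleanSupplier that reports whether the result is complete (ResultPoller and pollUntilComplete are illustrative names). It cancels both the TimerTask and the Timer itself, since cancelling the Timer is what stops its background thread.

```java
import java.util.Timer;
import java.util.TimerTask;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.BooleanSupplier;

class ResultPoller {
    // Polls `query` on a Timer until it reports completion, then stops both
    // the task and the Timer thread. Returns how many queries were made.
    static int pollUntilComplete(BooleanSupplier query, long delayMs, long periodMs) {
        Timer timer = new Timer("aft-poller", true); // daemon thread
        CountDownLatch done = new CountDownLatch(1);
        AtomicInteger attempts = new AtomicInteger();
        timer.schedule(new TimerTask() {
            @Override
            public void run() {
                attempts.incrementAndGet();
                if (query.getAsBoolean()) {
                    cancel();         // this task will never run again
                    done.countDown(); // wake up the waiting caller
                }
            }
        }, delayMs, periodMs);
        try {
            done.await();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt(); // preserve the interrupt status
        } finally {
            timer.cancel(); // terminate the Timer thread as well
        }
        return attempts.get();
    }

    public static void main(String[] args) {
        // Simulated query: the "result" becomes complete on the third poll.
        AtomicInteger calls = new AtomicInteger();
        int polls = pollUntilComplete(() -> calls.incrementAndGet() >= 3, 50, 100);
        System.out.println("Result complete after " + polls + " polls");
    }
}
```

Because a Timer runs its tasks on a single thread and a cancelled TimerTask never runs again, the poll count is deterministic here. In the app, the equivalent of the supplier is the isComplete() check inside onResult.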

private void startQueryResult() {

Timer mTimer = new Timer();

mTimerTask = new TimerTask() {

@Override

public void run() {

getResult();

}

};

mTimer.schedule(mTimerTask, 5000, 10000); // Process the obtained long speech transcription result every 10s.

}

private void getResult() {

Log.e(TAG, "getResult");

mAnalyzer.setAftListener(mAsrListener);

mAnalyzer.getLongAftResult(mLongTaskId);

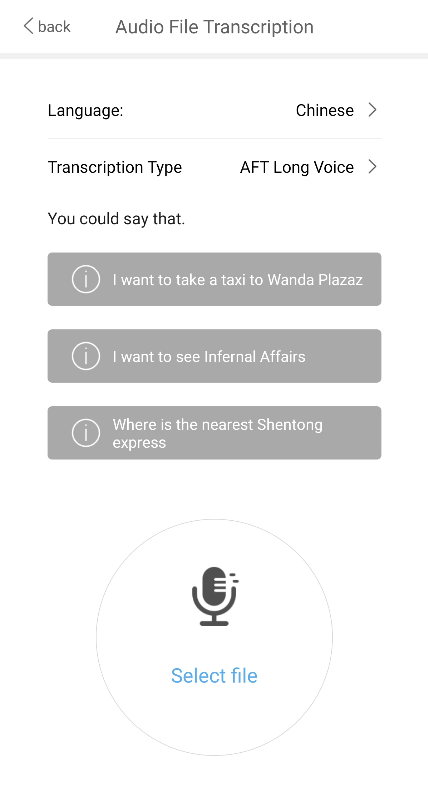

}Actual Effects

Build and run an app with audio file transcription integrated. Then, select a local audio file and convert it into text.

Opinions expressed by DZone contributors are their own.
