Docker, Windows Containers, and DNS

Learn how to dynamically map WinDocks containers to known IP addresses at build time.


WinDocks containers are fast and lightweight, and it's simple to use scores of containers to build complex environments using a mix of .NET and SQL Server containers on a laptop. The containers will run unmodified on a shared Test server, or on a Public Cloud VM. While supporting an individual environment is easy enough, complexity grows with the size of the team, scale of containers, and varied network infrastructure. This article focuses on how we've enabled WinDocks containers to be dynamically mapped to known IP addresses at build time.

Containers, Reverse Proxy, and Port Forwarding

A reverse proxy works nicely if all you need is support for HTTP. We needed a more general solution that supports TCP and UDP ports. The design goals included the following:

- Containers should be refreshed as frequently as desired while working with existing DNS infrastructure.

- DNS zones can be used for segmented support of Dev, Test, and Production.

- A new method of integrating containers without the use of Web.config files.

Each container includes a single port (typically in the range 10000 to 10200) and user credentials. A container is an isolated set of resources for hosting server-side applications and includes mechanisms for throttling memory, CPU, and other resources. SQL Server containers are commonly accessed with SQL Server Management Studio or other tools. Software developers don't normally need to access code within containers; they either use a Git pull or copy code into the container via a Dockerfile command. The host administrator has full access to the containers and their associated file systems.

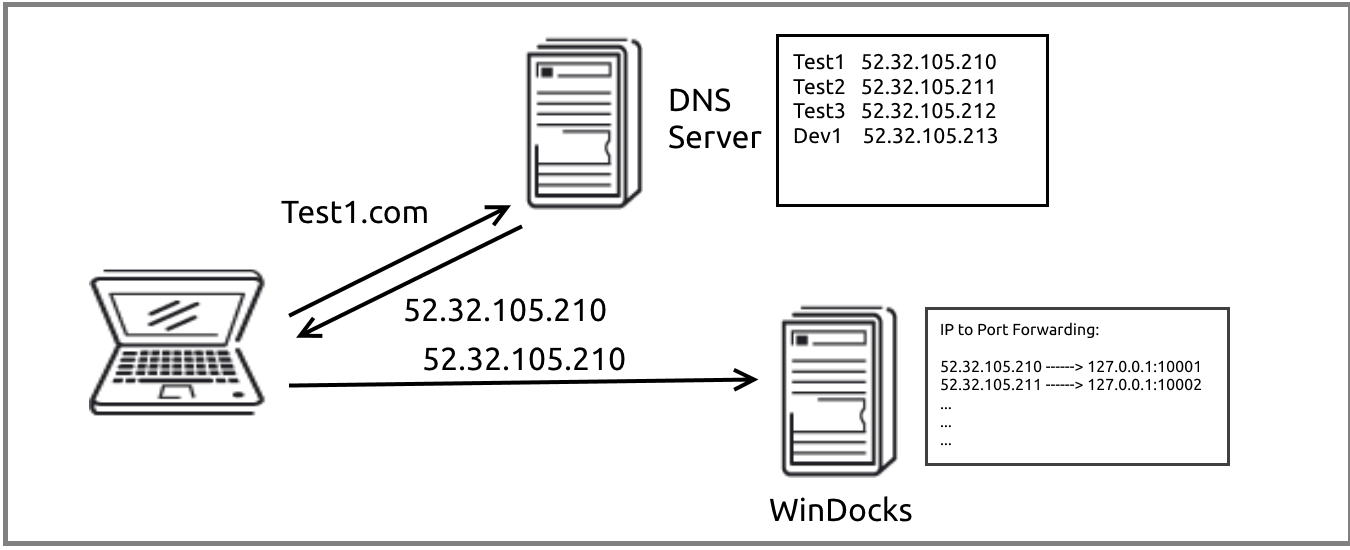

Name to IP Address to Container Port

The solution uses NETSH to map IP addresses to containers during the container build process. An inbound request resolves a name to a known IP address, which is mapped to the container port when the container is created. The naming convention on DNS is not affected as containers are deleted and recreated (as frequently as seconds apart), and this approach supports the assignment of container resources to individuals or teams.
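The lookup chain described above can be pictured as two tables: a DNS table that stays fixed and a port-forwarding table that is rewritten on every container build. The Python sketch below is only a conceptual model of that idea, not WinDocks code. The service name `sql-dev.example.local`, the helper functions, and the port numbers are hypothetical.

```python
# Conceptual model only: names, addresses, and ports are hypothetical.

# DNS side: name -> IP. Registered once and left alone.
dns_records = {"sql-dev.example.local": "192.168.1.111"}

# Host side: IP -> current container port. Rewritten at every container build.
port_map = {}


def build_container(ip, container_port):
    """Simulate a build: drop any old mapping for the IP, then add the new one."""
    port_map.pop(ip, None)
    port_map[ip] = container_port


def resolve(name):
    """Follow name -> IP -> container port, as an inbound request would."""
    ip = dns_records[name]
    return ip, port_map[ip]


build_container("192.168.1.111", 10001)
first = resolve("sql-dev.example.local")

# The container is deleted and rebuilt on a different port; DNS is untouched.
build_container("192.168.1.111", 10057)
second = resolve("sql-dev.example.local")

print(first)
print(second)
```

The name and IP address are the same in both lookups; only the container port behind the IP address changes, which is why clients and DNS need no update when a container is recreated.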

The first step is to create a pool of IP addresses for the WinDocks host. The pool is created with NETSH, using a batch file that contains one command like the one below for each address. Named services are then defined and registered on the DNS host.

netsh in ip add address "Local Area Connection" 192.168.1.111 255.0.0.0
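For a pool of more than a few addresses, the batch file lines can be generated rather than typed. The following Python sketch is one possible way to do that, assuming the pool is a run of consecutive addresses on one interface. The function name `pool_commands` and the pool size are illustrative, and the sketch spells the netsh context out as `interface`.

```python
import ipaddress


def pool_commands(interface, first_ip, count, mask):
    """Return one 'netsh interface ip add address' line per pooled address."""
    start = ipaddress.IPv4Address(first_ip)
    return [
        f'netsh interface ip add address "{interface}" {start + i} {mask}'
        for i in range(count)
    ]


# Hypothetical pool of three consecutive addresses, reusing the values above.
commands = pool_commands("Local Area Connection", "192.168.1.111", 3, "255.0.0.0")
print("\n".join(commands))
```

The printed lines can be saved to a `.bat` file and run from an elevated prompt on the WinDocks host.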

With IP addresses and names registered with DNS, WinDocks automates the mapping of IP addresses to container ports during the container build process. This is accomplished by incorporating the NETSH command into the WinDocks Dockerfile. Running a Windows command from a WinDocks Dockerfile is enabled through the WinDocks administrative configuration.

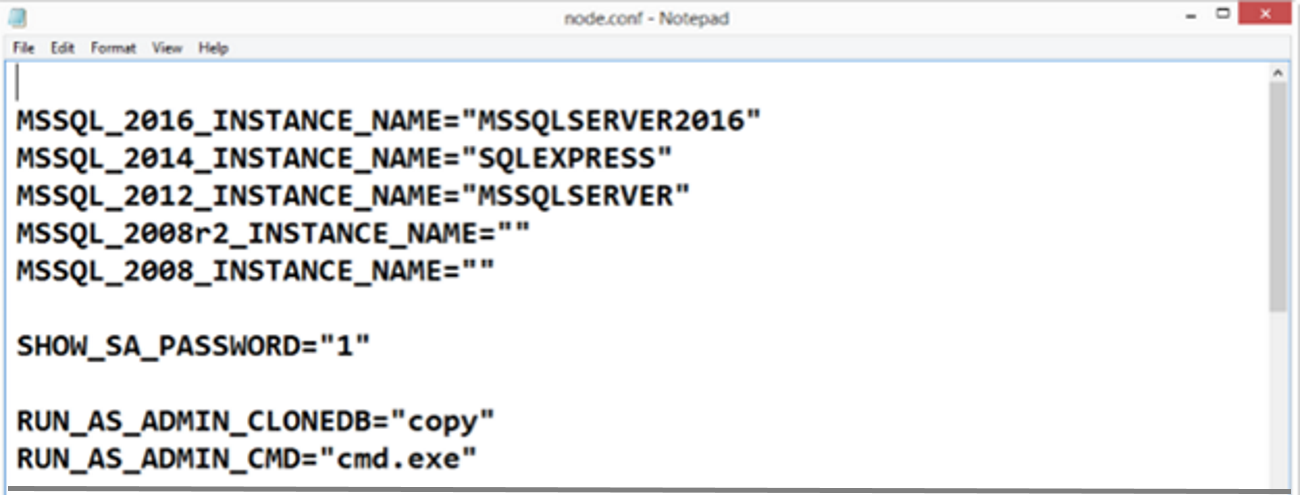

WinDocks privileged administrative commands are defined and configured in \Windocks\config\node.config. This file allows the administrator to enable support for Git and, in this case, NETSH. In the example below, we've enabled the full range of Windows commands with the setting:

RUN_AS_ADMIN_CMD= "cmd.exe"

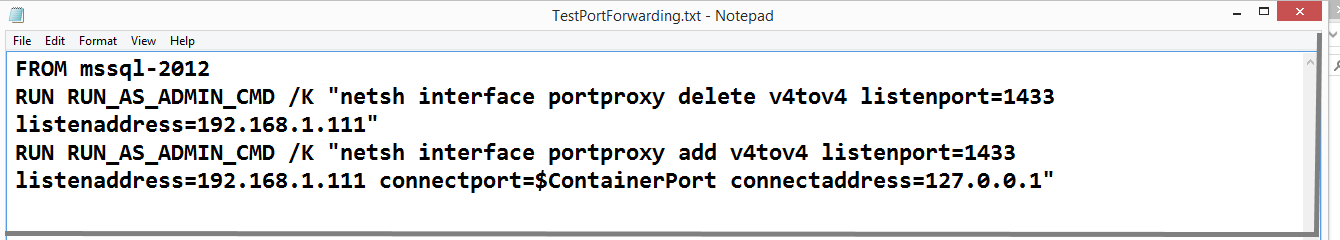

Now that the WinDocks host supports NETSH, we can incorporate the commands into our container build process. Dockerfiles begin with a base image, such as "MSSQL-2012" (used in this example), followed by a series of commands that are executed sequentially. In this case, two commands employ NETSH. The first deletes any previously existing IP mapping, and the second maps the IP address to the newly created container port. Note that this process uses the environment variable $ContainerPort.
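The exact NETSH commands are not reproduced in the text, so the following Python sketch is an assumption about what the delete-then-add pair might look like if the mapping is done with `netsh interface portproxy`. The function name `mapping_commands`, the listen port 1433 (the SQL Server default), the loopback connect address, and the sample container port are all illustrative. In a real build the container port would come from $ContainerPort. Note that portproxy forwards TCP connections only.

```python
def mapping_commands(listen_ip, listen_port, container_port, host_ip="127.0.0.1"):
    """Return (delete, add) portproxy commands for one IP-to-container mapping.

    Assumption: the mapping uses 'netsh interface portproxy'; the source
    article does not confirm which NETSH context WinDocks relies on.
    """
    delete_cmd = (
        "netsh interface portproxy delete v4tov4 "
        f"listenaddress={listen_ip} listenport={listen_port}"
    )
    add_cmd = (
        "netsh interface portproxy add v4tov4 "
        f"listenaddress={listen_ip} listenport={listen_port} "
        f"connectaddress={host_ip} connectport={container_port}"
    )
    return delete_cmd, add_cmd


# 10001 stands in for the value of $ContainerPort at build time.
delete_cmd, add_cmd = mapping_commands("192.168.1.111", 1433, 10001)
print(delete_cmd)
print(add_cmd)
```

Running the delete before the add keeps the build repeatable: a stale mapping left by a previous container on the same IP address is cleared before the new port is attached.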

Achieving Our Goals and Looking Forward to Auto Discovery!

Developers want practical solutions, and this approach fits into existing infrastructure, supports the dynamic nature of containers in dev and test environments, and scales nicely from a laptop to shared dev and test environments or production usage. The IP-to-port mapping is accomplished during the container build in a single integrated step. It is also easy to support when different teams maintain their own container mappings.

We're also pleased with the design because it gives us an alternative to fussing with Web.config files and to parsing and performing string replacements in PowerShell scripts!

The growth in Docker containers has highlighted a need for a new generation of service discovery solutions, where a process can register itself dynamically among a pool of resources and user traffic can be load-balanced across that pool. The approach outlined here is not intended to address highly scalable, geographically distributed auto-registration of services. We look forward to exploring this with our customers as solutions are brought to market.
