Eclipse MicroProfile Metrics — Practical Use Cases

Let's talk about monitoring and metrics in enterprise Java and how DevOps practices lead teams to choose microservices.


At the end of the 2000s, around 2010, one of the main motivations for DevOps was the (relatively) neglected interaction between development, QA, and operations teams. The "DevOps promise" can be summarized as the union of culture, practices, and tools to improve software quality and shorten time to market. Successful implementations bring side benefits such as scalability, stability, security, and development speed.

Opinions vary, but to implement a successful DevOps culture, IT teams commonly need practices such as:

- Continuous integration (CI)

- Continuous delivery (CD)

- Microservices

- Infrastructure as code

- Communication and collaboration

- Monitoring and metrics

In this world of buzzwords, it's easy to lose sight of the final goal while assessing DevOps maturity: to create applications that deliver value to the customer in short periods of time.

In this post, we will discuss monitoring and metrics in the Java enterprise world, specifically how new DevOps practices affect architectural decisions during technology selection.

Metrics in Java Monoliths

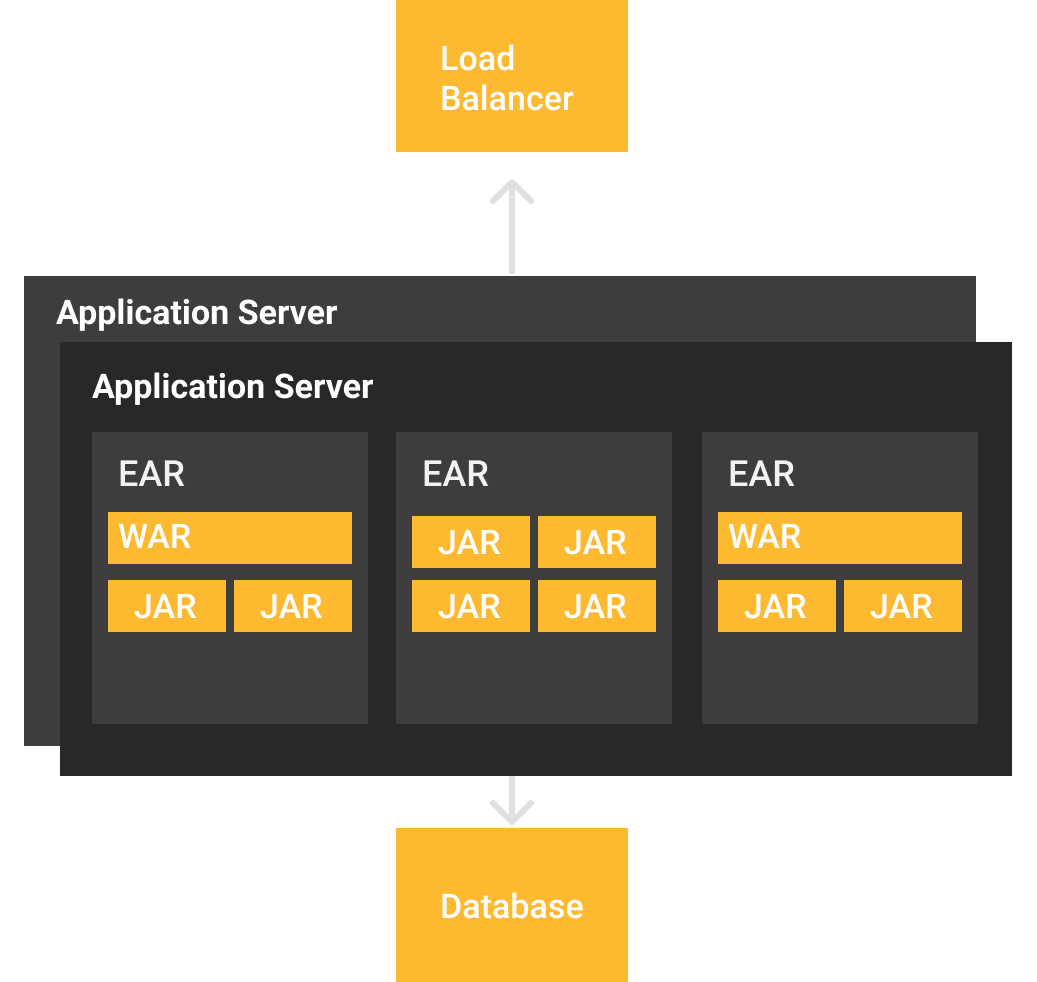

In traditional architectures, monolithic applications share common characteristics such as:

- They run on servlet containers and application servers, with the JVM as a long-running process.

- Ideally, these containers are never rebooted, or reboots happen only during planned maintenance windows.

- A single application can compromise the integrity of the entire monolith through bad code, bad deployments, or server issues.

Regardless of the Java framework, the deployment structure resembles the figure above: applications are distributed as .war or .jar files, which may be bundled into .ear files for management purposes. In these architectures, applications are created as modules separated by business objectives.

If the need to scale arises, there are basically two options: scale server resources vertically, or add more servers, each with a copy of the application, and distribute clients using a load balancer. New nodes also tend to be long-running processes, since rebooting an application server takes a considerable amount of time that grows with the number of deployed applications.

Hence, whether to provision a new application server is often a joint decision between development and operations teams. In this setting, you have the following options to monitor the state of your applications and application server:

- Vendor-specific or vendor-neutral telemetry APIs, e.g., Jolokia, GlassFish REST metrics

- JMX monitoring through specific tools and ports, e.g. VisualVM, Mission Control

- "Shell wrangling" with tail, sed, cat, top, and htop
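To make the JMX option concrete, here is a minimal sketch that uses only the JDK's standard java.lang.management API (the class name JvmSnapshot is hypothetical). It reads heap usage, GC counts, and the system load average from the platform MXBeans, the same MBeans that tools such as VisualVM or Mission Control read over a JMX connection.

```java
import java.lang.management.GarbageCollectorMXBean;
import java.lang.management.ManagementFactory;
import java.lang.management.MemoryUsage;

// Hypothetical helper: reads JVM state in-process through the platform MXBeans.
class JvmSnapshot {

    // Bytes of heap currently in use
    static long usedHeapBytes() {
        MemoryUsage heap = ManagementFactory.getMemoryMXBean().getHeapMemoryUsage();
        return heap.getUsed();
    }

    // Total number of collections across all garbage collectors
    static long totalGcCount() {
        long total = 0;
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            long count = gc.getCollectionCount();
            if (count > 0) { // -1 means the count is undefined for this collector
                total += count;
            }
        }
        return total;
    }

    public static void main(String[] args) {
        System.out.println("Heap used (bytes): " + usedHeapBytes());
        System.out.println("GC collections: " + totalGcCount());
        // Negative if the platform cannot provide a load average
        System.out.println("System load average: "
                + ManagementFactory.getOperatingSystemMXBean().getSystemLoadAverage());
    }
}
```

Reading these values remotely requires an open JMX port, which is exactly what becomes awkward in container or PaaS deployments.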

Note that in this kind of deployment, metrics must be obtained from both the application and the server, which makes status monitoring complex.

The rule of thumb in these scenarios is often to use telemetry (or your own) APIs to monitor application state, and JMX or logs for deeper analysis of the runtime, which raises more questions:

- Which telemetry API should I choose? Do I need a specific format?

- Would the server's telemetry be sufficient? How will metrics be processed?

- How do I get access to JMX in my deployments if I'm a container or PaaS user?

Reactive Applications

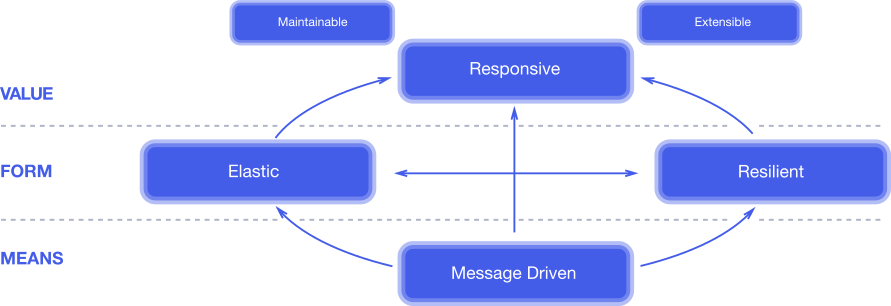

One of the (not so) recent approaches to improving user experience is to implement reactive systems following the principles defined in the Reactive Manifesto.

In short, a reactive system is:

- Message-driven: directed or activated by messages, often processed asynchronously.

- Resilient: it can fail partially without compromising the whole system.

- Elastic: it provisions and halts modules and resources on demand, which directly affects resource billing.

- The final result: responsive to the user.

Although rarely discussed, reactive architectures have a direct impact on metrics. With dynamic provisioning, there are no long-running processes to attach to and collect metrics from; additionally, services change IP addresses as instances come and go with client demand.

Metrics in Java Microservices Architectures

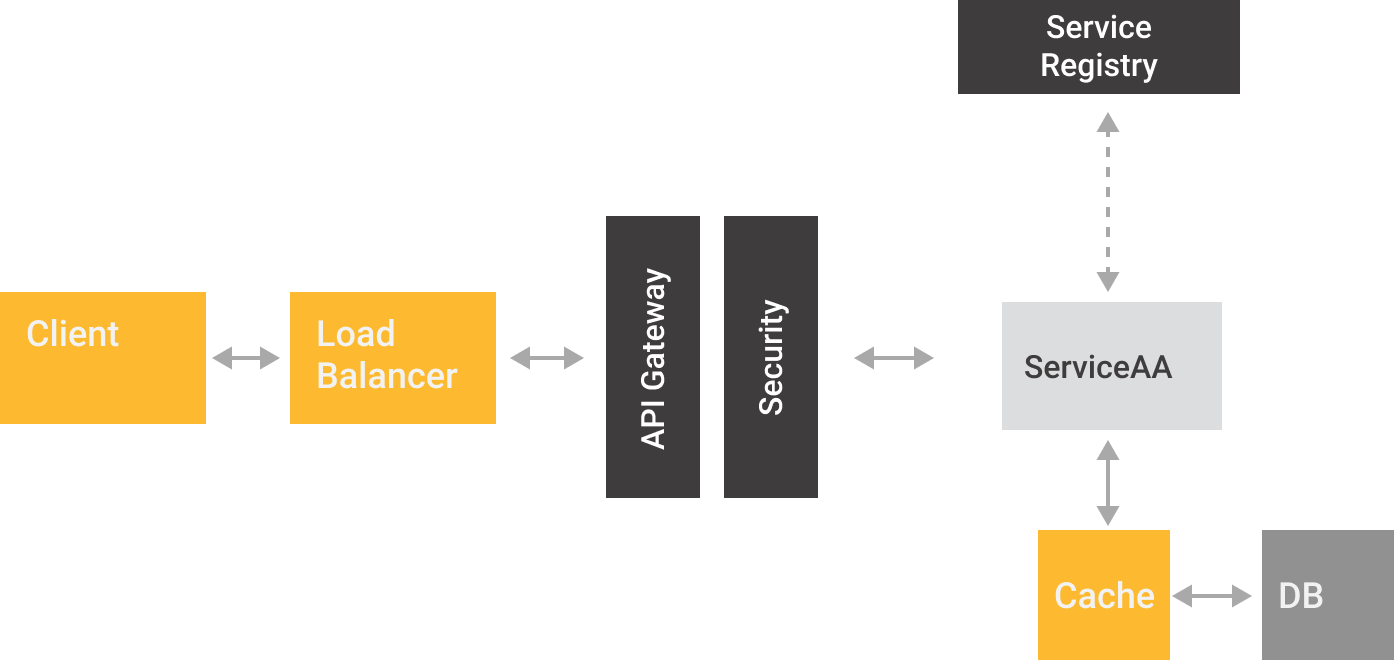

Regardless of the framework or library, the reactive architectural style strongly suggests the use of containers and/or microservices, especially for resilience and elasticity.

In a typical microservices architecture, services are basically short-lived "workers," which may have clones that react to changes in client demand. These environments are often orchestrated with tools like Docker Swarm or Kubernetes, and each service is responsible for registering itself with a service registry that acts as a directory. The registry is therefore the ideal source for any metrics tool to read service locations and then pull and store the corresponding metrics.
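The registry-driven pull model can be sketched as follows. This is a hypothetical illustration, not the API of Kubernetes, Docker Swarm, or any real registry: the registry snapshot is assumed to be a plain map from service name to host:port addresses, and the /metrics path is assumed from the endpoints discussed later in this article.

```java
import java.net.URI;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

// Hypothetical: turns a registry snapshot into the URLs a metrics collector should pull.
class ScrapeTargets {

    // One metrics URL per live instance. A pull-based collector would re-run this
    // on every cycle, since instances (and their IPs) come and go with demand.
    static List<URI> fromRegistry(Map<String, List<String>> registry, String metricsPath) {
        List<URI> targets = new ArrayList<>();
        for (Map.Entry<String, List<String>> service : registry.entrySet()) {
            for (String address : service.getValue()) {
                targets.add(URI.create("http://" + address + metricsPath));
            }
        }
        return targets;
    }

    public static void main(String[] args) {
        // Hypothetical service names and addresses
        Map<String, List<String>> registry = Map.of(
                "omdb-service", List.of("10.0.0.5:8080"),
                "movie-service", List.of("10.0.0.7:8080", "10.0.0.8:8080"));
        fromRegistry(registry, "/metrics").forEach(System.out::println);
    }
}
```

Recomputing the target list from the registry, instead of hard-coding hosts, is what lets the collector follow short-lived instances.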

Metrics With Java EE and Eclipse MicroProfile

Despite early efforts in the EE space, such as administrative APIs with metrics, the popularity of microservices made a formal metrics standard a necessity. Dropwizard Metrics was one of the pioneers in covering the need for a telemetry toolkit, with specific CDI extensions.

Along these lines, the MicroProfile project has included support for health checks and metrics in its recent versions (1.4 and 2.0). Many current Dropwizard users will notice that the annotations are similar, if not identical. In fact, MicroProfile annotations are based directly on Dropwizard's 3.2.3 API.

To distinguish between the two concepts: the Health Check API answers a simple question, "Is the service running, and how well is it doing?", while Metrics present instantaneous or periodic measurements of how services react to consumer requests.

The latest version of MicroProfile (2.0) includes Metrics 1.1, which supports:

- Counted

- Gauge

- Metered

- Timed

- Histogram

It is worth trying them out with practical use cases.

Metrics With Payara and Eclipse MicroProfile

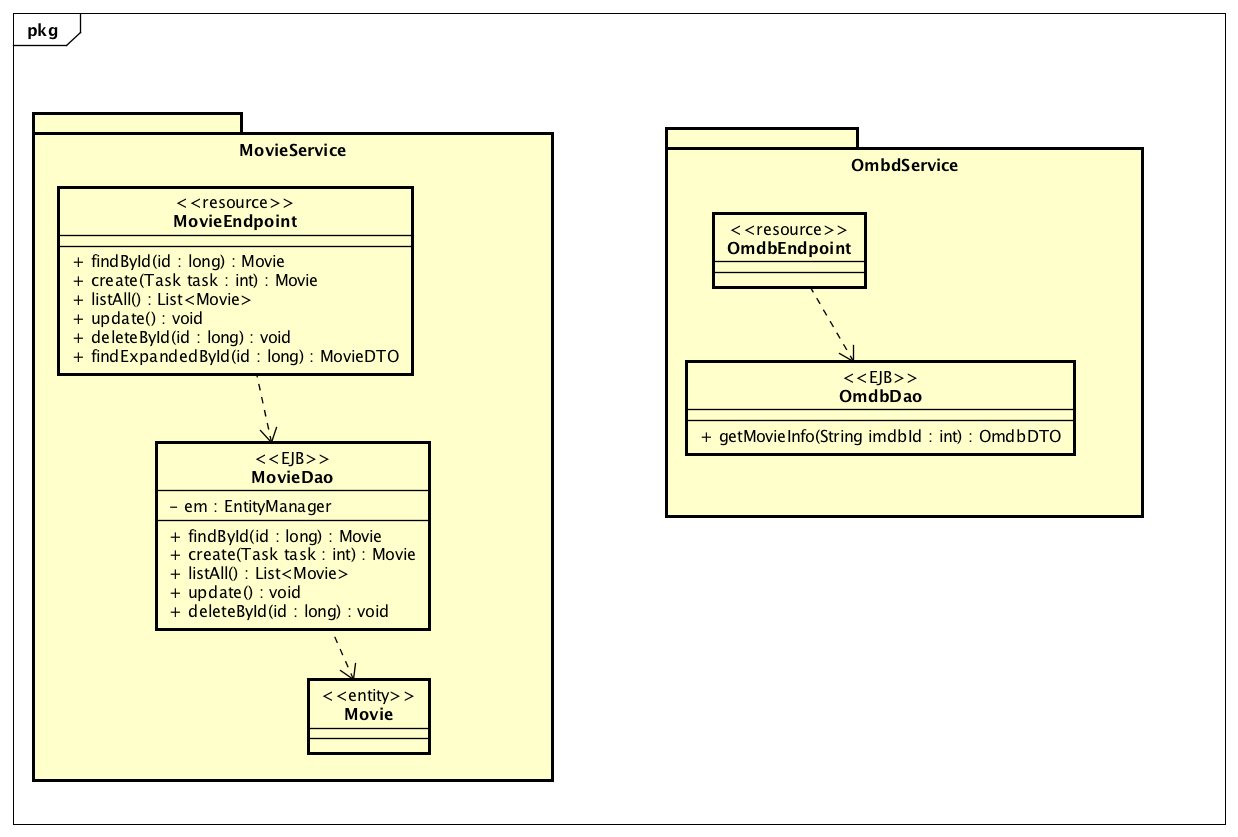

For this test, we will use an application composed of two microservices, as described in the diagram above.

The scenario includes two microservices: OmdbService, which retrieves up-to-date movie information from OMDB, and MovieService, which obtains movie information from a relational database and combines it with the OMDB plot. The project code is available on GitHub.

To activate support for MicroProfile 2.0, two things are needed: the right dependency in pom.xml, and deployment of the application on a MicroProfile-compatible implementation, such as Payara Micro.

<dependency>

<groupId>org.eclipse.microprofile</groupId>

<artifactId>microprofile</artifactId>

<type>pom</type>

<version>2.0.1</version>

<scope>provided</scope>

</dependency>MicroProfile uses a basic convention in regards of metrics, presenting three levels:

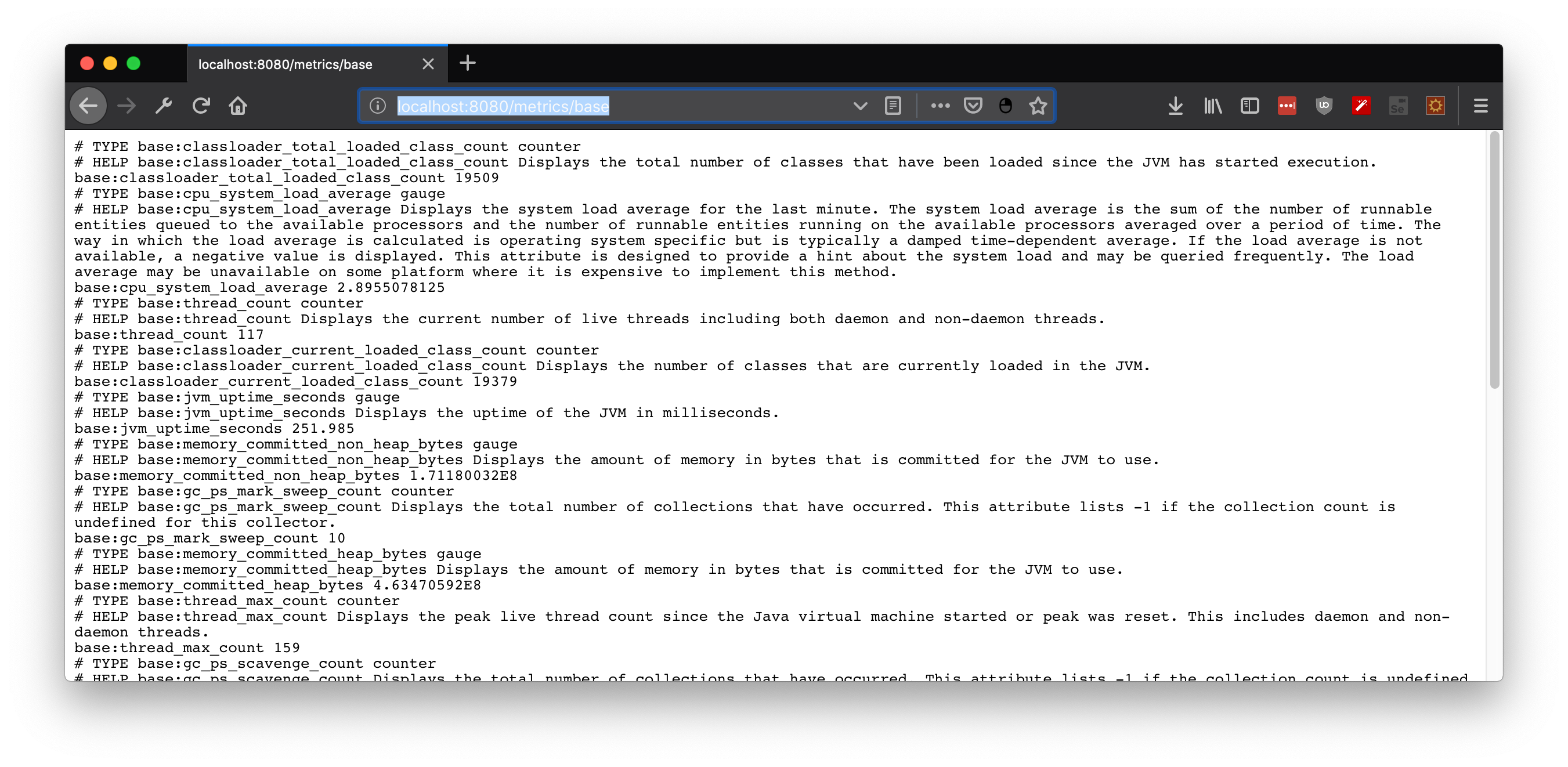

- Base: Mandatory Metrics for all MicroProfile implementations, located at

/metrics/base - Application: Custom metrics exposed by the developer, located at

/metrics/application - Vendor: Any MicroProfile implementation could implement its own variant, located at

/metrics/vendor

Depending on the request's Accept header, metrics are available in JSON or OpenMetrics format, the latter popularized by Prometheus, a Cloud Native Computing Foundation project.

Practical Use Cases

So far, we've established that:

- You can monitor your Java application by using telemetric APIs and JMX.

- Reactive applications present new challenges, especially due to the dynamic and short-lived nature of microservices.

- JMX is sometimes difficult to implement in container- or PaaS-based deployments.

- MicroProfile Metrics is a new proposal for Java (Jakarta) EE environments, working for both monoliths and microservices architectures.

Case 0: Telemetry From JVM

To take a quick look at MicroProfile Metrics, boot a MicroProfile-compliant application server or microservice framework with any deployment. Since Payara Micro is MicroProfile-compatible, metrics are available from the start at http://localhost:8080/metrics/base.

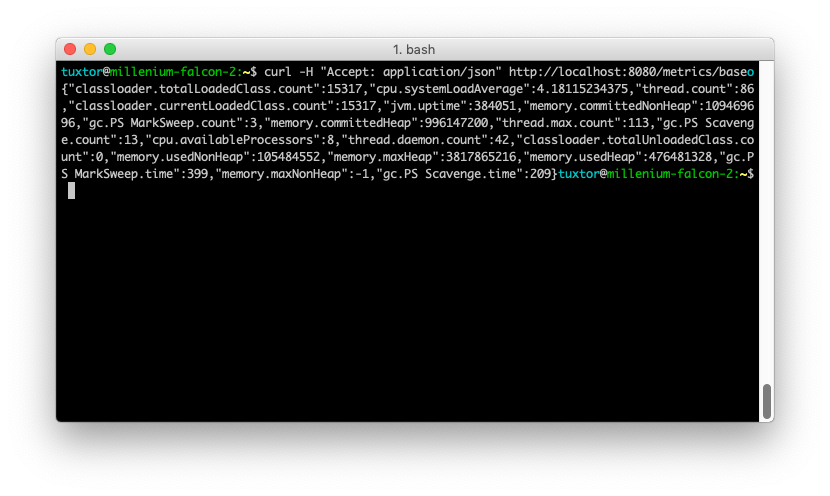

You can change the Accept request header to obtain JSON instead; with curl, for instance:

```shell
curl -H "Accept: application/json" http://localhost:8080/metrics/base
```

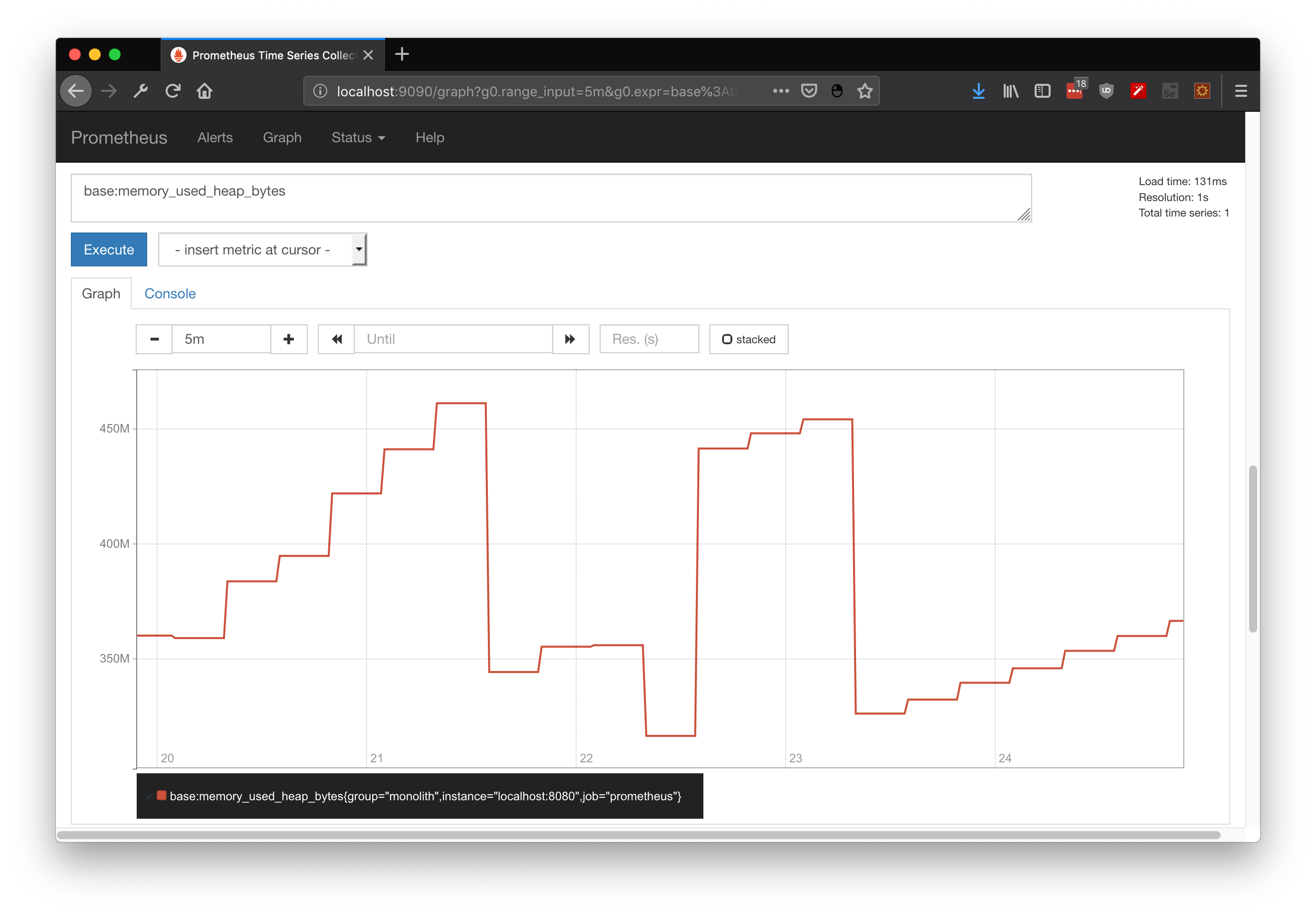

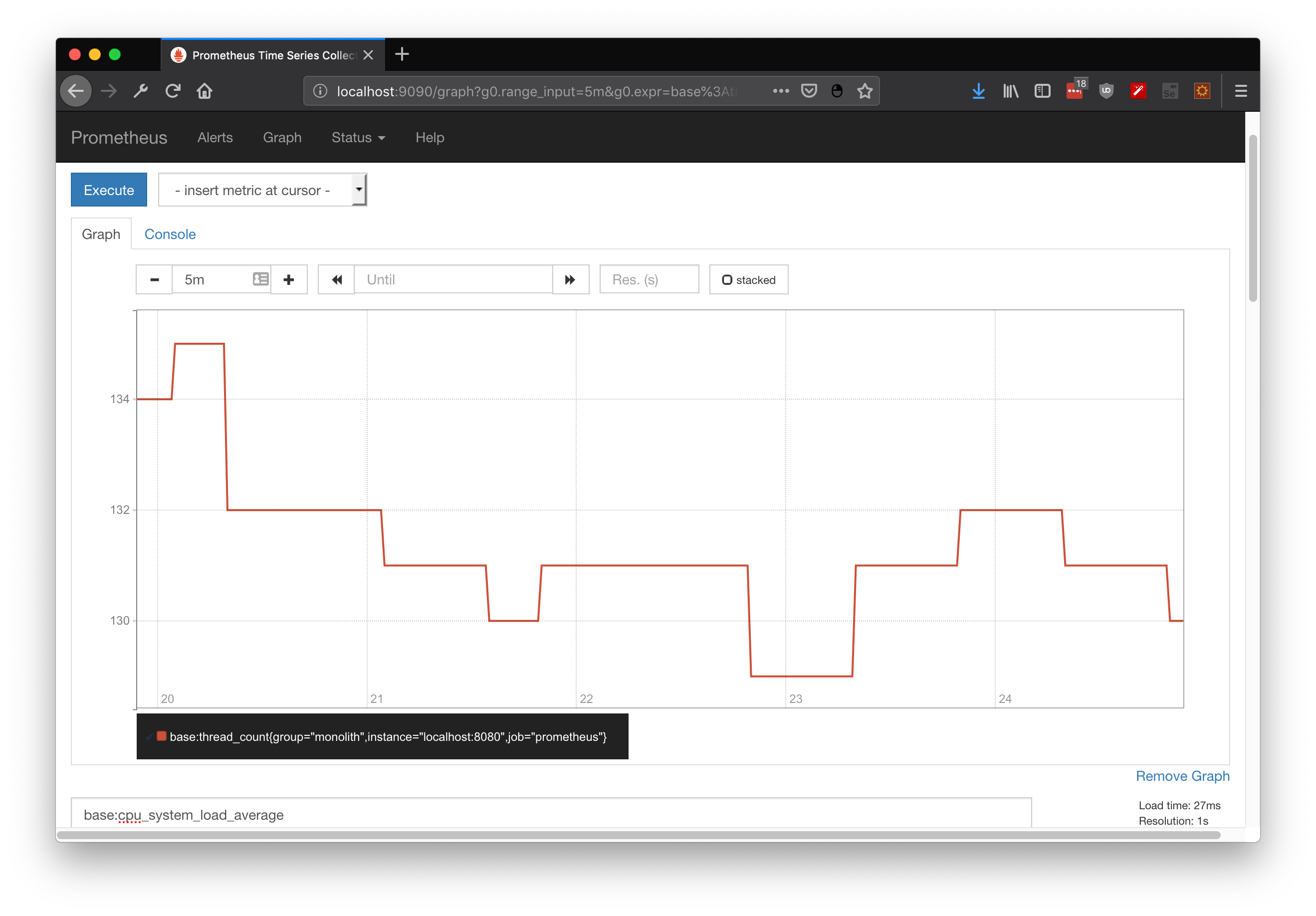

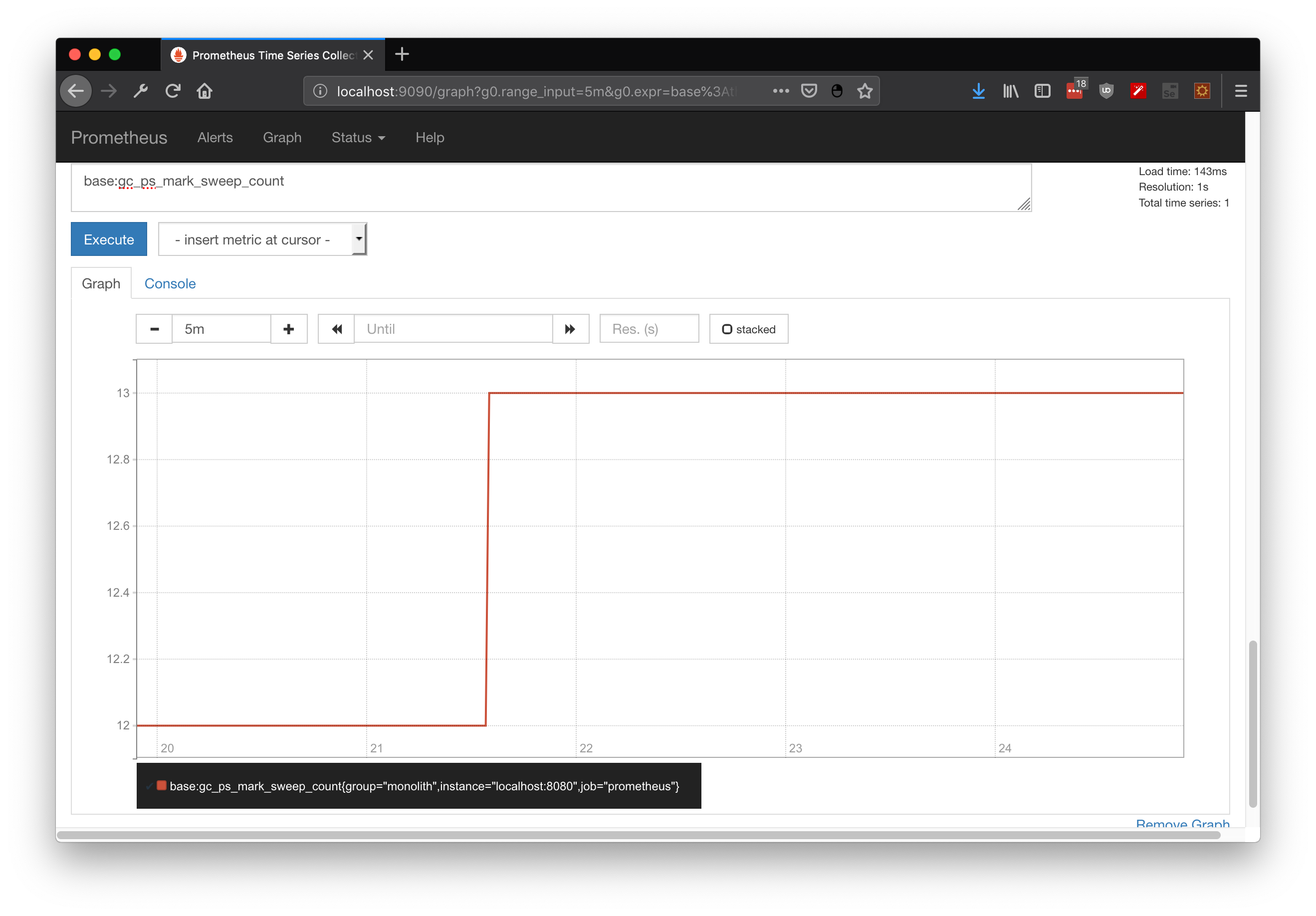
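The same request can be made from Java. This is a minimal sketch, assuming Java 11+ (java.net.http) and Payara Micro listening on localhost:8080 as above; BaseMetricsClient is a hypothetical name. Only the Accept header changes which format the endpoint returns.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

// Hypothetical client: Java equivalent of the curl call above.
class BaseMetricsClient {

    // Builds a GET for /metrics/base that asks for JSON instead of OpenMetrics text
    static HttpRequest jsonRequest(String baseUrl) {
        return HttpRequest.newBuilder(URI.create(baseUrl + "/metrics/base"))
                .header("Accept", "application/json")
                .GET()
                .build();
    }

    public static void main(String[] args) throws IOException, InterruptedException {
        HttpClient client = HttpClient.newHttpClient();
        HttpResponse<String> response = client.send(
                jsonRequest("http://localhost:8080"), HttpResponse.BodyHandlers.ofString());
        System.out.println(response.statusCode());
        System.out.println(response.body());
    }
}
```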

By themselves, metrics are just an up-to-date snapshot of the platform state. To compare these snapshots over time, metrics must be retrieved at regular intervals, or you can integrate Prometheus, which does that for you. Here are some useful Prometheus queries for JVM state, covering heap usage, CPU load, and GC executions:

```
base:memory_used_heap_bytes
base:cpu_system_load_average
base:gc_ps_mark_sweep_count
```
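Retrieving metrics at regular intervals, as described above, can be sketched with a ScheduledExecutorService. This is a minimal sketch under stated assumptions: PeriodicSampler is a hypothetical class, and the used-heap value in main is approximated with Runtime.totalMemory() minus Runtime.freeMemory() rather than read from /metrics/base.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Executors;
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.TimeUnit;
import java.util.function.LongSupplier;

// Hypothetical poller: samples one metric at a fixed rate so snapshots
// can be compared over time (Prometheus does this when it scrapes).
class PeriodicSampler {
    private final LongSupplier metric;
    private final List<Long> samples = new ArrayList<>();
    private final ScheduledExecutorService scheduler =
            Executors.newSingleThreadScheduledExecutor();

    PeriodicSampler(LongSupplier metric) {
        this.metric = metric;
    }

    // Takes one snapshot and stores it
    synchronized void sampleOnce() {
        samples.add(metric.getAsLong());
    }

    // Copy of the time series collected so far
    synchronized List<Long> samples() {
        return new ArrayList<>(samples);
    }

    void start(long period, TimeUnit unit) {
        scheduler.scheduleAtFixedRate(this::sampleOnce, 0, period, unit);
    }

    void stop() {
        scheduler.shutdown();
    }

    public static void main(String[] args) throws InterruptedException {
        Runtime rt = Runtime.getRuntime();
        PeriodicSampler heap = new PeriodicSampler(() -> rt.totalMemory() - rt.freeMemory());
        heap.start(1, TimeUnit.SECONDS);
        Thread.sleep(5_000);
        heap.stop();
        System.out.println("Heap samples (bytes): " + heap.samples());
    }
}
```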

Case 1: Metrics for Microservices

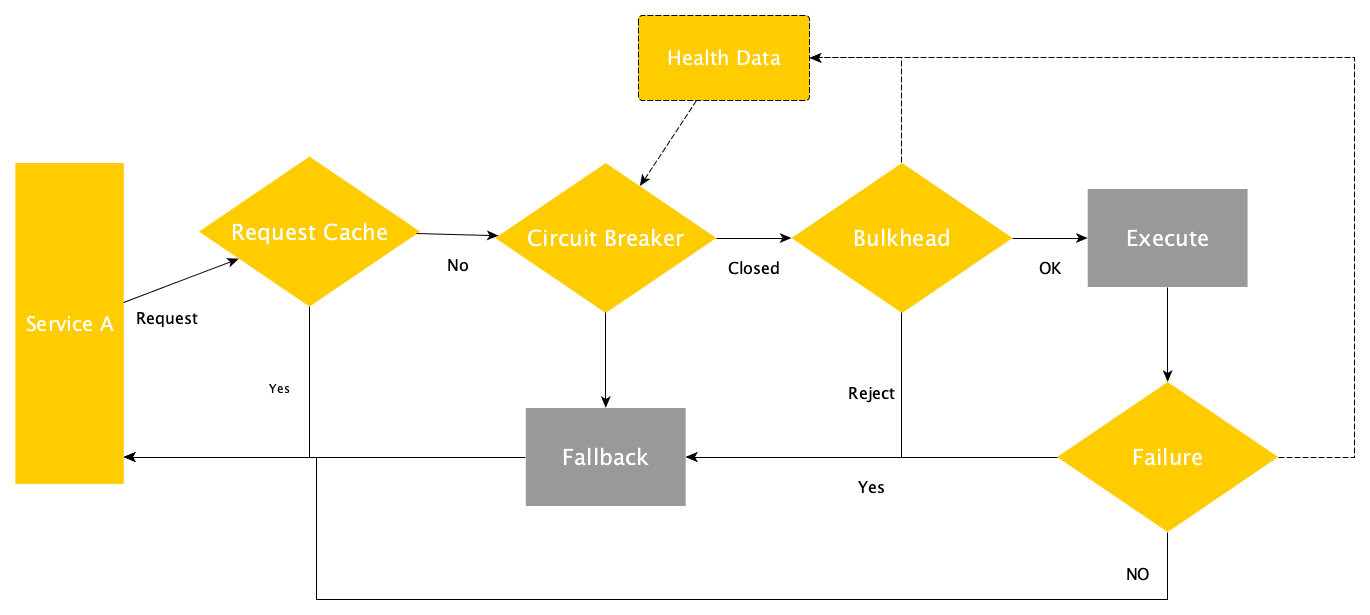

In a typical "fully fault-tolerant" request from one microservice to another, the communication flow goes through the following decisions:

- Whether to use a cache to serve the request.

- Whether to cut off communication (circuit breaker) and execute a fallback method because a failure threshold has been reached.

- Whether to reject the request because a bulkhead has been exhausted, executing a fallback method instead.

- Whether to execute a fallback method because the execution failed.
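The decision flow above can be sketched in plain Java to show where metrics come in. This is a hypothetical, simplified illustration, not how MicroProfile Fault Tolerance is implemented: the cache step is omitted, the bulkhead is a Semaphore, and the circuit breaker opens after a fixed number of consecutive failures and never closes again.

```java
import java.util.concurrent.Semaphore;
import java.util.function.Supplier;

// Hypothetical guard combining a bulkhead, a circuit breaker, and a fallback.
class GuardedCall {
    private final Semaphore bulkhead;
    private final int failureThreshold;
    private int consecutiveFailures = 0; // metric that drives the breaker
    private int fallbackCount = 0;       // metric worth exposing (see Case 1.1)

    GuardedCall(int maxConcurrentCalls, int failureThreshold) {
        this.bulkhead = new Semaphore(maxConcurrentCalls);
        this.failureThreshold = failureThreshold;
    }

    synchronized boolean circuitOpen() {
        return consecutiveFailures >= failureThreshold;
    }

    synchronized int fallbackCount() {
        return fallbackCount;
    }

    <T> T call(Supplier<T> primary, Supplier<T> fallback) {
        // Circuit breaker: threshold reached, skip the remote call entirely
        if (circuitOpen()) {
            return useFallback(fallback);
        }
        // Bulkhead: no free slot, reject and fall back
        if (!bulkhead.tryAcquire()) {
            return useFallback(fallback);
        }
        try {
            T result = primary.get();
            synchronized (this) { consecutiveFailures = 0; }
            return result;
        } catch (RuntimeException e) {
            // Failed execution: record it and fall back
            synchronized (this) { consecutiveFailures++; }
            return useFallback(fallback);
        } finally {
            bulkhead.release(); // only reached after a successful tryAcquire
        }
    }

    private <T> T useFallback(Supplier<T> fallback) {
        synchronized (this) { fallbackCount++; }
        return fallback.get();
    }

    public static void main(String[] args) {
        GuardedCall guard = new GuardedCall(10, 3);
        String plot = guard.call(() -> { throw new IllegalStateException("OMDB unreachable"); },
                () -> "Plot not available");
        System.out.println(plot + " (fallbacks so far: " + guard.fallbackCount() + ")");
    }
}
```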

Many of the caveats of developing microservices come from the fact that you are dealing with distributed computation, so you need new patterns that themselves depend on metrics. If these metrics are also exposed, you gain data for improvements, diagnosis, and issue management.

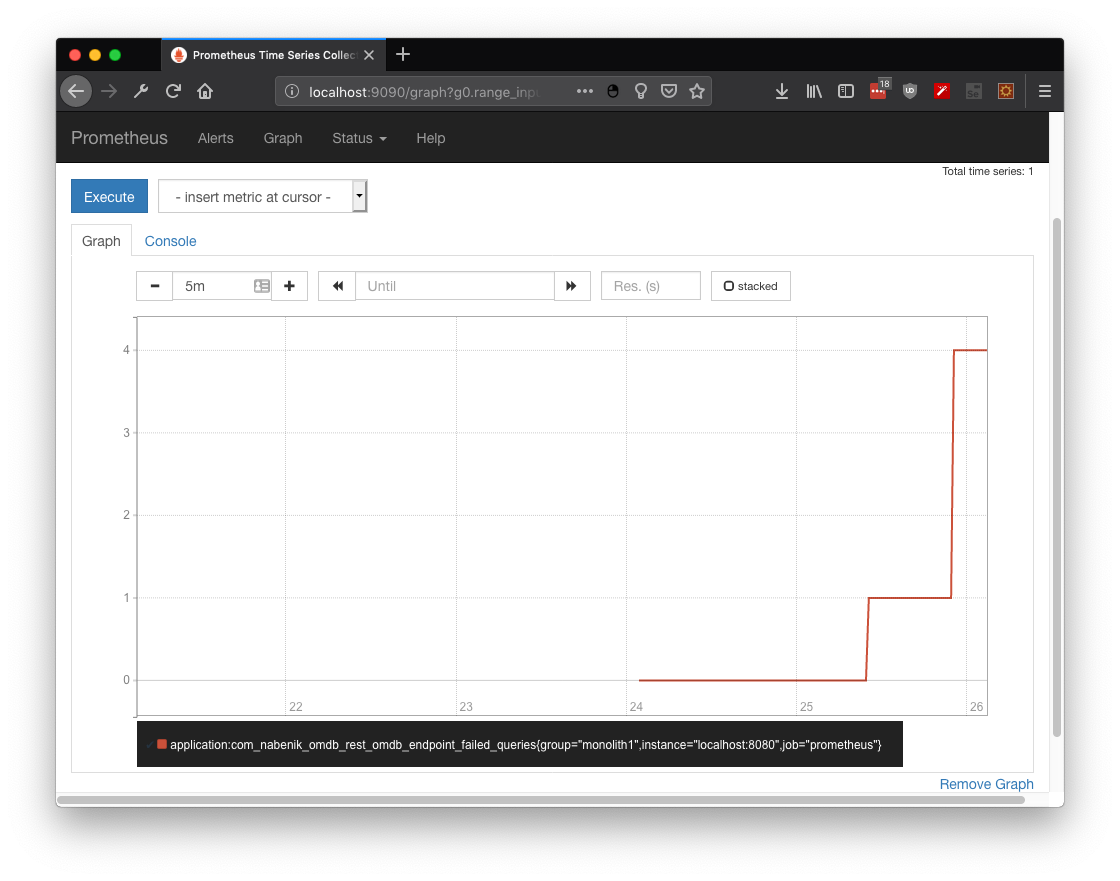

Case 1.1: Counted to Retrieve Failed Hits

The first metric to implement is a counter (the Counter type behind @Counted), a pretty simple one: its value is incremented or decremented over time. In this use case, a Counter injected directly into a JAX-RS service counts how many times the service reached the fallback alternative:

```java
// Counter registered and injected by the MicroProfile Metrics runtime
@Inject
@Metric
Counter failedQueries;

...

@GET
@Path("/{id:[a-z]*[0-9][0-9]*}")
@Fallback(fallbackMethod = "findByIdFallBack")
@Timeout(TIMEOUT)
public Response findById(@PathParam("id") final String imdbId) {
    ...
}

public Response findByIdFallBack(@PathParam("id") final String imdbId) {
    ...
    // One more request served by the fallback instead of OMDB
    failedQueries.inc();
    // ... the fallback Response is returned here
}
```

After simulating a couple of failed queries against the OMDB database (with no internet connection), the metric application:com_nabenik_omdb_rest_omdb_endpoint_failed_queries shows how many times the service has invoked the fallback method:

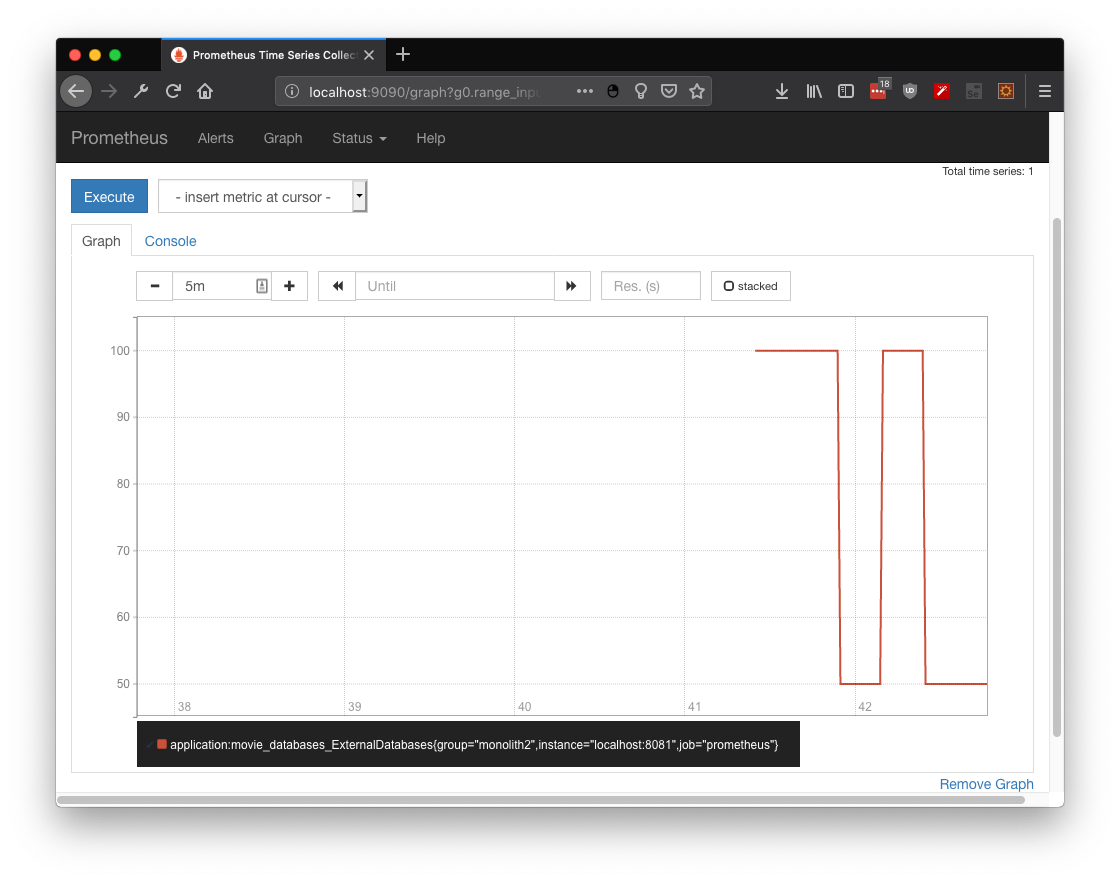
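The long metric name above follows a pattern that can be approximated in a few lines. This is a hypothetical helper, not part of the MicroProfile API, and it only reproduces the names shown in this article. It assumes the counter's full name is com.nabenik.omdb.rest.OmdbEndpoint.failedQueries (class plus field name, as the Prometheus name suggests): dots and spaces become underscores, camelCase becomes snake_case, and the scope and unit are added. Type-specific suffixes such as _total are not handled.

```java
// Hypothetical helper approximating the OpenMetrics names seen in this article.
class OpenMetricsNames {

    static String toOpenMetrics(String scope, String name, String unit) {
        StringBuilder out = new StringBuilder();
        char previous = 0;
        for (char c : name.toCharArray()) {
            if (Character.isUpperCase(c)) {
                // camelCase boundary: "_" before an upper-case letter that follows lower-case or digit
                if (Character.isLowerCase(previous) || Character.isDigit(previous)) {
                    out.append('_');
                }
                out.append(Character.toLowerCase(c));
            } else if (Character.isLetterOrDigit(c)) {
                out.append(c);
            } else {
                // Dots, spaces, and other separators become "_"
                out.append('_');
            }
            previous = c;
        }
        String snake = out.toString().replaceAll("_+", "_"); // collapse repeated underscores
        if (unit != null && !unit.isEmpty()) {
            snake = snake + "_" + unit; // unit appended as-is
        }
        return scope + ":" + snake;
    }

    public static void main(String[] args) {
        System.out.println(toOpenMetrics("base", "memory.usedHeap", "bytes")); // prints base:memory_used_heap_bytes
    }
}
```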

Case 1.2: Gauge to Create Your Own Metric

Although you could rely on simple counters to describe the state of a given service, a gauge lets you create your own metric, such as a dummy metric that displays 100 or 50 depending on whether a random number is even or odd:

```java
@Gauge(unit = "ExternalDatabases", name = "movieDatabases", absolute = true)
public long getDatabases() {
    // Random number between 0 and 99
    int number = (int) (Math.random() * 100);
    int criteria = number % 2;
    // Even number: report 100; odd number: report 50
    if (criteria == 0) {
        return 100;
    } else {
        return 50;
    }
}
```

Again, you can search for the metric in Prometheus, specifically application:movie_databases_ExternalDatabases.
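What sets a gauge apart from a counter is that it holds no value of its own: the value is computed each time the metric is read. A minimal plain-Java sketch of that idea follows; SimpleGauge is hypothetical, not the MicroProfile @Gauge machinery, and main uses a more realistic source than a random number: the JVM's live thread count.

```java
import java.lang.management.ManagementFactory;
import java.util.function.LongSupplier;

// Hypothetical gauge: stores only how to compute the value, not the value itself.
class SimpleGauge {
    private final LongSupplier source;

    SimpleGauge(LongSupplier source) {
        this.source = source;
    }

    // Evaluated on every read, like a gauge method invoked at scrape time
    long read() {
        return source.getAsLong();
    }

    public static void main(String[] args) {
        SimpleGauge threads = new SimpleGauge(
                () -> ManagementFactory.getThreadMXBean().getThreadCount());
        System.out.println("Live threads: " + threads.read());
    }
}
```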

Case 1.3: Metered to Analyze Request Totals

Are you charging your API per request? Don't worry, you can measure the usage rate with @Metered.

@Metered(name = "moviesRetrieved",

unit = MetricUnits.MINUTES,

description = "Metrics to monitor movies",

absolute = true)

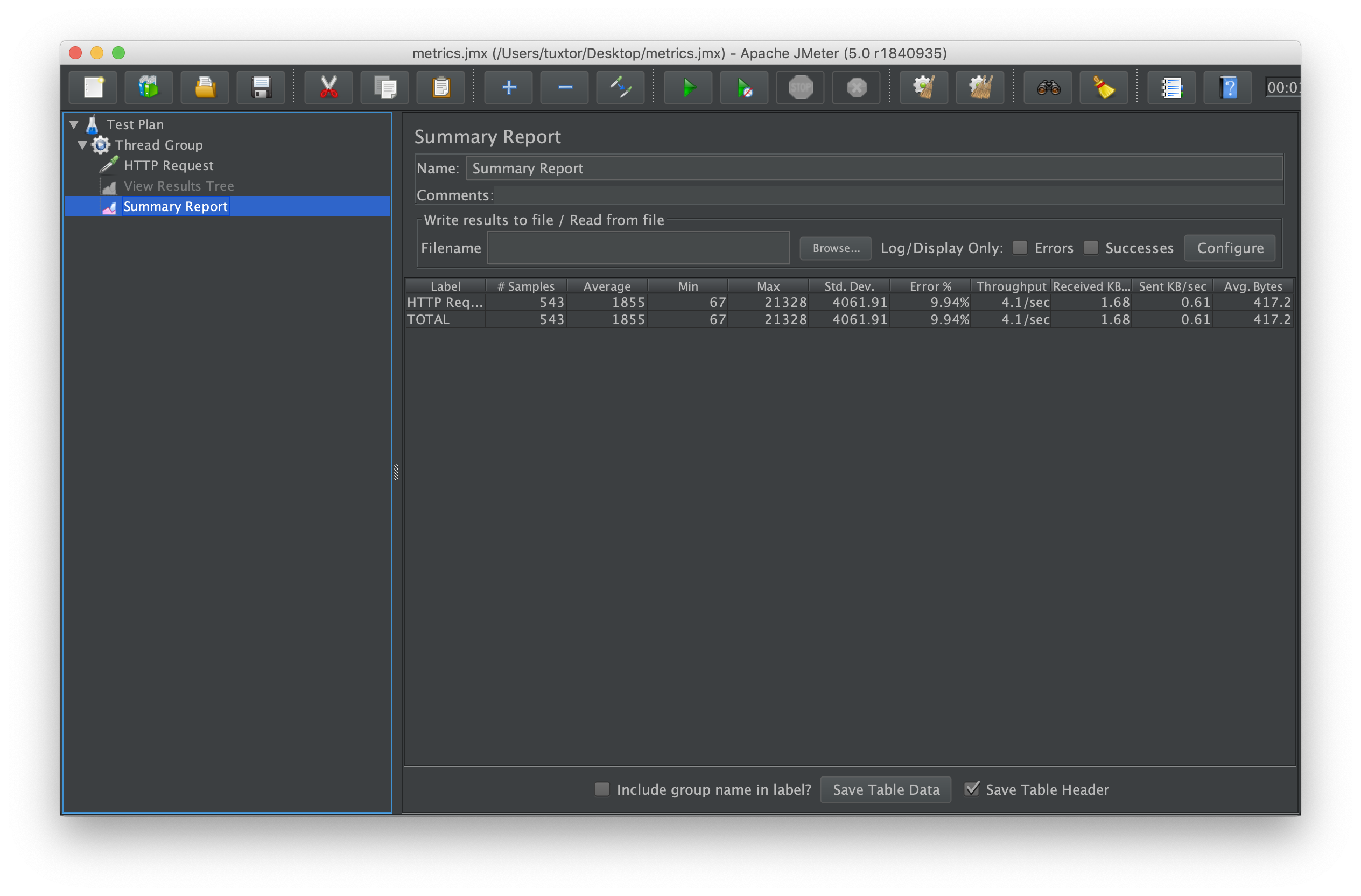

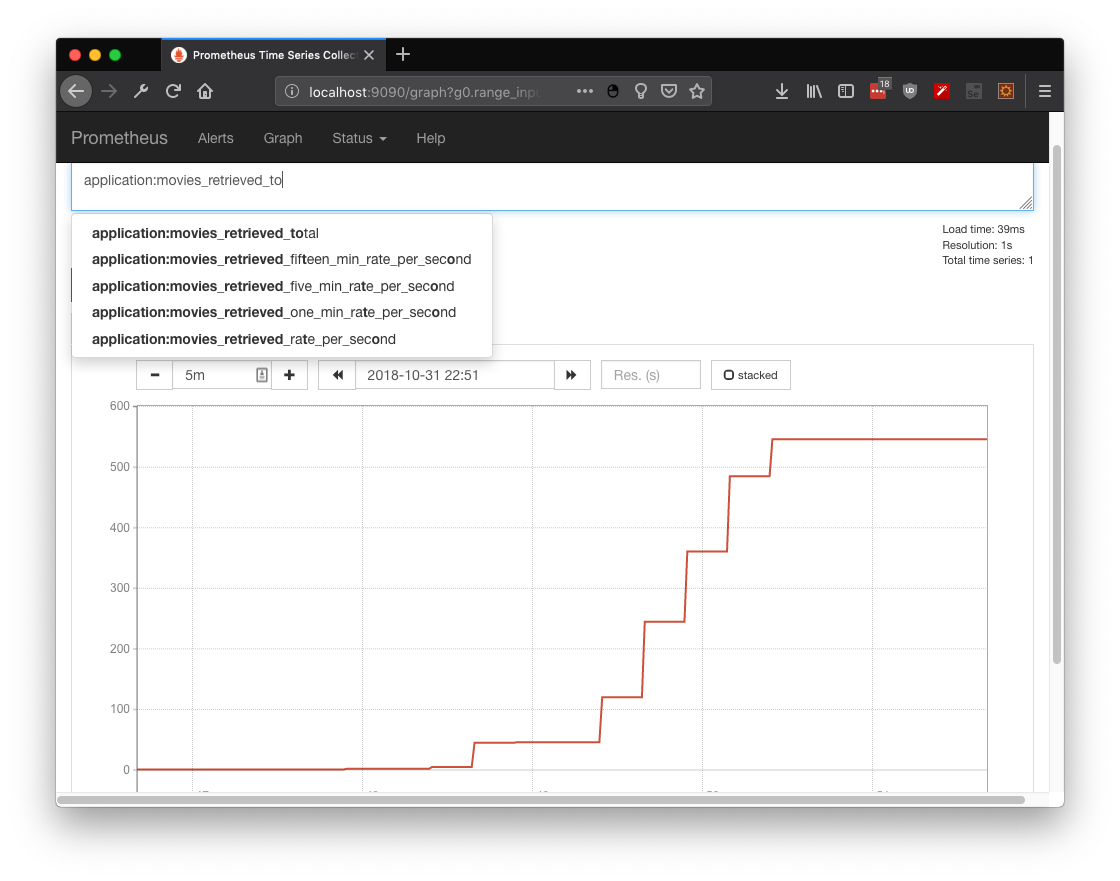

public Response findExpandedById(@PathParam("id") final Long id) In this practical use case, 500 +/- requests were simulated over a one minute period. As you could observe from the metric application:movies_retrieved_total, the stress test from JMeter and Prometheus show the same information:
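A meter is essentially a count plus rates derived from it; about 500 requests over one minute corresponds to a mean rate of roughly 500 / 60 ≈ 8.3 requests per second. The sketch below (SimpleMeter is hypothetical) computes only that mean rate, with an injectable clock so it can be tested; real meters also track 1-, 5-, and 15-minute exponentially weighted rates.

```java
import java.util.concurrent.atomic.LongAdder;
import java.util.function.LongSupplier;

// Hypothetical, simplified meter: event count plus mean rate since creation.
class SimpleMeter {
    private final LongAdder count = new LongAdder(); // thread-safe counter
    private final LongSupplier nanoClock;            // injectable for testing
    private final long startNanos;

    SimpleMeter(LongSupplier nanoClock) {
        this.nanoClock = nanoClock;
        this.startNanos = nanoClock.getAsLong();
    }

    SimpleMeter() {
        this(System::nanoTime);
    }

    // Call once per handled request
    void mark() {
        count.increment();
    }

    long count() {
        return count.sum();
    }

    // Events per second since the meter was created
    double meanRatePerSecond() {
        double elapsedSeconds = (nanoClock.getAsLong() - startNanos) / 1e9;
        return elapsedSeconds <= 0 ? 0.0 : count() / elapsedSeconds;
    }

    public static void main(String[] args) throws InterruptedException {
        SimpleMeter requests = new SimpleMeter();
        for (int i = 0; i < 50; i++) {
            requests.mark();
            Thread.sleep(10); // simulated gap between requests
        }
        System.out.printf("count=%d, mean rate=%.1f/s%n", requests.count(), requests.meanRatePerSecond());
    }
}
```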

Case 1.4: Timed to Analyze Your Response Performance

If used properly, @Timed will give you information about requests performance over time units.

@Timed(name = "moviesDelay",

description = "Metrics to monitor the time for movies retrieval",

unit = MetricUnits.MINUTES,

absolute = true)

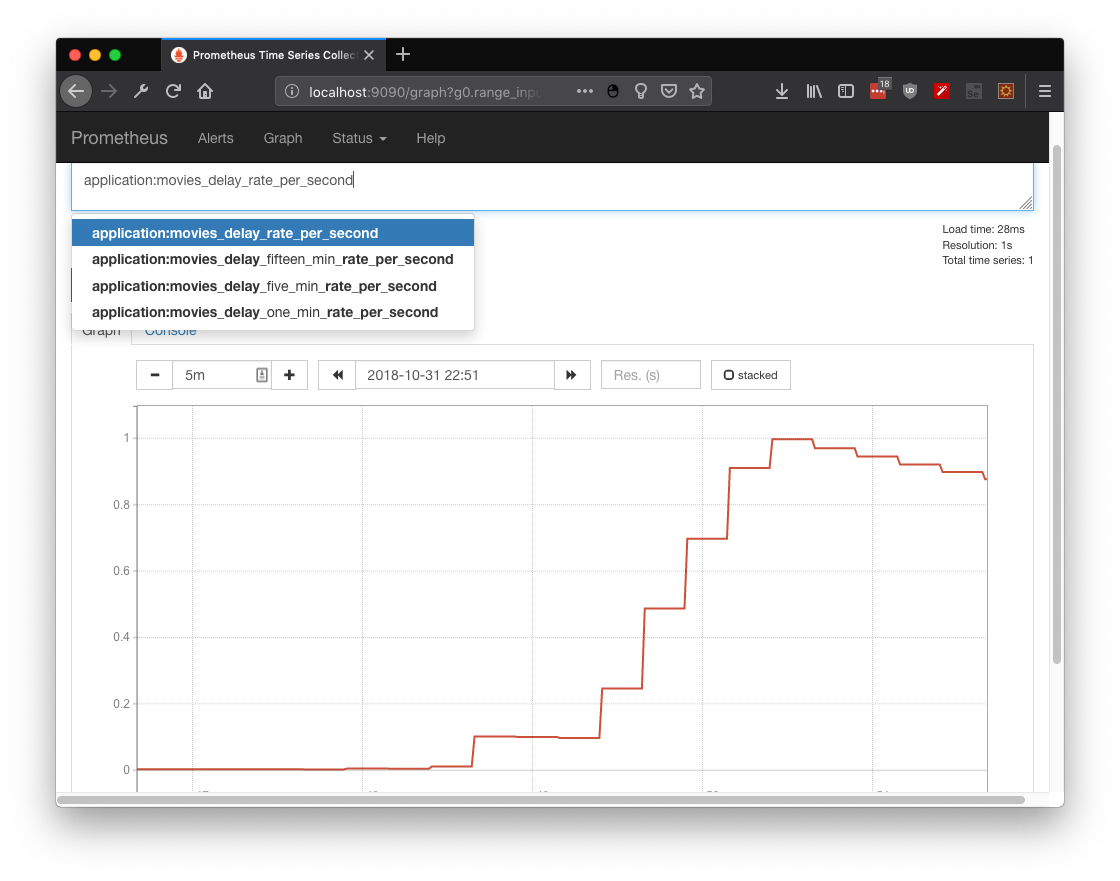

public Response findExpandedById(@PathParam("id") final Long id)By retrieving the metric application:movies_delay_rate_per_second, it's observable that requests take more time to complete at the end of the stress test (as expected with more traffic, less bandwidth and more time to answer):
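Conceptually, a timer wraps each call, measures its duration, and aggregates the durations. The sketch below is a simplified, hypothetical illustration (SimpleTimer is not the MicroProfile Timer): it keeps every duration in memory, whereas real timers keep a bounded sample and also track throughput rates such as the one queried above.

```java
import java.time.Duration;
import java.util.ArrayList;
import java.util.List;
import java.util.function.Supplier;

// Hypothetical, simplified timer: records how long each call takes.
class SimpleTimer {
    private final List<Duration> durations = new ArrayList<>();

    // Runs the task, records its elapsed time (even if it throws), and returns its result
    <T> T time(Supplier<T> task) {
        long start = System.nanoTime();
        try {
            return task.get();
        } finally {
            record(Duration.ofNanos(System.nanoTime() - start));
        }
    }

    synchronized void record(Duration d) {
        durations.add(d);
    }

    synchronized long count() {
        return durations.size();
    }

    synchronized Duration mean() {
        if (durations.isEmpty()) return Duration.ZERO;
        long totalNanos = 0;
        for (Duration d : durations) totalNanos += d.toNanos();
        return Duration.ofNanos(totalNanos / durations.size());
    }

    synchronized Duration max() {
        Duration max = Duration.ZERO;
        for (Duration d : durations) if (d.compareTo(max) > 0) max = d;
        return max;
    }

    public static void main(String[] args) {
        SimpleTimer timer = new SimpleTimer();
        for (int i = 0; i < 5; i++) {
            timer.time(() -> {
                try {
                    Thread.sleep(20); // simulated work, e.g. a database lookup
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                }
                return null;
            });
        }
        System.out.println("count=" + timer.count() + ", mean=" + timer.mean() + ", max=" + timer.max());
    }
}
```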

Case 1.5: Histogram to Accumulate Useful Information

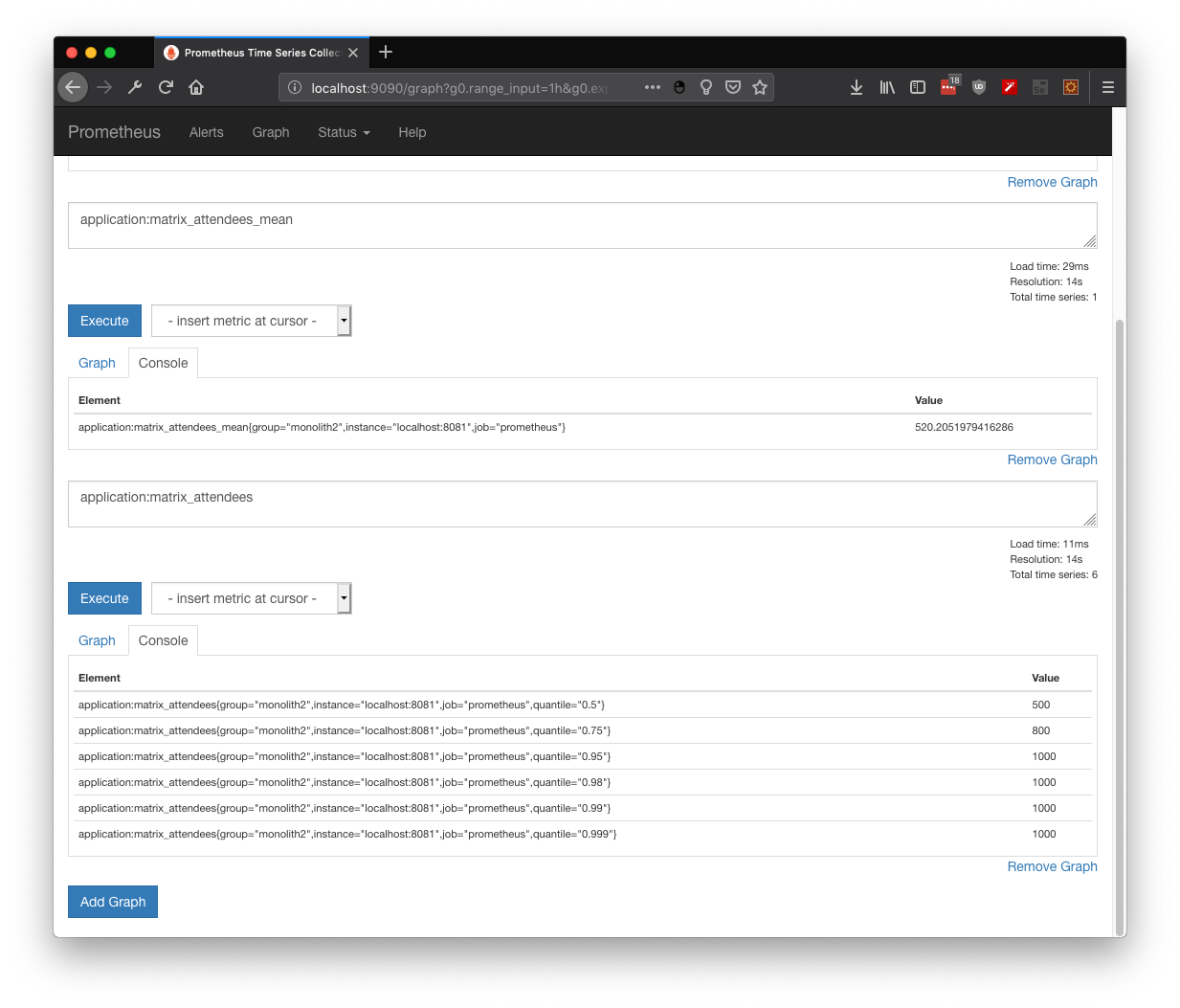

As described on Wikipedia, a histogram is an accurate representation of the distribution of numerical data. We can create our own distribution by directly manipulating the metrics API with any given data, such as global attendee counts:

```java
@Inject
MetricRegistry registry;

@POST
@Path("/add/{attendees}")
public Response addAttendees(@PathParam("attendees") Long attendees) {
    // Histogram metadata (MicroProfile Metrics 1.x constructor)
    Metadata metadata = new Metadata("matrix attendees", MetricType.HISTOGRAM);
    // Returns the registered histogram, creating it on first use
    Histogram histogram = registry.histogram(metadata);
    // Add this value to the distribution
    histogram.update(attendees);
    return Response.ok().build();
}
```

As with any distribution, we can then get the min, max, mean, and per-quantile values.
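Those statistics can be sketched in plain Java. This hypothetical SimpleHistogram stores every value and uses the nearest-rank method for quantiles; MicroProfile and Dropwizard histograms instead sample values into a reservoir, so their quantiles may differ slightly. It assumes at least one value has been recorded.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// Hypothetical, simplified histogram: keeps all values, computes stats on demand.
class SimpleHistogram {
    private final List<Long> values = new ArrayList<>();

    synchronized void update(long value) {
        values.add(value);
    }

    synchronized long min() { return Collections.min(values); }

    synchronized long max() { return Collections.max(values); }

    synchronized double mean() {
        long sum = 0;
        for (long v : values) sum += v;
        return (double) sum / values.size();
    }

    // Nearest-rank quantile: smallest value with at least a fraction q of the data at or below it
    synchronized long quantile(double q) {
        List<Long> sorted = new ArrayList<>(values);
        Collections.sort(sorted);
        int rank = (int) Math.ceil(q * sorted.size());
        return sorted.get(Math.max(rank, 1) - 1);
    }

    public static void main(String[] args) {
        SimpleHistogram attendees = new SimpleHistogram();
        for (long value : new long[] {120, 80, 300, 45, 150}) { // hypothetical attendee counts
            attendees.update(value);
        }
        System.out.printf("min=%d max=%d mean=%.1f p50=%d p95=%d%n",
                attendees.min(), attendees.max(), attendees.mean(),
                attendees.quantile(0.5), attendees.quantile(0.95));
    }
}
```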

Published at DZone with permission of Víctor Orozco. See the original article here.
