How to Categorize Performance Testing Defects

Learn how to categorize defects in your software by severity and priority for the most effective QA strategy and execution.


In manual or automated testing, testers raise defects in a bug tracking tool; teams that follow modern methodologies such as Agile use tools like Jira to create user stories and/or bugs. Each defect has two important attributes: priority and severity. Priority defines how soon the defect needs to be fixed. Severity defines how serious its impact is. A well-known example that separates the two is a company logo with the wrong dimensions in the page header. The logo carries the brand and its value, so wrong dimensions are clearly a defect. When raising this defect, the tester will assign a severity of Trivial and a priority of Critical.

The reason for the difference is that the logo's dimensions do not affect the actual business functions; users can still perform their operations. But the defect must be fixed ASAP, so its priority is Critical: developers need to correct the dimensions in the next build. Now, let's talk about performance defects.
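As a minimal sketch, the logo example can be modeled as a defect record that keeps severity and priority as two independent fields. The `Level` and `Defect` names are hypothetical and are not taken from Jira or any other tracker.

```python
from dataclasses import dataclass
from enum import Enum


class Level(Enum):
    """Hypothetical shared scale for both severity and priority."""
    TRIVIAL = 1
    MINOR = 2
    MAJOR = 3
    CRITICAL = 4
    BLOCKER = 5


@dataclass(frozen=True)
class Defect:
    summary: str
    severity: Level  # how serious the impact on the business function is
    priority: Level  # how soon the fix is needed


# The logo defect: low impact on functionality, but urgent to fix.
logo_defect = Defect(
    summary="Company logo in header has wrong dimensions",
    severity=Level.TRIVIAL,
    priority=Level.CRITICAL,
)
print(logo_defect.severity.name, logo_defect.priority.name)
```

Keeping the two fields separate makes it possible to sort a backlog by priority without losing the information about actual impact.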

Sample Case Study

Assume that you are testing a simple web-based taxi reservation application. Critical business flows include Registration, Login, Booking a Ride, Account, Payment, Help, Driver's Registration, Driver's History, Reports, and Disputes. You will test 25 scenarios to validate performance.

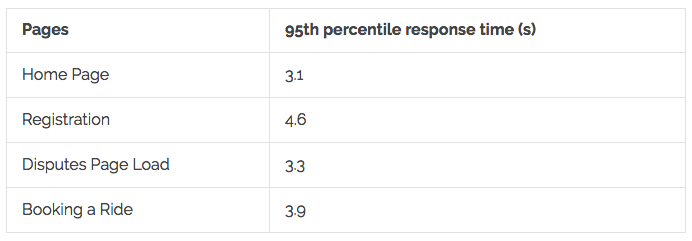

The technical solution design states 3 seconds as the SLA objective for all pages. Once the performance testing exercise is complete, you observe that response times vary across the scenarios.
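One way to make such variances comparable is to express each response time as a percentage over the SLA. The sketch below assumes the 3-second SLA from the case study; the scenario timings are made-up sample values, not results from the article.

```python
SLA_SECONDS = 3.0  # SLA objective from the case study


def sla_overshoot_percent(response_time_s: float, sla_s: float = SLA_SECONDS) -> float:
    """Return how far a response time exceeds the SLA, as a percentage of the SLA.

    A result of 0.0 means the response time is within the SLA.
    """
    if sla_s <= 0:
        raise ValueError("SLA must be positive")
    return max(0.0, (response_time_s - sla_s) / sla_s * 100.0)


# Hypothetical measurements (seconds) for three of the scenarios.
measured = {"Login": 2.4, "Booking a Ride": 4.5, "Payment": 3.3}

for scenario, seconds in measured.items():
    print(f"{scenario}: {sla_overshoot_percent(seconds):.1f}% over SLA")
```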

Scenario

Now the question is: how do you raise your performance defects in Jira, QC, Bugzilla, or your favorite bug tracking tool?

What will the severity and priority be for each defect or story? This article addresses both questions.

How to Categorize Performance Testing Defects

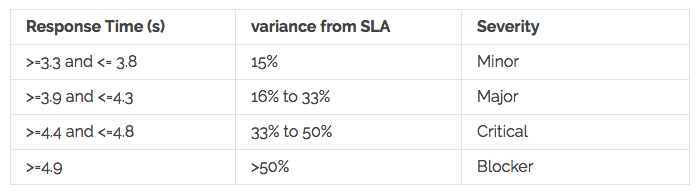

You need to define the performance defect categories well ahead of time with the help of your architects or business analysts (not developers). From my Agile experience, I would categorize performance testing defects into four categories: Blocker, Critical, Major, and Minor. You can ignore trivial issues.

Blocker

Assume that you get a new code deployment in your test environment. When you launch the page, the first paint takes more than 5 seconds and the complete page takes more than 10 seconds to render in Internet Explorer, which completely blocks your tests. Under load, this will certainly surface even more issues. You can raise this defect as a Blocker without proceeding with your tests.
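A pre-test smoke check along these lines could be sketched as follows. The 5-second and 10-second limits come from the example above; the `PageTiming` type is hypothetical, and treating a breach of either limit as a Blocker is an assumption, since the example only describes the case where both are exceeded.

```python
from dataclasses import dataclass

FIRST_PAINT_LIMIT_S = 5.0   # first-paint limit from the example
FULL_RENDER_LIMIT_S = 10.0  # full-render limit from the example


@dataclass(frozen=True)
class PageTiming:
    """Hypothetical single-user timing captured before the load test starts."""
    first_paint_s: float
    full_render_s: float


def is_blocker(timing: PageTiming) -> bool:
    # Assumption: breaching either limit is enough to stop the test run.
    return (
        timing.first_paint_s > FIRST_PAINT_LIMIT_S
        or timing.full_render_s > FULL_RENDER_LIMIT_S
    )


print(is_blocker(PageTiming(first_paint_s=5.8, full_render_s=11.2)))
print(is_blocker(PageTiming(first_paint_s=1.2, full_render_s=2.9)))
```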

Critical

Assume that you are trying to complete a booking in your web application. After the user clicks the "Submit" button, they expect to get a valid booking order ID. If, under load, the booking order ID is not generated or an invalid one is generated, the transaction has a critical defect. Also, if the response time exceeds the SLA by 30-50%, you can raise the defect as Critical.

The main intention of testing your web application is to see whether all the users are able to book and get a valid order ID. If most of the transactions are failing, then you should raise a critical defect.
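The order ID check can be sketched as a simple failure-rate calculation over the IDs returned during a load test. The `BK-` plus six digits format and the 50% threshold for "most of the transactions" are assumptions for illustration; a real application would define its own ID format and threshold.

```python
import re
from typing import Optional, Sequence

# Hypothetical order ID format: "BK-" followed by six digits.
ORDER_ID_PATTERN = re.compile(r"BK-\d{6}")


def failure_rate(order_ids: Sequence[Optional[str]]) -> float:
    """Fraction of bookings that returned no order ID or an invalid one."""
    if not order_ids:
        raise ValueError("no transactions recorded")
    failed = sum(
        1
        for oid in order_ids
        if oid is None or ORDER_ID_PATTERN.fullmatch(oid) is None
    )
    return failed / len(order_ids)


def booking_is_critical(order_ids: Sequence[Optional[str]], threshold: float = 0.5) -> bool:
    # Assumption: "most of the transactions are failing" means more than half.
    return failure_rate(order_ids) > threshold


# Hypothetical results from four booking transactions under load.
results = ["BK-000123", None, "", "BK-12"]
print(failure_rate(results), booking_is_critical(results))
```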

Major

If a major piece of functionality has a workaround but still needs to be fixed by developers, you can categorize the issue as a Major defect. For example, if the link to a page cannot be clicked, but the page can still be reached with a shortcut key or by loading it directly, you can raise this as a Major defect.

Minor

UI defects, grammatical errors, spelling mistakes, alignment issues, etc. can be categorized as minor or trivial. Also, if the response time violates the SLA by only a very small margin (1 or 2 milliseconds), you can raise it as minor or trivial.
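Putting the response-time rules together, a severity lookup might be sketched like this. It uses the 3-second SLA, the 1 to 2 millisecond margin for Minor, and the 30% overshoot for Critical from this article. Mapping everything in between to Major, and treating anything at or above 30% as at least Critical, are assumptions that each project should replace with its own agreed thresholds.

```python
from typing import Optional

SLA_MS = 3000  # 3-second SLA from the case study, in milliseconds


def classify_response_time(response_ms: int, sla_ms: int = SLA_MS) -> Optional[str]:
    """Map a measured response time to a severity, or None if within the SLA."""
    over_ms = response_ms - sla_ms
    if over_ms <= 0:
        return None  # within SLA, no defect
    if over_ms <= 2:
        return "Minor"  # violation of 1 or 2 milliseconds
    if over_ms * 100 >= 30 * sla_ms:
        return "Critical"  # 30% or more over the SLA
    return "Major"  # assumption: everything between Minor and Critical


for ms in (2500, 3001, 3500, 4200):
    print(ms, classify_response_time(ms))
```

Integer milliseconds are used so that the threshold comparisons are exact.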

The table below is ONLY for reference.

Conclusion

It is important to define the categories for performance testing defects well before test execution. The categorization above varies heavily from project to project; there is no fixed rule that must be followed. For Google-like websites, even milliseconds matter, so think carefully when you formulate the defect categories.

Published at DZone with permission of NaveenKumar Namachivayam. See the original article here.
