JPA Caching With Hazelcast, Hibernate, and Spring Boot

In-memory data grids are often used to enhance performance. Learn how to use Hazelcast for caching data stored in the MySQL database accessed by Spring Data DAO objects.

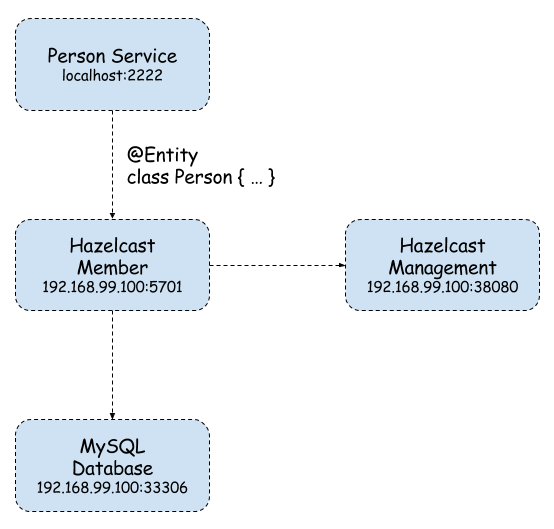

Join For FreeAn in-memory data grid is an in-memory distributed key-value store that enables caching data using distributed clusters. Do not confuse this solution with an in-memory or NoSQL database. In most cases it is used for performance reasons — all data is stored in RAM not in the disk like in traditional databases. The first time I started working with an in-memory data grid, we were considering moving to Oracle Coherence. The solution really made me curious. Oracle Coherence is obviously a paid solution, but there are also some open-source solutions, among which the most interesting seem to be Apache Ignite and Hazelcast. Today, I’m going to show you how to use Hazelcast for caching data stored in the MySQL database accessed by Spring Data DAO objects. Here’s the figure illustrating the architecture of the presented solution.

Implementation

Here's how to implement our solution.

Starting Docker Containers

We use three Docker containers: the first with a MySQL database, the second with a Hazelcast instance, and the third with Hazelcast Management Center, a UI dashboard for monitoring Hazelcast cluster instances.

docker run -d --name mysql -p 33306:3306 mysql
docker run -d --name hazelcast -p 5701:5701 hazelcast/hazelcast
docker run -d --name hazelcast-mgmt -p 38080:8080 hazelcast/management-center:latest

To let the Hazelcast instance connect to Hazelcast Management Center, we need to place a custom hazelcast.xml in the /opt/hazelcast directory inside the Docker container. This can be done in two ways: by extending the Hazelcast base image, or by copying the file into an existing Hazelcast container and restarting it.

docker run -d --name hazelcast -p 5701:5701 hazelcast/hazelcast
docker stop hazelcast
docker cp hazelcast.xml hazelcast:/opt/hazelcast
docker start hazelcast

Here’s the most important fragment of the Hazelcast configuration file.

<hazelcast

xmlns="http://www.hazelcast.com/schema/config"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://www.hazelcast.com/schema/config http://www.hazelcast.com/schema/config/hazelcast-config-3.8.xsd">

<group>

<name>dev</name>

<password>dev-pass</password>

</group>

<management-center enabled="true" update-interval="3">http://192.168.99.100:38080/mancenter</management-center>

...

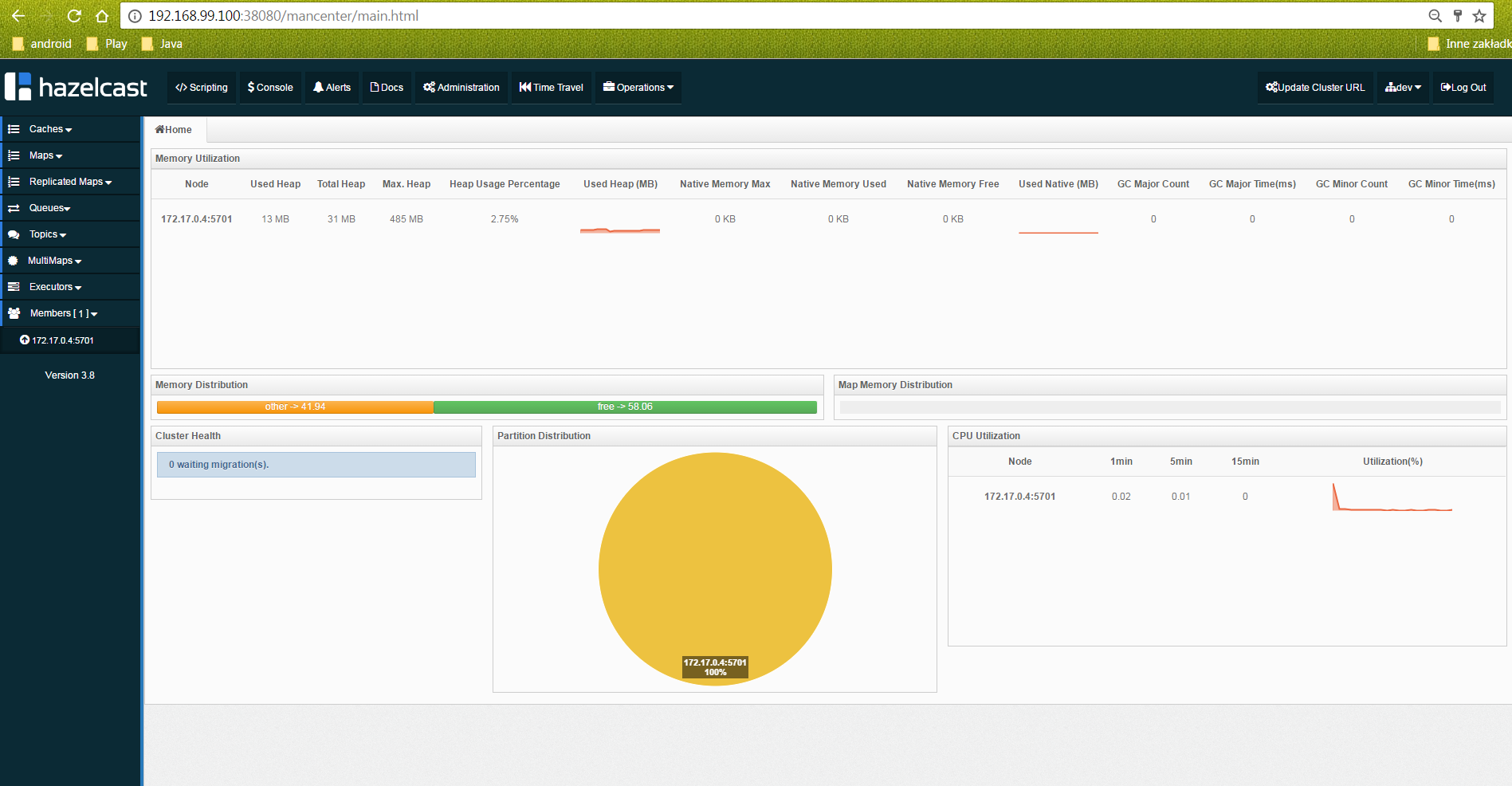

</hazelcast>Hazelcast Dashboard is available under http://192.168.99.100:38080/mancenter address. We can monitor there all running cluster members, maps, and some other parameters.

Maven Configuration

The project is based on Spring Boot 1.5.3.RELEASE. We also need to add the Spring Web and MySQL Java connector dependencies. Here’s the root project pom.xml.

<parent>
    <groupId>org.springframework.boot</groupId>
    <artifactId>spring-boot-starter-parent</artifactId>
    <version>1.5.3.RELEASE</version>
</parent>
...
<dependencies>
    <dependency>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-starter-web</artifactId>
    </dependency>
    <dependency>
        <groupId>mysql</groupId>
        <artifactId>mysql-connector-java</artifactId>
        <scope>runtime</scope>
    </dependency>
    ...
</dependencies>

Inside the person-service module, we declare further dependencies on the Hazelcast artifacts and on Spring Data JPA. I had to override the hibernate-core version managed by Spring Boot 1.5.3.RELEASE because Hazelcast didn’t work properly with 5.0.12.Final. Hazelcast needs hibernate-core in version 5.0.9.Final; otherwise, an exception occurs when the application starts.

<dependencies>
    <dependency>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-starter-data-jpa</artifactId>
    </dependency>
    <dependency>
        <groupId>com.hazelcast</groupId>
        <artifactId>hazelcast</artifactId>
    </dependency>
    <dependency>
        <groupId>com.hazelcast</groupId>
        <artifactId>hazelcast-client</artifactId>
    </dependency>
    <dependency>
        <groupId>com.hazelcast</groupId>
        <artifactId>hazelcast-hibernate5</artifactId>
    </dependency>
    <dependency>
        <groupId>org.hibernate</groupId>
        <artifactId>hibernate-core</artifactId>
        <version>5.0.9.Final</version>
    </dependency>
</dependencies>

Hibernate Cache Configuration

There are several ways to configure the cache, but for me the most suitable place was application.yml. The fragment of that file below enables the Hibernate L2 cache and sets the Hazelcast native client address, the credentials, and the cache factory class HazelcastCacheRegionFactory. We could also use HazelcastLocalCacheRegionFactory. The difference between them is performance: the local factory is faster because its operations are not handled as distributed calls. On the other hand, if you use HazelcastCacheRegionFactory, you can see your maps in Management Center.

spring:
  application:
    name: person-service
  datasource:
    url: jdbc:mysql://192.168.99.100:33306/datagrid?useSSL=false
    username: datagrid
    password: datagrid
  jpa:
    properties:
      hibernate:
        show_sql: true
        cache:
          use_query_cache: true
          use_second_level_cache: true
          hazelcast:
            use_native_client: true
            native_client_address: 192.168.99.100:5701
            native_client_group: dev
            native_client_password: dev-pass
          region:
            factory_class: com.hazelcast.hibernate.HazelcastCacheRegionFactory

Application Code

First, we need to enable caching for the Person @Entity.

import java.io.Serializable;

import javax.persistence.Entity;
import javax.persistence.GeneratedValue;
import javax.persistence.Id;

import org.hibernate.annotations.Cache;
import org.hibernate.annotations.CacheConcurrencyStrategy;

// Instances of this entity are stored in the Hibernate second-level cache.
@Cache(usage = CacheConcurrencyStrategy.READ_WRITE)
@Entity
public class Person implements Serializable {

    private static final long serialVersionUID = 3214253910554454648L;

    @Id
    @GeneratedValue
    private Integer id;
    private String firstName;
    private String lastName;
    private String pesel;
    private int age;

    public Integer getId() {
        return id;
    }

    public void setId(Integer id) {
        this.id = id;
    }

    public String getFirstName() {
        return firstName;
    }

    public void setFirstName(String firstName) {
        this.firstName = firstName;
    }

    public String getLastName() {
        return lastName;
    }

    public void setLastName(String lastName) {
        this.lastName = lastName;
    }

    public String getPesel() {
        return pesel;
    }

    public void setPesel(String pesel) {
        this.pesel = pesel;
    }

    public int getAge() {
        return age;
    }

    public void setAge(int age) {
        this.age = age;
    }

    @Override
    public String toString() {
        return "Person [id=" + id + ", firstName=" + firstName + ", lastName=" + lastName + ", pesel=" + pesel + "]";
    }
}

The DAO is implemented using Spring Data CrudRepository. Sample application source code is available on GitHub.

import java.util.List;
import org.springframework.data.repository.CrudRepository;

public interface PersonRepository extends CrudRepository<Person, Integer> {
    public List<Person> findByPesel(String pesel);
}

Testing

Let’s insert a little more data into the table. You can use my AddPersonRepositoryTest for that; it inserts 1M rows into the person table. Then we can call the endpoint http://localhost:2222/persons/{id} twice with the same ID. For me, the result looks like the log below: 22 ms for the first call and 3 ms for the next one, which is read from the L2 cache. The entity can be cached only by its primary key. If you call http://localhost:2222/persons/pesel/{pesel}, the lookup always bypasses the L2 cache and goes to the database.

2017-05-05 17:07:27.360 DEBUG 9164 --- [nio-2222-exec-9] org.hibernate.SQL : select person0_.id as id1_0_0_, person0_.age as age2_0_0_, person0_.first_name as first_na3_0_0_, person0_.last_name as last_nam4_0_0_, person0_.pesel as pesel5_0_0_ from person person0_ where person0_.id=?

Hibernate: select person0_.id as id1_0_0_, person0_.age as age2_0_0_, person0_.first_name as first_na3_0_0_, person0_.last_name as last_nam4_0_0_, person0_.pesel as pesel5_0_0_ from person person0_ where person0_.id=?

2017-05-05 17:07:27.362 DEBUG 9164 --- [nio-2222-exec-9] o.h.l.p.e.p.i.ResultSetProcessorImpl : Starting ResultSet row #0

2017-05-05 17:07:27.362 DEBUG 9164 --- [nio-2222-exec-9] l.p.e.p.i.EntityReferenceInitializerImpl : On call to EntityIdentifierReaderImpl#resolve, EntityKey was already known; should only happen on root returns with an optional identifier specified

2017-05-05 17:07:27.363 DEBUG 9164 --- [nio-2222-exec-9] o.h.engine.internal.TwoPhaseLoad : Resolving associations for [pl.piomin.services.datagrid.person.model.Person#444]

2017-05-05 17:07:27.364 DEBUG 9164 --- [nio-2222-exec-9] o.h.engine.internal.TwoPhaseLoad : Adding entity to second-level cache: [pl.piomin.services.datagrid.person.model.Person#444]

2017-05-05 17:07:27.373 DEBUG 9164 --- [nio-2222-exec-9] o.h.engine.internal.TwoPhaseLoad : Done materializing entity [pl.piomin.services.datagrid.person.model.Person#444]

2017-05-05 17:07:27.373 DEBUG 9164 --- [nio-2222-exec-9] o.h.r.j.i.ResourceRegistryStandardImpl : HHH000387: ResultSet's statement was not registered

2017-05-05 17:07:27.374 DEBUG 9164 --- [nio-2222-exec-9] .l.e.p.AbstractLoadPlanBasedEntityLoader : Done entity load : pl.piomin.services.datagrid.person.model.Person#444

2017-05-05 17:07:27.374 DEBUG 9164 --- [nio-2222-exec-9] o.h.e.t.internal.TransactionImpl : committing

2017-05-05 17:07:30.168 DEBUG 9164 --- [nio-2222-exec-6] o.h.e.t.internal.TransactionImpl : begin

2017-05-05 17:07:30.171 DEBUG 9164 --- [nio-2222-exec-6] o.h.e.t.internal.TransactionImpl : committing

Query Cache

We can cache the results of JPA queries by marking the repository method with Spring’s @Cacheable annotation and adding @EnableCaching to the main class definition.

import java.util.List;
import org.springframework.cache.annotation.Cacheable;
import org.springframework.data.repository.CrudRepository;

public interface PersonRepository extends CrudRepository<Person, Integer> {
    @Cacheable("findByPesel")
    public List<Person> findByPesel(String pesel);
}

In addition to the @EnableCaching annotation, we should declare HazelcastInstance and CacheManager beans. As the cache manager, we use HazelcastCacheManager from the hazelcast-spring library.

@SpringBootApplication

@EnableCaching

public class PersonApplication {

public static void main(String[] args) {

SpringApplication.run(PersonApplication.class, args);

}

@Bean

HazelcastInstance hazelcastInstance() {

ClientConfig config = new ClientConfig();

config.getGroupConfig().setName("dev").setPassword("dev-pass");

config.getNetworkConfig().addAddress("192.168.99.100");

config.setInstanceName("cache-1");

HazelcastInstance instance = HazelcastClient.newHazelcastClient(config);

return instance;

}

@Bean

CacheManager cacheManager() {

return new HazelcastCacheManager(hazelcastInstance());

}

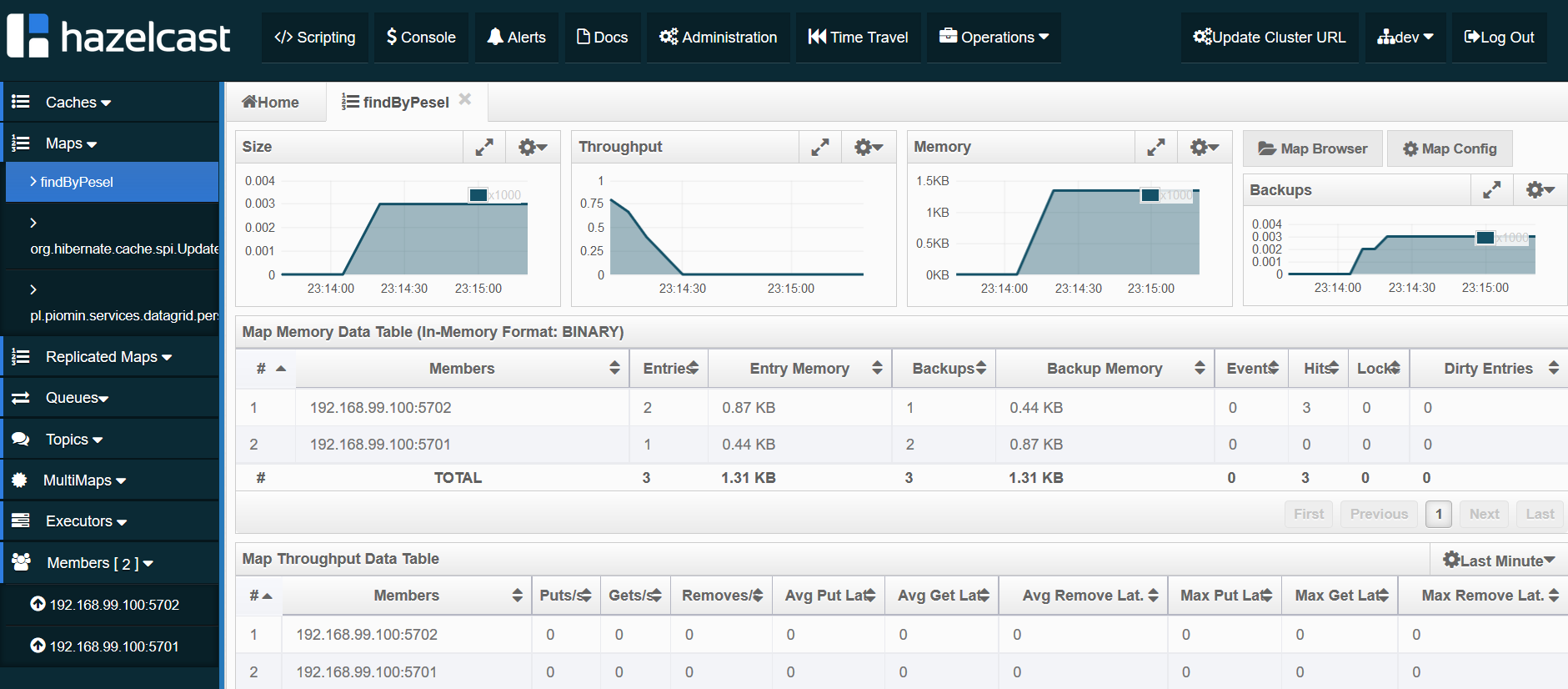

}Now, we should try find the person by the PESEL number by calling the endpoint http://localhost:2222/persons/pesel/{pesel}. The cached query is stored as a map, as you see in the picture below.
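To make the "stored as a map" remark more concrete, here is a minimal plain-Java sketch of what the Spring cache abstraction roughly does for a method annotated with @Cacheable("findByPesel"): for a single-argument method the argument is used as the key by default, and the method result is kept under that key in the cache of the given name. A ConcurrentHashMap stands in for the Hazelcast map here, and the class name, the query function, and the sample data are hypothetical; this is an illustration, not Spring or Hazelcast code.

```java
import java.util.Collections;
import java.util.List;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.Function;

// Illustrative stand-in for a repository method annotated with @Cacheable("findByPesel").
final class QueryCacheSketch {

    // Plays the role of the map named "findByPesel": key = method argument, value = query result.
    private final Map<String, List<String>> findByPeselCache = new ConcurrentHashMap<>();
    // Counts how often the (hypothetical) database query really runs.
    private final AtomicInteger databaseCalls = new AtomicInteger();
    private final Function<String, List<String>> databaseQuery;

    QueryCacheSketch(Function<String, List<String>> databaseQuery) {
        this.databaseQuery = databaseQuery;
    }

    List<String> findByPesel(String pesel) {
        // Only a cache miss reaches the database; the result is then stored under the argument.
        return findByPeselCache.computeIfAbsent(pesel, key -> {
            databaseCalls.incrementAndGet();
            return databaseQuery.apply(key);
        });
    }

    int databaseCalls() {
        return databaseCalls.get();
    }

    public static void main(String[] args) {
        QueryCacheSketch repository = new QueryCacheSketch(
                pesel -> Collections.singletonList("Person with PESEL " + pesel));
        repository.findByPesel("12345678901");
        repository.findByPesel("12345678901"); // served from the map, no second query
        System.out.println("Database calls: " + repository.databaseCalls()); // prints "Database calls: 1"
    }
}
```

Only the first call for a given PESEL runs the query; later calls with the same argument are answered from the map, which is why the map shows up in Management Center after the first request.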

Clustering

Clustering is the key functionality of the Hazelcast in-memory data grid. In the previous sections, we relied on a single Hazelcast instance. Let’s begin by running a second container with Hazelcast exposed on a different port.

docker run -d --name hazelcast2 -p 5702:5701 hazelcast/hazelcast

Now we need to make one change in the hazelcast.xml configuration file. Because the data grid runs inside a Docker container, the public address has to be set. For the first container it is 192.168.99.100:5701, and for the second it is 192.168.99.100:5702, because that container is exposed on port 5702.

<network>
    ...
    <public-address>192.168.99.100:5701</public-address>
    ...
</network>

When the person-service application starts, you should see log output similar to the following, showing a connection to two cluster members.

Members [2] {

Member [192.168.99.100]:5702 - 04f790bc-6c2d-4c21-ba8f-7761a4a7422c

Member [192.168.99.100]:5701 - 2ca6e30d-a8a7-46f7-b1fa-37921aaa0e6b

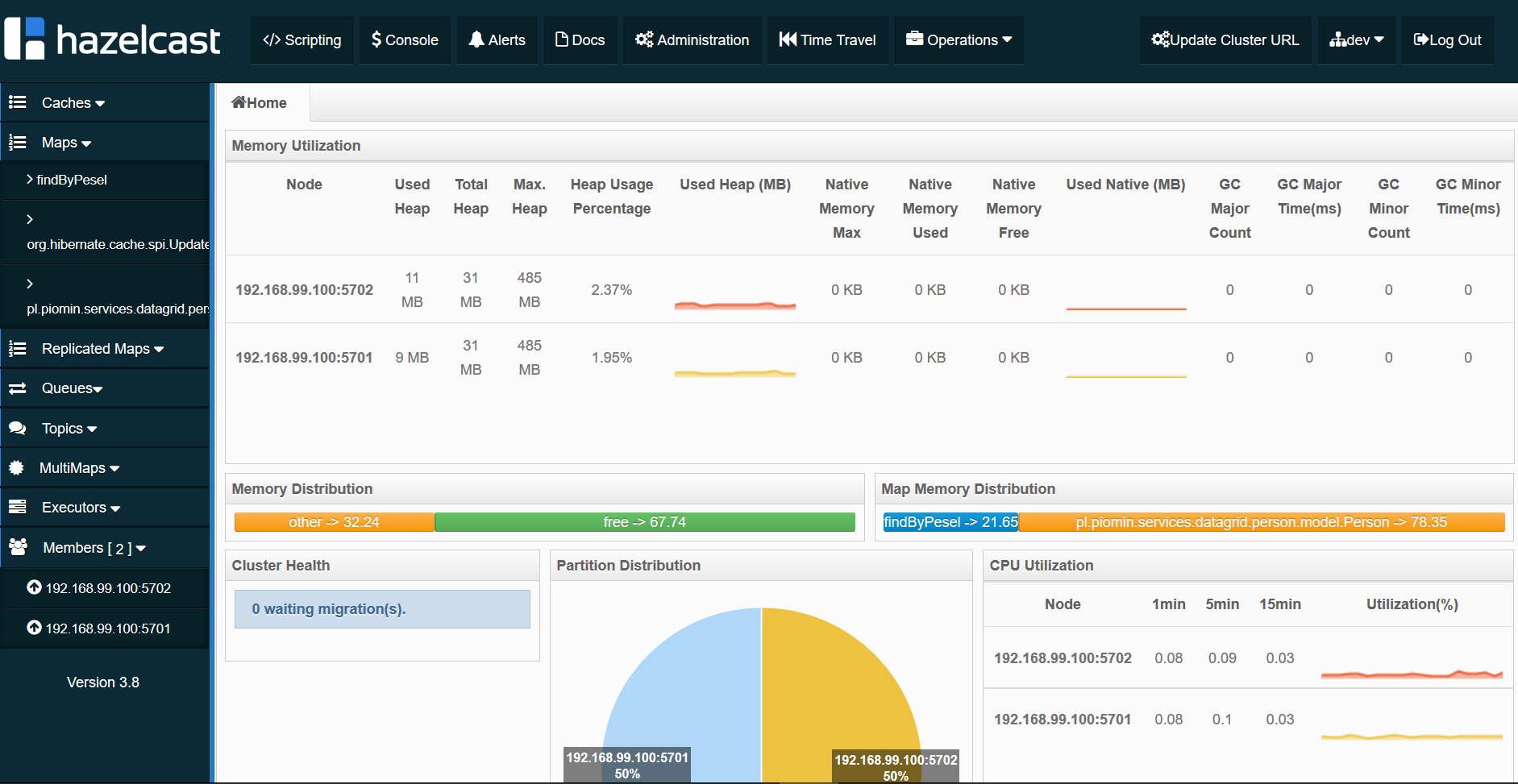

}All Hazelcast running instances are visible in the Management Center.
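With two members in the cluster, map entries are spread between them. The plain-Java sketch below gives a rough idea of how a key ends up on a member. It assumes Hazelcast's default of 271 partitions, but the hashing (Java's hashCode instead of a hash of the serialized key) and the round-robin assignment of partitions to members are my simplifications, not Hazelcast's actual algorithm, and the class name is made up.

```java
import java.util.Arrays;
import java.util.List;

// Simplified model of key -> partition -> member routing in a data grid.
final class PartitionSketch {

    // Hazelcast's default partition count; everything else here is a simplification.
    static final int PARTITION_COUNT = 271;

    private final List<String> members;

    PartitionSketch(List<String> members) {
        this.members = members;
    }

    // Assumption: use hashCode(); floorMod keeps the result non-negative for negative hashes.
    static int partitionFor(Object key) {
        return Math.floorMod(key.hashCode(), PARTITION_COUNT);
    }

    // Assumption: partitions are dealt out to members round-robin.
    String ownerOf(Object key) {
        return members.get(partitionFor(key) % members.size());
    }

    public static void main(String[] args) {
        PartitionSketch cluster = new PartitionSketch(
                Arrays.asList("192.168.99.100:5701", "192.168.99.100:5702"));
        for (int personId = 441; personId <= 446; personId++) {
            System.out.println("Person#" + personId
                    + " -> partition " + partitionFor(personId)
                    + " -> member " + cluster.ownerOf(personId));
        }
    }
}
```

The point of the sketch is only that the same key always maps to the same partition, so any client can work out which member to ask without a central lookup.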

Conclusion

Caching and clustering with Hazelcast are simple and fast. We can cache JPA entities and queries, and monitoring is available through the Hazelcast Management Center dashboard. One problem for me is that entities can be cached only by primary key. If I want to find an entity by another index, such as the PESEL number, I have to cache the findByPesel query. Even if the entity was cached earlier by ID, the query will not find it in the cache and will run SQL against the database; only subsequent calls of that query are served from the cache. I’ll show you a smart solution to that problem in my next article on in-memory data grids with Hazelcast.
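The primary-key limitation can be reproduced with a small plain-Java model. It is only a sketch: one HashMap keyed by ID stands in for the Hibernate L2 cache region, a second map stands in for the person table, a counter stands in for SQL statements, and all class and method names are made up for illustration.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;

// Toy model of an entity cache that is indexed by primary key only.
final class PrimaryKeyCacheSketch {

    static final class Person {
        final int id;
        final String pesel;

        Person(int id, String pesel) {
            this.id = id;
            this.pesel = pesel;
        }
    }

    private final Map<Integer, Person> table = new HashMap<>();   // stand-in for the person table
    private final Map<Integer, Person> l2Cache = new HashMap<>(); // stand-in for the L2 region, keyed by ID
    private int sqlQueries;                                        // stand-in for executed SQL statements

    void insert(Person person) {
        table.put(person.id, person);
    }

    Optional<Person> findById(int id) {
        Person cached = l2Cache.get(id);
        if (cached != null) {
            return Optional.of(cached); // cache hit: no SQL
        }
        sqlQueries++;
        Person loaded = table.get(id);
        if (loaded != null) {
            l2Cache.put(id, loaded); // remember the entity under its primary key
        }
        return Optional.ofNullable(loaded);
    }

    Optional<Person> findByPesel(String pesel) {
        // The cache has no index on pesel, so this lookup always goes to the "database".
        sqlQueries++;
        return table.values().stream().filter(p -> p.pesel.equals(pesel)).findFirst();
    }

    int sqlQueries() {
        return sqlQueries;
    }

    public static void main(String[] args) {
        PrimaryKeyCacheSketch service = new PrimaryKeyCacheSketch();
        service.insert(new Person(444, "90010112345"));
        service.findById(444);              // miss: one query, entity is cached
        service.findById(444);              // hit: no query
        service.findByPesel("90010112345"); // bypasses the cache even though Person#444 is cached
        System.out.println("SQL queries: " + service.sqlQueries()); // prints "SQL queries: 2"
    }
}
```

In this model the second findById costs nothing, while findByPesel costs a query every time, which is the behavior that caching the findByPesel query works around.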

Published at DZone with permission of Piotr Mińkowski. See the original article here.
