Kafka Producer Architecture - Picking the Partition of Records

This article covers Kafka Producer Architecture, including how a partition is chosen, producer cadence, partitioning strategies, and consumers.


This article covers some lower-level details of Kafka producer architecture. It is a continuation of the Kafka Architecture and Kafka Topic Architecture articles.

This article covers Kafka producer architecture with a discussion of how a partition is chosen, producer cadence, and partitioning strategies.

Kafka Producers

Kafka producers send records to topics. The records are sometimes referred to as messages.

The producer picks which partition of a topic each record is sent to. It can send records round-robin, or it could implement a priority system by sending records to certain partitions based on each record's priority.
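The priority idea can be sketched as a small partitioning function. The Python below is a minimal sketch under stated assumptions. It uses a hypothetical topic with four partitions and a record `priority` field invented for the example. Partition 0 is reserved for high-priority records, and everything else is spread round-robin over the remaining partitions. `PriorityPartitioner` is not part of any Kafka client. In the Java client, custom logic like this would live in an implementation of the `Partitioner` interface.

```python
import itertools


class PriorityPartitioner:
    """Hypothetical partitioner that reserves one partition for urgent records.

    High-priority records always go to `priority_partition`; all other
    records are sent round-robin across the remaining partitions.
    """

    def __init__(self, num_partitions: int, priority_partition: int = 0) -> None:
        if num_partitions < 2:
            raise ValueError("need at least one normal partition besides the priority one")
        self.priority_partition = priority_partition
        normal = [p for p in range(num_partitions) if p != priority_partition]
        # itertools.cycle yields the normal partitions in order, forever.
        self._round_robin = itertools.cycle(normal)

    def partition(self, record: dict) -> int:
        # 'priority' is an assumed record attribute for this sketch.
        if record.get("priority") == "high":
            return self.priority_partition
        return next(self._round_robin)


partitioner = PriorityPartitioner(num_partitions=4)
records = [
    {"id": 1, "priority": "low"},
    {"id": 2, "priority": "high"},
    {"id": 3, "priority": "low"},
    {"id": 4, "priority": "low"},
    {"id": 5, "priority": "low"},
]
assignments = [(r["id"], partitioner.partition(r)) for r in records]
print(assignments)  # [(1, 1), (2, 0), (3, 2), (4, 3), (5, 1)]
```

Reserving a partition only separates the traffic. The priority takes effect only if consumers drain that partition first, for example by giving it its own consumer.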

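Generally speaking, producers send records to a partition based on the record's key. The default partitioner for the Java client uses a hash of the record's key to choose the partition, or a round-robin strategy if the record has no key. (Since Kafka 2.4, the Java client handles keyless records with a "sticky" strategy instead, filling a batch for one partition before moving on to another.)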
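The sketch below models that default behavior in Python. The hash is a port of the 32-bit murmur2 variant that the Java client's default partitioner applies to the serialized key bytes. The partitioner clears the sign bit of the hash and takes the result modulo the partition count. Keyless records get the simplified round-robin described above. `DefaultPartitionerModel` is a hypothetical name. The sketch illustrates the idea and should not be treated as a byte-for-byte reference for the client.

```python
import itertools
from typing import Optional

# Constants of the murmur2 variant used by the Java client's partitioner.
SEED = 0x9747B28C
M = 0x5BD1E995
MASK32 = 0xFFFFFFFF


def murmur2(data: bytes) -> int:
    """32-bit murmur2 hash of `data`, as an unsigned int in [0, 2**32)."""
    length = len(data)
    h = (SEED ^ length) & MASK32
    # Mix the input four bytes at a time, read as little-endian words.
    for i in range(length // 4):
        k = int.from_bytes(data[4 * i:4 * i + 4], "little")
        k = (k * M) & MASK32
        k ^= k >> 24
        k = (k * M) & MASK32
        h = (h * M) & MASK32
        h ^= k
    # Mix in the 1 to 3 trailing bytes, if any.
    tail = data[length & ~3:]
    if len(tail) == 3:
        h ^= tail[2] << 16
    if len(tail) >= 2:
        h ^= tail[1] << 8
    if len(tail) >= 1:
        h ^= tail[0]
        h = (h * M) & MASK32
    # Final mixing step.
    h ^= h >> 13
    h = (h * M) & MASK32
    h ^= h >> 15
    return h


class DefaultPartitionerModel:
    """Hypothetical model of key-based vs. keyless partition selection."""

    def __init__(self, num_partitions: int) -> None:
        self.num_partitions = num_partitions
        # Simplified round-robin counter for records without a key.
        self._round_robin = itertools.cycle(range(num_partitions))

    def partition(self, key: Optional[bytes]) -> int:
        if key is None:
            return next(self._round_robin)
        # Clear the sign bit (as Java's toPositive does), then take the modulo.
        return (murmur2(key) & 0x7FFFFFFF) % self.num_partitions


partitioner = DefaultPartitionerModel(num_partitions=6)
print(partitioner.partition(b"employee-42") == partitioner.partition(b"employee-42"))  # True
print([partitioner.partition(None) for _ in range(4)])  # [0, 1, 2, 3]
```

The key-to-partition mapping depends on the partition count. Adding partitions to a topic later can therefore send an existing key to a different partition.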

The important concept here is that the producer picks the partition.

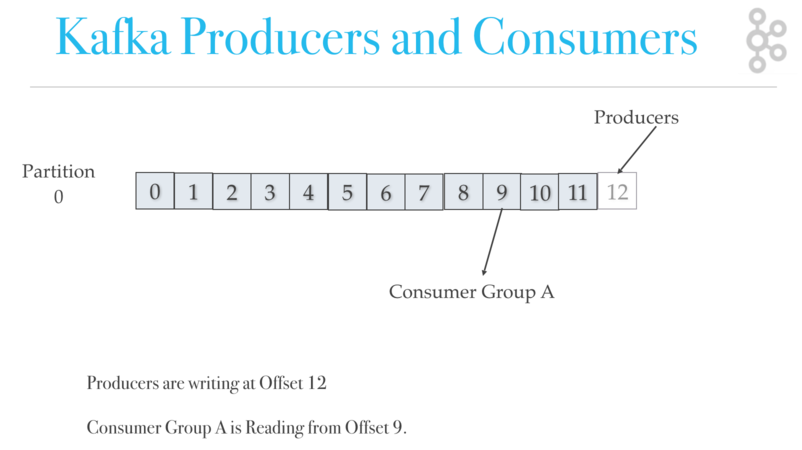

Producers are writing at offset 12 while, at the same time, consumer group A is reading from offset 9.
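The figure's offsets can be reproduced with a small in-memory model of a single partition. This is a hypothetical Python sketch and does not use the Kafka client API. `PartitionLog`, `ConsumerPosition`, and `lag` are illustrative names, and `max_records` is only loosely analogous to the consumer's `max.poll.records` setting. A burst of twelve records moves the log-end offset to 12. Consumer group A has processed nine records, so it sits at offset 9, three records behind.

```python
from dataclasses import dataclass, field


@dataclass
class PartitionLog:
    """Append-only log for one partition; a record's offset is its list index."""
    records: list = field(default_factory=list)

    def append(self, value: str) -> int:
        self.records.append(value)
        return len(self.records) - 1  # offset assigned to the new record

    @property
    def end_offset(self) -> int:
        # Offset the next record will get (the log-end offset).
        return len(self.records)


@dataclass
class ConsumerPosition:
    """One consumer group's position in the partition, tracked independently."""
    position: int = 0

    def poll(self, log: PartitionLog, max_records: int) -> list:
        batch = log.records[self.position:self.position + max_records]
        self.position += len(batch)
        return batch


def lag(log: PartitionLog, consumer: ConsumerPosition) -> int:
    """How many records the consumer still has to read."""
    return log.end_offset - consumer.position


log = PartitionLog()
group_a = ConsumerPosition()

for i in range(12):  # producer burst: offsets 0..11
    log.append(f"record-{i}")
group_a.poll(log, max_records=9)  # group A has processed offsets 0..8

print(log.end_offset, group_a.position, lag(log, group_a))  # 12 9 3
```

In this model the producer never waits for the consumer. Appends move only `end_offset`, and only `poll` moves the consumer's position, so a burst shows up as lag rather than as back-pressure.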

Kafka Producers' Write Cadence and Partitioning of Records

Producers write at their own cadence, so the order of records cannot be guaranteed across partitions. Producers also configure their consistency/durability level (acks=0, acks=1, acks=all), which we will cover later. Producers pick the partition so that records (messages) go to a given partition based on the data. For example, you could send all the events for a given employeeId to the same partition. If records do not need to stay in order by key, a round-robin partitioning strategy can be used so that records are distributed evenly across partitions.
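To make the employeeId example concrete, here is a minimal Python sketch that routes hypothetical employee events to three in-memory partitions by employee ID. The partition count, the event data, and the helper names are assumptions made up for illustration. CRC-32 from `zlib` stands in for Kafka's own hash. The sketch shows that a deterministic key-to-partition mapping keeps each employee's events in send order.

```python
import zlib
from collections import defaultdict

NUM_PARTITIONS = 3  # hypothetical partition count for the example topic


def partition_for(employee_id: str) -> int:
    # Stand-in hash: CRC-32 rather than the Java client's murmur2. Any
    # deterministic hash gives the same "same key, same partition" property.
    return zlib.crc32(employee_id.encode("utf-8")) % NUM_PARTITIONS


# Each partition is an append-only list; a record's index acts as its offset.
partitions = defaultdict(list)

events = [
    ("emp-1", "hired"),
    ("emp-2", "hired"),
    ("emp-1", "promoted"),
    ("emp-3", "hired"),
    ("emp-2", "transferred"),
    ("emp-1", "retired"),
]
for employee_id, event in events:
    partitions[partition_for(employee_id)].append((employee_id, event))


def history(employee_id: str) -> list:
    """Read one employee's events from the single partition that holds them."""
    log = partitions[partition_for(employee_id)]
    return [event for eid, event in log if eid == employee_id]


print(history("emp-1"))  # ['hired', 'promoted', 'retired']
```

Only each employee's own sequence is guaranteed here. If `emp-1` and `emp-2` land in different partitions, a consumer reading both could see `emp-2`'s "transferred" event before or after `emp-1`'s "retired" event.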

Review of Producers

Can producers occasionally write faster than consumers?

Yes. A producer could have a burst of records, and a consumer does not have to keep pace with the producer.

What is the default partitioning strategy for producers when records have no key?

Round-robin.

What is the default partitioning strategy for producers when records have a key?

Records with the same key get sent to the same partition.

What picks which partition a record is sent to?

The producer picks which partition a record goes to.

Kafka Consumer Architecture

Please continue reading about Kafka architecture. The next article covers Kafka consumer architecture, with a discussion of how records are divided among consumers in a consumer group, consumer failover, and consumer load balancing.

Published at DZone with permission of Jean-Paul Azar. See the original article here.

