Read SAP Tables With RFC_READ_TABLE in Mule 4 Using SAP Connector

Learn how to make an RFC to SAP, how to use various operators (like AND, OR, IN, etc.), and what the default structure of RFC_READ_TABLE input looks like.

In this article, we will see how to make an RFC (Remote Function Call) to SAP and which options are available for retrieving the data you need. We will also look at operators such as AND, OR, and IN, and at the default structure of the RFC_READ_TABLE input.

Whenever you work with SAP, you will almost certainly need RFC calls, and many developers struggle to write the XML requests for them.

Depending on your use case, you may want to select a different set of fields and apply different operators to get the data you need from SAP.

The MuleSoft SAP Connector is a certified SAP connector that lets Mule runtime engines integrate with SAP by leveraging the SAP Java Connector (JCo) libraries. For connecting Mule with SAP, please check out this article.

Assuming you have established the connection successfully, it is now time to make the first RFC call.

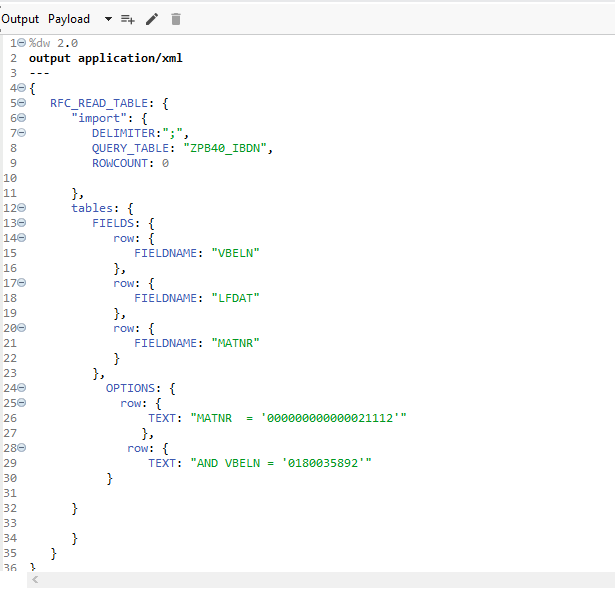

Here I use the Synchronous Remote Function Call operation, and the function is RFC_READ_TABLE.

To call an SAP RFC, you need to build the request in the required XML format:

    %dw 2.0
    output application/xml
    ---
    {
        RFC_READ_TABLE: {
            "import": {
                DELIMITER: ";",
                QUERY_TABLE: "The Name of your query table",
                ROWCOUNT: 0
            },
            tables: {
                FIELDS: {
                    row: {
                        FIELDNAME: "Field 1"
                    },
                    row: {
                        FIELDNAME: "Field 2"
                    },
                    row: {
                        FIELDNAME: "Field 3"
                    },
                    row: {
                        FIELDNAME: "Field 4"
                    }
                }
            }
        }
    }

DELIMITER is the character that separates the field values in each returned row. You can use a pipe (|) or any other separator instead of the semicolon.

ROWCOUNT: 0 means you want every row of the queried table; otherwise, you can specify the maximum number of rows to return. In SQL terms, it works like the LIMIT keyword.
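To make these two import parameters concrete, here is a minimal sketch of a request that uses a pipe delimiter and reads one page of rows. ROWSKIPS is the RFC_READ_TABLE import parameter that skips a number of leading rows (it also appears in the response later in this article). The page size, page index, and field list are illustrative assumptions, not values from a real flow.

```dataweave
%dw 2.0
output application/xml
// Hypothetical paging values; in a real flow they might come from flow variables.
var pageSize = 100
var pageIndex = 2
---
{
    RFC_READ_TABLE: {
        "import": {
            DELIMITER: "|",                  // pipe instead of semicolon
            QUERY_TABLE: "ZPB40_IBDN",
            ROWCOUNT: pageSize,              // return at most 100 rows
            ROWSKIPS: pageSize * pageIndex   // skip the first 200 rows
        },
        tables: {
            FIELDS: {
                row: { FIELDNAME: "VBELN" },
                row: { FIELDNAME: "LFDAT" }
            }
        }
    }
}
```

Pick a delimiter that cannot occur in the data itself; otherwise, splitting the returned rows becomes ambiguous.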

Now I will query a table so we can see what the output looks like. The output from SAP arrives as XML only, but we can easily convert it to JSON with a Transform Message component.
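A minimal sketch of that conversion, assuming the Transform Message component is placed directly after the SAP call so that `payload` holds the XML response:

```dataweave
%dw 2.0
output application/json
---
// Pass the XML response through unchanged; DataWeave writes it out as JSON.
payload
```

The JSON shown next is the kind of result this conversion produces. Repeated XML elements such as row come out as repeated keys.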

    {
      "RFC_READ_TABLE": {
        "import": {
          "DELIMITER": ";",
          "NO_DATA": null,
          "QUERY_TABLE": "ZPB40_IBDN",
          "ROWCOUNT": "3",
          "ROWSKIPS": "0"
        },
        "tables": {
          "DATA": {
            "row": {
              "WA": "0180035892;20170119;000000000000021112 ;L-ARGININE C 20 KG ; ;20161220;C"
            },
            "row": {
              "WA": "0180035892;20170119;000000000000021112 ;L-ARGININE C 20 KG ; ;20161220;C"
            },
            "row": {
              "WA": "0180035892;20170119;000000000000021112 ;L-ARGININE C 20 KG ; ;20161220;C"
            }
          },
          "FIELDS": {
            "row": {
              "FIELDNAME": "VBELN",
              "OFFSET": "000000",
              "LENGTH": "000010",
              "TYPE": "C",
              "FIELDTEXT": "Delivery"
            },
            "row": {
              "FIELDNAME": "LFDAT",
              "OFFSET": "000011",
              "LENGTH": "000008",
              "TYPE": "D",
              "FIELDTEXT": "Delivery Date"
            },
            "row": {
              "FIELDNAME": "MATNR",
              "OFFSET": "000020",
              "LENGTH": "000040",
              "TYPE": "C",
              "FIELDTEXT": "Material Number"
            },
            "row": {
              "FIELDNAME": "ARKTX",
              "OFFSET": "000061",
              "LENGTH": "000040",
              "TYPE": "C",
              "FIELDTEXT": "Short text for sales order item"
            },
            "row": {
              "FIELDNAME": "ZWF_STATUS",
              "OFFSET": "000102",
              "LENGTH": "000020",
              "TYPE": "C",
              "FIELDTEXT": "Workflow Status"
            },
            "row": {
              "FIELDNAME": "ERDAT",
              "OFFSET": "000123",
              "LENGTH": "000008",
              "TYPE": "D",
              "FIELDTEXT": "Date on which the record was created"
            },
            "row": {
              "FIELDNAME": "WBSTK",
              "OFFSET": "000132",
              "LENGTH": "000001",
              "TYPE": "C",
              "FIELDTEXT": "Goods Movement Status (All Items)"
            }
          },
          "OPTIONS": null
        }
      }
    }

As you can see, I set the row count to three, which is why the output contains three rows. Within each row, the field values are separated by the delimiter (;).
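Because each WA value is a single delimited string, a common next step is to split it back into named fields. The following is a minimal sketch, assuming `payload` is the XML response from the SAP call and that the delimiter never occurs inside the data. The variable names are illustrative.

```dataweave
%dw 2.0
output application/json
var rfcTables = payload.RFC_READ_TABLE.tables
// Reuse the delimiter echoed back in the response's import section.
var delimiter = payload.RFC_READ_TABLE."import".DELIMITER
// Column names, in the same order as the values inside each WA string.
var fieldNames = rfcTables.FIELDS.*row.FIELDNAME
---
rfcTables.DATA.*row map (dataRow) -> do {
    // Split one WA string into its values and strip the padding spaces.
    var values = (dataRow.WA splitBy delimiter) map trim($)
    ---
    // Pair each column name with the value at the same position.
    { (fieldNames map (name, index) -> { (name): values[index] }) }
}
```

For the response above, each element of the resulting array would be an object keyed by VBELN, LFDAT, MATNR, and so on, with VBELN holding 0180035892 and WBSTK holding C.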

The FIELDS object lists the columns you selected, along with the offset, length, type, and description of each. OPTIONS shows as null because we did not provide any value for it. OPTIONS is essentially the WHERE clause of an SQL query: it lets you fetch only the rows whose columns match the values you specify.

Let's see how to implement it in Mule 4:

In the input payload to SAP shown in the image above, I have given two conditions in OPTIONS and joined them with the AND operator. The call returns all the rows that match both conditions.
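As a sketch of what such a payload could look like (not necessarily the exact one from the screenshot), the request below adds an OPTIONS table with two conditions joined by AND. Each OPTIONS row carries one fragment of the WHERE clause in its TEXT field. The field names come from the response shown earlier, while the filter values are hypothetical.

```dataweave
%dw 2.0
output application/xml
---
{
    RFC_READ_TABLE: {
        "import": {
            DELIMITER: ";",
            QUERY_TABLE: "ZPB40_IBDN",
            ROWCOUNT: 0
        },
        tables: {
            FIELDS: {
                row: { FIELDNAME: "VBELN" },
                row: { FIELDNAME: "LFDAT" },
                row: { FIELDNAME: "WBSTK" }
            },
            // Hypothetical filter: status C and delivery date from 2017 onward.
            OPTIONS: {
                row: { TEXT: "WBSTK = 'C'" },
                row: { TEXT: "AND LFDAT >= '20170101'" }
            }
        }
    }
}
```

Each TEXT value is limited to 72 characters, so longer conditions need to be split across several rows, as done here.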

You can use other operators depending on your requirements, such as OR, IN, and LIKE.

The comparison operators =, <>, <, >, <=, and >= are equivalent to EQ, NE, LT, GT, LE, and GE, respectively.
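To illustrate these operators together, here is a minimal sketch that builds the OPTIONS rows from a list of condition fragments. The conditions, the filter values, and the `conditions` variable are hypothetical. The parentheses are surrounded by spaces, which ABAP-style WHERE clauses expect.

```dataweave
%dw 2.0
output application/xml
// Hypothetical WHERE-clause fragments; each one becomes one OPTIONS row.
// GE is the keyword form of >=.
var conditions = [
    "WBSTK IN ('A', 'C')",
    "AND ( ARKTX LIKE 'L-ARG%'",
    "OR LFDAT GE '20170101' )"
]
---
{
    RFC_READ_TABLE: {
        "import": {
            DELIMITER: ";",
            QUERY_TABLE: "ZPB40_IBDN",
            ROWCOUNT: 0
        },
        tables: {
            FIELDS: {
                row: { FIELDNAME: "VBELN" },
                row: { FIELDNAME: "ARKTX" }
            },
            OPTIONS: {
                // Expand the list into repeated row elements.
                (conditions map (condition) -> { row: { TEXT: condition } })
            }
        }
    }
}
```

Keeping the conditions in a list makes it easier to add or remove filters without touching the structure of the request.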

This is how you can get data from SAP with RFC_READ_TABLE.
