Solr Index Size Analysis


In this post I'm going to talk about a set of benchmarks that I've done with Solr. The goal is to see how each parameter defined in the schema affects the size of the index and the performance of the system.

The first step was to fetch the set of documents that I was going to use in the tests. I wanted the documents to be composed of real text, so I started looking for sources on the Internet. The first one that I really liked was Twitter. They provide an API that lets you read a continuous stream of tweets, made up of approximately 1% of all public tweets. Each tweet is expressed as a JSON object and carries metadata about the message and its author. While this source let me collect a good number of documents in a short time (about 1.7 million tweets in 2 days), the documents were really small, so I looked for a source of bigger documents and finally chose Wikipedia. I downloaded the articles over HTTP using the “Random article” feature of the site, obtaining about 160,000 articles in a couple of days. At the time of writing, the site download.wikipedia.org, which provides an easy way of downloading a bunch of articles, was out of service.
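
As an illustration of the Twitter side of this step, here is a minimal sketch that turns a stream of newline-delimited JSON tweets into small documents and measures their average text size. It assumes the Twitter v1.1 tweet layout (`id_str`, `text`, and a nested `user` object with `screen_name`); the function names are hypothetical, and this is not the code used for the benchmark.

```python
import json
from typing import Iterable, Iterator


def parse_tweets(lines: Iterable[str]) -> Iterator[dict]:
    """Turn a newline-delimited JSON stream into small documents.

    Assumes the Twitter v1.1 tweet layout. Blank keep-alive lines and
    non-tweet messages (e.g. deletion notices) are skipped.
    """
    for line in lines:
        line = line.strip()
        if not line:
            continue  # keep-alive newline
        obj = json.loads(line)
        if "text" not in obj:
            continue  # not a tweet, e.g. a deletion notice
        yield {
            "id": obj["id_str"],
            "author": obj.get("user", {}).get("screen_name", ""),
            "text": obj["text"],
        }


def average_text_bytes(docs: Iterable[dict]) -> float:
    """Average UTF-8 size, in bytes, of the "text" field of the documents."""
    sizes = [len(d["text"].encode("utf-8")) for d in docs]
    return sum(sizes) / len(sizes) if sizes else 0.0


# Usage with a tiny hand-written sample instead of a live stream.
sample = [
    '{"id_str": "1", "text": "hello solr", "user": {"screen_name": "a"}}',
    '',
    '{"delete": {"status": {"id_str": "2"}}}',
    '{"id_str": "3", "text": "índice", "user": {"screen_name": "b"}}',
]
docs = list(parse_tweets(sample))
print(len(docs))                 # 2
print(average_text_bytes(docs))  # 8.5 ("índice" is 7 bytes in UTF-8)
```

Measuring bytes rather than characters matters here because many tweets are not in English, and non-ASCII characters take more than one byte each.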

The next step was to design the schema. Because one of the objectives is to see how each change in the schema affects the size of the index, I used many different combinations of parameters so as to measure the influence of each one of them. In each case, the stop-word list was populated with the top 100 terms of each set of documents, obtained from the Solr administration panel. For both data sets, the “omitNorms”, “termVectors” and “stopwords” parameters refer to the “text” field. In all cases, the values of the “termOffsets” and “termPositions” parameters are the same as that of “termVectors”.
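
The setup can be sketched as follows, under a few stated assumptions. `top_terms` approximates the admin panel's top-terms list with a plain regex tokenizer, so its output will not exactly match what Solr's analyzer chain produces; the resulting terms would go one per line into the stop-word file read by the `StopFilterFactory` (e.g. `stopwords.txt`). `text_field_variants` enumerates every combination of the three parameters, keeping `termPositions` and `termOffsets` equal to `termVectors`. The field type names `text_stop`/`text_nostop` and the `indexed`/`stored` attributes are hypothetical.

```python
import re
from collections import Counter
from itertools import product
from typing import Iterable, List


def top_terms(texts: Iterable[str], n: int = 100) -> List[str]:
    """Return the n most frequent lowercase word tokens (rough approximation)."""
    counts = Counter()
    for text in texts:
        counts.update(re.findall(r"\w+", text.lower()))
    return [term for term, _ in counts.most_common(n)]


def text_field_variants() -> List[str]:
    """One <field> definition for each schema variant of the "text" field.

    Hypothetical type names: "text_stop" and "text_nostop" stand for two
    fieldTypes whose analyzers differ only in a StopFilterFactory.
    """
    variants = []
    for omit_norms, term_vectors, stopwords in product([False, True], repeat=3):
        tv = str(term_vectors).lower()  # positions/offsets follow term vectors
        field_type = "text_stop" if stopwords else "text_nostop"
        variants.append(
            f'<field name="text" type="{field_type}" indexed="true" stored="true" '
            f'omitNorms="{str(omit_norms).lower()}" termVectors="{tv}" '
            f'termPositions="{tv}" termOffsets="{tv}"/>'
        )
    return variants


stop = top_terms(["The cat and the cat", "the end"], n=2)
print(stop)  # ['the', 'cat']
variants = text_field_variants()
print(len(variants))  # 8 combinations of omitNorms x termVectors x stopwords
```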

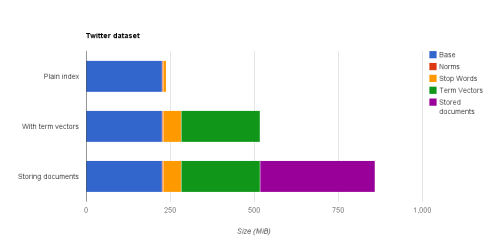

In the first figure you can see the size of the index for each schema for the Twitter data set, and which proportion of the index corresponds to each parameter. Remember that this data set has lots of documents (about 1.7 million), but each one is small (240 bytes on average). There are several remarkable things here. First, the space occupied by the term vectors (~280 MiB when not using stop words) is almost equal to the space occupied by the inverted index itself (~240 MiB). Second, the space saved by omitting norms is almost negligible (~2 MiB). Third, the space saved by using stop words more than doubles when term vectors are stored, going from about 4% of the index to about 10%. Finally, the space occupied by the stored fields (~340 MiB) is considerably bigger than the space occupied by the inverted index itself.
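
To make the comparison concrete, this short sketch expresses each Twitter component as a multiple of the inverted index. The sizes are the approximate values quoted above, read off the figure, so the ratios are only as precise as those readings.

```python
# Approximate sizes (MiB) for the Twitter data set, as quoted in the text.
twitter = {
    "inverted_index": 240,
    "term_vectors": 280,   # without stop words
    "stored_fields": 340,
    "norms": 2,
}


def ratio(sizes: dict, part: str, base: str = "inverted_index") -> float:
    """Size of one component relative to the base component."""
    return sizes[part] / sizes[base]


for part in ("term_vectors", "stored_fields", "norms"):
    print(f"{part}: {ratio(twitter, part):.2f}x the inverted index")
# term_vectors: 1.17x, stored_fields: 1.42x, norms: 0.01x
```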

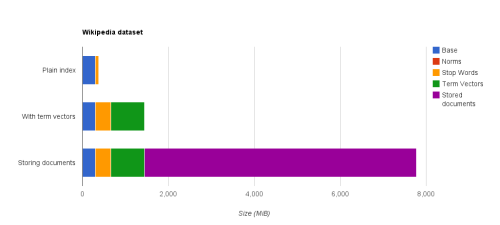

In the second figure you can see the same information for the Wikipedia data set. The space occupied by the norms is still negligible (< 1 MiB); however, the space saved by using stop words grows to about 22% of the index size when not storing term vectors, and about 25% when storing them. This time, the space occupied by the term vectors (~1067 MiB) is almost three times the space occupied by the inverted index itself (~380 MiB). Finally, the size of the stored documents (~6330 MiB) is more than four times the size of the index with term vectors stored.
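
The "more than four times" figure can be checked from the quoted sizes, and dividing the stored fields by the approximate document counts shows how differently the two data sets behave per document. All inputs are the rounded values from the text, so the outputs are estimates.

```python
MIB = 1024 * 1024

# Approximate sizes (MiB) and document counts quoted in the text.
wikipedia = {"docs": 160_000, "inverted_index": 380,
             "term_vectors": 1067, "stored_fields": 6330}
twitter = {"docs": 1_700_000, "inverted_index": 240,
           "term_vectors": 280, "stored_fields": 340}


def stored_vs_index_with_tv(s: dict) -> float:
    """How many times larger the stored fields are than index + term vectors."""
    return s["stored_fields"] / (s["inverted_index"] + s["term_vectors"])


def stored_bytes_per_doc(s: dict) -> float:
    """Average stored-field bytes per document."""
    return s["stored_fields"] * MIB / s["docs"]


print(f"{stored_vs_index_with_tv(wikipedia):.2f}")  # 4.37
print(round(stored_bytes_per_doc(wikipedia)))       # 41484 (~40 KiB)
print(round(stored_bytes_per_doc(twitter)))         # 210
```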

At this point, we can draw some conclusions about the size of the index:

- When the number of fields is small, the norms take up a negligible share of the index, regardless of the size and number of documents.

- When the documents are large, stop words help reduce the size of the index significantly. Two things are worth noting here. First, the documents fetched from Wikipedia are written in traditional language and all in English, while the documents fetched from Twitter are written in modern language and in many different languages. Second, I didn't measure the precision and recall of the system when using stop words, so findability in a real scenario might not be good.

- If you're storing the documents and they are big enough, whether you store the term vectors or not matters relatively little, so if you're using a feature such as highlighting and you're looking for good performance, you should store them. If you're not storing the documents, or your documents are small, think twice before storing the term vectors, because they will increase your index's size significantly.
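
The first and third conclusions can be backed with a little arithmetic. The sketch below assumes the classic Lucene encoding of norms as one byte per document for each field that keeps them (here only "text"), which means norms grow with the number of documents but not with their length; it also measures how much term vectors add on top of the inverted index plus stored fields. The helper names are hypothetical and the sizes are the approximate values from the text.

```python
MIB = 1024 * 1024


def norms_mib(num_docs: int, fields_with_norms: int = 1,
              bytes_per_norm: int = 1) -> float:
    """Estimated norms size, assuming one byte per document per normed field."""
    return num_docs * fields_with_norms * bytes_per_norm / MIB


def tv_overhead(inverted_index: float, term_vectors: float,
                stored_fields: float) -> float:
    """Growth of the index (including stored fields) caused by term vectors."""
    return term_vectors / (inverted_index + stored_fields)


print(f"{norms_mib(1_700_000):.2f}")          # 1.62 MiB (Twitter, ~2 MiB measured)
print(f"{norms_mib(160_000):.2f}")            # 0.15 MiB (Wikipedia, < 1 MiB measured)
print(f"{tv_overhead(240, 280, 340):.0%}")    # 48% (Twitter)
print(f"{tv_overhead(380, 1067, 6330):.0%}")  # 16% (Wikipedia)
```

Under these assumptions, the norms estimates line up with the measured values, and term vectors cost roughly three times less, relative to the whole index, when the stored documents are large.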

I hope you find this post useful. I'm currently working on a set of benchmarks to measure the influence of each of these parameters on the performance of the system, so if you liked this post, stay tuned!

Published at DZone with permission of Juan Grande, DZone MVB. See the original article here.
