The History and Development of Performance Testing

From the Center of Excellence to Waterfall to Agile and open source, performance testing has seen a lot of changes in its short life. We're now in CoE 2.0.


Modern software delivery practices change the game for what’s possible and how quickly your team can go from an idea to realization. These are exciting times, whether you are far along in the journey and already shipping code multiple times per day or just getting started with a goal of shipping more often than once per quarter.

With the shift to faster cycle times, there is a consensus that testing must “shift left” to earlier in the software delivery lifecycle in order to achieve increased test coverage without slowing things down.

The “shift left” for performance testing requires a democratization of tools and processes, a move from specialists, centralization, and complexity to distributed teams and accessible tools that every team member can use. Suddenly, the old tools don’t fit and development teams are taking matters into their own hands. There is more than a little chaos as a result!

It’s worth looking at how we got here to understand why this change is taking place and how you can most easily pivot from the old ways to the new, turning what is now a pain point into an opportunity to do far more testing, more often, with fewer heroics.

Performance Testing With the Center of Excellence (CoE)

In the Waterfall era, legacy performance testing tools like LoadRunner were powerful, but they were also complex to set up and use. Using them required highly trained specialists, and the tools themselves had restrictive licensing schemes. To cope with these constraints, the performance testing Center of Excellence (CoE) was born.

The CoE controlled the testing infrastructure, designed and executed performance tests, performed detailed analysis and reported the results back. Development teams didn’t create or run tests; they created service tickets requesting tests from the centralized specialists.

The queue for testing was often long, with weeks or even several months becoming the norm. In the Waterfall era, this bottleneck limited how often and how rigorously performance testing could be done, but with adequate planning and long enough release cycles, it was accepted as a manageable fact of life.

The Flow to Agility

As Waterfall gave way to the short release cycles of Agile and DevOps, it became clear that the old “plan way in advance and then wait for your testing window” approach to performance testing just didn’t fit. The handoffs and the wait to get through the bottleneck were in fundamental conflict with Agile itself. It’s no wonder that Agile teams began using their newfound autonomy to work around the CoE and its queue.

Consider some fundamental aspects of DevOps and Agile, and it becomes clear why this was bound to happen:

At its root, DevOps is based on three core principles:

Flow. Create small batch sizes and eliminate constraints in order to get new features and abilities out into the real world early and often.

Feedback. This is why you want to get code out early and often: to get fast feedback. Continuous feedback enables identification of issues and bugs in near real time, while recent changes are fresh in the minds of the team.

Continuous experimentation. Flow and fast feedback make it safer to experiment and take risks as a means of creating a better, more innovative product.

Agile, in turn, has two practices that focus on making flow possible:

Break the work up into multiple small teams that work in parallel.

Design the teams to be autonomous and self-contained to avoid handoffs and queues. Less friction means more flow.

Agility Through Open-Source Performance Testing Tools

The solution for breaking free of the centralized bottleneck in performance testing was to turn to open-source tools, themselves driven by decentralized contributions from many. Open-source tools are constantly updated by developers all over the world who work in parallel, without a guiding Product Manager. Those developers get constant feedback from the community using the tools and keep changing the code to improve the product.

Open-source performance testing provided a low-friction way for any developer to become an Agile tester and begin testing independently of the CoE. All they needed to do was download a tool and start working. If they lacked knowledge, they could turn to Google, YouTube videos, and tool forums. Open source provided an open market of shared knowledge, plugins, and design patterns, and it was “free like a puppy.” Getting started was very easy.

Obviously, though, not everything comes for free. There were gaps to fill, either with home-grown “roll your own” tooling or by leveraging a solution like CA BlazeMeter, which enhances open-source performance testing tools, especially JMeter, with scalability, reporting, collaboration, and test management features.
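To make the “roll your own” reporting gap concrete, here is a minimal, hypothetical Python sketch of the kind of home-grown tooling teams wrote on top of raw open-source output. It is not JMeter’s or BlazeMeter’s own reporting. It assumes a CSV results file with a header row containing `label`, `elapsed` (milliseconds), and `success` (`true`/`false`) columns, which JMeter’s default CSV result output includes; the function names and the nearest-rank p90 are illustrative choices.

```python
import csv
import io
import math
from collections import defaultdict


def percentile(sorted_values, pct):
    """Nearest-rank percentile of an already-sorted, non-empty list."""
    if not sorted_values:
        raise ValueError("no samples")
    rank = math.ceil(pct * len(sorted_values) / 100)  # 1-based rank
    return sorted_values[max(rank, 1) - 1]


def summarize_jtl(csv_text):
    """Group samples by label; report count, error rate, and p90 latency (ms).

    Assumes JMeter-style CSV columns `label`, `elapsed`, and `success`.
    """
    elapsed_by_label = defaultdict(list)
    errors_by_label = defaultdict(int)
    for row in csv.DictReader(io.StringIO(csv_text)):
        label = row["label"]
        elapsed_by_label[label].append(int(row["elapsed"]))
        if row["success"].strip().lower() != "true":
            errors_by_label[label] += 1

    summary = {}
    for label, samples in elapsed_by_label.items():
        samples.sort()
        summary[label] = {
            "count": len(samples),
            "error_rate": errors_by_label[label] / len(samples),
            "p90_ms": percentile(samples, 90),
        }
    return summary
```

Pass it the text of a results file (for example `summarize_jtl(open("results.jtl").read())`, with a hypothetical file name) to get one summary dictionary per sampler label. Every extra metric, chart, or trend comparison is more code to own, which is the gap commercial platforms aim to fill.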

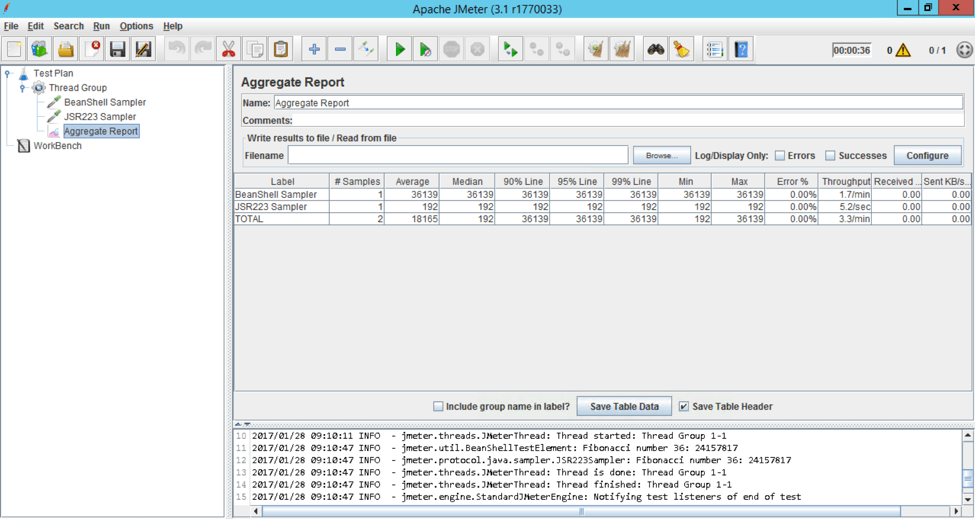

JMeter:

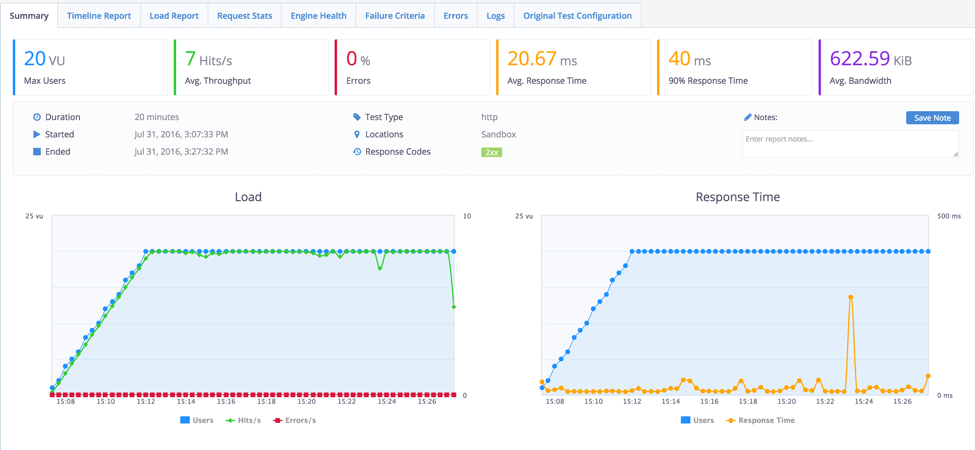

CA BlazeMeter:

CoE 2.0: From Constraint to Enablement, From the Center to the Edge

In this new world of CoE 2.0, the “E” in CoE becomes “Enablement” and the CoE moves from being a centralized bottleneck to leading and facilitating the move to democratization. As a provider of the democratization framework, the CoE hands out logins, API keys, and automation interfaces rather than service tickets.

All of this happens as part of building a continuous delivery pipeline, and to this end, open-source performance testing tools can be amplified with other open-source automation tools such as Jenkins (a Continuous Integration server) and Taurus (a test automation framework that wraps tools like JMeter). Used together with commercial platforms like CA BlazeMeter, these tools allow test cycle time to be compressed by parallelizing tests and running them immediately, without waiting in any queue. Depending on your approach and where you started, speed and test coverage can increase 10x, 100x, or even 1000x over old practices. As you progress down this path, you approach near real-time detection of errors: the fast feedback so critical to success at speed.
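As a rough illustration of running a performance check inside a pipeline rather than waiting in a queue, here is a minimal, dependency-free Python sketch. It is not the Jenkins, Taurus, or BlazeMeter API: `make_http_probe`, `measure_latencies`, `gate`, and the thresholds are all hypothetical. It fires requests concurrently from a thread pool and returns a pass/fail verdict on p95 latency and error rate, which a CI step can turn into an exit code.

```python
import concurrent.futures
import math
import time
import urllib.request


def make_http_probe(url, timeout=5.0):
    """Return a zero-argument callable that GETs `url`.

    urllib raises on connection errors and on HTTP 4xx/5xx responses,
    so those count as failed requests.
    """
    def probe():
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            resp.read()
    return probe


def measure_latencies(request_fn, total_requests, concurrency):
    """Call request_fn total_requests times using `concurrency` worker threads.

    Returns a list of (latency_seconds, succeeded) tuples.
    """
    def timed_call(_):
        start = time.perf_counter()
        try:
            request_fn()
            ok = True
        except Exception:
            ok = False
        return time.perf_counter() - start, ok

    with concurrent.futures.ThreadPoolExecutor(max_workers=concurrency) as pool:
        return list(pool.map(timed_call, range(total_requests)))


def gate(results, p95_limit_s, max_error_rate):
    """Pass (True) only if nearest-rank p95 latency and error rate are within limits."""
    if not results:
        raise ValueError("no results to evaluate")
    latencies = sorted(latency for latency, _ in results)
    rank = math.ceil(95 * len(latencies) / 100)  # nearest-rank, 1-based
    p95 = latencies[rank - 1]
    error_rate = sum(1 for _, ok in results if not ok) / len(results)
    return p95 <= p95_limit_s and error_rate <= max_error_rate
```

In a CI shell step, a script might end with `sys.exit(0 if gate(measure_latencies(make_http_probe("http://localhost:8080/health"), 200, 20), 0.5, 0.01) else 1)` (hypothetical endpoint and limits), so a non-zero exit fails the build. Threads keep the sketch short; a dedicated load generator such as JMeter run through Taurus offers far more control over ramp-up, pacing, and reporting.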

Published at DZone with permission of Dave Karow. See the original article here.
