The Kubernetes Attack Surface

Kubernetes is the de facto standard for automating the deployment and management of cloud-native applications. Its adoption is transforming how organizations of every size, in every industry, develop and release software using technologies such as containers, microservices, and declarative APIs. At the same time, these technologies and architectures give rise to broad security risks that organizations must protect against. Kubernetes introduces a new threat environment, one that is as dynamic and fast-moving as containerized applications themselves.

Kubernetes, with its breadth of cluster components and associated tooling, also introduces complexity for an organization’s end users. It requires teams to learn new skills and adopt new security workflows across development and operations. This complexity can expose organizations to a broad set of attack vectors across Kubernetes environments, stemming from vulnerabilities, misconfigurations, or other operational issues.

As Kubernetes increasingly becomes a foundational infrastructure platform that underpins modern software delivery, securing Kubernetes itself and the cloud-native applications that run on top of it becomes critical. The broader Kubernetes community has undertaken several efforts to increase security awareness, such as conducting a security audit of the Kubernetes platform, publishing a Kubernetes attack matrix based on the MITRE ATT&CK framework, publishing a security whitepaper on best practices, and establishing various industry-standard security benchmarks.

In addition, the Open Source Security Foundation (OpenSSF) is now leading the Alpha-Omega Project to improve software supply chain security. On the application side, the OWASP Top 10 now includes “Insecure Design” and “Security Misconfiguration” to further shift security left. These efforts can help development, operations, and security leaders develop effective strategies for implementing new security measures to protect both applications and the Kubernetes infrastructure on which they run.

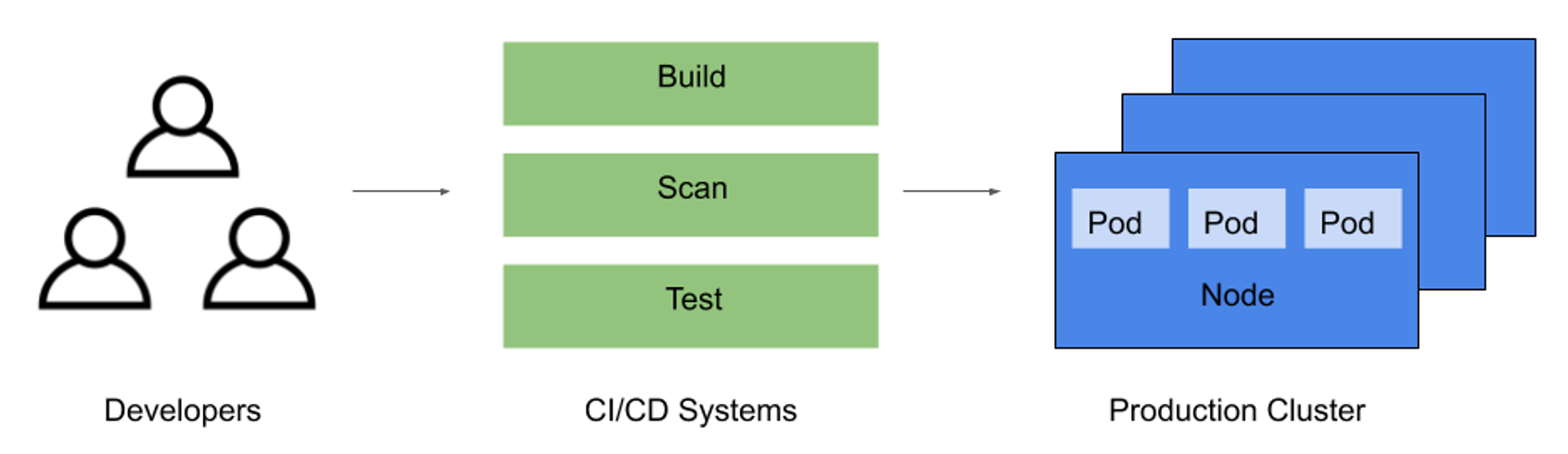

To understand how to secure cloud-native applications, it is imperative to know how to protect the underlying Kubernetes environment and its relevant attack surface. The attack surface within a Kubernetes cluster consists of three main areas:

- The software supply chain for building the immutable artifacts used to deploy and run containers

- Infrastructure components that must be provisioned and configured to run Kubernetes

- Deployed and running containers that make up individual Kubernetes applications

Nearly all Kubernetes threat vectors can be mapped to one of these three categories. This Refcard uses these categories as a framework to describe key security concepts that span both the Kubernetes infrastructure and the applications running on it.
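As a rough illustration of the three-category framework, the sketch below tags a handful of threats with the attack-surface area they fall under and groups them. The threat names, the `AttackSurface` enum, and the `group_by_surface` helper are hypothetical and chosen only for illustration; they do not come from any Kubernetes tool or benchmark, and how a given threat is classified can reasonably vary.

```python
from enum import Enum


class AttackSurface(Enum):
    """The three attack-surface areas named in this section."""
    SUPPLY_CHAIN = "software supply chain"
    INFRASTRUCTURE = "infrastructure components"
    WORKLOADS = "deployed and running containers"


# Hypothetical example threats, chosen for illustration only.
EXAMPLE_THREATS = {
    "vulnerable base image": AttackSurface.SUPPLY_CHAIN,
    "tampered build artifact": AttackSurface.SUPPLY_CHAIN,
    "publicly exposed API server": AttackSurface.INFRASTRUCTURE,
    "overly permissive RBAC role": AttackSurface.INFRASTRUCTURE,
    "privileged container": AttackSurface.WORKLOADS,
    "compromised application process": AttackSurface.WORKLOADS,
}


def group_by_surface(threats):
    """Return a dict mapping each AttackSurface to its sorted threat names."""
    grouped = {surface: [] for surface in AttackSurface}
    for name, surface in threats.items():
        grouped[surface].append(name)
    return {surface: sorted(names) for surface, names in grouped.items()}


if __name__ == "__main__":
    for surface, names in group_by_surface(EXAMPLE_THREATS).items():
        print(f"{surface.value}: {', '.join(names)}")
```

Grouping threats this way makes it easier to see which of the three areas a given control is meant to cover.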