Creating Your Own E-Mail Service With Haraka, PostgreSQL, and AWS S3

There are many paid email services out there that offer various integration features. However, most of the time, they aren’t 100% customizable to one’s requirements.


I’m sure anyone reading this post online has used email. It’s a pretty convenient method of communicating, and there are a lot of free email service providers out there.

If you are a developer like me, you also have plenty of paid email services to choose from, offering various integration options and features. However, most of the time, they aren’t 100% customizable to one’s requirements. Even setting up your own email server doesn't really allow you to customize every aspect of the email pipeline.

This is where Haraka comes in. It’s an SMTP server written in Node.js that has a plugin-based architecture, which means we can make Haraka do pretty much whatever we want by writing plugins. All you need is a little bit of JavaScript knowledge. If you don’t have it, it's worth acquiring: JavaScript is a pretty easy language to learn, and you don’t need a lot of advanced skills to write a Haraka plugin.

In this post, we will explore how to set up Haraka to receive and send emails, how to write a plugin that validates recipients and accepts or rejects the email, and how to write a plugin that places incoming emails in an Amazon AWS S3 bucket.

It’s going to be a long post!

Installing Haraka

You will first need to have npm and Node.js installed on your system. The installation instructions are pretty clear and straightforward.

Once Node.js and npm are set up, all you have to do is install Haraka by typing in:

npm install -g Haraka

The -g flag installs Haraka globally. You might have to provide root privileges on a Unix-based system.

Once Haraka is installed, you need to create a configuration for it. Without a configuration, you can’t do anything.

haraka -i /path/to/haraka_test

This will create a directory named haraka_test that holds all the configuration necessary to run a Haraka server instance.

The haraka_test directory contains the following directories and files.

- config: a directory containing various configuration files.

- docs: a directory containing documentation.

- plugins: a directory containing the custom plugins.

- package.json: a file containing additional node dependencies that the plugins need to run.

One important file to note right now is config/smtp.ini. You can change server listening details like the port and IP address from that file. The default port for Haraka is 25, which requires root/admin privileges depending on the system you are using.

That’s just an overview of what's created when you generate the initial configuration. We will go into more detail as we proceed through this post.

So, let’s start her up.

sudo haraka -c /path/to/haraka_test/

You will see some log output on the console, and then the server should start up. While there’s not much you can do with the server the way it is set up right now, it’s the first step!

Receiving Emails

After the server is installed successfully, it’s time to set it up to pick up incoming emails. Here’s the thing: to do this properly, you will need your own domain.

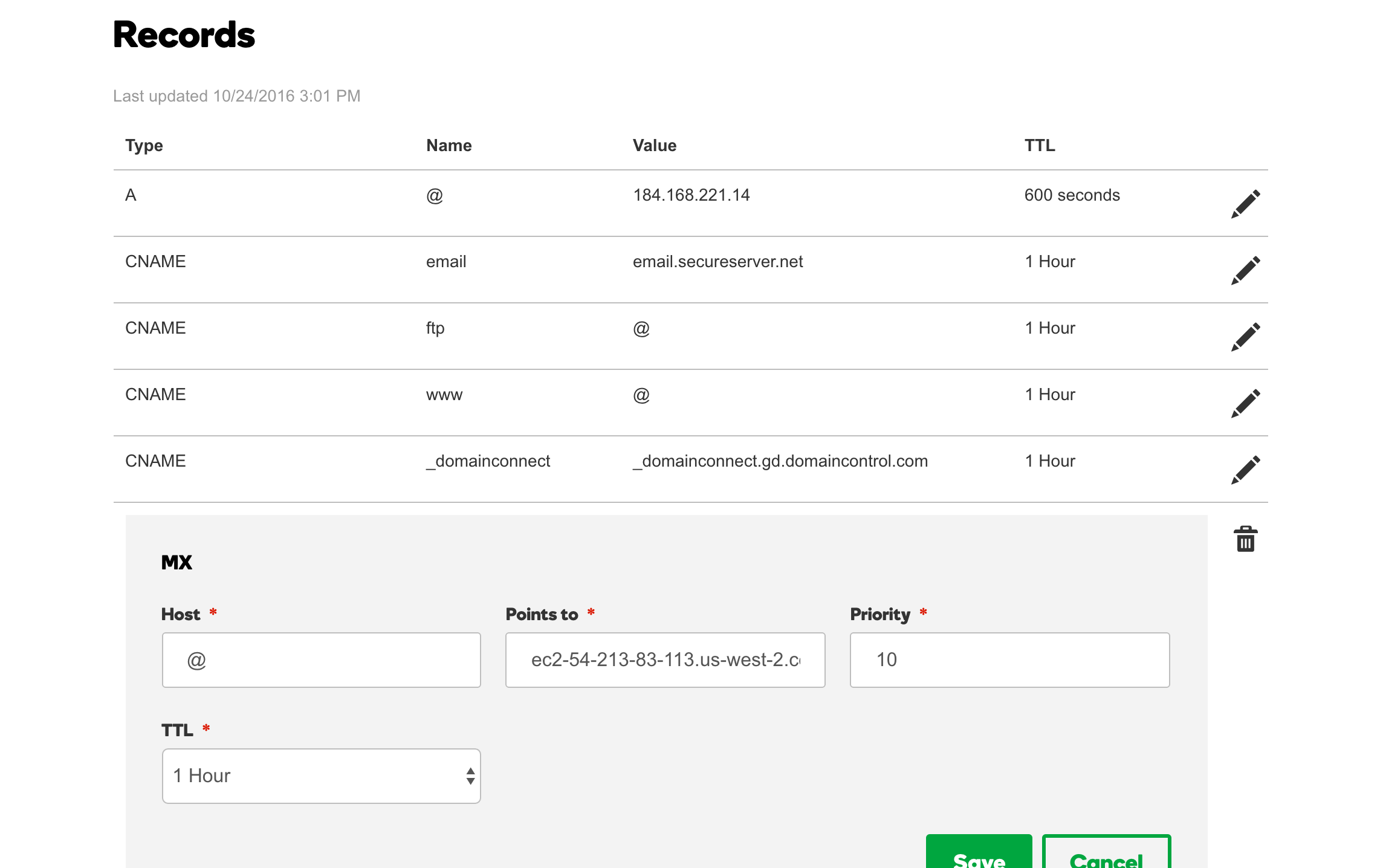

MX Record

Now everyone knows, or I hope they do, what a DNS record is; basically, it’s what tells everyone which domain points at which server.

There’s also something called an MX record. That’s shorthand for mail exchange. This record tells anyone who asks which mail exchange server(s) are associated with a domain.

I got thihara.com from GoDaddy, and you can set your MX record from their DNS management screen.

You want this record to point to the address of the server you are running Haraka on. Note that I have the MX record pointed at the DNS name of my AWS EC2 instance. You can’t point it to an IP address directly, at least not with GoDaddy.
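To double-check that the MX record has propagated, you can query it from Node.js itself. The sketch below uses the built-in dns module; the helper names sortByPriority and lookupMx are made up for this example, and thihara.com is simply the example domain used in this post.

```javascript
const dns = require('dns');

// Sort MX records so the most preferred exchange (lowest priority number) comes first.
function sortByPriority(records) {
  return records.slice().sort((a, b) => a.priority - b.priority);
}

// Resolve the MX records for a domain and pass them, sorted, to the callback.
function lookupMx(domain, callback) {
  dns.resolveMx(domain, (err, records) => {
    if (err) return callback(err);
    callback(null, sortByPriority(records));
  });
}

// Usage (performs a real DNS query, so it is left commented out here):
// lookupMx('thihara.com', (err, records) => console.log(err || records));
```

Each record returned by dns.resolveMx has a priority and an exchange property; the exchange should be the host name of the machine running Haraka.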

host_list

When you want Haraka to accept emails for your domain, you need to add it to the /path/to/haraka_test/config/host_list file. For example, to accept emails for thihara.com (i.e., [email protected], [email protected]), you need to add thihara.com to the host_list file as a single line.

SMTP

It’s worth noting that the responsibility of an SMTP server like Haraka is two-fold.

- Receiving and accepting emails and then forwarding them to their destinations.

- Sending outgoing emails.

In no way will the SMTP server itself store the emails, nor does it have any concept of an inbox or mailbox (where emails specific to a single user are stored).

Storing emails and maintaining inboxes is generally done by separate servers, accessed through protocols like IMAP or POP3.

Setting Up a Queue

A queue is the term that Haraka uses to describe how an email is forwarded. The queue decides what happens to the emails that are received by the server. You can use a queue that discards the received emails for testing purposes, or a plugin that forwards the emails to a POP3 or an IMAP server. You can even put the emails in an S3 bucket or in some kind of database.

Swaks

Swaks is a script that you can use to test an SMTP server by sending emails. It can be found here.

Once downloaded and installed, the script can be used to test the email receiving functionality on a locally set up Haraka server like this:

swaks -s localhost -t [email protected] -f [email protected]

Or, to test your DNS and server associations, simply use:

swaks -t [email protected] -f [email protected]

You will see output similar to this:

=== trying localhost:25...
=== connected to localhost.
<- 220 thiharas-macbook-pro.local esmtp haraka 2.8.8 ready
-> ehlo thiharas-macbook-pro.local
<- 250-thiharas-macbook-pro.local hello unknown [127.0.0.1], haraka is at your service.
<- 250-pipelining
<- 250-8bitmime
<- 250-size 0
<- 250-starttls
<- 250 auth plain login cram-md5
-> mail from:<[email protected]>
<- 250 sender <[email protected]> ok
-> rcpt to:<[email protected]>
<- 250 recipient <[email protected]> ok
-> data
<- 354 go ahead, make my day
-> date: mon, 28 nov 2016 09:46:46 +0530
-> to: [email protected]
-> from: [email protected]
-> subject: test mon, 28 nov 2016 09:46:46 +0530
-> x-mailer: swaks v20130209.0 jetmore.org/john/code/swaks/
->
-> this is a test mailing
->
-> .
<** 451 (919bcdd3-2819-44c5-9e48-ce0aafd2abf7.1)
-> quit
<- 221 thiharas-macbook-pro.local closing connection. have a jolly good day.
=== connection closed with remote host.

You will notice that the final response code is 451. This is because we haven’t really set up a queue yet. We will get to that down the line!

Of course, localhost will be replaced with thihara.com, or whatever the recipient domain is, if you are testing against an actual DNS-backed email server.

You can see what other syntax the tool supports on its website. It supports pretty much everything related to emails, including attachments, embedded HTML, etc.

Writing the S3 Queue Plugin

Now that our email server is set up to receive emails, let’s see how we can write a Haraka queue plugin to push the received emails to an AWS S3 bucket.

You need to add the AWS dependencies, since our plugin is going to connect to an AWS S3 bucket. So go to the /path/to/haraka_test directory and type in:

npm install --save aws-sdk

Plugin and File Names

Now that all of your dependencies are in place for this plugin, let’s start writing it.

Let’s name our plugin aws_s3_queue. Consequently, the code for the plugin behavior will go into a file named aws_s3_queue.js.

You can create the skeletal files for the plugin by using the following command.

haraka -c /path/to/haraka_test -p aws_s3_queue

This command will create two files:

- /path/to/haraka_test/plugins/aws_s3_queue.js

- /path/to/haraka_test/docs/plugins/aws_s3_queue.md

The aws_s3_queue.js file contains the source code for the plugin, while aws_s3_queue.md is the readme file.

There are three function hooks that you need to implement in the aws_s3_queue.js file.

- register: place your initialization code in this function.

- hook_queue: this is where you need to place the code that actually does the work.

- shutdown: place your de-initialization code in here.

Required Classes

You first need to import the following modules into the plugin.

var AWS = require("aws-sdk"),
    zlib = require("zlib"),
    util = require('util'),
    async = require("async"),
    Transform = require('stream').Transform;

Now, you might realize that we didn’t define or install async as a dependency. Don’t worry, it’s already included in the core Haraka server code, enabling you to use it in the plugin.

Initialization

Let’s write our register function to load the configuration and initialize the AWS SDK.

exports.register = function () {
    this.logdebug("initializing aws s3 queue");

    // Haraka parses the file as JSON because of its .json extension.
    var config = this.config.get("aws_s3_queue.json");
    this.logdebug("config loaded : " + util.inspect(config));

    AWS.config.update({
        accessKeyId: config.accessKeyId,
        secretAccessKey: config.secretAccessKey,
        region: config.region
    });

    this.s3Bucket = config.s3Bucket;
    this.zipBeforeUpload = config.zipBeforeUpload;
    this.fileExtension = config.fileExtension;
    this.copyAllAddresses = config.copyAllAddresses;
};

The most important thing to note here is how we load the configuration from a file. We are loading a JSON file named aws_s3_queue.json from the /path/to/haraka_test/config directory. The config module of Haraka already knows that it has to parse the JSON data, since the file has a .json extension.

You can get more details about config parsing by reading the README.md file of the config parsing code repo.

We are also updating the AWS configuration with the necessary authentication details, loaded from the config file. The config file will look like this.

{
    "accessKeyId": "access key id",
    "secretAccessKey": "secret access key",
    "region": "us-west-2",
    "s3Bucket": "s3-bucket-name",
    "zipBeforeUpload": true,
    "fileExtension": ".eml.raw.gzip",
    "copyAllAddresses": true
}

Now, another important piece of configuration is the plugin timeout. In Haraka, the timeout for a plugin defaults to 30 seconds. This is a very reasonable timeout, but we are talking about a file upload over the internet, so that might not be enough.

We can set a custom timeout for a plugin by creating a file named <plugin name>.timeout inside the /path/to/haraka_test/config directory and placing the timeout in seconds inside that file.

So, the timeout configuration for our S3 queue plugin will be named aws_s3_queue.timeout. It will contain only one line of text: the number of seconds the plugin should be allowed to process a request before being timed out, in this case 120 for 120 seconds, or 2 minutes.

Actual Work

Now let’s write the code that will handle each incoming email request.

exports.hook_queue = function (next, connection) {
    var plugin = this;
    var transaction = connection.transaction;

    // Compress with gzip if configured; otherwise pass the data through unchanged.
    var gzip = zlib.createGzip();
    var transformer = plugin.zipBeforeUpload ? gzip : new TransformStream();
    var body = transaction.message_stream.pipe(transformer);

    var s3 = new AWS.S3();

    // async.each expects an array, so the single first recipient is wrapped in one.
    var addresses = plugin.copyAllAddresses ? transaction.rcpt_to : [transaction.rcpt_to[0]];

    async.each(addresses, function (address, eachCallback) {
        var key = address.user + "@" + address.host + "/" + transaction.uuid + plugin.fileExtension;

        var params = {
            Bucket: plugin.s3Bucket,
            Key: key,
            Body: body
        };

        s3.upload(params).on('httpUploadProgress', function (evt) {
            plugin.logdebug("uploading file... status : " + util.inspect(evt));
        }).send(function (err, data) {
            plugin.logdebug("s3 send response data : " + util.inspect(data));
            eachCallback(err);
        });
    }, function (err) {
        if (err) {
            plugin.logerror(err);
            next();
        } else {
            next(OK, "email accepted.");
        }
    });
};

We are doing a few things in this function. First, we check the configuration to see if the file should be compressed with gzip before being uploaded. Since MIME is a text-based format, you will save some space, at the expense of processing power, by compressing the email file.

Then we check whether the email should be copied to the inboxes of all the recipients when there are multiple recipients. If so, we do that; otherwise, we only copy the email into the inbox of the first address in the list.

You might want to take the CC and BCC fields into consideration and filter out the email addresses that don’t belong to your domain. There’s no point in keeping inboxes for users that don’t exist in your domain or web app.
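Filtering out foreign addresses can be as simple as the sketch below. The function name filterLocalRecipients and the localDomains list are made up for this example; the address objects only need the user and host properties that the plugin code already relies on.

```javascript
// Keep only the recipients whose domain is one we host mailboxes for.
// `recipients` is a list of address objects with `user` and `host` properties,
// like the entries in transaction.rcpt_to; `localDomains` is a hypothetical list
// of the domains this server is responsible for.
function filterLocalRecipients(recipients, localDomains) {
  const domains = new Set(localDomains.map((d) => d.toLowerCase()));
  return recipients.filter((address) => domains.has(String(address.host).toLowerCase()));
}

// Example with plain objects standing in for Haraka address objects.
const kept = filterLocalRecipients(
  [
    { user: 'alice', host: 'thihara.com' },
    { user: 'bob', host: 'Example.org' }
  ],
  ['thihara.com']
);
console.log(kept.map((a) => a.user + '@' + a.host)); // [ 'alice@thihara.com' ]
```

In the queue hook, the filtered list would take the place of transaction.rcpt_to when building the list of addresses to store the email for.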

We then create the file key by prefixing it with the email address and suffixing it with the file extension defined in the configuration, for example .eml.raw.gzip for gzip-compressed files, as in the configuration above. By prefixing the file name with the email address, we can easily retrieve all of the emails for a particular address or user from the AWS S3 bucket.
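To illustrate why the prefix is handy, here is a small sketch that lists every stored email for one address. It assumes an AWS.S3 client from the aws-sdk package is passed in; listEmailKeys is a made-up helper name, not part of the plugin.

```javascript
// List the keys of all stored emails for one address, relying on the
// "<address>/<uuid><extension>" key layout used by the plugin.
// `s3` is expected to be an AWS.S3 client from the aws-sdk (v2) package.
function listEmailKeys(s3, bucket, emailAddress, callback) {
  const params = { Bucket: bucket, Prefix: emailAddress + '/' };
  s3.listObjectsV2(params, (err, data) => {
    if (err) return callback(err);
    callback(null, data.Contents.map((object) => object.Key));
  });
}
```

A single listObjectsV2 call returns at most 1,000 keys, so a real inbox listing would also need to follow the continuation token; that part is left out to keep the sketch short.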

Then we just iterate over the addresses and upload the email to the AWS S3 bucket once per address. There is room for a big improvement here when the file needs to go to multiple inboxes. Instead of uploading the file every time, we can use the AWS S3 SDK’s copy method to copy the already uploaded file over to the other inboxes. This should save upload bandwidth and time for all the other addresses present in the email.
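The upload-once-then-copy idea could be sketched as follows. This is not the code from the plugin: uploadOnceThenCopy is a hypothetical helper, and it assumes an AWS.S3 client (aws-sdk v2) whose upload and copyObject methods accept a callback. It also sidesteps a limitation of the simple loop, since a stream can only be consumed by one upload.

```javascript
// Upload the message once for the first recipient, then ask S3 to copy that
// object for every other recipient instead of uploading it again.
// `s3` is expected to be an AWS.S3 client (aws-sdk v2); `keys` is the list of
// destination keys, one per recipient, built the same way as in hook_queue.
function uploadOnceThenCopy(s3, bucket, keys, body, callback) {
  const firstKey = keys[0];
  s3.upload({ Bucket: bucket, Key: firstKey, Body: body }, (uploadErr) => {
    if (uploadErr) return callback(uploadErr);

    const remaining = keys.slice(1);
    let pending = remaining.length;
    if (pending === 0) return callback(null);

    let failed = false;
    remaining.forEach((key) => {
      const params = {
        Bucket: bucket,
        // CopySource names the source as "<bucket>/<key>"; the key part is URL-encoded.
        CopySource: bucket + '/' + encodeURIComponent(firstKey),
        Key: key
      };
      s3.copyObject(params, (copyErr) => {
        if (failed) return; // an earlier copy already reported the error
        if (copyErr) {
          failed = true;
          return callback(copyErr);
        }
        pending -= 1;
        if (pending === 0) callback(null);
      });
    });
  });
}
```

Inside hook_queue, the final callback would call next(OK, ...) on success and log the error otherwise, just like the async.each completion handler does.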

Now, the other curious thing you see in this code is the use of a custom class named TransformStream when we are not compressing the file.

var transformer = plugin.zipBeforeUpload ? gzip : new TransformStream();
var body = transaction.message_stream.pipe(transformer);

For some reason, the message_stream of the transaction object wouldn't work as a normal Node.js stream without a transforming stream in between. This is fine when we are compressing the file, but the code will just hang and time out when we don’t have the gzip transforming stream. So, we need to create a dummy transform stream that just passes the data along without any modification.

The TransformStream class looks like this.

var TransformStream = function () {
    Transform.call(this);
};
util.inherits(TransformStream, Transform);

// Pass every chunk through unchanged.
TransformStream.prototype._transform = function (chunk, encoding, callback) {
    this.push(chunk);
    callback();
};

And there you go, we have written most of the code for our AWS S3 plugin.
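As an aside, Node.js ships a built-in stream.PassThrough class that behaves like the hand-written pass-through stream: it is a Transform stream that forwards its input unchanged. The snippet below only demonstrates that behavior on its own; whether it resolves the message_stream quirk exactly like the custom class is an assumption worth testing before relying on it.

```javascript
const { PassThrough } = require('stream');

// PassThrough is a Transform stream that forwards every chunk unchanged,
// which is what the hand-written pass-through class does as well.
const passThrough = new PassThrough();
passThrough.write('raw email data');
console.log(passThrough.read().toString()); // raw email data
```

In the plugin, this would mean creating a new PassThrough() in place of the custom class when compression is turned off.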

De-initialization or Destruction

The last job of the plugin is to clean up after itself. We can use the shutdown function to implement any cleanup operations.

exports.shutdown = function () {
    this.loginfo("shutting down queue plugin.");
};

As you can see, we don’t need to clean anything up in this plugin, so it just contains a log message!

Activating the Plugin

All you have to do to activate the plugin is enter the following line in the /path/to/haraka_test/config/plugins file.

aws_s3_queue

If you wish to organize the plugins, you can create a folder named queue and put the aws_s3_queue.js file in there. Then you need to change the plugins file to reflect the change by using the following line instead.

queue/aws_s3_queue

Test It Out

You can send an email using swaks and test out the new plugin.

If the plugin is working correctly, you will see that instead of the previous 451 response code, you receive a 250 status code.

<- 250 email accepted. (bcd02c7a-2cbf-4d2e-be65-17eec769bf2c.1)
-> quit

Writing the Validity Plugin

Now that we are receiving emails, we need a way to validate the recipient addresses to make sure that they do in fact exist in our application. Otherwise, we will end up accepting pretty much anything that’s sent our way by anyone.

We can do this by writing another plugin to check the validity of the recipient addresses. Let’s make the plugin connect to a PostgreSQL database instance and check the user’s validity against the data stored there.

You need to add the PostgreSQL dependency, since our plugin is going to connect to a PostgreSQL database. So go to the /path/to/haraka_test directory and type in:

npm install --save pg

Let’s not go into all the details here like we did with the AWS S3 plugin, since we have already covered most of what's necessary for writing plugins.

Plugin and File Names

Now that all of your dependencies are in place for this plugin, let’s start writing it.

Let’s name our plugin rcpt_to.validity. Consequently, the code for the plugin behavior will go into a file named rcpt_to.validity.js.

You can create the skeletal files for the plugin by using the following command.

haraka -c /path/to/haraka_test -p rcpt_to.validity

Just like before, this command will create two files:

- /path/to/haraka_test/plugins/rcpt_to.validity.js

- /path/to/haraka_test/docs/plugins/rcpt_to.validity.md

Required Classes

We need to import the following modules for our plugin.

var util = require('util'),
    pg = require('pg');

Initialization

Let’s see what the plugin’s initialization code looks like.

exports.register = function () {
    var plugin = this;
    plugin.logdebug("initializing rcpt_to validity plugin.");

    var config = plugin.config.get('rcpt_to.validity.json');

    var dbConfig = {
        user: config.user,
        database: config.database,
        password: config.password,
        host: config.host,
        port: config.port,
        max: config.max,
        idleTimeoutMillis: config.idleTimeoutMillis
    };

    // Initialize the connection pool.
    plugin.pool = new pg.Pool(dbConfig);

    /**
     * If an error is encountered by a client while it sits idle in the pool, the pool itself will emit an
     * error event with both the error and the client which emitted the original error.
     */
    plugin.pool.on('error', function (err, client) {
        // Use the captured plugin reference; inside this handler, `this` is not the plugin.
        plugin.logerror('idle client error. probably a network issue or a database restart. '
            + err.message + err.stack);
    });

    plugin.sqlQuery = config.sqlQuery;
};

As you can see, just like before, we are loading the configuration from a file named after the plugin, rcpt_to.validity.json.

Then, we initialize the connection pool for PostgreSQL. I’m not going to talk about all the database-related code, since it’s beyond the scope of this post.

The configuration file will look like this:

{
    "user": "thihara",
    "database": "haraka",
    "password": "",
    "host": "127.0.0.1",
    "port": 5432,
    "max": 20,
    "idleTimeoutMillis": 30000,
    "sqlQuery": "select exists(select 1 from valid_emails where email_id=$1) as \"exists\""
}

Actual Work

Let’s see what our user validity plugin actually does!

exports.hook_rcpt = function (next, connection, params) {
    var plugin = this;
    var rcpt = params[0];

    plugin.logdebug("checking validity of " + util.inspect(params[0]));

    plugin.is_user_valid(rcpt.user, function (isValid) {
        // `this` is not the plugin inside this callback, so use the captured reference.
        if (isValid) {
            connection.logdebug("valid email recipient. continuing...", plugin);
            next();
        } else {
            connection.logdebug("invalid email recipient. deny email receipt.", plugin);
            next(DENY, "invalid email address.");
        }
    });
};

exports.is_user_valid = function (userID, callback) {
    var plugin = this;

    plugin.pool.connect(function (err, client, done) {
        if (err) {
            plugin.logerror('error fetching client from pool. ' + err);
            return callback(false);
        }

        client.query(plugin.sqlQuery, [userID], function (err, result) {
            // Release the client back to the pool by calling the done() callback.
            done();

            if (err) {
                plugin.logerror('error running query. ' + err);
                return callback(false);
            }

            return callback(result.rows[0].exists);
        });
    });
};

Here we are using the sqlQuery given in the configuration file to determine if the recipient is a valid user. All the code is pretty standard.

One thing worth noting is the pair of calls next() and next(DENY, "invalid email address."). This is where we are accepting and rejecting the email. Calling next() continues the operation, and calling next(DENY, "invalid email address.") rejects the email with the error message "invalid email address."

Now, you might be wondering what DENY is and where it comes from. Well, it’s defined in a separate package called haraka-constants, and you can import and use it for clarity’s sake. However, it will be available by the time the plugin runs anyway, so you can use it the way I have, as well.
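One thing to be aware of: is_user_valid reports a database failure as false, so a database outage causes valid recipients to be rejected permanently. A possible refinement, sketched below, distinguishes the three outcomes and answers with DENYSOFT (a temporary failure, so the sending server retries later) when the lookup itself fails. DENY and DENYSOFT are provided by Haraka at run time; makeRcptHook, the lookup function, and its (err, isValid) callback shape are assumptions made for this sketch, not part of the original plugin.

```javascript
// Build a hook_rcpt handler around a lookup function with the (hypothetical)
// signature lookup(user, callback), where callback receives (err, isValid).
function makeRcptHook(lookup) {
  return function (next, connection, params) {
    var rcpt = params[0];
    lookup(rcpt.user, function (err, isValid) {
      if (err) {
        // Lookup failed: ask the sender to try again later instead of bouncing.
        return next(DENYSOFT, "temporary failure, please try again later.");
      }
      if (!isValid) {
        return next(DENY, "invalid email address.");
      }
      next();
    });
  };
}

// In the plugin this could be wired up as:
// exports.hook_rcpt = makeRcptHook(function (user, cb) { /* query PostgreSQL */ });
```

With this shape, only a definite "no such user" answer from the database produces a permanent rejection.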

De-initialization or Destruction

Now for the cleanup part.

exports.shutdown = function () {
    this.loginfo("shutting down validity plugin.");
    this.pool.end();
};

We are actually doing some work here by closing down the PostgreSQL connection pool when the plugin is stopping.

Activating the Plugin

All you have to do to activate the plugin is enter the following line in the /path/to/haraka_test/config/plugins file.

rcpt_to.validity

Test It Out

Make sure your database is up and that it contains a valid email address for the plugin to authorize.

Now send an email with a valid recipient, and it should be accepted. An invalid recipient will result in the email being rejected with a message like this:

<** 550 invalid email address.
-> quit

What Can the Plugins Be Used For?

These plugins should get you started on the way to writing your own email service. The AWS plugin is meant to show how you can use any medium you want to store the email files. If you were going to adapt it for production, you might want to consider parsing the email and pushing a JSON document with the parsed content, maybe extracting the attachments separately and just including the file links in the JSON document.

You can use the validity plugin to make sure only registered user email addresses are accepted by the SMTP server. You can use pretty much any other data source you wish for this purpose as well.

Haraka’s plugin system lets you bend the server to your will with ease. It can be used to set up custom email pipelines when existing services are either too expensive or too rigid to do what you want.

Outgoing

Now that we have successfully set up our incoming email pipeline, let’s see how we can configure Haraka to send emails.

This involves setting up and enabling two plugins. Since their documentation is sufficient to set them up and enable them, I will not go into those details in this post.

auth/flat_file Plugin

To enable outgoing emails, we need to set connection.relaying to true from a plugin. While it’s rather easy to write your own plugin to do this, the easiest way is to enable the auth/flat_file plugin. Detailed instructions for this plugin can be found in its documentation.

TLS Plugin

Before you can enable the auth/flat_file plugin, you need to enable the tls plugin. The instructions for enabling it can be found in the documentation.

I just used a self-signed certificate to enable TLS, but depending on whether you are setting something up for production or testing purposes, you might want to consider buying an actual certificate.

Testing

Once that’s done, you can use swaks to test your email server’s outgoing capabilities using the following command.

swaks -f [email protected] -t [email protected] -s localhost -p 587 -au username -ap password

This will send a test email to [email protected] from [email protected].

Note that I’ve provided the outgoing port as 587. That’s the port my Haraka server listens on for outgoing emails. You can find more details about that here.

You can tell your Haraka server to listen on both port 25 for incoming emails and port 587 for outgoing emails by adding the following line of configuration to the /path/to/haraka_test/config/smtp.ini file.

listen=[::0]:587,[::0]:25

Now, you are done. You can, of course, write an authentication plugin yourself that uses a better mechanism than a flat file to store user credentials. Or, you can keep this as is and rely on other security measures like firewalls or access control lists to keep your server’s outbound capabilities secure.

Conclusion

This guide should take you quite far in setting up your email service, or an email pipeline that does whatever you want. The source code for the Haraka configuration can be found in this GitHub repository.

I’ve also written a small Java application that lists emails stored in an AWS S3 bucket and sends simple text-based emails. You can find the source code in this GitHub repository.

The application is just a trivial one to demonstrate that the content of this post is sufficient to start a basic email service. Of course, there’s a lot more that needs to be done before you have a fully functional service, like encryption, site building, a rich UI client, etc. Hopefully, this will point you in the right direction and give you a jump start.

Published at DZone with permission of Thihara Neranjya. See the original article here.
