CRUD Operations Using the Microsoft Dynamics 365 Connector in Mule

This tutorial explains how to perform CRUD operations using the Microsoft Dynamics 365 connector in Mule.


The Microsoft Dynamics 365 Customer Relationship Management (CRM) connector for Anypoint Platform enables integration with the Microsoft Dynamics 365 Web API.

This connector lets you perform operations to:

- Authorize or unauthorize server access.

- Create, update, and delete entities.

- Retrieve a single entity or query multiple entities.

- Associate and disassociate entities.

- Execute actions.

This document walks through basic CRUD operations using the Microsoft Dynamics 365 connector.

To work with this connector, first obtain a client ID and secret for your app by logging in to the Microsoft Azure Active Directory portal at portal.azure.com.

Before starting, you also need access to a Microsoft Dynamics 365 instance (online or on-premises) that uses Azure Active Directory as its identity provider. In other words, Azure Active Directory is the service that grants access to the application. Once you have this access, you can start creating the project in Anypoint.

Workflow:

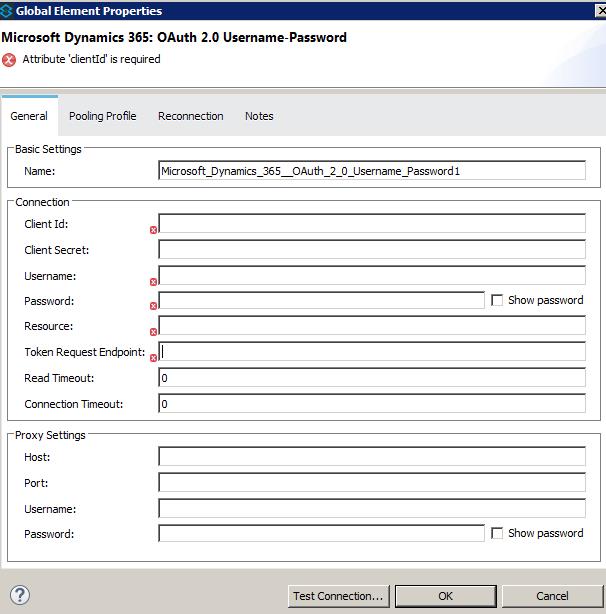

First, download the Microsoft Dynamics 365 connector from Anypoint Exchange. Add it to your flow using the Microsoft Dynamics 365 OAuth 2.0 Username and Password configuration. Then provide the required values for the connector configuration.
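The connector requests the access token for you. The sketch below shows roughly what a username-and-password OAuth 2.0 setup involves by building a resource owner password credentials (ROPC) request to Azure Active Directory. It assumes the Azure AD v1 token endpoint, where the Dynamics 365 org URL is passed as `resource`. The tenant, org URL, and credentials are hypothetical placeholders. Nothing is sent over the network.

```python
from urllib.parse import parse_qs, urlencode

# Hypothetical placeholders: replace with your own tenant and Dynamics 365 org.
TENANT = "contoso.onmicrosoft.com"
TOKEN_URL = f"https://login.microsoftonline.com/{TENANT}/oauth2/token"  # Azure AD v1 endpoint
RESOURCE = "https://contoso.crm.dynamics.com"


def build_token_request(client_id, client_secret, username, password):
    """Return (url, form-encoded body) for a resource owner password credentials grant."""
    form = {
        "grant_type": "password",       # OAuth 2.0 password (ROPC) grant
        "client_id": client_id,         # from the Azure AD app registration
        "client_secret": client_secret,
        "username": username,           # the Dynamics 365 user account
        "password": password,
        "resource": RESOURCE,           # v1 endpoint: the org URL the token is issued for
    }
    return TOKEN_URL, urlencode(form)


if __name__ == "__main__":
    url, body = build_token_request("my-client-id", "my-secret", "user@contoso.com", "p@ss")
    print(url)
    print(parse_qs(body)["grant_type"])
```

Most of these values map onto fields you fill in when configuring the connector. The client ID and secret come from the Azure AD app registration described earlier.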

Create:

The connector can create new entities in Dynamics CRM from MuleSoft, using either the Create or the Create Multiple operation.

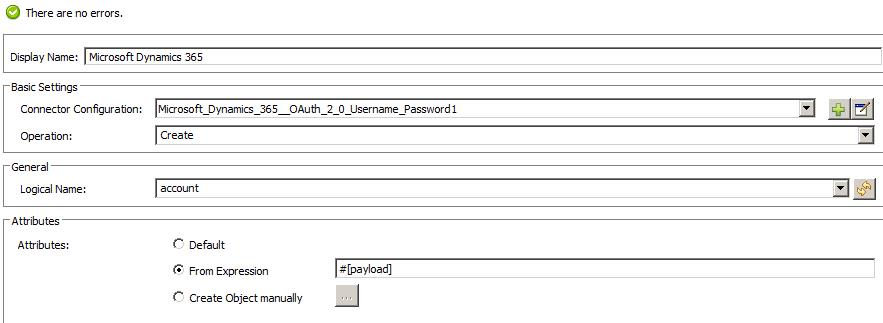

- Create: This operation creates a single entity and returns its entity ID in the response. To use it, select Create as the operation and select the entity type you want to create as the Logical Name. In layman's terms, you can think of an "Entity Type" as a "Table" that contains multiple entities (records). Each entity has a unique ID, known as the Entity ID, just like the primary key in a database table.

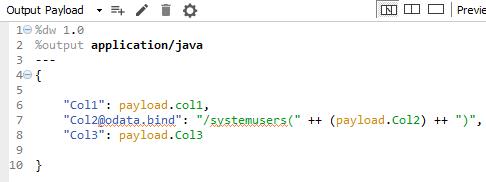

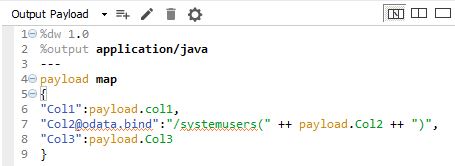

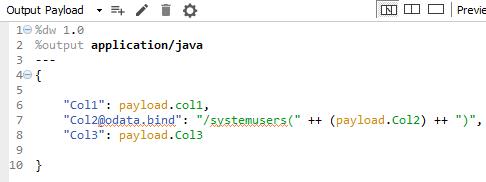

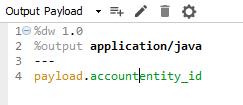
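The connector returns the new entity ID directly. If you call the Dynamics 365 Web API yourself, a successful create typically returns 204 No Content and puts the new record's URL in the `OData-EntityId` response header. This minimal sketch extracts the ID from such a header value. The org URL in the example is a placeholder.

```python
import re
import uuid

# Captures the GUID inside the trailing "(...)" of an entity URL.
ENTITY_ID_PATTERN = re.compile(r"\(([0-9a-fA-F-]{36})\)$")


def entity_id_from_header(odata_entity_id):
    """Extract the new record's GUID from an OData-EntityId header value."""
    match = ENTITY_ID_PATTERN.search(odata_entity_id.strip())
    if match is None:
        raise ValueError(f"No entity ID found in {odata_entity_id!r}")
    # uuid.UUID validates the GUID and normalizes it to lowercase.
    return str(uuid.UUID(match.group(1)))


if __name__ == "__main__":
    header = "https://contoso.crm.dynamics.com/api/data/v9.2/accounts(00000000-0000-0000-0000-000000000001)"
    print(entity_id_from_header(header))
```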

The payload contains the attributes to insert when creating the entity, passed in object format. You can use DataWeave to map the values.

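To pass a value to a bound attribute, use the syntax `"[attribute in Dynamics]@odata.bind": "/[entity set name]([entity id])"`. The entity set name is the collection name of the entity that the referenced record belongs to. It is usually the entity's name with an "s" appended (for example, `systemusers`), and you can check the entity's logical or schema name in CRM. The entity ID is a GUID and goes inside the parentheses without quotes.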

For example, in the snippet above, “Col2” is a bound attribute in Dynamics 365. A bound attribute holds a reference to a record in another entity, similar to a foreign key in a database. Here, “systemuser” is the entity that the value actually belongs to, and it is referenced from the Account entity.

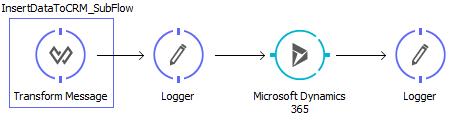
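To make the bind syntax concrete, here is a minimal Python sketch of the object such a mapping would produce for an Account. `name` is a standard Account attribute. Using the `ownerid` lookup and a placeholder GUID is an illustrative assumption standing in for the “Col2” → systemuser binding described above.

```python
import json
import uuid


def odata_bind(entity_set, entity_id):
    """Build the value for an "<attribute>@odata.bind" key, e.g. /systemusers(<guid>)."""
    # Entity IDs are GUIDs; uuid.UUID rejects malformed IDs with a ValueError.
    return f"/{entity_set}({uuid.UUID(entity_id)})"


def account_create_payload(name, owner_id):
    """Attributes for a new Account whose owner is bound to an existing system user."""
    return {
        "name": name,
        # Bound (lookup) attribute: references a record in the systemusers entity set.
        "ownerid@odata.bind": odata_bind("systemusers", owner_id),
    }


if __name__ == "__main__":
    payload = account_create_payload("Contoso Ltd", "00000000-0000-0000-0000-000000000001")
    print(json.dumps(payload, indent=2))
```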

The overall flow looks like this:

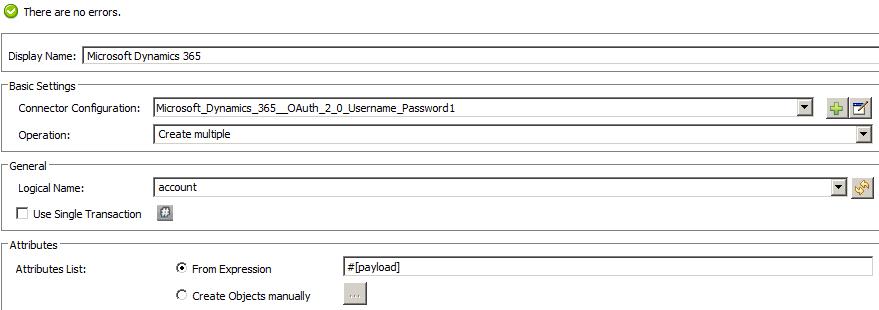

- Create Multiple: This operation creates multiple entities and returns their entity IDs in the response. To use it, select Create Multiple as the operation and select the entity type you want to create as the Logical Name.

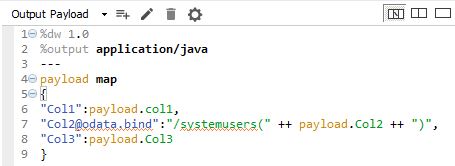

The payload contains the attributes of each entity to create, passed in object format. You can use DataWeave to map the values.
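For Create Multiple, the mapping produces one attribute object per new entity. The sketch below maps source rows to a list of Account attribute objects. It assumes hypothetical source rows with `company` and `phone` fields. `name` and `telephone1` are standard Account attributes.

```python
import json


def to_account_payloads(rows):
    """Map source rows to a list of Account attribute objects, one per entity to create."""
    payloads = []
    for row in rows:
        attrs = {"name": row["company"]}
        # Only include attributes that have a value; omitted attributes are simply not set.
        if row.get("phone"):
            attrs["telephone1"] = row["phone"]
        payloads.append(attrs)
    return payloads


if __name__ == "__main__":
    rows = [{"company": "Contoso", "phone": "555-0100"}, {"company": "Fabrikam"}]
    print(json.dumps(to_account_payloads(rows), indent=2))
```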

Update:

We can update existing entities using the Dynamics 365 connector with the Update and Update Multiple operations.

- Update: This operation updates a single entity in Dynamics 365 from MuleSoft. To use it, select Update as the operation and select the entity type you want to update as the Logical Name, as with Create.

Then map the payload accordingly. You can use DataWeave to map the values.
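At the Web API level, which the connector integrates with, updating one entity corresponds to an HTTP PATCH against the record's URL. The body carries only the changed attributes. This sketch builds such a request without sending it. The org URL is a placeholder, and API version v9.2 is an assumption about your environment.

```python
import json
import uuid

# Hypothetical org URL; the API version depends on your environment.
BASE_URL = "https://contoso.crm.dynamics.com/api/data/v9.2"


def update_request(entity_set, entity_id, changes):
    """Return (method, url, body, headers) for updating one record via the Web API."""
    url = f"{BASE_URL}/{entity_set}({uuid.UUID(entity_id)})"
    headers = {
        "Content-Type": "application/json",
        # Without If-Match: *, a PATCH to a missing record would create it (upsert).
        "If-Match": "*",
    }
    # The body carries only the attributes to change; others are left as they are.
    return "PATCH", url, json.dumps(changes), headers


if __name__ == "__main__":
    print(update_request("accounts", "00000000-0000-0000-0000-000000000001", {"telephone1": "555-0199"}))
```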

- Update Multiple: This operation updates multiple entities in Dynamics 365 from MuleSoft. To use it, select Update Multiple as the operation and select the entity type you want to update as the Logical Name, as with Create Multiple.

Map the payload accordingly. You can use DataWeave to map the values.
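For Update Multiple, each entry needs the ID of the record to change plus the attributes to change. This sketch separates the two for a list of source records and skips records with nothing to update. `accountid` is the Account primary key attribute. The output keys `id` and `attributes` are hypothetical, so match them to the input metadata the connector shows in DataWeave.

```python
def to_update_entries(records, id_field="accountid"):
    """Split each record into its entity ID and the attributes to update.

    The output keys "id" and "attributes" are illustrative only; align them with
    the input metadata the connector exposes in DataWeave.
    """
    entries = []
    for record in records:
        attributes = {k: v for k, v in record.items() if k != id_field}
        if not attributes:
            continue  # nothing to change for this record
        entries.append({"id": record[id_field], "attributes": attributes})
    return entries


if __name__ == "__main__":
    print(to_update_entries([{"accountid": "a1", "telephone1": "555-0100"}]))
```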

Delete:

We can delete existing entities using the Dynamics 365 connector with the Delete and Delete Multiple operations.

- Delete: This operation deletes an entity from Dynamics 365 CRM, so you need to provide the ID of the entity to delete. To use it, select Delete as the operation and select the entity type you want to delete as the Logical Name.

Provide the entity ID as the payload input, for example with DataWeave.
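At the Web API level, deleting one entity is an HTTP DELETE against the record's URL. This sketch builds that request and checks the entity ID before use, because a malformed ID is a common cause of failed deletes. The org URL is a hypothetical placeholder.

```python
import uuid

BASE_URL = "https://contoso.crm.dynamics.com/api/data/v9.2"  # hypothetical org URL


def delete_request(entity_set, entity_id):
    """Return (method, url) for deleting one record by its entity ID."""
    try:
        guid = uuid.UUID(entity_id)
    except ValueError:
        raise ValueError(f"Entity ID must be a GUID, got {entity_id!r}") from None
    return "DELETE", f"{BASE_URL}/{entity_set}({guid})"


if __name__ == "__main__":
    print(delete_request("accounts", "00000000-0000-0000-0000-000000000001"))
```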

- Delete Multiple: This operation deletes multiple entities from Dynamics 365 CRM, so you need to provide a list of the IDs of the entities to delete. To use it, select Delete Multiple as the operation and select the entity type you want to delete as the Logical Name. In DataWeave, the output metadata for this operation appears as `List<List<String>>`.

Provide the list of entity IDs as the payload input, for example in DataWeave:

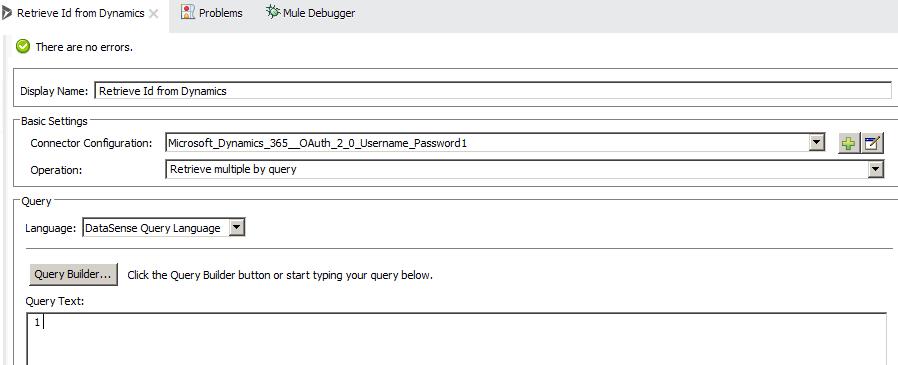
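The IDs to delete often come from an earlier query. This sketch collects unique, valid GUIDs from a list of records and keeps their original order. It assumes each record carries its ID under `accountid`, the Account primary key attribute. It does not address how the list is nested in the `List<List<String>>` metadata, so follow what DataWeave shows for that.

```python
import uuid


def ids_to_delete(records, id_field="accountid"):
    """Collect unique, valid GUIDs from records, preserving their order."""
    seen = set()
    ids = []
    for record in records:
        raw = record.get(id_field)
        if not raw:
            continue  # skip records without an ID
        guid = str(uuid.UUID(raw))  # raises ValueError for malformed IDs
        if guid not in seen:
            seen.add(guid)
            ids.append(guid)
    return ids


if __name__ == "__main__":
    print(ids_to_delete([{"accountid": "00000000-0000-0000-0000-000000000001"}]))
```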

Retrieve Multiple by Query:

With this operation, you can retrieve data from Dynamics 365 CRM using a SQL-like query. In the connector configuration, select Retrieve Multiple by Query as the operation.

You can type the query in Query Text or use the query builder to build it for you.
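For comparison, a SQL-style query that selects the name and phone number of accounts named Contoso can be expressed in the Web API as an OData query with `$select` and `$filter`. This sketch builds such a URL. It illustrates the Web API, not how the connector translates your query internally. The org URL is a placeholder.

```python
from typing import List, Optional
from urllib.parse import quote

BASE_URL = "https://contoso.crm.dynamics.com/api/data/v9.2"  # hypothetical org URL


def query_url(entity_set: str, columns: List[str],
              filter_expr: Optional[str] = None, top: Optional[int] = None) -> str:
    """Build a Web API query URL using the OData $select, $filter, and $top options."""
    options = ["$select=" + ",".join(columns)]
    if filter_expr:
        # Percent-encode spaces and quotes in the filter expression.
        options.append("$filter=" + quote(filter_expr))
    if top is not None:
        options.append(f"$top={top}")
    return f"{BASE_URL}/{entity_set}?" + "&".join(options)


if __name__ == "__main__":
    print(query_url("accounts", ["name", "telephone1"], "name eq 'Contoso'", top=10))
```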

It returns the result in object format. To read this data, you can use the Object to JSON transformer (in Mule 4, a Transform Message component with `output application/json` does the same).
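Converting objects to JSON mainly requires a rule for values that JSON cannot represent directly. As an analogy outside Mule, this Python sketch serializes a list of record maps and converts GUIDs and timestamps to strings. It assumes the result is a list of maps, and the attribute names used in the example are illustrative.

```python
import json
import uuid
from datetime import datetime


def to_json_default(value):
    """Convert types json cannot serialize natively (GUIDs, timestamps) to strings."""
    if isinstance(value, uuid.UUID):
        return str(value)
    if isinstance(value, datetime):
        return value.isoformat()
    raise TypeError(f"Cannot serialize {type(value).__name__}")


def records_to_json(records):
    """Serialize a list of record maps to a JSON string."""
    return json.dumps(records, default=to_json_default)


if __name__ == "__main__":
    rows = [{"accountid": uuid.UUID(int=1), "createdon": datetime(2020, 1, 2, 3, 4, 5)}]
    print(records_to_json(rows))
```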
