Effective Reporting for Cloud Well-Architected Frameworks

Learn from a personal experience how to report effectively on a well-architected assessment, and get a template/guide that you can reuse in similar situations.

“It sucks… We can’t show this to the customer,” was my colleague's reaction to my first report draft.

I performed a well-architected assessment for a customer and created a report filled with shortcomings, vulnerabilities, and technical how-to fixes. Then, I shared it with my non-developer colleagues for a peer review. They were baffled, and after a few minutes of back-and-forth debate, one of them politely conveyed to me that it sucked and that we could not show it to the customer.

In retrospect, they were right: written by a developer, my report was merely a long list of technical issues, full of jargon that only cloud engineers could make sense of. However, we had to present the report to the CIO and the executive committee for funding and approval. Therefore, with the help of my colleagues and through a few iterations, I created an easy-to-understand report for a different type of audience. Later on, the customer approved it and embarked on an improvement process. Based on that experience, I am going to provide you with a template/guide that you can leverage in similar situations. My customer was using Azure, but this guide is platform agnostic.

Before jumping into the guide, here are some general tips about a well-architected assessment:

- Currently, all major cloud providers have their own framework for performing cloud assessments, and these frameworks keep evolving (e.g., Google Cloud Architecture Framework, AWS Well-Architected Tool, Microsoft Azure Well-Architected Framework). Use the framework of your cloud provider, as it fits your purpose and can give you granular assessment, solution, and reporting options for the services you use. However, do not limit yourself to that one, and try to leverage tools from other providers. In my case, the customer used Azure, so I used Azure’s tool for the service-specific part of the report; meanwhile, I leveraged AWS’s, as it provided (at least at the time) a better perspective on the overall aspects of cloud operation.

- Cloud assessment frameworks typically consist of five major pillars: security, cost optimization, operational excellence, reliability, and performance efficiency. To prevent any future disappointment, ask your customer to prioritize the pillars according to their needs before you start the assessment. Make it clear that you must prioritize your efforts accordingly, and that improving a cloud environment is an ongoing effort: it can get better, but it will always be imperfect.

- Don’t blindly trust the answers that the customer/development team gives to framework questionnaires. They often underestimate the severity of issues or overestimate their own methodology. Go through the answers together with them and verify each one.

- Avoid personal attacks/finger pointing at any cost throughout the whole process. Make this clear to all respondents, and ask them to be as honest as possible (as it is the only way to diagnose root causes and ultimately to fix them).

- Whenever your proposed solution/service costs additional money, clearly mention it in your report. Every time.

Here is the format we used. Within each section, try to answer the listed questions (sample answers are included as a guide). You can customize the whole thing according to your needs.

1. Executive Summary

This section has to be understandable by all types of audiences and give a 50,000-foot view of the whole thing.

- What is this report about?

- What did you assess? (Pillars, etc.)

- How did you do the assessment? (Workshops, hands-on investigation, etc.)

- Why is the customer using the cloud? What are the key takeaways? In our case, design, security, operational control, and governance were the main points/issues; therefore, I elaborated on them. In a nutshell, most of the issues stemmed from a lack of centralized monitoring, automation, and control. However, remember to mention positive aspects, too. It cannot be all bad. Do not be like me: my first draft bombarded the reader with only bad things. Customers should not feel dumb.

- What is your solution? How are you trying to help the customer to overcome those issues?

- What are the milestones to improve the situation? Briefly summarize what each milestone will achieve.

2. Context

Methodology

- How did you do the assessment? Workshops, hands-on investigation, etc.

Assumptions

- Which assumptions did you receive from the customer?

- What is the priority of the pillars?

- Which workloads are covered? Do you have to deal with mission-critical workloads? If yes, what are the SLAs, etc.? Are any recovery objectives defined?

- List any other assumptions that readers have to be aware of.

Summary of Findings

This section gives a fast, yet insightful view of the situation. Keep it understandable for a non-technical audience; avoid using jargon. Keep this section concise; it should be readable within 1-2 minutes.

Positive

It cannot be all bad. List some positive aspects of the environment/team/operation/strategy.

Needs Improvement

Yes, this title is much more diplomatic than the one I originally used.

Detailed Findings

This section outlines more details about the most important issues (from the highest-priority pillars) along with recommendations. For more clarity, associate each issue with a severity level, preferably with some color. Briefly advise the customer on how to approach the issues. In our case, “critical” issues required immediate attention; otherwise, they could result in data breaches/security incidents. Here is an example:

| # | Severity | Category | Observation | Recommendation |
|---|----------|----------|-------------|----------------|
| 1 | Critical | General | Too permissive roles | There are too many global admins. Grant roles by following the least privilege principle. |
| 2 | Critical | VM | Too permissive network rules | Restrict inbound and outbound Network Security Group rules to specific CIDR. In addition, enable Just-in-time access to management ports. |
| 3 | High | VM | Unsupervised SQL instances | Enable SQL Vulnerability Assessment. |
| 4 | Medium | General | Inconsistent tagging | Leverage Azure Policy to ensure consistent tagging across all resources. |

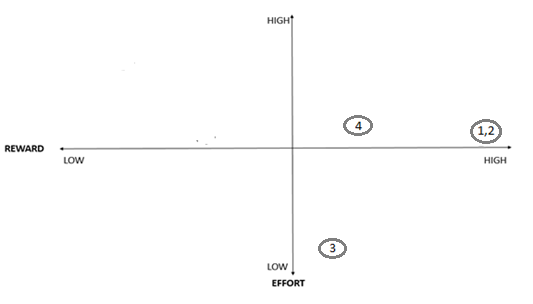

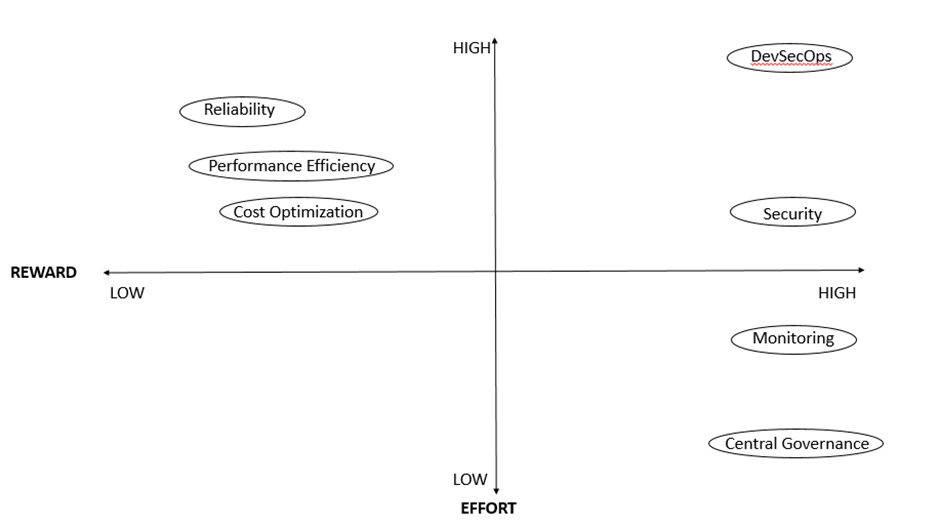

Provide a corresponding effort-reward matrix for the aforementioned issues. You may want to advise the customer to address issues starting from the bottom-right, as those can provide quick wins.
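To make the matrix idea concrete, here is a minimal Python sketch of how findings could be placed into quadrants. It assumes reward is on the horizontal axis and effort is on the vertical axis, so that bottom-right means low effort and high reward. The `Finding` class, the 1-to-5 scale, and the scores are all hypothetical and only for illustration; they are not taken from the actual assessment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    number: int
    observation: str
    effort: int  # hypothetical scale: 1 (low) to 5 (high)
    reward: int  # hypothetical scale: 1 (low) to 5 (high)


def quadrant(finding: Finding, midpoint: int = 3) -> str:
    """Place a finding in a matrix quadrant.

    Assumption: reward is the horizontal axis and effort the vertical axis,
    so "bottom-right" means low effort and high reward.
    """
    vertical = "top" if finding.effort >= midpoint else "bottom"
    horizontal = "right" if finding.reward >= midpoint else "left"
    return f"{vertical}-{horizontal}"


def quick_wins(findings):
    """Return bottom-right findings, highest reward first, then lowest effort."""
    wins = [f for f in findings if quadrant(f) == "bottom-right"]
    return sorted(wins, key=lambda f: (-f.reward, f.effort))


# Illustrative scores only; real values come out of the assessment workshops.
FINDINGS = [
    Finding(1, "Too permissive roles", effort=2, reward=5),
    Finding(2, "Too permissive network rules", effort=3, reward=5),
    Finding(3, "Unsupervised SQL instances", effort=2, reward=4),
    Finding(4, "Inconsistent tagging", effort=4, reward=2),
]

for f in quick_wins(FINDINGS):
    print(f.number, f.observation)
```

Keeping the scores in one place like this makes it easy to revisit them with the customer and regenerate the matrix when priorities change.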

Finding Summary by Pillar

This section targets a technical audience. Create a subsection for each of the pillars, and list all the discovered points (negative and positive). Be specific. Here are some examples:

Operational Excellence

[Customer],

- Does not have a fully automated integration and deployment setup

- Has a limited process or mechanism to mitigate deployment risks

- Has codified and automated a few components/operations

- Uses Git for version control but does not follow any of the established Git workflows

Security

[Customer],

- Does not have any process or mechanism to audit, manage and minimize permissions

- Relies on a centralized identity provider, which makes it easier to audit and to secure user authentication.

Remediation by Pillar

This section targets a technical audience. List action points to address major issues from each pillar. Be specific. Here are a few examples:

- Operational Excellence

  - Tagging

    - Currently, resources have tags, but there is no mechanism to ensure consistency across all resources. [Customer] can achieve that by creating policies in Azure Policy (more info).

- Security

  - Virtual Machines

    - Enable vulnerability assessment. Azure has an integrated tool that can help [Customer] do that (more info). Please note that this can result in additional costs. [Customer] can get updated cost info here.

    - Enable Azure Endpoint Protection to help against malware (more info).

  - Azure Functions

    - System-assigned identity management allows cloud services to authenticate without the need for storing credentials. Go to the Identity section and enable it.
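Before proposing a tagging policy, it can help to show the customer how inconsistent the current tags are. The following is a minimal Python sketch of such a check, run over an exported inventory. The required tag set, the resource names, and the dictionary layout are hypothetical; comparing tag keys case-insensitively is a choice made for this sketch, and this is not a substitute for enforcing the rule in Azure Policy.

```python
# Hypothetical tagging convention; agree on the real one with the customer.
REQUIRED_TAGS = {"owner", "environment", "cost-center"}


def missing_tags(resource_tags, required=REQUIRED_TAGS):
    """Return the required tag keys that are absent or have an empty value.

    Keys are compared case-insensitively (an assumption of this sketch).
    """
    present = {key.lower() for key, value in resource_tags.items() if str(value).strip()}
    return sorted(required - present)


def tagging_report(resources):
    """Map each non-compliant resource name to its list of missing tags."""
    report = {}
    for name, tags in resources.items():
        gaps = missing_tags(tags)
        if gaps:
            report[name] = gaps
    return report


# Illustrative inventory; in practice this would be exported from the cloud provider.
RESOURCES = {
    "vm-web-01": {"Owner": "team-a", "environment": "prod", "cost-center": "1001"},
    "sql-db-01": {"owner": "team-b", "environment": ""},
}

print(tagging_report(RESOURCES))
```

A short list of non-compliant resources like this is usually easier for a technical audience to act on than a general statement that tagging is inconsistent.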

3. Effort and Reward

Make it clear that improvement is a matter of trade-offs. Nobody can make everything perfect at once; gradual improvement (aligned with the customer’s interests) is the most effective way to start. Then, provide a high-level effort-reward matrix for the current state, consistent with the sub-sections addressed in the previous section. Here is an example:

Recommendations

How do you recommend that the customer address the issues? Here are a few recommendations:

- Start with bottom-right issues as they provide quick wins.

- Centralized governance and monitoring are prerequisites for all the pillars. To achieve those, the customer can leverage built-in tools, at the least. Do not forget to mention that such services may cost additional money.

- Recommend that the customer take a data-driven approach: start by leveraging the cloud provider’s built-in tools, review their reports regularly, and optimize the environment accordingly.

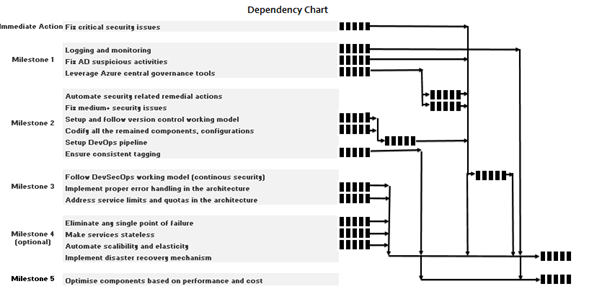

4. Proposed Roadmap

Recommend that the customer fix critical issues ASAP. Then propose milestones, each composed of action points. Here are some examples:

Milestone 1

- Configure built-in logging and monitoring tools in Azure components.

- Configure and leverage Azure central governance tools, review them at least weekly, and tune the architecture accordingly.

- Address suspicious authentications/authorizations in AD review.

Milestone 2

- Automate security remedial actions with the help of Azure Policy and other tools mentioned in the Central Governance section.

- Set up and follow a suitable version control working model.

- Codify all the remaining applications and configurations into ARM templates.

- Set up a DevOps pipeline and avoid any kind of manual deployment.

- Ensure consistent tagging throughout resources.

Dependency Chart

Outline the dependencies between the proposed actions from the roadmap, for example as a figure. Such a figure is for illustration only; its purpose is to help the customer understand the relationships between the activities.
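If the roadmap has many action points, the dependency chart can be drafted from a simple mapping before it is drawn. Below is a minimal Python sketch that uses the standard library's `graphlib.TopologicalSorter` (Python 3.9+) to group actions into waves that can run in parallel. The dependency relationships shown are hypothetical and loosely based on the example milestones; the real ones have to come from the assessment.

```python
from graphlib import TopologicalSorter

# Hypothetical dependencies: each action maps to the actions it depends on.
DEPENDENCIES = {
    "Configure logging and monitoring": set(),
    "Configure central governance tools": {"Configure logging and monitoring"},
    "Automate security remediation": {"Configure central governance tools"},
    "Adopt a version control working model": set(),
    "Codify configurations as ARM templates": {"Adopt a version control working model"},
    "Set up a DevOps pipeline": {"Codify configurations as ARM templates"},
}


def execution_waves(dependencies):
    """Group actions into waves; every action in a wave has all of its
    prerequisites in earlier waves. prepare() raises graphlib.CycleError
    if the dependencies are circular."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted for a stable, readable order
        waves.append(ready)
        sorter.done(*ready)
    return waves


for index, wave in enumerate(execution_waves(DEPENDENCIES), start=1):
    print(f"Wave {index}: {', '.join(wave)}")
```

The waves do not have to match the milestones one-to-one, but a mismatch between the two is a useful prompt to double-check the roadmap order.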

5. Appendix

Add any missing detail or notes.
