Histogram Functions in Accelerate vImage

How to use Apple's vImage API in Swift to manipulate the histogram of images programmatically.

Whilst Core Image has an amazing range of image filters, the vImage API in the iOS SDK's Accelerate framework includes some impressive histogram functions that CI lacks. These include histogram specification, pictured above, which applies a histogram (e.g. one calculated from an image) to an image, and contrast stretch, which normalizes the values of a histogram across the full range of intensity values.
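To give a feel for what histogram specification does, here is a minimal pure-Swift sketch for a single 8-bit channel. It uses the common textbook approach of matching cumulative distributions, which is not necessarily how vImage implements it. The function names (`histogram(of:)`, `cumulativeDistribution(of:)`, `specifyHistogram(of:to:)`) are hypothetical and not part of any Apple API.

```swift
/// Builds a 256-bin histogram of 8-bit intensity values.
func histogram(of pixels: [UInt8]) -> [Int] {
    var bins = [Int](repeating: 0, count: 256)
    for pixel in pixels { bins[Int(pixel)] += 1 }
    return bins
}

/// Normalised cumulative distribution (values in 0...1) of a histogram.
func cumulativeDistribution(of bins: [Int]) -> [Double] {
    let total = Double(max(bins.reduce(0, +), 1))
    var running = 0
    return bins.map { count in
        running += count
        return Double(running) / total
    }
}

/// Remaps `pixels` so that their histogram approximates `targetHistogram`.
/// Hypothetical helper: a simplified illustration, not vImage's algorithm.
func specifyHistogram(of pixels: [UInt8], to targetHistogram: [Int]) -> [UInt8] {
    precondition(targetHistogram.count == 256, "expected 256 bins")
    let sourceCDF = cumulativeDistribution(of: histogram(of: pixels))
    let targetCDF = cumulativeDistribution(of: targetHistogram)

    // For each source level, pick the first target level whose
    // cumulative share is at least as large as the source's.
    var lookup = [UInt8](repeating: 255, count: 256)
    var targetLevel = 0
    for level in 0..<256 {
        while targetLevel < 255 && targetCDF[targetLevel] < sourceCDF[level] {
            targetLevel += 1
        }
        lookup[level] = UInt8(targetLevel)
    }
    return pixels.map { lookup[Int($0)] }
}

// Usage: borrow the tonal distribution of one set of values for another.
let reference: [UInt8] = [0, 100, 200, 255]
let matched = specifyHistogram(of: [10, 10, 20, 30], to: histogram(of: reference))
```

A real image would run this once per colour channel; vImage does the equivalent work on interleaved buffers with vectorised code.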

vImage's API isn't super friendly to Swift developers, so this blog post demonstrates how to use these functions. These examples originate from a talk I gave at ProgSCon, and you can see the slideshow for the talk here.

Converting Images to vImage Buffers and Vice Versa

Much like Core Image has its own CIImage format, vImage uses its own format for image data: vImage_Buffer. Buffers can easily be created from a Core Graphics CGImage with a few steps. First of all, we need to define a format: this will be 8 bits per channel and four channels per pixel: red, green, blue and, lastly, alpha:

    let bitmapInfo: CGBitmapInfo = CGBitmapInfo(
        rawValue: CGImageAlphaInfo.Last.rawValue)

    var format = vImage_CGImageFormat(
        bitsPerComponent: 8,
        bitsPerPixel: 32,
        colorSpace: nil,
        bitmapInfo: bitmapInfo,
        version: 0,
        decode: nil,
        renderingIntent: .RenderingIntentDefault)

Given a UIImage, we can pass its CGImage to vImageBuffer_InitWithCGImage(). This method also needs an empty buffer which will be populated with the bitmap data of the image:

    let sky = UIImage(named: "sky.jpg")!

    var inBuffer = vImage_Buffer()

    vImageBuffer_InitWithCGImage(
        &inBuffer,
        &format,
        nil,
        sky.CGImage!,
        UInt32(kvImageNoFlags))

Once we're done filtering, converting a vImage buffer back to a UIImage is just as simple. This code is nice to implement as an extension to UIImage as a convenience initializer. Here we can accept a buffer as an argument, create a mutable copy, and pass it to vImageCreateCGImageFromBuffer to populate a CGImage:

    extension UIImage
    {
        convenience init?(fromvImageOutBuffer outBuffer: vImage_Buffer)
        {
            var mutableBuffer = outBuffer
            var error = vImage_Error()

            let cgImage = vImageCreateCGImageFromBuffer(
                &mutableBuffer,
                &format,
                nil,
                nil,
                UInt32(kvImageNoFlags),
                &error)

            self.init(CGImage: cgImage.takeRetainedValue())
        }
    }

Contrast Stretching

vImage filters generally accept a source image buffer and write the result to a destination buffer. The technique above will create the source buffer, but we'll need to create an empty buffer with the same dimensions as the source as a destination:

    let sky = UIImage(named: "sky.jpg")!

    let imageRef = sky.CGImage

    let pixelBuffer = malloc(CGImageGetBytesPerRow(imageRef) * CGImageGetHeight(imageRef))

    var outBuffer = vImage_Buffer(
        data: pixelBuffer,
        height: UInt(CGImageGetHeight(imageRef)),
        width: UInt(CGImageGetWidth(imageRef)),
        rowBytes: CGImageGetBytesPerRow(imageRef))

With the inBuffer and outBuffer in place, executing the stretch filter is as simple as:

    vImageContrastStretch_ARGB8888(
        &inBuffer,
        &outBuffer,
        UInt32(kvImageNoFlags))

...and we can now use the initializer above to create a UIImage from the outBuffer:

    let outImage = UIImage(fromvImageOutBuffer: outBuffer)

Finally, the pixel buffer created to hold the bitmap data for outBuffer needs to be freed, as does the source buffer's data, which vImageBuffer_InitWithCGImage() allocated on our behalf:

    free(pixelBuffer)
    free(inBuffer.data)

Contrast stretching can give some great results. This rather flat image:

Now looks like this:
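To make concrete what a contrast stretch does to the pixel values, here is a minimal pure-Swift sketch for a single 8-bit channel. It is a simplified linear min/max stretch under my own assumptions, not necessarily the algorithm vImageContrastStretch_ARGB8888 uses internally, and `contrastStretch(_:)` is a hypothetical name.

```swift
/// Linearly rescales 8-bit values so that the darkest input maps to 0
/// and the brightest to 255. Hypothetical helper for illustration only.
func contrastStretch(_ pixels: [UInt8]) -> [UInt8] {
    guard let low = pixels.min(), let high = pixels.max(), high > low else {
        // Empty or completely flat input: there is nothing to stretch.
        return pixels
    }
    let range = Double(high - low)
    return pixels.map { value in
        let scaled = Double(value - low) / range * 255.0
        return UInt8(scaled.rounded())
    }
}

// Usage: a "flat" channel occupying only 100...150 is spread over 0...255.
let flat: [UInt8] = [100, 110, 150]
let stretched = contrastStretch(flat)
```

The relative ordering of the values is preserved; only the gaps between them grow, which is why the stretched image looks punchier without changing its content.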

Conclusion

vImage, although suffering from a rather unfriendly API, is a tremendously powerful framework. Along with a suite of histogram operations, it has functions for deconvolving images (e.g. de-blurring) and some interesting morphology functions (e.g. dilating with a kernel which can be used for lens effects such as starburst and bokeh simulation).

My Image Processing for iOS slide deck explores different filters and the companion Swift project contains demonstration code.

The code for this project is available in the companion project to my image processing talk. I've also wrapped up this vImage code in a Core Image filter wrapper which is available in my Filterpedia app.

It's worth noting that the Metal Performance Shaders framework also includes these histogram operations.

Addendum: Contrast Stretching With Core Image

Many thanks to Simonas Bastys of Pixelmator who pointed out that Core Image does indeed have contrast stretching through its auto-adjustment filters.

With the sky image, Core Image can create a tone curve filter with the necessary values using this code:

    let sky = CIImage(image: UIImage(named: "sky.jpg")!)!

    let toneCurve = sky
        .autoAdjustmentFiltersWithOptions(nil)
        .filter({ $0.name == "CIToneCurve" })
        .first

The points create a curve that looks like this:

Which, when applied to the sky image:

    if let toneCurve = toneCurve
    {
        toneCurve.setValue(sky, forKey: kCIInputImageKey)

        let final = toneCurve.outputImage
    }

...yields this image:

Thanks again Simonas!

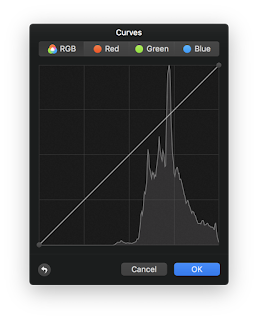

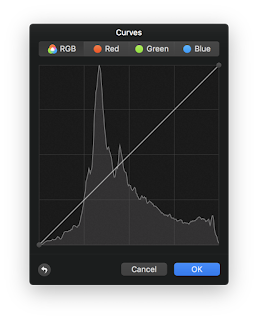

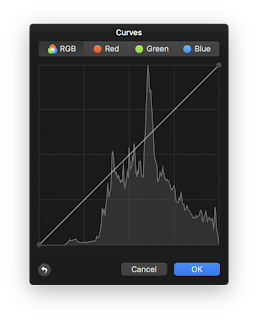

FYI, the histograms for the images look like this (thanks to Pixelmator). vImage stretches the most!

Original image:

vImage contrast stretch

Core Image contrast stretch
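One rough way to put a number on "stretches the most" is to look at which histogram bins are actually occupied. The sketch below is pure Swift, works on a single 8-bit channel, and uses a hypothetical helper name, `occupiedRange(of:)`; it is an illustration, not how Pixelmator draws its histograms.

```swift
/// Returns the lowest and highest intensity levels that occur in `pixels`,
/// or nil for empty input. Hypothetical helper for illustration only.
func occupiedRange(of pixels: [UInt8]) -> ClosedRange<Int>? {
    // Build a 256-bin histogram of the channel.
    var bins = [Int](repeating: 0, count: 256)
    for pixel in pixels { bins[Int(pixel)] += 1 }

    // Find the first and last bins that contain at least one pixel.
    guard let lowest = bins.firstIndex(where: { $0 > 0 }),
          let highest = bins.lastIndex(where: { $0 > 0 }) else {
        return nil
    }
    return lowest...highest
}

// Usage: a wider occupied range means the values span more of 0...255.
let originalRange = occupiedRange(of: [90, 120, 160])
let stretchedRange = occupiedRange(of: [0, 109, 255])
```

Comparing the width of these ranges for the original, the vImage result and the Core Image result gives a crude measure of how aggressively each approach stretched the channel.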

Published at DZone with permission of Simon Gladman. See the original article here.
